Enterprise networking has moved away from a purely hardware-centric and manually operated discipline toward a model where software, automation, and programmability define day-to-day operations. This transformation is not cosmetic; it reflects a deeper architectural change in how networks are designed, deployed, and maintained at scale. Traditional network engineering relied heavily on CLI-based configuration, where administrators would individually log into devices and apply changes one by one. While effective in smaller environments, this approach does not scale efficiently in modern infrastructures that may span multiple data centers, cloud environments, and geographically distributed branches.

The CCNP Enterprise certification framework reflects this industry evolution by embedding automation and programmability as core competencies rather than optional skills. The goal is no longer limited to understanding routing protocols or switching behavior in isolation but extends to how networks can be controlled programmatically using structured interfaces and automation systems. This aligns enterprise networking with broader trends in IT, where infrastructure is increasingly treated as code rather than as manually managed hardware.

The emphasis on automation represents a shift in mindset. Instead of focusing solely on device-level configuration, engineers are now expected to understand how systems interact, how configurations are generated programmatically, and how network state can be managed through APIs and data models. This transition is central to modern certification objectives and reflects real-world operational demands.

Automation as a Structural Requirement in Modern Networks

Automation is no longer positioned as an advanced or optional specialization within enterprise networking. It has become a structural requirement driven by the complexity and scale of modern environments. Enterprises today operate networks that support cloud workloads, remote users, distributed applications, and dynamic security policies. Managing these manually introduces inefficiencies and increases the likelihood of inconsistencies across devices.

Automation addresses these challenges by introducing repeatable processes that can be executed consistently across large numbers of network elements. Instead of configuring each device independently, automation allows engineers to define desired states that are applied uniformly across the infrastructure. This reduces configuration drift and improves operational reliability.
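As an illustration of what "desired state" means in practice, the following minimal Python sketch compares one desired configuration against the state observed on several devices and reports drift. It assumes the observed state has already been collected into plain dictionaries; the device names, keys, and the `find_drift` helper are hypothetical.

```python
from typing import Any, Dict

# Hypothetical desired state: every device should use the same NTP server and domain name
DESIRED: Dict[str, Any] = {"ntp_server": "10.0.0.1", "domain_name": "corp.example"}


def find_drift(desired: Dict[str, Any], actual: Dict[str, Dict[str, Any]]) -> Dict[str, Dict[str, tuple]]:
    """Return, per device, each key whose observed value differs from the desired value.

    Each difference is reported as (desired, observed); a missing key shows up as None.
    """
    drift: Dict[str, Dict[str, tuple]] = {}
    for device, observed in actual.items():
        diffs = {
            key: (want, observed.get(key))
            for key, want in desired.items()
            if observed.get(key) != want
        }
        if diffs:  # only report devices that actually drifted
            drift[device] = diffs
    return drift


# Illustrative observed state, as if already retrieved from three devices
observed_state = {
    "edge-rtr-1": {"ntp_server": "10.0.0.1", "domain_name": "corp.example"},
    "edge-rtr-2": {"ntp_server": "192.0.2.50", "domain_name": "corp.example"},
    "core-sw-1": {"domain_name": "corp.example"},
}

print(find_drift(DESIRED, observed_state))
```

Because the desired state is defined once, the same comparison applies unchanged whether there are three devices or three thousand.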

From a conceptual standpoint, automation introduces abstraction between the engineer and the underlying hardware. Rather than interacting directly with device configurations, engineers interact with higher-level logic that defines what the network should achieve. The automation system then translates these intentions into device-specific configurations.

This abstraction layer is a fundamental change in networking philosophy. It reduces the cognitive load on engineers and enables networks to scale more efficiently. It also introduces consistency, as configurations are generated from standardized templates or models rather than manually written commands.
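A minimal sketch of template-based generation, using only Python's standard `string.Template`. The IOS-style command layout and the interface records are illustrative assumptions; production tooling often uses a richer template engine such as Jinja2, but the principle is the same: one template, many structured records, uniform output.

```python
from string import Template

# Hypothetical IOS-style interface template; $-placeholders are filled from structured data
INTERFACE_TEMPLATE = Template(
    "interface $name\n"
    " description $description\n"
    " ip address $ip $mask\n"
    " no shutdown"
)

# Structured input: one record per interface, independent of how it will be rendered
interfaces = [
    {"name": "GigabitEthernet1", "description": "uplink-to-core", "ip": "10.1.1.1", "mask": "255.255.255.252"},
    {"name": "GigabitEthernet2", "description": "user-vlan", "ip": "10.2.0.1", "mask": "255.255.255.0"},
]


def render(records):
    """Render every record with the same template so all blocks share one layout."""
    # substitute() raises KeyError if a record lacks a placeholder, catching incomplete data early
    return "\n!\n".join(INTERFACE_TEMPLATE.substitute(r) for r in records)


print(render(interfaces))
```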

Network Programmability as a Control Mechanism

Network programmability refers to the ability to interact with network infrastructure using software-based methods instead of manual configuration. It enables external systems to read, modify, and manage network behavior through structured interfaces. This concept is essential for modern enterprise environments where networks must respond dynamically to changing application requirements and traffic conditions.

Programmability introduces a shift from static configuration to dynamic control. In traditional environments, configuration changes are applied manually and remain static until modified again. In programmable environments, configurations can be adjusted automatically based on external inputs, policies, or telemetry data.

This capability is particularly important in environments that use cloud-native architectures or software-defined networking principles. In such systems, networking is integrated into broader orchestration platforms that manage compute, storage, and application resources together. Programmability allows the network to respond to changes in these environments as quickly as the rest of the platform, rather than waiting for manual reconfiguration.

At a technical level, programmability is achieved through structured communication mechanisms that allow systems to exchange configuration and operational data in a standardized format. These mechanisms are built on data models and protocols that define how information is structured and transmitted.

Integration of Automation into CCNP Enterprise Structure

The CCNP Enterprise certification reflects the importance of automation by embedding it into both the core and specialization pathways. The core certification includes a significant portion of content related to automation concepts, signaling that these skills are foundational rather than optional.

Candidates are expected to understand how automation integrates with traditional networking functions. This includes awareness of scripting concepts, data representation formats, and configuration interfaces. The objective is not necessarily to turn network engineers into software developers but to ensure they can operate effectively in environments where automation tools are standard.

The concentration pathways extend these concepts further by focusing on advanced automation techniques. These include programmatic interaction with network devices, integration with orchestration systems, and the use of APIs to manage infrastructure at scale. This layered approach ensures that professionals can develop both foundational and specialized automation skills.

Emergence of Data-Centric Networking Models

Modern network automation relies heavily on data-centric models where configuration and operational state are represented as structured data rather than unstructured commands. This allows automation systems to interpret and manipulate network configurations programmatically.

Data-centric networking separates the definition of intent from the implementation of configuration. Engineers define what the network should achieve, and the system translates that intent into specific device-level changes. This separation improves scalability and reduces operational complexity.

Structured data models also enable validation before configuration changes are applied. This reduces errors and ensures consistency across network devices. It allows automation systems to verify that changes conform to predefined rules and constraints before execution.

Role of Structured Data Formats in Automation

Structured data formats are essential for enabling communication between automation systems and network devices. These formats provide a standardized way to represent configuration data, operational states, and policy definitions.

Three formats are widely used in modern networking environments: XML, JSON, and YAML. Each has distinct characteristics, but all are designed to balance readability and machine interpretability, allowing both engineers and systems to work with the same data structures.

Structured formats ensure that information is transmitted consistently across different systems. This is critical in environments where multiple tools and platforms interact with the same network infrastructure. Without standardization, data interpretation would vary between systems, leading to inconsistencies and potential configuration errors.

XML as a Hierarchical Data Representation Format

XML represents one of the earliest widely adopted structured data formats used in network automation and API communication. It organizes data using nested tags that define elements and their relationships.

The hierarchical nature of XML makes it suitable for representing complex configurations that include multiple levels of detail. Each element can contain sub-elements, allowing detailed modeling of network configurations.

XML is both human-readable and machine-processable, which made it a popular choice for early automation systems. Its strict syntax rules ensure consistency, although they can sometimes make it verbose compared to newer formats.

In network automation contexts, XML is often used for encoding configuration data exchanged between systems. Its structured nature allows it to be validated against predefined schemas, ensuring that data adheres to expected formats.
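The following Python sketch builds and parses a small nested XML document with the standard `xml.etree.ElementTree` module. It borrows the namespace of the IETF `ietf-interfaces` YANG module so the shape resembles real payloads, but it is simplified: leaves the real model requires, such as `type`, are omitted.

```python
import xml.etree.ElementTree as ET

# Namespace of the IETF interfaces YANG module; registered as the default so output has no prefixes
IF_NS = "urn:ietf:params:xml:ns:yang:ietf-interfaces"
ET.register_namespace("", IF_NS)


def interface_xml(name: str, description: str, enabled: bool) -> str:
    """Build a nested <interfaces><interface>...</interface></interfaces> document."""
    root = ET.Element(f"{{{IF_NS}}}interfaces")
    intf = ET.SubElement(root, f"{{{IF_NS}}}interface")
    ET.SubElement(intf, f"{{{IF_NS}}}name").text = name
    ET.SubElement(intf, f"{{{IF_NS}}}description").text = description
    # Booleans are carried as the literal text "true" or "false" in the XML encoding
    ET.SubElement(intf, f"{{{IF_NS}}}enabled").text = "true" if enabled else "false"
    return ET.tostring(root, encoding="unicode")


doc = interface_xml("GigabitEthernet2", "user-vlan", True)
print(doc)

# Parsing goes the other way: the nesting tells the reader which values belong together
parsed = ET.fromstring(doc)
print(parsed.find(f"{{{IF_NS}}}interface/{{{IF_NS}}}name").text)  # GigabitEthernet2
```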

JSON as a Lightweight Data Interchange Format

JSON emerged as a more lightweight alternative to XML, designed for simplicity and ease of use in modern applications. It uses key-value pairs and nested objects to represent structured data in a compact format.

JSON is widely used in network automation because it integrates easily with programming languages and web-based systems. It is less verbose than XML and generally easier to parse, making it suitable for high-performance automation workflows.

In networking environments, JSON is commonly used in API responses where devices return structured configuration or operational data. Its compatibility with web technologies makes it ideal for REST-based communication models.

Despite its simplicity, JSON maintains strong structural capabilities, allowing it to represent complex nested configurations effectively.
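To show why JSON responses are easy to consume, here is a Python sketch that parses a body shaped like a RESTCONF reply for the `ietf-interfaces` model. The body is hand-written for illustration, not captured from a device; the module-qualified key (`"ietf-interfaces:interfaces"`) follows the JSON encoding rules for YANG-modeled data.

```python
import json

# Illustrative response body; top-level members are qualified as "module-name:node"
response_body = """
{
  "ietf-interfaces:interfaces": {
    "interface": [
      {"name": "GigabitEthernet1", "description": "uplink-to-core", "enabled": true},
      {"name": "GigabitEthernet2", "description": "user-vlan", "enabled": false}
    ]
  }
}
"""

data = json.loads(response_body)  # JSON objects become dicts, arrays become lists

# Walk the nested structure directly; no screen-scraping of CLI output is needed
disabled = [
    intf["name"]
    for intf in data["ietf-interfaces:interfaces"]["interface"]
    if not intf["enabled"]
]
print(disabled)  # ['GigabitEthernet2']
```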

YAML as a Human-Friendly Configuration Format

YAML is designed with readability as a primary goal. It uses an indentation-based structure to represent hierarchical data, making it easy for humans to read and write.

In network automation, YAML is often used for configuration files and orchestration templates. Its simplicity makes it suitable for defining automation workflows and infrastructure definitions.

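Where XML relies on explicit opening and closing tags and JSON on braces and quotation marks, YAML uses indentation and minimal punctuation, trading some syntactic explicitness for readability. This makes it popular in environments where configurations are frequently reviewed or edited by engineers.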

YAML’s role in automation is closely tied to tools that rely on declarative configuration approaches, where the desired state is defined in a readable format.

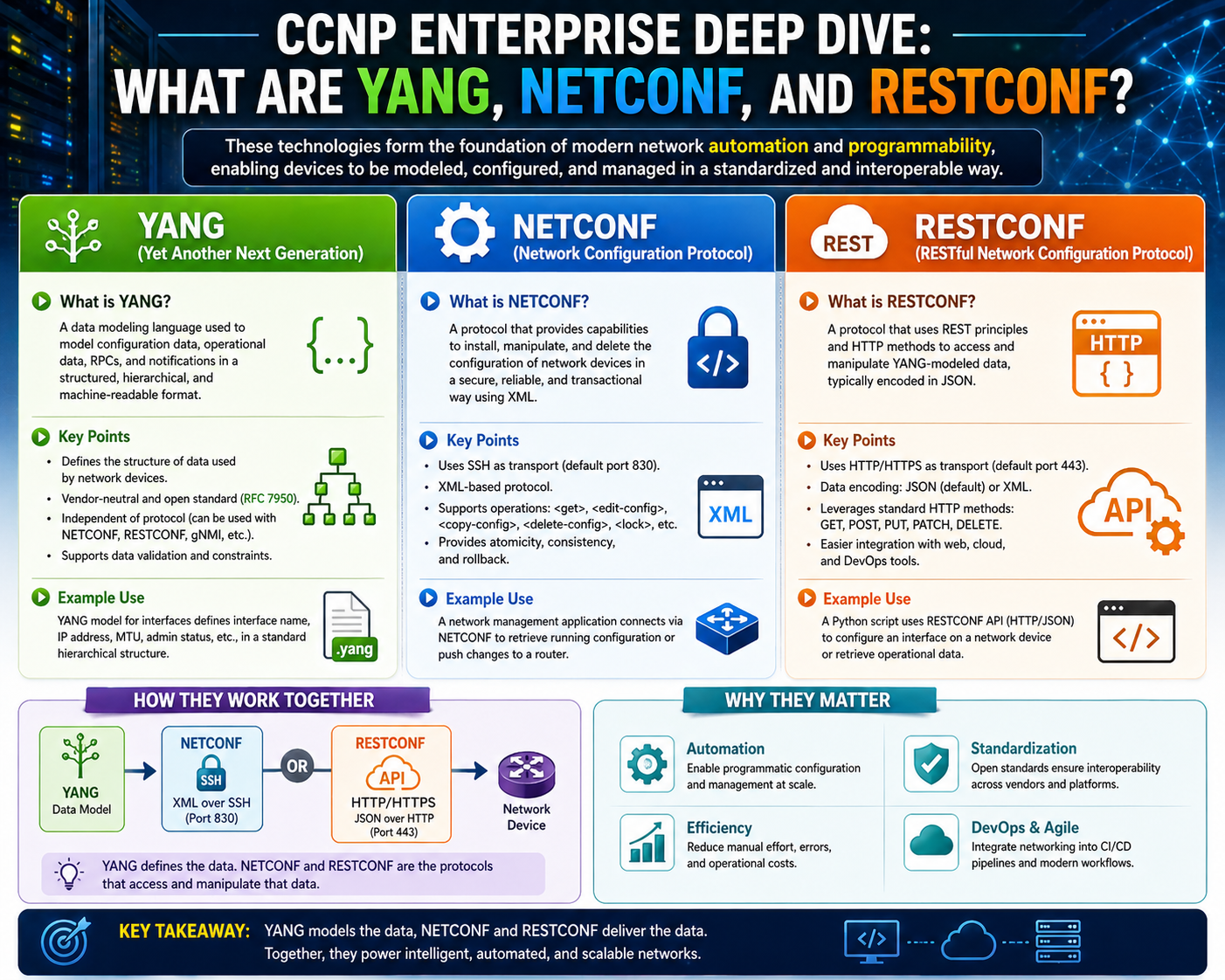

YANG as a Data Modeling Language for Networks

YANG is fundamentally different from XML, JSON, and YAML because it is not simply a data format but a data modeling language. It defines how data should be structured, validated, and interpreted within network systems.

YANG models describe the schema of network configurations, specifying what data exists, how it is organized, and what constraints apply. These models act as blueprints for how configuration data should be structured in systems that support programmability.

Unlike simple data formats, YANG introduces rules and constraints that ensure consistency across implementations. It defines relationships between data elements and enforces structure at a conceptual level.

YANG models are used in conjunction with configuration protocols such as NETCONF and RESTCONF, where they define the structure of data exchanged between systems.

Relationship Between YANG and Configuration Validation

One of the key roles of YANG is to enable validation of configuration data before it is applied to network devices. When automation systems generate configuration changes, those changes must conform to the structure defined by YANG models.

This validation ensures that only correctly structured and permissible configurations are accepted by the system. It reduces the risk of configuration errors and improves network stability.

YANG also enables interoperability by providing a standardized way to define network data structures. This ensures that different systems interpret configuration data consistently.
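The kind of check a YANG model enables can be sketched in Python. This is a stand-in, not a YANG toolchain: the schema is a hand-written dictionary mirroring two hypothetical YANG leaves (shown in the comments), and the `validate` helper is invented for illustration. Real systems compile the actual YANG modules with dedicated tooling.

```python
from typing import Any, Dict, List

# Hypothetical schema mirroring a small YANG list entry, for example:
#   leaf vlan-id { type uint16 { range "1..4094"; } mandatory true; }
#   leaf name    { type string { length "1..32"; } }
SCHEMA = {
    "vlan-id": {"type": int, "range": (1, 4094), "mandatory": True},
    "name": {"type": str, "length": (1, 32), "mandatory": False},
}


def validate(entry: Dict[str, Any], schema=SCHEMA) -> List[str]:
    """Return a list of violations; an empty list means the entry may be applied."""
    errors = []
    for leaf, rules in schema.items():
        if leaf not in entry:
            if rules["mandatory"]:
                errors.append(f"missing mandatory leaf '{leaf}'")
            continue
        value = entry[leaf]
        # bool is a subclass of int in Python, so reject it explicitly
        if not isinstance(value, rules["type"]) or isinstance(value, bool):
            errors.append(f"'{leaf}' has wrong type {type(value).__name__}")
            continue
        if "range" in rules and not rules["range"][0] <= value <= rules["range"][1]:
            errors.append(f"'{leaf}'={value} outside range {rules['range']}")
        if "length" in rules and not rules["length"][0] <= len(value) <= rules["length"][1]:
            errors.append(f"'{leaf}' length {len(value)} outside {rules['length']}")
    # Leaves the schema does not define are rejected too
    for leaf in entry.keys() - schema.keys():
        errors.append(f"unknown leaf '{leaf}'")
    return errors


print(validate({"vlan-id": 100, "name": "users"}))  # []
print(validate({"vlan-id": 5000}))                  # ["'vlan-id'=5000 outside range (1, 4094)"]
print(validate({"name": "x", "vlan": 10}))
```

Only an entry that returns an empty list would be passed on for application; everything else is stopped before it reaches a device.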

NETCONF as a Structured Configuration Protocol

NETCONF is a protocol designed specifically for network configuration and management in automated environments. It provides a structured mechanism for retrieving and modifying configuration data on network devices.

NETCONF operates using a client-server model where the client sends structured requests and the server responds with structured data. These interactions are typically encoded in XML and are validated against YANG models.

A key feature of NETCONF is its use of configuration datastores, which hold different versions of a device's configuration. The running datastore is always present; the candidate and startup datastores are optional capabilities that a device advertises when it supports them. Each serves a specific role in managing changes.

NETCONF also supports transactional operations: a client can lock a datastore, stage edits in the candidate datastore, and then commit them as a single unit or discard them. This reduces the risk of partial or inconsistent configurations being deployed.
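To illustrate how datastores make changes transactional, here is a toy in-memory model in Python. It is a deliberate simplification: the `ToyDevice` class, its method names, and the flat dictionary standing in for a configuration tree are hypothetical and only loosely mirror the NETCONF `<lock>`, `<edit-config>`, `<commit>`, and `<discard-changes>` operations.

```python
import copy
from typing import Optional


class ToyDevice:
    """Toy model of NETCONF-style datastores (not a NETCONF implementation).

    Edits are staged in `candidate`; `running` changes only on commit, all at once.
    """

    def __init__(self, running: dict):
        self.running = running
        self.candidate = copy.deepcopy(running)
        self.lock_owner: Optional[str] = None

    def _check_lock(self, session: str) -> None:
        # Refuse to act if another session holds the lock
        if self.lock_owner not in (None, session):
            raise RuntimeError(f"datastore locked by {self.lock_owner}")

    def lock(self, session: str) -> None:
        # Loosely mirrors <lock>: one session at a time may stage changes
        self._check_lock(session)
        self.lock_owner = session

    def unlock(self, session: str) -> None:
        if self.lock_owner == session:
            self.lock_owner = None

    def edit_candidate(self, session: str, changes: dict) -> None:
        # Loosely mirrors <edit-config> targeting the candidate datastore
        self._check_lock(session)
        self.candidate.update(changes)

    def commit(self, session: str) -> None:
        # Loosely mirrors <commit>: the whole candidate replaces running in one step
        self._check_lock(session)
        self.running = copy.deepcopy(self.candidate)

    def discard_changes(self, session: str) -> None:
        # Loosely mirrors <discard-changes>: reset candidate to match running
        self._check_lock(session)
        self.candidate = copy.deepcopy(self.running)


dev = ToyDevice({"hostname": "edge-rtr-1", "ntp": "10.0.0.1"})
dev.lock("automation")
dev.edit_candidate("automation", {"ntp": "10.0.0.2", "domain": "corp.example"})
print(dev.running["ntp"])  # 10.0.0.1: staged edits are not live yet
dev.commit("automation")
print(dev.running["ntp"])  # 10.0.0.2
dev.unlock("automation")
```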

NETCONF Layered Communication Structure

NETCONF communication is organized into multiple layers that define how messages are structured and transmitted. These layers include content, operations, messages, and transport mechanisms.

The content layer defines the actual configuration data being manipulated. The operations layer defines the actions being performed, such as retrieving or modifying configuration data. The message layer defines how requests and responses are structured, while the transport layer ensures secure delivery of communication; SSH is the mandatory transport, conventionally on TCP port 830.

This layered structure provides a clear separation of concerns, making NETCONF both flexible and reliable for automation use cases.
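The layering is visible in an actual request. This Python sketch uses `xml.etree.ElementTree` to assemble a `<get-config>` request whose comments label the message, operation, and content layers. The message ID and interface name are arbitrary, and the transport layer (an SSH session with NETCONF framing) is deliberately left out; in practice a client library handles it.

```python
import xml.etree.ElementTree as ET

NC_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"     # NETCONF base namespace
IF_NS = "urn:ietf:params:xml:ns:yang:ietf-interfaces"  # namespace of the ietf-interfaces module
ET.register_namespace("nc", NC_NS)
ET.register_namespace("if", IF_NS)


def build_get_config(message_id: int, interface_name: str) -> str:
    # Messages layer: every request is an <rpc> whose message-id the reply echoes back
    rpc = ET.Element(f"{{{NC_NS}}}rpc", {"message-id": str(message_id)})

    # Operations layer: <get-config> against the running datastore
    get_config = ET.SubElement(rpc, f"{{{NC_NS}}}get-config")
    source = ET.SubElement(get_config, f"{{{NC_NS}}}source")
    ET.SubElement(source, f"{{{NC_NS}}}running")

    # Content layer: a subtree filter selecting YANG-modeled data for one interface
    flt = ET.SubElement(get_config, f"{{{NC_NS}}}filter", {"type": "subtree"})
    interfaces = ET.SubElement(flt, f"{{{IF_NS}}}interfaces")
    interface = ET.SubElement(interfaces, f"{{{IF_NS}}}interface")
    ET.SubElement(interface, f"{{{IF_NS}}}name").text = interface_name

    return ET.tostring(rpc, encoding="unicode")


# Transport layer not shown: a client would send this text over an SSH session
request = build_get_config(101, "GigabitEthernet1")
print(request)
```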

RESTCONF as a Web-Based Configuration Interface

RESTCONF provides a web-based alternative to NETCONF, using standard HTTP methods to interact with network devices. It is designed to offer a simpler and more familiar interface for automation systems that already use web technologies.

RESTCONF supports both JSON and XML data formats, making it flexible for different application environments. It maps HTTP methods such as GET, POST, PUT, PATCH, and DELETE onto configuration actions on network devices.

Unlike NETCONF, RESTCONF is stateless and does not maintain session-based locking mechanisms. This makes it more lightweight but less transactional in nature.

RESTCONF is often used in environments where integration with web applications and cloud services is required, as it aligns closely with modern API design principles.
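A sketch of how RESTCONF requests map onto HTTP, built with Python's standard `urllib.request.Request` but never sent. The device address comes from a documentation range and is hypothetical; authentication, TLS settings, and error handling are omitted. The `/restconf/data` root and the `application/yang-data+json` media type follow RFC 8040.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical device; 192.0.2.0/24 is a documentation range. "/restconf" is the common
# root, though RFC 8040 lets a device advertise a different one via /.well-known/host-meta.
BASE = "https://192.0.2.10/restconf/data"
YANG_JSON = "application/yang-data+json"


def restconf_request(method: str, path: str, body: Optional[dict] = None) -> urllib.request.Request:
    """Build (but do not send) a RESTCONF request for a YANG data resource."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    headers = {"Accept": YANG_JSON}
    if data is not None:
        headers["Content-Type"] = YANG_JSON
    return urllib.request.Request(f"{BASE}/{path}", data=data, headers=headers, method=method)


# A list entry is addressed by its key after "="; keys containing "/" must be percent-encoded
target = "ietf-interfaces:interfaces/interface=GigabitEthernet2"

read = restconf_request("GET", target)  # retrieve the entry
update = restconf_request(              # merge a change into it (PUT would replace it)
    "PATCH",
    target,
    {"ietf-interfaces:interface": [{"name": "GigabitEthernet2", "description": "user-vlan"}]},
)
remove = restconf_request("DELETE", target)  # delete the entry

for req in (read, update, remove):
    print(req.get_method(), req.full_url)
```

Each request is self-contained, which is what makes RESTCONF stateless: nothing about `read` affects how the device treats `update`.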

Interconnection Between YANG, NETCONF, and RESTCONF

YANG, NETCONF, and RESTCONF work together to form a complete network programmability stack. YANG defines the data structure, NETCONF provides a transactional configuration protocol, and RESTCONF offers a web-based interface for interacting with the same underlying data models.

This layered architecture allows network systems to be both structured and flexible. It enables automation tools to interact with devices using consistent data definitions while supporting multiple communication methods.

Together, these technologies form the foundation of modern network automation and programmable infrastructure, enabling scalable and dynamic enterprise network operations.

Rise of Data-Centric Networking in Enterprise Environments

Modern enterprise networking has shifted toward a data-centric operational model where configuration, state information, and policy definitions are treated as structured data rather than manual command sequences. This evolution is driven by the need to manage large-scale, distributed infrastructures that span on-premises data centers, cloud platforms, and hybrid connectivity models.

In traditional networking, configurations were applied directly to devices using command-line interfaces. Each device maintained its own configuration state, and consistency depended entirely on manual accuracy and operational discipline. As environments grew in complexity, this approach became unsustainable. Even minor inconsistencies between devices could lead to operational issues, security vulnerabilities, or performance degradation.

Data-centric networking resolves these challenges by abstracting configuration into structured representations that can be processed, validated, and applied programmatically. Instead of issuing device-specific commands, engineers define desired outcomes in a structured format. Automation systems then interpret these definitions and translate them into device-specific instructions.

This shift fundamentally changes how networks are designed and managed. The focus moves away from individual device configuration toward system-wide intent and policy enforcement. As a result, networks become more predictable, scalable, and easier to manage at enterprise scale.

Importance of Structured Data in Network Automation

Structured data plays a central role in enabling automation across modern network infrastructures. It provides a consistent framework for representing configuration and operational information in a way that can be interpreted by both humans and machines.

Without structured data, automation systems would struggle to interpret configuration intent reliably. Inconsistent formatting, ambiguous syntax, and device-specific variations would introduce complexity and reduce reliability. Structured formats largely eliminate these issues by enforcing strict rules for how data is organized and validated.

In automation workflows, structured data acts as the communication layer between orchestration systems and network devices. It defines not only what configuration should be applied but also how it should be interpreted and validated. This ensures consistency across diverse environments and reduces the likelihood of configuration errors.

Structured data also enables interoperability between different systems. In enterprise environments, multiple tools often interact with the same infrastructure, including monitoring systems, orchestration platforms, and configuration engines. A standardized data structure ensures that all these systems interpret network state in a consistent manner.

Hierarchical Representation of Network Configurations

Most structured data models used in networking are hierarchical in nature. This means that data is organized in a tree-like structure, where high-level elements contain nested sub-elements that define specific attributes.

This hierarchical approach closely mirrors the structure of real-world networks. For example, a network device may contain interfaces, routing protocols, security policies, and system settings. Each of these components can be further broken down into detailed sub-elements.

Hierarchical models provide several advantages. They improve readability by organizing complex configurations into logical groupings. They also enhance scalability, allowing additional configuration elements to be added without disrupting existing structures.

From an automation perspective, hierarchical models make it easier to map configuration intent to device behavior. Automation systems can traverse these structures systematically, applying changes at the appropriate level of detail.
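Systematic traversal of a hierarchy can be sketched in a few lines of Python. The configuration tree below is illustrative, and the `walk` helper is hypothetical: it flattens the tree into path/value pairs, which is one simple way an automation tool can locate the level at which a change belongs.

```python
from typing import Any, Iterator, Tuple

# Illustrative nested configuration for one device
device_config = {
    "system": {"hostname": "edge-rtr-1", "ntp": {"server": "10.0.0.1"}},
    "interfaces": {
        "GigabitEthernet1": {"description": "uplink-to-core", "mtu": 1500},
        "GigabitEthernet2": {"description": "user-vlan", "mtu": 1500},
    },
}


def walk(node: Any, path: Tuple[str, ...] = ()) -> Iterator[Tuple[str, Any]]:
    """Depth-first traversal yielding ('/a/b/c', leaf_value) for every leaf in the tree."""
    if isinstance(node, dict):
        for key, child in node.items():
            yield from walk(child, path + (key,))
    else:
        yield "/" + "/".join(path), node


for leaf_path, value in walk(device_config):
    print(leaf_path, "=", value)

# Target a change at the right level: only the MTU leaves under /interfaces
mtu_paths = [p for p, _ in walk(device_config) if p.startswith("/interfaces/") and p.endswith("/mtu")]
print(mtu_paths)
```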

Role of Data Validation in Automated Networks

Data validation is a critical component of network automation systems. It ensures that configuration changes are correct, consistent, and compliant with predefined rules before they are applied to devices.

Validation occurs at multiple levels. At the structural level, data must conform to the required format. At the semantic level, values must be within acceptable ranges or match defined constraints. At the policy level, configurations must align with organizational rules and operational standards.

This multi-layer validation process reduces the risk of misconfigurations that could lead to service disruption or security vulnerabilities. It also ensures that automation systems do not introduce invalid or inconsistent states into the network.

By enforcing validation before deployment, network automation systems improve reliability and reduce operational risk. This is especially important in large-scale environments where manual verification is not feasible.
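The three validation levels described above can be sketched as separate checks in Python. The change request, the MTU limits of 68 to 9216 bytes, and the rule that every interface needs a description are assumptions chosen for illustration, not values from any particular platform or organization.

```python
import ipaddress

# A hypothetical change request for one interface
change = {"interface": "GigabitEthernet3", "ip": "10.20.0.1/24", "mtu": 9300, "description": ""}


def structural(c):
    # Structure: required keys present with the expected Python types
    required = {"interface": str, "ip": str, "mtu": int, "description": str}
    return [f"{k}: missing or not {t.__name__}" for k, t in required.items()
            if not isinstance(c.get(k), t)]


def semantic(c):
    # Semantics: values must be meaningful, e.g. a parseable address and an MTU in range
    errors = []
    try:
        ipaddress.ip_interface(c["ip"])
    except ValueError:
        errors.append(f"ip: '{c['ip']}' is not a valid address/prefix")
    if not 68 <= c["mtu"] <= 9216:  # assumed platform limits
        errors.append(f"mtu: {c['mtu']} outside 68-9216")
    return errors


def policy(c):
    # Organizational policy (hypothetical rule): every interface must carry a description
    return [] if c["description"].strip() else ["description: required by policy"]


def validate(c):
    # Stop at the first failing level: semantic checks assume the structure is sound
    for check in (structural, semantic, policy):
        errors = check(c)
        if errors:
            return check.__name__, errors
    return "ok", []


print(validate(change))  # ('semantic', ['mtu: 9300 outside 68-9216'])
```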

Serialization and Data Exchange Mechanisms

Serialization refers to the process of converting structured data into a format that can be transmitted across network systems and then reconstructed at the destination. In network automation, serialization enables communication between orchestration platforms and network devices.

Serialized data is transmitted over transport protocols and then deserialized by the receiving system. This process ensures that configuration data maintains its structure and meaning during transmission.

Different serialization formats are used depending on system requirements. Some formats prioritize readability, while others prioritize performance or compactness. Regardless of format, the goal is to maintain consistency and accuracy in data exchange.

Serialization is essential for distributed network management systems, where multiple components must communicate reliably across different environments.
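A minimal Python illustration of serialization and deserialization using only the standard library. The route record is hypothetical. The example also shows a practical difference between formats: JSON preserves the integer type through the round trip, while plain XML carries everything as text, so the receiver needs a schema to restore types.

```python
import json
import xml.etree.ElementTree as ET

# Structured data as it exists inside an automation tool (hypothetical route)
route = {"prefix": "10.50.0.0/16", "next-hop": "192.0.2.1", "metric": 20}

# Serialize to JSON text (what would travel over the wire), then deserialize it back
wire_json = json.dumps(route)
assert json.loads(wire_json) == route  # structure and types survive the round trip

# The same data serialized as XML: every value becomes element text
elem = ET.Element("route")
for key, value in route.items():
    ET.SubElement(elem, key).text = str(value)
wire_xml = ET.tostring(elem, encoding="unicode")

# Deserializing XML returns strings, so the receiver needs a schema to restore types
back = {child.tag: child.text for child in ET.fromstring(wire_xml)}
print(back["metric"], type(back["metric"]).__name__)  # 20 str
```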

XML as a Structured Configuration Format

XML (Extensible Markup Language) is one of the earliest and most widely used structured data formats in network automation. It represents data using nested tags that define elements and their relationships.

XML is highly structured and enforces strict syntax rules. Each element must be properly opened and closed, and hierarchical relationships are clearly defined through nesting. This structure makes XML highly suitable for representing complex configuration data.

In networking environments, XML is often used for configuration exchange and API communication. Its structured nature allows it to be validated against schemas, ensuring consistency and correctness.

Although XML can be verbose, its clarity and strict structure make it reliable for systems that require precise data representation.

JSON as a Lightweight Data Interchange Format

JSON (JavaScript Object Notation) is a lightweight alternative to XML that has become widely adopted in modern network automation systems. It uses key-value pairs and nested objects to represent structured data.

JSON is simpler and more compact than XML, making it easier to parse and process. It integrates seamlessly with modern programming languages, particularly those used in automation and web development.

In network environments, JSON is commonly used in API responses and configuration exchanges. Its simplicity makes it ideal for high-speed automation workflows where efficiency is important.

Despite its simplicity, JSON is capable of representing complex hierarchical data structures, making it suitable for a wide range of automation use cases.

YAML as a Human-Oriented Configuration Format

YAML (originally "Yet Another Markup Language", now officially the recursive "YAML Ain't Markup Language") is designed to be highly readable and user-friendly. It uses indentation-based structure rather than tags or braces to represent hierarchy.

This design makes YAML particularly suitable for configuration files and automation scripts. Engineers can easily read and modify YAML files without needing specialized parsing tools.

In network automation, YAML is commonly used in orchestration tools and infrastructure configuration definitions; Ansible playbooks, for example, are written in YAML. Its readability makes it ideal for defining automation workflows and system configurations.

YAML emphasizes clarity and simplicity, making it a preferred choice for environments where configurations are frequently reviewed or modified by engineers.

Comparison of XML, JSON, and YAML in Automation Workflows

Each structured data format serves a different role in network automation. XML provides strict structure and validation capabilities, making it suitable for formal configuration exchanges. JSON offers lightweight and efficient data representation, making it ideal for APIs and real-time communication. YAML prioritizes readability and is often used for configuration files and orchestration templates.

The choice of format depends on the specific requirements of the automation system. Some environments prioritize performance, while others prioritize readability or strict validation.

Despite their differences, all three formats serve the same fundamental purpose: enabling structured communication between systems in a consistent and machine-readable way.

Introduction to Data Modeling in Networking

Data modeling is a more advanced concept that defines how network data should be structured, interpreted, and validated. Unlike simple data formats, data models provide a formal definition of network configuration elements and their relationships.

A data model acts as a blueprint for how network systems should represent configuration and operational data. It defines what data exists, how it is organized, and what constraints apply.

This abstraction allows automation systems to interact with network devices consistently across hardware platforms and vendors, provided those devices implement the same data models.

YANG as a Network Data Modeling Language

YANG (Yet Another Next Generation) is a specialized data modeling language designed specifically for networking environments. Unlike XML, JSON, or YAML, which are data representation formats, YANG defines the structure and rules for network data.

YANG models describe how configuration and operational data are organized within a network system. They define data hierarchies, relationships, constraints, and types.

These models serve as authoritative definitions of the configuration and operational data a device exposes, ensuring consistency across devices and platforms. They are used by automation systems to validate and structure configuration data before it is applied.

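YANG enables interoperability by providing a standardized way to define network data structures. Standards-based models, such as those published by the IETF and OpenConfig, can be implemented across vendors, although many vendors also publish native models specific to their own platforms.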

Relationship Between YANG Models and Device Configuration

YANG models act as intermediaries between automation systems and network devices. When an automation tool generates configuration data, it must conform to the structure defined by the relevant YANG model.

This ensures that configuration changes are valid and consistent before being applied. If the data does not match the model, it is rejected rather than applied; a NETCONF server, for example, answers with an rpc-error reply.

This validation process reduces errors and improves reliability in automated network environments. It also ensures that all systems interpret configuration data in a consistent manner.

NETCONF as a Configuration Management Protocol

NETCONF (Network Configuration Protocol) is designed to provide a standardized mechanism for managing network device configurations. It enables systems to retrieve, modify, and manage configuration data using structured communication.

NETCONF operates using a client-server model. The client sends structured requests, and the server responds with configuration data or status information. These interactions are typically encoded in XML and validated against YANG models.

One of the key features of NETCONF is its support for configuration datastores. These datastores represent different states of configuration within a device, allowing controlled management of changes.

NETCONF also supports transactional operations, enabling configuration changes to be applied in a controlled and reversible manner.

Operational Structure of NETCONF Layers

NETCONF communication is organized into multiple layers that define how messages are structured and transmitted. These layers include content, operations, messages, and transport.

The content layer represents the actual configuration data being manipulated. The operations layer defines the type of action being performed, such as retrieving or modifying configuration data. The message layer structures the communication between systems, while the transport layer ensures secure delivery.

This layered approach provides clarity and modularity, making NETCONF a robust protocol for network automation.

RESTCONF as a Web-Based Alternative

RESTCONF provides a simpler, web-based approach to network configuration. It uses HTTP methods to interact with network devices and supports both JSON and XML data formats.

RESTCONF aligns closely with modern web application design principles, making it easier to integrate with cloud services and automation tools.

Unlike NETCONF, RESTCONF is stateless and does not maintain session-based control mechanisms. This makes it lighter but less transactional in nature.

RESTCONF is widely used in environments where integration with web-based systems is required.

Integration of Data Models and Configuration Protocols

YANG, NETCONF, and RESTCONF together form a unified ecosystem for network automation. YANG defines the structure of data, NETCONF provides a transactional configuration protocol, and RESTCONF offers a web-based interface.

This integration ensures that network configuration is both structured and flexible. It allows automation systems to interact with devices using consistent data definitions while supporting multiple communication methods.

This layered architecture is fundamental to modern network programmability and enterprise automation strategies.

Transition from Manual Networking to API-Driven Infrastructure Control

Enterprise networking has progressively shifted toward API-driven infrastructure control, where network devices are no longer managed primarily through direct command-line interaction but instead through programmable interfaces exposed over standardized communication methods. This shift is a defining characteristic of modern infrastructure engineering and represents a move toward treating network devices as programmable entities rather than static configuration targets.

In earlier operational models, network changes were executed manually by logging into individual devices and applying configuration commands sequentially. While this approach provided direct control, it introduced significant limitations in scalability, consistency, and speed. As networks expanded in size and complexity, manual methods became increasingly inefficient and prone to human error.

API-driven control addresses these challenges by introducing a structured communication layer between automation systems and network infrastructure. Instead of interacting directly with device interfaces, engineers and automation tools communicate with devices through standardized requests that define desired actions or states. These requests are processed by the device and translated into internal configuration changes.

This abstraction allows networks to be managed as systems rather than collections of independent devices. It also enables integration with broader IT ecosystems, including orchestration platforms, cloud services, and application delivery systems.

Operational Mechanics of Programmable Network Interfaces

Programmable network interfaces operate by exposing structured endpoints that accept requests and return responses based on defined data models. These interfaces allow external systems to retrieve configuration data, modify operational parameters, and monitor device state.

The interaction between automation systems and network devices follows a predictable pattern. A request is generated by an external system, transmitted to the device, processed according to internal logic and data models, and then returned as a structured response. This cycle enables consistent and repeatable interactions that can be scaled across large infrastructures.

Depending on the protocol, these interfaces are either stateless or session-based. RESTCONF is stateless: each HTTP request stands on its own, which improves scalability by removing the need for session management. NETCONF maintains a persistent session that can hold datastore locks, which provides stronger transactional guarantees when they are required.

The key advantage of programmable interfaces lies in their ability to standardize communication across heterogeneous environments. When devices from different vendors implement the same standard data models, they expose consistent interfaces, enabling unified automation workflows.

Role of Structured Communication in Network Automation

Structured communication is essential for ensuring that automation systems and network devices interpret information consistently. Without structure, communication between systems would be ambiguous, leading to misinterpretation of configuration intent.

Structured communication relies on predefined formats that define how data is organized, transmitted, and interpreted. These formats ensure that both sender and receiver understand the meaning of exchanged information without requiring manual interpretation.

In network automation, structured communication enables predictable behavior. Automation systems can generate configuration requests knowing that devices will interpret them according to shared rules and models. This predictability is critical for maintaining stability in large-scale environments.

Structured communication also enables validation at multiple stages of the automation workflow. Data can be checked for correctness before transmission, during processing, and after application. This layered validation reduces the likelihood of configuration errors propagating through the system.
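
Validation before transmission can be illustrated with a small schema check. This is a sketch only: INTERFACE_SCHEMA, its field names, and the MTU range of 68 to 9216 are hypothetical illustration values, and a production system would validate against a formal data model such as a YANG module rather than a hand-written table.

```python
# Hypothetical schema for an interface entry: field -> (expected type, value check).
# A real deployment would validate against a YANG model instead.
INTERFACE_SCHEMA = {
    "name": (str, lambda v: len(v) > 0),
    "mtu": (int, lambda v: 68 <= v <= 9216),
    "enabled": (bool, lambda v: True),
}


def validate_entry(entry: dict, schema: dict) -> list[str]:
    """Return a list of validation errors; an empty list means the entry is valid."""
    errors = []
    for field, (expected_type, check) in schema.items():
        if field not in entry:
            errors.append(f"missing field: {field}")
        elif not isinstance(entry[field], expected_type):
            errors.append(f"{field}: expected {expected_type.__name__}")
        elif not check(entry[field]):
            errors.append(f"{field}: value {entry[field]!r} out of range")
    # Fields the schema does not know about are rejected rather than ignored.
    for field in entry.keys() - schema.keys():
        errors.append(f"unknown field: {field}")
    return errors
```

Running the same check on the receiving side, and again after the change is applied, gives the layered validation described above.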

Configuration Lifecycle in Automated Network Systems

In automated environments, configuration changes follow a defined lifecycle that ensures consistency and reliability. This lifecycle begins with intent definition, where desired network behavior is specified in a structured format.

Once intent is defined, automation systems translate it into structured configuration data. This data is validated against predefined models to ensure correctness and compatibility. After validation, the configuration is transmitted to network devices using programmable interfaces.

Upon receipt, devices process the configuration and apply changes to their internal state. This process may involve updating routing tables, modifying interface parameters, or adjusting policy rules.

After changes are applied, systems typically perform verification to ensure that the resulting state matches the intended configuration. This feedback loop is essential for maintaining consistency between desired and actual network states.
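
The lifecycle stages can be sketched end to end. This is a minimal example under assumptions: FakeDevice stands in for a real device reached over an API, and the intent format (a mapping of VLAN IDs to names) and the render, validate_vlans, and deploy helpers are hypothetical. The only real-world constraint used is the 802.1Q VLAN ID range of 1 to 4094.

```python
from dataclasses import dataclass, field


@dataclass
class FakeDevice:
    """Hypothetical stand-in for a network device; real devices are reached over an API."""
    name: str
    config: dict = field(default_factory=dict)

    def apply(self, changes: dict) -> None:
        self.config.update(changes)


def render(intent: dict) -> dict:
    """Translate high-level intent into device-level configuration data."""
    return {f"vlan{vid}": {"name": name} for vid, name in intent["vlans"].items()}


def validate_vlans(config: dict) -> None:
    """Reject VLAN IDs outside the 802.1Q usable range."""
    for key in config:
        vid = int(key.removeprefix("vlan"))
        if not 1 <= vid <= 4094:
            raise ValueError(f"invalid VLAN ID {vid}")


def deploy(intent: dict, device: FakeDevice) -> bool:
    desired = render(intent)   # 1. intent -> structured configuration data
    validate_vlans(desired)    # 2. validate before transmission
    device.apply(desired)      # 3. apply to the device
    # 4. verify: every desired item must now be present with the same value
    return all(device.config.get(k) == v for k, v in desired.items())
```

A False result from deploy signals drift between desired and actual state, which is the point where a real system would alert or roll back.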

The configuration lifecycle ensures that changes are controlled, traceable, and reversible when necessary. It also provides a framework for integrating automation into operational processes without compromising reliability.

Role of Transactional Operations in Network Configuration

Transactional operations play a critical role in ensuring that configuration changes are applied consistently across network devices. A transaction groups multiple configuration actions into a single logical operation that either succeeds entirely or fails without partial application.

This approach prevents the inconsistent states that could arise if only part of a configuration were applied successfully. In complex network environments, even small inconsistencies can lead to significant operational issues.

Transactional mechanisms allow automation systems to stage configuration changes before committing them. This staging process enables validation and verification before changes become active.

If any part of a transaction fails validation or execution, the entire operation can be rolled back. This ensures that the network remains in a stable and predictable state.
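
All-or-nothing behavior can be shown with a small transaction object. This is a sketch, not a protocol implementation: the Transaction class and its stage and commit methods are hypothetical, and the rollback here simply restores a snapshot taken before any change was applied.

```python
import copy


class Transaction:
    """Apply a group of changes atomically: all succeed or none remain."""

    def __init__(self, config: dict):
        self.config = config
        self.changes = []

    def stage(self, key, value, check=lambda v: True):
        # Record the change with a validation function; nothing is applied yet.
        self.changes.append((key, value, check))

    def commit(self) -> bool:
        snapshot = copy.deepcopy(self.config)  # state to restore on failure
        try:
            for key, value, check in self.changes:
                if not check(value):
                    raise ValueError(f"validation failed for {key}")
                self.config[key] = value
        except ValueError:
            # Roll back in place so callers holding a reference see the old state.
            self.config.clear()
            self.config.update(snapshot)
            return False
        return True
```

In the failing case, a change staged earlier in the same transaction is undone as well, so no partial configuration survives.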

Transactional control is particularly important in environments where multiple dependencies exist between configuration elements. It ensures that changes are applied atomically, preserving system integrity.

Role of Network Datastores in Configuration Management

Network datastores represent structured storage areas within devices that hold configuration and operational data. These datastores allow systems to manage different states of configuration independently.

Typically, multiple datastores represent different stages of the configuration lifecycle. In NETCONF, for example, the running datastore holds the currently active configuration, while an optional candidate datastore holds changes that are being prepared or reviewed before they are committed.

This separation allows changes to be prepared, validated, and reviewed before being applied to the live system. It also enables rollback capabilities in case of configuration errors or unexpected behavior.
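
A toy model makes the separation between datastores concrete. It is a hypothetical sketch: the Datastores class and its method names loosely echo NETCONF operations such as commit and discard-changes, but this is not NETCONF and the one-level rollback history is an illustrative simplification.

```python
import copy


class Datastores:
    """Toy model of separate running and candidate configuration datastores."""

    def __init__(self, running: dict):
        self.running = copy.deepcopy(running)
        self.candidate = copy.deepcopy(running)  # starts as a copy of running
        self.history = []                        # previous running configurations

    def edit_candidate(self, key, value):
        self.candidate[key] = value              # the live configuration is untouched

    def discard_changes(self):
        self.candidate = copy.deepcopy(self.running)

    def commit(self):
        self.history.append(copy.deepcopy(self.running))
        self.running = copy.deepcopy(self.candidate)

    def rollback(self):
        if not self.history:
            raise RuntimeError("no previous configuration to roll back to")
        self.running = self.history.pop()
        self.candidate = copy.deepcopy(self.running)
```

Edits accumulate in the candidate until commit is called, and the saved history is what makes rollback possible after an unexpected result.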

Datastores provide a structured way to manage configuration state over time. They ensure that changes are not applied directly to operational systems without proper validation and control.

This model improves reliability and reduces the risk associated with configuration changes in large-scale environments.

REST-Based Communication and Web-Oriented Networking Models

REST-based communication models have become widely adopted in network automation due to their simplicity and compatibility with web technologies. These models use standard HTTP methods to perform operations on network devices.

Each request corresponds to a specific action, such as retrieving configuration data, updating settings, or deleting resources. Responses are returned in structured formats that can be easily processed by automation systems.
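
The following sketch builds, but does not send, two RESTCONF-style requests with Python's standard library. The /restconf/data root and the application/yang-data+json media type come from the RESTCONF specification (RFC 8040), and ietf-interfaces is a standard YANG module; the device address 192.0.2.10 is a documentation placeholder, and build_request is a hypothetical helper.

```python
import json
import urllib.request
from typing import Optional

# Placeholder device address (documentation range); "/restconf/data" is the
# RESTCONF data resource root.
BASE = "https://192.0.2.10/restconf/data"
MEDIA = "application/yang-data+json"


def build_request(method: str, path: str, body: Optional[dict] = None) -> urllib.request.Request:
    """Build (but do not send) an HTTP request for a RESTCONF resource."""
    data = json.dumps(body).encode() if body is not None else None
    headers = {"Accept": MEDIA}
    if data is not None:
        headers["Content-Type"] = MEDIA
    return urllib.request.Request(f"{BASE}/{path}", data=data, headers=headers, method=method)


# Read all interfaces.
read = build_request("GET", "ietf-interfaces:interfaces")

# Merge a new description into one interface entry.
update = build_request(
    "PATCH",
    "ietf-interfaces:interfaces/interface=GigabitEthernet1",
    {"ietf-interfaces:interface": [{"name": "GigabitEthernet1", "description": "uplink"}]},
)
```

Sending either request would require urllib.request.urlopen plus credentials and a trusted certificate; those are left out so the example stays self-contained.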

REST-based models are stateless, meaning that each request contains all necessary information for processing. This simplifies scalability and reduces dependency on persistent sessions.

The use of web-based communication allows integration with a wide range of tools and platforms, including cloud services and application programming environments. This makes REST-based networking highly flexible and adaptable.

REST-oriented models also support multiple data encodings; RESTCONF, for example, accepts both XML and JSON, allowing each system to choose the representation best suited to its needs. This flexibility enhances interoperability across diverse environments.

Interaction Between REST-Based Systems and Network Models

REST-based systems interact with network devices by mapping HTTP operations to configuration actions defined by underlying data models. These mappings ensure that web-based requests correspond to valid network operations.

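For example, in RESTCONF a GET request reads configuration or operational data, POST creates a new resource, PUT creates or replaces a resource, PATCH merges changes into an existing resource, and DELETE removes it. Because these mappings are defined by the protocol specification, clients and servers interpret them consistently.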
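
The method-to-operation mapping can be modeled with an in-memory store. This is a sketch under assumptions: the dispatch function and the path-keyed store are hypothetical, and the status codes returned are illustrative, loosely following RESTCONF (RFC 8040) conventions rather than reproducing its full error handling.

```python
from typing import Optional

# Toy datastore keyed by resource path; a real RESTCONF server maps these
# paths onto YANG-modeled data.
store: dict[str, dict] = {}


def dispatch(method: str, path: str, body: Optional[dict] = None):
    """Apply an HTTP method to the store and return (status, data)."""
    if method == "GET":                      # read
        if path not in store:
            return 404, None
        return 200, dict(store[path])
    if method == "POST":                     # create; the resource must not exist yet
        if path in store:
            return 409, None
        store[path] = dict(body)
        return 201, None
    if method == "PUT":                      # create or replace
        created = path not in store
        store[path] = dict(body)
        return (201 if created else 204), None
    if method == "PATCH":                    # merge into an existing resource
        if path not in store:
            return 404, None
        store[path].update(body)
        return 204, None
    if method == "DELETE":                   # remove
        if store.pop(path, None) is None:
            return 404, None
        return 204, None
    return 405, None                         # method not supported
```

The difference between PUT and PATCH is the important design point: PUT discards fields not present in the body, while PATCH keeps them.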

Data models play a critical role in ensuring that REST-based communication aligns with device capabilities. Without standardized models, communication would lack structure and reliability.

This integration allows network devices to be managed using familiar web technologies while maintaining strict control over configuration behavior.

Automation Workflows in Enterprise Networking

Automation workflows define sequences of operations that manage network configuration and state. These workflows integrate data models, communication protocols, and orchestration logic into a unified process.

A typical workflow begins with intent definition, followed by data generation, validation, transmission, and verification. Each stage is designed to ensure accuracy and consistency.

Workflows can be triggered manually or automatically based on system events. For example, changes in network traffic, security policies, or application demands can trigger automated adjustments.
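
Event-driven triggering can be sketched as a simple registry of handlers. The event names, payload fields, and the on, emit, add_capacity, and push_acl functions are hypothetical; a real system would receive events from telemetry, a policy engine, or a ticketing system, and calling emit directly stands in for a manual trigger.

```python
from typing import Callable

# Registry of workflows keyed by event type.
handlers: dict[str, list[Callable[[dict], str]]] = {}


def on(event_type: str):
    """Decorator that registers a workflow to run when an event occurs."""
    def register(func):
        handlers.setdefault(event_type, []).append(func)
        return func
    return register


def emit(event_type: str, payload: dict) -> list[str]:
    """Run every workflow registered for this event and collect the results."""
    return [handler(payload) for handler in handlers.get(event_type, [])]


@on("link_utilization_high")
def add_capacity(event: dict) -> str:
    return f"enable backup link on {event['device']}"


@on("security_policy_changed")
def push_acl(event: dict) -> str:
    return f"push ACL {event['acl']} to all edge devices"
```

For example, emit("link_utilization_high", {"device": "edge-01"}) returns ["enable backup link on edge-01"]; in practice each handler would start a deployment workflow instead of returning a string.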

Automation workflows reduce the need for manual intervention and improve operational efficiency. They also enable rapid response to changing conditions in dynamic environments.

In large-scale infrastructures, workflows are often orchestrated centrally to ensure consistency across multiple systems and devices.

Role of Scripting in Network Automation

Scripting plays a crucial role in network automation by enabling programmable control over network devices and systems. Scripts are used to generate configuration data, interact with APIs, and process operational information.

Scripting languages allow engineers to automate repetitive tasks and integrate multiple systems into cohesive workflows. This reduces manual effort and improves consistency.
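
A common repetitive task is generating per-site configuration from shared inputs. In this sketch the SITES inventory, the NTP server addresses, and the output structure are hypothetical; in practice the inventory might come from a source of truth such as an IPAM or CMDB.

```python
import json

# Hypothetical site inventory.
SITES = [
    {"hostname": "branch-01", "mgmt_ip": "10.1.0.1", "vlan": 101},
    {"hostname": "branch-02", "mgmt_ip": "10.2.0.1", "vlan": 102},
]


def build_config(site: dict) -> dict:
    """Produce the same structured configuration shape for every site."""
    return {
        "hostname": site["hostname"],
        "ntp_servers": ["10.0.0.10", "10.0.0.11"],  # shared baseline values
        "vlans": [{"id": site["vlan"], "name": f"users-{site['vlan']}"}],
        "management": {"ip": site["mgmt_ip"]},
    }


configs = {s["hostname"]: build_config(s) for s in SITES}
print(json.dumps(configs["branch-02"], indent=2))
```

Adding a site means adding one inventory entry; every generated configuration keeps the same baseline, which is where the consistency gain comes from.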

Scripts often interact with network APIs to retrieve data, apply configurations, or monitor system state. This interaction enables dynamic control over network behavior.

Scripting also supports customization of automation workflows, allowing organizations to tailor processes to specific operational requirements.

Integration of Automation Frameworks in Enterprise Systems

Automation frameworks provide structured environments for managing network automation workflows. These frameworks define how scripts, data models, and communication protocols interact.

They enable centralized control over distributed infrastructure and provide tools for managing configuration, monitoring, and orchestration tasks.

Frameworks often include modules for validation, execution, and reporting. These components ensure that automation workflows are reliable and traceable.
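
The validation, execution, and reporting stages can be chained in a small pipeline. This sketch is not modeled on any specific framework: run_pipeline, Report, and the example check and push functions are hypothetical, and push simulates a timeout on one device.

```python
from dataclasses import dataclass, field


@dataclass
class Report:
    succeeded: list = field(default_factory=list)
    failed: dict = field(default_factory=dict)


def run_pipeline(tasks: dict, validate, execute) -> Report:
    """Validate each task, execute the valid ones, and record every outcome."""
    report = Report()
    for device, config in tasks.items():
        problem = validate(config)
        if problem:
            report.failed[device] = f"validation: {problem}"
            continue
        try:
            execute(device, config)
            report.succeeded.append(device)
        except Exception as exc:  # record the failure instead of aborting the run
            report.failed[device] = f"execution: {exc}"
    return report


# Hypothetical validation and execution modules.
def check(cfg: dict):
    return None if "hostname" in cfg else "hostname missing"


def push(device: str, cfg: dict) -> None:
    if device == "r3":
        raise ConnectionError("timeout")


report = run_pipeline({"r1": {"hostname": "r1"}, "r2": {}, "r3": {"hostname": "r3"}}, check, push)
```

Here report.succeeded is ["r1"], while r2 is recorded as a validation failure and r3 as an execution failure, which gives the traceability the framework is meant to provide.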

By integrating multiple automation components, frameworks provide a unified platform for managing complex network environments.

Role of APIs in Network Orchestration Systems

APIs serve as the primary interface between automation systems and network infrastructure. They define how external systems can interact with devices and retrieve or modify configuration data.

APIs provide a standardized way to expose device functionality, enabling consistent interaction across different platforms. This standardization is essential for large-scale automation.

In orchestration systems, APIs are used to coordinate actions across multiple devices and services. They allow systems to manage complex workflows that span multiple layers of infrastructure.
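
Coordinating one change across many devices can be sketched with a thread pool. The push_config function is a hypothetical stand-in for a NETCONF or RESTCONF call, and the worker count of 8 is an arbitrary illustrative default.

```python
from concurrent.futures import ThreadPoolExecutor


def push_config(device: str, config: dict) -> tuple[str, bool]:
    """Hypothetical API call; a real orchestrator would contact the device here."""
    return device, bool(config)


def orchestrate(devices: list[str], config: dict, max_workers: int = 8) -> dict:
    """Push one change to many devices in parallel and gather the results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = pool.map(lambda d: push_config(d, config), devices)
        return dict(results)
```

Threads suit this workload because each call mostly waits on the network; the collected results let the orchestrator decide whether to proceed to the next stage or roll back.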

APIs also enable integration with external systems such as monitoring tools, analytics platforms, and cloud services.

Security Considerations in Programmable Networking

Security is a critical aspect of programmable networking environments. Since automation systems have direct access to network configurations, ensuring secure communication is essential.

Secure transport mechanisms protect data during transmission; NETCONF typically runs over SSH, and RESTCONF runs over HTTPS (TLS). Authentication and authorization controls ensure that only authorized systems can modify network configurations.
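
The transport, authentication, and authorization controls can be illustrated with the standard library. This is a sketch under assumptions: secure_request, authorize, and the PERMISSIONS role table are hypothetical, and HTTP Basic authentication is used only for brevity; deployments may rely on client certificates or tokens instead.

```python
import base64
import ssl
import urllib.request
from urllib.parse import urlparse

# Hypothetical role table: which roles may use which HTTP methods.
PERMISSIONS = {
    "viewer": {"GET"},
    "operator": {"GET", "PATCH"},
    "admin": {"GET", "PATCH", "PUT", "POST", "DELETE"},
}


def authorize(role: str, method: str) -> bool:
    """Allow a method only if the role explicitly grants it."""
    return method in PERMISSIONS.get(role, set())


def secure_request(url: str, username: str, password: str) -> tuple[urllib.request.Request, ssl.SSLContext]:
    """Build an authenticated request, refusing any non-TLS transport."""
    if urlparse(url).scheme != "https":
        raise ValueError("configuration APIs must be reached over HTTPS")
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    request = urllib.request.Request(url, headers={"Authorization": f"Basic {token}"})
    # create_default_context() verifies server certificates and hostnames.
    context = ssl.create_default_context()
    return request, context
```

Checking authorize before building the request keeps a read-only account from ever issuing a configuration change.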

Validation mechanisms help prevent unauthorized or malformed configuration changes from being applied. These controls are essential for maintaining network integrity.

Security considerations extend to automation workflows, ensuring that scripts and orchestration systems operate within defined boundaries.

Telemetry and Data-Driven Network Operations

Telemetry refers to the continuous collection of operational data from network devices. This data is used to monitor performance, detect anomalies, and inform automation decisions.

In data-driven networks, telemetry provides real-time insights into network behavior. Automation systems can use this data to adjust configurations dynamically.

Telemetry enables proactive network management by identifying issues before they impact users. It also supports optimization of network performance based on observed conditions.
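
Anomaly detection on a telemetry stream can be as simple as a rolling statistical threshold. The detect_anomalies function, its window of 5 samples, and its threshold of 3 standard deviations are illustrative assumptions; production systems often use more robust methods.

```python
from statistics import mean, stdev


def detect_anomalies(samples: list[float], window: int = 5, threshold: float = 3.0) -> list[int]:
    """Flag indices whose value deviates from the preceding window
    by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip flat windows, where the standard deviation is zero.
        if sigma > 0 and abs(samples[i] - mu) > threshold * sigma:
            anomalies.append(i)
    return anomalies
```

For interface utilization samples [40, 42, 41, 43, 42, 41, 95, 42], the function returns [6], the index of the spike; that result could then trigger an automated workflow.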

The integration of telemetry with automation systems creates a feedback loop that enhances network intelligence and responsiveness.

Evolution Toward Fully Programmable Infrastructure

Modern enterprise networks are evolving toward fully programmable infrastructures where configuration, monitoring, and optimization are all handled through automated systems.

This evolution represents a convergence of networking and software engineering disciplines. Networks are no longer static systems but dynamic environments controlled through programmable logic.

Fully programmable infrastructure enables rapid scaling, improved reliability, and greater operational efficiency. It also supports integration with cloud-native architectures and distributed application environments.

As this evolution continues, network engineers increasingly operate in roles that combine infrastructure knowledge with programming and automation expertise.

Conclusion

The evolution of enterprise networking toward automation and programmability represents a structural transformation rather than a simple feature enhancement. Across modern infrastructures, the role of the network engineer is no longer confined to device configuration and protocol troubleshooting. Instead, it now includes understanding how systems interact through data models, APIs, and automation workflows that define and control network behavior at scale. This shift is driven by the increasing complexity of enterprise environments, where static and manually managed configurations are no longer sufficient to meet operational demands.

At the core of this transformation is the separation of intent from implementation. In traditional networking, engineers directly implemented configurations on individual devices, translating design requirements into CLI commands. This approach tightly coupled human interpretation with device-level execution. In contrast, modern programmable networks introduce abstraction layers where intent is expressed in structured form, and automation systems determine how that intent is applied across infrastructure components. This separation improves consistency, reduces operational overhead, and enables repeatable deployments across distributed environments.

Data modeling plays a central role in enabling this abstraction. Structured models define how network elements are represented, how they relate to each other, and what constraints govern their behavior. These models ensure that configurations are not only syntactically correct but also semantically valid within the context of the network system. By enforcing structure at the model level, networks gain a form of built-in governance that reduces misconfigurations and improves reliability.

The relationship between data formats and data models is also critical. Formats such as XML, JSON, and YAML serve as transport and representation mechanisms, allowing structured information to be exchanged between systems. However, they do not define meaning on their own. That role belongs to data models, which provide the schema and rules that determine how information should be structured. This distinction is important because it highlights that automation is not simply about exchanging data, but about ensuring that the data conforms to a defined operational logic.
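
The distinction between a format and a model can be shown directly. In this sketch the same interface data is serialized as JSON and as XML, while the MODEL table and the conforms function are a hypothetical stand-in for a YANG model; both formats would serialize invalid data just as readily.

```python
import json
import xml.etree.ElementTree as ET

interface = {"name": "GigabitEthernet1", "enabled": True, "mtu": 1500}

# Format 1: JSON.
as_json = json.dumps(interface)

# Format 2: XML, built element by element (booleans written as true/false).
root = ET.Element("interface")
for key, value in interface.items():
    text = str(value).lower() if isinstance(value, bool) else str(value)
    ET.SubElement(root, key).text = text
as_xml = ET.tostring(root, encoding="unicode")

# Neither format says which fields exist or what types they take; that is
# the job of a model. This table is a hypothetical stand-in for a YANG model.
MODEL = {"name": str, "enabled": bool, "mtu": int}


def conforms(data: dict) -> bool:
    """Check that data has exactly the modeled fields with the modeled types."""
    return data.keys() == MODEL.keys() and all(
        isinstance(data[k], t) for k, t in MODEL.items()
    )
```

A record such as {"name": "Gi1", "enabled": "yes", "mtu": 1500} serializes cleanly in either format, yet conforms rejects it because "enabled" is not a boolean.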

Protocols such as NETCONF and RESTCONF provide the communication mechanisms that bring these models into operational use. NETCONF introduces a structured, transactional approach to configuration management, allowing changes to be staged, validated, and applied in a controlled manner. Its use of configuration datastores and RPC-based interactions enables precise control over network state. RESTCONF, on the other hand, simplifies interaction by leveraging HTTP-based methods, making it more accessible and easier to integrate with modern web and cloud-native systems.
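
NETCONF's staged workflow can be illustrated by building the XML for an edit-config RPC that targets the candidate datastore, followed by a commit RPC. The namespaces are the NETCONF base namespace and the ietf-interfaces module namespace; the helper names are hypothetical, the example assumes the device advertises the candidate capability and that the interface already exists, and the messages are only built here, not sent (a client library such as ncclient would normally deliver them over SSH).

```python
import xml.etree.ElementTree as ET

NC = "urn:ietf:params:xml:ns:netconf:base:1.0"       # NETCONF base namespace
IF = "urn:ietf:params:xml:ns:yang:ietf-interfaces"   # ietf-interfaces YANG module
ET.register_namespace("nc", NC)
ET.register_namespace("if", IF)


def rpc(message_id: str) -> ET.Element:
    return ET.Element(f"{{{NC}}}rpc", {"message-id": message_id})


def edit_config_candidate(message_id: str, name: str, description: str) -> str:
    """Build an <edit-config> RPC that stages a change in the candidate datastore."""
    root = rpc(message_id)
    edit = ET.SubElement(root, f"{{{NC}}}edit-config")
    target = ET.SubElement(edit, f"{{{NC}}}target")
    ET.SubElement(target, f"{{{NC}}}candidate")
    config = ET.SubElement(edit, f"{{{NC}}}config")
    interfaces = ET.SubElement(config, f"{{{IF}}}interfaces")
    interface = ET.SubElement(interfaces, f"{{{IF}}}interface")
    ET.SubElement(interface, f"{{{IF}}}name").text = name
    ET.SubElement(interface, f"{{{IF}}}description").text = description
    return ET.tostring(root, encoding="unicode")


def commit(message_id: str) -> str:
    """Build a <commit> RPC that makes the candidate configuration active."""
    root = rpc(message_id)
    ET.SubElement(root, f"{{{NC}}}commit")
    return ET.tostring(root, encoding="unicode")
```

Separating the two messages mirrors the staging model: a client can validate or discard the candidate between the edit and the commit.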

Together, these protocols represent different approaches to the same underlying goal: enabling reliable, programmatic control over network infrastructure. While their implementation differs, both rely on structured data models to ensure consistency and correctness. This alignment between models and protocols forms the foundation of network programmability.

Automation frameworks build on top of these protocols and models to orchestrate complex workflows across distributed environments. These frameworks coordinate multiple operations, such as configuration deployment, validation, monitoring, and rollback. By chaining these operations together, automation systems can execute high-level tasks that would otherwise require extensive manual intervention.

A key advantage of automation workflows is their ability to enforce consistency across large-scale environments. Instead of relying on individual engineers to apply configurations manually, workflows ensure that every device receives the same validated configuration derived from a single source of intent. This reduces configuration drift and enhances operational stability.

Telemetry further strengthens automation by introducing real-time visibility into network behavior. Continuous data collection allows systems to monitor performance, detect anomalies, and adjust configurations dynamically. This feedback loop transforms networks from static systems into adaptive environments capable of responding to changing conditions. When combined with automation, telemetry enables closed-loop control where the network continuously optimizes itself based on observed state.

Security considerations are deeply embedded in programmable networking systems. Since automation frameworks and APIs have direct access to network configurations, strong authentication, authorization, and transport security mechanisms are required. These controls ensure that only trusted systems can initiate configuration changes and that data integrity is preserved during communication. Additionally, validation layers within data models and protocols help prevent invalid or malicious configurations from being applied.

The integration of scripting and programming languages into networking workflows further expands the capabilities of automation systems. Scripts act as glue between different components, enabling engineers to customize workflows, interact with APIs, and process structured data. This integration reduces dependency on manual processes and allows for more dynamic and flexible network operations.

As enterprise environments continue to evolve, the boundary between networking and software engineering continues to blur. Network engineers are increasingly expected to understand programming concepts, while software engineers working in infrastructure domains must understand networking fundamentals. This convergence reflects the reality that modern infrastructure is no longer purely hardware-driven but is instead defined by software logic and data-driven control mechanisms.

Scalability is one of the most significant drivers of this transformation. Large-scale networks cannot be efficiently managed through manual processes. Automation provides the mechanism through which organizations can scale operations without proportionally increasing operational complexity. By standardizing configurations and automating deployments, enterprises can support rapid growth while maintaining stability and performance.

Reliability is another key outcome of programmable networking. Automation reduces the likelihood of human error, while structured validation ensures that configurations meet predefined standards before deployment. Transactional mechanisms further enhance reliability by ensuring that changes are applied consistently or not at all, preventing partial updates that could destabilize the network.

From an architectural perspective, modern networks are increasingly designed with programmability as a core principle rather than an afterthought. Devices are built to expose APIs, support structured data models, and integrate with automation frameworks. This native support enables deeper integration between infrastructure and orchestration systems, allowing networks to function as programmable platforms rather than static systems.

The long-term direction of enterprise networking points toward fully autonomous infrastructure systems where configuration, optimization, and recovery are handled dynamically through automated processes. In such environments, human intervention is reserved primarily for defining high-level intent and policy, while execution is delegated to intelligent systems that manage operational complexity.

This trajectory reflects a broader trend in IT infrastructure where abstraction, automation, and programmability converge to create self-managing systems. Networking, as a discipline, is central to this evolution because it connects all components of distributed computing environments. As a result, mastery of data models, protocols, automation frameworks, and programmable interfaces becomes essential for operating effectively in modern enterprise environments.

Ultimately, the integration of YANG-based modeling, NETCONF and RESTCONF protocols, structured data formats, and automation frameworks forms a cohesive ecosystem. This ecosystem enables networks to be managed at scale, with precision, and with a level of adaptability that was not possible in traditional manual models. The result is a shift from reactive network management to proactive and increasingly autonomous infrastructure control, defining the next stage of enterprise networking evolution.