DevOps originated as a response to structural inefficiencies in traditional software delivery pipelines, where development and operations teams functioned as isolated entities with conflicting priorities. Development groups were primarily focused on feature creation and rapid innovation cycles, while operations teams were responsible for stability, uptime, and infrastructure reliability. This separation created friction, especially during deployment phases, where changes from development often conflicted with operational constraints. The DevOps model emerged to address this gap by promoting a unified approach that integrates both domains into a shared workflow. Over time, this integration evolved into a broader methodology that emphasizes collaboration, automation, and continuous improvement across the entire software lifecycle. The foundational idea is that software delivery should not be segmented into disconnected stages but instead treated as a continuous, iterative process where all stakeholders contribute to outcomes. This shift significantly improved deployment frequency and reduced time-to-market for applications. DevOps also introduced the concept of shared responsibility, where both development and operations teams are accountable for application performance and stability. This represented a major departure from traditional IT structures, where accountability was clearly divided and often siloed. As adoption expanded across industries, DevOps began to take on multiple interpretations depending on organizational context. Some viewed it as a cultural transformation, others as a technical framework, and many as a combination of both. This variability contributed to the evolving nature of DevOps as both a philosophy and an operational strategy.

Cultural Model vs Operational Model in DevOps

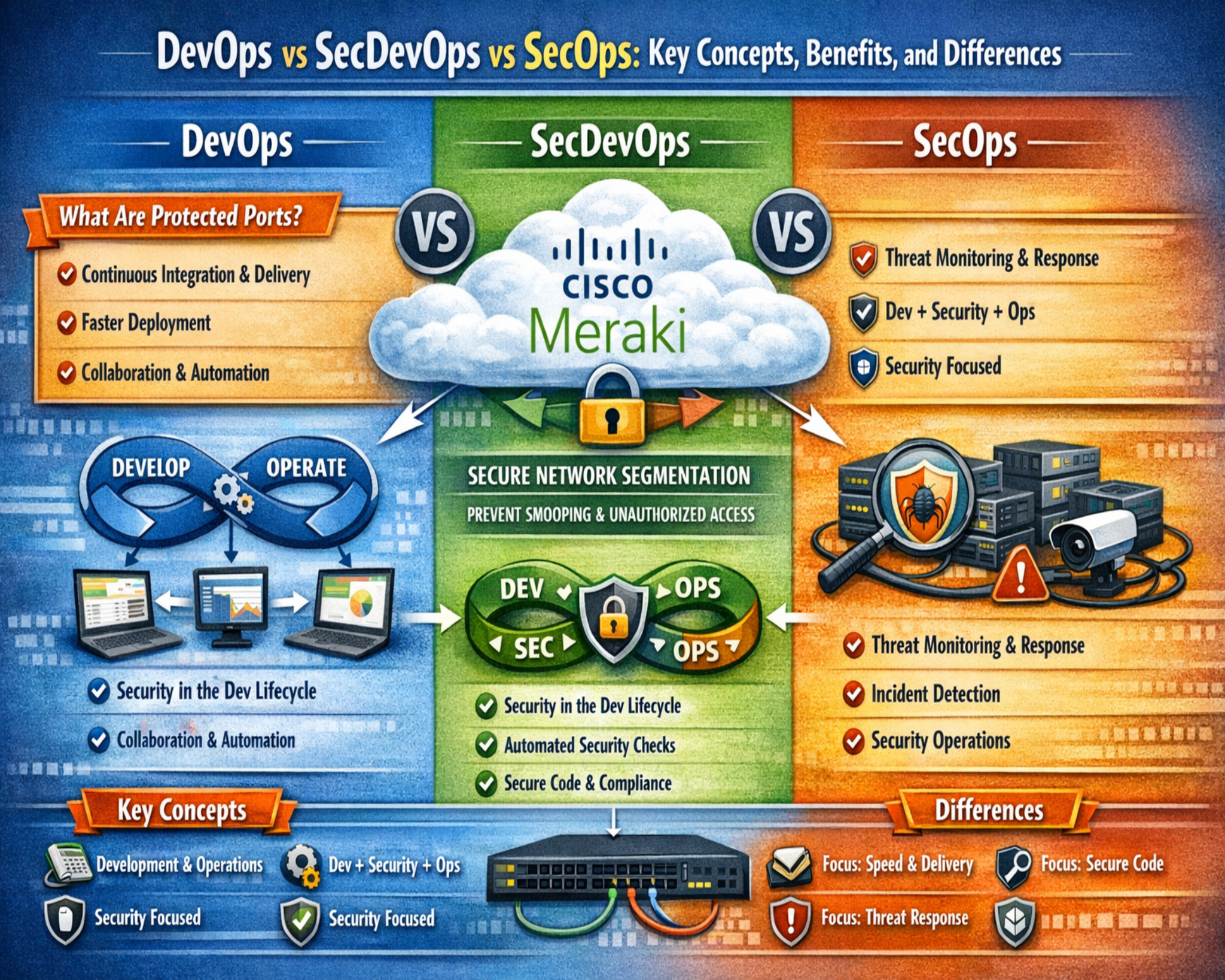

One of the central complexities in understanding DevOps lies in the dual interpretation of its meaning as both a cultural model and an operational model. As a cultural model, DevOps emphasizes collaboration, communication, and shared ownership between teams that were traditionally separated. This cultural shift requires breaking down long-standing organizational silos and encouraging cross-functional responsibility for software delivery outcomes. Teams are expected to work in alignment rather than in isolation, ensuring that development decisions take operational constraints into account and that operational feedback influences development priorities. On the other hand, the operational model of DevOps focuses on implementation practices such as automation, infrastructure orchestration, and continuous integration pipelines. These practices enable teams to streamline workflows, reduce manual intervention, and improve consistency across environments. The tension between these two interpretations has contributed to ongoing debate about what DevOps truly represents. In practice, organizations often adopt elements of both models without explicitly defining which aspect they prioritize. This leads to inconsistent implementations where some teams focus heavily on tooling while others emphasize cultural change. The dual nature of DevOps also explains why job roles labeled as DevOps engineers vary significantly across organizations. In some environments, the role is primarily infrastructure-focused, while in others it involves software development and automation engineering. This inconsistency highlights the fluid nature of DevOps as a discipline that adapts to organizational needs rather than following a fixed framework. The combination of cultural and operational elements is what gives DevOps its flexibility, but also contributes to its ambiguity in definition.

Toolchain Ecosystem and Automation Practices

Automation is a defining characteristic of DevOps, and it is implemented through a broad ecosystem of tools that support different stages of the software lifecycle. These tools are used to manage source code, build applications, test software, deploy updates, and monitor system performance. Continuous integration systems enable developers to merge code changes frequently, ensuring that integration issues are identified early. Continuous deployment pipelines extend this process by automating the release of applications into production environments once predefined conditions are met. Infrastructure automation tools allow environments to be provisioned and configured consistently, reducing manual errors and improving scalability. Configuration management systems ensure that system settings remain consistent across multiple environments, preventing configuration drift. Monitoring and observability tools provide real-time insights into application behavior, system health, and performance metrics. This interconnected toolchain creates a seamless workflow where changes can move rapidly from development to production with minimal manual intervention. However, the reliance on tools has also contributed to a common misconception that DevOps is primarily a technological framework rather than a cultural transformation. While tools are essential enablers, they are not the defining element of DevOps. Instead, they serve to support the underlying principles of collaboration and continuous delivery. The automation-driven nature of DevOps has also influenced how organizations structure their teams, often leading to the formation of specialized roles focused on pipeline management, infrastructure automation, and system reliability. This specialization further reinforces the perception of DevOps as a technical discipline, even though its foundational principles remain organizational and cultural in nature.

The Ambiguity Problem in DevOps Definitions

A significant challenge in understanding DevOps is the lack of a universally accepted definition. Different organizations, professionals, and industry sources interpret the term in varying ways, leading to inconsistent usage across the technology landscape. In some contexts, DevOps is defined strictly as a cultural philosophy that promotes collaboration between development and operations teams. In others, it is described as a set of practices or methodologies used to improve software delivery efficiency. Some interpretations frame DevOps as a specific job role or a category of tools used in modern IT environments. This ambiguity creates challenges for professionals trying to standardize processes or establish clear organizational structures. It also affects hiring practices, where job descriptions labeled as DevOps engineer can vary widely in required skills and responsibilities. The lack of a consistent definition has also led to confusion when comparing DevOps with related concepts such as SecOps and DevSecOps. Because DevOps itself is not strictly defined, its boundaries with other operational models become blurred. This ambiguity is further amplified by the rapid evolution of technology practices, where new tools and methodologies continuously reshape how DevOps is implemented. As a result, DevOps functions more as a flexible concept than a rigid framework. This flexibility allows organizations to adapt it to their specific needs, but also prevents the establishment of a standardized global definition. The ongoing debate surrounding DevOps terminology reflects the broader evolution of IT practices toward more integrated and adaptive systems.

Transition Toward Security Awareness in DevOps Environments

As software systems became more complex and interconnected, security considerations began to play a more prominent role in development and operational workflows. Early DevOps implementations primarily focused on speed, automation, and collaboration, often treating security as a separate concern handled after deployment. However, increasing cybersecurity threats and regulatory requirements highlighted the need to integrate security earlier in the software lifecycle. This shift led to a gradual transition toward security-aware development practices within DevOps environments. Instead of treating security as an external checkpoint, organizations began incorporating it into continuous integration and deployment processes. This integration allowed vulnerabilities to be identified and addressed earlier, reducing risk exposure in production systems. Security awareness in DevOps also encouraged teams to consider threat modeling, secure coding practices, and compliance requirements during the development phase. This marked an important evolution in how organizations approached software delivery, as security was no longer viewed as a final validation step but as an ongoing responsibility throughout the lifecycle. The increasing importance of security within DevOps environments laid the foundation for more structured security integration models, which eventually contributed to the development of SecOps and DevSecOps concepts.

Emergence of Security Operations Thinking (SecOps Introduction)

Security operations thinking emerged as organizations recognized the need for a more structured approach to managing cybersecurity within operational environments. Traditional security models often operated independently from IT operations, resulting in delayed responses to threats and limited coordination between teams. The SecOps approach introduced a model where security and operations functions work more closely together to monitor, detect, and respond to threats in real time. This integration improved situational awareness and enabled faster incident response across infrastructure systems. SecOps also emphasized continuous monitoring of systems to identify anomalies and potential vulnerabilities before they could be exploited. Unlike traditional security frameworks that relied heavily on periodic audits and reactive measures, SecOps promotes ongoing vigilance and proactive risk management. This shift reflects a broader transformation in IT operations where security is treated as an embedded function rather than a separate domain. The development of SecOps thinking was influenced by the increasing complexity of enterprise networks, cloud adoption, and distributed architectures. These environments require constant monitoring and rapid response capabilities, which are difficult to achieve through isolated security teams. By integrating security into operational workflows, organizations can improve coordination, reduce response times, and strengthen overall system resilience. This evolution also set the stage for deeper integration between development, operations, and security functions in later models such as DevSecOps.

How Modern IT Environments Create Overlapping Operational Models

Modern IT environments are characterized by high levels of complexity, scalability, and interconnectivity, which naturally lead to overlapping operational models. Development, operations, and security functions are no longer isolated domains but interconnected components of a unified digital ecosystem. Cloud computing, microservices architectures, and continuous deployment pipelines have further blurred the boundaries between these disciplines. In such environments, changes in one area often have immediate impacts on others, requiring coordinated responses across multiple teams. This interconnectedness has made traditional siloed approaches inefficient and outdated. As a result, organizations are increasingly adopting hybrid operational models that combine elements of DevOps, SecOps, and other related frameworks. These models emphasize collaboration, automation, and continuous feedback across all stages of the software lifecycle. The overlap between these domains also creates challenges in defining clear responsibilities, as security, development, and operations tasks often intersect. For example, infrastructure configuration may involve both operational and security considerations, while application deployment may require input from both development and security teams. This convergence of responsibilities reflects the evolving nature of IT systems, where rigid boundaries are replaced by flexible, adaptive workflows. The rise of overlapping operational models highlights the need for integrated approaches that can accommodate the complexity of modern digital environments.

Why Terminology Confusion Matters in Enterprise Architecture

The lack of consistent terminology in DevOps and related operational models has significant implications for enterprise architecture and organizational design. When terms such as DevOps, SecOps, and DevSecOps are used inconsistently, it becomes difficult to establish clear governance structures, define responsibilities, and align teams around shared objectives. This ambiguity can lead to miscommunication, inefficiencies, and overlapping roles within organizations. It also complicates the process of designing scalable IT architectures, as different interpretations of these terms may result in conflicting implementation strategies. In enterprise environments, clarity of terminology is essential for ensuring that teams understand their responsibilities and how they contribute to broader organizational goals. Without this clarity, it becomes challenging to measure performance, enforce standards, or optimize workflows effectively. The confusion surrounding these terms also affects strategic decision-making, particularly when organizations attempt to adopt new methodologies or restructure existing teams. Inconsistent definitions can lead to partial or fragmented implementations that fail to achieve intended benefits. Therefore, understanding the evolving and sometimes ambiguous nature of these operational models is critical for building effective enterprise systems that support collaboration, security, and scalability in modern IT landscapes.

Evolution of Security Integration in Modern Software Delivery Pipelines

As software delivery systems matured, security gradually shifted from a peripheral concern to a central component of engineering workflows. Early development models treated security as a late-stage validation process, typically performed after application completion and before deployment. This approach was adequate in simpler monolithic systems, but it became increasingly ineffective as software architectures evolved into distributed, cloud-native, and microservice-based environments. The growing complexity of systems meant that vulnerabilities could emerge at multiple layers, including application code, infrastructure configurations, APIs, and third-party dependencies. As a result, reactive security practices were no longer sufficient to protect modern systems.

The integration of security into delivery pipelines emerged as a necessary evolution. Instead of treating security as a separate phase, organizations began embedding security checks directly into automated workflows. These checks include static analysis of source code, dependency scanning, configuration validation, and runtime monitoring. By incorporating these mechanisms into continuous integration and continuous delivery pipelines, organizations are able to detect issues earlier in the lifecycle. This early detection significantly reduces remediation costs and minimizes exposure to potential threats in production environments.
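The idea of embedding checks into an automated workflow can be sketched as a pipeline stage that runs a list of checks against each change and blocks the build when any of them reports a finding. This is a minimal illustration, not a real scanner: the `Finding` type, the two toy checks, and the patterns they look for are all hypothetical stand-ins for dedicated tools.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Finding:
    check: str    # which check produced the finding
    message: str  # human-readable description


# A change is modeled as {file path: file contents}; a check returns findings.
Change = Dict[str, str]
Check = Callable[[Change], List[Finding]]


def static_analysis(change: Change) -> List[Finding]:
    """Toy static check (hypothetical rule): flag eval() calls in Python sources."""
    return [
        Finding("static_analysis", f"{path}: call to eval()")
        for path, text in change.items()
        if path.endswith(".py") and "eval(" in text
    ]


def config_validation(change: Change) -> List[Finding]:
    """Toy config check (hypothetical rule): flag debug mode left enabled."""
    return [
        Finding("config_validation", f"{path}: debug mode enabled")
        for path, text in change.items()
        if path.endswith(".conf") and "debug=true" in text.replace(" ", "").lower()
    ]


def security_stage(change: Change, checks: List[Check]) -> List[Finding]:
    """Run every check and collect all findings so they are reported together."""
    findings: List[Finding] = []
    for check in checks:
        findings.extend(check(change))
    return findings


def pipeline_passes(change: Change, checks: List[Check]) -> bool:
    """The build proceeds only when no check reports a finding."""
    return not security_stage(change, checks)
```

Running all checks before deciding, rather than stopping at the first failure, is one possible design choice: it gives the developer the full list of issues in a single pipeline run.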

The evolution of security integration also reflects a shift in mindset. Security is no longer viewed as a gatekeeping function that blocks releases, but rather as an enabling capability that supports faster and safer delivery. This change is essential in environments where frequent deployments are expected and downtime is unacceptable. By embedding security into the pipeline, teams can maintain delivery speed without compromising system integrity. This transformation forms the foundation for modern security-driven development models.

Security as a Continuous Process Rather Than a Static Phase

Traditional security approaches relied heavily on periodic assessments, such as audits, penetration tests, and manual code reviews. These methods provided valuable insights but were inherently limited in their ability to keep pace with rapid deployment cycles. In modern systems, where code changes may be deployed multiple times per day, the results of a point-in-time security check quickly become outdated. This mismatch between deployment speed and security validation created gaps in protection that attackers could exploit.

The modern approach reframes security as a continuous process integrated into every stage of software development and deployment. Rather than performing security checks at fixed intervals, systems now incorporate automated validation at each code commit, build, and deployment stage. Continuous security monitoring ensures that vulnerabilities are identified in real time, allowing teams to respond immediately. This approach also enables organizations to maintain a constantly updated understanding of their security posture.

Continuous security processes rely heavily on automation and telemetry. Automated scanning tools evaluate code for known vulnerabilities, misconfigurations, and insecure dependencies. At the same time, runtime monitoring systems track system behavior to detect anomalies that may indicate malicious activity. These tools generate continuous feedback loops that inform both development and operations teams. This feedback-driven model allows organizations to refine their security posture dynamically rather than relying on periodic evaluations.

The transition to continuous security also requires cultural alignment. Development, operations, and security teams must collaborate closely to ensure that security findings are addressed quickly and effectively. This shared responsibility model eliminates delays caused by siloed workflows and ensures that security issues are treated as priority concerns throughout the lifecycle.

Expanding Role of Automation in Security-Centric Workflows

Automation plays a critical role in enabling scalable security practices within modern IT environments. As systems grow in complexity, manual security processes become increasingly impractical. Automation addresses this challenge by providing consistent, repeatable, and scalable mechanisms for identifying and mitigating risks. In security-centric workflows, automation is applied across multiple stages, including code analysis, infrastructure provisioning, configuration enforcement, and incident response.

Automated code analysis tools evaluate source code for potential vulnerabilities such as injection flaws, insecure data handling, and improper authentication mechanisms. These tools are integrated directly into development pipelines, ensuring that issues are detected before code is merged or deployed. Dependency scanning tools examine third-party libraries for known security vulnerabilities, reducing the risk associated with external components.

Infrastructure automation extends these principles to system configuration. Infrastructure-as-code practices allow organizations to define infrastructure configurations in version-controlled templates. These templates can be automatically validated against security policies to ensure compliance before deployment. This reduces the likelihood of misconfigurations that could expose systems to threats.

Automation also enhances incident response capabilities. When security anomalies are detected, automated systems can trigger predefined responses such as isolating affected systems, revoking credentials, or generating alerts for security teams. This rapid response capability is essential in minimizing the impact of potential breaches.
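The predefined responses described above can be sketched as playbooks that map an alert type to an ordered list of actions. This is only an illustration under stated assumptions: the alert kinds, the `Responder` class, and the action functions are hypothetical, and the actions merely return a description of what a real integration would do.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Alert:
    kind: str    # e.g. "credential_leak" (hypothetical alert type)
    target: str  # affected host or account


Action = Callable[[Alert], str]


def isolate_host(alert: Alert) -> str:
    # A real system would call a network or orchestration API here.
    return f"isolated {alert.target}"


def revoke_credentials(alert: Alert) -> str:
    # A real system would call an identity provider here.
    return f"revoked credentials for {alert.target}"


def notify_security_team(alert: Alert) -> str:
    return f"notified security team about {alert.kind} on {alert.target}"


@dataclass
class Responder:
    playbooks: Dict[str, List[Action]] = field(default_factory=dict)
    audit_log: List[str] = field(default_factory=list)

    def handle(self, alert: Alert) -> None:
        """Run the playbook for this alert kind, recording each step."""
        actions = self.playbooks.get(alert.kind)
        if not actions:
            # Unknown alert types are not acted on automatically.
            self.audit_log.append(f"no playbook for {alert.kind}; escalated to a human")
            return
        for action in actions:
            self.audit_log.append(action(alert))
```

A brief usage example: `Responder(playbooks={"credential_leak": [revoke_credentials, notify_security_team]})` followed by `handle(Alert("credential_leak", "svc-deploy"))` records both steps in `audit_log`. Alerts without a playbook are escalated instead of triggering a disruptive default action.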

Despite its advantages, automation must be carefully governed to avoid unintended consequences. Poorly configured automation rules can lead to false positives, unnecessary disruptions, or overlooked vulnerabilities. Therefore, automation must be combined with human oversight to ensure accuracy and effectiveness.

SecOps as a Structured Approach to Operational Security

Security Operations, commonly referred to as SecOps, represents a structured approach to integrating security into operational environments. Unlike traditional security models that operate independently, SecOps emphasizes collaboration between security teams and IT operations teams. This collaboration ensures that security considerations are embedded directly into system management processes.

In SecOps environments, security is treated as a continuous operational responsibility rather than a separate function. This includes monitoring system activity, analyzing logs, managing vulnerabilities, and responding to incidents. Security teams work closely with system administrators and infrastructure engineers to ensure that systems remain secure and compliant.

One of the key principles of SecOps is centralized visibility. Security teams require comprehensive insights into system behavior across networks, applications, and infrastructure layers. Centralized monitoring platforms aggregate data from multiple sources, enabling teams to identify patterns and detect anomalies more effectively. This visibility is essential for maintaining situational awareness in complex environments.

Incident response is another core aspect of SecOps. When security events occur, coordinated response procedures are activated to contain and resolve threats. These procedures often involve multiple teams working together to isolate affected systems, analyze attack vectors, and restore normal operations. The effectiveness of SecOps depends heavily on communication and clearly defined response protocols.

SecOps also incorporates proactive risk management. Rather than reacting to incidents after they occur, teams continuously assess system vulnerabilities and implement preventive measures. This includes patch management, configuration hardening, and access control optimization. By addressing risks proactively, SecOps reduces the likelihood of successful attacks.

Operational Collaboration Between Security and Infrastructure Teams

A defining characteristic of SecOps is the collaboration between security teams and infrastructure teams. In traditional environments, these groups often operate independently, leading to misaligned priorities and delayed security responses. SecOps addresses this issue by creating integrated workflows where both teams share responsibility for system security.

Infrastructure teams focus on maintaining system availability, performance, and scalability. Security teams focus on protecting systems from threats and ensuring compliance with security standards. In a collaborative SecOps model, these responsibilities overlap, requiring joint decision-making and shared accountability.

This collaboration improves efficiency in several ways. Security updates can be deployed more quickly when infrastructure teams are involved in the process. Configuration changes can be validated for security compliance before implementation. System monitoring becomes more comprehensive when both teams contribute to visibility tools and metrics.

The collaboration also improves communication during incident response. Instead of working in isolation, teams coordinate their actions to resolve issues more effectively. This reduces downtime and minimizes the impact of security incidents on business operations.

However, achieving effective collaboration requires organizational alignment. Clear roles, responsibilities, and communication channels must be established to avoid confusion. Without proper structure, collaboration can lead to overlapping efforts or gaps in accountability.

Risk Visibility and Threat Detection in SecOps Models

Risk visibility is a fundamental requirement in SecOps environments. Organizations must maintain continuous awareness of their security posture across all systems and environments. This includes understanding vulnerabilities, monitoring user activity, and analyzing system behavior.

Threat detection systems play a key role in providing this visibility. These systems analyze large volumes of data to identify patterns that may indicate malicious activity. This includes unusual login attempts, abnormal network traffic, and unauthorized configuration changes. By detecting these patterns early, organizations can respond before threats escalate.

Advanced threat detection systems often use behavioral analysis techniques to identify deviations from normal system behavior. This approach allows for the detection of previously unknown threats that may not match known attack signatures. Behavioral analysis enhances detection capabilities and improves overall security resilience.
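One simple form of behavioral analysis is to compare a new observation against a statistical baseline of past behavior. The sketch below uses a z-score over, for example, hourly login counts. The function name, the sample numbers, and the threshold of three standard deviations are illustrative assumptions; production systems typically use richer models.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(baseline: Sequence[float], observed: float, threshold: float = 3.0) -> bool:
    """Return True when `observed` deviates from the baseline by more than
    `threshold` sample standard deviations.

    `baseline` needs at least two values, because statistics.stdev raises
    StatisticsError otherwise.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical hourly login counts for one account.
normal_hours = [10, 12, 11, 9, 10, 13, 11]
print(is_anomalous(normal_hours, 12))  # within the usual range
print(is_anomalous(normal_hours, 40))  # far outside the usual range
```

Because the rule depends only on how far a value sits from the learned baseline, it can flag activity that matches no known attack signature, which is the property the paragraph above describes.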

Risk visibility also depends on effective data aggregation. Security data is collected from multiple sources, including servers, applications, network devices, and cloud platforms. This data is centralized into monitoring systems that provide a unified view of security events. Without centralized visibility, identifying and responding to threats becomes significantly more difficult.

Governance and Compliance in Security-Integrated Operations

Governance and compliance are essential components of both SecOps and DevSecOps environments. Organizations must adhere to regulatory requirements, industry standards, and internal security policies. These requirements often dictate how data is handled, how systems are secured, and how incidents are reported.

In security-integrated operations, governance is embedded directly into workflows. Policies are enforced automatically through configuration management systems and deployment pipelines. This ensures consistent application of security standards across all environments.

Compliance monitoring systems continuously evaluate system configurations and activities to ensure alignment with regulatory requirements. Any deviations are flagged for review and remediation. This continuous compliance approach reduces the risk of violations and improves audit readiness.
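As a rough sketch of continuous compliance checking, the snippet below compares each system's current configuration against a required baseline and reports deviations for review. The setting names, the `<missing>` marker, and the fleet data are hypothetical and chosen only for illustration.

```python
from typing import Any, Dict, List, Tuple

MISSING = "<missing>"  # marker for a required setting that is absent

Deviation = Tuple[str, Any, Any]  # (setting, expected value, current value)


def find_deviations(required: Dict[str, Any], actual: Dict[str, Any]) -> List[Deviation]:
    """List every required setting whose current value differs or is absent."""
    deviations: List[Deviation] = []
    for setting, expected in required.items():
        current = actual.get(setting, MISSING)
        if current != expected:
            deviations.append((setting, expected, current))
    return deviations


def compliance_report(
    required: Dict[str, Any], fleet: Dict[str, Dict[str, Any]]
) -> Dict[str, List[Deviation]]:
    """Map each non-compliant system name to its deviations."""
    report: Dict[str, List[Deviation]] = {}
    for name, config in fleet.items():
        deviations = find_deviations(required, config)
        if deviations:
            report[name] = deviations
    return report
```

Running `compliance_report` on a schedule, or after every configuration change, turns the baseline into an ongoing check; the resulting report also serves as evidence during audits.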

Governance frameworks also define roles and responsibilities within security operations. Clear accountability ensures that security tasks are properly assigned and executed. This structure is essential for maintaining consistency and reliability in security practices.

Transition Toward Unified Security and Development Ecosystems

As organizations continue to evolve, there is a growing shift toward unified ecosystems that integrate development, operations, and security into a single continuous workflow. This integration eliminates traditional boundaries between teams and creates a more cohesive approach to software delivery.

In unified ecosystems, security is no longer treated as a separate function but as an embedded component of the entire development lifecycle. Developers, operations engineers, and security professionals work together from the initial design phase through production deployment.

This unified approach improves efficiency by reducing handoffs between teams and eliminating delays caused by siloed workflows. It also enhances system resilience by ensuring that security considerations are addressed at every stage of development.

The transition to unified ecosystems represents a significant shift in how organizations approach software engineering. It reflects the growing importance of collaboration, automation, and continuous feedback in modern IT environments.

DevSecOps as the Convergence of Development, Security, and Operations

DevSecOps represents the logical progression of modern software delivery practices, where development, security, and operations are unified into a single, cohesive lifecycle. Instead of treating security as a separate checkpoint or an external audit layer, DevSecOps embeds security controls, validation, and accountability directly into the workflow from the earliest stages of design. This convergence is not merely a technical adjustment but a structural and cultural transformation that changes how organizations build, deploy, and maintain applications. The integration ensures that every participant in the lifecycle, from developers writing code to engineers managing infrastructure, contributes to maintaining a secure environment.

The shift toward DevSecOps is driven by the increasing complexity of systems and the growing sophistication of cyber threats. Modern applications often rely on distributed architectures, cloud services, APIs, and third-party dependencies, all of which introduce multiple attack surfaces. In such environments, isolated security reviews are insufficient because vulnerabilities can emerge at any stage and propagate quickly across interconnected systems. DevSecOps addresses this challenge by ensuring that security considerations are continuously applied rather than periodically enforced.

This model also redefines accountability. Instead of assigning responsibility for security solely to specialized teams, DevSecOps distributes this responsibility across all roles. Developers are expected to follow secure coding practices, operations teams must enforce secure configurations, and security professionals guide policy and risk management strategies. This shared responsibility model reduces delays and ensures that security is consistently prioritized without disrupting delivery timelines.

Shift-Left Security and Early Risk Mitigation

A core principle of DevSecOps is the concept of shifting security left within the development lifecycle. This means that security activities are introduced as early as possible, ideally during the planning and design phases of a project. By identifying potential risks before code is written, organizations can prevent vulnerabilities from being introduced into the system in the first place. This proactive approach contrasts sharply with traditional models, where security assessments occur after development is complete.

Early risk mitigation has significant advantages in terms of cost and efficiency. Fixing vulnerabilities during development is far less resource-intensive than addressing them after deployment. When issues are discovered late in the lifecycle, they may require extensive rework, system downtime, or emergency patches. By integrating security into early stages, DevSecOps minimizes these disruptions and improves overall system stability.

Shift-left practices often include threat modeling, secure design reviews, and code-level security analysis. Developers are encouraged to think about potential attack vectors while writing code, ensuring that security considerations are embedded into application logic. Automated tools further support this process by scanning code for vulnerabilities as it is written, providing immediate feedback that can be addressed before changes are committed.

This early integration also fosters a security-conscious culture among development teams. Instead of viewing security as an external requirement, developers begin to see it as an integral part of their responsibilities. Over time, this mindset leads to higher-quality code and more resilient applications.

Continuous Security Testing and Validation Pipelines

Continuous testing is a defining feature of DevSecOps, extending beyond functional validation to include comprehensive security checks. In modern delivery pipelines, every code change triggers a series of automated tests designed to verify both functionality and security. These tests are integrated into continuous integration and continuous deployment workflows, ensuring that security validation occurs consistently at every stage.

Security testing within these pipelines includes static analysis, dynamic testing, and dependency scanning. Static analysis evaluates source code for potential vulnerabilities without executing it, identifying issues such as improper input validation or insecure coding patterns. Dynamic testing examines applications during runtime to detect vulnerabilities that may only appear under specific conditions. Dependency scanning focuses on third-party libraries, identifying known vulnerabilities that could compromise the system.
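Dependency scanning, the last of these methods, can be illustrated by matching pinned versions against a list of advisories. The sketch below is deliberately simplified: the package names, advisory IDs, and `ADVISORIES` table are invented for illustration, and versions are assumed to be plain dotted integers, whereas real scanners query maintained vulnerability databases and handle full version specifications.

```python
from typing import Dict, List, Tuple

# Hypothetical advisory data: each entry names the first fixed version.
ADVISORIES: Dict[str, List[Dict[str, str]]] = {
    "examplelib": [{"id": "HYPO-0001", "fixed_in": "2.3.1"}],
    "otherlib": [{"id": "HYPO-0002", "fixed_in": "0.9.0"}],
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2.10.0' into (2, 10, 0) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def scan(dependencies: Dict[str, str]) -> List[Tuple[str, str, str]]:
    """Return (package, installed version, advisory id) for each vulnerable pin."""
    hits: List[Tuple[str, str, str]] = []
    for name, version in dependencies.items():
        for advisory in ADVISORIES.get(name, []):
            if parse_version(version) < parse_version(advisory["fixed_in"]):
                hits.append((name, version, advisory["id"]))
    return hits
```

Comparing parsed tuples rather than raw strings matters here: as strings, `"2.10.0"` sorts before `"2.3.1"`, which would wrongly flag a newer release as vulnerable.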

The integration of these testing methods into automated pipelines ensures that vulnerabilities are detected early and addressed promptly. It also reduces the reliance on manual testing, which can be time-consuming and prone to oversight. Automated testing provides consistent coverage and allows organizations to maintain high levels of security without slowing down development cycles.

Continuous validation also extends to infrastructure and configuration settings. Infrastructure-as-code templates are evaluated against security policies before deployment, ensuring that systems are configured correctly from the outset. This prevents misconfigurations that could expose systems to threats and ensures compliance with organizational standards.
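A pre-deployment template check of this kind might look like the following sketch. The template layout, the resource types (`storage_bucket`, `firewall_rule`), and the property names are assumptions made for illustration; real infrastructure-as-code formats and policy tools differ by vendor.

```python
def check_template(template: dict) -> list[str]:
    """Return human-readable violations found in a simplified IaC template."""
    violations = []
    for name, resource in template.get("resources", {}).items():
        kind = resource.get("type")
        props = resource.get("properties", {})
        if kind == "storage_bucket":
            # Buckets must be private and encrypted in this hypothetical policy.
            if props.get("public_access", False):
                violations.append(f"{name}: public access is enabled")
            if not props.get("encrypted", False):
                violations.append(f"{name}: encryption at rest is disabled")
        elif kind == "firewall_rule":
            # SSH must not be reachable from any address.
            if props.get("source") == "0.0.0.0/0" and props.get("port") == 22:
                violations.append(f"{name}: SSH is open to the internet")
    return violations


# Hypothetical template with one misconfigured bucket and one open SSH rule.
template = {
    "resources": {
        "logs": {
            "type": "storage_bucket",
            "properties": {"public_access": True, "encrypted": True},
        },
        "admin_ssh": {
            "type": "firewall_rule",
            "properties": {"source": "0.0.0.0/0", "port": 22},
        },
    }
}

for violation in check_template(template):
    print(violation)
```

Running the check before deployment means a misconfiguration is reported against the template and never reaches a live system.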

Security as Code and Policy Enforcement Mechanisms

The concept of security as code is central to DevSecOps, enabling organizations to define and enforce security policies programmatically. Instead of relying on manual processes or documentation, security requirements are embedded directly into code and configuration files. This approach ensures that policies are consistently applied across all environments and reduces the risk of human error.

Security as code includes defining access controls, network configurations, encryption standards, and compliance requirements in machine-readable formats. These definitions are integrated into deployment pipelines, where they are automatically validated before changes are applied. If a configuration does not meet security standards, the deployment process is halted until the issue is resolved.
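As a rough sketch of this idea, the policies below are written as JSON data, not as procedural checks, and a deployment step refuses to proceed when any policy fails. The policy schema (`id`, `key`, `op`, `value`), the configuration keys, and the `deploy` function are all hypothetical and stand in for whatever policy engine and deployment tooling an organization actually uses.

```python
import json

# Hypothetical machine-readable policies; the schema is invented for this sketch.
POLICY_JSON = """
[
  {"id": "tls-version", "key": "tls_version", "op": "in", "value": ["1.2", "1.3"]},
  {"id": "encryption", "key": "encryption_at_rest", "op": "equals", "value": true}
]
"""

OPERATORS = {
    "equals": lambda actual, expected: actual == expected,
    "in": lambda actual, expected: actual in expected,
}


class PolicyViolation(Exception):
    """Raised to halt a deployment whose configuration fails a policy."""


def evaluate(config: dict, policies: list[dict]) -> list[str]:
    """Return the ids of all policies that the configuration fails."""
    failed = []
    for policy in policies:
        actual = config.get(policy["key"])  # a missing key fails the policy
        if not OPERATORS[policy["op"]](actual, policy["value"]):
            failed.append(policy["id"])
    return failed


def deploy(config: dict, policies: list[dict]) -> str:
    """Stand-in for a deployment step that is gated on policy compliance."""
    failed = evaluate(config, policies)
    if failed:
        raise PolicyViolation("deployment halted; failed policies: " + ", ".join(failed))
    return "deployed"


policies = json.loads(POLICY_JSON)
print(deploy({"tls_version": "1.3", "encryption_at_rest": True}, policies))
try:
    deploy({"tls_version": "1.0", "encryption_at_rest": True}, policies)
except PolicyViolation as error:
    print(error)
```

Because the policies are data, they can be versioned, reviewed, and updated in one place without touching the code that enforces them.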

Policy enforcement mechanisms ensure that security requirements are not bypassed during development or deployment. Automated checks verify that all changes comply with predefined policies, providing a consistent and reliable framework for maintaining security. This approach also simplifies auditing and compliance processes, as policies are clearly defined and consistently enforced.

The use of code-based security policies also improves scalability. As systems grow and evolve, policies can be updated centrally and applied across all environments without requiring manual intervention. This ensures that security standards remain consistent even as infrastructure becomes more complex.

Collaboration Models and Cross-Functional Team Alignment

DevSecOps relies heavily on collaboration between development, operations, and security teams. Unlike traditional models, where these groups operate independently, DevSecOps creates cross-functional teams that share responsibility for delivering secure and reliable applications. This alignment is essential for maintaining efficiency and ensuring that security considerations are addressed throughout the lifecycle.

Collaboration in DevSecOps is supported by shared tools, communication platforms, and integrated workflows. Teams work together to define requirements, review code, monitor systems, and respond to incidents. This collaborative approach reduces delays caused by handoffs between teams and ensures that issues are addressed promptly.

Cross-functional alignment also improves decision-making. When all stakeholders are involved in the development process, decisions can be made with a comprehensive understanding of technical, operational, and security implications. This leads to more balanced and effective outcomes.

However, achieving effective collaboration requires cultural change. Organizations must encourage open communication, shared accountability, and continuous learning. Without these elements, collaboration can be hindered by conflicting priorities or a lack of coordination.

Real-Time Monitoring, Feedback Loops, and Observability

Real-time monitoring and observability are critical components of DevSecOps, providing continuous insights into system performance and security posture. Monitoring systems collect data from applications, infrastructure, and networks, enabling teams to detect anomalies and respond to potential threats in real time.
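One simple way to detect anomalies in a stream of monitoring data is to compare each new sample with a rolling baseline. The sketch below assumes a hypothetical series of request counts per minute and flags any value that lies more than a chosen number of standard deviations from the mean of the preceding window. The window size and threshold are illustrative defaults, not recommendations, and production systems typically use more robust methods.

```python
from statistics import mean, stdev


def find_anomalies(
    samples: list[float], window: int = 5, threshold: float = 3.0
) -> list[int]:
    """Return indexes of samples that deviate sharply from the preceding window.

    A sample is flagged when its distance from the window mean exceeds
    `threshold` standard deviations of that window.
    """
    anomalies = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        spread = stdev(baseline)
        if spread == 0:
            # Flat baseline: the z-score is undefined, so this sketch skips it.
            continue
        if abs(samples[i] - mean(baseline)) / spread > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical requests-per-minute series with one sudden spike.
requests_per_minute = [100, 102, 98, 101, 99, 100, 250, 101]
print(find_anomalies(requests_per_minute))  # [6]
```

Note that the spike itself inflates the baseline for the samples that follow it, which is one reason real detectors often use medians or exclude flagged points from the window.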

Feedback loops play a crucial role in improving system reliability and security. Data collected from monitoring systems is analyzed to identify trends, performance issues, and potential vulnerabilities. This information is then used to refine development practices, improve configurations, and enhance security controls.

Observability extends beyond basic monitoring by providing deeper insights into system behavior. It includes metrics, logs, and traces that help teams understand how systems operate under different conditions. This level of visibility is essential for diagnosing issues and optimizing performance.

Real-time feedback also supports continuous improvement. Teams can quickly identify areas for optimization and implement changes without disrupting operations. This iterative approach aligns with the core principles of DevSecOps, ensuring that systems remain secure and efficient over time.

Balancing Speed, Agility, and Security in High-Velocity Environments

One of the primary challenges in DevSecOps is balancing the need for rapid delivery with the requirement for robust security. High-velocity environments demand frequent deployments and continuous updates, which can increase the risk of introducing vulnerabilities. DevSecOps addresses this challenge by integrating security into automated workflows, allowing organizations to maintain speed without compromising protection.

Automation plays a key role in achieving this balance. By embedding security checks into pipelines, organizations can validate changes quickly and consistently. This reduces the need for manual reviews and ensures that security does not become a bottleneck.

Agility is also supported by modular architectures and microservices, which allow changes to be implemented in smaller, manageable increments. This reduces the impact of potential vulnerabilities and simplifies remediation efforts. Combined with continuous monitoring, this approach ensures that systems remain secure even as they evolve rapidly.

Maintaining this balance requires careful planning and governance. Organizations must define clear policies, implement effective monitoring systems, and ensure that teams are aligned in their objectives. Without proper oversight, the pursuit of speed can lead to security gaps.

Organizational Transformation and DevSecOps Adoption Challenges

Adopting DevSecOps involves significant organizational transformation, requiring changes in processes, culture, and technology. One of the primary challenges is overcoming resistance to change, as teams may be accustomed to traditional workflows and responsibilities. Transitioning to a shared responsibility model requires a shift in mindset and a willingness to adopt new practices.

Training and skill development are essential for successful adoption. Teams must learn secure coding practices, automation techniques, and monitoring tools. This requires ongoing education and support to ensure that all stakeholders are equipped to contribute effectively.

Another challenge is integrating DevSecOps into existing systems and processes. Organizations often have legacy infrastructure and workflows that may not be compatible with modern practices. Transitioning to DevSecOps requires careful planning and gradual implementation to avoid disruption.

Despite these challenges, the benefits of DevSecOps are substantial. Improved security, faster delivery, and enhanced collaboration contribute to more resilient and efficient systems. Organizations that successfully adopt DevSecOps are better positioned to cope with evolving threats and maintain a competitive advantage in dynamic environments.

Future Outlook of DevSecOps and Integrated IT Practices

The future of DevSecOps is closely tied to the continued evolution of technology and the increasing importance of cybersecurity. As systems become more complex and interconnected, the need for integrated approaches to development, operations, and security will continue to grow. Emerging technologies such as artificial intelligence and machine learning are expected to play a significant role in enhancing security capabilities, enabling more advanced threat detection and automated response mechanisms.

Cloud-native architectures and containerization will further drive the adoption of DevSecOps practices, as these environments require continuous monitoring and rapid adaptation. The integration of security into these ecosystems will become increasingly critical for maintaining system integrity.

DevSecOps is also likely to influence organizational structures, leading to more collaborative and flexible team models. The emphasis on shared responsibility and continuous improvement will shape how organizations approach software development and IT operations in the future.

As the landscape continues to evolve, DevSecOps will remain a foundational approach for managing complexity, ensuring security, and enabling innovation in modern digital environments.

Conclusion

The discussion around DevOps, SecOps, and DevSecOps ultimately reflects a broader transformation in how modern organizations design, build, and manage technology systems. These terms are not just labels or industry buzzwords; they represent evolving operational philosophies that respond to the increasing complexity of digital environments. As software systems become more interconnected, distributed, and critical to business operations, the need for integrated workflows and shared responsibility becomes unavoidable. What began as an effort to improve collaboration between development and operations has expanded into a comprehensive model that includes security as a core and continuous element.

At the heart of this evolution is the realization that speed, reliability, and security cannot exist in isolation. Traditional approaches often forced organizations to choose between rapid delivery and robust protection, leading to compromises that introduced risk or slowed innovation. DevOps challenged this limitation by demonstrating that collaboration and automation could significantly improve delivery speed while maintaining stability. SecOps further extended this thinking by embedding security into operational practices, ensuring that protection mechanisms are continuously active rather than reactive. DevSecOps completes this progression by integrating all three domains into a unified lifecycle where security is treated as a foundational requirement rather than an afterthought.

One of the most important takeaways is the shift from siloed responsibilities to shared accountability. In older models, development teams focused on building features, operations teams managed infrastructure, and security teams handled protection independently. This separation often resulted in misalignment, delayed responses, and inefficiencies. In contrast, modern approaches emphasize that every team involved in the lifecycle contributes to overall system integrity. Developers are expected to write secure code, operations teams must enforce secure configurations, and security professionals guide strategy while collaborating across all stages. This shared responsibility model not only improves efficiency but also creates a stronger and more resilient system overall.

Another key insight is the role of automation in enabling these integrated models. Without automation, the level of coordination required between development, operations, and security would be difficult to sustain at scale. Automated pipelines, continuous testing, and real-time monitoring allow organizations to maintain high levels of efficiency while ensuring consistent enforcement of standards. Automation reduces the risk of human error, accelerates feedback loops, and allows teams to focus on higher-level problem-solving rather than repetitive tasks. However, it is important to recognize that automation alone is not sufficient. It must be guided by clear policies, well-defined processes, and a culture that supports collaboration and accountability.

The importance of early integration also stands out as a defining characteristic of modern practices. Security, in particular, benefits significantly from being introduced at the earliest stages of development. Identifying and addressing vulnerabilities during design or coding phases is far more effective than attempting to fix them after deployment. This proactive approach not only reduces costs but also minimizes disruptions and improves overall system quality. The concept of shifting responsibilities earlier in the lifecycle is not limited to security; it also applies to testing, monitoring, and performance optimization. By addressing potential issues early, organizations can create more stable and reliable systems.

At the same time, the conversation highlights the ongoing challenge of terminology and definition. DevOps, SecOps, and DevSecOps are often interpreted differently depending on context, which can lead to confusion in implementation and communication. While this ambiguity can be frustrating, it also reflects the flexibility of these concepts. They are not rigid frameworks but adaptable models that can be tailored to the specific needs of an organization. The key is not to focus on rigid definitions but to understand the underlying principles of collaboration, automation, and continuous improvement that they represent.

The evolution toward integrated operational models also underscores the importance of culture. Technology alone cannot drive the transformation required for DevOps or DevSecOps to succeed. Organizations must foster an environment where teams are encouraged to collaborate, share knowledge, and take collective ownership of outcomes. This cultural shift often requires changes in leadership, communication practices, and performance metrics. Teams must be aligned around common goals and supported with the tools and training needed to achieve them. Without this cultural foundation, even the most advanced tools and processes will fail to deliver their full potential.

Another important dimension is the growing need for visibility and feedback in complex systems. Continuous monitoring and observability provide the insights necessary to maintain performance and security in dynamic environments. Feedback loops allow teams to learn from system behavior, identify areas for improvement, and respond quickly to emerging issues. This constant flow of information is essential for maintaining agility and resilience, especially in environments where change is frequent and unpredictable.

The integration of development, operations, and security also has significant implications for how organizations manage risk. Instead of relying on periodic assessments or isolated reviews, modern approaches enable continuous risk evaluation and mitigation. This ongoing process ensures that vulnerabilities are addressed as they arise and that systems remain aligned with security standards over time. It also allows organizations to respond more effectively to emerging threats, which are becoming increasingly sophisticated and frequent.

Looking ahead, the importance of these integrated models will only continue to grow. As technologies such as cloud computing, microservices, and distributed architectures become more prevalent, the need for seamless collaboration and continuous security will become even more critical. Organizations that embrace these principles will be better equipped to handle complexity, adapt to change, and maintain competitive advantage. Those that rely on outdated, siloed approaches will likely struggle to keep pace with the demands of modern digital environments.

Ultimately, the value of understanding DevOps, SecOps, and DevSecOps lies not in the terminology itself but in the principles they represent. These models emphasize that successful software delivery requires more than just technical expertise; it requires coordination, communication, and a commitment to continuous improvement. By aligning teams, automating processes, and integrating security throughout the lifecycle, organizations can build systems that are not only efficient but also resilient and secure. This integrated approach is no longer optional in today’s technology landscape; it is a fundamental requirement for sustainable and scalable success.