Python generators represent a specialized type of function designed to produce values one at a time rather than generating an entire collection in memory at once. This approach fundamentally changes how data is processed in programming because it shifts execution from an immediate, all-at-once computation model to a gradual, on-demand system. Instead of building and storing a complete list of results, a generator yields values incrementally as they are needed. This makes generators highly efficient when working with large datasets, continuous data streams, or computationally expensive operations.

In traditional programming, functions are designed to execute fully and return a single result. Once a function completes its execution, its memory state is discarded. Generators break this model by allowing a function to pause and resume execution while preserving its internal state. This behavior enables them to act like a controlled sequence producer that delivers values over time instead of producing everything in one step. Because of this, generators are widely used in performance-sensitive applications where memory efficiency is critical.

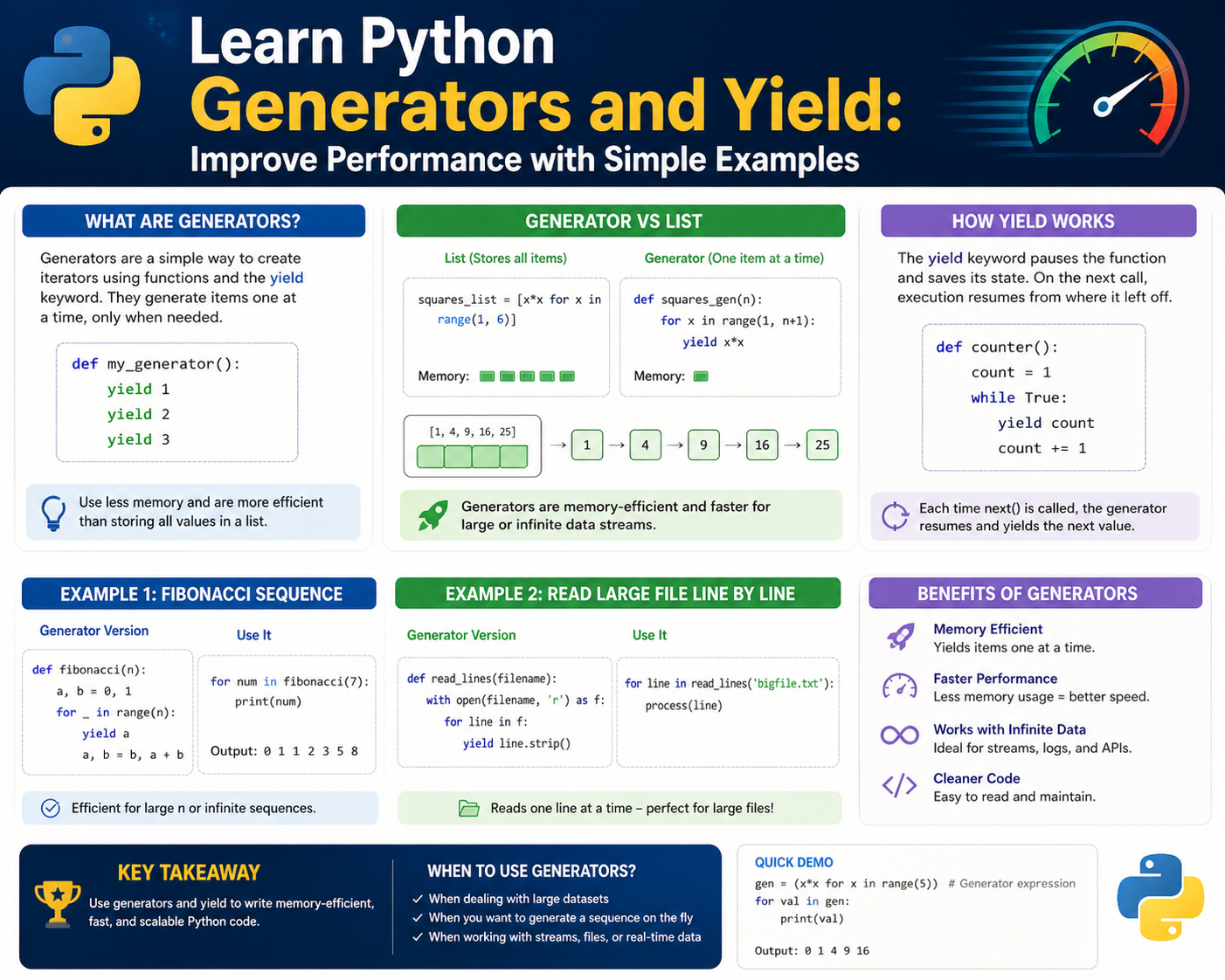

Generators are not separate constructs from functions; instead, they are created using standard function syntax but behave differently due to the inclusion of a special keyword. This keyword transforms a regular function into an iterable object that can be traversed step by step. The ability to pause execution and resume later is what makes generators a powerful tool in modern Python programming.

How Generator Functions Operate in Python Execution Flow

A generator function looks very similar to a standard function in structure, but its behavior during execution is fundamentally different. When a generator function is called, it does not execute immediately. Instead, it returns a generator object that can be iterated over. This object controls the execution flow of the function, allowing it to run incrementally rather than all at once.

Inside a generator function, execution is controlled by specific pause points that define where the function should temporarily stop and return a value. When execution reaches one of these points, the function produces a value and suspends its state. This suspended state includes all local variables, execution progress, and contextual information. When the generator is resumed, execution continues from exactly where it left off.

This behavior makes generator functions significantly different from traditional functions, which lose their internal state once execution is complete. Generators maintain continuity between executions, which allows them to operate more like ongoing processes rather than one-time computations. This is particularly useful in scenarios where data is processed step by step, such as reading large files or generating sequences dynamically.

The ability to pause execution without losing state is a key feature that allows generators to handle large workloads efficiently. Instead of recalculating or storing all results in memory, they continue execution from the last paused point, producing only the next required value.

The Role of Yield in Controlling Function Execution

The yield keyword is the defining feature that transforms a normal function into a generator. While return immediately ends a function and sends back a final result, yield operates differently by temporarily suspending execution. When a function encounters yield, it produces a value but does not terminate. Instead, it pauses at that exact point, preserving its internal state for future resumption.

This pause-and-resume mechanism allows functions to behave like iterative data producers. Each time execution resumes, the function continues from the exact point where it was paused, maintaining all previously computed values and variable states. This makes yield particularly useful for constructing sequences where each value depends on prior computation or where results are needed incrementally.

Unlike return, which ends a function permanently, yield allows multiple outputs to be produced over time. This enables a single function to act as a sequence generator rather than a single-result computation unit. The ability to suspend execution without losing context is what makes yield a core component of generator behavior.

This mechanism also introduces the concept of controlled execution flow, where a function does not run to completion immediately but instead progresses in steps. This step-by-step execution is essential for managing large-scale or infinite data sequences where full computation would be impractical.

Difference Between Return and Yield in Execution Behavior

Understanding the difference between return and yield is essential for grasping how generators work. A return statement immediately ends a function’s execution and sends back a single value to the caller. Once return is executed, the function ceases to exist in its current state, and no further computation occurs.

In contrast, yield does not terminate execution. Instead, it pauses the function and saves its current state. When the function is resumed, it continues execution from the exact point where it was paused. This allows a single function to produce multiple values over time rather than just one final result.

This difference significantly changes how programs handle data. With return, all computations must be completed before results are available. This means that large datasets must be fully processed and stored in memory before they can be used. With yield, results are produced incrementally, allowing data to be processed one piece at a time.

This incremental processing model reduces memory usage and improves efficiency, especially when dealing with large datasets. Instead of storing all results at once, only the current value is kept in memory, making generators ideal for high-performance applications.

State Preservation and Execution Continuity in Generators

One of the most powerful features of generators is their ability to preserve execution state between iterations. When a generator function is paused using yield, all local variables, execution progress, and contextual information are saved internally. This allows the function to resume execution exactly where it left off without losing any information.

This behavior is fundamentally different from regular functions, which reset their state each time they are called. Generators, however, maintain continuity between executions, effectively allowing them to function as long-running processes that produce values over time.

State preservation enables generators to handle complex workflows where each step depends on previous computations. Instead of recomputing values or restarting execution, the generator continues from its last paused state. This makes them highly efficient for tasks such as processing streams of data, reading large files line by line, or generating sequences dynamically.

By maintaining internal state, generators eliminate the need for external data storage or intermediate variables. This reduces memory overhead and simplifies program design, especially in scenarios where data flows continuously or grows indefinitely.

Lazy Evaluation and On-Demand Computation in Python Generators

Generators implement a concept known as lazy evaluation, where values are computed only when they are needed. Unlike eager evaluation, where all values are computed immediately, lazy evaluation delays computation until the moment a value is requested.

This approach significantly improves efficiency by avoiding unnecessary calculations. Instead of generating an entire dataset upfront, a generator produces values one at a time as they are required. This reduces both memory usage and processing time, especially when working with large or infinite sequences.

Lazy evaluation also enables generators to handle datasets that cannot fit into memory. By generating demand values, they avoid the need to store complete datasets, making it possible to work with extremely large or continuous data sources.

This on-demand computation model is one of the key reasons generators are widely used in performance-critical applications. It ensures that resources are used only when necessary, resulting in more efficient and scalable programs.

Iteration Behavior and Generator Object Structure

Generators are inherently iterable, meaning they can be traversed sequentially using iteration constructs. Each time a generator is iterated, it resumes execution from its last yield point, produces the next value, and then pauses again.

This iterative behavior allows generators to integrate seamlessly with looping structures, making them easy to use in sequential processing tasks. Unlike traditional loops that operate on precomputed datasets, generator-based loops compute values dynamically during iteration.

Each generator maintains an internal pointer that tracks its current position in the sequence. When iteration begins, the generator starts executing until it reaches the first yield point. After yielding a value, it pauses and waits for the next iteration request. This cycle continues until the generator exhausts all possible values.

Once all values have been produced, the generator signals completion and stops iteration. This natural termination behavior ensures that generators can be used safely in looping constructs without requiring explicit end conditions.

Efficient Data Processing Through Incremental Computation

Generators are particularly effective in scenarios where data must be processed incrementally rather than all at once. By producing values step by step, they allow systems to handle large workloads without overwhelming memory resources.

This incremental computation model is especially useful in data-intensive applications where datasets are too large to be stored entirely in memory. Instead of loading everything at once, generators process one element at a time, ensuring that memory usage remains constant regardless of dataset size.

This approach also improves responsiveness, as results become available immediately rather than after full computation. It enables real-time processing of data streams and continuous input sources, making generators a key tool in modern programming environments.

By combining lazy evaluation, state preservation, and iterative execution, generators provide a highly efficient mechanism for managing complex data workflows.

How Generator Execution Continues After Yield in Python

In Python generators, execution does not follow the traditional top-to-bottom flow seen in normal functions. Instead, it follows a segmented execution model where the function runs until it encounters a yield statement, pauses, and later resumes from the same location. This continuation behavior is what allows generators to function as stateful sequence producers rather than single-run computations.

When a generator is resumed, Python does not restart the function. Instead, it restores the previously saved execution frame, including local variables, loop counters, and intermediate computations. This restoration process ensures that the function behaves as though it never stopped executing, except that it waits for an external trigger to proceed.

This mechanism is what allows generators to produce sequences gradually. Each time execution resumes, the function advances slightly further, reaches the next yield, and pauses again. This cycle continues until no further yield statements remain, at which point the generator signals completion and stops producing values.

Unlike standard functions, which must execute fully before returning a result, generators allow partial execution. This makes them particularly effective in situations where only part of the result is needed at a time or where full computation would be inefficient or unnecessary.

Understanding Generator Objects and Internal State Tracking

When a generator function is called, it does not execute immediately. Instead, it returns a generator object. This object is responsible for controlling execution, maintaining state, and managing iteration. It acts as a bridge between the function definition and its step-by-step execution process.

The generator object contains an internal execution frame that stores all necessary information about the function’s current state. This includes variable values, loop positions, and the current instruction pointer. When the generator is paused, this state is preserved so that execution can resume seamlessly later.

This internal state tracking is what differentiates generators from regular functions. While normal functions discard their execution context after completion, generators preserve it indefinitely until the sequence is exhausted. This allows them to behave like paused computations that can be resumed at will.

The generator object also provides methods that allow external control over execution. Each time a value is requested, the generator resumes execution, produces the next value, and then pauses again. This controlled interaction model enables fine-grained control over how and when data is produced.

Step-by-Step Execution Flow Inside a Generator Function

The execution flow of a generator function follows a predictable pattern. When the function is first called, it does not execute its body. Instead, it returns a generator object. Execution begins only when the first value is requested.

At this point, the function starts running from the beginning and continues until it encounters a yield statement. When yield is reached, the current value is returned, and execution is paused. The function’s state is stored internally so that it can resume later.

When the next value is requested, execution resumes from the last yield point. The function continues running until it either encounters another yield or reaches the end of the function. This process repeats until all yield statements have been executed.

Once there are no more yield statements, the generator raises a termination signal indicating that the sequence is complete. This signal informs the calling environment that no more values are available.

This structured execution flow makes generators predictable and efficient. Instead of executing everything at once, they break computation into small, manageable steps.

How Yield Controls Data Flow in Generator Functions

The yield statement acts as a control mechanism that governs how data flows from a generator function to the calling environment. Unlike return, which sends a single value and terminates execution, yield sends a value while keeping the function alive.

Each yield acts as a checkpoint in the execution flow. When execution reaches yield, the current value is sent out, and the function pauses. This allows the calling environment to receive values one at a time without waiting for full computation.

When execution resumes, the function continues from the same checkpoint. This creates a controlled data flow where values are produced incrementally rather than all at once. This incremental approach is what makes generators efficient for large-scale data processing.

Yield also allows functions to behave cooperatively with external code. Instead of executing independently to completion, they respond to external requests for data. This creates a back-and-forth communication pattern between the generator and its caller.

Memory Efficiency and Resource Optimization in Generators

One of the most important advantages of generators is their ability to reduce memory usage. Traditional functions that return large datasets must store all results in memory before they can be used. This can become inefficient or even impossible when dealing with large datasets.

Generators avoid this problem by producing values one at a time. Instead of storing all results, they compute and discard each value after it is used. This means memory usage remains constant regardless of dataset size.

This efficiency is especially important in systems that handle large-scale data processing. By avoiding unnecessary storage, generators allow programs to operate smoothly even under heavy computational loads.

This memory optimization also reduces the risk of system crashes caused by excessive memory consumption. Since only one value is processed at a time, memory usage remains predictable and stable.

How Infinite Sequences Are Handled Using Generators

Generators are particularly useful for handling sequences that do not have a defined end. These infinite sequences cannot be stored in memory because they never terminate. Instead, they must be generated dynamically.

A generator can produce infinite sequences by using loops that do not have a fixed stopping condition. However, unlike traditional loops, generators do not compute all values at once. Instead, they generate each value only when requested.

This makes it possible to work with sequences that extend indefinitely without consuming additional memory. Each value is computed, returned, and then discarded, allowing the generator to continue indefinitely without performance degradation.

This capability is especially useful in scenarios involving real-time data streams or continuously changing data sources. The generator can keep producing values as long as they are needed.

Difference Between Iterators and Generators in Execution Design

While both iterators and generators are used for sequential data access, their internal design is different. Iterators require explicit implementation of methods to track state and produce values. Generators, on the other hand, automatically manage state using yield.

This means generators are simpler to write and maintain compared to custom iterators. The internal state management is handled automatically by Python, reducing the complexity of implementation.

Generators also provide a more natural syntax for defining sequences. Instead of manually implementing iteration logic, developers can define a sequence using yield statements.

This simplicity makes generators more accessible and widely used in modern Python programming.

How Looping Works with Generator-Based Sequences

Generators integrate seamlessly with looping structures. When used in a loop, the generator automatically produces values one at a time until it is exhausted.

Each iteration of the loop triggers the generator to resume execution, produce a value, and pause again. This continues until no more values are available.

This behavior allows generators to be used as drop-in replacements for lists in many cases. However, unlike lists, they do not store all values in memory.

This makes looping with generators highly efficient, especially when dealing with large datasets or continuous streams of data.

Internal Mechanics of Generator Suspension and Resumption

When a generator reaches a yield statement, Python performs a suspension operation. This involves saving the current execution state, including the instruction pointer, local variables, and function context.

When execution resumes, Python restores this state and continues execution from the exact point where it left off. This restoration process is what enables generators to behave like paused computations.

This mechanism is managed internally by Python’s runtime system, ensuring that state transitions are smooth and consistent.

The ability to suspend and resume execution without losing context is what makes generators unique compared to traditional functions.

Practical Impact of Generator Efficiency in Computation Workflows

Generators significantly improve computational efficiency in workflows that involve large datasets or continuous processing. By avoiding full data storage, they reduce memory usage and improve performance.

They also allow programs to start producing results immediately rather than waiting for full computation. This improves responsiveness in data-heavy applications.

In real-world systems, this means faster processing times, lower memory consumption, and better scalability.

Generators achieve this by focusing only on the next required value rather than the entire dataset.

How Generator-Based Design Improves System Scalability

Scalability is a key concern in modern computing systems, especially those dealing with large-scale data processing. Generators support scalability by ensuring that memory usage remains constant regardless of dataset size.

Since they process data incrementally, they can handle workloads that grow over time without requiring additional memory allocation.

This makes them suitable for applications that must process continuous or expanding data streams without performance degradation.

By reducing memory dependency, generators allow systems to scale more efficiently and reliably.

Complex Generator Patterns and Multi-Yield Execution Flow

Generators in Python are not limited to producing simple linear sequences of values. They can be structured to handle complex execution patterns where multiple yield statements exist within conditional logic, loops, and branching execution paths. This flexibility allows a single generator function to behave like a multi-stage processing engine that adapts its output based on runtime conditions.

When a generator contains multiple yield statements, execution does not follow a fixed output path. Instead, it dynamically determines which yield to execute based on the current state of computation. Each yield acts as a decision point where execution is paused, and a value is returned. When execution resumes, the function continues from that exact point, but the next yield encountered may differ depending on the internal logic.

This behavior allows generators to represent complex workflows in a compact structure. Instead of breaking logic into multiple functions or storing intermediate states externally, the generator itself manages progression through each stage. This makes it possible to design sequential processes that evolve dynamically over time.

In multi-yield structures, each yield contributes to a specific stage of computation. The generator may alternate between different output behaviors depending on conditions evaluated during execution. This creates a controlled flow where outputs are not just sequential but context-dependent.

The key advantage of this pattern is that it eliminates the need for storing intermediate results. Each stage is computed, yielded, and then discarded unless explicitly needed again. This keeps memory usage minimal, even in highly complex processing pipelines.

Stateful Computation and Persistent Execution Context in Generators

One of the most powerful characteristics of generators is their ability to maintain state across multiple executions. Unlike standard functions, which lose all internal data after completion, generators preserve their execution context between yield points.

This preserved state includes variable values, loop counters, conditional flags, and any intermediate computations. When execution resumes, the generator continues exactly where it left off, with all previous data intact. This creates the effect of a long-running process that evolves without restarting.

State persistence is essential in scenarios where computation depends on previous results. Instead of recalculating values or passing external state objects, generators maintain continuity internally. This reduces complexity and improves performance by avoiding redundant operations.

The execution context of a generator is stored in a suspended state until the next request for a value. When resumed, the generator reconstructs its environment and continues execution seamlessly. This mechanism is handled internally by the runtime system, ensuring consistency across execution cycles.

This ability to preserve state makes generators particularly useful in modeling workflows, simulations, and streaming systems where continuity is essential.

Infinite Data Streams and Continuous Generation Models

Generators are uniquely suited for handling infinite data streams, where the total number of values cannot be predetermined. In such cases, storing all values in memory is impossible, making traditional approaches inefficient or infeasible.

Instead of generating all values at once, a generator produces each value only when requested. This allows it to represent sequences that theoretically never end. The generator continues to produce values indefinitely, responding to each request with the next computed result.

This model is widely used in systems that require continuous data processing, such as monitoring systems, real-time analytics, and dynamic simulations. In these environments, data is not static but constantly evolving, requiring a mechanism that can adapt in real time.

The infinite generation model relies on controlled execution loops that do not terminate unless explicitly stopped. Each iteration produces a new value, which is immediately consumed or processed before the next iteration begins. This ensures that memory usage remains constant regardless of how long the generator runs.

The key insight behind infinite generators is that computation is decoupled from storage. Values are not stored in advance but generated on demand, allowing systems to operate indefinitely without memory exhaustion.

Lazy Evaluation Strategy and On-Demand Computation Efficiency

Generators implement a computational strategy known as lazy evaluation, where values are computed only when required rather than in advance. This approach fundamentally changes how programs handle data processing by shifting computation from upfront execution to runtime execution.

In a lazy evaluation model, no computation is performed until a value is explicitly requested. This means that unnecessary calculations are avoided entirely, improving efficiency and reducing resource consumption.

This strategy is particularly useful when dealing with large datasets or computationally expensive operations. Instead of processing all data at once, only the required portion is computed at any given time. This reduces both memory usage and processing overhead.

Lazy evaluation also improves responsiveness in systems where immediate results are needed. Since values are generated incrementally, results become available as soon as they are computed rather than after full processing is complete.

This model is especially effective in pipelines where data flows through multiple processing stages. Each stage processes values only when needed, ensuring that computation is distributed evenly over time rather than concentrated in a single execution block.

Generator-Based Iteration and Controlled Execution Loops

Generators integrate naturally with iteration mechanisms, allowing them to be used in looping structures without additional complexity. Each iteration triggers the generator to resume execution, produce a value, and then pause again.

This controlled execution loop continues until the generator has no more values to produce. At that point, it signals completion and stops iteration automatically.

This behavior allows generators to function as dynamic data sources that can be seamlessly integrated into processing loops. Unlike static data structures, generators produce values in real time, adapting to execution flow as needed.

The iteration process is tightly controlled, ensuring that only one value is processed at a time. This prevents unnecessary memory usage and allows programs to handle large or infinite datasets efficiently.

Each iteration represents a discrete step in computation, making generators ideal for stepwise processing models where each stage depends on the previous one.

Memory Optimization Through Streaming Computation Models

One of the most significant advantages of generators is their ability to optimize memory usage through streaming computation. Instead of storing entire datasets, generators process and discard values one at a time.

This streaming model ensures that memory consumption remains constant regardless of input size. Whether processing a small dataset or a massive data stream, the memory footprint remains stable.

This is particularly important in environments where memory resources are limited or where datasets are too large to fit into memory. By processing data incrementally, generators eliminate the need for large memory allocations.

Streaming computation also improves system stability by reducing the risk of memory overflow. Since only a small portion of data is held in memory at any time, the system can operate continuously without degradation.

This approach aligns with modern data processing requirements, where scalability and efficiency are critical factors in system design.

Execution Suspension and Resume Mechanisms in Depth

The ability of a generator to pause and resume execution is one of its most advanced features. When a yield statement is executed, the current state of the function is saved, including all variables, execution pointers, and context information.

This state is stored internally until the next value is requested. When execution resumes, the function restores this state and continues from the exact point of suspension.

This process is transparent to the developer but plays a crucial role in enabling generator functionality. It allows functions to behave like paused computations that can be resumed at any time without loss of data.

The suspension mechanism ensures that execution is efficient and consistent. No recomputation is required, and no data is lost between execution cycles. This makes generators highly reliable for long-running or complex processes.

Scalable Processing Architectures Using Generators

Generators provide a foundation for scalable processing architectures by enabling incremental computation. Since they process data one element at a time, they can handle workloads of virtually any size without increasing memory usage.

This scalability makes them suitable for systems that must handle growing or unpredictable data volumes. As data size increases, generator-based systems maintain consistent performance because they do not rely on preloading or storing entire datasets.

Instead, computation scales linearly with processing time rather than memory usage. This decoupling of memory and data size is a key factor in building efficient large-scale systems.

Generators also allow workloads to be distributed over time, reducing peak resource usage and improving system responsiveness.

Dynamic Workflow Construction Using Generator Logic

Generators can be used to construct dynamic workflows where each stage of processing depends on runtime conditions. Instead of defining static execution paths, generators allow workflows to evolve based on input data and internal state.

Each yield represents a stage in the workflow, and execution flow can change dynamically depending on conditions evaluated during runtime. This allows for highly flexible processing pipelines that adapt to changing requirements.

This dynamic behavior eliminates the need for complex control structures or external state management systems. The generator itself manages progression through the workflow, simplifying overall design.

Long-Term Efficiency Gains in Generator-Based Systems

Over time, systems built using generators tend to exhibit significant efficiency gains compared to traditional approaches. By reducing memory usage, minimizing redundant computation, and enabling incremental processing, generators create more sustainable and scalable systems.

These efficiency gains become more pronounced as data size increases. While traditional approaches may struggle with scaling, generator-based systems maintain consistent performance regardless of workload size.

This makes them a foundational tool in modern programming environments where efficiency and scalability are critical design requirements.

Conclusion

Python generators represent a fundamental shift in how data processing is approached in modern programming. Instead of relying on full computation and memory-heavy data structures, generators introduce a model where values are produced incrementally and only when required. This shift from eager computation to lazy evaluation is not just a technical optimization but a conceptual change in how programmers think about efficiency, scalability, and system design. By using yield, Python enables functions to become pauseable execution units that can resume exactly where they left off, preserving state and reducing unnecessary computation.

The importance of this mechanism becomes clearer when considering real-world applications where data volume is large, unpredictable, or continuous. Traditional approaches that require full dataset loading quickly become inefficient as data scales. Memory consumption increases proportionally with dataset size, often leading to performance bottlenecks or system failures. Generators eliminate this limitation by ensuring that only one value is processed at a time. This incremental processing model ensures that memory usage remains stable regardless of how large the input becomes, making generators especially useful in large-scale systems.

Another critical insight is the way generators simplify complex workflows. In traditional programming models, developers often need to manage intermediate states manually, store temporary results, or design elaborate control structures to handle sequential processing. Generators remove much of this overhead by embedding state management directly into the function itself. The execution context is preserved automatically between yield statements, allowing developers to focus on logic rather than memory management or data persistence. This leads to cleaner, more maintainable code structures that are easier to reason about and debug.

The role of yield in this system is particularly significant. It acts as a controlled interruption point within execution, allowing a function to pause without losing context. This ability to suspend and resume execution transforms functions into interactive data producers rather than static computation units. Each yield statement becomes a checkpoint in the execution flow, enabling stepwise progression through a sequence of operations. This mechanism is especially powerful in scenarios where partial results are needed immediately or where full computation is unnecessary.

Generators also introduce a more efficient approach to iteration. Instead of building entire datasets in memory and then looping over them, generators produce values dynamically during iteration. This means that loops become lightweight execution structures that request data only when needed. As a result, programs can handle much larger datasets without increasing memory consumption. This behavior aligns well with modern computing demands, where systems are expected to process large streams of data in real time without performance degradation.

The ability to handle infinite sequences further highlights the flexibility of generators. In traditional programming, infinite sequences are impossible to represent explicitly because they would require infinite memory. However, generators bypass this limitation by producing values on demand rather than storing them. Each value is computed only when requested, allowing sequences to continue indefinitely without exhausting system resources. This makes generators suitable for modeling continuous processes, streaming data systems, and simulations that do not have a predefined endpoint.

From an architectural perspective, generators encourage a shift toward streaming-based computation models. Instead of processing data in large batches, systems can process data in continuous flows. This approach reduces latency, improves responsiveness, and ensures that systems remain stable even under heavy workloads. By processing data incrementally, generators allow systems to scale more effectively without requiring proportional increases in memory or processing power.

Another important aspect is the way generators improve modularity in program design. Since each generator function is responsible for producing a sequence of values, it can be composed with other generators to form processing pipelines. These pipelines allow data to flow through multiple transformation stages without requiring intermediate storage. Each stage processes values as they arrive, making the entire system more efficient and easier to extend. This modular structure supports flexible system design where components can be added, removed, or modified without disrupting the overall workflow.

Generators also contribute to better resource management in computational systems. By limiting active memory usage to only the current value being processed, they reduce the risk of memory overflow and improve system stability. This is particularly valuable in environments with constrained resources or high concurrency demands. Instead of allocating large memory blocks for data storage, generators distribute computation over time, ensuring balanced resource usage.

In addition, generators align well with modern asynchronous and event-driven programming paradigms. Their ability to pause and resume execution mirrors the behavior of systems that respond to external events or signals. This makes them suitable for integration into systems that require non-blocking execution, real-time data handling, or continuous processing workflows. The underlying concept of producing data incrementally fits naturally into these environments, enhancing system responsiveness and efficiency.

Overall, the concept of generators represents a powerful abstraction in Python programming. It combines efficiency, flexibility, and simplicity into a single construct that addresses many of the limitations found in traditional data processing approaches. By leveraging yield and incremental execution, developers can build systems that are capable of handling large-scale, continuous, or infinite data flows without compromising performance or stability. This makes generators not just a technical feature, but a foundational tool for modern software development practices where scalability and efficiency are essential requirements.