The internet was originally built to encourage openness, information sharing, and global connectivity, allowing data to move freely between systems across countries and organizations. While this openness has supported innovation and communication at an unprecedented scale, it has also introduced serious risks when sensitive information is transmitted across public networks. Every message, file, or transaction sent online travels through multiple systems before reaching its destination, and without protection, any of these points can become a vulnerability. Encryption addresses this challenge by transforming readable information into a secure, coded format that cannot be understood without proper authorization. This transformation ensures that even if data is intercepted during transmission, it remains meaningless to anyone who does not possess the correct key or method to decode it. In modern digital environments, encryption is not limited to advanced security systems but is integrated into everyday activities such as messaging, online banking, cloud storage, and corporate communications. It acts as a foundational technology that supports trust in digital interactions by ensuring confidentiality, integrity, and controlled access to information. Without encryption, secure digital communication would not be possible, and users would be exposed to constant risks of data theft and unauthorized surveillance.

Understanding the Purpose and Structure of Encryption Algorithms

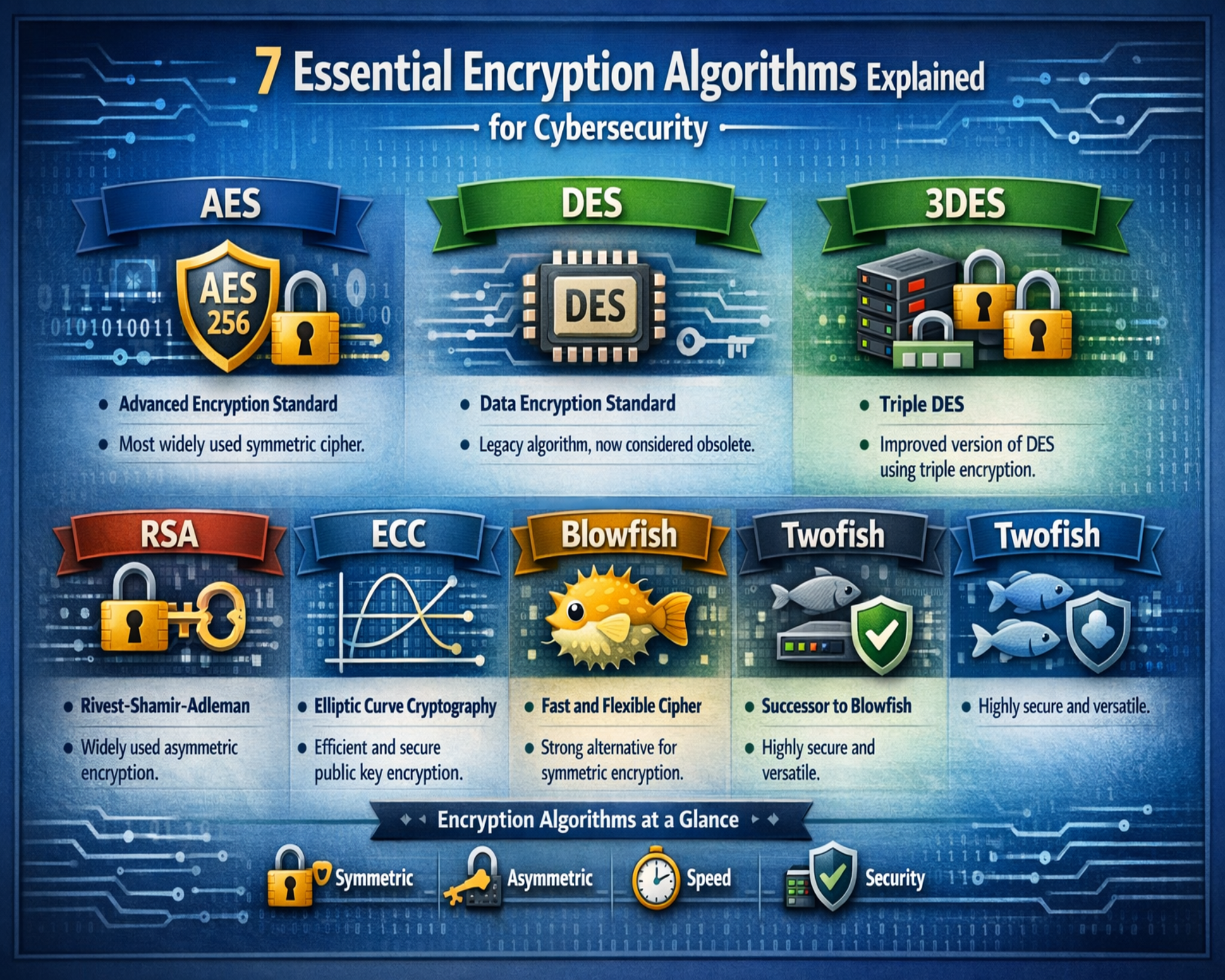

An encryption algorithm is a carefully designed mathematical process that transforms readable data, known as plaintext, into unreadable data called ciphertext. The purpose of this transformation is to protect information from being accessed or understood by unauthorized individuals. At the core of every encryption system is a set of logical rules that define how data is processed. These rules are structured in a way that ensures consistent and predictable results when the same inputs are used, which is essential for both encryption and decryption. The algorithm works alongside a cryptographic key, which serves as the controlling factor in the transformation process. Without the correct key, reversing the encrypted data back into its original form becomes computationally impractical. Encryption algorithms rely heavily on advanced mathematical concepts such as substitution, permutation, and modular arithmetic to create complex patterns that are difficult to analyze or break. The strength of an encryption algorithm is determined by how resistant it is to various forms of attack, including brute force attempts, pattern analysis, and cryptographic exploitation. Over time, encryption algorithms have evolved to become more sophisticated, adapting to increasing computational power and new security threats in the digital landscape.

The Importance of Symmetric Encryption in Data Protection

Symmetric encryption plays a critical role in protecting large volumes of data efficiently and quickly. In this method, the same cryptographic key is used for both encrypting and decrypting information, meaning that both the sender and receiver must share access to this secret key. Because the same key is used on both sides of the process, symmetric encryption is generally faster and less resource-intensive compared to other encryption methods. This makes it particularly suitable for scenarios where large datasets need to be processed, such as database encryption, file storage security, and internal system communications within organizations. However, the primary challenge in symmetric encryption lies in secure key distribution. Since both parties require the same key, it must be shared in a secure manner before communication begins. If the key is intercepted or exposed, the entire security model becomes compromised. Despite this challenge, symmetric encryption remains widely used due to its efficiency and strong performance in high-speed environments. It is often combined with other cryptographic techniques to create hybrid security systems that balance speed and protection effectively.
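The defining property described above, one shared key for both directions, can be seen in a small sketch. This is a toy construction for illustration only (a keystream derived with SHA-256 in a counter arrangement and XORed with the data), not a vetted cipher; the names `keystream`, `xor_crypt`, `shared_key`, and `nonce` are assumptions introduced here. Real systems would use a standard algorithm such as AES from an audited library.

```python
import hashlib
import secrets


def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Derive `length` pseudorandom bytes from the key and nonce (toy construction)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def xor_crypt(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """XOR the data with the keystream; the same call encrypts and decrypts."""
    stream = keystream(key, nonce, len(data))
    return bytes(a ^ b for a, b in zip(data, stream))


shared_key = secrets.token_bytes(32)  # must reach both parties over a secure channel
nonce = secrets.token_bytes(16)       # fresh for every message, does not need to be secret

plaintext = b"quarterly payroll export"
ciphertext = xor_crypt(shared_key, nonce, plaintext)
recovered = xor_crypt(shared_key, nonce, ciphertext)
```

Anyone holding `shared_key` can run the same function to read the message, which is exactly why distributing that key safely is the hard part.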

The Role of Asymmetric Encryption in Secure Systems

Asymmetric encryption introduces a more advanced approach to securing data by using two different but mathematically related keys. One key is known as the public key, which can be shared openly without compromising security, while the other is the private key, which must remain confidential and protected by the owner. Data encrypted with the public key can only be decrypted using the corresponding private key, creating a secure one-way relationship between the two. This structure eliminates the need to share secret keys directly, which significantly reduces the risk of interception during transmission. Asymmetric encryption is widely used in secure communications over public networks, including secure websites, digital signatures, and authentication systems. It also plays a key role in establishing secure connections before symmetric encryption is used for faster data transfer. Although asymmetric encryption provides a higher level of security in key exchange processes, it requires more computational power and is therefore slower than symmetric methods. This trade-off between security and performance makes it an essential component of modern cryptographic systems, particularly in environments where identity verification and secure key exchange are critical.

Evolution and Function of Triple DES Encryption

Triple DES, often abbreviated as 3DES, is a strengthened version of the original Data Encryption Standard. Instead of applying DES once, it runs three DES operations on each 64-bit block of data, conventionally in an encrypt-decrypt-encrypt sequence, with each stage using its own 56-bit key. With three independent keys the nominal key length is 168 bits, although meet-in-the-middle attacks reduce the effective strength to roughly 112 bits, which is still far beyond the brute force searches that defeated single DES. Triple DES is a symmetric block cipher, meaning it processes data in fixed-size blocks and uses the same key material for both encryption and decryption. It gained popularity in industries that required reliable data protection, particularly financial systems and payment processing environments. As computing technology advanced, however, its limitations became more apparent: the three passes make it roughly three times slower than single DES, and its small 64-bit block size becomes a weakness when large volumes of data are encrypted under one key. As a result, newer standards such as AES have largely replaced it, although it remains relevant in legacy systems where compatibility is still required.
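The three-pass structure can be written compactly. In the sketch below, the symbols are assumptions introduced for illustration: E and D denote single DES encryption and decryption, K1, K2, K3 are the three DES keys, P is a plaintext block, and C is the resulting ciphertext block. The common arrangement is encrypt, decrypt, encrypt:

```latex
C = E_{K_3}\bigl(D_{K_2}\bigl(E_{K_1}(P)\bigr)\bigr),
\qquad
P = D_{K_1}\bigl(E_{K_2}\bigl(D_{K_3}(C)\bigr)\bigr)
```

One consequence of using a decryption step in the middle is that setting all three keys equal collapses the construction to single DES, which helped with backward compatibility.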

Fundamentals of AES and Its Widespread Adoption

The Advanced Encryption Standard is one of the most important and widely used encryption algorithms in modern cybersecurity systems. It is designed as a symmetric block cipher that processes data through multiple rounds of transformation, each adding layers of complexity to the encrypted output. AES supports different key sizes, including 128-bit, 192-bit, and 256-bit configurations, allowing users to choose the appropriate balance between performance and security. The encryption process involves structured transformations such as substitution, permutation, mixing, and key addition, all of which contribute to creating a highly secure ciphertext. AES is recognized for its strong resistance to attacks and its efficiency in both software and hardware implementations. It is used extensively in securing government communications, financial systems, wireless networks, and personal data storage solutions. Its design ensures that even small changes in the input data produce significantly different encrypted outputs, making pattern recognition extremely difficult for attackers. Due to its reliability and performance, AES has become a global standard in data encryption and continues to be a foundational element in modern security architectures.

Structural Characteristics of Twofish Encryption

Twofish is a symmetric block cipher designed to provide strong security while remaining flexible and efficient. It encrypts data in 128-bit blocks and accepts keys of 128, 192, or 256 bits, allowing users to match the security level to their requirements. One of its key features is the use of key-dependent substitution boxes: rather than relying on fixed lookup tables, the non-linear substitutions are derived from the key itself, which makes it harder to identify patterns in the encrypted data. It was developed by a team led by Bruce Schneier as a successor to the earlier Blowfish cipher, with the aim of improving both speed and security. The algorithm performs well in both software and hardware, making it suitable for a wide range of applications, and no practical attack on the full cipher is publicly known. Although not as widely adopted as AES, Twofish remains a respected encryption method because of its conservative design and robust security properties.

IDEA Encryption and Its Historical Significance

The International Data Encryption Algorithm (IDEA) is a symmetric block cipher that played an important role in early digital security systems. It uses a 128-bit key to perform multiple rounds of transformation on 64-bit data blocks, ensuring that the original information becomes highly obscured during encryption. Each round combines three operations on 16-bit words: addition modulo 2^16, multiplication modulo 2^16 + 1, and bitwise exclusive OR. Because these operations come from different algebraic groups, mixing them makes it difficult for attackers to reverse-engineer the original data without the correct key. IDEA was widely used in early secure communication systems, most notably as the cipher in early versions of the PGP email encryption software. Its design focused on balancing security and efficiency at a time when computing resources were limited. Although newer encryption standards have largely replaced IDEA in modern applications, it remains an important milestone in the development of cryptographic systems and an early demonstration of how a few simple arithmetic operations can be layered into a strong cipher.

Introduction to RC6 and Its Variable Structure Design

RC6 is a symmetric block cipher that builds on the earlier RC5 design while keeping its flexible, parameterized structure. Rather than fixing every parameter, RC6 lets the word size (and therefore the block size), the number of rounds, and the key length be chosen to suit the application. This adaptability makes it suitable for different security requirements and computational environments. The algorithm increases encryption complexity by combining data-dependent rotations with integer multiplication, modular addition, and exclusive OR, which together increase resistance to cryptographic attacks. It supports large key sizes and can be configured for different levels of encryption strength. The version submitted to the Advanced Encryption Standard competition used 128-bit blocks, 20 rounds, and keys of 128, 192, or 256 bits. RC6's design emphasizes efficiency without compromising security, allowing it to perform well in both software and hardware implementations. Although it is far less widely implemented than AES, it remains an instructive example of advanced symmetric encryption design.

RSA Encryption and Its Role in Public Key Security Systems

RSA is one of the most important and widely recognized asymmetric encryption algorithms used in modern cybersecurity systems. Its security rests on the difficulty of factoring a large composite number that is the product of two large primes, which makes it secure when implemented with sufficiently large key sizes and proper padding. In RSA, two large prime numbers are multiplied together to generate a modulus, which forms the foundation of both the public and private keys. The public key is used to encrypt data, while the private key is used to decrypt it, ensuring that only the intended recipient can access the original information. This separation of keys allows secure communication over untrusted networks without the need to share a secret key in advance, which is a major advantage over symmetric encryption methods. RSA is commonly used in secure web communications, digital signatures, email encryption, and authentication processes. Its strength lies in the computational difficulty of reversing the encryption without the private key, even when the public key is widely available. However, RSA requires significantly more processing power than symmetric algorithms, which makes it slow for encrypting large volumes of data. As a result, it is usually used to exchange symmetric keys securely rather than to encrypt entire datasets directly. This hybrid approach combines the key-distribution advantages of RSA with the efficiency of symmetric encryption to create robust cryptographic systems used across modern digital infrastructure.
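The key generation and encryption steps described above can be followed with deliberately tiny numbers. This is a textbook sketch under stated assumptions, not a usable implementation: the primes 61 and 53, the exponent 17, and the message 65 are illustrative choices, and real RSA uses primes of roughly a thousand bits or more together with a padding scheme. The modular inverse via `pow(e, -1, phi)` needs Python 3.8 or later.

```python
from math import gcd

# Toy parameters, far too small to be secure.
p, q = 61, 53
n = p * q                 # modulus shared by both keys
phi = (p - 1) * (q - 1)   # Euler's totient of n

e = 17                    # public exponent, must be coprime with phi
assert gcd(e, phi) == 1
d = pow(e, -1, phi)       # private exponent, satisfies (e * d) % phi == 1

message = 65                        # a message encoded as an integer below n
ciphertext = pow(message, e, n)     # encrypt with the public key (e, n)
recovered = pow(ciphertext, d, n)   # decrypt with the private key (d, n)

print(n, d, ciphertext, recovered)
```

An attacker who could factor `n` back into `p` and `q` could recompute `phi` and `d`, which is why the modulus must be large enough that factoring it is impractical.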

Diffie-Hellman Key Exchange and Secure Key Sharing Mechanisms

The Diffie-Hellman key exchange is a fundamental cryptographic method that enables two parties to securely generate a shared secret key over an insecure communication channel. Unlike traditional encryption methods, Diffie-Hellman does not directly encrypt or decrypt data but instead focuses on securely establishing a shared key that can later be used for encryption. The process involves both parties agreeing on public parameters and then independently generating private values that are combined mathematically to produce a shared secret. Even though the public information used in the exchange is visible to potential attackers, the private values remain hidden, making it extremely difficult to derive the final shared key. This method is widely used in secure communication protocols, including encrypted internet connections and virtual private networks. One of the key advantages of Diffie-Hellman is that it eliminates the need to transmit secret keys directly, reducing the risk of interception during transmission. However, its security depends on proper implementation and the use of sufficiently large key sizes to resist modern computational attacks. Over time, enhanced versions of the algorithm have been developed to improve security and prevent vulnerabilities such as man-in-the-middle attacks, which can occur if authentication is not properly implemented alongside the key exchange process.
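The exchange described above can be traced in a few lines. This is a minimal sketch with toy parameters: the prime `p = 23`, the base `g = 5`, and the names `dh_keypair`, `alice_*`, and `bob_*` are assumptions for illustration. Real deployments use groups of 2048 bits or more (or elliptic curves) and authenticate the exchanged public values.

```python
import secrets

# Public parameters, visible to everyone including an eavesdropper.
p = 23  # prime modulus (toy size)
g = 5   # base, a generator modulo p


def dh_keypair():
    """Pick a random private value and compute the matching public value."""
    private = secrets.randbelow(p - 3) + 2  # private value in [2, p - 2]
    public = pow(g, private, p)
    return private, public


alice_private, alice_public = dh_keypair()
bob_private, bob_public = dh_keypair()

# Only the public values cross the network. Each side combines the other
# party's public value with its own private value.
alice_secret = pow(bob_public, alice_private, p)
bob_secret = pow(alice_public, bob_private, p)
```

Both sides arrive at the same number because (g^a)^b and (g^b)^a are equal modulo p. Nothing in this sketch proves who sent each public value, which is the gap a man-in-the-middle attacker exploits when authentication is missing.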

Understanding the Structure and Strength of Twofish Encryption

Twofish is a symmetric block cipher designed to offer a high level of security while maintaining flexibility and performance. It divides data into 128-bit blocks and applies 16 rounds of transformation, arranged as a Feistel network, to convert plaintext into ciphertext. One of its key features is its use of substitution boxes, which introduce non-linear transformations into the encryption process. These transformations obscure patterns in the original data, making it significantly more difficult for attackers to analyze or predict encrypted outputs. Twofish supports key lengths of 128, 192, and 256 bits, which allows users to select the level of security their requirements call for. The algorithm was designed with both software and hardware efficiency in mind, making it suitable for applications ranging from secure file storage to embedded systems. Twofish was one of the five finalists in the competition to choose the Advanced Encryption Standard, but the Rijndael algorithm was selected instead. It nevertheless continues to be respected in the cybersecurity community for its strong design principles and resistance to known cryptographic attacks.

International Data Encryption Algorithm and Its Historical Importance

The International Data Encryption Algorithm is a symmetric encryption method that played a significant role in the development of early secure communication systems. It uses a 128-bit key to encrypt and decrypt data, making it more secure than earlier encryption standards that relied on shorter key lengths. IDEA operates on fixed-size data blocks and applies multiple rounds of mathematical transformations to achieve encryption. These transformations include modular addition, multiplication, and bitwise operations, which collectively ensure that the original data is thoroughly obscured. The algorithm was widely used in early email encryption systems and played an important role in securing digital communications during the early growth of the internet. One of its key strengths is its balanced design, which offers both strong security and reasonable performance for its time. IDEA’s structure ensures that even small changes in the input data produce significantly different encrypted outputs, making it resistant to pattern-based attacks. Although it has been largely replaced by newer encryption standards in modern systems, IDEA remains an important milestone in the evolution of cryptography and helped shape the design of future encryption algorithms by demonstrating the effectiveness of layered mathematical transformations in data protection.

RC6 Encryption and Its Flexible Design Architecture

RC6 is a symmetric encryption algorithm that introduces flexibility into traditional block cipher design by allowing variable block sizes, key lengths, and processing rounds. This adaptability makes RC6 suitable for different levels of security and performance requirements. The algorithm is based on mathematical operations that include modular addition, bitwise shifts, and data-dependent rotations, which contribute to its strong encryption capabilities. RC6 builds upon earlier encryption methods by enhancing both efficiency and complexity, making it more resistant to modern cryptographic attacks. Its design allows it to process data in a way that increases unpredictability, making it difficult for attackers to identify patterns or reverse-engineer the encryption process. RC6 was developed as part of an effort to create a new global encryption standard and was designed to be scalable across different computing environments. Although it was not ultimately selected as the final standard, it remains an important example of advanced cryptographic design. Its flexible structure allows it to be adapted for various applications, including secure communications and data protection systems. RC6 demonstrates how encryption algorithms can evolve to meet increasing security demands while maintaining efficiency in processing.
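The operations named above can be sketched for one word of the cipher state. This is a partial illustration under stated assumptions, not a complete RC6 implementation: it assumes 32-bit words, omits the key schedule and the other half of the round, and the function names `rotl32`, `mix`, and `half_round` are hypothetical.

```python
MASK32 = 0xFFFFFFFF  # assumption: 32-bit words


def rotl32(x: int, n: int) -> int:
    """Rotate a 32-bit word left by n bits (only the low 5 bits of n are used)."""
    n &= 31
    return ((x << n) | (x >> (32 - n))) & MASK32


def mix(word: int) -> int:
    """Quadratic function word * (2 * word + 1) modulo 2**32, rotated left by 5."""
    return rotl32((word * (2 * word + 1)) & MASK32, 5)


def half_round(a: int, b: int, d: int, round_key: int) -> int:
    """Update word a: XOR it with mix(b), rotate by an amount taken from
    mix(d), then add a round key modulo 2**32."""
    t = mix(b)
    u = mix(d)
    return (rotl32(a ^ t, u) + round_key) & MASK32
```

The rotation amount `u` depends on the data being encrypted rather than on a fixed constant, which is what "data-dependent rotation" means and what makes the cipher's behavior harder to predict.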

Role of Public Key Infrastructure in Modern Encryption Systems

Public Key Infrastructure is a framework that supports the use of asymmetric encryption by managing digital keys, certificates, and identity verification processes. It provides the foundation for secure communication in digital environments by ensuring that public keys are properly associated with verified identities. This system relies on trusted authorities that issue digital certificates, which confirm the authenticity of public keys used in encryption and digital signatures. Without this structure, users would have no reliable way to determine whether a public key truly belongs to the intended recipient, creating opportunities for impersonation and data interception. Public Key Infrastructure plays a crucial role in securing online transactions, secure websites, email communication, and identity verification systems. It works alongside encryption algorithms such as RSA and Diffie-Hellman to create a complete security framework that protects data integrity and confidentiality. The system ensures that encrypted communication is not only secure but also trustworthy by verifying the identities of all parties involved. As digital communication continues to expand, Public Key Infrastructure remains essential for maintaining secure and reliable information exchange across networks.

Mathematical Foundations Behind Modern Encryption Algorithms

Encryption algorithms are built on mathematical principles that ensure data security through structured transformations. These principles include number theory, modular arithmetic, algebraic structures, and probability theory. Large prime numbers are especially important in asymmetric systems: RSA depends on the difficulty of factoring the product of two large primes, while Diffie-Hellman depends on the difficulty of the discrete logarithm problem. In symmetric encryption, substitution and permutation are used to create confusion (hiding the relationship between key and ciphertext) and diffusion (spreading the influence of each input bit across the output), making it difficult to identify original patterns. Together these ensure that even a small change in the input produces a drastically different output, often called the avalanche effect, which is a key requirement for secure encryption systems. The strength of an encryption algorithm depends on how effectively it can resist mathematical attacks that attempt to reverse these transformations. As computational power continues to increase, encryption algorithms must evolve to maintain their security by increasing key sizes and improving structural complexity. The relationship between mathematics and encryption is fundamental, as every secure system relies on carefully designed mathematical operations that balance efficiency and resistance to attack.

Hybrid Encryption Systems in Modern Cybersecurity

Modern encryption systems often combine both symmetric and asymmetric encryption methods to achieve a balance between security and performance. This approach is known as hybrid encryption and is widely used in real-world applications such as secure web communication and data transmission. In a typical hybrid system, asymmetric encryption is used to securely exchange a symmetric key between parties. Once the key is exchanged, symmetric encryption is used to encrypt the actual data being transmitted. This combination allows systems to take advantage of the speed of symmetric encryption while maintaining the secure key exchange capabilities of asymmetric encryption. Hybrid encryption is particularly useful in environments where large amounts of data need to be transmitted securely over public networks. It ensures that sensitive information remains protected while maintaining efficient communication performance. This approach has become a standard practice in modern cybersecurity architecture, providing a practical solution to the limitations of using either encryption method alone.
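The two-step flow described above can be sketched end to end. Everything here is a toy under loudly stated assumptions: the RSA key is built from two Mersenne primes purely to keep the example short, no padding is applied, and the symmetric part is a SHA-256 counter keystream rather than a standard cipher. The names `stream_xor`, `hybrid_encrypt`, and `hybrid_decrypt` are hypothetical. Requires Python 3.8 or later.

```python
import hashlib
import secrets

# Recipient's toy RSA key pair (illustrative primes, not a secure choice).
P, Q = 2**61 - 1, 2**89 - 1
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))


def stream_xor(key: bytes, data: bytes) -> bytes:
    """Toy symmetric cipher: XOR the data with a SHA-256 counter keystream."""
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


def hybrid_encrypt(plaintext: bytes):
    session_key = secrets.token_bytes(16)  # fresh symmetric key per message
    # Asymmetric step: wrap the small session key with the public key (E, N).
    wrapped_key = pow(int.from_bytes(session_key, "big"), E, N)
    # Symmetric step: encrypt the bulk data with the session key.
    return wrapped_key, stream_xor(session_key, plaintext)


def hybrid_decrypt(wrapped_key: int, ciphertext: bytes) -> bytes:
    # Unwrap the session key with the private key (D, N), then decrypt the data.
    session_key = pow(wrapped_key, D, N).to_bytes(16, "big")
    return stream_xor(session_key, ciphertext)


document = b"large report " * 1000
wrapped, body = hybrid_encrypt(document)
restored = hybrid_decrypt(wrapped, body)
```

The slow public-key operation touches only 16 bytes, while the 13,000 bytes of data go through the fast symmetric path, which is the performance argument for the hybrid design.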

Advanced Encryption Standard and Its Dominance in Modern Security Systems

The Advanced Encryption Standard is widely recognized as one of the most secure and efficient symmetric encryption algorithms in use today. It was standardized by NIST in 2001 to replace DES, which was no longer strong enough to withstand modern computational attacks. AES operates by dividing data into 128-bit blocks and applying multiple rounds of transformation to each block. Each round combines byte substitution, row shifting, column mixing, and round key addition, which work together to create a highly complex ciphertext that is extremely difficult to reverse without the correct key. The algorithm supports three key lengths, 128, 192, and 256 bits, allowing organizations to choose the appropriate level of security based on their requirements. The number of rounds increases with key size (10, 12, and 14 respectively), which further strengthens the encryption. AES is widely used across industries for securing sensitive data such as financial transactions, government communications, personal files, and corporate databases. Its efficiency in both software and hardware implementations makes it suitable for a wide range of devices, from high-performance servers to mobile devices. One of the key reasons for its widespread adoption is its strong resistance to known cryptographic attacks, including brute force and differential cryptanalysis. The structure of AES ensures that even small changes in the input produce significantly different outputs, making it extremely difficult for attackers to identify patterns or reconstruct the original data.
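The relationship between key length and round count follows a simple rule: the number of rounds equals the key length in 32-bit words plus six. The small helper below, whose name `aes_rounds` is an assumption for illustration, makes that concrete.

```python
def aes_rounds(key_bits: int) -> int:
    """Return the number of AES rounds for a key length given in bits."""
    if key_bits not in (128, 192, 256):
        raise ValueError("AES keys are 128, 192, or 256 bits long")
    # Rounds = (key length in 32-bit words) + 6
    return key_bits // 32 + 6


for bits in (128, 192, 256):
    print(f"AES-{bits}: {aes_rounds(bits)} rounds")
```

This prints 10, 12, and 14 rounds for the three key sizes, so a longer key costs somewhat more work per block in exchange for a larger security margin.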

Triple DES and the Transition from Legacy Encryption Systems

Triple DES represents an important stage in the evolution of encryption technology, serving as an enhanced version of the original Data Encryption Standard. It was developed to address the growing vulnerabilities of DES, which became increasingly insecure due to advances in computing power. Triple DES improves security by applying the DES encryption process three times to each data block, significantly increasing the complexity of the encrypted output. This repeated encryption process effectively extends the key length and makes brute force attacks far more difficult. Triple DES operates as a symmetric block cipher and processes data in fixed-size blocks, making it suitable for structured data encryption tasks. It was widely used in financial systems, banking networks, and electronic payment processing due to its reliability and compatibility with existing systems. However, despite its improved security compared to DES, Triple DES is relatively slow when compared to modern encryption standards. The multiple encryption stages that enhance its security also reduce its performance efficiency, especially in high-volume data environments. As a result, it has gradually been replaced by more efficient algorithms such as AES. Even though it is no longer considered a modern standard, Triple DES remains relevant in legacy systems where backward compatibility is still required.

RSA and the Mathematical Complexity Behind Public Key Encryption

RSA encryption is based on the mathematical challenge of factoring large composite numbers into their prime components. This difficulty forms the foundation of its security model. In RSA, two large prime numbers are multiplied together to create a modulus, which is used to generate both the public and private keys. The public key is openly distributed and used for encrypting data, while the private key is kept secret and used for decryption. The strength of RSA lies in the fact that while it is easy to multiply large prime numbers, it is extremely difficult to reverse the process and determine the original primes without significant computational resources. This asymmetry makes RSA highly secure for digital communication. It is widely used in secure web connections, digital signatures, email encryption, and authentication systems. RSA also plays an important role in establishing secure communication channels by enabling the safe exchange of symmetric keys, which are then used for faster data encryption. However, RSA requires relatively high computational power compared to symmetric encryption methods, making it less efficient for encrypting large amounts of data directly. To address this limitation, it is often combined with symmetric encryption in hybrid systems, ensuring both security and performance in real-world applications.

Diffie-Hellman and Secure Key Exchange Mechanisms

The Diffie-Hellman key exchange is a foundational method in modern cryptography that enables two parties to establish a shared secret key over an insecure communication channel. Unlike traditional encryption methods, it does not directly encrypt data but instead focuses on securely generating a shared key that can later be used for encryption. The process begins with both parties agreeing on public parameters, which are shared openly. Each party then generates a private value that is combined with the public parameters through mathematical operations to produce a shared secret. Although the public values used in the exchange can be observed by potential attackers, the private values remain hidden, making it extremely difficult to derive the final shared key. This method eliminates the need to transmit secret keys directly, reducing the risk of interception during transmission. Diffie-Hellman is widely used in secure communication protocols, including encrypted internet connections and virtual private networks. Its security depends on the difficulty of solving certain mathematical problems, such as the discrete logarithm problem. While highly effective, the algorithm must be properly implemented and combined with authentication mechanisms to prevent attacks such as impersonation or man-in-the-middle interference.

Twofish and Its Role in Symmetric Encryption Evolution

Twofish is a symmetric block cipher designed to provide strong encryption performance while maintaining flexibility and efficiency. It processes data in fixed-size blocks and applies multiple rounds of transformation to produce encrypted output. One of its defining features is the use of substitution boxes, which introduce non-linear transformations that significantly increase the complexity of the encryption process. These transformations help obscure patterns in the original data, making it difficult for attackers to analyze or predict the structure of the ciphertext. Twofish supports variable key lengths, typically up to 256 bits, allowing users to select a level of security appropriate for their needs. The algorithm was designed to perform efficiently in both software and hardware environments, making it suitable for a wide range of applications, including file encryption, secure communication, and embedded systems. Although it was a strong candidate in the development of modern encryption standards, it was ultimately not selected as the global standard. Despite this, Twofish remains respected in the cybersecurity field due to its strong theoretical design and resistance to known cryptographic attacks. Its structure continues to influence the development of modern encryption systems that require both security and flexibility.

IDEA Encryption and Its Contribution to Early Digital Security

The International Data Encryption Algorithm played a significant role in the early development of secure digital communication systems. It is a symmetric block cipher that uses a 128-bit key to encrypt and decrypt data through multiple rounds of transformation. IDEA operates on fixed-size data blocks and applies a combination of mathematical operations, including modular addition, modular multiplication, and bitwise exclusive OR functions. These operations work together to create a highly complex encryption process that ensures data confidentiality. One of the strengths of IDEA is its balanced design, which offers both strong security and reasonable performance for its time. It was widely used in early secure email systems and played an important role in protecting sensitive digital communication during the early expansion of the internet. The algorithm ensures that even small changes in the input data result in significantly different encrypted outputs, making it resistant to pattern-based attacks. Although it has been largely replaced by more modern encryption standards, IDEA remains an important milestone in cryptographic history and helped establish key design principles that influenced future encryption algorithms.
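The three operations named above can be written out directly. This sketch shows only the primitive operations, not the round structure or key schedule; it assumes IDEA's 16-bit word size and the convention that the all-zero word stands for 2^16 during multiplication. The function names are hypothetical.

```python
MOD_ADD = 1 << 16        # addition is taken modulo 2**16
MOD_MUL = (1 << 16) + 1  # multiplication is taken modulo the prime 2**16 + 1


def idea_add(a: int, b: int) -> int:
    """Add two 16-bit words modulo 2**16."""
    return (a + b) % MOD_ADD


def idea_mul(a: int, b: int) -> int:
    """Multiply modulo 2**16 + 1, with the word 0 standing for 2**16."""
    a = a or MOD_ADD
    b = b or MOD_ADD
    # A result of 2**16 is mapped back to the word 0.
    return (a * b) % MOD_MUL % MOD_ADD


def idea_xor(a: int, b: int) -> int:
    """Bitwise exclusive OR of two 16-bit words."""
    return a ^ b
```

Because 2^16 + 1 is prime, every word has a multiplicative inverse under `idea_mul`, which is what allows decryption to undo the multiplication step.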

RC6 and the Concept of Flexible Cryptographic Design

RC6 is a symmetric encryption algorithm that introduces flexibility into traditional block cipher design by allowing variable parameters such as block size, key length, and number of processing rounds. This adaptability makes it suitable for a wide range of security requirements and computational environments. RC6 builds upon earlier encryption models by incorporating advanced mathematical operations, including data-dependent rotations and modular arithmetic, which increase the complexity of the encryption process. These operations ensure that encrypted data does not follow predictable patterns, making it more resistant to cryptographic attacks. The algorithm was designed with scalability in mind, allowing it to be adjusted based on performance and security needs. RC6 supports large key sizes, which enhances its resistance to brute force attacks and improves overall security strength. Although it was not selected as a global encryption standard, it remains an important example of modern cryptographic design that emphasizes flexibility and efficiency. Its structure demonstrates how encryption algorithms can evolve to meet changing technological demands while maintaining strong security principles.

Role of Cryptographic Hashing in Data Protection Systems

Cryptographic hashing is an essential component of modern data security systems, although it is not an encryption method in the traditional sense. Instead of converting data into a reversible format, hashing transforms input data of any size into a fixed-length output known as a hash value. This process is one-way, meaning it is computationally infeasible to recover the original data from the hash. Hashing is commonly used to verify data integrity, store passwords securely, and detect unauthorized modifications to information. Even a small change in the input data produces a completely different hash value, making it easy to detect alterations. Cryptographic hash functions are designed to be collision-resistant, meaning it is extremely difficult to find two different inputs that produce the same hash output. This property ensures the reliability of data verification systems. Hashing plays a crucial role in password security systems, where actual passwords are not stored directly but are instead converted into hash values, typically with a unique random salt per user and a deliberately slow hashing function. When a user attempts to log in, the system hashes the entered password and compares it to the stored hash value. This approach enhances security by ensuring that even if stored data is compromised, the original passwords are not directly exposed.
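
Both uses described above can be tried with Python's standard library. The sketch below uses SHA-256 for integrity checks and PBKDF2 for password storage. The helper names, the 16-byte salt, and the iteration count are illustrative assumptions, not recommendations from any particular standard.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

def sha256_hex(data: bytes) -> str:
    """Fixed-length fingerprint of arbitrary data (64 hex characters)."""
    return hashlib.sha256(data).hexdigest()

def hash_password(password: str, salt: Optional[bytes] = None,
                  iterations: int = 200_000) -> Tuple[bytes, bytes]:
    """Return (salt, derived_hash); only these are stored, never the password."""
    if salt is None:
        salt = os.urandom(16)   # a fresh random salt for each user
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"),
                                 salt, iterations)
    return salt, digest

def verify_password(password: str, salt: bytes, expected: bytes,
                    iterations: int = 200_000) -> bool:
    """Re-hash the login attempt and compare in constant time."""
    _, digest = hash_password(password, salt, iterations)
    return hmac.compare_digest(digest, expected)

if __name__ == "__main__":
    # A one-character change gives an unrelated-looking hash value.
    print(sha256_hex(b"transfer 100 to alice"))
    print(sha256_hex(b"transfer 900 to alice"))
    salt, stored = hash_password("correct horse battery staple")
    print(verify_password("correct horse battery staple", salt, stored))
```

The salt ensures that two users with the same password end up with different stored values, and the iteration count makes each guess slower for an attacker who obtains the stored hashes.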

Future Trends in Encryption and Evolving Security Requirements

As digital systems continue to evolve, encryption algorithms must adapt to meet increasing security demands and emerging technological challenges. One of the most significant future considerations in encryption is the development of quantum-resistant algorithms that can withstand attacks from quantum computers. Widely used public-key methods, whose security rests on mathematical problems such as integer factorization and discrete logarithms, would become vulnerable to a sufficiently powerful quantum computer. As a result, researchers are actively developing new cryptographic techniques that rely on alternative mathematical structures. Another important trend is the increasing use of lightweight encryption algorithms designed for resource-constrained environments such as Internet of Things devices. These systems require efficient encryption methods that provide strong security without consuming excessive computational resources. Additionally, artificial intelligence is increasingly being used alongside encryption, allowing systems to detect and respond to security threats in real time. As data continues to grow in volume and importance, encryption will remain a fundamental technology in ensuring privacy, security, and trust across digital ecosystems.

Conclusion

Encryption algorithms form the backbone of modern digital security systems, ensuring that sensitive information remains protected as it moves across increasingly complex and interconnected networks. In an environment where data is constantly transmitted between devices, servers, and global infrastructure, the risk of interception or unauthorized access has become a persistent concern. Encryption addresses this challenge by transforming readable information into a structured, unreadable format that can only be reversed with the correct cryptographic key. This fundamental process allows individuals, organizations, and governments to communicate and exchange data with confidence, knowing that confidentiality and integrity are preserved.

The evolution of encryption algorithms reflects the ongoing struggle between data protection and computational advancement. Early encryption methods were relatively simple and could be broken with limited computing power. However, as technology progressed, attackers gained access to more advanced tools, forcing cryptographic systems to evolve in complexity and strength. Modern algorithms such as AES, RSA, and Diffie-Hellman are designed to withstand sophisticated attacks by leveraging deep mathematical principles, large key sizes, and multi-layered transformation processes. These improvements ensure that encrypted data remains secure even in the face of rapidly growing computational capabilities.

Symmetric encryption algorithms continue to play a critical role in securing large volumes of data due to their speed and efficiency. They are widely used in scenarios where performance is essential, such as database encryption, file storage, and internal communications within organizations. However, secure key distribution remains their main limitation: both parties must hold the same secret key, and if that key is exposed during transmission or storage, the entire security model can be compromised. Despite this limitation, symmetric encryption remains indispensable because of its ability to handle large-scale data processing efficiently.

On the other hand, asymmetric encryption has introduced a more secure and scalable approach to key management. By using a pair of mathematically linked keys, public and private, it eliminates the need to share secret keys directly between parties. This innovation has transformed secure communication over public networks by enabling safe key exchange and identity verification. Although asymmetric encryption is computationally more expensive and slower than symmetric methods, its role in establishing trust and secure connections is irreplaceable. In practice, most modern systems combine both approaches to achieve a balance between security and performance.
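
The following toy Diffie-Hellman exchange illustrates how two parties can agree on a symmetric key while sending only public values over the network. It is a sketch for intuition only: the prime `P` and generator `G` are illustrative choices that are far too small and unvetted for real use, the exchange is not authenticated, and the function names are hypothetical. Production systems rely on standardized groups or elliptic curves from an established cryptographic library.

```python
import hashlib
import secrets

P = 2**127 - 1   # a Mersenne prime, used here only as a toy modulus
G = 3            # toy generator

def make_keypair():
    """Return (private, public); only the public value is ever sent."""
    private = secrets.randbelow(P - 3) + 2      # random value in [2, P - 2]
    public = pow(G, private, P)
    return private, public

def shared_key(own_private: int, peer_public: int) -> bytes:
    """Derive a 32-byte symmetric key from the shared secret."""
    secret = pow(peer_public, own_private, P)   # equals G**(a*b) mod P
    return hashlib.sha256(secret.to_bytes(16, "big")).digest()

if __name__ == "__main__":
    alice_private, alice_public = make_keypair()
    bob_private, bob_public = make_keypair()
    # Each side combines its own private value with the other's public value.
    key_alice = shared_key(alice_private, bob_public)
    key_bob = shared_key(bob_private, alice_public)
    print(key_alice == key_bob)
```

Both sides arrive at the same key without it ever crossing the network, and that key can then drive a fast symmetric cipher for the bulk of the data. This is the hybrid pattern the paragraph above describes.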

The integration of encryption into everyday digital systems has made it an invisible yet essential part of modern life. From securing online banking transactions to protecting personal messages and safeguarding corporate data, encryption operates silently in the background to ensure privacy and trust. Users often interact with encrypted systems without even realizing it, as it has become deeply embedded in operating systems, web browsers, mobile applications, and cloud services. This widespread adoption highlights the importance of encryption as a foundational technology rather than a specialized security feature.

Another important aspect of encryption algorithms is that their security does not depend on keeping the algorithm itself secret. According to Kerckhoffs’s principle, a cryptographic system should remain secure even if everything about it except the key is public knowledge. Security instead rests on mathematical complexity: without the correct key, an attacker faces a problem that is computationally infeasible to solve. This approach has allowed cryptography to evolve into a highly transparent and peer-reviewed field, where algorithms are constantly tested and improved by experts worldwide.

Despite their strength, encryption algorithms are not static. They must continuously evolve to address new threats and technological advancements. The rise of quantum computing represents one of the most significant future challenges for cryptography. Quantum computers have the potential to solve certain mathematical problems much faster than classical computers, which could undermine the security of widely used encryption methods such as RSA and ECC. In response, researchers are developing post-quantum cryptographic algorithms designed to resist quantum-based attacks. This ongoing research highlights the dynamic nature of cybersecurity and the need for continuous innovation.

In addition to quantum threats, the increasing number of connected devices through the Internet of Things has created new challenges for encryption systems. These devices often have limited processing power and memory, requiring lightweight encryption methods that can still provide strong security. As a result, cryptographic research is increasingly focused on designing efficient algorithms that can operate effectively in resource-constrained environments without compromising protection.

Another emerging trend in encryption is its integration with artificial intelligence and machine learning systems. These technologies are being used to detect anomalies, identify potential security breaches, and enhance encryption key management. While AI does not replace encryption, it complements it by improving the overall security ecosystem. This combination of cryptography and intelligent systems is expected to play a major role in future cybersecurity frameworks.

Ultimately, encryption algorithms represent a critical layer of defense in the digital world. They protect personal privacy, secure financial systems, safeguard national security data, and enable trust in online interactions. Without encryption, modern digital communication would be vulnerable to widespread exploitation and misuse. As technology continues to evolve, the importance of strong, efficient, and adaptable encryption systems will only increase. The ongoing development of cryptographic techniques ensures that data remains protected in an increasingly complex and interconnected global environment, reinforcing the essential role of encryption in maintaining digital trust and security.