The rapid expansion of digital ecosystems has fundamentally reshaped how computing systems are built, scaled, and maintained across industries. Modern organizations no longer rely on small, isolated server environments or traditional hardware setups with limited capacity. Instead, they operate within highly distributed and interconnected infrastructures designed to handle massive data flows in real time. This transformation has been driven by the explosive growth of cloud computing, artificial intelligence, streaming services, online financial systems, and global communication platforms. As data generation accelerates at an unprecedented pace, the need for scalable, resilient, and highly efficient infrastructure has become central to business and technological strategy.

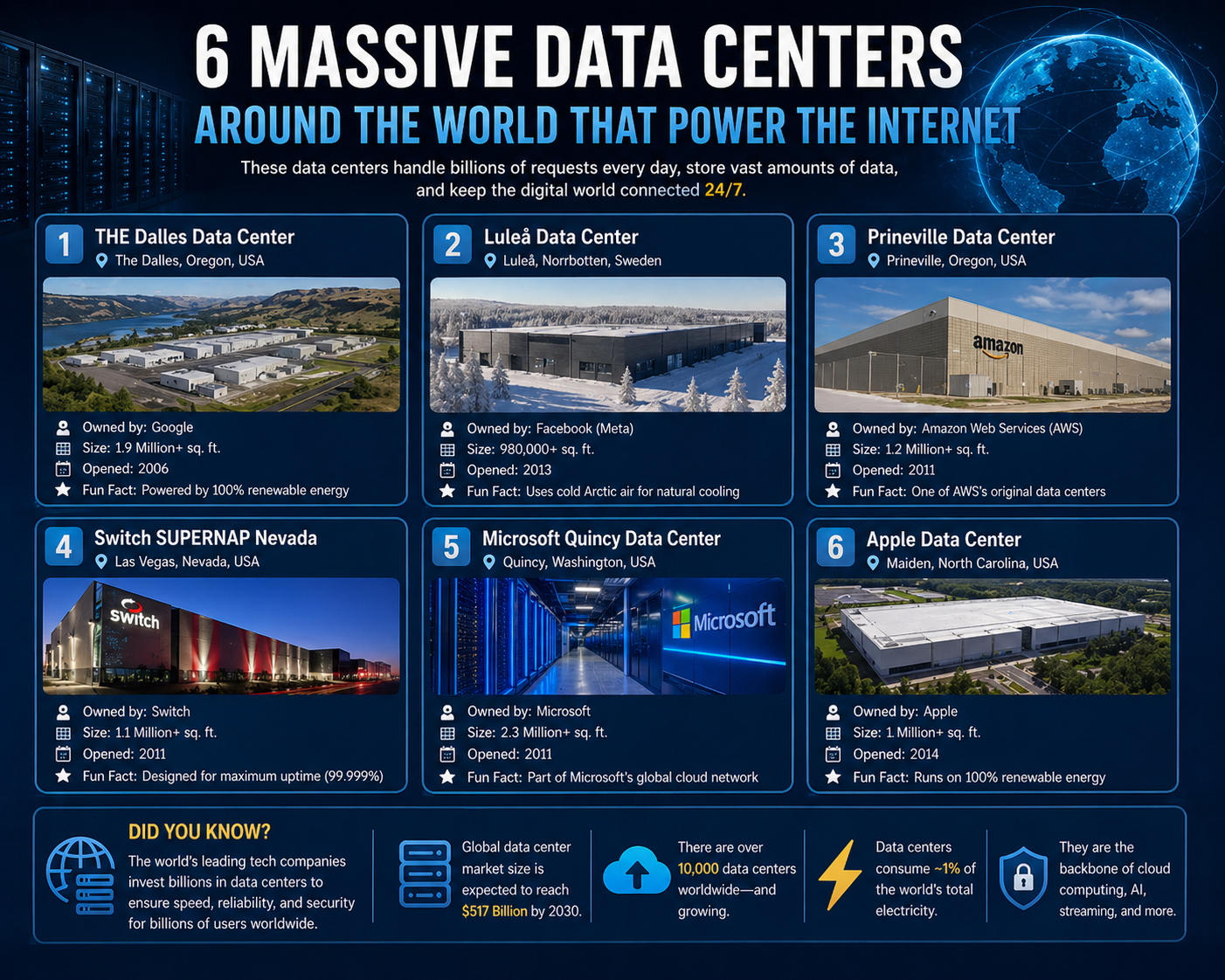

Data centers have evolved into the physical backbone of the digital world. They are no longer simple storage facilities but complex, engineered environments that support computing at a global scale. Every digital interaction, from sending messages and streaming content to processing financial transactions and running enterprise systems, depends on these large-scale infrastructures operating continuously behind the scenes.

Transition from Traditional Infrastructure to Modern Systems

In earlier computing eras, organizations typically relied on proprietary hardware systems arranged in fixed configurations. These environments were expensive, rigid, and difficult to scale. As digital demand increased, these traditional architectures became inefficient and unable to support rapidly growing workloads. Businesses faced challenges related to capacity limitations, high maintenance costs, and lack of flexibility in resource allocation.

The modern approach to infrastructure design has shifted dramatically. Instead of static systems, organizations now adopt flexible and scalable architectures built around virtualization, automation, and distributed computing. These systems allocate computing resources dynamically based on demand, which raises hardware utilization. This shift has improved efficiency while reducing operational overhead.

Rise of Hyperscale Data Center Architecture

The concept of hyperscale computing has become a defining feature of modern infrastructure development. Hyperscale data centers are designed to support massive workloads by integrating thousands of servers into unified, highly efficient environments. These facilities are built with scalability at their core, allowing rapid expansion without disrupting ongoing operations.

A key characteristic of hyperscale architecture is modular design. Instead of constructing a single monolithic structure, these facilities are developed in phases, with additional modules added as demand increases. This approach enables organizations to scale infrastructure in alignment with business growth while maintaining cost efficiency and operational stability.

Another critical feature is software-defined management. Rather than manually configuring individual hardware components, administrators rely on centralized software systems to automate provisioning, monitoring, and optimization. This reduces complexity while improving system performance and responsiveness. Virtualization further enhances efficiency by allowing multiple workloads to operate on shared physical resources, increasing overall utilization rates.
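
As a rough illustration of how consolidating workloads on shared hardware raises utilization, the sketch below packs workloads onto hosts with first-fit decreasing, a simple heuristic chosen here for illustration; the text does not name a placement algorithm. The function names, the 16-core host size, and the workload sizes are all hypothetical.

```python
def place_workloads(demands, host_capacity):
    """Pack workload CPU demands onto hosts using first-fit decreasing."""
    hosts = []  # each host is the list of demands placed on it
    for demand in sorted(demands, reverse=True):
        if demand > host_capacity:
            raise ValueError("workload is larger than a single host")
        for host in hosts:
            if sum(host) + demand <= host_capacity:
                host.append(demand)  # fits on an existing host
                break
        else:
            hosts.append([demand])  # no host had room, start a new one
    return hosts


def utilization(hosts, host_capacity):
    """Fraction of total host capacity that is actually in use."""
    used = sum(sum(host) for host in hosts)
    return used / (len(hosts) * host_capacity)


# Hypothetical workload sizes in CPU cores.
demands = [8, 4, 4, 2, 6, 8]
shared = place_workloads(demands, host_capacity=16)
dedicated = [[d] for d in demands]  # one physical host per workload
print(len(shared), utilization(shared, 16))
print(len(dedicated), utilization(dedicated, 16))
```

With these example numbers, six workloads that would each leave a dedicated host mostly idle fit onto two shared hosts.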

The Emergence of Mega-Scale Data Center Facilities

As digital demand continues to grow globally, certain infrastructure projects have expanded into mega-scale data centers spanning millions of square feet. These facilities represent the highest level of modern engineering applied to computing environments. They are designed not only for storage and processing but also for long-term scalability, energy efficiency, and global connectivity.

Mega data centers require advanced planning to manage power distribution, cooling systems, and network architecture. Redundancy is a core design principle, ensuring continuous operation even in the event of system failures or maintenance activities. These facilities often include multiple layers of backup power systems, high-capacity cooling technologies, and redundant network pathways.

The scale of these environments allows them to power global applications and services, some with user bases in the billions, that require uninterrupted performance. Their infrastructure is carefully engineered to balance computational density with operational stability.

The Citadel Mega Data Center in Nevada

In Nevada, United States, one of the largest data center campuses in the world has been developed as part of a long-term expansion strategy. This facility is projected to span approximately 7.2 million square feet when fully completed, making it one of the most expansive infrastructure projects of its kind.

The campus is designed using a modular development model, allowing new buildings to be added progressively as demand increases. This phased approach ensures that infrastructure growth remains aligned with operational requirements while minimizing disruption. The first completed structure already exceeds one million square feet, establishing the site as a major hub for high-density computing operations.

The design of the facility emphasizes energy efficiency, scalability, and advanced engineering integration. It incorporates optimized cooling systems, high-capacity power infrastructure, and flexible server deployment configurations. The surrounding region offers strategic advantages such as available land, favorable regulatory conditions, and access to large-scale energy resources, all of which contribute to its long-term viability.

Hyperscale Social Infrastructure in Oregon

In Oregon, United States, a major hyperscale data center was developed to support one of the world’s largest social technology ecosystems. This facility spans more than a million square feet and was engineered specifically to handle massive global user traffic.

The infrastructure is designed for efficiency and performance optimization. One of its key advantages is its location, which benefits from a naturally cool climate. This environmental factor significantly reduces the need for mechanical cooling systems, thereby lowering energy consumption and operational costs.

The facility uses advanced airflow and thermal management strategies to maintain optimal server conditions. Server clusters are arranged in modular units, allowing flexible expansion and efficient workload distribution. This ensures that the system can scale seamlessly as user demand increases.

Over time, this facility became part of a broader global network of interconnected data centers. These distributed systems work together to balance workloads, reduce latency, and improve service reliability across different geographic regions.

High-Security Data Processing Infrastructure in Utah

In Utah, United States, a highly advanced data center has been developed for large-scale data processing and analytical operations. This facility is recognized for its high computational capacity and its ability to process vast streams of digital information from multiple sources simultaneously.

The infrastructure is located in a geographically stable environment surrounded by natural mountain formations, which provide both physical isolation and environmental consistency. The internal systems include extensive server arrays, high-density storage configurations, and advanced networking frameworks designed for continuous operation.

Security, redundancy, and reliability are core principles in the design of this facility. Multiple layers of backup systems ensure uninterrupted functionality even under extreme workloads. The architecture supports continuous data processing and real-time analysis across large datasets, enabling advanced computational tasks at a national scale.

Large-Scale Telecommunications Infrastructure in Chicago

In Chicago, United States, a major data center facility plays a critical role in supporting global telecommunications and financial systems. This infrastructure spans over a million square feet and operates as a central hub for data exchange and network connectivity.

The facility is designed for multi-tenant operations, allowing multiple organizations to operate within a shared infrastructure environment while maintaining secure and isolated systems. It is integrated into global fiber-optic networks, making it a key point for data routing and transmission.

High-capacity power systems, advanced cooling technologies, and dense server deployments enable the facility to support continuous high-performance operations. Due to its scale and operational intensity, it is among the most energy-demanding structures in its region.

This infrastructure plays a vital role in enabling real-time financial transactions, enterprise cloud services, and global communication networks. Its design reflects the increasing complexity and importance of modern digital ecosystems, where speed, reliability, and scalability are essential requirements.

The Engineering Foundation of Modern Data Centers

Modern data centers are built on a foundation of advanced engineering principles that prioritize scalability, efficiency, redundancy, and continuous availability. Unlike earlier computing environments that were primarily focused on isolated performance, today’s infrastructure must support global workloads that operate 24/7 without interruption. This requires a highly structured approach to physical design, electrical systems, cooling mechanisms, and network architecture.

At the core of every large-scale data center is a carefully engineered balance between compute density and energy consumption. As server density increases, so does heat generation, requiring sophisticated thermal management systems to maintain optimal operating conditions. Engineers design these environments to minimize energy waste while maximizing computational output. This involves precise airflow modeling, intelligent cooling distribution, and the strategic placement of server racks to ensure uniform temperature regulation across vast facilities.

Power distribution is another critical component. Data centers rely on multiple layers of redundancy to ensure uninterrupted operation. Electrical systems are typically designed with dual or triple feed configurations, so that load can transfer to a surviving feed if one fails. Uninterruptible power supplies (UPS) bridge the gap until backup generators come online, so that critical operations continue even during external outages.
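
The benefit of redundant feeds can be estimated with a standard reliability formula: if each of n independent feeds is available a fraction a of the time and any one feed can carry the load, the combined availability is 1 - (1 - a)^n. The sketch below applies it; the 99% per-feed figure is an assumed example, not a number from any facility described here.

```python
HOURS_PER_YEAR = 8760


def combined_availability(feed_availability, feeds):
    """Availability of `feeds` independent feeds when any one suffices."""
    return 1 - (1 - feed_availability) ** feeds


def downtime_hours_per_year(availability):
    """Expected hours per year without power at the given availability."""
    return (1 - availability) * HOURS_PER_YEAR


# Assumed example: each feed is available 99% of the time.
for feeds in (1, 2, 3):
    a = combined_availability(0.99, feeds)
    print(feeds, round(a, 6), round(downtime_hours_per_year(a), 3))
```

The formula assumes failures are independent. Real feeds often share failure causes, such as the same utility or the same switchgear, so the actual gain from each added feed is smaller than this estimate.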

Modular Design and Scalable Infrastructure Models

One of the most significant advancements in data center development is the adoption of modular design principles. Instead of constructing a single, fixed-capacity facility, modern infrastructure is built using modular components that can be expanded incrementally. This approach allows organizations to scale their infrastructure in alignment with demand rather than investing in excessive capacity upfront.

Modular data centers are typically composed of standardized units that include server racks, cooling systems, and power distribution modules. These units can be replicated and added to existing infrastructure without requiring major architectural redesign. This flexibility is essential in environments where computational demand can change rapidly due to user growth, application expansion, or global traffic fluctuations.

The modular approach also improves operational efficiency. Maintenance and upgrades can be performed on individual modules without affecting the entire system. This reduces downtime and enhances overall system reliability. Additionally, modular construction reduces deployment time, enabling organizations to bring new capacity online more quickly compared to traditional infrastructure models.
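
The incremental sizing described above comes down to simple arithmetic: divide the expected load, plus some headroom, by the capacity of one module and round up. A minimal sketch follows, assuming hypothetical 1,000 kW modules, 20% headroom, and an invented three-phase demand forecast.

```python
import math


def modules_needed(demand_kw, module_kw, headroom=0.2):
    """Number of identical modules required to carry demand plus headroom."""
    if module_kw <= 0:
        raise ValueError("module capacity must be positive")
    return math.ceil(demand_kw * (1 + headroom) / module_kw)


# Hypothetical demand forecast (kW of IT load) for three build phases.
forecast_kw = [1800, 2600, 4100]
plan = [modules_needed(kw, module_kw=1000) for kw in forecast_kw]
print(plan)
```

Each phase adds only the modules the forecast requires, rather than building the final capacity on day one.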

Converged Infrastructure and Resource Optimization

Another key development in modern data center architecture is the adoption of converged infrastructure systems. These systems integrate computing, storage, and networking resources into a unified platform, reducing the complexity of managing separate hardware components. By consolidating infrastructure layers, organizations can achieve greater efficiency, improved performance, and simplified management.

Converged systems are designed to optimize resource utilization. Instead of dedicating specific hardware for individual tasks, workloads are dynamically allocated across shared infrastructure. This ensures that resources are used efficiently and reduces the risk of underutilization. The integration of software-defined technologies further enhances this model by enabling centralized control over physical and virtual resources.

Automation plays a central role in converged environments. Routine tasks such as provisioning, monitoring, and load balancing are managed through intelligent software systems. This reduces the need for manual intervention and minimizes the potential for human error. As a result, data centers can operate at higher levels of efficiency while maintaining consistent performance standards.

Software-Defined Infrastructure and Centralized Control

The transition to software-defined infrastructure has fundamentally changed how data centers are managed. In traditional environments, hardware components were configured individually, requiring significant manual effort and specialized expertise. In contrast, software-defined systems abstract hardware resources into virtual layers that can be controlled through centralized platforms.

This abstraction enables greater flexibility and agility in resource management. Administrators can allocate computing power, storage capacity, and network bandwidth dynamically based on real-time demand. This allows data centers to respond quickly to changing workloads without requiring physical reconfiguration.
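
One common way to express demand-based allocation is a target-utilization rule: scale the number of instances in proportion to how far measured utilization is from a chosen target. The sketch below is an illustration of that rule only; the function name and the 60% target are assumptions, and the text does not describe a specific scaling policy.

```python
import math


def desired_instances(current_instances, utilization_pct, target_pct=60):
    """Instances needed to bring average utilization back to the target."""
    if target_pct <= 0:
        raise ValueError("target utilization must be positive")
    scaled = math.ceil(current_instances * utilization_pct / target_pct)
    return max(1, scaled)  # never scale below one instance


# Ten instances running hot at 90% should grow; at 30% they can shrink.
print(desired_instances(10, 90))
print(desired_instances(10, 30))
```

Because the rule operates on virtual instances, capacity follows demand in both directions without anyone touching physical hardware.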

Software-defined networking plays a particularly important role in modern infrastructure. It enables centralized control over data traffic, allowing administrators to optimize routing paths, reduce latency, and improve overall network efficiency. Similarly, software-defined storage systems allow data to be distributed across multiple physical locations while maintaining unified access and management.

The integration of these technologies creates a highly adaptive infrastructure environment capable of supporting complex digital ecosystems at scale.

Energy Efficiency and Sustainable Data Center Design

As data centers continue to grow in size and complexity, energy efficiency has become a critical design consideration. Large-scale computing facilities consume significant amounts of electricity, making energy optimization essential for both operational cost management and environmental sustainability.

Modern data centers incorporate a range of energy-efficient technologies to reduce power consumption. These include advanced cooling systems that utilize outside air, liquid cooling solutions for high-density server environments, and intelligent energy management systems that dynamically adjust power usage based on workload demand.

Location selection also plays a significant role in energy efficiency. Many data centers are built in regions with cooler climates or access to renewable energy sources. This reduces the reliance on mechanical cooling systems and lowers overall carbon footprints. Additionally, some facilities integrate on-site renewable energy generation, such as solar or wind power, to further enhance sustainability.

Energy efficiency is not only an environmental consideration but also a financial one. By reducing power consumption, organizations can significantly lower operational costs while maintaining high levels of performance.

Network Architecture and Global Connectivity

The performance of modern data centers is heavily dependent on network architecture. As digital services become increasingly global, data must be transmitted quickly and reliably across vast distances. To achieve this, data centers are integrated into high-capacity fiber-optic networks that form the backbone of global internet infrastructure.

These networks are designed to minimize latency and maximize throughput. Multiple redundant pathways ensure that data can be rerouted in the event of network congestion or failure. Edge computing nodes are also deployed in strategic locations to bring computational resources closer to end users, further reducing latency and improving performance.

Interconnection between data centers is a key feature of modern infrastructure. Rather than operating as isolated facilities, data centers function as part of a global network ecosystem. This distributed architecture allows workloads to be balanced across multiple locations, improving resilience and scalability.
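
Rerouting over redundant pathways can be sketched as a shortest-path search that ignores failed links. The example below uses Dijkstra's algorithm on a small, invented four-site topology; the site names and latencies are hypothetical, and production networks rely on routing protocols rather than a single function like this.

```python
import heapq


def lowest_latency_path(links, source, target, failed=frozenset()):
    """Dijkstra over undirected links, skipping any link listed in `failed`."""
    graph = {}
    for (a, b), latency_ms in links.items():
        if (a, b) in failed or (b, a) in failed:
            continue  # treat a failed link as absent
        graph.setdefault(a, []).append((b, latency_ms))
        graph.setdefault(b, []).append((a, latency_ms))

    queue = [(0, source, [source])]  # (total latency, node, path so far)
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == target:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, latency_ms in graph.get(node, []):
            if neighbor not in visited:
                heapq.heappush(queue, (cost + latency_ms, neighbor, path + [neighbor]))
    return None  # no surviving route


# Hypothetical topology: two independent routes from site A to site D.
links = {("A", "B"): 10, ("B", "D"): 10, ("A", "C"): 15, ("C", "D"): 15}
print(lowest_latency_path(links, "A", "D"))
print(lowest_latency_path(links, "A", "D", failed={("A", "B")}))
```

When the faster route loses a link, traffic still reaches its destination over the second pathway at a higher latency, which is the trade-off redundancy is designed to accept.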

The Role of Hyperscale Operators in Global Infrastructure

Hyperscale operators play a central role in the development and management of global data center ecosystems. These organizations design and operate massive infrastructure networks capable of supporting billions of users and complex digital services. Their facilities are distributed across multiple geographic regions, ensuring redundancy and high availability.

Hyperscale infrastructure is characterized by its ability to scale rapidly in response to demand. This is achieved through standardized hardware deployments, automated provisioning systems, and global orchestration platforms. These capabilities allow hyperscale operators to maintain consistent performance across diverse environments.

Another defining feature of hyperscale systems is their focus on efficiency. By optimizing hardware utilization and reducing operational overhead, these operators are able to deliver large-scale services at relatively low cost per unit of computation. This efficiency is critical for supporting global platforms that require continuous expansion.

Data Center Security and Physical Resilience

Security is a fundamental aspect of data center design. Modern facilities incorporate multiple layers of physical and digital security to protect critical infrastructure and sensitive data. Physical security measures include controlled access points, biometric authentication systems, surveillance networks, and perimeter defenses.

In addition to physical protection, data centers also employ advanced cybersecurity frameworks to safeguard digital assets. These systems monitor network activity for anomalies, detect potential threats, and respond to security incidents in real time. Encryption technologies are used to protect data both at rest and in transit.

Resilience is equally important. Data centers are designed to withstand a wide range of disruptions, including power outages, natural disasters, and hardware failures. Redundant systems ensure that operations continue even under adverse conditions. This level of resilience is essential for maintaining uninterrupted service in critical applications such as financial systems, healthcare platforms, and global communication networks.

Evolution of Data Center Expansion Strategies

As demand for digital services continues to grow, data center expansion strategies have evolved significantly. Rather than relying on a single large, monolithic facility, organizations now adopt distributed expansion models that prioritize flexibility and scalability. This involves constructing multiple facilities across different regions instead of concentrating capacity in one centralized location.

Distributed strategies improve performance by reducing latency and enhancing redundancy. They also allow organizations to adapt more easily to regional demand fluctuations. By placing infrastructure closer to end users, data transfer times are reduced, resulting in faster and more responsive digital services.

This approach also improves risk management. In the event of a failure at one location, workloads can be redistributed to other facilities, ensuring continuity of service. This level of resilience is essential in today’s interconnected digital environment.

The Growing Importance of Data Center Innovation

Innovation continues to drive the evolution of data center technology. Emerging trends such as artificial intelligence-driven optimization, liquid immersion cooling, and edge computing are reshaping how infrastructure is designed and operated. These advancements aim to improve efficiency, reduce costs, and enhance performance across large-scale systems.

Artificial intelligence is increasingly being used to optimize resource allocation, predict hardware failures, and manage energy consumption. Liquid cooling technologies are enabling higher server densities by improving thermal efficiency. Edge computing is bringing processing power closer to users, reducing latency and improving real-time performance.

As digital ecosystems continue to expand, data centers will remain at the center of technological innovation, supporting the infrastructure that powers global connectivity and computation.

The Future Trajectory of Global Data Center Growth

The evolution of data centers is closely tied to the accelerating demands of the global digital economy. As industries become increasingly dependent on data-driven decision-making, automation, and real-time computing, the infrastructure supporting these processes must evolve in parallel. Future data center development is expected to focus on three primary pillars: scalability, intelligence, and sustainability. These pillars define how next-generation facilities will be designed, operated, and integrated into global systems.

Scalability will remain a core requirement as digital workloads continue to expand. The growth of artificial intelligence models, high-resolution media streaming, autonomous systems, and cloud-native applications is generating unprecedented computational demand. To accommodate this, future data centers will increasingly rely on distributed architectures that allow seamless expansion across multiple geographic regions. This distributed model ensures that no single facility becomes a bottleneck, while also improving resilience and performance.

Intelligence is another defining factor in future infrastructure. Data centers are moving toward self-optimizing systems that use machine learning algorithms to manage workloads, predict failures, and optimize energy usage. These intelligent systems reduce the need for manual intervention and enable infrastructure to adapt dynamically to changing conditions. Over time, this will lead to highly autonomous environments capable of self-regulation and continuous optimization.

Artificial Intelligence Integration in Data Center Operations

Artificial intelligence is becoming deeply embedded in data center operations, transforming how infrastructure is managed at every level. AI systems are used to analyze massive volumes of operational data generated by servers, cooling systems, and network equipment. This analysis enables predictive maintenance, where potential hardware failures are identified before they occur, reducing downtime and improving system reliability.
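
The idea behind predictive maintenance can be shown with a much simpler stand-in for a learned model: flag any sensor reading that deviates sharply from its recent baseline. The sketch below uses a rolling z-score with Python's standard `statistics` module; the window size, threshold, and temperature values are assumptions for illustration, not operational settings.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Indices of readings more than `threshold` std devs from the prior window."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma > 0 and abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical inlet temperatures (degrees Celsius) with one sudden spike.
temps = [40, 41, 40, 42, 41, 40, 41, 55, 41, 40]
print(flag_anomalies(temps))
```

A flagged reading does not prove a component is failing; it marks a point worth inspecting before a fault develops. Production systems typically combine many signals and trained models rather than a single threshold.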

AI also plays a critical role in workload optimization. By analyzing usage patterns and performance metrics, intelligent systems can dynamically allocate computing resources to ensure maximum efficiency. This reduces energy consumption while maintaining high levels of performance across workloads.

In addition, AI-driven cooling systems are increasingly being deployed to regulate temperature more efficiently. These systems adjust airflow, cooling intensity, and environmental controls in real time based on server activity. This level of precision significantly improves energy efficiency and reduces operational costs.

The integration of artificial intelligence is gradually transforming data centers into adaptive ecosystems capable of self-management, marking a significant shift from traditional static infrastructure models.

Edge Computing and the Decentralization of Data Processing

One of the most significant architectural shifts in modern infrastructure is the rise of edge computing. Unlike traditional centralized data centers that process information in large remote facilities, edge computing brings processing power closer to the source of data generation. This reduces latency and improves response times for applications that require real-time processing.

Edge computing is particularly important for emerging technologies such as autonomous vehicles, smart cities, industrial automation, and augmented reality systems. These applications require immediate data processing, which cannot be efficiently handled by distant centralized servers.

By distributing computing resources across multiple smaller nodes located closer to users, edge computing reduces the distance data must travel. This results in faster response times, reduced bandwidth usage, and improved overall system performance.
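
The effect of distance can be estimated from propagation delay alone. Light travels through optical fiber at roughly 200,000 km per second, about two thirds of its speed in a vacuum, so each kilometre adds about 5 microseconds in each direction. The sketch below uses that approximation and ignores routing, queuing, and processing delays; the two distances are assumed examples.

```python
FIBER_KM_PER_SECOND = 200_000  # approximate speed of light in optical fiber


def round_trip_ms(distance_km):
    """Round-trip propagation delay over fiber, in milliseconds."""
    return 2 * distance_km / FIBER_KM_PER_SECOND * 1000


# Assumed distances: a remote central facility versus a nearby edge node.
print(round_trip_ms(2000))
print(round_trip_ms(50))
```

Under these assumptions, moving processing from 2,000 km away to 50 km away cuts round-trip propagation delay from about 20 ms to about 0.5 ms, before any other source of delay is counted.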

Edge infrastructure works in conjunction with large hyperscale data centers rather than replacing them. While edge nodes handle localized processing, centralized data centers continue to manage large-scale storage, analytics, and global coordination tasks. Together, these systems form a hybrid architecture that balances speed, efficiency, and scalability.

Sustainability and Environmental Optimization in Data Centers

As global awareness of environmental impact increases, sustainability has become a central focus in data center design. Large-scale computing facilities consume significant amounts of energy, making efficiency improvements essential for long-term viability. Future data centers are being designed with sustainability integrated into every layer of their architecture.

One of the most important developments in this area is the adoption of renewable energy sources. Many facilities are increasingly powered by solar, wind, or hydroelectric energy, reducing reliance on fossil fuels. Some data centers are even being constructed near renewable energy generation sites to maximize efficiency and minimize transmission losses.

Cooling systems are also undergoing major transformations. Traditional air-based cooling methods are being replaced or supplemented with advanced technologies such as liquid cooling and immersion cooling. These methods allow for more efficient heat dissipation, enabling higher server densities while reducing energy consumption.

Water usage is another critical factor in sustainability planning. Modern facilities are designed to minimize water dependency by using closed-loop cooling systems or air-based alternatives. This reduces environmental strain and improves long-term operational sustainability.

The Expansion of Global Data Center Networks

The global distribution of data centers is becoming increasingly complex and interconnected. Instead of relying on isolated infrastructure hubs, organizations now operate vast networks of facilities spread across multiple continents. This distributed model improves redundancy, reduces latency, and enhances service availability.

Global data center networks are designed to function as unified systems. Workloads are dynamically distributed across different regions based on demand, proximity, and resource availability. This ensures that users experience consistent performance regardless of their geographic location.

Interconnectivity between facilities is achieved through high-speed fiber-optic networks and dedicated backbone infrastructure. These connections enable seamless data transfer between data centers, supporting synchronized operations and real-time data replication.

This global architecture also enhances disaster recovery capabilities. In the event of a regional disruption, workloads can be quickly rerouted to alternative facilities, ensuring continuity of service. This level of resilience is essential for industries that rely on uninterrupted digital operations.
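
A minimal sketch of this kind of failover follows: when one region goes offline, its load is spread across the surviving regions in proportion to their spare capacity. The region names, load figures, and proportional policy are all hypothetical; real systems also weigh proximity, data locality, and cost.

```python
def redistribute(load, capacity, failed_region):
    """Move the failed region's load to survivors, weighted by spare capacity."""
    displaced = load[failed_region]
    spare = {r: capacity[r] - load[r] for r in load if r != failed_region}
    total_spare = sum(spare.values())
    if displaced > total_spare:
        raise RuntimeError("surviving regions lack spare capacity")
    if displaced == 0:
        return {r: load[r] for r in spare}
    return {r: load[r] + displaced * spare[r] / total_spare for r in spare}


# Hypothetical load and capacity, in arbitrary units of work.
load = {"region-a": 60, "region-b": 70, "region-c": 50}
capacity = {"region-a": 100, "region-b": 100, "region-c": 100}
print(redistribute(load, capacity, failed_region="region-a"))
```

The error case matters as much as the happy path: failover only works if the remaining facilities were provisioned with enough headroom to absorb the displaced load.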

Hyperscale Expansion and Industrial-Scale Computing

Hyperscale computing continues to redefine the boundaries of infrastructure development. These large-scale systems are designed to support massive workloads across cloud platforms, artificial intelligence systems, and enterprise applications. Hyperscale facilities are characterized by their ability to scale rapidly, often through standardized hardware deployments and automated management systems.

One of the key advantages of hyperscale architecture is its cost efficiency. By standardizing hardware and optimizing resource utilization, operators can reduce the cost per unit of computation while maintaining high performance. This efficiency is critical for supporting global platforms that serve billions of users.

Hyperscale facilities also benefit from advanced automation systems that manage provisioning, monitoring, and maintenance tasks. These systems reduce operational complexity and improve overall reliability. As a result, hyperscale infrastructure has become the dominant model for large-scale digital operations.

Data Center Security in a Hyperconnected World

As data centers become more interconnected and critical to global infrastructure, security has become increasingly complex. Modern facilities must defend against a wide range of threats, including cyberattacks, physical intrusions, and system vulnerabilities.

Physical security measures include multi-layer access control systems, biometric authentication, surveillance technologies, and restricted entry zones. These systems ensure that only authorized personnel can access critical infrastructure components.

Cybersecurity frameworks are equally important. Data centers employ advanced monitoring systems that continuously analyze network traffic for suspicious activity. Artificial intelligence is increasingly being used to detect anomalies and respond to potential threats in real time.

Encryption technologies protect data both during transmission and storage, ensuring that sensitive information remains secure even if intercepted. Redundant systems and backup protocols further enhance resilience by ensuring that operations can continue even during security incidents.

The Role of Cloud Integration in Modern Infrastructure

Cloud computing has become deeply integrated into modern data center architecture. Rather than operating independently, data centers now function as physical extensions of cloud platforms. This integration enables organizations to combine on-premises infrastructure with cloud-based resources, creating hybrid environments that offer both flexibility and control.

Hybrid infrastructure models allow businesses to store sensitive or critical data within private environments while leveraging cloud resources for scalability and computational flexibility. This approach provides a balance between security, performance, and cost efficiency.

Cloud integration also enables global accessibility. Users can access applications and data from anywhere in the world, supported by distributed infrastructure networks that ensure consistent performance.

Automation and the Path Toward Autonomous Data Centers

Automation is playing an increasingly important role in the evolution of data center operations. Modern facilities rely on automated systems to manage tasks such as resource allocation, performance monitoring, energy optimization, and hardware maintenance.

The next stage of this evolution is the development of autonomous data centers. These environments will be capable of self-managing most operational tasks without human intervention. Artificial intelligence and machine learning will enable these systems to adapt dynamically to changing workloads, predict failures, and optimize performance in real time.

Autonomous infrastructure is expected to reduce operational costs while improving efficiency and reliability. It represents a major step toward data centers that largely manage themselves.

The Economic Impact of Global Data Center Infrastructure

Data centers have become a major driver of global economic activity. They support industries ranging from finance and healthcare to entertainment and manufacturing. The expansion of digital infrastructure has created new markets, enabled digital transformation, and supported the growth of the global cloud economy.

Investment in data center infrastructure continues to increase as organizations recognize the importance of scalable and resilient computing environments. These facilities not only support existing digital services but also enable innovation in emerging technologies such as artificial intelligence, machine learning, and advanced analytics.

As global dependence on digital systems continues to grow, data centers will remain a foundational element of economic development and technological progress.

Conclusion

The global expansion of data centers reflects one of the most significant structural shifts in modern technological history. What began as relatively simple server rooms supporting localized business operations has evolved into vast, interconnected ecosystems that power nearly every aspect of the digital world. From communication platforms and financial systems to artificial intelligence and scientific research, data centers now operate as the essential infrastructure layer beneath global digital activity. Their importance continues to grow as societies become increasingly dependent on real-time data processing, high-speed connectivity, and cloud-based services.

One of the most defining outcomes of this transformation is the scale at which modern data centers now operate. Campuses spanning millions of square feet, once exceptional, are increasingly common in hyperscale computing. These facilities are designed to handle extraordinary workloads, and the largest platforms they host serve user bases numbering in the billions. The complexity of these systems reflects the growing demands of a hyperconnected world where digital services are expected to remain available at all times, regardless of geographic location or usage intensity.

At the core of this evolution is the shift from static infrastructure models to highly dynamic and adaptive systems. Traditional computing environments were built with fixed capacities and limited flexibility, often resulting in inefficiencies and underutilization. In contrast, modern data centers are engineered for elasticity. They can scale up or down based on demand, distribute workloads intelligently across global networks, and optimize resource usage in real time. This adaptability has become essential for supporting the unpredictable and continuously evolving nature of digital workloads.

Another major development shaping the future of data centers is the integration of advanced software-defined systems. These technologies have fundamentally changed how infrastructure is managed, moving away from manual configuration toward centralized, automated control. Computing, storage, and networking resources are now abstracted into virtual layers that can be dynamically allocated based on operational needs. This shift has significantly improved efficiency while reducing the manual effort of configuration, enabling organizations to manage increasingly large and distributed infrastructures with greater precision.

Energy efficiency has also emerged as a critical focus area in data center development. As computing demand grows, so does energy consumption, making sustainability a key concern for both operational and environmental reasons. Modern facilities are being designed with advanced cooling technologies, renewable energy integration, and optimized power distribution systems. These improvements are not only reducing operational costs but also helping to minimize the environmental footprint of large-scale digital infrastructure. The emphasis on sustainability reflects a broader global awareness of the need to balance technological advancement with environmental responsibility.

The rise of hyperscale computing has further transformed the infrastructure landscape. Hyperscale data centers are designed to support massive, rapidly expanding workloads with exceptional efficiency. They rely on standardized hardware, automated management systems, and globally distributed architectures to achieve scale without sacrificing performance. This model has become the foundation for many of the world’s largest digital platforms, enabling them to serve global user bases with consistent speed and reliability.

Closely linked to hyperscale development is the growing importance of distributed and edge computing models. Instead of relying solely on centralized facilities, modern infrastructure increasingly incorporates smaller, geographically distributed nodes that bring computing power closer to end users. This reduces latency, improves responsiveness, and supports applications that require real-time processing. Edge computing works in combination with large data centers, creating a hybrid ecosystem that balances local responsiveness with global computational power.

Security has also become a central pillar of modern data center design. As these facilities handle vast amounts of sensitive information, they must be protected against a wide range of threats. Physical security systems control access to critical infrastructure, while advanced cybersecurity frameworks monitor and defend against digital attacks. Encryption, intrusion detection systems, and continuous monitoring tools ensure that data remains protected throughout its lifecycle. The increasing sophistication of cyber threats has made security an ongoing priority in infrastructure design and operation.

Another important dimension of modern data centers is their role in enabling cloud computing and hybrid infrastructure models. Today’s digital ecosystems rarely rely on a single type of infrastructure. Instead, organizations combine private data centers, public cloud platforms, and edge environments to create flexible hybrid systems. This approach allows them to balance control, scalability, and cost efficiency while maintaining high levels of performance and reliability. The integration of cloud technologies with physical infrastructure has become a defining characteristic of modern computing architecture.

Automation is also driving a fundamental transformation in how data centers operate. Intelligent systems now manage many aspects of infrastructure performance, including workload distribution, energy optimization, and predictive maintenance. These automated processes reduce the need for manual intervention while improving operational efficiency. As artificial intelligence continues to advance, the industry is moving toward increasingly autonomous data centers capable of self-optimization and self-healing. This represents a significant step toward fully intelligent infrastructure ecosystems.

The economic impact of data center expansion is equally significant. These facilities form the backbone of the digital economy, supporting industries such as finance, healthcare, entertainment, manufacturing, and communications. The growth of cloud services, digital platforms, and data-driven applications has created enormous demand for robust infrastructure. As a result, investment in data center development continues to accelerate globally, driving innovation in engineering, energy systems, and computing technologies.

Looking forward, the role of data centers will continue to expand as new technologies emerge. Artificial intelligence, machine learning, quantum computing, and advanced analytics will place even greater demands on infrastructure capacity and performance. At the same time, societal dependence on digital systems will deepen, making data centers even more critical to daily life and global operations. The infrastructure supporting these technologies will need to become more intelligent, efficient, and resilient to meet future challenges.

Ultimately, data centers represent far more than physical facilities filled with servers. They are the foundational systems on which modern digital life runs. Every online interaction, financial transaction, cloud application, and intelligent system depends on their continuous operation. As technology continues to evolve, data centers will remain at the center of global innovation, shaping how information is processed, shared, and utilized across every sector of society.