A proxy server in modern networking is a specialized intermediary system that sits between client devices and external network resources, typically located on the Internet. Its primary responsibility is to receive requests from internal clients, process those requests according to defined rules, and forward them to external destinations on behalf of the client. When the external system responds, the proxy server retrieves the response and delivers it back to the original requester. This indirect communication model fundamentally changes how network traffic is exchanged because it removes the need for direct interaction between internal devices and external servers. In practical networking environments, this structure is used to enforce control, improve security boundaries, and manage traffic behavior across large and small infrastructures alike.

The importance of a proxy server lies in its ability to act as a controlled gateway rather than just a passive relay. It does not simply pass data back and forth but actively participates in decision-making regarding traffic flow. This includes determining whether a request should be allowed, blocked, modified, or redirected. Because all outbound communication passes through this system, it becomes a strategic point for enforcing organizational policies, protecting internal resources, and regulating how users interact with external networks. In many environments, the proxy server is considered a foundational component of secure and structured network design.

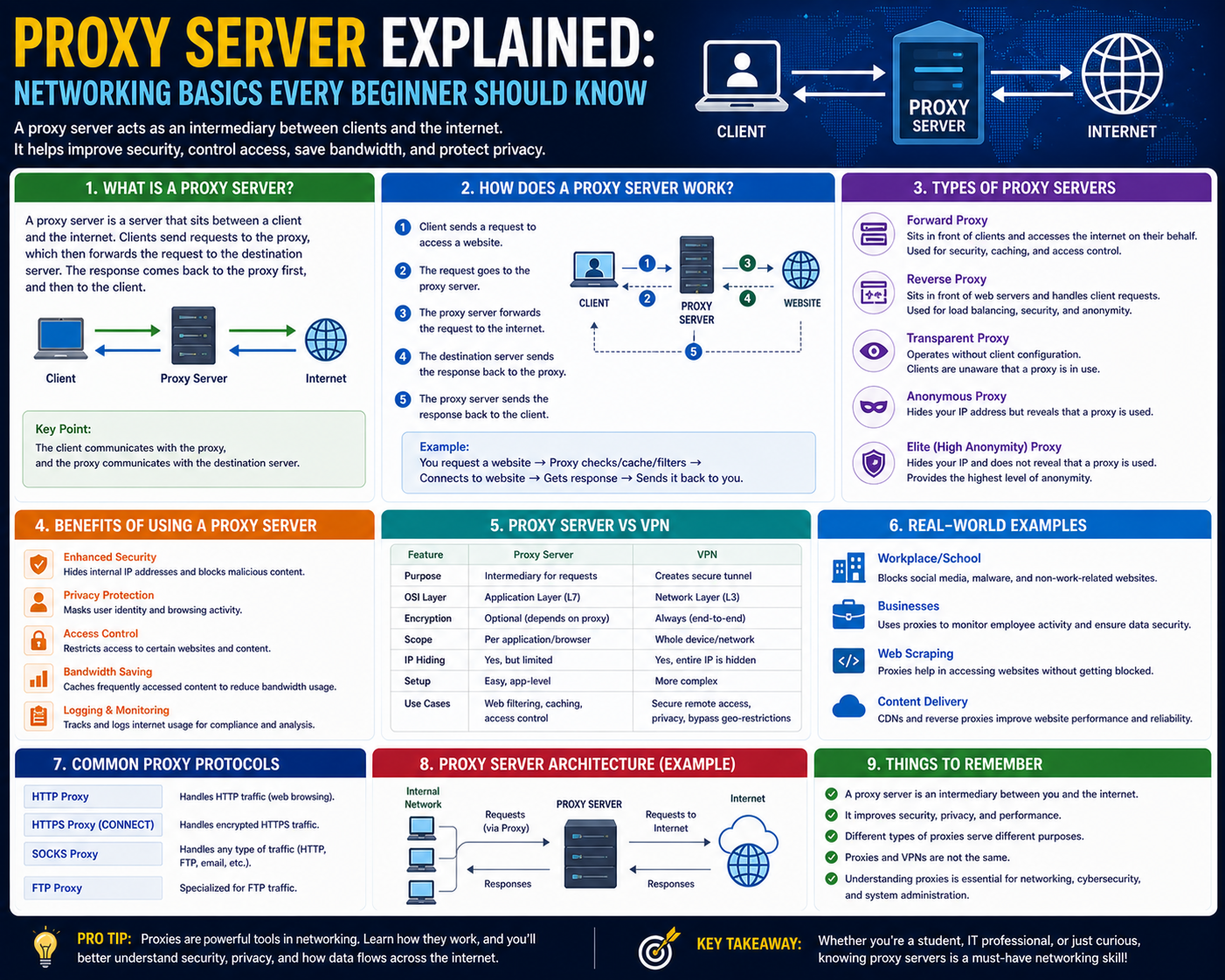

How Proxy Servers Process Requests

The processing cycle of a proxy server begins when a client device initiates a request, such as accessing a website or retrieving external data. Instead of sending this request directly to the destination server, the request is first routed to the proxy server. The proxy evaluates the request against internal rules, which may include access permissions, security policies, or traffic filtering conditions. If the request meets the required criteria, the proxy forwards it to the external server using its own network identity.

Once the external server receives the request, it processes it as if it originated from the proxy server itself. The response generated by the external system is then sent back to the proxy rather than directly to the client device. The proxy server receives this response, performs any required processing such as filtering or caching validation, and finally delivers the content to the requesting client. This entire cycle ensures that the internal device remains abstracted from direct exposure to external systems.

Because every request passes through it, the proxy is also a natural place to manage traffic flow. It handles many requests concurrently and can prioritize, queue, or balance them depending on system load or configured policies. This makes proxy servers not only intermediaries but also traffic management tools within larger network ecosystems.

Proxy Server Architecture in Depth

The architecture of a proxy server is built around the concept of request interception and controlled forwarding. At a structural level, the proxy sits logically between the client layer and the external network layer. It maintains connections with both sides but ensures that neither side communicates directly with the other. This separation creates a segmented communication model where the proxy acts as the only visible entity to external systems.

Inside the proxy architecture, several functional components work together. The request handler receives incoming client traffic and interprets it. The policy engine evaluates whether the request aligns with configured rules. The routing module determines the correct external destination, and the response handler manages data returned from external servers. These components operate in a coordinated manner to ensure that traffic is processed efficiently and securely.
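
The four components described above can be sketched as a small pipeline. This is only an illustration under stated assumptions: the `Request` and `Response` types, the `BLOCKED_HOSTS` rule set, and the injected `fetch` callable are all hypothetical names, and a real proxy would work on live sockets rather than plain objects.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Request:
    client_ip: str
    host: str
    path: str


@dataclass
class Response:
    status: int
    body: str


# Hypothetical rule set standing in for the policy engine's configuration.
BLOCKED_HOSTS = {"blocked.example"}


def policy_engine(req: Request) -> bool:
    """Return True when the request is allowed to proceed."""
    return req.host not in BLOCKED_HOSTS


def routing_module(req: Request) -> str:
    """Work out the upstream URL for an allowed request."""
    return f"http://{req.host}{req.path}"


def response_handler(resp: Response) -> Response:
    """Hook for post-processing; this sketch passes the response through."""
    return resp


def handle_request(req: Request, fetch: Callable[[str], Response]) -> Response:
    """Request handler: apply policy, route, fetch upstream, post-process."""
    if not policy_engine(req):
        return Response(403, "blocked by policy")
    upstream_url = routing_module(req)
    return response_handler(fetch(upstream_url))
```

Passing `fetch` in as a parameter keeps the sketch free of real network calls, so the flow can be exercised with a stand-in function.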

Some proxy architectures also include caching modules and logging systems integrated directly into the processing pipeline. These modules enhance performance and visibility by storing frequently accessed data and recording detailed activity logs. The modular structure of proxy architecture allows it to scale across different network sizes, from small office environments to large distributed enterprise systems.

Types of Proxy Servers in Networking

Proxy servers can be categorized based on their behavior and placement within a network. A forward proxy is commonly used within internal networks to manage outbound traffic from clients to external servers. In this configuration, the proxy represents internal users when communicating with the internet and controls all outgoing requests.

A reverse proxy operates in the opposite direction, sitting in front of external-facing servers. Instead of protecting clients, it protects backend servers by handling incoming requests from external users. It distributes these requests across multiple internal servers, balancing load and enhancing performance while concealing backend infrastructure details.

A transparent proxy operates without requiring explicit configuration on client devices. It intercepts traffic automatically and applies predefined policies without user awareness. This type of proxy is often used for monitoring and filtering purposes within controlled environments.

There are also specialized proxy implementations, such as anonymous proxies, which hide the client’s IP address but may still reveal that a proxy is in use, and high-anonymity proxies, which go further by removing identifying metadata from requests so that the traffic is harder to recognize as proxied. Each type serves a specific purpose depending on network requirements and security objectives.

Security Benefits of Proxy Servers

One of the primary advantages of proxy servers is their contribution to network security. By acting as an intermediary, they prevent direct exposure of internal systems to external networks. This reduces the attack surface available to malicious actors. Since external systems only see the proxy server’s identity, internal IP structures remain hidden.

Proxy servers also help mitigate certain types of network attacks by filtering traffic before it reaches internal systems. They can detect suspicious patterns, block unauthorized requests, and prevent access to known harmful destinations. This proactive filtering mechanism reduces the likelihood of malware infiltration or unauthorized data access.

Another important security benefit is segmentation. By controlling how traffic flows between internal and external environments, proxy servers create logical boundaries that limit the spread of potential threats. Even if a single endpoint is compromised, the proxy layer can restrict what that endpoint reaches through it, limiting further movement across the boundaries the proxy mediates and reducing overall impact.

IP Masking and Privacy Control Mechanisms

IP masking is a critical function performed by proxy servers, allowing internal devices to communicate externally without revealing their actual IP addresses. When a client sends a request through a proxy, the proxy replaces the client’s IP with its own public-facing address. This ensures that external systems only see the proxy’s identity, not the originating device.

This mechanism plays an important role in privacy protection because it prevents direct identification of internal network structures. It also reduces tracking capabilities by external systems attempting to map communication sources. Since all requests appear to originate from the proxy, tracing individual internal devices becomes significantly more difficult.

In controlled environments, IP masking is also used for the standardization of outbound traffic. Instead of multiple internal devices appearing separately on external networks, all traffic is unified under a single or limited set of proxy identities. This simplifies network management and improves consistency in external communication patterns.
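
A minimal sketch of the masking idea, assuming a hypothetical `MaskingProxy` class and a placeholder public address from a documentation range. It models only what the destination can observe and how the proxy remembers where to return the response; an actual proxy achieves this by opening its own connection to the destination.

```python
from dataclasses import dataclass
from typing import Dict

PROXY_PUBLIC_IP = "203.0.113.10"  # assumed placeholder address


@dataclass(frozen=True)
class Outbound:
    src_ip: str       # the sender address the external server sees
    dst_host: str
    request_id: int


class MaskingProxy:
    def __init__(self, public_ip: str = PROXY_PUBLIC_IP) -> None:
        self.public_ip = public_ip
        self._origins: Dict[int, str] = {}  # request_id -> internal client IP
        self._next_id = 0

    def forward(self, client_ip: str, dst_host: str) -> Outbound:
        """Record the true origin privately and expose only the proxy address."""
        self._next_id += 1
        self._origins[self._next_id] = client_ip
        return Outbound(self.public_ip, dst_host, self._next_id)

    def deliver_to(self, request_id: int) -> str:
        """Look up which internal client should receive the response."""
        return self._origins.pop(request_id)
```

Two internal clients sending through the same `MaskingProxy` produce outbound records with an identical `src_ip`, which is the unification of outbound identity described above.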

Traffic Filtering and Policy Enforcement

Proxy servers are widely used for enforcing traffic policies within network environments. These policies define what types of requests are allowed, what destinations are accessible, and what content categories should be restricted. The proxy evaluates each request against these rules before allowing it to proceed.

Filtering can be based on multiple factors, including destination address, request type, or traffic behavior. When a request violates a defined rule, the proxy can block it, redirect it, or modify it. This ensures that all traffic complies with organizational standards before leaving or entering the network boundary.
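
One way to picture rule evaluation is a first-match rule list. The sketch below is an assumption-laden illustration: the `Rule` and `Action` names, the suffix-matching scheme, and the allow-by-default fallback are choices made for the example, not something prescribed by any particular proxy product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple


class Action(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REDIRECT = "redirect"


@dataclass
class Rule:
    host_suffix: str                  # matches this host and its subdomains
    action: Action
    redirect_to: Optional[str] = None


def evaluate(host: str, rules: List[Rule]) -> Tuple[Action, Optional[str]]:
    """Return the action of the first matching rule; allow when none match."""
    for rule in rules:
        if host == rule.host_suffix or host.endswith("." + rule.host_suffix):
            return rule.action, rule.redirect_to
    return Action.ALLOW, None
```

A stricter deployment might invert the fallback and block anything that no rule explicitly allows.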

Policy enforcement through proxies is particularly important in environments where controlled access is required. By centralizing decision-making within the proxy layer, organizations ensure consistent application of rules across all connected devices without needing individual configuration on each endpoint.

Proxy Logging and Monitoring Capabilities

Proxy servers often include detailed logging systems that record all traffic passing through them. These logs typically include information about request origins, destinations, timestamps, and response outcomes. This data provides visibility into how network resources are being used.

Monitoring capabilities built into proxy servers allow administrators to analyze usage patterns, detect anomalies, and identify potential security threats. For example, repeated attempts to access restricted destinations can be flagged for further investigation. These logs also assist in diagnosing network issues by providing a detailed history of request flows.
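
As a rough sketch of the flagging example, assume each log record carries the fields listed above. The `LogEntry` layout, the `"blocked"` outcome label, and the threshold of three are hypothetical choices for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class LogEntry:
    timestamp: float
    client: str
    destination: str
    outcome: str  # "allowed" or "blocked" in this sketch


def flag_repeat_offenders(entries: Iterable[LogEntry], threshold: int = 3) -> List[str]:
    """Return clients with at least `threshold` blocked requests, sorted by name."""
    blocked = Counter(e.client for e in entries if e.outcome == "blocked")
    return sorted(client for client, count in blocked.items() if count >= threshold)
```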

In addition to real-time monitoring, logs generated by proxy servers can be used for historical analysis. This allows long-term tracking of network behavior, helping identify trends or recurring issues within traffic patterns.

Proxy Caching and Performance Optimization

Proxy caching is a mechanism where frequently accessed content is temporarily stored on the proxy server. When a client requests content that has already been cached, the proxy can deliver it directly without forwarding the request to the external server again. This significantly reduces response time and network load.

Caching improves performance by minimizing repeated data transfers across external networks. It also reduces bandwidth consumption, especially in environments where multiple users request similar content. The proxy continuously updates its cache based on usage patterns and content freshness rules.

Performance optimization through caching is particularly effective in high-traffic environments where repeated access to similar resources is common. By serving cached content locally, proxy servers reduce dependency on external connectivity and improve overall responsiveness.

Role in Enterprise Network Design

In enterprise network design, proxy servers serve as central control points for managing communication between internal systems and external networks. They are integrated into network architecture to enforce security policies, manage traffic flow, and provide visibility into network activity.

Their role extends beyond simple request forwarding, as they contribute to segmentation, load management, and access control. By centralizing external communication through a proxy layer, enterprise networks gain a structured method for handling internet access across large numbers of devices.

Proxy servers also support scalability in enterprise environments by distributing traffic loads and enabling controlled expansion of network access points. This makes them a fundamental component in designing stable, secure, and manageable network infrastructures across organizations of varying sizes.

Proxy Server Security Functions in Advanced Network Environments

Proxy servers extend far beyond simple request forwarding by operating as active security enforcement points within modern network architectures. In advanced environments, they function as inspection layers that evaluate traffic behavior before it reaches either internal systems or external destinations. This inspection is not limited to basic filtering but includes deep evaluation of request patterns, protocol usage, and destination legitimacy. By analyzing traffic at this intermediary stage, proxy servers help reduce the likelihood of direct exposure to malicious content or unauthorized communication attempts.

A key aspect of proxy-based security is centralized control. Instead of relying on individual devices to enforce security rules, the proxy server becomes the authoritative decision point for outbound and inbound traffic. This centralized model ensures consistency in policy application and eliminates variation that might arise from endpoint-level configurations. In environments with large numbers of devices, this consistency becomes critical for maintaining predictable security behavior across the entire network.

Proxy servers also contribute to defense-in-depth strategies. They are not standalone security solutions but rather one layer within a broader architecture that includes firewalls, intrusion detection systems, and endpoint protection. Within this layered model, proxies add a checkpoint where traffic can be evaluated before proceeding further into or out of a network boundary.

Deep Packet Awareness and Traffic Inspection

Modern proxy implementations often incorporate advanced inspection techniques that go beyond simple header analysis. These systems can evaluate traffic content, request structure, and behavioral characteristics to determine whether a request aligns with expected patterns. This level of analysis is sometimes referred to as deep inspection, where the proxy evaluates not just where traffic is going but what the traffic is doing.

This capability allows proxy systems to identify anomalies that may indicate malicious activity. For example, irregular request patterns, unexpected data payloads, or repeated attempts to access restricted endpoints can be flagged for further action. By analyzing traffic at this level, proxy servers become active participants in identifying potential threats rather than passive relays.

Deep inspection also enables more refined policy enforcement. Instead of simply blocking or allowing traffic based on destination, proxy systems can make decisions based on the structure and intent of the request itself. This allows for more granular control over network communication, particularly in environments where strict compliance requirements exist.

Proxy Servers in Threat Mitigation Strategies

Proxy servers play a significant role in mitigating a wide range of network threats. One of the primary ways they achieve this is by reducing direct exposure of internal systems to external networks. When all communication is routed through the proxy, internal IP addresses are not exposed to external systems, which makes them much harder for attackers to target directly.

In addition to exposure reduction, proxies can intercept and block access to known malicious destinations. These destinations are typically identified through threat intelligence systems or internal security policies. When a request matches a known risk pattern, the proxy can terminate the connection before any data exchange occurs.

Another mitigation approach involves behavioral analysis. Proxy servers can monitor traffic patterns over time and detect deviations from normal usage behavior. For example, if a device begins sending unusually large numbers of requests or attempts to access restricted categories of content, the proxy can intervene and restrict further activity. This behavior-based approach adds another layer of defense beyond static rule enforcement.
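
The request-volume example can be approximated with a simple baseline comparison. This is a deliberately naive sketch: the function name, the three-standard-deviation cutoff, and the `min_sigma` floor are assumptions for the example, and production systems generally use richer models.

```python
from statistics import mean, pstdev
from typing import Sequence


def is_anomalous(history: Sequence[float], current: float,
                 k: float = 3.0, min_sigma: float = 1.0) -> bool:
    """Flag `current` when it exceeds the historical mean by k standard deviations.

    `history` holds per-interval request counts for one device. The floor on
    sigma stops a perfectly flat history from flagging tiny increases.
    """
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    sigma = max(pstdev(history), min_sigma)
    return current > baseline + k * sigma
```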

Role of Proxy Servers in Data Loss Prevention

Data loss prevention in network environments is often supported by proxy servers through controlled monitoring and filtering of outbound traffic. Since all external communication passes through the proxy, it becomes a strategic point for identifying potentially sensitive data being transmitted outside the network.

Proxy systems can be configured to recognize patterns associated with sensitive information, such as structured data formats or specific content types. When such patterns are detected in outbound traffic, the proxy can block or flag the transmission for review. This helps prevent unintentional or unauthorized data leakage.
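
To make pattern recognition concrete, the sketch below scans an outbound body for digit sequences that look like payment card numbers and confirms them with the Luhn checksum. The regular expression, the function names, and the choice of card numbers as the sensitive pattern are assumptions for illustration; real data loss prevention rules cover many more formats.

```python
import re

# Candidate runs of 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Return True when the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:        # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def contains_card_number(body: str) -> bool:
    """Return True when the body holds a Luhn-valid card-like number."""
    for match in CARD_RE.finditer(body):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            return True
    return False
```

The checksum step reduces false positives from ordinary long numbers such as order identifiers.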

In addition to detection, proxies also enforce restrictions on destination endpoints. If certain external destinations are deemed unsafe or non-compliant with organizational policy, the proxy can prevent data from being transmitted to those locations. This ensures that sensitive information remains within controlled boundaries.

Authentication and Access Control Integration

Proxy servers are frequently integrated with authentication systems to verify user identity before allowing network access. In such configurations, users must authenticate through the proxy before their requests are processed. This authentication step ensures that only authorized users can generate outbound or inbound traffic through the system.

Access control mechanisms within proxy servers often rely on role-based or group-based policies. These policies define what resources different categories of users are allowed to access. For example, certain users may be restricted from accessing external websites, while others may have broader permissions based on their role within the organization.
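
A role-based check of this kind might look like the following sketch. The user names, roles, and destination categories are entirely hypothetical, and the lookup tables stand in for whatever directory or identity service an organization actually uses.

```python
from typing import Dict, Set

# Hypothetical directory data: user -> role, role -> permitted categories.
USER_ROLES: Dict[str, str] = {"alice": "admin", "bob": "guest"}
ROLE_ALLOWED_CATEGORIES: Dict[str, Set[str]] = {
    "guest": {"internal"},
    "employee": {"internal", "business"},
    "admin": {"internal", "business", "general-web"},
}


def authorize(user: str, category: str) -> bool:
    """Allow the request only for a known user whose role permits the category."""
    role = USER_ROLES.get(user)
    if role is None:
        return False  # unauthenticated or unknown users are denied
    return category in ROLE_ALLOWED_CATEGORIES.get(role, set())
```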

The integration of authentication with proxy processing allows for fine-grained control over network usage. Instead of applying uniform access rules across all devices, policies can be tailored based on identity, role, or organizational unit. This improves both security and operational flexibility.

Proxy Server Load Distribution Mechanisms

In environments with high traffic volume, proxy servers can distribute load across multiple backend systems or external paths. This load distribution ensures that no single server becomes overwhelmed by excessive requests. Instead, traffic is balanced based on predefined rules or dynamic performance metrics.

Load distribution can be implemented in several ways. Some proxy systems distribute requests evenly across available resources, while others prioritize based on server health, response time, or geographic proximity. This dynamic routing improves efficiency and reduces latency for end users.

By managing load distribution at the proxy level, network systems can maintain consistent performance even during periods of high demand. This is particularly important in large-scale environments where thousands or even millions of requests may be processed simultaneously.

Proxy Servers and Network Segmentation

Network segmentation is a critical design principle in modern infrastructure, and proxy servers contribute significantly to its implementation. By acting as controlled communication points between segments, proxies help enforce boundaries between different parts of a network.

Each segment may represent a different security level, user group, or functional area within an organization. The proxy ensures that communication between these segments follows defined rules and does not occur freely without authorization. This segmentation reduces the risk of lateral movement within a network in the event of a security compromise.

Proxy-based segmentation also simplifies network management by centralizing inter-segment communication control. Instead of configuring individual routing rules across multiple devices, administrators can enforce segmentation policies directly through proxy systems.

Proxy Servers in Traffic Anonymization Processes

Anonymization is another important function performed by proxy servers in certain configurations. In these scenarios, the proxy removes or alters identifying information from requests before forwarding them to external systems. This may include stripping metadata, modifying headers, or replacing identifiers with generic values.
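
Header handling is the easiest part of this to illustrate. In the sketch below, the set of headers to strip and the generic `User-Agent` value are assumptions chosen for the example; which headers count as identifying depends on the deployment.

```python
from typing import Dict

# Headers removed outright, and headers replaced with a generic value.
STRIP_HEADERS = {"x-forwarded-for", "forwarded", "via", "referer"}
GENERIC_VALUES = {"user-agent": "generic-client"}


def anonymize_headers(headers: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of the headers with identifying fields stripped or replaced."""
    cleaned: Dict[str, str] = {}
    for name, value in headers.items():
        key = name.lower()  # header names are case-insensitive
        if key in STRIP_HEADERS:
            continue
        cleaned[name] = GENERIC_VALUES.get(key, value)
    return cleaned
```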

The purpose of anonymization is to prevent external systems from correlating requests with specific internal devices or users. This reduces traceability and helps protect privacy within network communication. While anonymization is not absolute, it significantly increases the difficulty of mapping traffic back to its origin.

In controlled environments, anonymization can also be used to standardize external communication patterns. By ensuring that all outgoing requests follow a uniform structure, organizations reduce variability in how their network appears externally.

Proxy Server Performance Optimization Techniques

Beyond security and control functions, proxy servers also play a major role in optimizing network performance. One of the primary optimization techniques is request reuse, where repeated requests for the same resource are answered from data the proxy already holds, such as a cached copy, rather than being retrieved again from the external server.

Another optimization method involves connection pooling, where the proxy maintains persistent connections to external servers. Instead of establishing a new connection for each request, the proxy reuses existing connections, reducing overhead and improving response times.
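
Connection reuse can be sketched with a small pool keyed by host. The `ConnectionPool` class and its `connect` factory are hypothetical; the sketch ignores idle timeouts, connection limits, and thread safety, all of which a real pool would need.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Generic, List, TypeVar

C = TypeVar("C")


class ConnectionPool(Generic[C]):
    def __init__(self, connect: Callable[[str], C]) -> None:
        self._connect = connect
        self._idle: DefaultDict[str, List[C]] = defaultdict(list)
        self.opened = 0  # counts how many new connections were created

    def acquire(self, host: str) -> C:
        """Reuse an idle connection to the host, or open a new one."""
        if self._idle[host]:
            return self._idle[host].pop()
        self.opened += 1
        return self._connect(host)

    def release(self, host: str, conn: C) -> None:
        """Return a connection to the pool so later requests can reuse it."""
        self._idle[host].append(conn)
```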

Traffic prioritization is also commonly implemented at the proxy level. High-priority requests can be processed ahead of lower-priority traffic, ensuring that critical operations receive faster response times. This prioritization helps maintain performance stability in environments with mixed traffic types.
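
Prioritization is commonly built on a priority queue. The sketch below uses Python's `heapq` module; the `PriorityDispatcher` name and the convention that lower numbers mean higher priority are assumptions for the example.

```python
import heapq
import itertools
from typing import Any, List, Tuple


class PriorityDispatcher:
    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, Any]] = []
        # The counter breaks ties so equal priorities keep arrival order.
        self._order = itertools.count()

    def submit(self, request: Any, priority: int) -> None:
        """Queue a request; lower priority numbers are served first."""
        heapq.heappush(self._heap, (priority, next(self._order), request))

    def next_request(self) -> Any:
        """Remove and return the most urgent queued request."""
        return heapq.heappop(self._heap)[2]
```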

Proxy Server Role in Hybrid Network Architectures

In hybrid network architectures, where systems span both internal infrastructure and external cloud environments, proxy servers serve as coordination points for traffic management. They help unify communication policies across distributed environments and ensure consistent enforcement regardless of where resources are hosted.

In such architectures, proxies often mediate between on-premises systems and remote services. This ensures that all communication, regardless of origin or destination, passes through a centralized control layer. This centralization simplifies governance and improves visibility across hybrid environments.

Proxy servers also assist in maintaining compatibility between different network protocols or communication standards used across hybrid systems. By translating or adapting requests as needed, they ensure seamless interaction between heterogeneous components.

Proxy Servers in Regulatory and Compliance Frameworks

Many organizations operate under regulatory frameworks that require strict control over data access and transmission. Proxy servers support compliance by enforcing rules that govern how data can be accessed, transmitted, and stored across networks.

Through logging, filtering, and access control mechanisms, proxies provide the visibility and enforcement needed to meet compliance standards. They ensure that network activity passing through them is recorded and can be audited if necessary.

This auditing capability is particularly important in regulated industries where traceability of communication is required. Proxy logs provide a detailed record of network interactions that can be reviewed during compliance assessments or investigations.

Scalability Considerations in Proxy Deployments

Scalability is a critical factor in proxy server design, especially in environments with growing user bases or increasing traffic demands. Proxy systems must be able to handle expanding workloads without introducing performance degradation.

Scalability is achieved through horizontal expansion, where additional proxy instances are added to distribute traffic load. It can also be achieved through vertical scaling, where existing proxy systems are enhanced with greater processing capacity.

Efficient scalability ensures that proxy servers remain effective even as network complexity increases. This allows organizations to maintain consistent security and performance standards regardless of infrastructure growth.

Proxy Server Caching Mechanisms and Data Efficiency

Proxy server caching is one of the most important performance-enhancing mechanisms in modern network architectures. It works by temporarily storing frequently accessed web resources within the proxy’s local storage system so that future requests for the same content can be served directly without repeatedly contacting the original external server. This reduces unnecessary network traffic and significantly improves response times for end users.

The caching process begins when a client requests a resource through the proxy server. If the proxy does not already have a cached version of that resource, it forwards the request to the external server and retrieves the response. After delivering the content to the client, the proxy stores a copy of the resource based on caching rules such as expiration time, content type, and access frequency. When another client requests the same resource, the proxy can deliver it directly from its cache instead of repeating the full retrieval process.
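
The hit-and-miss flow described above reduces to a few lines. This sketch assumes a hypothetical `CachingProxy` class with an injected `fetch_origin` callable, and it deliberately leaves out expiration and other caching rules to show only the basic flow.

```python
from typing import Callable, Dict


class CachingProxy:
    def __init__(self, fetch_origin: Callable[[str], str]) -> None:
        self._fetch_origin = fetch_origin
        self._cache: Dict[str, str] = {}
        self.origin_fetches = 0  # how often the external server was contacted

    def get(self, url: str) -> str:
        """Serve from the cache when possible, otherwise fetch and store."""
        if url in self._cache:
            return self._cache[url]        # cache hit: no external request
        self.origin_fetches += 1
        body = self._fetch_origin(url)     # cache miss: contact the origin
        self._cache[url] = body
        return body
```

Two clients requesting the same URL cause only one call to `fetch_origin`, which is where the bandwidth saving comes from.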

Caching introduces efficiency at multiple levels of the network. It reduces bandwidth consumption by limiting redundant data transfers across external links. It also reduces latency because locally stored data can be accessed significantly faster than remote resources. In environments with high repetition of requests, such as corporate networks or educational systems, caching can dramatically improve perceived network performance.

Cache Validation and Content Freshness Control

While caching improves efficiency, it also introduces the challenge of ensuring that stored data remains accurate and up to date. Proxy servers address this issue through cache validation mechanisms. These mechanisms determine whether cached content is still valid or whether it needs to be refreshed from the source.

Validation typically occurs through time-based rules or conditional checks. Time-based validation assigns an expiration period to cached content, after which the proxy must re-fetch the resource. Conditional validation involves comparing cached data with updated versions from the external server to determine if changes have occurred.
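
Both validation styles can be combined in one lookup, as in the sketch below. It is loosely modelled on the way HTTP pairs an entity tag with a "not modified" (304) reply, but the `Entry` type, the `origin` callable signature, and the fixed 60-second lifetime are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass
class Entry:
    body: str
    etag: str
    expires_at: float


# origin(url, known_etag) -> (status, body, etag); status 304 means unchanged.
Origin = Callable[[str, Optional[str]], Tuple[int, Optional[str], str]]


def get_fresh(url: str, cache: Dict[str, Entry], origin: Origin,
              now: float, ttl: float = 60.0) -> str:
    """Return a body that is either unexpired or revalidated with the origin."""
    entry = cache.get(url)
    if entry is not None and now < entry.expires_at:
        return entry.body                     # time-based rule: still fresh
    status, body, etag = origin(url, entry.etag if entry else None)
    if status == 304 and entry is not None:
        entry.expires_at = now + ttl          # conditional check: unchanged
        return entry.body
    cache[url] = Entry(body or "", etag, now + ttl)
    return cache[url].body
```

The conditional path avoids transferring the full body again when the origin confirms that nothing has changed.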

These validation processes ensure that users receive current information while still benefiting from the performance advantages of caching. Without validation, outdated or inconsistent data could persist in the cache, leading to inaccurate results or outdated content delivery.

Proxy Server Load Balancing in Distributed Networks

In distributed network environments, proxy servers often serve as load-balancing points that distribute incoming or outgoing traffic across multiple backend systems. This ensures that no single system becomes overwhelmed by excessive request volumes.

Load balancing can be implemented using several strategies. One approach is round-robin distribution, where requests are assigned sequentially across available servers. Another approach is weighted distribution, where more powerful servers handle a larger share of traffic. Dynamic load balancing takes real-time performance metrics into account, directing traffic based on current server health and responsiveness.
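
The three strategies can each be expressed as a small selection function. The function names and the use of active connection counts as the dynamic metric are assumptions for this sketch; real balancers may also weigh response times or health checks.

```python
import itertools
import random
from typing import Dict, Iterator, List


def round_robin(servers: List[str]) -> Iterator[str]:
    """Yield servers in a repeating sequential order."""
    return itertools.cycle(servers)


def weighted_choice(weights: Dict[str, int], rng: random.Random) -> str:
    """Pick a server at random, in proportion to its configured weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def least_loaded(active_connections: Dict[str, int]) -> str:
    """Pick the server currently handling the fewest connections."""
    return min(active_connections, key=lambda name: active_connections[name])
```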

By distributing traffic efficiently, proxy servers improve system stability and ensure consistent performance even during peak usage periods. This is particularly important in large-scale systems where traffic patterns can fluctuate significantly throughout the day.

Proxy Servers in High Availability Architectures

High availability architectures rely on redundancy and failover mechanisms to ensure continuous system operation. Proxy servers contribute to this by acting as resilient entry points that can redirect traffic in the event of backend system failures.

In a high-availability setup, multiple proxy instances may operate in parallel. If one proxy becomes unavailable, traffic is automatically rerouted to another functioning instance. This redundancy ensures that network communication remains uninterrupted even in the presence of hardware or software failures.

Failover mechanisms also apply to backend routing decisions. If a target server becomes unreachable, the proxy can redirect traffic to an alternative system without requiring client-side awareness. This improves reliability and reduces downtime in critical network environments.
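
Backend failover can be sketched as trying candidates in order until one answers. The `fetch_with_failover` name, the injected `send` callable, and the use of `ConnectionError` to signal an unreachable backend are assumptions for the example.

```python
from typing import Callable, Iterable, Optional


def fetch_with_failover(backends: Iterable[str], send: Callable[[str], str]) -> str:
    """Try each backend in order and return the first successful response."""
    last_error: Optional[Exception] = None
    for backend in backends:
        try:
            return send(backend)
        except ConnectionError as exc:
            last_error = exc  # remember the failure and try the next backend
    raise ConnectionError("all backends unavailable") from last_error
```

The client sees either a normal response or a single final error, never the intermediate failures.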

Proxy Server Role in Traffic Encryption Handling

Proxy servers often interact with encrypted traffic, particularly in environments where secure communication protocols are used. In such cases, the proxy may act as a termination point for encrypted connections, decrypting traffic for inspection before re-encrypting it for delivery to the destination.

This process allows the proxy to analyze secure traffic for policy compliance, security threats, or content filtering requirements. While encryption protects data in transit, proxy-based inspection ensures that encrypted traffic still adheres to network policies.

In some architectures, proxies do not decrypt traffic but instead act as a pass-through, forwarding encrypted data without inspection. The choice between inspection and pass-through depends on security requirements and privacy considerations within the network environment.

Proxy Servers in Content Filtering Systems

Content filtering is a core function of proxy servers in many controlled network environments. Filtering systems evaluate requests based on predefined categories, keywords, or destination classifications to determine whether access should be allowed or denied.

Filtering can operate at multiple levels, including domain-based filtering, URL-based filtering, and content-type filtering. Domain-based filtering restricts access to specific websites or domains. URL-based filtering evaluates full request paths for compliance. Content-type filtering examines the nature of requested data, such as media files or executable content.
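
The three filtering levels might be combined as in the sketch below. The blocked domain, path prefix, and content type are placeholder values, and splitting the checks into a request-side and a response-side function is a design choice for the example, since the content type is usually known only once a response arrives.

```python
from urllib.parse import urlsplit

# Placeholder policy values for illustration only.
BLOCKED_DOMAINS = {"blocked.example"}
BLOCKED_PATH_PREFIXES = ("/restricted/",)
BLOCKED_CONTENT_TYPES = {"application/x-msdownload"}


def request_allowed(url: str) -> bool:
    """Apply domain-based and URL-based filtering to an outgoing request."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    if host in BLOCKED_DOMAINS or any(host.endswith("." + d) for d in BLOCKED_DOMAINS):
        return False
    return not parts.path.startswith(BLOCKED_PATH_PREFIXES)


def response_allowed(content_type: str) -> bool:
    """Apply content-type filtering to a response header value."""
    media_type = content_type.split(";", 1)[0].strip().lower()
    return media_type not in BLOCKED_CONTENT_TYPES
```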

These filtering mechanisms are often used to enforce organizational policies, reduce exposure to unwanted content, and maintain appropriate usage of network resources. Because all traffic passes through the proxy, filtering can be applied consistently across all connected devices.

Proxy Server Integration with Network Monitoring Systems

Proxy servers are frequently integrated with broader network monitoring systems that analyze traffic behavior across entire infrastructures. These integrations allow proxy-generated logs and metrics to be combined with other network data sources for comprehensive analysis.

Monitoring systems use proxy data to identify usage patterns, detect anomalies, and track performance trends. This data can reveal insights such as peak traffic periods, frequently accessed resources, and unusual access attempts.

The integration between proxies and monitoring systems enhances visibility across the network and supports proactive management of network health. It allows administrators to identify potential issues before they escalate into critical failures or security incidents.

Proxy Servers and User Activity Analysis

User activity analysis is another important aspect of proxy server functionality. By tracking request patterns, proxies can build detailed profiles of how network resources are being used by different users or systems.

This analysis can identify frequently accessed services, typical usage times, and abnormal behavior patterns. For example, if a user account suddenly begins accessing unusual destinations or generating excessive traffic, this deviation can be flagged for review.

Activity analysis is not limited to security purposes. It can also be used to optimize network performance by identifying commonly used resources that may benefit from caching or prioritization.

Proxy Server Role in Bandwidth Management

Bandwidth management is a critical function in environments with limited network capacity. Proxy servers help manage bandwidth by controlling the volume and type of traffic allowed through the network.

This is achieved through techniques such as rate limiting, traffic shaping, and prioritization. Rate limiting restricts the number of requests a client can make within a specific time period. Traffic shaping adjusts the flow of data to prevent congestion. Prioritization ensures that important traffic receives higher transmission priority than less critical data.
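
Rate limiting is often implemented with a token bucket, which is one of several possible algorithms. The sketch below is a minimal version under that assumption: time is passed in explicitly so the behavior is easy to follow, whereas a real proxy would read a monotonic clock and keep one bucket per client.

```python
class TokenBucket:
    """Token-bucket limiter: `rate` tokens per second, up to `capacity` in reserve."""

    def __init__(self, rate, capacity):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start with a full bucket
        self.last = 0.0         # time of the previous request, in seconds

    def allow(self, now):
        """Return True if a request arriving at time `now` (seconds) may proceed."""
        elapsed = now - self.last
        self.last = now
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

bucket = TokenBucket(rate=1, capacity=2)
decisions = [bucket.allow(t) for t in (0.0, 0.1, 0.2, 1.5)]
print(decisions)
```

The capacity allows a short burst of two requests, the third request arrives before any token has been refilled and is rejected, and the request at 1.5 seconds succeeds because enough time has passed.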

By managing bandwidth at the proxy level, networks can maintain stability and prevent overload conditions that degrade performance.

Proxy Servers in Multi-Tenant Network Environments

In multi-tenant environments, where multiple user groups or organizations share the same infrastructure, proxy servers provide essential separation and control mechanisms. Each tenant’s traffic can be isolated and managed independently through proxy configurations.

This separation ensures that one tenant’s activity does not interfere with another’s network performance or security. Proxy-based segmentation also allows customized policies for each tenant, including access rules, filtering settings, and performance allocations.
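
Per-tenant policies can be pictured as a lookup from tenant identifier to a policy object. In the sketch below, the tenant names, blocked hosts, and request limits are all hypothetical, and unknown tenants fall back to a default policy.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class TenantPolicy:
    blocked_hosts: frozenset = field(default_factory=frozenset)
    max_requests_per_minute: int = 60

# Hypothetical tenants and limits, for illustration only.
POLICIES = {
    "tenant-a": TenantPolicy(frozenset({"social.example"}), 120),
    "tenant-b": TenantPolicy(frozenset({"video.example", "games.example"}), 30),
}
DEFAULT_POLICY = TenantPolicy()

def is_allowed(tenant, host):
    """Apply the tenant's own block list; other tenants' rules are never consulted."""
    policy = POLICIES.get(tenant, DEFAULT_POLICY)
    return host not in policy.blocked_hosts

print(is_allowed("tenant-a", "video.example"))  # blocked only for tenant-b
print(is_allowed("tenant-b", "video.example"))
```

Keeping each tenant's rules in a separate object means a change to one tenant's policy cannot alter how another tenant's requests are evaluated.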

Multi-tenant proxy configurations are commonly used in shared service environments where infrastructure resources must be distributed securely and efficiently across multiple independent entities.

Proxy Server Role in Geographical Traffic Routing

In geographically distributed networks, proxy servers can influence routing decisions based on location. Requests may be directed through specific proxy nodes depending on the client’s geographic region or the location of the target server.

This geographical routing can improve performance by shortening the network path between clients and servers, which typically lowers latency. It also helps meet regional data handling requirements by keeping traffic within designated geographic boundaries when necessary.
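
A minimal sketch of region-aware node selection follows. The node hostnames, the fallback order, and the set of regions whose traffic must stay in-region are assumptions invented for the example.

```python
# Hypothetical proxy nodes keyed by region code.
NODES = {
    "eu": "proxy-eu.internal.example",
    "us": "proxy-us.internal.example",
    "ap": "proxy-ap.internal.example",
}
# Regions whose traffic must not leave the region (illustrative assumption).
PINNED_REGIONS = {"eu"}
# Order in which other regions are tried when the local node is unavailable.
FALLBACK_ORDER = ["us", "eu", "ap"]

def select_node(client_region, healthy):
    """Prefer the client's own region; fall back only if the region is not pinned."""
    if client_region in NODES and client_region in healthy:
        return NODES[client_region]
    if client_region in PINNED_REGIONS:
        return None  # refuse rather than route traffic out of the region
    for region in FALLBACK_ORDER:
        if region in healthy:
            return NODES[region]
    return None

print(select_node("ap", {"ap", "us"}))
print(select_node("ap", {"us", "eu"}))
print(select_node("eu", {"us"}))
```

The last call returns `None` because the example treats the data boundary as more important than availability; a deployment with different priorities could choose otherwise.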

Geographically aware proxy systems contribute to optimized latency and improved user experience in global network deployments.

Proxy Servers in Network Troubleshooting

Proxy servers are valuable tools for diagnosing network issues because of their centralized position in the traffic flow. Because traffic routed through them passes a single point, they provide a broad view of network activity.

When troubleshooting performance issues, administrators can analyze proxy logs to identify bottlenecks, failed requests, or unusual traffic patterns. This information helps isolate the root cause of network problems more efficiently than endpoint-level analysis alone.
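
The sketch below shows one way such an analysis might start: grouping log entries by destination and computing an error rate and average response time for each. The entry layout (destination, HTTP status, response time in milliseconds) and the sample values are assumptions for the example.

```python
from collections import defaultdict

# Hypothetical log entries: (destination, HTTP status, response time in ms).
ENTRIES = [
    ("api.example.com", 200, 120),
    ("api.example.com", 502, 3000),
    ("api.example.com", 504, 5000),
    ("cdn.example.com", 200, 40),
    ("cdn.example.com", 200, 60),
]

def summarize_by_destination(entries):
    """Return per-destination error rate (5xx share) and average response time."""
    stats = defaultdict(lambda: {"total": 0, "errors": 0, "time_ms": 0})
    for dest, status, ms in entries:
        s = stats[dest]
        s["total"] += 1
        s["errors"] += 1 if status >= 500 else 0
        s["time_ms"] += ms
    return {
        dest: {
            "error_rate": s["errors"] / s["total"],
            "avg_ms": s["time_ms"] / s["total"],
        }
        for dest, s in stats.items()
    }

report = summarize_by_destination(ENTRIES)
for dest, row in report.items():
    print(dest, row)
```

In this sample, one destination stands out with a high share of gateway errors and slow responses, which points the investigation at that upstream rather than at the clients.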

Proxy-based troubleshooting also supports historical investigation of issues, allowing administrators to reconstruct network events leading up to a failure or degradation.

Proxy Server Evolution in Modern Infrastructure

Proxy server technology has evolved significantly alongside modern networking demands. Early proxy systems primarily focused on basic request forwarding and simple caching. Modern implementations now incorporate advanced security inspection, intelligent routing, and deep traffic analysis.

This evolution reflects the increasing complexity of network environments, where performance, security, and scalability requirements must all be balanced simultaneously. Proxy servers have transitioned from optional components to essential infrastructure elements in many network designs.

Today’s proxy systems are deeply integrated into cloud architectures, hybrid networks, and distributed computing environments, where they continue to play a central role in managing communication between systems.

Proxy Server Importance in Scalable Network Design

Scalability in network design relies heavily on the ability to manage increasing traffic without degrading performance or security. Proxy servers contribute to this scalability by centralizing traffic control, distributing load, and optimizing resource usage.

As networks expand, proxy systems can be scaled horizontally by adding instances or vertically by increasing the processing capacity of existing ones. This flexibility allows infrastructure to grow in line with demand without requiring a complete redesign of communication pathways.
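
Horizontal scaling requires some rule for spreading clients across instances. The sketch below uses a hash of the client identifier modulo the pool size, which is one simple option; the instance names are hypothetical.

```python
import hashlib

def pick_instance(client_id, instances):
    """Map a client to one instance in a stable way using a hash of its identifier."""
    digest = hashlib.sha256(client_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(instances)
    return instances[index]

# Hypothetical pool of proxy instances.
pool = ["proxy-1", "proxy-2", "proxy-3"]
first = pick_instance("10.0.0.5", pool)
second = pick_instance("10.0.0.5", pool)
print(first, first == second)
```

The same client always lands on the same instance while the pool is unchanged. With plain modulo hashing, adding an instance reassigns many clients at once; consistent hashing is a common alternative when that matters.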

Proxy servers remain a foundational component in scalable network design because they provide a stable and adaptable control layer between internal systems and external networks.

Conclusion

In modern networking environments, proxy servers represent far more than a simple intermediary between client devices and external systems. They function as structural control points that influence how data is transmitted, inspected, filtered, cached, and secured across complex network ecosystems. When viewed as a whole, their role is best understood as a combination of traffic management, security enforcement, performance optimization, and architectural abstraction. This combination is what makes them a persistent and essential component in both small-scale and enterprise-level network designs.

At a fundamental level, the proxy server introduces a controlled separation between internal network environments and the broader internet. This separation is not merely physical or logical; it is operational. Internal systems are no longer directly exposed to external servers, which significantly reduces the attack surface available to malicious actors. Instead, all communication is mediated through a controlled system that can inspect, modify, or block traffic based on predefined policies. This mediation creates a predictable and enforceable communication layer, which is critical in environments where security consistency is required across a large number of devices and users.

One of the most significant long-term advantages of proxy-based architecture is the ability to centralize decision-making for network traffic. Without a proxy, each device independently determines how and where to send requests, often relying on minimal local controls. With a proxy, however, all outbound and inbound traffic decisions are consolidated into a single enforcement layer. This centralization simplifies governance because policies do not need to be duplicated across endpoints. Instead, they are applied uniformly at the proxy level, ensuring consistent enforcement regardless of device type, location, or user behavior.

Another critical dimension of proxy servers is their contribution to visibility and monitoring. In many network environments, understanding how data flows is just as important as controlling it. Proxy servers provide this visibility by logging requests, tracking destinations, and recording interaction patterns. These logs become an essential resource for understanding system behavior over time. They allow administrators to reconstruct communication flows, identify inefficiencies, and detect abnormal patterns that may indicate security concerns or misconfigurations. This level of observability is difficult to achieve without a centralized traffic mediation point.

Performance optimization is another area where proxy servers provide substantial value. Through caching, repeated requests for the same resource can be served from the proxy’s local copy rather than being fetched again from the external source. This reduces latency and bandwidth consumption for cacheable content. In high-traffic environments, the improvement can be measurable, particularly when large numbers of users access the same resources. Beyond caching, proxy servers also contribute to performance through connection reuse, load distribution, and request prioritization, all of which help maintain stability under varying traffic conditions.
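
The caching behavior described here can be sketched as a small time-to-live cache. This is a simplification: the fixed TTL, the `fetch` callback, and the explicit `now` argument are assumptions for the example, and real HTTP caches decide freshness from response headers instead.

```python
class TTLCache:
    """Serve a stored response until it expires, then fetch a fresh copy."""

    def __init__(self, ttl_seconds):
        self.ttl = ttl_seconds
        self.store = {}  # url -> (expires_at, body)

    def get(self, url, fetch, now):
        """Return (body, was_cache_hit) for `url` at time `now` (seconds)."""
        entry = self.store.get(url)
        if entry is not None and entry[0] > now:
            return entry[1], True
        body = fetch(url)  # cache miss: go to the origin
        self.store[url] = (now + self.ttl, body)
        return body, False

origin_calls = []

def fake_fetch(url):
    """Stand-in for a request to the external server."""
    origin_calls.append(url)
    return f"body of {url}"

cache = TTLCache(ttl_seconds=60)
hits = [cache.get("http://example.com/", fake_fetch, now=t)[1] for t in (0, 10, 70)]
print(hits, len(origin_calls))
```

Of the three requests, only the second is served from the cache: the first populates it and the third arrives after the entry has expired, so the origin is contacted twice.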

From a security perspective, proxy servers contribute to layered defense strategies by acting as inspection points where potentially harmful traffic can be identified and blocked before reaching internal systems. This is particularly important in environments where external threats are constantly evolving. By analyzing traffic patterns and enforcing access restrictions, proxy systems reduce exposure to malicious destinations and limit the risk of data compromise. They also help prevent unauthorized communication attempts by ensuring that only validated requests are allowed to proceed beyond the proxy boundary.

In addition to direct security enforcement, proxy servers support broader risk mitigation strategies by reducing information exposure. Internal IP structures, network topology details, and device identities are masked from external systems. This lack of visibility makes it more difficult for external actors to map or target internal infrastructure. The anonymization effect created by proxy routing is not absolute protection, but it significantly increases the effort required to analyze or exploit internal network structures.

Proxy servers also play an important role in organizational control and policy enforcement. Many environments require strict regulation of internet usage, including restrictions on certain types of content or destinations. Proxy systems provide the mechanism through which these policies are applied consistently. Because all traffic flows through the proxy, enforcement happens at one point rather than being spread across individual endpoints. This reduces policy drift and ensures that all users are subject to the same rules regardless of their device configuration or access method.

As network environments become more distributed and hybrid in nature, the role of proxy servers continues to expand. Modern infrastructures often span on-premises systems, cloud services, and remote endpoints, all of which require coordinated traffic management. Proxy servers act as unifying control points across these diverse environments, ensuring that communication remains structured and secure regardless of where resources are located. This unification is particularly important in systems where consistency and compliance must be maintained across geographically dispersed components.

Scalability considerations further reinforce the importance of proxy-based architectures. As organizations grow, the volume of network traffic increases, often unpredictably. Proxy systems provide a flexible scaling model where additional instances can be introduced to distribute load without fundamentally altering network design. This adaptability ensures that performance and security controls remain effective even as infrastructure complexity increases. The ability to scale proxy systems independently of other network components makes them a practical choice for evolving environments.

Proxy servers also contribute to resilience in network operations. By providing failover routing, redundant pathways, and traffic redirection capabilities, they help ensure continuity of communication even when individual components fail. This resilience is particularly important in critical systems where downtime can have a significant operational or financial impact. The proxy layer acts as a stabilizing mechanism that absorbs disruptions and maintains service availability.
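
Failover routing can be sketched as trying a list of upstream paths in order and returning the first successful response. The upstream names and the `send` callback below are hypothetical stand-ins for real network calls.

```python
def send_with_failover(request, upstreams, send):
    """Try each upstream in order; return (upstream, response) from the first success."""
    failed = []
    for upstream in upstreams:
        try:
            return upstream, send(upstream, request)
        except ConnectionError:
            failed.append(upstream)  # remember the failure and try the next path
    raise ConnectionError(f"all upstreams failed: {failed}")

def fake_send(upstream, request):
    """Stand-in transport in which the primary path is down."""
    if upstream == "primary":
        raise ConnectionError("primary unreachable")
    return f"{request} via {upstream}"

used, response = send_with_failover("GET /", ["primary", "secondary"], fake_send)
print(used, response)
```

Production systems usually combine this with health checks so that a known-bad upstream is skipped without waiting for each request to fail first.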

Another important aspect is the role of proxy servers in shaping network efficiency over time. Through continuous monitoring and analysis of traffic patterns, proxy systems can inform decisions about infrastructure optimization. For example, frequently accessed resources can be cached more aggressively, or high-demand services can be prioritized for better performance. This feedback loop between observation and optimization allows networks to evolve dynamically based on actual usage patterns rather than static assumptions.

In enterprise and large-scale environments, proxy servers also contribute to compliance and governance frameworks. Many regulatory standards require detailed tracking of data access and transmission activities. Proxy logs provide a reliable source of audit information that can be used to demonstrate compliance with these requirements. This traceability ensures that organizations can maintain accountability for network activity and respond effectively to regulatory scrutiny when necessary.

Ultimately, proxy servers occupy a unique position within networking architecture because they combine multiple functional roles into a single structural component. They are simultaneously security gateways, performance enhancers, policy enforcement points, and monitoring systems. This convergence of roles is what makes them indispensable in modern network design. As digital systems continue to grow in scale, complexity, and interconnectivity, the importance of centralized traffic mediation will only increase.

The continued evolution of proxy technology reflects broader trends in networking, where control, visibility, and efficiency are becoming increasingly intertwined. Rather than being optional or supplementary components, proxy servers are now foundational elements that support the operational stability of modern digital infrastructure.