Server virtualization represents one of the most important architectural shifts in modern computing systems, allowing a single physical machine to be divided into multiple independent computing environments. These environments behave like separate computers even though they share the same underlying hardware. The primary idea behind virtualization is abstraction, where physical resources are separated from the operating systems and applications that use them. This separation enables higher efficiency, better resource utilization, and improved flexibility in managing computing workloads.

In traditional computing environments, one physical server typically runs one operating system and one main workload. This model leads to inefficient use of hardware because most servers are not fully utilized at all times. Virtualization solves this limitation by introducing a software layer that enables multiple operating systems to coexist on the same physical hardware. This software layer is known as the hypervisor, and it is responsible for creating and managing virtual machines. The concept may seem simple on the surface, but it involves complex resource scheduling, hardware abstraction, and system isolation techniques that allow modern computing environments to function efficiently at scale.

Understanding the Role of a Hypervisor in System Architecture

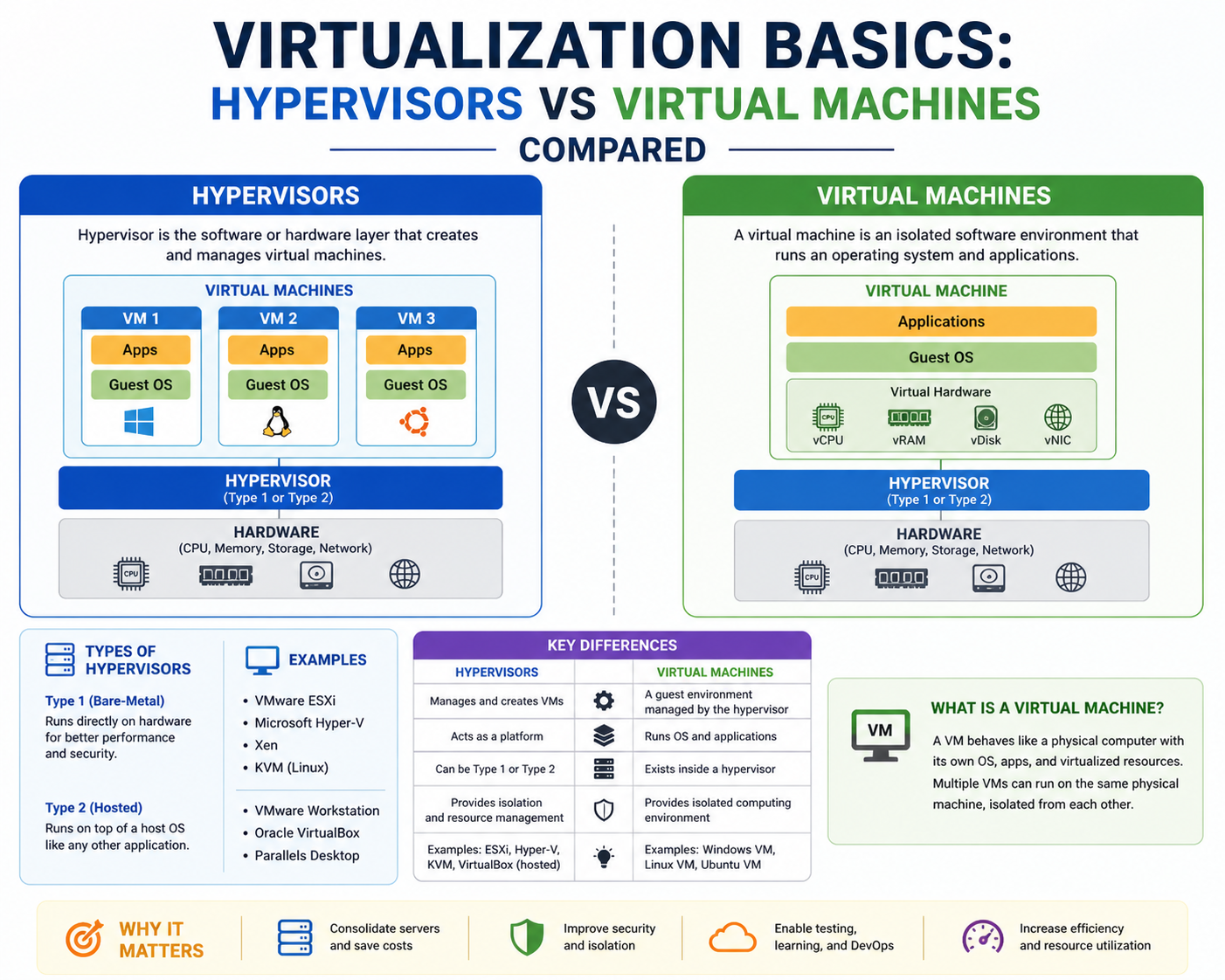

A hypervisor is a specialized software layer that sits between physical hardware and virtual machines. Its primary responsibility is to manage how physical resources are distributed among multiple virtual environments. Instead of allowing operating systems direct access to hardware, the hypervisor controls and mediates every interaction. This design ensures that multiple virtual machines can run simultaneously without interfering with each other.

The hypervisor performs several critical functions that are essential to virtualization. It allocates CPU cycles, manages memory distribution, handles storage access, and controls network communication between virtual machines and external systems. It also enforces isolation, ensuring that each virtual machine operates independently. This isolation is fundamental to virtualization because it prevents system conflicts and enhances security by containing issues within individual virtual environments.

At a deeper level, the hypervisor acts as a resource scheduler. It determines how much processing power each virtual machine receives and balances workloads across the available hardware. It also mediates execution: on most modern platforms, ordinary guest instructions run directly on the physical CPU, while privileged or hardware-sensitive operations are intercepted and emulated by the hypervisor. This mediation is essential because guest operating systems are never given unrestricted control of hardware components.

Virtual Machines as Independent Computing Environments

A virtual machine is a software-based representation of a physical computer. It contains its own operating system, applications, and system configurations, but it relies entirely on the hypervisor for access to hardware resources. Each virtual machine operates as if it were an independent system, even though it shares physical infrastructure with other virtual machines.

The creation of virtual machines allows organizations to consolidate workloads that would traditionally require multiple physical servers. Each virtual machine is assigned a portion of CPU power, memory, storage, and network bandwidth. These allocations are enforced by the hypervisor, ensuring that no single virtual machine consumes more resources than it is permitted.

Virtual machines are highly flexible because they can be created, modified, or deleted without affecting the underlying physical system. This flexibility allows administrators to rapidly deploy new environments, test software configurations, and scale systems according to demand. Virtual machines also support a wide range of operating systems, which makes them suitable for diverse computing environments where different applications require different system architectures.

Architecture Layers in Virtualization Systems

Virtualization systems are built on a layered architecture that separates physical hardware from software environments. At the lowest level is the physical hardware, which includes the CPU, memory modules, storage devices, and network interfaces. Above this layer sits the hypervisor, which abstracts hardware resources and presents them as virtual components to virtual machines.

Above the hypervisor layer are the virtual machines themselves, each containing its own operating system. These operating systems believe they are running on dedicated hardware, even though they are actually interacting with a virtualized environment. This layered structure allows multiple systems to operate independently while sharing the same physical infrastructure.

This architecture also introduces a level of flexibility that is not possible in traditional computing systems. Virtual machines can be moved between physical hosts, resized in terms of resource allocation, or cloned to create identical environments. The hypervisor manages all these operations while maintaining system stability and consistency.

Resource Management and Scheduling in Hypervisors

One of the most complex functions of a hypervisor is resource management. Since multiple virtual machines share the same physical hardware, the hypervisor must carefully allocate resources to ensure efficient operation. CPU scheduling is one of the most critical tasks, as it determines how processing time is distributed among virtual machines.

The hypervisor uses scheduling algorithms to assign CPU cycles to virtual machines based on priority, workload demand, and system policies. This ensures that high-priority tasks receive sufficient processing power while maintaining fairness across all running environments. Memory management is equally important, as the hypervisor must allocate and track memory usage for each virtual machine to prevent conflicts and ensure stability.

Storage management involves the creation of virtual disks that are mapped to physical storage devices. These virtual disks behave like physical hard drives from the perspective of the virtual machine, but they are actually managed by the hypervisor. Network management is also handled at this layer, allowing virtual machines to communicate with each other and external networks through virtual network interfaces.

Memory Virtualization and Hardware Abstraction

Memory virtualization is a key component of hypervisor functionality. It involves mapping virtual memory addresses used by virtual machines to physical memory locations in the hardware. This process allows multiple virtual machines to share physical memory resources without directly accessing each other’s memory space.
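The mapping described above can be sketched with a small per-VM page table. This is only an illustration under stated assumptions: 4 KiB pages, a flat dictionary as the table, and hypothetical names (`GuestMemoryMap`, `map_page`, `translate`). Production hypervisors typically keep these mappings in hardware-walked page tables rather than in software structures like this one.

```python
PAGE_SIZE = 4096  # assumed page size in bytes


class GuestMemoryMap:
    """Hypothetical per-VM table: guest page number -> host frame number."""

    def __init__(self):
        self._pages = {}

    def map_page(self, guest_page, host_frame):
        # Record which host frame backs a given guest page.
        self._pages[guest_page] = host_frame

    def translate(self, guest_addr):
        # Split the guest address into page number and offset within the page.
        page, offset = divmod(guest_addr, PAGE_SIZE)
        if page not in self._pages:
            raise LookupError(f"guest page {page} is not mapped")
        return self._pages[page] * PAGE_SIZE + offset


# Two VMs use the same guest address but land in different host frames.
vm_a, vm_b = GuestMemoryMap(), GuestMemoryMap()
vm_a.map_page(0, 7)
vm_b.map_page(0, 12)
print(vm_a.translate(0x10), vm_b.translate(0x10))
```

Because each virtual machine has its own table, identical guest addresses never resolve to the same host memory unless the hypervisor deliberately maps them that way.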

The hypervisor maintains memory isolation to ensure that each virtual machine operates within its allocated memory boundaries. This prevents data corruption and enhances system security. Memory overcommitment is also possible in some virtualization systems, where more virtual memory is allocated than is physically available. The hypervisor manages this through techniques such as memory ballooning and swapping.

Hardware abstraction is another important function of the hypervisor. It presents standardized virtual hardware components to virtual machines, regardless of the underlying physical hardware. This abstraction allows virtual machines to remain portable and independent of specific hardware configurations. As a result, virtual machines can be migrated between different physical systems without requiring changes to their internal configuration.

Input/Output Virtualization and Device Handling

Input and output operations are critical for system performance, and virtualization introduces additional complexity in managing them. The hypervisor acts as an intermediary between virtual machines and physical input/output devices, such as storage drives and network interfaces.

Virtual machines interact with virtualized devices rather than physical hardware directly. When a virtual machine performs an input/output operation, the request is intercepted by the hypervisor, which translates it into a physical hardware operation. This process ensures that multiple virtual machines can share the same physical devices without conflict.
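As a rough illustration of that translation step, the sketch below maps each virtual disk onto a disjoint block range of one shared backing store. The `VirtualDisk` class, the 512-byte block size, and the use of a `bytearray` as the "physical" device are all assumptions made for the example, not a description of any particular hypervisor.

```python
BLOCK = 512  # assumed block size in bytes


class VirtualDisk:
    """Hypothetical virtual disk backed by a slice of a shared physical store."""

    def __init__(self, physical, start_block, num_blocks):
        self.physical = physical
        self.start = start_block
        self.count = num_blocks

    def _offset(self, block):
        # Reject requests outside this disk so one VM cannot reach another's blocks.
        if not 0 <= block < self.count:
            raise IndexError("block outside this virtual disk")
        return (self.start + block) * BLOCK

    def write(self, block, data):
        if len(data) != BLOCK:
            raise ValueError("data must be exactly one block")
        off = self._offset(block)
        self.physical[off:off + BLOCK] = data

    def read(self, block):
        off = self._offset(block)
        return bytes(self.physical[off:off + BLOCK])


physical = bytearray(8 * BLOCK)          # stand-in for one physical device
disk_a = VirtualDisk(physical, 0, 4)     # VM A sees blocks 0..3
disk_b = VirtualDisk(physical, 4, 4)     # VM B sees blocks 0..3 of its own range
disk_a.write(0, b"A" * BLOCK)
disk_b.write(0, b"B" * BLOCK)
```

Both guests write to "block 0", yet the requests land in different regions of the backing store, which is the property the paragraph above describes.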

Device virtualization also includes the creation of virtual network interfaces and storage controllers. These virtual devices behave like physical hardware from the perspective of the virtual machine, but they are managed entirely by the hypervisor. This allows for efficient sharing of hardware resources while maintaining isolation between virtual environments.

Type One Hypervisors and Direct Hardware Interaction

Type one hypervisors are installed directly on physical hardware without requiring a host operating system. This architecture gives them direct access to system resources, which generally results in higher performance and lower latency. With no intermediate operating system layer, type one hypervisors typically manage hardware resources with less overhead.

In this model, the hypervisor itself functions as a lightweight operating system designed specifically for virtualization. It handles all hardware interactions and provides a platform for virtual machines to run. This architecture is commonly used in enterprise environments where performance, scalability, and stability are critical requirements.

Type one hypervisors are capable of supporting large numbers of virtual machines simultaneously while maintaining strict resource isolation. This makes them suitable for data centers and cloud infrastructure environments where workload density is high and performance consistency is essential.

Type Two Hypervisors and Hosted Virtualization Models

Type two hypervisors operate differently by running on top of an existing operating system. In this model, the hypervisor is installed as an application, and it relies on the host operating system to interact with hardware. This additional layer introduces some overhead, but it simplifies installation and usage.

Type two hypervisors are commonly used in desktop and development environments where ease of setup is more important than maximum performance. They allow users to run multiple operating systems on a single machine without modifying the underlying hardware configuration.

Although type two hypervisors are less efficient than type one hypervisors, they provide a flexible environment for testing, learning, and software development. They are particularly useful for scenarios where multiple operating systems need to be tested in isolation on a single physical system.

Isolation, Security, and Stability in Virtualized Environments

One of the most important advantages of virtualization is isolation. Each virtual machine operates independently, with its own operating system and resources. This isolation is enforced by the hypervisor, which prevents virtual machines from accessing each other’s memory or processes directly.

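This design significantly enhances system security because it limits the spread of potential issues. If one virtual machine experiences a failure or security breach, the impact is normally contained within that environment and does not affect other virtual machines running on the same hardware. Containment is not absolute, however: a flaw in the hypervisor itself or in shared hardware can weaken it, so the hypervisor must be kept patched and hardened.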

Stability is also improved through isolation because resource conflicts are minimized. The hypervisor ensures that each virtual machine receives its allocated resources without interference, maintaining consistent performance across the system.

Foundational Importance of Virtualization in Modern Computing

Virtualization forms the foundation of many modern computing technologies by enabling efficient resource utilization and system flexibility. It allows multiple workloads to run on shared infrastructure while maintaining independence and isolation. This approach has transformed how computing systems are designed, deployed, and managed.

The combination of hypervisors and virtual machines creates a powerful computing model that supports scalability, efficiency, and adaptability. As computing demands continue to grow, virtualization remains a central technology that supports complex digital environments and distributed systems without tying them to a fixed hardware configuration.

Evolution of Virtualization and the Expansion of Hypervisor Technology

Virtualization technology did not appear in its current form overnight. It evolved over decades as computing systems became more complex and hardware utilization became a critical concern for organizations. Early computing environments relied heavily on single-purpose servers, where each physical machine was dedicated to one application or service. This approach worked when workloads were small, but it quickly became inefficient as systems scaled and infrastructure costs increased.

The need to optimize hardware usage led to the development of virtualization concepts that allowed multiple operating systems to share a single physical machine. Over time, this idea matured into modern hypervisor-based architectures that form the foundation of today’s cloud computing and enterprise data centers. Hypervisors became the central mechanism for enabling this transformation, acting as the control layer that makes resource sharing possible while maintaining isolation between workloads.

As virtualization technology advanced, hypervisors became more sophisticated in how they managed system resources. They evolved from simple resource partitioning tools into complex orchestration systems capable of handling large-scale distributed environments. This evolution enabled the rise of cloud computing platforms, where thousands of virtual machines can operate simultaneously across vast networks of physical servers.

Deep Dive into Hypervisor Architecture and System Design

A hypervisor is not a single-function tool but a multi-layered system designed to manage hardware abstraction, resource scheduling, and virtualization orchestration. Its architecture is built around the concept of mediating access between virtual machines and physical hardware. This mediation ensures that virtual environments remain isolated while still being able to share underlying computing resources.

At the core of hypervisor design is the virtualization engine, which runs guest code on the physical CPU and intercepts the privileged operations that a guest cannot be allowed to perform directly. This interception is essential because virtual machines operate in an abstracted environment: the memory addresses a guest treats as physical must be remapped to host memory, and hardware-sensitive instructions must be emulated or handled with hardware virtualization support.

The hypervisor also includes a resource management layer that tracks CPU usage, memory allocation, storage access, and network activity. This layer continuously monitors system performance and dynamically adjusts resource distribution based on workload demands. The ability to adapt in real time is what allows virtualization systems to maintain stability under varying levels of demand.

Another critical component of hypervisor architecture is the isolation engine. This engine ensures that virtual machines remain separated from one another, preventing data leakage or process interference. Isolation is enforced at multiple levels, including memory separation, CPU scheduling boundaries, and network segmentation.

Virtual Machine Lifecycle and Operational Behavior

A virtual machine goes through several stages during its lifecycle, starting from creation and configuration to active operation and eventual decommissioning. Each stage is managed by the hypervisor, which ensures that resources are allocated appropriately and system stability is maintained.

The creation stage involves defining the virtual machine’s hardware profile, including CPU allocation, memory size, storage capacity, and network configuration. Once these parameters are set, the hypervisor constructs a virtual environment that mimics the behavior of a physical computer.
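A hardware profile of this kind can be modelled as a small validated record. The sketch below is illustrative only: the `VMProfile` name, its fields, and the validation rules are assumptions, not the configuration format of any specific hypervisor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VMProfile:
    """Hypothetical hardware profile defined at VM creation time."""
    name: str
    vcpus: int
    memory_mib: int
    disk_gib: int
    network: str = "default"

    def __post_init__(self):
        # Reject profiles that could not describe a bootable machine.
        if self.vcpus < 1:
            raise ValueError("a VM needs at least one virtual CPU")
        if self.memory_mib < 1 or self.disk_gib < 1:
            raise ValueError("memory and disk sizes must be positive")


web = VMProfile(name="web-01", vcpus=2, memory_mib=4096, disk_gib=40)
print(web)
```

Making the record immutable reflects the idea that the profile is fixed at creation; resizing a virtual machine would then mean producing a new profile rather than mutating the old one.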

During the boot process, the virtual machine loads its operating system just like a physical machine would. However, instead of interacting directly with hardware, the operating system communicates through virtual device drivers that target the virtual hardware exposed by the hypervisor. These drivers pass the guest’s device requests to the hypervisor, which carries them out on physical hardware.

Once operational, the virtual machine behaves like a fully independent system. It runs applications, processes data, and communicates with other systems. The hypervisor continuously monitors its resource usage and adjusts allocations as needed to maintain performance balance across all active virtual machines.

Resource Scheduling and Performance Optimization in Virtual Environments

Resource scheduling is one of the most important responsibilities of a hypervisor. Since multiple virtual machines share the same physical resources, the hypervisor must determine how to distribute CPU time, memory access, and input/output operations efficiently.

CPU scheduling is typically handled using time-sharing algorithms, where each virtual machine is given a portion of processing time. When virtual CPUs outnumber physical cores, the hypervisor rotates between virtual machines rapidly, so they all appear to run simultaneously. This scheduling process must be carefully balanced to avoid performance bottlenecks.
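The rotation can be illustrated with a plain round-robin loop over one core. This is a teaching sketch, not a real hypervisor scheduler: the function name `round_robin`, the fixed quantum, and the idea of a known "demand" per VM are all assumptions.

```python
from collections import deque


def round_robin(demands_ms, quantum_ms):
    """Return the sequence of (vm, ran_ms) slices for one physical core."""
    queue = deque(demands_ms.items())
    timeline = []
    while queue:
        vm, remaining = queue.popleft()
        ran = min(quantum_ms, remaining)
        timeline.append((vm, ran))
        if remaining > ran:
            # Unfinished work goes to the back of the queue.
            queue.append((vm, remaining - ran))
    return timeline


print(round_robin({"vm1": 25, "vm2": 10}, quantum_ms=10))
```

With a short quantum, every virtual machine gets a turn frequently; a longer quantum reduces switching overhead at the cost of responsiveness.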

Memory optimization is another critical area of focus. The hypervisor tracks memory usage for each virtual machine and ensures that allocations remain within defined limits. In some cases, memory overcommitment is used, where the total allocated memory exceeds physical capacity. The hypervisor manages this through advanced techniques such as memory compression and swapping.
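Overcommitment is easy to quantify as the ratio of promised memory to physical memory. The helper below is a hypothetical illustration with made-up VM sizes; a ratio above 1.0 means the host has promised more memory than it physically has.

```python
def overcommit_ratio(vm_memory_gib, host_memory_gib):
    """Ratio of memory promised to VMs to memory physically installed."""
    if host_memory_gib <= 0:
        raise ValueError("host memory must be positive")
    return sum(vm_memory_gib) / host_memory_gib


# Four VMs sized 16, 16, 8 and 8 GiB on a 32 GiB host (example figures only).
ratio = overcommit_ratio([16, 16, 8, 8], 32)
print(f"{ratio:.2f}")  # 48 GiB promised / 32 GiB physical -> 1.50
```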

Storage performance is also optimized through the use of virtual disk caching and input/output prioritization. Virtual machines access storage through virtual disks, which are mapped to physical storage devices. The hypervisor manages read and write operations to ensure that storage performance remains consistent across all virtual machines.

Type One Hypervisors in Enterprise Infrastructure

Type one hypervisors are designed for high-performance environments where direct hardware access is required. These hypervisors are installed directly onto physical servers and operate independently of any host operating system. This architecture eliminates unnecessary software layers, resulting in improved performance and reduced latency.

In enterprise environments, type one hypervisors are widely used because they support large-scale virtualization deployments. They are capable of managing hundreds or even thousands of virtual machines on a single infrastructure platform. This scalability makes them ideal for data centers and cloud computing environments.

Type one hypervisors also provide advanced features such as live migration, high availability, and distributed resource management. Live migration allows running virtual machines to be moved between physical hosts with no perceptible downtime, which is essential for maintaining service continuity. High availability ensures that virtual machines are automatically restarted on alternative hosts in the event of hardware failure.

The architecture of type one hypervisors is optimized for efficiency, with minimal overhead between virtual machines and physical hardware. This design allows for maximum resource utilization and consistent performance across workloads.

Type Two Hypervisors and Desktop Virtualization Models

Type two hypervisors operate within a host operating system, functioning as applications rather than standalone systems. This design introduces an additional layer between virtual machines and hardware, which can affect performance but significantly simplifies deployment and usability.

In desktop environments, type two hypervisors are commonly used for testing, development, and educational purposes. They allow users to run multiple operating systems on a single machine without requiring dedicated hardware or complex configuration.

The host operating system handles hardware interactions, while the hypervisor manages virtual machines within the software layer. This architecture makes it easier to install and use virtualization technology, especially for users who are not managing enterprise-scale infrastructure.

Although type two hypervisors are less efficient than type one hypervisors, they provide a practical solution for scenarios where flexibility and ease of use are more important than raw performance.

Virtual Hardware Emulation and Device Abstraction

Virtual machines rely on virtual hardware components that are emulated by the hypervisor. These components include virtual CPUs, memory modules, storage controllers, and network interfaces. The hypervisor ensures that these virtual devices behave consistently, regardless of the underlying physical hardware.

Device abstraction is a key principle in virtualization. It allows virtual machines to operate independently of specific hardware configurations. This means that a virtual machine can be moved from one physical server to another without requiring changes to its internal setup.

Network virtualization is particularly important in modern environments. The hypervisor creates virtual switches and network interfaces that allow virtual machines to communicate with each other and external systems. This network abstraction enables complex network topologies to be created entirely in software.

Storage virtualization works in a similar way by abstracting physical storage devices into virtual disks. These disks can be allocated, resized, and migrated without affecting the underlying hardware structure.

Security Isolation and Virtual Environment Protection

Security is a fundamental aspect of virtualization design. The hypervisor enforces strict isolation between virtual machines to prevent unauthorized access and data leakage. Each virtual machine operates in its own protected environment with no direct access to the memory or processes of other virtual machines.

This isolation is enforced at multiple levels, including memory separation, CPU scheduling boundaries, and network segmentation. Even if one virtual machine is compromised, these boundaries are designed to stop it from directly affecting others running on the same system.

Hypervisors also implement security controls such as access management, resource quotas, and monitoring systems. These controls help detect unusual activity and prevent resource abuse within virtual environments.

The layered security model provided by virtualization is one of its most important advantages, especially in multi-tenant environments where multiple users or systems share the same physical infrastructure.

Dynamic Resource Allocation and Load Balancing

Dynamic resource allocation allows hypervisors to adjust system resources in real time based on workload demand. This capability ensures that virtual machines receive adequate resources even during periods of high usage.

Load balancing distributes workloads across multiple physical hosts or virtual machines to prevent performance bottlenecks. The hypervisor continuously monitors system performance and redistributes workloads as needed to maintain efficiency.
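One simple placement policy consistent with this description is "put the workload on the host with the most free capacity". The sketch below assumes a single capacity figure per host and hypothetical names (`place_vm`, `hosts`); real schedulers weigh several resources and policies at once.

```python
def place_vm(hosts, vm_demand):
    """Pick the host with the most free capacity that can still fit the VM.

    hosts maps host name -> (capacity, used), in any consistent unit.
    Returns None when no host has enough room.
    """
    candidates = [
        (capacity - used, name)
        for name, (capacity, used) in hosts.items()
        if capacity - used >= vm_demand
    ]
    if not candidates:
        return None
    return max(candidates)[1]


hosts = {"h1": (64, 60), "h2": (64, 20), "h3": (32, 4)}
print(place_vm(hosts, 16))
```

Choosing the emptiest host spreads load evenly; a consolidation-oriented policy would instead pick the fullest host that still fits, so that idle machines can be powered down.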

This dynamic behavior is essential in cloud environments where workloads can change rapidly. It allows infrastructure to scale up or down without manual intervention, improving overall system responsiveness.

Resource prioritization also plays a role in ensuring that critical applications receive higher access to CPU and memory resources compared to less important workloads.

Virtual Machine Mobility and System Flexibility

One of the most powerful features of virtualization is the ability to move virtual machines between physical hosts with little or no disruption to operations. This process, often referred to as migration, allows hosts to be maintained, upgraded, or rebalanced with minimal downtime.

Virtual machine mobility is enabled by the abstraction layer provided by the hypervisor. Since virtual machines do not depend on specific hardware configurations, they can be transferred between systems that meet minimum compatibility requirements.

This flexibility allows organizations to perform maintenance tasks without interrupting services. It also supports load-balancing strategies where virtual machines are moved to optimize resource usage across the infrastructure.

Foundational Role of Virtualization in Modern IT Systems

Virtualization has become a foundational technology in modern computing systems. It supports cloud computing, data center optimization, disaster recovery strategies, and scalable application deployment.

By abstracting physical hardware into virtual environments, virtualization enables more efficient use of computing resources while maintaining flexibility and control. The combination of hypervisors and virtual machines forms the backbone of this architecture, allowing complex systems to operate in a highly efficient and manageable way.

The continued evolution of virtualization technology ensures that it remains central to modern infrastructure design, supporting increasingly complex computing demands across industries.

Advanced Hypervisor Functions in Large-Scale Virtualization Environments

As virtualization environments expand from small deployments to enterprise-scale infrastructures, hypervisors evolve from simple resource managers into highly advanced orchestration systems. At this stage, the role of a hypervisor extends far beyond basic CPU and memory allocation. It becomes a central intelligence layer that coordinates thousands of virtual machines across multiple physical servers, ensuring consistency, performance, and reliability at scale.

In large-scale environments, hypervisors are responsible for maintaining synchronization between distributed systems. They monitor workloads continuously and adjust resource distribution dynamically based on demand patterns. This ensures that no single physical machine becomes overloaded while others remain underutilized. The hypervisor acts as a real-time balancing system that maintains operational efficiency across the entire infrastructure.

Advanced hypervisors also integrate automation features that reduce the need for manual intervention. These systems can detect performance bottlenecks, predict resource shortages, and automatically migrate workloads to optimize system performance. This level of intelligence is essential in modern data centers where workloads fluctuate constantly and require rapid response mechanisms.

Distributed Virtualization and Multi-Host Architectures

In modern virtualization environments, physical servers are no longer treated as isolated systems. Instead, they are grouped into clusters where multiple hosts work together to support a shared pool of virtual machines. This architecture is known as distributed virtualization, and it significantly enhances scalability and resilience.

Within a distributed environment, hypervisors communicate with each other to coordinate resource usage across multiple machines. This communication allows virtual machines to be moved seamlessly between hosts without interrupting their operation. The system behaves as a unified computing environment rather than a collection of individual servers.

Clustered virtualization also introduces redundancy, ensuring that if one physical host fails, virtual machines can be automatically restarted on another host. This process is managed by the hypervisor, which continuously monitors system health and triggers failover mechanisms when necessary. The result is a highly resilient infrastructure capable of maintaining service availability even in the event of hardware failure.

Live Migration and Continuous Service Availability

Live migration is one of the most important capabilities enabled by advanced hypervisor systems. It allows a running virtual machine to be transferred from one physical host to another without shutting it down. This process ensures uninterrupted service availability, which is critical in environments where downtime is not acceptable.

During live migration, the hypervisor copies the memory state, CPU state, and storage connections of a virtual machine to a new host. Once synchronization is complete, the virtual machine continues running on the destination host with minimal disruption. The transition is designed to be seamless, often occurring without noticeable impact on users or applications.
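The source does not say which copying strategy is used. One widely used approach is iterative pre-copy: memory is copied while the virtual machine keeps running, pages dirtied in the meantime are re-sent in further rounds, and the machine is paused only for a short final copy. The simulation below is a sketch under that assumption, with hypothetical parameter names and constant dirty and copy rates.

```python
def precopy_rounds(total_pages, dirty_rate, copy_rate, stop_threshold, max_rounds=30):
    """Simulate pre-copy migration; return the pages sent in each round.

    dirty_rate and copy_rate are in pages per second. The last entry is the
    final stop-and-copy round, performed while the VM is briefly paused.
    """
    rounds = []
    to_send = total_pages
    for _ in range(max_rounds):
        if to_send <= stop_threshold:
            break
        rounds.append(to_send)
        # Pages dirtied while this round was being copied (integer arithmetic).
        to_send = min(total_pages, to_send * dirty_rate // copy_rate)
    rounds.append(to_send)
    return rounds


print(precopy_rounds(100_000, dirty_rate=1_000, copy_rate=10_000, stop_threshold=50))
```

The rounds shrink only when the copy rate exceeds the dirty rate; otherwise the loop runs until `max_rounds` and the final paused copy is large, which is why write-heavy workloads are harder to migrate live.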

This capability is particularly valuable for maintenance operations. Physical servers can be updated, repaired, or replaced without affecting running workloads. It also enables dynamic load balancing, where virtual machines are redistributed across hosts to optimize performance and resource usage.

High Availability and Fault Tolerance Mechanisms

High availability is a core feature of enterprise virtualization systems. It ensures that virtual machines remain operational even in the event of hardware or software failures. Hypervisors achieve this by continuously monitoring system health and implementing automated recovery mechanisms.

When a failure is detected on a physical host, the hypervisor can automatically restart affected virtual machines on another available host within the cluster. This process minimizes downtime and maintains service continuity. In more advanced configurations, fault tolerance mechanisms can replicate virtual machine states in real time, allowing instant failover without interruption.
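The detect-and-restart flow can be sketched in two steps: flag hosts whose heartbeat is overdue, then assign their virtual machines to surviving hosts with spare capacity. All names here (`failed_hosts`, `restart_plan`, the slot model) are hypothetical simplifications.

```python
def failed_hosts(last_heartbeat, now, timeout):
    """Hosts whose most recent heartbeat is older than the timeout."""
    return sorted(h for h, seen in last_heartbeat.items() if now - seen > timeout)


def restart_plan(placement, failed, free_slots):
    """Map each VM on a failed host to a surviving host, or None if none fits.

    placement maps vm -> host; free_slots maps host -> VMs it can still accept.
    """
    slots = dict(free_slots)  # copy so the caller's data is not modified
    plan = {}
    for vm, host in sorted(placement.items()):
        if host not in failed:
            continue
        target = next(
            (h for h in sorted(slots) if h not in failed and slots[h] > 0), None
        )
        plan[vm] = target
        if target is not None:
            slots[target] -= 1
    return plan


down = failed_hosts({"h1": 100, "h2": 70, "h3": 98}, now=105, timeout=15)
placement = {"vm1": "h1", "vm2": "h2", "vm3": "h2", "vm4": "h2"}
print(down, restart_plan(placement, down, {"h1": 1, "h3": 1}))
```

A `None` target marks a virtual machine that cannot be restarted, which is why clusters usually reserve spare capacity for failover.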

Fault tolerance goes beyond simple recovery by maintaining synchronized duplicate instances of virtual machines. If the primary instance fails, the secondary instance immediately takes over without requiring a restart. This level of redundancy is essential for mission-critical applications that require continuous operation.

Storage Virtualization and Data Management Layers

Storage virtualization is a key component of modern hypervisor systems. It abstracts physical storage devices into logical units that can be dynamically allocated to virtual machines. This abstraction simplifies storage management and improves flexibility in how data is stored and accessed.

In virtualized environments, storage is often pooled across multiple devices, creating a unified storage layer. Hypervisors manage this pool and allocate storage resources based on virtual machine requirements. This allows administrators to expand or reduce storage capacity without disrupting running systems.

Advanced storage features include snapshot creation, cloning, and replication. Snapshots capture the state of a virtual machine at a specific point in time, allowing for quick recovery in case of failure. Cloning enables rapid duplication of virtual machines for testing or deployment purposes. Replication ensures that data is duplicated across multiple storage locations for redundancy and disaster recovery.
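One common way to implement snapshots is to freeze the existing disk contents and redirect later writes to a separate delta layer. The sketch below assumes that design; the `SnapshotDisk` class and its dictionary-backed blocks are illustrative only.

```python
class SnapshotDisk:
    """Hypothetical disk with a frozen base layer and a writable delta layer."""

    def __init__(self, base):
        self.base = base   # block number -> bytes, treated as read-only
        self.delta = {}    # blocks written since the snapshot was taken

    def write(self, block, data):
        # Writes never touch the base, so the snapshot state is preserved.
        self.delta[block] = data

    def read(self, block):
        # Prefer the newest data, then fall back to the snapshot contents.
        if block in self.delta:
            return self.delta[block]
        return self.base.get(block, b"")

    def revert(self):
        # Discarding the delta returns the disk to the snapshot state.
        self.delta.clear()


base = {0: b"boot", 1: b"data"}
disk = SnapshotDisk(base)
disk.write(1, b"new")
print(disk.read(1), base[1])
disk.revert()
print(disk.read(1))
```

Cloning fits the same model: a second `SnapshotDisk` over the same base gives an independent copy without duplicating the unchanged blocks.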

Network Virtualization and Software-Defined Networking

Network virtualization is another critical aspect of modern hypervisor environments. It allows physical network infrastructure to be abstracted into virtual networks that can be configured and managed independently of hardware constraints.

Hypervisors create virtual switches that connect virtual machines to external networks. These virtual switches function like physical network devices but are entirely software-based. They enable complex network topologies to be built within virtual environments without requiring physical reconfiguration.
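A software switch of this kind can be sketched as a learning bridge: it remembers which port each source address was seen on and floods frames whose destination is still unknown. The `VirtualSwitch` class, port names, and shortened MAC strings are assumptions for the example.

```python
class VirtualSwitch:
    """Hypothetical learning switch connecting VM ports and an uplink."""

    def __init__(self):
        self.ports = set()
        self.mac_table = {}  # MAC address -> port it was last seen on

    def attach(self, port):
        self.ports.add(port)

    def forward(self, in_port, src_mac, dst_mac):
        """Return the list of ports the frame should be sent out of."""
        self.mac_table[src_mac] = in_port      # learn where the sender lives
        out = self.mac_table.get(dst_mac)
        if out is None:
            return sorted(self.ports - {in_port})  # unknown destination: flood
        return [] if out == in_port else [out]


sw = VirtualSwitch()
for port in ("vm1", "vm2", "uplink"):
    sw.attach(port)
print(sw.forward("vm1", "aa", "bb"))  # destination not yet learned
print(sw.forward("vm2", "bb", "aa"))  # reply goes straight to vm1
```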

Software-defined networking extends this concept by separating network control logic from physical infrastructure. This allows network configurations to be managed centrally through software, making it easier to deploy, modify, and scale network environments dynamically.

Network virtualization also enhances security by enabling segmentation and isolation between virtual machines. Each virtual machine can be placed in a separate network segment, preventing unauthorized communication and improving overall system security.

Memory Optimization Techniques in Virtual Environments

Memory management in virtualization systems is significantly more complex than in traditional computing environments. Since multiple virtual machines share the same physical memory resources, hypervisors must implement advanced optimization techniques to ensure efficient usage.

One such technique is memory overcommitment, where more memory is allocated to virtual machines than is physically available. The hypervisor manages this by dynamically adjusting memory usage based on demand, ensuring that active workloads receive priority access to resources.
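
As a rough worked example, overcommitment can be expressed as a ratio of promised memory to physical memory. The function names and the megabyte figures below are made up for illustration.

```python
def overcommit_ratio(configured_mb, physical_mb):
    """Configured guest memory divided by host physical memory."""
    return sum(configured_mb.values()) / physical_mb


def fits_in_ram(active_mb, physical_mb):
    """Overcommitment works only while actual demand fits in physical RAM."""
    return sum(active_mb.values()) <= physical_mb


configured = {"web": 8192, "db": 16384, "batch": 8192}  # promised to guests
active = {"web": 3000, "db": 9000, "batch": 1500}       # memory actually in use
host_mb = 16384

ratio = overcommit_ratio(configured, host_mb)  # 32768 / 16384 = 2.0
safe = fits_in_ram(active, host_mb)            # 13500 <= 16384
```

Here the host has promised twice the memory it owns, yet the guests' combined working set still fits, so no reclamation is needed.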

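Memory ballooning is another optimization technique used by hypervisors. A balloon driver running inside the guest claims memory that the guest is not actively using, which lets the hypervisor reclaim it from idle virtual machines and reallocate it to active workloads. This helps maximize memory efficiency, usually with little performance impact as long as the guest genuinely has idle memory to give up.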
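
A simplified reclamation policy might look like the sketch below. The `balloon_reclaim` function, its dictionary layout, and the `min_free_mb` safety margin are assumptions for illustration; real balloon drivers negotiate with the guest operating system rather than editing a table.

```python
def balloon_reclaim(vms, needed_mb, min_free_mb=256):
    """Take memory from the idlest guests until needed_mb is covered.

    vms maps a VM name to a dict with 'allocated_mb' and 'used_mb'.
    Returns {vm name: MB reclaimed} and updates allocations in place.
    """
    reclaimed = {}
    # Visit guests with the most unused memory first.
    by_slack = sorted(
        vms,
        key=lambda n: vms[n]["allocated_mb"] - vms[n]["used_mb"],
        reverse=True,
    )
    for name in by_slack:
        if needed_mb <= 0:
            break
        # Leave each guest a small cushion above what it currently uses.
        slack = vms[name]["allocated_mb"] - vms[name]["used_mb"] - min_free_mb
        take = min(max(slack, 0), needed_mb)
        if take > 0:
            vms[name]["allocated_mb"] -= take
            reclaimed[name] = take
            needed_mb -= take
    return reclaimed
```

Guests that are already using nearly all of their allocation contribute nothing, so the request may be only partially satisfied.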

Swapping is used as a last resort when physical memory becomes fully utilized. In this case, less frequently used memory pages are temporarily moved to disk storage. Although far slower than physical memory access, this technique lets the host keep running during high-demand situations instead of failing allocations outright.
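
The sketch below models swapping with a least-recently-used eviction policy. LRU is an assumption chosen for clarity, since the text does not say which policy a given hypervisor uses, and the `HostMemory` class is hypothetical.

```python
from collections import OrderedDict


class HostMemory:
    """Toy RAM with a fixed number of page frames and LRU eviction to disk."""

    def __init__(self, frames):
        self.frames = frames
        self.ram = OrderedDict()  # page id -> contents, oldest access first
        self.swap = {}            # pages that were evicted to disk

    def touch(self, page, contents=None):
        """Access a page, swapping it in (and another page out) if necessary."""
        if page in self.ram:
            self.ram.move_to_end(page)       # mark as most recently used
            if contents is not None:
                self.ram[page] = contents
            return self.ram[page]
        if contents is None:
            contents = self.swap.pop(page)   # swap-in; KeyError if unknown
        else:
            self.swap.pop(page, None)        # new data replaces any swapped copy
        if len(self.ram) >= self.frames:
            victim, data = self.ram.popitem(last=False)  # least recently used
            self.swap[victim] = data
        self.ram[page] = contents
        return contents
```

Every access to a swapped-out page forces a disk read and usually another eviction, which is why heavy swapping degrades performance so sharply.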

CPU Scheduling and Parallel Processing Management

CPU scheduling is one of the most critical responsibilities of a hypervisor. It determines how processing power is distributed among virtual machines. Since multiple virtual machines may compete for limited CPU resources, the hypervisor must carefully manage scheduling to maintain performance balance.

Modern hypervisors use advanced scheduling algorithms that consider workload priority, resource allocation policies, and system load. These algorithms ensure that high-priority virtual machines receive sufficient processing power while maintaining fairness across all running environments.
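
One simple way to combine priority with fairness is proportional-share scheduling, sketched below. This is a generic textbook-style approach, not the algorithm of any particular hypervisor; the `schedule` function and the integer weights are assumptions.

```python
import heapq
from fractions import Fraction


def schedule(weights, slices):
    """Return the order in which VMs receive `slices` time slices.

    Each VM advances a virtual clock by 1/weight per slice it runs. The VM
    with the smallest clock runs next, so higher weights run more often.
    Weights must be positive integers.
    """
    heap = [(Fraction(0), name) for name in sorted(weights)]
    heapq.heapify(heap)
    order = []
    for _ in range(slices):
        clock, name = heapq.heappop(heap)
        order.append(name)
        heapq.heappush(heap, (clock + Fraction(1, weights[name]), name))
    return order
```

With weights of 3 and 1, the heavier virtual machine receives three slices for every one given to the lighter machine, yet the lighter one is never starved.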

Parallel processing is also managed by the hypervisor, which distributes workloads across multiple CPU cores. This allows virtual machines to execute tasks simultaneously, improving overall system performance. The hypervisor maps virtual CPUs onto physical cores and tries to keep those cores busy without letting contention starve any single virtual machine.

Security Layers and Virtual Isolation Enforcement

Security in virtualization environments is enforced through multiple layers of isolation. The hypervisor acts as a security boundary between virtual machines, preventing unauthorized access to system resources.

Each virtual machine operates in a sandboxed environment where its processes, memory, and storage are isolated from others. This limits the ability of malicious activity to spread across the system. Even if one virtual machine is compromised, the hypervisor is designed to contain the damage so that other environments remain unaffected.

Access control mechanisms are also implemented at the hypervisor level. These controls define which users or systems can interact with specific virtual machines or resources. Combined with monitoring systems, they provide a strong security framework for virtualized environments.
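
A role-based check is one common way to express such controls. The role names, the permission sets, and the `is_allowed` helper below are invented for illustration and do not correspond to a specific product.

```python
# Hypothetical roles and the VM actions each one may perform.
PERMISSIONS = {
    "admin": {"start", "stop", "snapshot", "delete"},
    "operator": {"start", "stop", "snapshot"},
    "viewer": set(),
}


def is_allowed(grants, user, vm, action):
    """Check whether `user` may perform `action` on `vm`.

    grants maps (user, vm) pairs to a role name; missing pairs mean no access.
    """
    role = grants.get((user, vm))
    return role is not None and action in PERMISSIONS.get(role, set())
```

Denying by default, as this sketch does for unknown users and roles, keeps a missing entry from silently granting access.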

Automation and Intelligent Resource Management Systems

Modern hypervisors incorporate automation systems that reduce the need for manual intervention in managing virtual environments. These systems continuously analyze performance metrics and make real-time adjustments to resource allocation.

Automated workload balancing ensures that virtual machines are distributed evenly across physical hosts. If one host becomes overloaded, the hypervisor can automatically migrate virtual machines to less-utilized systems. This improves performance and prevents system bottlenecks.
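
The balancing decision can be sketched as a greedy loop. The example assumes identical hosts, expresses load as an integer percentage of one host's CPU, and uses a hypothetical `rebalance` function; real schedulers also weigh memory, affinity rules, and migration cost.

```python
def rebalance(hosts, threshold=80):
    """Plan migrations away from hosts whose total load exceeds threshold.

    hosts maps host name -> {vm name: load in percent of one host's CPU}.
    Returns a list of (vm, source, destination) moves and applies them.
    """
    moves = []
    for src in list(hosts):
        while sum(hosts[src].values()) > threshold and len(hosts[src]) > 1:
            vm = min(hosts[src], key=hosts[src].get)  # cheapest VM to move
            load = hosts[src][vm]
            # Pick the least-loaded other host as the migration target.
            dst = min(
                (h for h in hosts if h != src),
                key=lambda h: sum(hosts[h].values()),
                default=None,
            )
            if dst is None or sum(hosts[dst].values()) + load > threshold:
                break  # nowhere to put it without overloading the target
            hosts[dst][vm] = hosts[src].pop(vm)
            moves.append((vm, src, dst))
    return moves
```

Moving the smallest virtual machine first keeps each migration cheap, at the cost of sometimes needing several moves to relieve a host.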

Predictive analysis is also used in advanced environments to forecast resource demands. By analyzing usage patterns, hypervisors can anticipate future requirements and allocate resources proactively. This improves efficiency and reduces the risk of performance degradation.
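
A very small forecasting example is an exponentially weighted moving average over recent utilization samples. This is one simple method chosen for illustration, with a hypothetical `forecast_next` function and smoothing factor; the text does not specify which predictive models are used in practice.

```python
def forecast_next(samples, alpha=0.5):
    """One-step-ahead forecast using an exponentially weighted moving average.

    Higher alpha makes the forecast react faster to recent samples.
    """
    estimate = samples[0]
    for value in samples[1:]:
        estimate = alpha * value + (1 - alpha) * estimate
    return estimate
```

For CPU samples of 40, 60, and 80 percent with the default `alpha`, the estimate moves from 40 to 50 and then to 65, which tracks the upward trend while damping noise.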

Integration of Virtualization with Cloud Infrastructure

Virtualization forms the foundation of cloud computing systems. Cloud environments rely heavily on hypervisors to manage virtual machines across distributed data centers. This integration allows computing resources to be delivered as scalable services over networks.

In cloud infrastructure, virtualization enables multi-tenant environments where multiple users share the same physical resources while maintaining isolation. Hypervisors ensure that each tenant operates within its allocated environment without affecting others.

This integration also supports elasticity, allowing resources to be scaled up or down based on demand. Virtual machines can be created or destroyed dynamically, enabling flexible resource allocation in real time.
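
Elastic scaling often reduces to a sizing rule like the one sketched here. The `desired_vm_count` function, the 60 percent target, and the fleet limits are assumptions for the example rather than settings from any cloud platform.

```python
import math


def desired_vm_count(current_vms, avg_cpu_percent, target_percent=60,
                     min_vms=1, max_vms=10):
    """Size the fleet so that average CPU moves toward target_percent."""
    wanted = math.ceil(current_vms * avg_cpu_percent / target_percent)
    # Clamp to the configured fleet limits.
    return max(min_vms, min(max_vms, wanted))
```

Four virtual machines averaging 90 percent CPU would be scaled out to six, while the same four at 15 percent would be scaled in to one.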

Performance Monitoring and System Analytics

Performance monitoring is a continuous process in virtualization systems. Hypervisors collect detailed metrics on CPU usage, memory consumption, storage activity, and network traffic. These metrics are analyzed to ensure optimal system performance.

System analytics help identify bottlenecks, inefficiencies, and potential failures before they occur. This proactive approach improves system reliability and reduces downtime. Administrators can use these insights to optimize configurations and improve resource allocation strategies.
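
One basic analytic is flagging sustained threshold breaches rather than single spikes. The `sustained_breaches` helper and its window size are hypothetical, shown only to make the idea concrete.

```python
def sustained_breaches(samples, limit, window=3):
    """Return indices at which `window` consecutive samples all exceed limit."""
    hits = []
    run = 0  # length of the current streak of samples above the limit
    for i, value in enumerate(samples):
        run = run + 1 if value > limit else 0
        if run >= window:
            hits.append(i)
    return hits
```

Requiring several consecutive high readings filters out momentary spikes that would otherwise trigger needless alerts.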

Monitoring tools integrated with hypervisors provide real-time visibility into virtual machine performance. This visibility is essential for maintaining stability in large-scale environments where thousands of virtual machines may be operating simultaneously.

Future Direction of Virtualization and Hypervisor Technology

Virtualization continues to evolve alongside advancements in computing technology. Future hypervisors are expected to incorporate deeper automation, artificial intelligence-driven optimization, and tighter integration with cloud-native architectures.

As workloads become more complex and distributed, hypervisors will play an increasingly important role in managing system efficiency and security. The ongoing development of virtualization technology ensures that it remains a foundational component of modern computing infrastructure, supporting everything from enterprise systems to global cloud platforms.

Conclusion

Virtualization has fundamentally changed the way modern computing systems are designed, deployed, and managed. At the center of this transformation are hypervisors and virtual machines, two tightly connected components that work together to create flexible, scalable, and efficient computing environments. Understanding the distinction between them is essential for anyone exploring server virtualization, because the relationship between these two elements defines how physical hardware is transformed into multiple independent computing systems.

A hypervisor acts as the controlling layer that sits directly on top of physical hardware or on top of a host operating system, depending on its type. Its primary responsibility is to manage and allocate computing resources such as CPU power, memory, storage, and network capacity. It ensures that these resources are distributed efficiently among multiple virtual machines while maintaining strict isolation between them. Without the hypervisor, virtual machines would not be able to exist in a controlled or stable environment, because there would be no system responsible for mediating access to physical hardware.

Virtual machines, on the other hand, represent the end-user computing environments created and managed by the hypervisor. Each virtual machine behaves like an independent computer system with its own operating system and applications. However, unlike physical machines, virtual machines do not interact directly with hardware. Instead, they rely entirely on virtualized resources provided by the hypervisor. This abstraction allows multiple operating systems to run simultaneously on a single physical machine, significantly improving hardware utilization and operational flexibility.

The relationship between hypervisors and virtual machines is hierarchical and interdependent. The hypervisor forms the foundation, while virtual machines exist as workloads running on top of it. This structure is what enables virtualization to deliver its core benefits, including efficiency, scalability, isolation, and flexibility. By separating hardware from software environments, virtualization allows organizations to break free from the limitations of traditional computing models, where one server typically supported only one operating system or application.

One of the most important outcomes of this architecture is resource optimization. In traditional systems, hardware resources are often underutilized because workloads rarely require full capacity at all times. Virtualization solves this problem by allowing multiple virtual machines to share the same physical resources dynamically. The hypervisor continuously monitors demand and adjusts allocations to ensure that resources are used efficiently. This leads to better performance, reduced hardware costs, and improved energy efficiency in large-scale environments.

Another significant advantage of virtualization is isolation. Each virtual machine operates in a separate and secure environment, independent of other virtual machines on the same system. This isolation is enforced by the hypervisor, which prevents direct interaction between virtual machines at the hardware level. As a result, issues such as system crashes, security breaches, or software conflicts in one virtual machine do not affect others. This makes virtualization particularly valuable in environments where stability and security are critical.

The distinction between type-1 and type-2 hypervisors further enhances the flexibility of virtualization systems. Type-1 hypervisors operate directly on physical hardware, providing high performance and low latency. They are commonly used in enterprise data centers and cloud environments where efficiency and scalability are essential. Their direct access to hardware allows them to manage resources more effectively and support large numbers of virtual machines simultaneously.

Type-2 hypervisors, in contrast, operate on top of a host operating system. This makes them easier to install and use but introduces additional overhead due to the extra software layer. They are commonly used in development, testing, and educational environments where ease of use is more important than maximum performance. Despite their differences, both types serve the same fundamental purpose of enabling virtualization by managing virtual machines.

As virtualization technology has matured, it has become deeply integrated into modern computing infrastructure. Cloud computing platforms, for example, rely heavily on virtualization to provide scalable and on-demand computing resources. In these environments, virtual machines can be created, modified, or removed dynamically based on workload requirements. This level of flexibility would not be possible without the underlying hypervisor technology that manages resource distribution and system isolation.

Virtualization also plays a critical role in disaster recovery and system resilience. Because virtual machines are independent of physical hardware, they can be backed up, duplicated, or migrated across systems with minimal disruption. In the event of hardware failure, virtual machines can be quickly restarted on alternative systems, reducing downtime and maintaining service continuity. This capability is especially important for organizations that require high availability and cannot afford extended system outages.

Security is another area where virtualization provides significant advantages. The isolation between virtual machines ensures that malicious activity is contained within a single environment. Even if one virtual machine is compromised, it does not have direct access to others or to the underlying hardware. Hypervisors also include security mechanisms that control access to system resources and monitor virtual machine behavior, further strengthening overall system protection.

The evolution of virtualization has also led to advancements in automation and intelligent resource management. Modern hypervisors are capable of analyzing system performance in real time and making adjustments automatically. This includes balancing workloads across multiple hosts, reallocating resources based on demand, and optimizing system performance without human intervention. These capabilities are essential in large-scale environments where manual management would be inefficient and error-prone.