During the formative years of large-scale computer networking, the digital environment was built on principles that prioritized openness, collaboration, and accessibility over defensive isolation. The early interconnected systems that eventually formed the foundation of the global internet were originally designed to support research collaboration between universities, government laboratories, and scientific institutions. These systems operated under a shared assumption that users were authorized, technically competent, and generally cooperative. As a result, the architecture emphasized functionality and interoperability rather than strict security enforcement. Machines were connected through a distributed framework that allowed resource sharing, remote computation, and data exchange across geographically separated locations. The design philosophy treated connectivity as a fundamental requirement for scientific progress, while security was considered a secondary concern that could be addressed later as the system matured. Authentication mechanisms existed, but they were often simple and relied heavily on password-based access without advanced encryption or multi-layer verification. This trust-centric model created an environment where systems were highly interconnected but lightly defended, allowing data and commands to flow freely across institutional boundaries.

System Weaknesses Hidden Within Common Network Services

As interconnected systems expanded, several widely used services became essential components of daily computing operations. These services were designed to facilitate communication, remote login, and system management across multiple machines. However, many of them contained design flaws that were not initially considered critical due to the assumption of trusted usage environments. Services responsible for user identification, message handling, and email routing often operate without strict input validation or robust authentication controls. This allowed remote interactions to be processed with minimal verification, creating unintended pathways for exploitation. The simplicity of these services made them efficient for legitimate use but also introduced structural vulnerabilities that could be leveraged under certain conditions. Operating systems of the time, particularly those based on UNIX-like environments, were widely deployed in academic and research networks. These systems included components such as network-facing daemons and remote execution utilities that were essential for distributed computing. Because these tools were designed to enhance productivity and collaboration, they often executed requests with a high level of implicit trust. Over time, this design approach created an ecosystem where small software flaws could have disproportionate effects when combined with network-wide connectivity.

The Digital Environment Before the First Major Network Worm Incident

Before the emergence of large-scale self-replicating programs, the concept of a network-wide software infection was largely theoretical within academic circles. Early discussions about system security focused primarily on accidental misuse, hardware failures, and isolated software bugs rather than intentional or autonomous propagation across interconnected systems. The user community consisted mainly of researchers, engineers, and students who shared resources in a cooperative environment. Because of this, the prevailing mindset did not anticipate malicious or self-replicating code moving across institutional boundaries. The network itself was relatively small compared to modern standards, yet it was already sufficiently interconnected to allow rapid communication between major research centers. System administrators focused their efforts on maintaining uptime, managing user access, and ensuring compatibility between different computing platforms. Security considerations were often secondary to performance and usability, resulting in minimal protective barriers between connected machines. This environment created a unique technological landscape where software behavior was expected to remain contained within local systems rather than spreading autonomously across a global infrastructure.

The Emergence of a Critical Warning Message on the Network

On November 2, 1988, a message appeared on a widely used technical communication channel frequented by system administrators. The message warned that a potential self-replicating program might be active across connected systems and suggested immediate steps to prevent further spread. It was delivered anonymously and described methods to reduce the risk of infection, including system monitoring and temporary isolation of vulnerable services. However, by the time this message circulated, the network was already experiencing significant performance degradation. High traffic volume and system overload made communication unreliable, causing delays in message delivery across multiple institutions. Some administrators received the warning only after their systems had already begun exhibiting abnormal behavior, while others were unable to access it due to connectivity issues. The timing of the message coincided with the early stages of widespread disruption, meaning that preventive measures were often implemented too late. As the situation escalated, many organizations began disconnecting affected systems from the broader network to prevent further contamination. This fragmentation of connectivity further complicated efforts to coordinate a unified response across the affected infrastructure.

The Design Intent Behind Early Self-Replicating Code

The program responsible for the disruption was originally developed as an experimental tool intended to measure the size and connectivity of a distributed computing environment. Its primary objective was to propagate across as many systems as possible and report back on the scale of the network. To achieve this, it was designed to identify compatible systems and transfer itself using existing network services. Once executed on a target machine, the program would attempt to determine whether it was already present to avoid redundant duplication. If no existing copy was detected, it would replicate and continue scanning for additional vulnerable systems. The design reflected an academic approach to understanding network topology through automated exploration rather than malicious intent. However, the implementation of this concept did not adequately account for the operational realities of large-scale distributed systems. The replication logic, combined with insufficient safeguards against uncontrolled duplication, allowed the program to behave in ways that exceeded its original experimental purpose. The absence of strict execution limits or centralized control mechanisms contributed to its ability to spread beyond intended boundaries.

Technical Pathways That Enabled Rapid System Propagation

The propagation mechanism relied on exploiting weaknesses in commonly used network services that allowed remote interaction between machines. These services were designed to support legitimate administrative and communication functions but lacked strict verification processes for incoming requests. As a result, the program was able to execute commands remotely by leveraging trust relationships between systems. Once inside a target machine, it would activate a sequence of operations designed to establish persistence and initiate further propagation. The program scanned for additional vulnerable systems using predefined patterns and network discovery techniques. Upon identifying a suitable target, it would transfer a copy of itself and execute it remotely. This cycle repeated continuously, creating a cascading effect across interconnected systems. The replication process was further influenced by conditional logic that determined whether duplication should continue or be suppressed. However, this logic included probabilistic elements that occasionally allowed replication even when duplicate detection mechanisms were triggered. This ensured that the program maintained persistence across diverse system environments, even when partial containment measures were in place.

Early Signs of System Strain and Operational Disruption

As the program spread across multiple institutions, system performance began to degrade significantly. Machines that were previously stable started experiencing increased CPU usage and reduced responsiveness. Network traffic levels surged as infected systems continuously communicated and replicated across available connections. This created congestion that affected both local operations and inter-organizational communication. Research institutions and government laboratories were among the first to notice abnormal system behavior, as critical computing resources became increasingly unavailable. Administrators observed unexplained process activity and rapidly diminishing system performance, prompting immediate investigation. In many cases, the severity of the disruption forced operators to shut down or disconnect machines entirely in an attempt to restore stability. However, these actions also reduced visibility into the scope of the issue, making it more difficult to assess the full extent of the propagation. The distributed nature of the network meant that no single point of control existed, and the impact varied across different regions depending on system configuration and exposure level. This phase marked the beginning of widespread recognition that the issue was not isolated but systemic in nature.

Escalation of the Self-Replicating Program Across Distributed Systems

As the self-replicating program continued to propagate across interconnected machines, its impact transitioned from isolated system slowdowns to widespread operational disruption affecting multiple institutions simultaneously. The expansion was not linear but exponential, driven by the program’s ability to identify vulnerable systems and execute remote replication with minimal friction. Each newly infected machine became both a victim and a vector, contributing computational resources to the ongoing spread while simultaneously degrading its own performance. The increasing density of infected systems amplified network traffic, creating a feedback loop in which propagation activity itself became a primary source of system load. Academic institutions, government laboratories, and research centers began reporting similar symptoms: unresponsive terminals, delayed processing tasks, and rapidly increasing system load averages. What initially appeared as localized performance issues quickly revealed itself as a coordinated and system-wide degradation of computing infrastructure. Administrators who initially suspected isolated faults were forced to reconsider the scale of the problem as reports began converging from geographically dispersed locations. The distributed nature of the environment meant that no single organization had complete visibility into the extent of the disruption, further complicating diagnosis and response efforts.

Compounding Effects of Resource Exhaustion and Network Saturation

The rapid replication behavior of the program led to severe resource exhaustion across affected systems. Central processing units became overwhelmed as multiple instances of the program executed simultaneously, competing with legitimate system processes for computational cycles. Memory consumption increased steadily, reducing the availability of system resources for normal operations. Disk activity also surged as processes attempted to manage temporary data and replication-related operations. On the network layer, bandwidth consumption escalated dramatically due to continuous inter-system communication required for propagation. The accumulation of these factors resulted in a cascading degradation of performance across entire subnetworks. As more systems became infected, the volume of replication traffic increased proportionally, further saturating available communication channels. This saturation not only slowed data transmission but also introduced delays in administrative commands, making remote system management increasingly difficult. In some cases, systems became effectively inaccessible due to extreme latency and resource contention. The compounding nature of these effects meant that even systems not yet directly infected experienced indirect performance degradation due to overall network congestion. This created a scenario in which the health of individual machines became dependent on the stability of the entire interconnected environment.

Vulnerability Exposure in Commonly Used Network Services

A significant factor contributing to the widespread propagation was the presence of vulnerabilities in widely deployed network services. These services were designed to facilitate essential functions such as remote command execution, user authentication, and system communication. However, many of them lacked rigorous input validation and were built under assumptions of trusted usage environments. One commonly exploited mechanism involved services that allowed remote users to request system information or execute commands without sufficient verification of origin or intent. Another critical weakness was found in systems that enabled remote email handling and message processing, which could be manipulated to trigger unintended behavior. These services often ran with elevated privileges, amplifying the impact of any successful exploitation. Because they were standard components of many installations, the same vulnerabilities existed across a large portion of the network, creating uniform entry points for propagation. The homogeneity of system configurations played a crucial role in enabling rapid spread, as once a viable exploitation method was identified, it could be reused across many systems without modification. This lack of diversity in defensive architecture significantly reduced the resilience of the network as a whole.

Behavioral Logic Governing Self-Replication Mechanisms

The program incorporated a structured decision-making process that governed its replication behavior across target systems. Upon entering a new machine, it would first perform a check to determine whether an existing instance was already present. This was intended to prevent redundant duplication and uncontrolled exponential growth. If no duplicate was detected, the program would proceed with replication and initiate further scanning for additional vulnerable systems. However, to counteract potential defensive strategies, the program also included probabilistic overrides that allowed replication to continue even when duplicate detection conditions were met. This mechanism introduced variability into its behavior, making it more difficult for system administrators to predict or suppress its activity using simple rule-based interventions. In certain conditions, the program would bypass its own suppression logic based on randomized thresholds, ensuring continued propagation even in partially secured environments. This design choice, while intended to improve resilience during experimentation, had the unintended consequence of undermining containment efforts. The combination of deterministic scanning and probabilistic continuation created a hybrid propagation model that was both efficient and difficult to control once activated across a large-scale network.

Operational Disruption in High-Dependency Computing Environments

As the spread intensified, institutions that relied heavily on networked computing systems began to experience severe operational disruptions. Research facilities that depended on distributed processing for scientific computation found their workloads interrupted or delayed due to system instability. Government laboratories responsible for critical analysis and modeling experienced similar issues, with key systems becoming unresponsive or significantly slowed. Administrative operations that required coordination between multiple machines were particularly affected, as communication delays prevented the timely execution of tasks. In many cases, essential services had to be suspended temporarily to prevent further degradation of system integrity. The disruption also impacted scheduling systems, data analysis pipelines, and interdepartmental communication channels. As infected machines consumed increasing amounts of computational resources, priority processes were often starved of the resources required for normal operation. This led to cascading delays across dependent systems, further amplifying the overall impact. The interconnected nature of the environment meant that failures in one system could propagate indirectly to others, even if those systems were not directly infected. This indirect impact highlighted the systemic vulnerability of tightly coupled computing environments lacking robust isolation mechanisms.

Administrative Response and Early Containment Strategies

System administrators across affected organizations began implementing containment strategies in an attempt to limit further spread. One of the primary methods involved disconnecting compromised machines from the broader network to prevent additional propagation. This approach, while effective in reducing spread, also disrupted communication channels necessary for coordinated recovery efforts. In some environments, administrators opted to shut down entire segments of the network to isolate infected clusters. This allowed for more controlled investigation but significantly reduced overall system availability. Efforts were also made to identify the source of infection by analyzing system logs, monitoring process activity, and tracing network connections. However, the scale and speed of propagation made it difficult to obtain a complete picture of the infection timeline. In many cases, systems had already been partially cleaned or rebooted, erasing valuable diagnostic information. Collaborative communication between institutions became increasingly important, although it was hindered by the very network instability caused by the incident. Despite these challenges, administrators began to develop a clearer understanding of the mechanisms involved, gradually shifting from reactive containment to structured analysis of system behavior.

Discovery of Multi-Vector Exploitation Techniques

Further investigation revealed that the program did not rely on a single method of propagation but instead utilized multiple entry vectors to increase its reach. These vectors included exploitation of remote execution services, misuse of communication protocols, and leveraging of trust relationships between connected systems. By combining multiple attack pathways, the program increased its likelihood of successful replication across diverse system configurations. This multi-vector approach allowed it to bypass partial defenses that might have blocked a single method of entry. Systems that were patched or partially secured against one type of exploitation could still be vulnerable through alternative mechanisms. This redundancy in propagation strategy contributed significantly to the difficulty of containment. It also demonstrated how interconnected system dependencies could be leveraged to bypass localized security improvements. As administrators analyzed infected systems, they observed that different machines exhibited different infection pathways, suggesting adaptive behavior depending on system configuration. This variability further complicated response efforts, as no single mitigation strategy could address all observed cases effectively.

The Rapid Accumulation of System-Level Failures

As propagation continued unchecked across multiple environments, system-level failures began to accumulate. Individual machines experienced crashes, freezes, and severe performance degradation, often requiring manual intervention to restore functionality. Network infrastructure components, including routing and communication systems, also began to experience increased load and instability. The combined effect of widespread infection and resource exhaustion created a situation in which normal operational continuity could not be maintained. Critical computing tasks were delayed or interrupted, and system reliability declined sharply across the board. The accumulation of failures also made recovery more complex, as partially functioning systems required careful analysis before restoration could be attempted. In some cases, administrators had to rebuild systems from baseline configurations to ensure the complete removal of infected components. This process was time-consuming and resource-intensive, further straining already impacted organizations. The scale of disruption revealed fundamental weaknesses in early distributed system design, particularly in areas related to isolation, fault tolerance, and secure execution of remote processes.

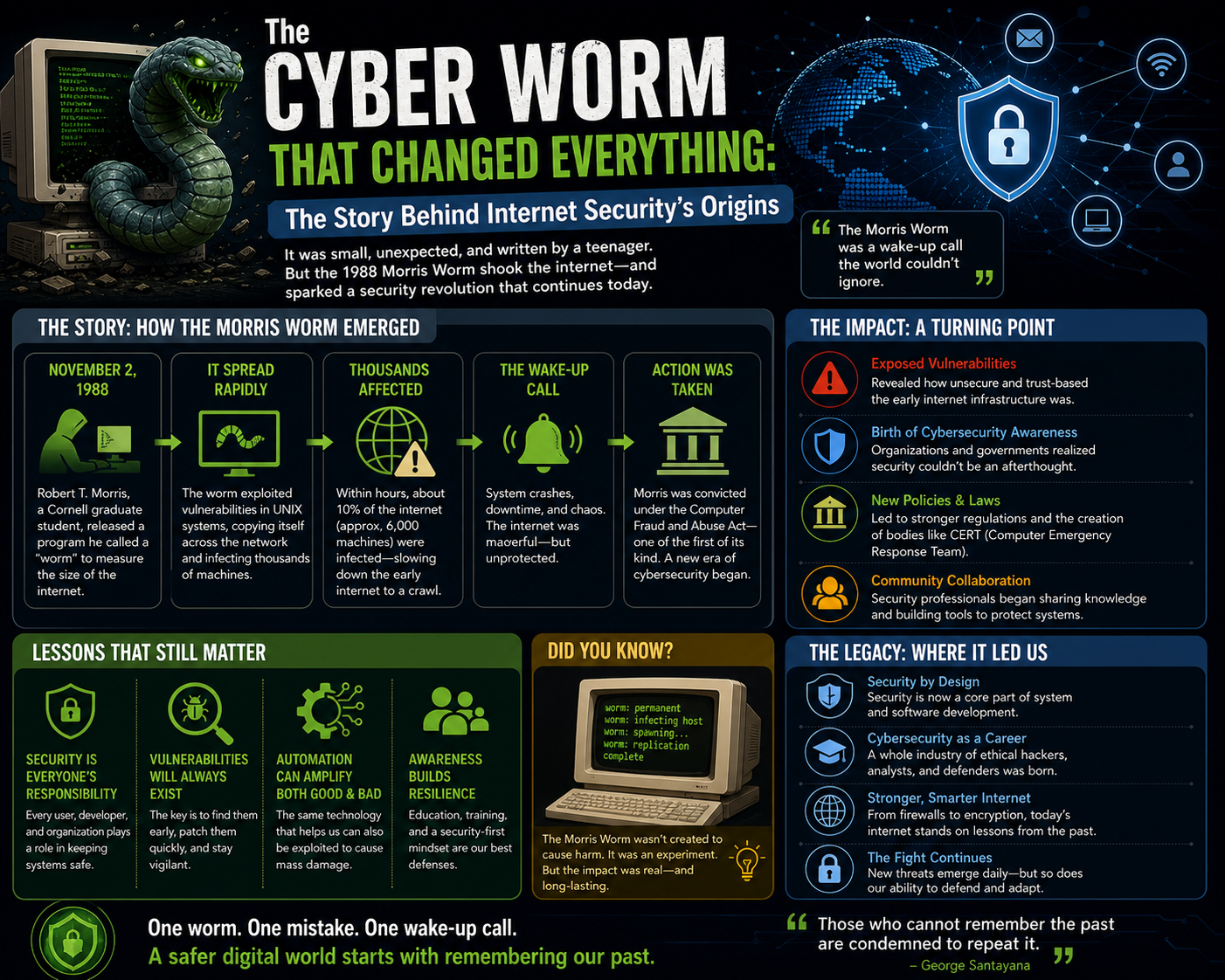

Transition From Technical Disruption to Historical Cybersecurity Turning Point

As the propagation of the self-replicating program reached its peak, the incident transitioned from a technical anomaly into a defining moment in the history of networked computing. What had initially been interpreted as a localized performance degradation across connected systems evolved into a large-scale operational breakdown spanning academic institutions, government laboratories, and research infrastructures. The scale of disruption forced system administrators and researchers to reassess foundational assumptions about the safety of interconnected computing environments. Before this event, networked systems were largely designed under the premise that users were part of a trusted community and that malicious or autonomous self-replicating software was an unlikely scenario. However, the widespread impact of the incident demonstrated that even in environments built on cooperation, software behavior could produce cascading failures when propagation mechanisms were left unrestricted. The transition from theoretical vulnerability discussions to real-world systemic failure marked a critical shift in how computing environments were understood and managed. It became clear that connectivity alone, without robust containment and verification mechanisms, could amplify minor software flaws into large-scale disruptions affecting entire infrastructures.

Structural Weaknesses Revealed in Early Distributed Computing Design

The incident exposed fundamental weaknesses in early distributed computing architecture, particularly in the way systems communicated and trusted external inputs. Many network services were designed to prioritize efficiency and interoperability, often at the expense of strict validation or authentication controls. This created an environment in which remote interactions could be executed with minimal verification, allowing unexpected behaviors to propagate across system boundaries. The lack of strong segmentation between systems meant that once a program gained entry into one machine, it could leverage existing trust relationships to access others without significant resistance. Additionally, system configurations across institutions were often standardized, leading to widespread uniformity in potential vulnerabilities. This homogeneity meant that once a propagation method was successful in one environment, it could be replicated across many others with little modification. The absence of layered defensive mechanisms further compounded the issue, as systems relied heavily on perimeter-based assumptions rather than internal containment strategies. The incident highlighted the necessity of designing systems with the expectation of failure, rather than assuming consistent cooperative behavior among all connected nodes.

The Role of Resource Contention in Amplifying System Failure

One of the most significant contributing factors to the severity of the disruption was the way in which resource contention escalated across infected systems. As the self-replicating program executed concurrently on multiple machines, it consumed increasing amounts of processing power, memory, and network bandwidth. This led to a sharp decline in system responsiveness, as legitimate processes were forced to compete with replication activity for limited resources. In many cases, systems became effectively unusable due to extreme CPU saturation and memory exhaustion. Network congestion further amplified these issues, as continuous communication between infected systems overwhelmed available bandwidth. The resulting latency made even basic administrative tasks difficult to execute, delaying response efforts and increasing the duration of exposure. As more systems became infected, the overall resource pool of the network diminished, creating a feedback loop in which degradation accelerated over time. This dynamic demonstrated how tightly coupled systems without proper isolation can experience nonlinear failure patterns, where small increases in load produce disproportionately large impacts on stability.

Evolution of Administrative Response Strategies Under Crisis Conditions

In response to the escalating disruption, system administrators were forced to rapidly adapt their operational strategies. Initial responses focused on immediate containment through disconnection of affected systems from the broader network. This approach, while effective in limiting further propagation, also fragmented communication channels and reduced visibility into the overall scope of the incident. As the situation developed, administrators began implementing more structured investigative procedures, including system log analysis, process monitoring, and controlled isolation of infected environments. These efforts were complicated by the fact that many systems had already experienced significant instability, resulting in incomplete or corrupted diagnostic data. Coordination between institutions became increasingly important, although it was hindered by the very network disruptions being addressed. In some cases, alternative communication methods had to be used to share information and develop coordinated responses. Over time, response strategies evolved from reactive containment to more systematic approaches focused on understanding propagation mechanisms and restoring system integrity through controlled rebuilding processes. This shift marked an important step toward modern incident response methodologies in distributed computing environments.

Emergence of Awareness Around Autonomous Software Behavior

The incident also played a crucial role in shifting perceptions of software behavior in networked environments. Before this event, most software was assumed to behave deterministically within the bounds of its intended execution context. The idea that a program could autonomously replicate itself across multiple systems without direct user intervention was not widely considered a practical risk. However, the widespread impact of the self-replicating program demonstrated that software could exhibit emergent behavior when operating in sufficiently interconnected environments. This realization led to increased interest in understanding how software interactions could produce unintended systemic effects. Researchers began examining how simple execution rules, when combined with network accessibility, could lead to exponential propagation patterns. The concept of autonomous software behavior became a central topic in discussions about system design, leading to increased focus on containment, verification, and execution boundaries. This shift in understanding contributed to the development of more sophisticated approaches to software deployment and network security in subsequent years.

Legal and Institutional Consequences Following the Incident

Following the widespread disruption, attention turned toward identifying the origin of the program and determining appropriate legal and institutional responses. Investigations traced the source of the self-replicating code to an individual operating within an academic research environment. The legal framework applied to the case was relatively new at the time, reflecting early efforts to address unauthorized access and misuse of networked systems. Proceedings resulted in formal charges related to unauthorized access and interference with computer systems. The outcome of the case included probation, community service, financial penalties, and supervision requirements. The legal proceedings also prompted broader discussions within academic and technical communities about the boundaries between experimentation, research, and unauthorized system interaction. Some viewed the incident as an unintended consequence of exploratory research into network behavior, while others considered it a violation of emerging digital security principles. Regardless of perspective, the case established an important precedent for how autonomous software behavior and network interference would be addressed under legal frameworks in the future. It also reinforced the need for clearer guidelines governing experimental activities in interconnected computing environments.

Long-Term Impact on Cybersecurity Practices and System Design

In the aftermath of the incident, significant changes began to emerge in the design and management of networked systems. One of the most important shifts was the increased emphasis on security as a foundational design principle rather than an auxiliary consideration. System architects began incorporating stronger authentication mechanisms, improved access controls, and more rigorous input validation processes into network services. The concept of segmentation became increasingly important, with systems designed to limit the spread of unauthorized behavior through isolation and controlled communication pathways. Monitoring and detection capabilities were also enhanced to identify unusual patterns of activity that could indicate propagation attempts. Additionally, the incident influenced the development of more formalized incident response procedures, enabling organizations to respond more effectively to future disruptions. The recognition that interconnected systems could fail in cascading ways led to a reevaluation of reliability models and fault tolerance strategies. Over time, these changes contributed to the evolution of modern cybersecurity practices, where resilience, containment, and proactive defense mechanisms became central components of system design.

Institutional Memory and the Evolution of Network Security Thinking

The incident remained a reference point in discussions about early network security challenges and the importance of defensive design in distributed systems. It highlighted the risks associated with assuming trust in interconnected environments and demonstrated how quickly small design flaws could escalate into widespread disruption. Educational institutions and research organizations began incorporating lessons from the event into curricula focused on computer systems, network engineering, and cybersecurity. The incident also influenced how future generations of engineers approached system design, encouraging a mindset that anticipated misuse, failure, and unexpected interaction between software components. Over time, it became recognized not only as a disruptive event but also as a catalyst for foundational improvements in digital infrastructure security. The lessons derived from the incident continued to inform the development of new technologies, ensuring that modern systems incorporate safeguards designed to prevent similar large-scale propagation scenarios.

Conclusion

The self-replicating program incident of 1988 represents one of the earliest large-scale demonstrations of how tightly interconnected computing systems can transition from stable collaboration environments into rapidly cascading failure states. At its core, the event was not simply a technical malfunction or an isolated software defect, but a systemic exposure of design assumptions that had governed early network architecture. Those assumptions—primarily built on trust, academic cooperation, and controlled access—were fundamentally misaligned with the emergent properties of a growing, decentralized digital infrastructure. What unfolded was not just a disruption of machines and services, but a disruption of the prevailing mental model of what networked computing systems were capable of enduring.

One of the most important outcomes of this incident was the realization that connectivity itself is a double-edged property. While interconnection enables collaboration, distributed computing, and resource sharing, it also creates pathways for the rapid propagation of unintended or malicious behavior. Before this event, most system designers treated connectivity as an unqualified benefit. The idea that a program could traverse systems autonomously, replicate itself without direct authorization, and exhaust shared resources at scale was not a central concern in design considerations. After the incident, this perspective began to shift toward a more cautious and structured understanding of interconnected risk.

Another lasting implication lies in the recognition that software behavior cannot be fully understood in isolation from its execution environment. The program’s propagation was not solely the result of its internal logic, but also the result of environmental conditions that allowed that logic to operate at scale. Weak service authentication, homogeneous system configurations, and unrestricted trust relationships between machines created a fertile environment for uncontrolled replication. In other words, the vulnerability was not confined to a single flaw but emerged from the interaction between multiple system design choices. This realization played a key role in shaping later approaches to system security, where emphasis increasingly shifted toward holistic threat modeling rather than isolated vulnerability patching.

The incident also underscored the importance of designing systems with failure as an expected condition rather than an exceptional one. Early network architectures implicitly assumed that participants would behave cooperatively and that system misuse would be rare. This assumption led to minimal containment mechanisms and limited internal safeguards. When the propagation event occurred, it exploited precisely these gaps in expectation. Modern system design philosophy has since evolved to assume that compromise, misconfiguration, and unexpected behavior are not only possible but inevitable. This shift has resulted in more robust segmentation strategies, stricter privilege boundaries, and layered defense models that limit the impact of localized failures.

From an operational perspective, the event demonstrated how quickly resource exhaustion can transform from a localized issue into a network-wide crisis. The combination of CPU saturation, memory pressure, and bandwidth congestion created nonlinear degradation patterns that overwhelmed administrative response capabilities. Systems did not fail in isolation; instead, they failed in clusters, with each compromised node contributing to the overall instability. This cascading effect revealed the limitations of early monitoring and response frameworks, which were not designed to handle synchronized multi-system degradation. As a result, later developments in network management placed increased emphasis on real-time monitoring, anomaly detection, and automated containment mechanisms capable of responding at machine speed.

The human dimension of the incident is equally significant. System administrators were forced to operate under conditions of incomplete information, degraded communication channels, and rapidly evolving system behavior. Decisions had to be made quickly, often with limited visibility into the broader scope of the issue. The choice to disconnect systems from the network, while effective in limiting further propagation, also highlighted the trade-off between containment and continuity. This tension between operational availability and defensive isolation remains a central challenge in modern cybersecurity practice. The incident illustrated that sometimes the most effective defensive action can simultaneously be the most disruptive to normal operations.

Legally and institutionally, the aftermath of the event contributed to the early formation of frameworks governing unauthorized access to computing systems. At the time, legal structures were still adapting to the emergence of networked environments, and the incident became a reference point for defining boundaries between experimentation, negligence, and malicious activity. The resulting legal proceedings established precedents that influenced how similar cases would be evaluated in the future. More broadly, it helped clarify that actions performed in digital environments could have tangible, measurable consequences on critical infrastructure, reinforcing the need for accountability in software development and system access.

In academic and professional circles, the incident became a foundational case study in understanding emergent behavior in distributed systems. It illustrated that complexity does not necessarily arise from individual components but from interactions between components operating at scale. Even relatively simple replication logic, when combined with unrestricted connectivity and insufficient safeguards, can produce outcomes that are difficult to predict or control. This insight contributed to the development of more rigorous approaches to system modeling, including the study of network topology, propagation dynamics, and systemic risk in distributed environments.

Over time, the lessons derived from this event became embedded in the evolution of cybersecurity as a discipline. Concepts such as least privilege access, segmentation, intrusion detection, and behavioral monitoring can all trace part of their conceptual lineage to the failures exposed during this early incident. It also influenced the cultural mindset of system designers, shifting priorities toward resilience, redundancy, and controlled exposure rather than unchecked openness. The balance between usability and security began to tilt toward a more cautious equilibrium, where protection mechanisms were integrated into system architecture from the outset rather than added as afterthoughts.

Perhaps the most enduring lesson is that technological systems are not static constructs but dynamic ecosystems shaped by both design intent and environmental interaction. The incident demonstrated that even in environments built on trust and collaboration, systemic vulnerabilities can emerge when scale, connectivity, and software behavior intersect in unforeseen ways. It also reinforced the importance of continuous reassessment of security assumptions as systems evolve. What was considered safe in a small, controlled network can become dangerous when extended to a larger, more interconnected environment.

In retrospect, the event stands as a pivotal moment in the maturation of networked computing. It marked the transition from an era of experimental openness to one of structured security awareness. While the immediate effects were disruptive and costly, the long-term impact contributed significantly to the development of safer, more resilient digital infrastructures. The understanding gained from analyzing this incident continues to influence how modern systems are designed, deployed, and managed, ensuring that the lessons learned remain relevant in an era of increasingly complex and interconnected technologies.