Modern IT infrastructure relies heavily on continuous data access between compute systems and storage arrays. As workloads grow in complexity, the connection between servers and storage becomes a critical dependency for application performance and system availability. Multipathing is a design approach that addresses this dependency by introducing multiple physical and logical routes between a host system and its storage resources.

In a traditional single-path setup, a server communicates with storage through one dedicated route. This route may include a host bus adapter, network switches, cables, and a storage controller. While functional, this design introduces a clear limitation: any failure along this path disrupts connectivity entirely. As organizations scale their infrastructure, this single-path model becomes increasingly risky.

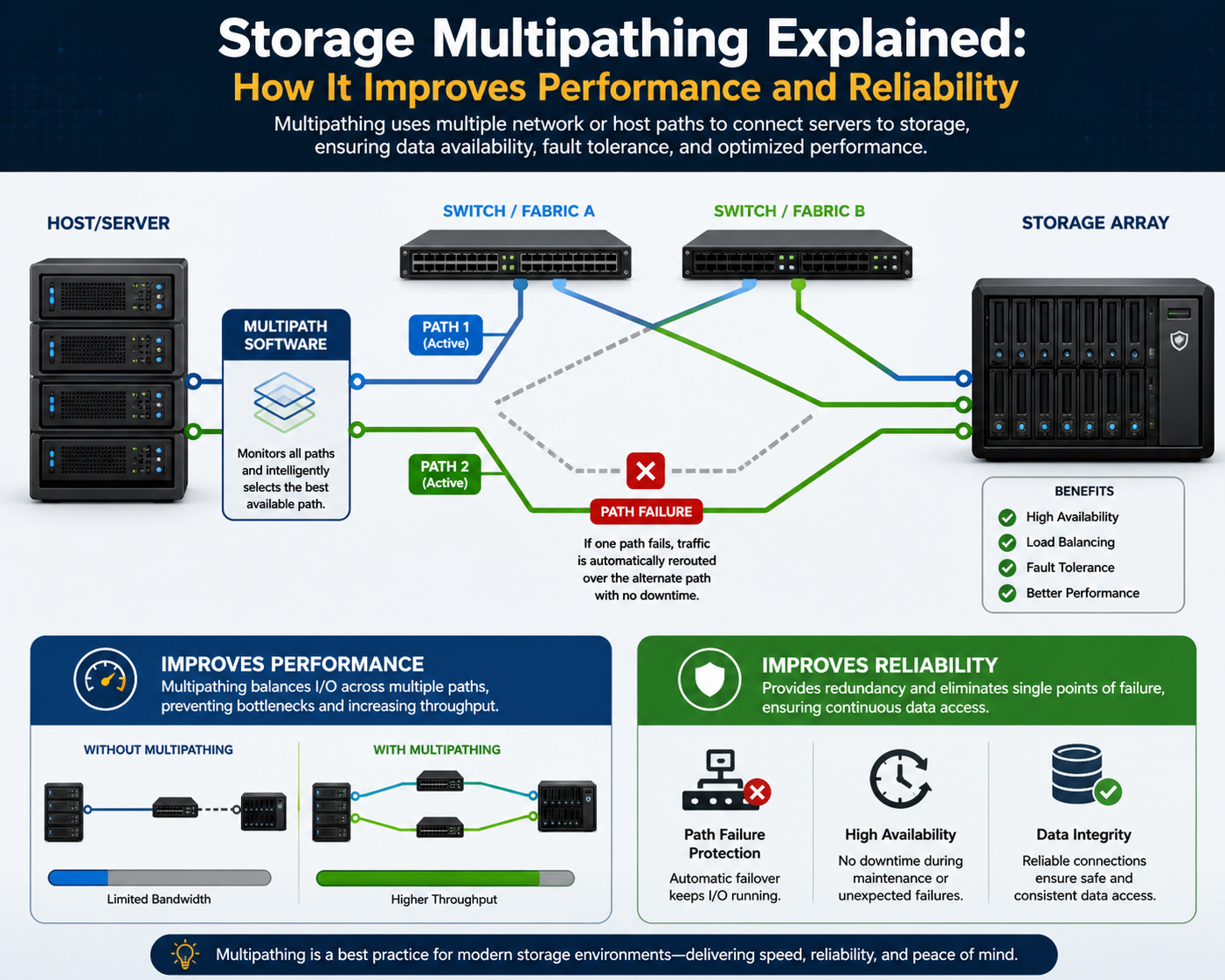

Multipathing eliminates this limitation by establishing more than one independent route between the same endpoints. These routes are constructed using redundant hardware components and are actively managed by specialized software at the operating system or driver level. The system continuously evaluates available paths and distributes or redirects traffic as required.

Core Concept of Multipathing Architecture

At its core, multipathing is a path management mechanism that sits between the operating system and storage hardware. It abstracts multiple physical connections into a single logical storage device. From the perspective of applications and file systems, storage appears as a unified resource, even though multiple underlying paths exist.

Each path consists of a complete communication chain that may include network interface cards, Fibre Channel host bus adapters, Ethernet switches, or storage area network (SAN) fabrics. These paths are typically designed to be physically independent to avoid shared failure points. For example, redundant switches or separate network fabrics may be used so that a failure in one fabric does not affect the other.

Multipathing software monitors all active and standby routes in real time. It evaluates their health status, throughput, latency, and availability. Based on predefined policies, it selects the most appropriate path for data transmission. If a failure is detected, traffic is automatically rerouted without requiring manual intervention.
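The abstraction described above can be sketched in a few lines of Python. This is a minimal illustration, not how a real multipathing driver is built: the `Path`, `PathError`, and `MultipathDevice` names are hypothetical, and a real driver handles kernel block requests rather than Python callables. The point is that callers see a single `submit()` method, while the device marks a failing route unhealthy and retries the request on the next healthy one.

```python
from typing import Callable, List


class PathError(Exception):
    """Raised when an I/O request cannot be completed on a given path."""


class Path:
    """One hypothetical route (adapter, switch, controller port) to a volume."""

    def __init__(self, name: str, send: Callable[[bytes], str]) -> None:
        self.name = name
        self.send = send        # performs the I/O over this route
        self.healthy = True


class MultipathDevice:
    """Presents several paths to the same volume as one logical device."""

    def __init__(self, paths: List[Path]) -> None:
        self.paths = paths

    def submit(self, request: bytes) -> str:
        # Try healthy paths in order; on failure, mark the path and reroute.
        for path in self.paths:
            if not path.healthy:
                continue
            try:
                return path.send(request)
            except PathError:
                path.healthy = False  # skip this route until it is revalidated
        raise PathError("all paths to the device have failed")


if __name__ == "__main__":
    def link_down(_request: bytes) -> str:
        raise PathError("link down")

    device = MultipathDevice([
        Path("hba0", link_down),
        Path("hba1", lambda req: f"ok via hba1 ({len(req)} bytes)"),
    ])
    print(device.submit(b"read"))   # the caller never sees the hba0 failure
```

The order in which `submit()` tries paths stands in for whatever selection policy is configured; the policies discussed later in this article would replace that simple loop.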

Role of Multipathing in High Availability Systems

High-availability systems are designed to minimize downtime and ensure continuous service delivery. Storage connectivity is one of the most important components in such environments because nearly all application operations depend on persistent data access.

Multipathing contributes to high availability by removing single points of failure in the storage communication layer. If a cable is disconnected, a switch fails, or a controller becomes unresponsive, the system does not lose access to storage. Instead, another available path immediately takes over the workload.

This behavior is particularly important in virtualized environments, clustered database systems, and enterprise application stacks where downtime can impact multiple dependent services simultaneously. Multipathing ensures that storage remains accessible even under partial infrastructure failure conditions.

Physical and Logical Components of Multipathing Systems

A multipathing configuration typically includes several key physical and logical components working together.

On the physical side, the infrastructure includes host adapters, Ethernet or Fibre Channel switches, redundant cabling, and storage controllers. Each of these components is duplicated or segmented to ensure independent data paths.

On the logical side, multipathing software or drivers operate within the operating system. This layer is responsible for path discovery, health monitoring, load distribution, and failover execution. It aggregates multiple physical routes into a single logical device representation.

Storage systems also participate in multipathing configurations by presenting logical units (addressed by logical unit numbers, or LUNs) or volumes through multiple front-end ports or controllers. This allows hosts to establish simultaneous sessions through different interfaces.

Path Discovery and Initialization Process

When a host system boots or when new storage is introduced, the multipathing subsystem performs a discovery process. During this stage, it identifies all available storage paths and maps them to logical storage devices.

Each discovered path is evaluated for connectivity and compatibility. The system assigns identifiers to distinguish between active, standby, and inactive routes. Once the mapping is complete, the operating system treats the storage volume as a single entity despite multiple underlying routes.
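A rough sketch of the mapping step, assuming each discovered path reports a unique identifier for the volume it reaches (Linux dm-multipath, for instance, groups paths by the device's WWID). The `DiscoveredPath` type, the device node names, and the state strings are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DiscoveredPath:
    os_device: str   # per-path device node seen by the OS
    device_id: str   # unique identifier reported by the volume behind the path
    state: str       # "active", "standby", or "inactive"


def build_logical_devices(paths: List[DiscoveredPath]) -> Dict[str, List[DiscoveredPath]]:
    """Group every discovered path under the volume it leads to."""
    groups: Dict[str, List[DiscoveredPath]] = defaultdict(list)
    for p in paths:
        groups[p.device_id].append(p)
    return dict(groups)


if __name__ == "__main__":
    found = [
        DiscoveredPath("sdb", "vol-A", "active"),
        DiscoveredPath("sdc", "vol-A", "standby"),
        DiscoveredPath("sdd", "vol-B", "active"),
    ]
    for dev_id, members in build_logical_devices(found).items():
        print(dev_id, [m.os_device for m in members])
    # vol-A ['sdb', 'sdc']
    # vol-B ['sdd']
```

Each key of the result corresponds to one logical device that the operating system would expose, however many per-path nodes sit underneath it.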

This initialization process ensures that applications do not need to be aware of underlying physical complexity. Instead, they interact with a consistent storage interface while multipathing logic operates transparently in the background.

Data Flow Behavior in Multipathing Environments

Data transmission in multipathing systems depends on the configured policy. In some cases, all traffic is routed through a single active path, while others remain on standby. In other configurations, traffic is distributed across multiple paths simultaneously.

When multiple paths are active, the system may distribute input/output operations in a sequential or weighted manner. This improves utilization of available bandwidth and reduces congestion on any single route.

In environments with asymmetric path performance, multipathing software may prioritize higher-performing routes while still maintaining lower-performing ones as failover options. This dynamic adjustment helps maintain optimal performance under varying workload conditions.

Importance of Path Independence

A critical design principle in multipathing is path independence. For redundancy to be effective, each route must be isolated from shared failure points. If multiple paths rely on the same switch, power source, or physical conduit, a single failure could still disrupt all connectivity.

To achieve true independence, infrastructure is often segmented into separate physical zones. This may include different switch fabrics, redundant power systems, and isolated cabling routes. Path independence ensures that a failure in one domain does not cascade into others.
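One way to audit path independence is to list the components each path traverses and intersect those sets: anything common to every path is a single point of failure. The component names below are hypothetical inventory labels; real data would have to come from whatever hardware inventory is available.

```python
from typing import Dict, Set


def shared_failure_points(paths: Dict[str, Set[str]]) -> Set[str]:
    """Return the components that every path depends on.

    If any one of these fails, all paths fail together, so the redundancy is
    only apparent. An empty result means no single component is shared by all paths.
    """
    component_sets = list(paths.values())
    if not component_sets:
        return set()
    return set.intersection(*component_sets)


if __name__ == "__main__":
    inventory = {
        "path1": {"hba0", "switch-A", "pdu-1", "ctrl-A"},
        "path2": {"hba1", "switch-B", "pdu-1", "ctrl-B"},
    }
    print(shared_failure_points(inventory))   # {'pdu-1'}: both paths depend on one power unit
```

With more than two paths, a component shared by some but not all of them reduces redundancy without eliminating it, so a fuller audit would also examine pairwise intersections.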

This principle is especially important in enterprise storage environments where uptime requirements are strict and service interruptions can have cascading operational impacts.

Operating System Integration of Multipathing

Modern operating systems include built-in support or extensible frameworks for multipathing functionality. These frameworks allow storage devices with multiple access routes to be recognized and managed as unified entities.

Once configured, multipathing integrates closely with the kernel-level I/O subsystem. It intercepts storage requests and directs them through the selected path based on current policy and system state. This integration allows for seamless failover and performance optimization without requiring application-level awareness.

The operating system continuously updates path status information, so that changes in hardware availability are reflected quickly in routing decisions.

Early Design Considerations in Multipathing Deployment

Before implementing multipathing, infrastructure planning must consider several architectural factors. These include physical topology, redundancy levels, storage controller capabilities, and network segmentation strategies.

Proper design ensures that multiple independent paths truly exist rather than simply duplicating components within the same failure domain. This often requires careful planning of switch placement, cabling routes, and controller assignments.

Additionally, compatibility between storage hardware and operating system multipathing frameworks must be validated to ensure correct behavior under load and during failure scenarios.

Expanding the Role of Multipathing Beyond Failover

While multipathing is often introduced as a resilience mechanism, its operational value extends well beyond redundancy. In modern storage architectures, it also serves as a performance optimization layer that actively manages how input/output operations are distributed across available physical routes.

Instead of treating all paths as passive backups, multipathing systems evaluate each route as a potential performance channel. This enables storage traffic to be balanced, optimized, and adapted dynamically based on real-time infrastructure conditions. As workloads become more data-intensive and latency-sensitive, this capability becomes increasingly important.

Multipathing software sits at a critical junction in the I/O stack, interpreting application requests and deciding how best to route them to storage. This decision-making process is governed by load-balancing algorithms that define how traffic is distributed across available paths.

Fundamentals of Load Distribution in Storage Networks

In a multipathing environment, each path has its own performance characteristics. These characteristics include bandwidth capacity, latency, queue depth, and current utilization. Because these variables fluctuate over time, static routing decisions are insufficient for optimal performance.

Load distribution mechanisms continuously evaluate path conditions and assign I/O requests accordingly. This ensures that no single path becomes a bottleneck while others remain underutilized. The goal is not simply equal distribution but efficient utilization of all available resources.

The effectiveness of load distribution depends heavily on how accurately the system can measure path health and how intelligently it applies routing decisions based on those measurements.

Round Robin Distribution Strategy

One of the most widely used load-balancing strategies is round robin distribution. In this method, I/O requests are assigned sequentially across all available active paths in a cyclical pattern.

Each path receives an equal number of requests regardless of its underlying performance capacity. This simplicity makes round robin easy to implement and predictable in behavior.
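A minimal round-robin selector in Python, for illustration only. The `ios_per_path` parameter is an assumption reflecting that some implementations switch paths after a batch of I/Os rather than on every request; with its default of 1, the selector rotates on every request.

```python
from typing import List


class RoundRobinSelector:
    """Cycle through active paths in fixed order, ignoring their capacity."""

    def __init__(self, active_paths: List[str], ios_per_path: int = 1) -> None:
        if not active_paths or ios_per_path < 1:
            raise ValueError("need at least one path and ios_per_path >= 1")
        self._paths = list(active_paths)
        self._ios_per_path = ios_per_path
        self._index = 0      # path currently receiving I/O
        self._issued = 0     # I/Os sent to that path in the current turn

    def next_path(self) -> str:
        path = self._paths[self._index]
        self._issued += 1
        if self._issued >= self._ios_per_path:
            # This path's turn is over; move to the next one, wrapping around.
            self._issued = 0
            self._index = (self._index + 1) % len(self._paths)
        return path


if __name__ == "__main__":
    selector = RoundRobinSelector(["pathA", "pathB", "pathC"])
    print([selector.next_path() for _ in range(5)])
    # ['pathA', 'pathB', 'pathC', 'pathA', 'pathB']
```

Because the rotation never consults path speed, a slow path receives the same share as a fast one, which is the limitation that matters in heterogeneous environments.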

However, its simplicity can also become a limitation in heterogeneous environments. If one path has significantly higher bandwidth or lower latency than another, round robin does not account for these differences. As a result, faster paths may become underutilized while slower paths may become congested.

Despite this limitation, round robin remains effective in environments where paths are identical in configuration and performance characteristics.

Least Queue Depth Scheduling Approach

A more adaptive load-balancing method is least-queue-depth scheduling. This algorithm evaluates the number of pending I/O requests queued on each path and directs new requests to the path with the smallest queue.

Unlike round robin, this approach dynamically responds to congestion. If one path becomes heavily loaded, new requests are automatically diverted to less congested routes.
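A least-queue-depth selector can be sketched by tracking outstanding requests per path: increment on dispatch, decrement on completion, and always dispatch to the minimum. The class and method names are hypothetical; ties go to the path listed first.

```python
from typing import Dict, List


class LeastQueueDepthSelector:
    """Send each new request to the path with the fewest outstanding I/Os."""

    def __init__(self, paths: List[str]) -> None:
        self.outstanding: Dict[str, int] = {p: 0 for p in paths}

    def dispatch(self) -> str:
        # min() returns the first minimal key, so ties favor earlier paths.
        path = min(self.outstanding, key=lambda p: self.outstanding[p])
        self.outstanding[path] += 1
        return path

    def complete(self, path: str) -> None:
        if self.outstanding[path] <= 0:
            raise ValueError(f"no outstanding I/O on {path}")
        self.outstanding[path] -= 1


if __name__ == "__main__":
    selector = LeastQueueDepthSelector(["p0", "p1"])
    print([selector.dispatch() for _ in range(3)])   # ['p0', 'p1', 'p0']
    selector.complete("p1")                          # p1's queue drains first
    print(selector.dispatch())                       # p1
```

The per-request bookkeeping in `dispatch()` and `complete()` is the monitoring overhead that simpler algorithms avoid.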

This method improves overall system responsiveness by preventing bottlenecks from forming. It is particularly effective in environments where workload intensity fluctuates unpredictably.

However, it requires continuous monitoring of queue states and introduces slightly higher computational overhead compared to simpler algorithms.

Weighted Path Allocation Model

Weighted path allocation introduces administrative control into multipathing decisions. In this model, each path is assigned a weight value based on its performance capabilities, reliability, or strategic importance.

Higher-weighted paths receive a larger share of I/O traffic, while lower-weighted paths act as supplementary or failover routes. This allows system administrators to align traffic distribution with infrastructure design intentions.

For example, a high-speed Fibre Channel link may be assigned a higher weight than a slower Ethernet-based storage connection. This ensures that faster infrastructure components are utilized more heavily.
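Weights can be turned into a request sequence in several ways. The sketch below uses smooth weighted round-robin, a common scheme chosen here for illustration rather than taken from any particular multipathing product; it spreads a path's share evenly instead of sending it in bursts. The path names and the 3:1 weights are hypothetical.

```python
from typing import Dict


class WeightedSelector:
    """Smooth weighted round-robin: each path's share of picks matches its weight."""

    def __init__(self, weights: Dict[str, int]) -> None:
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("weights must be positive")
        self.weights = dict(weights)
        self.total = sum(self.weights.values())
        self.current = {p: 0 for p in self.weights}

    def next_path(self) -> str:
        # Every path accumulates credit equal to its weight...
        for p, w in self.weights.items():
            self.current[p] += w
        # ...and the path with the most credit is chosen and pays back the total.
        chosen = max(self.current, key=lambda p: self.current[p])
        self.current[chosen] -= self.total
        return chosen


if __name__ == "__main__":
    selector = WeightedSelector({"fc0": 3, "eth0": 1})
    print([selector.next_path() for _ in range(4)])   # ['fc0', 'fc0', 'eth0', 'fc0']
```

Over every four requests, the higher-weighted path carries three and the lower-weighted path carries one.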

Weighted allocation is especially useful in hybrid environments where different generations of hardware coexist within the same storage architecture.

Failover-Only Path Utilization Strategy

In some configurations, multipathing is used strictly for redundancy rather than performance enhancement. In these cases, a failover-only strategy is implemented.

Under this model, a single primary path handles all I/O traffic under normal conditions. Secondary paths remain idle and are only activated when the primary path fails.
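A failover-only policy reduces to choosing the first healthy path from a priority list. In this hypothetical sketch the primary is reinstated as soon as it is marked healthy again. Whether to fail back automatically or wait for an administrator is a separate policy choice; deferring failback avoids bouncing traffic on a flapping link.

```python
from typing import Dict, List, Optional


class FailoverOnlySelector:
    """All I/O goes to the highest-priority healthy path; the rest stay idle."""

    def __init__(self, paths_by_priority: List[str]) -> None:
        self.order = list(paths_by_priority)            # index 0 is the primary
        self.healthy: Dict[str, bool] = {p: True for p in self.order}

    def mark(self, path: str, healthy: bool) -> None:
        self.healthy[path] = healthy

    def current_path(self) -> Optional[str]:
        # First healthy path in priority order; None means no path is available.
        for p in self.order:
            if self.healthy[p]:
                return p
        return None


if __name__ == "__main__":
    selector = FailoverOnlySelector(["primary", "secondary"])
    print(selector.current_path())    # primary
    selector.mark("primary", False)
    print(selector.current_path())    # secondary takes over
```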

This approach simplifies system behavior and reduces complexity in path management. It is often used in environments where predictability and stability are more important than performance optimization.

Although less efficient in terms of resource utilization, failover-only configurations provide strong deterministic behavior, which can be desirable in certain regulated or legacy systems.

Dynamic Path Selection and Real-Time Decision Making

Advanced multipathing implementations incorporate dynamic path selection mechanisms that continuously evaluate system conditions. These mechanisms use metrics such as latency, throughput, error rates, and congestion levels to determine optimal routing paths.

Unlike static algorithms, dynamic selection adapts in real time. If a path begins to degrade in performance, it is automatically deprioritized or temporarily removed from active rotation.
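Dynamic selection can be approximated by filtering out paths whose recent error rate is too high and ranking the rest by a score. Both the 1% error threshold and the scoring formula (latency inflated by utilization, loosely modeled on simple queueing behavior) are assumptions for illustration, not values used by any specific product.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PathMetrics:
    name: str
    latency_ms: float    # recent average service time
    error_rate: float    # fraction of recent I/Os that failed, 0.0 to 1.0
    utilization: float   # fraction of path capacity in use, 0.0 to 1.0


def rank_paths(metrics: List[PathMetrics], max_error_rate: float = 0.01) -> List[str]:
    """Return usable paths best-first; unstable paths are left out of rotation."""
    usable = [m for m in metrics if m.error_rate <= max_error_rate]

    def score(m: PathMetrics) -> float:
        # Hypothetical score: a busy path's latency is expected to grow as it saturates.
        return m.latency_ms / max(1e-6, 1.0 - m.utilization)

    return [m.name for m in sorted(usable, key=score)]


if __name__ == "__main__":
    print(rank_paths([
        PathMetrics("p0", latency_ms=2.0, error_rate=0.0, utilization=0.5),
        PathMetrics("p1", latency_ms=1.0, error_rate=0.0, utilization=0.9),
        PathMetrics("p2", latency_ms=0.5, error_rate=0.05, utilization=0.1),
    ]))   # ['p0', 'p1']: p2 is removed for errors, p1 is fast but nearly saturated
```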

This adaptive behavior improves overall system efficiency by ensuring that only healthy and performant paths are actively used. It also helps prevent cascading performance degradation caused by overloaded infrastructure components.

Impact of Hardware Heterogeneity on Load Balancing

In many enterprise environments, storage infrastructure evolves incrementally through upgrades and expansions rather than being replaced all at once. As a result, systems often include a mix of hardware generations, interface types, and performance capabilities.

This heterogeneity introduces complexity into multipathing decisions. Different paths may have varying bandwidth limits, processing capabilities, or latency profiles.

Load balancing algorithms must account for these differences to avoid inefficient traffic distribution. Without proper weighting or dynamic adjustment, faster paths may be underused while slower paths become saturated.

Effective multipathing configurations require careful alignment between hardware capabilities and software routing policies.

Queue Depth Management and I/O Contention Control

Queue depth plays a critical role in multipathing performance. Each path maintains a queue of pending I/O operations waiting to be processed. If this queue becomes too deep, latency increases, and application performance may degrade.

Multipathing systems monitor queue depth as a key performance indicator. When queue thresholds are exceeded, new I/O requests are redirected to less congested paths.
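Threshold-based redirection differs slightly from pure least-queue-depth scheduling: traffic stays on its preferred path until that path's queue reaches a limit, and only then spills over to others. The function name and the threshold value are hypothetical.

```python
from typing import Dict, List


def choose_path(preferred: List[str], queue_depth: Dict[str, int], threshold: int) -> str:
    """Use the first preferred path below the threshold, else the least congested path."""
    for path in preferred:
        if queue_depth[path] < threshold:
            return path
    # Every path is at or over the threshold: pick the shortest queue.
    return min(preferred, key=lambda p: queue_depth[p])


if __name__ == "__main__":
    depths = {"a": 3, "b": 0}
    print(choose_path(["a", "b"], depths, threshold=8))   # a: still under the limit
    depths["a"] = 8
    print(choose_path(["a", "b"], depths, threshold=8))   # b: a has hit the limit
```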

This mechanism helps prevent localized congestion from affecting overall system performance. It also ensures more balanced utilization of available infrastructure resources.

Queue management becomes particularly important in high-throughput environments such as database clusters and virtualization platforms, where simultaneous storage requests are common.

Latency Sensitivity in Path Selection Decisions

Latency is another critical factor influencing multipathing behavior. Even if a path has high bandwidth, elevated latency can negatively impact application performance.

Multipathing systems often prioritize low-latency paths for time-sensitive operations. This ensures that critical workloads experience minimal delay in storage access.

Latency monitoring is typically performed continuously, allowing the system to detect performance degradation early. When latency increases beyond acceptable thresholds, traffic is redistributed to healthier paths.
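Continuous latency monitoring is often smoothed so that isolated slow I/Os have limited influence. The sketch below keeps an exponentially weighted moving average per path and flags the path once the average crosses a threshold. The smoothing factor `alpha` and the 10 ms threshold are illustrative assumptions. The example deliberately uses a large `alpha` of 0.5, so one slow sample is enough to flag the path; a smaller `alpha` would require a sustained increase.

```python
from typing import Dict


class LatencyMonitor:
    """Track a per-path moving average of latency and flag slow paths."""

    def __init__(self, alpha: float = 0.2, threshold_ms: float = 10.0) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.threshold_ms = threshold_ms
        self.avg_ms: Dict[str, float] = {}

    def record(self, path: str, latency_ms: float) -> None:
        prev = self.avg_ms.get(path)
        if prev is None:
            self.avg_ms[path] = latency_ms          # first sample seeds the average
        else:
            self.avg_ms[path] = self.alpha * latency_ms + (1 - self.alpha) * prev

    def degraded(self, path: str) -> bool:
        return self.avg_ms.get(path, 0.0) > self.threshold_ms


if __name__ == "__main__":
    monitor = LatencyMonitor(alpha=0.5, threshold_ms=10.0)
    for sample in (4.0, 20.0, 4.0):
        monitor.record("p0", sample)
        print(monitor.avg_ms["p0"], monitor.degraded("p0"))
    # 4.0 False / 12.0 True / 8.0 False
```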

This adaptive behavior helps maintain consistent application performance even under fluctuating network conditions.

Bandwidth Aggregation Through Parallel Path Usage

One of the most significant advantages of multipathing is its ability to aggregate bandwidth across multiple physical connections.

When multiple paths are active simultaneously, their combined throughput can exceed the capacity of any single route. This is particularly beneficial in high-performance computing environments where large volumes of data must be transferred quickly.

Bandwidth aggregation is not simply a matter of summing individual link speeds. It also depends on how efficiently traffic is distributed and how well the underlying infrastructure handles parallel processing.
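A small calculation shows why aggregation is not just a sum of link speeds. Suppose traffic is split across links in fixed proportions and offered load rises until the first link saturates; the total the links can then carry is the smallest ratio of capacity to traffic share. The link capacities below are hypothetical numbers in arbitrary units.

```python
from typing import Dict


def aggregate_throughput(capacity: Dict[str, float], share: Dict[str, float]) -> float:
    """Maximum total throughput for a fixed traffic split.

    Link i carries share[i] * T, so T can grow only until some link hits its
    capacity: T_max = min over links of capacity[i] / share[i].
    """
    if abs(sum(share.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return min(capacity[p] / s for p, s in share.items() if s > 0)


if __name__ == "__main__":
    links = {"a": 12.0, "b": 4.0}                                # combined capacity: 16
    print(aggregate_throughput(links, {"a": 0.5, "b": 0.5}))     # 8.0: equal split, b saturates first
    print(aggregate_throughput(links, {"a": 0.75, "b": 0.25}))   # 16.0: split matches capacity
```

This model ignores host and storage controller limits, which can cap the result further.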

Effective multipathing ensures that available bandwidth is fully utilized without introducing congestion or imbalance.

Influence of Storage Controller Architecture

Storage controllers play a significant role in multipathing performance. These controllers manage how data is received, processed, and written to storage media.

In multipathing configurations, controllers may expose multiple front-end ports, each representing a separate access path. The efficiency of these controllers directly impacts overall system performance.

If a controller becomes a bottleneck, multipathing cannot fully compensate, even if multiple external paths are available. This highlights the importance of end-to-end performance alignment between hosts, networks, and storage systems.

Path Health Monitoring and Continuous Evaluation

Continuous monitoring is essential for maintaining reliable multipathing operations. Systems regularly evaluate each path for errors, timeouts, and performance anomalies.

If a path exhibits instability, it may be temporarily removed from active use. Once stability is restored, it can be reintegrated into the routing pool.

This ongoing evaluation ensures that multipathing decisions are always based on current infrastructure conditions rather than static assumptions.

Monitoring also plays a key role in predictive maintenance by identifying early signs of hardware degradation or network issues.

Balancing Reliability and Performance Objectives

Multipathing must strike a balance between two primary objectives: reliability and performance. While redundancy ensures continuous availability, performance optimization ensures efficient resource utilization.

Different environments prioritize these objectives differently. Mission-critical systems may favor failover stability over throughput optimization, while high-performance environments may prioritize load distribution and bandwidth utilization.

Multipathing configurations are therefore highly flexible, allowing administrators to tune behavior according to operational requirements.

Interaction Between Multipathing and Upper-Layer Applications

Although multipathing operates at a lower system layer, its effects are visible to upper-layer applications. Databases, virtualization platforms, and file systems all benefit from improved storage responsiveness and resilience.

However, applications are generally unaware of individual path behavior. They interact with a unified storage interface, while multipathing handles complexity transparently.

This abstraction simplifies application design while still delivering advanced infrastructure capabilities.

Understanding Failover Behavior in Multipathing Systems

Failover is the core resilience mechanism in multipathing architectures. It ensures that when an active storage path becomes unavailable, I/O operations are seamlessly redirected to an alternate path without disrupting applications or causing data access interruptions.

Unlike simple redundancy models, multipathing failover is designed to be automatic, fast, and transparent. The operating system continuously monitors all active and standby routes, detecting path health through heartbeat signals, I/O response times, and error reporting mechanisms. When a failure is detected, the multipathing driver immediately reroutes traffic to a healthy path.

The transition is engineered to avoid data loss and maintain session continuity. In most cases, applications remain unaware that a failure has occurred at the infrastructure layer.

Types of Failover States in Multipathing Architectures

Multipathing systems generally classify paths into several operational states based on their availability and performance.

Active paths are currently carrying I/O traffic and are fully operational. Standby paths remain idle but are ready to take over if required. Failed paths are marked as unavailable due to detected errors or loss of connectivity. In some systems, degraded paths may still function but with reduced priority due to performance issues or intermittent instability.

These states are continuously updated based on real-time monitoring data. This dynamic classification allows multipathing software to make informed routing decisions under changing infrastructure conditions.

Failover Detection Mechanisms and Health Monitoring

Failover events are triggered by a variety of detection mechanisms. One of the most common indicators is I/O timeout, where storage requests fail to complete within an expected time window.

Other indicators include link-level disconnections, switch port failures, controller unresponsiveness, or repeated transmission errors. Multipathing systems may also use periodic health checks to validate path availability even when no active traffic is present.

These checks ensure early detection of issues before they escalate into full outages. The combination of passive monitoring and active probing creates a robust detection system capable of identifying both sudden failures and gradual degradation.
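Combining passive observation with active probing might look like the hypothetical detector below. Any I/O or probe that errors out or exceeds a timeout counts as a failure, and a run of consecutive failures marks the path failed. A transport-level link-down event marks it failed at once. The 30 second timeout and the limit of three failures are placeholder values, not defaults from any real driver.

```python
from typing import Dict, Set


class FailureDetector:
    """Mark paths failed from timeouts, errors, failed probes, or link-down events."""

    def __init__(self, timeout_s: float = 30.0, max_consecutive_failures: int = 3) -> None:
        self.timeout_s = timeout_s
        self.max_failures = max_consecutive_failures
        self.consecutive: Dict[str, int] = {}
        self.failed: Set[str] = set()

    def observe(self, path: str, completed: bool, elapsed_s: float = 0.0) -> bool:
        """Record one I/O or health-check result; return True if the path is failed."""
        if completed and elapsed_s <= self.timeout_s:
            self.consecutive[path] = 0      # success resets the count
        else:
            self.consecutive[path] = self.consecutive.get(path, 0) + 1
            if self.consecutive[path] >= self.max_failures:
                self.failed.add(path)
        # Leaving the failed set is the job of a separate recovery step.
        return path in self.failed

    def link_down(self, path: str) -> None:
        """A loss of link reported by the transport fails the path immediately."""
        self.failed.add(path)


if __name__ == "__main__":
    detector = FailureDetector(timeout_s=5.0, max_consecutive_failures=2)
    print(detector.observe("p0", completed=True, elapsed_s=1.0))   # False: normal I/O
    print(detector.observe("p0", completed=True, elapsed_s=6.0))   # False: first timeout
    print(detector.observe("p0", completed=False))                 # True: second failure in a row
```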

Path Recovery and Reintegration Process

In many configurations, a failed path that comes back online does not resume active status immediately. Instead, it undergoes a recovery and validation process to ensure stability.

The system verifies that all components along the path are fully operational, including network interfaces, switches, and storage controllers. After validation, the path is gradually reintegrated into the active pool based on the configured multipathing policy.

This controlled reintegration prevents unstable paths from immediately impacting production traffic. It also ensures that load distribution remains balanced during recovery phases.
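The controlled reintegration described above is a form of hysteresis: a path must pass several validation checks in a row before it rejoins the active pool, and any failure restarts the count. In this hypothetical sketch, the number of required checks is an arbitrary parameter.

```python
from typing import Dict


class Reintegrator:
    """Admit a recovered path only after N consecutive successful checks."""

    def __init__(self, required_successes: int = 5) -> None:
        if required_successes < 1:
            raise ValueError("required_successes must be >= 1")
        self.required = required_successes
        self.streak: Dict[str, int] = {}

    def check(self, path: str, passed: bool) -> bool:
        """Record one validation result; return True once the path may rejoin."""
        if passed:
            self.streak[path] = self.streak.get(path, 0) + 1
        else:
            self.streak[path] = 0          # instability restarts the probation period
        return self.streak[path] >= self.required


if __name__ == "__main__":
    gate = Reintegrator(required_successes=3)
    results = [True, True, False, True, True, True]
    print([gate.check("p0", r) for r in results])
    # [False, False, False, False, False, True]
```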

Common Failover Scenarios in Real Infrastructure

Failover events can occur due to a variety of infrastructure issues. Physical cable disconnections are among the most straightforward causes, often resulting from hardware maintenance or accidental damage.

Switch failures represent another common scenario, particularly in environments where multiple hosts rely on shared network fabrics. In such cases, all paths passing through the affected switch become unavailable simultaneously.

Storage controller failures can also trigger failover events, especially in dual-controller storage arrays where each controller handles a subset of available paths. When one controller fails, multipathing systems redirect traffic to the remaining functional controller.

Impact of Failover on Application Performance

Although failover is designed to be seamless, it can still introduce temporary performance changes. When traffic is redirected to fewer remaining paths, increased load may result in higher latency or reduced throughput.

The severity of impact depends on how many alternative paths are available and how well the system was balanced before the failure. Well-designed multipathing configurations minimize performance degradation by ensuring sufficient redundancy and load distribution.
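The load shift after a failure is easy to estimate if traffic is spread evenly across identical paths: each survivor's utilization is the total load divided by the remaining capacity. The numbers below are hypothetical, in arbitrary units.

```python
def per_path_utilization(total_load: float, path_capacity: float, paths_up: int) -> float:
    """Fraction of each remaining path's capacity in use, assuming an even spread."""
    if paths_up <= 0:
        raise ValueError("no paths remain")
    return total_load / (paths_up * path_capacity)


if __name__ == "__main__":
    # Four identical paths of capacity 10 carrying a total load of 24.
    for up in (4, 3, 2):
        print(up, per_path_utilization(24.0, 10.0, up))
    # 4 0.6 / 3 0.8 / 2 1.2 (above 1.0 means the survivors are overloaded)
```

Here losing one path pushes the survivors from 60% to 80% utilization, and losing a second path overloads them. Sizing paths so that the load still fits after the expected failures keeps failover from becoming a performance incident.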

In high-availability environments, failover events are often designed to be brief and non-disruptive, with recovery mechanisms restoring full performance as quickly as possible.

Troubleshooting Multipathing Connectivity Issues

Troubleshooting multipathing requires a layered approach, starting from physical connectivity and moving up to logical configuration.

One of the most common issues is misconfigured path mapping, where the operating system incorrectly identifies or associates storage routes. This can result in paths being ignored or incorrectly grouped.

Physical layer problems such as faulty cables, malfunctioning network interfaces, or degraded switches can also disrupt multipathing behavior. These issues often manifest as intermittent connectivity or inconsistent performance across paths.

At the storage layer, misconfigured controllers or incorrect zoning can prevent proper path discovery, leading to incomplete or missing routes in the multipathing configuration.
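A zoning or mapping problem often shows up as a volume that is reachable through fewer fabrics than the design intends. The hypothetical check below takes one `(device_id, fabric)` pair per discovered path and reports, for each volume, the fabrics it should be reachable through but is not. Volumes with no discovered paths at all will not appear in the input, so they need a separate comparison against the array's list of presented volumes.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple


def missing_fabrics(
    paths: Iterable[Tuple[str, str]], expected_fabrics: Set[str]
) -> Dict[str, Set[str]]:
    """Map each volume to the expected fabrics that none of its paths use."""
    seen: Dict[str, Set[str]] = defaultdict(set)
    for device_id, fabric in paths:
        seen[device_id].add(fabric)
    gaps = {dev: expected_fabrics - fabrics for dev, fabrics in seen.items()}
    return {dev: missing for dev, missing in gaps.items() if missing}


if __name__ == "__main__":
    discovered = [("vol1", "fabric-A"), ("vol1", "fabric-B"), ("vol2", "fabric-A")]
    print(missing_fabrics(discovered, {"fabric-A", "fabric-B"}))
    # {'vol2': {'fabric-B'}}
```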

Diagnosing Performance Bottlenecks in Multipath Environments

Performance bottlenecks in multipathing systems can occur even when redundancy is correctly configured. These bottlenecks often arise when traffic distribution is uneven or when one component in the path becomes saturated.

Queue depth imbalances are a common indicator of performance issues. If one path consistently shows higher queue depth than others, it may be handling disproportionate traffic.

Latency inconsistencies across paths can also indicate underlying hardware differences or congestion. Identifying these discrepancies is essential for optimizing load-balancing policies.
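Queue-depth imbalance can be flagged by comparing each path's average queue depth with the mean of the other paths. The 2x ratio below is an arbitrary illustrative threshold, and the input would come from whatever per-path statistics the platform exposes.

```python
from statistics import mean
from typing import Dict, List


def imbalanced_paths(avg_queue_depth: Dict[str, float], ratio: float = 2.0) -> List[str]:
    """Return paths whose queue depth exceeds `ratio` times the mean of the others."""
    flagged = []
    for path, depth in avg_queue_depth.items():
        others = [d for p, d in avg_queue_depth.items() if p != path]
        if not others:
            continue                          # a single path has nothing to compare with
        baseline = max(mean(others), 1e-9)    # avoid comparing against exactly zero
        if depth > ratio * baseline:
            flagged.append(path)
    return flagged


if __name__ == "__main__":
    print(imbalanced_paths({"p0": 12.0, "p1": 3.0, "p2": 4.0}))   # ['p0']
```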

Configuration Errors and Misalignment Problems

Incorrect configuration is one of the most frequent causes of multipathing issues. This includes improper policy selection, incorrect path grouping, or mismatched hardware settings.

For example, assigning identical weights to paths with significantly different performance capabilities can lead to inefficient traffic distribution. Similarly, failing to isolate redundant paths physically can create hidden single points of failure.

Misalignment between storage array settings and host multipathing configurations can also result in inconsistent behavior, particularly during failover events.

Importance of End-to-End Path Validation

Effective multipathing requires validation across the entire data path, not just at the host level. This includes verifying connectivity from the server to the switch, the switch to the storage, and the storage controller back to the host.

End-to-end validation ensures that all segments of the infrastructure are functioning correctly and that redundancy is truly independent. Without this validation, hidden dependencies may undermine failover reliability.

Regular testing of each path helps ensure that redundancy assumptions remain valid over time.

Role of Storage Area Networks in Multipathing Design

Storage Area Networks are one of the most common environments where multipathing is deployed. In these architectures, storage devices are centralized and accessed by multiple hosts over a dedicated network fabric.

Multipathing in SAN environments is critical because it ensures that no single network segment becomes a point of failure. Multiple switches, redundant fabrics, and dual-controller storage arrays are typically used to support this design.

The separation of storage traffic from general network traffic further enhances performance and reliability.

Multipathing in Virtualized Infrastructure Environments

Virtualization platforms rely heavily on shared storage to enable features such as live migration, high availability clustering, and centralized management.

In these environments, multipathing ensures that virtual machines maintain uninterrupted access to storage even during hardware failures. Since multiple virtual machines may depend on the same storage volume, path failures can have a widespread impact if not properly mitigated.

Multipathing provides the underlying stability required for virtualized workloads to operate reliably across distributed compute clusters.

Integration with Clustered Computing Systems

In clustered computing environments, multiple servers work together to provide unified application services. These clusters depend on shared storage systems to maintain data consistency and coordination.

Multipathing ensures that all cluster nodes maintain reliable access to shared storage resources. If a path fails for one node, alternative routes prevent disruption to cluster operations.

This capability helps cluster nodes keep access to the shared disks they use for coordination, which supports quorum and reduces the risk of split-brain scenarios caused by storage path failures.

High-Performance Computing and Data-Intensive Workloads

High-performance computing environments generate extremely large volumes of data that must be processed and stored efficiently. Multipathing plays a key role in ensuring that storage bandwidth does not become a limiting factor.

By distributing I/O across multiple high-speed paths, multipathing helps sustain the throughput required for scientific computing, analytics, and large-scale simulations.

In these environments, even small improvements in storage efficiency can have a significant impact on overall computation time.

Enterprise Data Center Deployment Strategies

In enterprise data centers, multipathing is deployed as part of a broader redundancy and resilience strategy. It is typically combined with redundant power systems, network segmentation, and high-availability clustering.

Deployment strategies focus on eliminating single points of failure at every layer of the infrastructure stack. Multipathing specifically addresses the storage connectivity layer, ensuring that data access remains uninterrupted under failure conditions.

Careful planning is required to align multipathing configurations with the overall data center architecture.

Monitoring and Long-Term Maintenance of Multipath Systems

Ongoing monitoring is essential to ensure the long-term reliability of multipathing systems. This includes tracking path health, performance metrics, and failure history.

Monitoring tools typically provide visibility into path status, utilization levels, and error rates. This information helps administrators identify potential issues before they escalate into failures.

Long-term maintenance also involves periodic testing of failover behavior to ensure that redundancy mechanisms continue to function as expected.

Scalability Considerations in Expanding Storage Environments

As storage environments scale, multipathing configurations must also evolve. Adding new storage arrays, expanding network fabrics, or increasing host density can introduce additional complexity.

Scalability requires careful management of path distribution to avoid imbalance or congestion. It also requires ensuring that new components are fully integrated into existing multipathing frameworks.

Without proper planning, scaling can introduce inefficiencies that reduce the effectiveness of redundancy and performance optimization mechanisms.

Operational Resilience Through Multipathing Design

Ultimately, multipathing contributes to operational resilience by ensuring that storage connectivity remains stable under a wide range of failure scenarios. It enhances both availability and performance while reducing dependency on any single infrastructure component.

By combining redundancy, intelligent routing, and continuous monitoring, multipathing creates a storage connectivity layer capable of supporting modern enterprise demands without interruption or degradation.

Conclusion

Multipathing has evolved from a specialized storage feature into a foundational component of resilient IT infrastructure. At its core, it addresses a fundamental limitation in traditional computing environments: the reliance on single communication paths between compute systems and storage resources. As data demands continue to grow across enterprises, cloud ecosystems, and high-performance environments, the importance of eliminating single points of failure in storage connectivity becomes increasingly critical.

The primary value of multipathing lies in its ability to unify redundancy and performance within a single operational framework. Rather than treating failover and load balancing as separate concerns, multipathing integrates both into a continuous decision-making process. This allows systems to dynamically adapt to infrastructure conditions while maintaining uninterrupted access to data. In environments where even brief storage interruptions can cascade into application-level disruptions, this capability becomes essential.

From a resilience perspective, multipathing changes how infrastructure failure is handled. A lost connection is no longer an outage that someone must repair by hand. Instead, the system absorbs the failure through redundant design. When a path fails, outstanding and new I/O is redirected to a surviving path without manual intervention, typically after a short detection and retry window. This automatic recovery reduces operational risk and minimizes downtime, which matters most in mission-critical systems where service continuity is non-negotiable.
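The failover behavior described above can be sketched as a logical device that tries its paths in priority order and marks a path down when I/O on it fails. This is a simplified, synchronous illustration and does not reflect how any particular driver is implemented. `PathError`, the `send` callable, and the path names are hypothetical stand-ins for a real transport. Real implementations also handle timeouts, retry counts, and periodic health checks before they reinstate a path.

```python
from dataclasses import dataclass, field
from typing import Callable


class PathError(Exception):
    """Raised by the (hypothetical) transport when a path cannot complete I/O."""


@dataclass
class MultipathDevice:
    """One logical device backed by several paths, tried in priority order."""
    paths: list[str]
    send: Callable[[str, bytes], bytes]  # hypothetical per-path transport
    failed: set[str] = field(default_factory=set)

    def submit(self, request: bytes) -> bytes:
        for path in self.paths:
            if path in self.failed:
                continue  # skip paths already marked down
            try:
                return self.send(path, request)
            except PathError:
                self.failed.add(path)  # mark down, then retry on the next path
        raise PathError("no healthy path left")

    def reinstate(self, path: str) -> None:
        """Return a repaired path to service, e.g. after a health check passes."""
        self.failed.discard(path)


# Usage with a fake transport in which the link behind hba0 is down.
def fake_send(path: str, request: bytes) -> bytes:
    if path == "hba0":
        raise PathError(f"{path}: link down")
    return path.encode() + b":" + request


dev = MultipathDevice(paths=["hba0", "hba1"], send=fake_send)
reply = dev.submit(b"read")  # served by hba1; the caller never sees the failure
```

The caller receives a normal reply, and `hba0` is recorded as failed until something explicitly reinstates it. This mirrors the idea that applications see one logical device rather than individual paths.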

However, the strength of multipathing is not limited to failure recovery. Its performance optimization capabilities play an equally important role in modern deployments. When more than one path is active at a time, distributing I/O operations across those paths increases aggregate throughput and reduces congestion on individual links. In active/passive configurations, standby paths carry traffic only after a failover. Load distribution is particularly valuable in high-demand environments such as virtualization clusters, database systems, and large-scale analytics platforms, where storage performance directly affects application responsiveness.

The effectiveness of multipathing depends heavily on its path selection algorithm. Simple methods such as sequential (round-robin) distribution provide predictable behavior. Queue-based or weighted distribution adapts to real-time conditions by steering I/O toward paths with fewer outstanding requests or more capacity. These policies help keep infrastructure resources evenly utilized, even in heterogeneous environments where paths differ in speed, latency, or capacity.
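The three families of algorithms above can be compared with a few small selector classes. The mapping to code is an assumption for illustration:

- Sequential distribution is modeled as round-robin.
- Queue-based distribution is modeled as choosing the path with the fewest outstanding requests.
- Weighted distribution divides the expected queue by a hypothetical per-path weight that stands for relative speed. This is loosely similar in spirit to the `service-time` selector in Linux dm-multipath.

The class names and the `inflight` dictionary are hypothetical.

```python
import itertools


class RoundRobin:
    """Sequential distribution: cycle through paths regardless of load."""
    def __init__(self, paths):
        self._cycle = itertools.cycle(paths)

    def select(self, inflight):
        return next(self._cycle)


class LeastQueueDepth:
    """Queue-based distribution: pick the path with the fewest outstanding I/Os."""
    def __init__(self, paths):
        self.paths = list(paths)

    def select(self, inflight):
        return min(self.paths, key=lambda p: inflight[p])


class Weighted:
    """Weighted distribution: queue depth scaled by an assumed relative path speed."""
    def __init__(self, weights):
        self.weights = dict(weights)

    def select(self, inflight):
        # (queued + this request) / speed approximates how long the new I/O waits
        return min(self.weights, key=lambda p: (inflight[p] + 1) / self.weights[p])


# Usage: p0 is busier but assumed to be four times faster than p1.
paths = ["p0", "p1"]
inflight = {"p0": 3, "p1": 1}
rr = RoundRobin(paths)
picks = [rr.select(inflight) for _ in range(3)]
lqd_choice = LeastQueueDepth(paths).select(inflight)
weighted_choice = Weighted({"p0": 4, "p1": 1}).select(inflight)
```

Round-robin alternates `p0`, `p1`, `p0` no matter how loaded each path is. The queue-depth selector moves to `p1`. The weighted selector still prefers `p0` because its assumed speed outweighs its deeper queue. Which behavior is preferable depends on how accurately the weights reflect the real paths.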

Equally important is the role of architectural design in multipathing effectiveness. True redundancy requires more than just multiple connections; it requires independence between those connections. If redundant paths share common physical components such as switches or controllers, the system may still be vulnerable to correlated failures. As a result, careful infrastructure planning is necessary to ensure that redundancy is genuine rather than superficial. This includes separating network fabrics, diversifying hardware components, and eliminating shared points of dependency wherever possible.
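One way to check whether redundancy is genuine is to model each path as the set of components it traverses and then look for overlap. The sketch below assumes a hypothetical inventory in which components are named with strings such as `switch:fab-A`. Any component common to every path is a single point of failure. Any component shared by a pair of paths is a source of correlated failure.

```python
from itertools import combinations


def shared_components(paths: dict[str, set[str]]) -> dict[tuple[str, str], set[str]]:
    """For each pair of paths, list the components they have in common."""
    return {
        (a, b): paths[a] & paths[b]
        for a, b in combinations(sorted(paths), 2)
        if paths[a] & paths[b]
    }


def single_points_of_failure(paths: dict[str, set[str]]) -> set[str]:
    """Components on every path: losing any one of them severs all paths."""
    return set.intersection(*paths.values())  # assumes at least one path


# Superficial redundancy: two HBAs and two controllers, but one shared switch fabric.
paths = {
    "path0": {"host:hba0", "switch:fab-A", "array:ctrl-A"},
    "path1": {"host:hba1", "switch:fab-A", "array:ctrl-B"},
}
# Genuine redundancy: each path uses its own fabric.
independent = {
    "path0": {"host:hba0", "switch:fab-A", "array:ctrl-A"},
    "path1": {"host:hba1", "switch:fab-B", "array:ctrl-B"},
}
```

In the first layout, `switch:fab-A` shows up both as a shared component and as a single point of failure, even though the host sees two paths. In the second layout, separating the fabrics leaves nothing in common.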

Multipathing also plays a critical role in enabling scalability. As organizations expand their infrastructure, storage demand increases not only in capacity but also in throughput and concurrency. Multipathing allows new paths to be integrated into existing environments without disrupting ongoing operations. This flexibility supports incremental growth while maintaining consistent performance and reliability standards. In large-scale deployments, this scalability becomes a key enabler of long-term infrastructure evolution.

From an operational standpoint, monitoring and maintenance are essential to sustaining multipathing effectiveness. Continuous visibility into path health, latency, and utilization allows administrators to detect anomalies before they escalate into failures. Over time, this data also provides insight into infrastructure behavior patterns, enabling more informed decisions regarding optimization and capacity planning. Without consistent monitoring, even well-designed multipathing systems can degrade in efficiency due to unnoticed imbalances or latent hardware issues.
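As a minimal example of the anomaly detection that monitoring makes possible, the function below flags paths whose median latency sits well above the median across all paths. The sample values, the factor of 2, and the function name are assumptions for illustration. Production monitoring would rely on the latency and error counters exposed by the specific multipathing stack and storage array.

```python
from statistics import median


def slow_paths(latency_ms: dict[str, list[float]], factor: float = 2.0) -> list[str]:
    """Flag paths whose median latency exceeds `factor` times the cross-path median."""
    per_path = {p: median(s) for p, s in latency_ms.items() if s}  # skip empty series
    baseline = median(per_path.values())
    return sorted(p for p, m in per_path.items() if m > factor * baseline)


# Usage: p2 still works but is consistently slower, e.g. a degrading link.
samples = {
    "p0": [0.4, 0.5, 0.6],
    "p1": [0.5, 0.5, 0.5],
    "p2": [2.0, 2.5, 3.0],
}
flagged = slow_paths(samples)
```

Using medians instead of means keeps a single latency spike from flagging a healthy path. A path that is slow but not failed, like `p2`, is exactly the kind of latent issue that failover alone would never surface.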

Another important dimension is the interaction between multipathing and higher-level system layers. Multipathing operates in the host's storage stack, below the file system and above the individual transport paths, yet its effects propagate upward into applications, virtual machines, and clustered services. These systems benefit from improved stability and performance without needing to be aware of the underlying complexity. This abstraction is a key strength, because it lets application design stay focused on business logic rather than infrastructure resilience.

In virtualized environments, multipathing becomes even more significant. Shared storage is a foundational requirement for features such as live migration, high availability clustering, and distributed workload balancing. Any disruption in storage connectivity can affect multiple virtual machines simultaneously. Multipathing ensures that storage access remains stable even in the presence of hardware failures, enabling virtualization platforms to deliver consistent service availability across dynamic workloads.

Similarly, in clustered computing systems, multipathing helps every node keep reliable access to shared data. A node that loses storage access may be fenced or evicted from the cluster, and interrupted writes can leave shared data in an inconsistent state. Redundant paths make both outcomes less likely. In these environments, storage connectivity is not just a performance concern but a structural requirement for system correctness.

Despite its advantages, multipathing is not without complexity. Proper implementation requires careful alignment between storage systems, network infrastructure, and operating system configurations. When these elements are not coordinated, misconfigurations and hardware inconsistencies surface in several ways: uneven load distribution, paths that are never used, or failover that is slower than expected or does not happen at all.

Long-term success with multipathing depends on continuous validation and refinement. As infrastructure evolves, new components are introduced, workloads shift, and performance expectations change. Multipathing configurations must be revisited regularly to ensure they remain aligned with current conditions. This includes testing failover behavior, evaluating load balancing effectiveness, and ensuring that redundancy assumptions remain valid.

Taken together, multipathing represents a convergence of reliability engineering and performance optimization within storage architecture. It reflects a broader shift in IT design philosophy, where resilience is built into the foundation of systems rather than added as an afterthought. By combining multiple independent paths with intelligent traffic management, multipathing enables infrastructure that is both robust under failure conditions and efficient under peak demand.

As modern IT environments continue to scale in complexity, the principles embodied by multipathing will remain central to storage architecture design. Its ability to unify redundancy, performance, and adaptability ensures that it will continue to play a critical role in supporting the demands of enterprise computing, cloud ecosystems, and data-intensive workloads.