Voice traffic over IP networks introduces strict performance requirements that differ significantly from typical data applications. Unlike email or file transfers, voice communication is real-time and highly sensitive to delay, jitter, and packet loss. This makes bandwidth planning a core responsibility for network engineers, especially in environments where Cisco IP telephony is deployed across LAN, WAN, and remote access links. As organizations adopt hybrid work models and cloud-connected collaboration systems, voice streams increasingly traverse shared infrastructure alongside critical business data. Without proper bandwidth estimation, even well-designed networks can experience degraded call quality, echo effects, or dropped audio segments. Effective VoIP bandwidth planning ensures that sufficient capacity exists not only for average usage but also for peak concurrent call scenarios. This requires understanding how voice packets are generated, transmitted, and consumed by endpoints across the network fabric, as well as how underlying protocol behavior impacts total bandwidth utilization.

How Analog Voice Becomes Digital Data in VoIP Systems

Voice transmission over IP begins with the conversion of analog sound waves into digital representations. Human speech is naturally continuous, but IP networks require discrete data units for transport. This conversion process begins with sampling, where the analog waveform is measured at fixed time intervals. Each measurement captures the amplitude of the signal at a specific moment. These samples are then quantized into binary values, forming the digital representation of the original sound. The accuracy of this reconstruction depends heavily on the sampling rate, which determines how many measurements are taken per second. Higher sampling rates preserve more detail but generate more data, increasing bandwidth consumption. Lower sampling rates reduce data size but can introduce distortion. In Cisco IP environments, this tradeoff is managed through codec selection, which defines both sampling behavior and compression rules. The result is a stream of structured digital voice data ready for packetization and network transmission.
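As a rough illustration of the sampling trade-off described above, the raw data rate of a sampled signal is the sampling rate multiplied by the bits stored per sample. The sketch below assumes the narrowband telephony values of 8,000 samples per second and 8 bits per sample, which are the figures behind the 64 kbps rate of G.711; the function name is illustrative only.

```python
def raw_bitrate_bps(sample_rate_hz: int, bits_per_sample: int) -> int:
    """Bits per second produced by sampling and quantization alone."""
    return sample_rate_hz * bits_per_sample


# Narrowband telephony: 8,000 samples per second at 8 bits each.
print(raw_bitrate_bps(8_000, 8))    # 64000

# Doubling the sampling rate doubles the raw data rate.
print(raw_bitrate_bps(16_000, 8))   # 128000
```

Codecs that compress more aggressively start from the same kind of sampled stream and reduce the bits actually sent.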

Codec Behavior and Its Direct Influence on Bandwidth Consumption

Codecs determine how efficiently voice data is compressed before transmission, and they are one of the most important factors in bandwidth calculation. A codec defines the algorithm used to encode and decode audio, balancing quality, compression efficiency, and computational complexity. G.711 is commonly used in enterprise environments due to its high audio fidelity and minimal compression, resulting in higher bandwidth usage per call. G.729 applies more aggressive compression, significantly reducing bandwidth requirements while maintaining acceptable speech quality for many business scenarios. G.722 improves perceived audio clarity by using wideband sampling, capturing a broader frequency range for more natural sound reproduction. iLBC is designed for resilience in unstable network conditions, maintaining intelligibility even when packet loss occurs. Each codec produces a different payload size and transmission behavior, meaning that bandwidth planning cannot be standardized without first identifying codec usage across the network environment. Codec selection becomes a strategic decision balancing cost, quality, and infrastructure capacity.
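To make the difference in payload size concrete, the following sketch derives the voice bytes carried per packet from each codec's nominal bitrate. The bitrates are the commonly published figures for these codecs; the dictionary and function names are illustrative, and the 20 ms interval is an assumption chosen for the example.

```python
# Nominal codec bitrates in bits per second (commonly published figures).
CODEC_BITRATE_BPS = {
    "G.711": 64_000,
    "G.729": 8_000,
    "G.722": 64_000,
    "iLBC": 15_200,  # 20 ms frame mode; the 30 ms mode runs at about 13.33 kbps
}


def payload_bytes(codec: str, interval_ms: int) -> float:
    """Bytes of encoded voice carried in one packet of the given duration."""
    return CODEC_BITRATE_BPS[codec] * interval_ms / 1000 / 8


for codec in CODEC_BITRATE_BPS:
    print(codec, payload_bytes(codec, 20))
# G.711 and G.722 -> 160.0, G.729 -> 20.0, iLBC -> 38.0
```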

Packetization and the Formation of Voice Frames for Transmission

Once voice is encoded by a codec, it is grouped into packets for transmission across the network. This process is known as packetization and is governed by the packetization interval, which defines how much voice is collected before it is encapsulated and sent. A shorter interval means more frequent packet transmission, which increases overhead but reduces delay. A longer interval reduces the number of packets sent per second, lowering overhead but potentially increasing latency. In Cisco VoIP systems, typical intervals include values such as 10 milliseconds or 20 milliseconds, depending on configuration. Each packet contains a portion of the voice stream along with metadata required for reconstruction at the receiving end. The packetization interval directly influences how many packets are transmitted each second, which becomes a key variable in bandwidth estimation. Even when the codec bitrate remains constant, changing the packetization interval alters overall network consumption due to variations in packet frequency.

Understanding Protocol Encapsulation and Per-Packet Overhead

Voice packets do not exist as standalone data units; they are encapsulated within multiple protocol layers that each add overhead. At the application level, voice data is carried using real-time transport mechanisms designed for sequencing and timing control. Beneath this layer, transport protocols ensure delivery across IP networks, while network-layer protocols manage addressing and routing. At the data link layer, additional framing is required for transmission over physical media such as Ethernet. Each of these layers contributes header information that increases the total packet size beyond the actual voice payload. While this overhead does not carry voice information, it is essential for successful packet delivery. The cumulative effect of these headers can significantly increase bandwidth consumption, especially in environments with small payload sizes or high packet transmission rates. Understanding encapsulation overhead is essential for accurate capacity planning in Cisco IP telephony deployments.
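The per-packet cost of this layering can be tallied directly. In the sketch below, the RTP, UDP, and IPv4 values are the standard minimum header lengths. The Ethernet figure is an assumption: it counts the 14-byte header and 4-byte frame check sequence but leaves out the preamble, inter-frame gap, and any 802.1Q tag, which some planning methods also include.

```python
# Per-packet header sizes in bytes.
# RTP, UDP and IPv4 are minimum header lengths without options or extensions.
# The Ethernet value (14-byte header + 4-byte FCS) is an assumption for this
# sketch; it excludes preamble, inter-frame gap and VLAN tagging.
HEADER_BYTES = {"RTP": 12, "UDP": 8, "IPv4": 20, "Ethernet": 18}


def overhead_bytes(layers: dict = HEADER_BYTES) -> int:
    """Total header bytes added to every voice packet."""
    return sum(layers.values())


ip_udp_rtp = HEADER_BYTES["RTP"] + HEADER_BYTES["UDP"] + HEADER_BYTES["IPv4"]
print(ip_udp_rtp)         # 40
print(overhead_bytes())   # 58
```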

How Bandwidth Usage Is Structurally Composed in VoIP Calls

Total VoIP bandwidth usage is not determined solely by codec bitrate but by a combination of payload size, packet frequency, and protocol overhead. Each voice packet contains a portion of encoded audio along with multiple layers of encapsulation data. The payload represents the compressed voice sample, while headers at various network layers add fixed overhead per packet. As packet frequency increases, overhead accumulates proportionally, making the packetization interval a critical factor in bandwidth efficiency. The overall bandwidth consumed by a single call can therefore be understood as the product of packet size and packets transmitted per second. However, packet size itself is not static, as it includes both voice payload and protocol headers. This layered structure means that small changes in configuration can have significant impacts on network utilization, especially in large-scale deployments with hundreds or thousands of simultaneous calls.

The Role of Quality Factors in Voice Transmission Performance

Voice quality in IP networks is influenced by multiple performance metrics, including latency, jitter, and packet loss. Latency refers to the time it takes for a voice packet to travel from source to destination, while jitter represents variability in packet arrival times. Packet loss occurs when packets are dropped due to congestion or network instability. These factors are closely related to bandwidth availability, as insufficient bandwidth can lead to congestion, which in turn increases latency and packet loss. Codecs are often evaluated using perceived quality metrics that estimate how natural and intelligible the audio sounds under specific conditions. However, these quality metrics are indirectly influenced by bandwidth allocation, since insufficient capacity can degrade performance regardless of codec capability. Proper bandwidth planning ensures that voice traffic is prioritized and transmitted with minimal disruption.
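Jitter can be quantified from packet timestamps. The sketch below follows the running estimator described in RFC 3550, where each new difference in transit time nudges the estimate by one sixteenth. It works in milliseconds for readability, whereas RTP itself uses timestamp units; the function name and the sample timings are made up for illustration.

```python
def interarrival_jitter(send_times_ms, recv_times_ms):
    """Running jitter estimate in the style of RFC 3550: J += (|D| - J) / 16."""
    jitter = 0.0
    for i in range(1, len(send_times_ms)):
        transit_prev = recv_times_ms[i - 1] - send_times_ms[i - 1]
        transit_curr = recv_times_ms[i] - send_times_ms[i]
        difference = abs(transit_curr - transit_prev)
        jitter += (difference - jitter) / 16
    return jitter


sent = [0, 20, 40, 60]          # one packet every 20 ms
steady = [50, 70, 90, 110]      # constant 50 ms transit time
bursty = [50, 78, 91, 125]      # transit time varies from packet to packet

print(interarrival_jitter(sent, steady))   # 0.0
print(interarrival_jitter(sent, bursty) > 0)   # True
```

A constant delay, however large, produces no jitter; only variation in delay does.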

Methodological Approach to Estimating Cisco IP Call Bandwidth

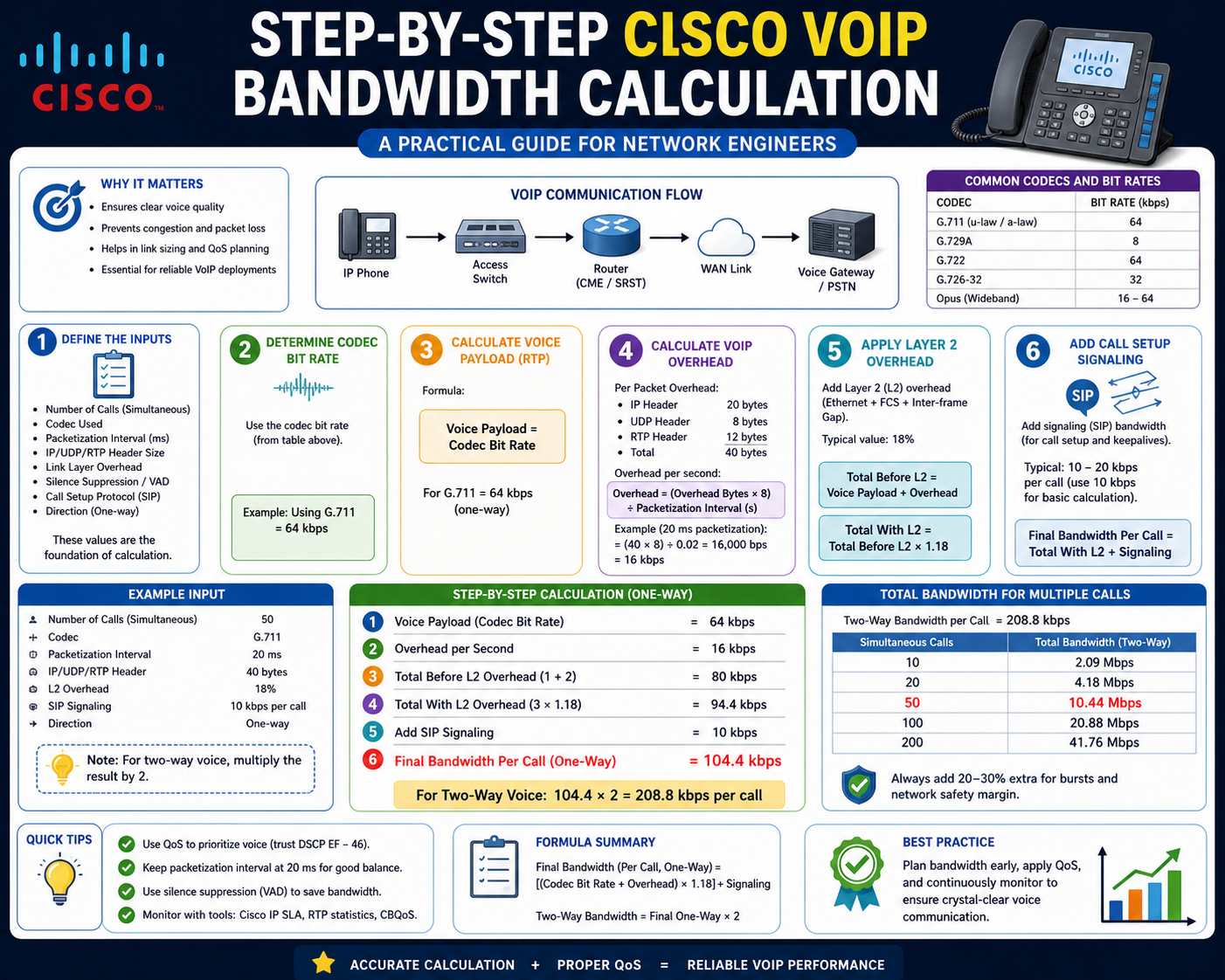

Estimating bandwidth for Cisco IP calls involves analyzing multiple variables rather than relying on a single measurement. The process begins by identifying the codec in use, as this determines base compression and payload size. Next, the packetization interval is considered to determine how frequently packets are transmitted. These values are combined with protocol overhead to calculate the total packet size. Once the packet size is determined, it is multiplied by the number of packets transmitted per second to estimate total bandwidth usage per call. This approach reflects real-world network behavior more accurately than relying on codec bitrate alone. Engineers must also account for scalability, as the number of concurrent calls directly multiplies total bandwidth demand. This structured estimation method ensures that network infrastructure is capable of handling both average and peak traffic conditions without degradation in voice quality.
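The steps above can be sketched as a short worked example for one direction of a G.711 call with a 20 ms packetization interval. The Ethernet overhead of 18 bytes is an assumption (header plus frame check sequence, no VLAN tag), and the variable names are illustrative.

```python
# Step 1: codec and interval.
codec_bitrate_bps = 64_000   # G.711
interval_ms = 20

# Step 2: voice payload carried in each packet.
payload_bytes = codec_bitrate_bps * interval_ms // 1000 // 8    # 160

# Step 3: add protocol overhead (RTP + UDP + IPv4 + assumed Ethernet framing).
overhead_bytes = 12 + 8 + 20 + 18                               # 58
packet_bytes = payload_bytes + overhead_bytes                   # 218

# Step 4: packet rate from the interval.
packets_per_second = 1000 // interval_ms                        # 50

# Step 5: bandwidth for one direction of one call.
per_call_bps = packet_bytes * 8 * packets_per_second
print(per_call_bps)   # 87200
```

The result, 87.2 kbps, is well above the 64 kbps codec bitrate, which is why bitrate alone understates the load on the link.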

Translating VoIP Concepts Into Measurable Bandwidth Models

Once the foundational behavior of codecs, sampling, and packetization is understood, the next step is converting those concepts into measurable bandwidth values. In Cisco IP telephony environments, bandwidth calculation is not abstract; it is a direct engineering requirement for designing WAN links, sizing VPN tunnels, and ensuring call quality under load. The key challenge is that VoIP traffic is composed of multiple layers of data encapsulation, each contributing to total bandwidth usage. A codec alone does not define actual network consumption. Instead, real bandwidth usage emerges from the interaction of codec payload size, packetization interval, transport protocols, and Layer 2 framing. To accurately model this, engineers must move from theoretical bitrate thinking into packet-based analysis, where each voice packet is treated as a complete unit of transmission cost.

Core Variables Used in Cisco VoIP Bandwidth Estimation

Accurate bandwidth estimation relies on several interconnected variables that define how voice data is structured and transmitted. The most important include codec type, payload size per sample, packetization interval, packet frequency per second, and protocol overhead. Codec selection determines how efficiently voice is compressed before transmission, while payload size defines how much voice data is included in each packet. The packetization interval determines how often packets are created, directly affecting the packet rate. Protocol overhead includes IP, UDP, RTP, and Ethernet headers, all of which add fixed or semi-fixed costs to each packet. These variables must be analyzed together because changing one parameter can significantly alter total bandwidth usage. For example, reducing the packetization interval increases packet frequency, which increases overhead even if the payload size remains constant. This interconnected structure makes VoIP bandwidth estimation a multi-variable engineering problem rather than a simple formula substitution.

Understanding Packet Structure in Cisco Voice Traffic

Every VoIP packet transmitted across a Cisco IP network is composed of multiple structured layers. At the core is the voice payload, which contains compressed audio generated by the codec. Surrounding this payload are transport and network headers that enable routing, sequencing, and delivery. The IP header provides addressing information required for routing packets across networks. The UDP header ensures lightweight transport without the overhead of connection-oriented protocols. The RTP header adds timing and sequence information critical for reconstructing voice streams in the correct order. At the data link layer, Ethernet framing encapsulates the entire packet for physical transmission across network interfaces. Each of these layers adds fixed byte overhead per packet, meaning that even small voice payloads can result in relatively large total packet sizes. Understanding this layered structure is essential for accurate bandwidth modeling because overhead is incurred per packet, not per byte of voice data.

The Importance of Packetization Interval in Bandwidth Efficiency

The packetization interval is one of the most influential parameters in VoIP bandwidth calculation. It defines how much voice data is collected before being sent as a packet. A shorter interval, such as 10 milliseconds, results in more frequent packet transmission, which increases protocol overhead but reduces latency. A longer interval, such as 20 or 30 milliseconds, reduces the number of packets sent per second, decreasing overhead but increasing potential delay. Cisco voice systems typically standardize on specific intervals depending on the codec and design requirements. The relationship between packetization interval and packet frequency is inverse; as the interval increases, the number of packets per second decreases. This directly affects bandwidth usage because overhead is applied per packet. Therefore, even if payload size remains constant, changing the packetization interval can significantly alter total bandwidth consumption. This makes interval selection a key design decision in VoIP deployments.
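To see how strongly the interval alone moves the result, the sketch below holds the codec fixed at G.729 (8 kbps) and varies only the packetization interval. It assumes 58 bytes of per-packet overhead (IP, UDP, RTP, and Ethernet framing without a VLAN tag); the function name is illustrative.

```python
def per_call_bps(codec_bps: int, interval_ms: int, overhead_bytes: int = 58) -> float:
    """One-direction bandwidth for a call, including per-packet overhead."""
    payload_bytes = codec_bps * interval_ms / 8000   # voice bytes per packet
    packets_per_second = 1000 / interval_ms
    return (payload_bytes + overhead_bytes) * 8 * packets_per_second


for interval_ms in (10, 20, 30):
    print(interval_ms, round(per_call_bps(8_000, interval_ms)))
# 10 ms -> 54400, 20 ms -> 31200, 30 ms -> 23467
```

The codec contributes 8,000 bps in every case; everything above that is header cost, which shrinks as the interval grows.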

Calculating Packets Per Second in Voice Transmission

Packets per second is derived directly from the packetization interval. Because the interval is expressed in milliseconds, the packet rate is simply 1,000 divided by the interval. A 20 millisecond interval means that 50 packets are transmitted per second, while a 10 millisecond interval results in 100 packets per second. This value is critical because it determines how often overhead is applied. Each packet incurs fixed header costs regardless of payload size, meaning higher packet rates increase total bandwidth usage even if voice data volume remains unchanged. This is one of the most commonly misunderstood aspects of VoIP engineering. Many assume that sending smaller packets reduces bandwidth consumption, but in reality, smaller packets mean more packets per second for the same voice stream, which increases total overhead. Understanding packets per second allows engineers to accurately scale bandwidth requirements across multiple concurrent calls.
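The conversion is a single division, shown here as a minimal sketch with an illustrative function name.

```python
def packets_per_second(interval_ms: float) -> float:
    """Packets sent each second for a given packetization interval."""
    return 1000 / interval_ms


print(packets_per_second(20))   # 50.0
print(packets_per_second(10))   # 100.0
```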

Role of Voice Payload in Total Bandwidth Calculation

Voice payload represents the actual compressed audio data within each packet. It is determined by codec behavior and packetization interval. For example, a codec with a 20 millisecond packetization interval will generate a specific number of bytes per packet based on its encoding algorithm. This payload is the only part of the packet that directly contributes to voice quality. However, it is not necessarily the dominant factor in total bandwidth usage once overhead is included. In many cases, protocol headers can represent a significant portion of the total packet size, especially for low-bitrate codecs. This means that even highly efficient codecs still generate a non-trivial bandwidth footprint due to encapsulation requirements. As a result, engineers must evaluate payload and overhead together rather than focusing solely on codec bitrate.
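A quick way to see how much of each packet is overhead is to compute the header share for two payload sizes. The sketch assumes 58 bytes of headers per packet (IP, UDP, RTP, and Ethernet framing without a VLAN tag) and the 20 ms payload sizes of G.711 (160 bytes) and G.729 (20 bytes); the function name is illustrative.

```python
def overhead_share(payload_bytes: int, overhead_bytes: int = 58) -> float:
    """Fraction of each packet that is protocol header rather than voice."""
    return overhead_bytes / (payload_bytes + overhead_bytes)


print(f"G.711: {overhead_share(160):.1%}")   # G.711: 26.6%
print(f"G.729: {overhead_share(20):.1%}")    # G.729: 74.4%
```

Under these assumptions, roughly three quarters of every G.729 packet is header rather than voice.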

Protocol Layers Contributing to VoIP Overhead

VoIP traffic relies on multiple protocol layers, each adding its own overhead to every packet. The IP layer provides routing functionality and adds a fixed header to each packet. The UDP layer enables lightweight transport without session management overhead. The RTP layer introduces sequence numbers and timestamps to maintain proper audio playback order. Finally, Ethernet framing ensures delivery over physical network infrastructure. Each of these layers adds a fixed number of bytes per packet, meaning overhead is incurred repeatedly for every voice frame transmitted. This layered approach ensures reliability and timing accuracy but increases total bandwidth consumption. In high-density environments with many simultaneous calls, protocol overhead can represent a significant portion of total network utilization.

Constructing a Practical Bandwidth Calculation Model

A practical VoIP bandwidth model combines payload size, packet frequency, and protocol overhead into a single expression of network consumption per call. The process begins by determining payload size per packet based on the codec and packetization interval. Next, protocol overhead is added to compute the total packet size. Then, the packet frequency is calculated based on the packetization interval. Finally, total bandwidth is derived by multiplying packet size by packets per second. This model reflects real-world behavior more accurately than bitrate-only calculations because it accounts for encapsulation overhead and packet frequency. It also scales linearly with the number of concurrent calls, making it suitable for enterprise capacity planning. Engineers use this model to estimate WAN link requirements, VPN sizing, and call admission control thresholds.

Scaling Bandwidth for Multiple Concurrent Cisco IP Calls

In enterprise environments, bandwidth planning must account for multiple simultaneous calls rather than a single session. Each call consumes a fixed amount of bandwidth based on codec and packetization configuration. As the number of concurrent calls increases, total bandwidth consumption scales linearly. This makes concurrency a critical factor in network design. For example, a link that supports 10 calls comfortably may become congested when scaled to 100 calls without proper capacity planning. Engineers must therefore estimate peak call volumes rather than average usage. This includes accounting for busy-hour traffic patterns, failover scenarios, and remote access surges. Proper scaling ensures that voice quality remains consistent even under maximum load conditions.
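Linear scaling is easy to express in code. The per-call figure below, 87,200 bps, is the one-direction result for G.711 at 20 ms with assumed Ethernet framing; substitute whatever per-call value applies to the deployment being planned. Names are illustrative.

```python
PER_CALL_BPS = 87_200   # assumed: G.711, 20 ms, Ethernet framing, one direction


def total_bps(concurrent_calls: int, per_call_bps: int = PER_CALL_BPS) -> int:
    """Aggregate one-direction bandwidth for a number of simultaneous calls."""
    return concurrent_calls * per_call_bps


for calls in (10, 100):
    print(calls, "calls:", total_bps(calls) / 1_000_000, "Mbps")
# 10 calls: 0.872 Mbps
# 100 calls: 8.72 Mbps
```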

Impact of Codec Selection on Bandwidth Efficiency

Codec selection has a direct impact on both bandwidth usage and voice quality. High-fidelity codecs such as G.711 consume more bandwidth but provide superior audio clarity. Compressed codecs such as G.729 reduce bandwidth requirements significantly but introduce slight degradation in voice quality. Wideband codecs such as G.722 improve clarity by capturing a broader frequency range, which increases the perceived naturalness of speech. Each codec interacts differently with packetization and overhead, meaning that bandwidth efficiency is not solely determined by bitrate. In some cases, a lower bitrate codec may not result in proportional bandwidth savings due to fixed protocol overhead per packet. This makes codec selection a strategic decision based on available infrastructure capacity and quality requirements.
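The point about non-proportional savings can be checked numerically. The sketch below compares G.711 and G.729 at a 20 ms interval, assuming 58 bytes of per-packet overhead; the function name is illustrative.

```python
def on_wire_bps(codec_bps: int, interval_ms: int = 20, overhead_bytes: int = 58) -> float:
    """One-direction bandwidth including headers."""
    payload_bytes = codec_bps * interval_ms / 8000
    return (payload_bytes + overhead_bytes) * 8 * (1000 / interval_ms)


g711 = on_wire_bps(64_000)
g729 = on_wire_bps(8_000)

print(f"codec bitrate ratio: {64_000 / 8_000:.2f}x")   # 8.00x
print(f"on-wire ratio:       {g711 / g729:.2f}x")      # 2.79x
```

The codec bitrate drops by a factor of eight, but the bandwidth actually consumed drops by less than a factor of three under these assumptions.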

Understanding Jitter, Latency, and Bandwidth Interdependence

Although bandwidth is a primary factor in VoIP performance, it does not operate in isolation. Latency and jitter are equally important and are closely tied to bandwidth availability. Latency refers to the delay between voice transmission and reception, while jitter represents variability in packet arrival times. Insufficient bandwidth can increase both latency and jitter due to congestion and queueing delays. Even if theoretical bandwidth is sufficient, poor traffic engineering can still degrade call quality. VoIP traffic requires consistent packet delivery intervals to maintain smooth audio playback. When network conditions fluctuate, jitter buffers are used at endpoints to compensate, but excessive variation can still result in degraded call quality. Therefore, bandwidth planning must be integrated with the overall quality of service design.

Engineering Considerations for Real-World Cisco Voice Networks

In real deployments, VoIP bandwidth calculation is only one part of a broader network design strategy. Engineers must also consider routing efficiency, quality of service prioritization, WAN link variability, and failover scenarios. Voice traffic is typically prioritized using QoS mechanisms to ensure it receives consistent treatment across congested links. This includes classification, marking, and queuing strategies that protect voice packets from data traffic congestion. Additionally, redundancy and load balancing must be considered to maintain call continuity during network failures. Bandwidth estimation provides the foundation, but operational performance depends on how effectively the network enforces traffic prioritization and manages congestion.

Moving From Basic Calculation to Engineering-Level VoIP Design

At this stage of Cisco VoIP bandwidth planning, the focus shifts from isolated calculations to system-level engineering. Real-world enterprise voice networks are not built around single-call assumptions but around aggregated traffic patterns, variable codec behavior, WAN constraints, and dynamic user loads. Bandwidth calculation becomes a predictive modeling exercise where multiple variables interact under real operating conditions. Engineers must account for concurrency, traffic bursts, failover scenarios, and hybrid connectivity paths. In practice, VoIP bandwidth planning is less about finding a single number and more about defining a resilient operating envelope in which voice quality remains stable under fluctuating network conditions. This requires combining codec mathematics with traffic engineering principles and quality control mechanisms such as prioritization, shaping, and admission control.

Understanding Traffic Aggregation in Cisco IP Telephony Systems

In enterprise networks, VoIP traffic rarely exists as isolated calls. Instead, it aggregates across departments, locations, and communication platforms. Each call contributes a predictable bandwidth load, but when multiplied across hundreds or thousands of endpoints, the cumulative impact becomes significant. Traffic aggregation introduces complexity because calls are not evenly distributed over time. Instead, they follow usage patterns influenced by business hours, regional activity, and organizational behavior. Peak periods can create sudden bandwidth spikes that exceed average estimates by a large margin. This makes peak traffic modeling essential for accurate network design. Engineers must consider not only steady-state usage but also worst-case concurrency scenarios where a large percentage of endpoints are active simultaneously. This ensures that network infrastructure does not become oversubscribed during critical communication periods.

Bandwidth Scaling Principles for Large-Scale Cisco Voice Deployments

Scaling VoIP bandwidth in enterprise environments follows linear and non-linear considerations depending on network topology. At a basic level, each additional call adds a fixed amount of bandwidth based on codec and packetization settings. However, scaling complexity increases when multiple network segments, WAN links, and inter-site connections are involved. Aggregation points such as routers and gateways must handle not only increased bandwidth but also increased packet processing overhead. As call volume grows, CPU utilization, queue depth, and buffer capacity become limiting factors alongside raw bandwidth availability. This means that scaling VoIP systems requires a holistic approach that includes both capacity planning and hardware performance evaluation. Engineers must ensure that network devices are capable of handling peak packet rates without introducing latency or jitter, which can degrade voice quality even when bandwidth appears sufficient.
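Packet rate at an aggregation point can be estimated alongside bandwidth. The sketch below assumes every call sends one stream in each direction through the device and that all calls use the same interval; the function name and call counts are illustrative.

```python
def aggregate_pps(concurrent_calls: int, interval_ms: float = 20, directions: int = 2) -> float:
    """Voice packets per second a device must forward for the given call count."""
    return concurrent_calls * directions * (1000 / interval_ms)


print(aggregate_pps(1_000))                   # 100000.0
print(aggregate_pps(1_000, interval_ms=10))   # 200000.0
```

Halving the interval doubles the forwarding load even though the voice carried is unchanged.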

Quality of Service as a Critical Component in Voice Bandwidth Management

Quality of Service (QoS) mechanisms play a central role in ensuring that VoIP traffic receives consistent treatment across shared networks. Unlike traditional data traffic, voice requires predictable latency and minimal jitter. QoS policies achieve this by classifying voice packets and assigning them a higher priority within network queues. This ensures that voice traffic is transmitted ahead of less time-sensitive data during periods of congestion. Bandwidth allocation policies can also reserve a portion of network capacity exclusively for voice traffic, preventing starvation under heavy load conditions. In Cisco environments, QoS is often implemented through marking mechanisms that identify voice packets at the edge of the network and enforce priority treatment throughout the transmission path. Without QoS, even correctly calculated bandwidth may not guarantee acceptable call quality due to unpredictable congestion behavior.
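The effect of priority queuing can be illustrated with a toy scheduler. This is a simplified sketch, not a model of any specific Cisco queuing feature: it classifies packets by DSCP value, treats Expedited Forwarding (DSCP 46, the marking conventionally used for voice media) as the priority class, and always serves that class first. Real implementations also police the priority queue so it cannot starve other traffic, which is omitted here. All class and field names are hypothetical.

```python
from collections import deque

DSCP_EF = 46   # Expedited Forwarding, conventionally used for voice media


class StrictPriorityScheduler:
    """Two queues: voice is always dequeued before any waiting data packet."""

    def __init__(self) -> None:
        self.voice = deque()
        self.data = deque()

    def enqueue(self, packet: dict) -> None:
        # Classification step: inspect the DSCP marking on the packet.
        queue = self.voice if packet["dscp"] == DSCP_EF else self.data
        queue.append(packet)

    def dequeue(self):
        if self.voice:
            return self.voice.popleft()
        if self.data:
            return self.data.popleft()
        return None


sched = StrictPriorityScheduler()
sched.enqueue({"id": "data-1", "dscp": 0})
sched.enqueue({"id": "voice-1", "dscp": DSCP_EF})
sched.enqueue({"id": "data-2", "dscp": 0})

order = [sched.dequeue()["id"] for _ in range(3)]
print(order)   # ['voice-1', 'data-1', 'data-2']
```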

Call Admission Control and Bandwidth Enforcement Mechanisms

Call Admission Control (CAC) is a preventive mechanism used to limit the number of simultaneous VoIP calls based on available bandwidth. Rather than allowing unlimited call initiation, CAC evaluates current network utilization and determines whether sufficient resources exist for additional calls. If bandwidth thresholds are exceeded, new calls are rejected or rerouted to alternate paths. This ensures that existing calls maintain quality rather than being degraded by oversubscription. CAC operates as a dynamic enforcement layer on top of static bandwidth calculations, translating theoretical capacity into operational limits. In enterprise environments, CAC is essential for maintaining service quality during peak usage periods or network failures where available bandwidth is reduced. It effectively bridges the gap between calculated capacity and real-time network conditions.
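The admission decision itself is a simple comparison against a bandwidth budget. The sketch below is a minimal, hypothetical model of that logic and not the behavior of any particular Cisco CAC feature; the 500 kbps voice budget and the 87,200 bps per-call figure (G.711, 20 ms, assumed Ethernet framing) are example inputs.

```python
class BandwidthCAC:
    """Admit a call only if it fits inside the configured voice budget."""

    def __init__(self, voice_budget_bps: int, per_call_bps: int) -> None:
        self.voice_budget_bps = voice_budget_bps
        self.per_call_bps = per_call_bps
        self.active_calls = 0

    def admit(self) -> bool:
        needed = (self.active_calls + 1) * self.per_call_bps
        if needed > self.voice_budget_bps:
            return False          # reject or reroute; existing calls are untouched
        self.active_calls += 1
        return True

    def release(self) -> None:
        if self.active_calls > 0:
            self.active_calls -= 1


cac = BandwidthCAC(voice_budget_bps=500_000, per_call_bps=87_200)
results = [cac.admit() for _ in range(6)]
print(results)   # [True, True, True, True, True, False]
```

Releasing a call frees its share of the budget, so a later attempt can succeed.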

Impact of WAN Links and Internet Transport on Voice Bandwidth

When Cisco IP calls traverse WAN or internet-based connections, bandwidth behavior becomes more variable due to fluctuating link conditions. Unlike controlled LAN environments, WAN links may experience variable latency, congestion, and packet loss depending on provider infrastructure and routing paths. This variability introduces additional complexity into bandwidth planning because theoretical capacity may not always reflect the actual available throughput. Engineers must account for overhead introduced by tunneling protocols such as VPN encapsulation, which increases packet size and reduces effective bandwidth. Additionally, shared internet links may introduce contention with non-voice traffic, requiring stricter QoS enforcement and traffic shaping. WAN optimization becomes a critical factor in ensuring consistent voice performance across distributed enterprise sites.
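Tunnel overhead can be folded into the same per-packet arithmetic. In the sketch below the tunnel cost is a parameter because actual VPN overhead depends on the tunneling protocol, mode, cipher, and padding. The 56-byte value is a placeholder chosen only to show the effect, not a measured or vendor-published figure, and the 18-byte Layer 2 value assumes Ethernet framing.

```python
IP_UDP_RTP_BYTES = 40   # 20 (IPv4) + 8 (UDP) + 12 (RTP)


def per_call_bps(payload_bytes: int, interval_ms: int,
                 l2_bytes: int, tunnel_bytes: int = 0) -> float:
    """One-direction bandwidth with optional tunnel encapsulation overhead."""
    packet_bytes = payload_bytes + IP_UDP_RTP_BYTES + tunnel_bytes + l2_bytes
    return packet_bytes * 8 * (1000 / interval_ms)


ASSUMED_TUNNEL_BYTES = 56   # placeholder; measure or look up the real value

plain = per_call_bps(20, 20, l2_bytes=18)
tunneled = per_call_bps(20, 20, l2_bytes=18, tunnel_bytes=ASSUMED_TUNNEL_BYTES)

print(plain)      # 31200.0
print(tunneled)   # 53600.0
```

For a small-payload codec such as G.729, even a modest tunnel header adds a large percentage to each call.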

Codec Efficiency Versus Network Cost Trade-Off Analysis

Selecting a codec involves balancing bandwidth efficiency against voice quality and computational cost. High-quality codecs such as G.711 provide excellent audio fidelity but consume significant bandwidth, making them suitable for LAN environments with abundant capacity. Compressed codecs reduce bandwidth usage but may introduce perceptible quality loss under certain conditions. Wideband codecs improve natural speech reproduction but increase payload size and processing requirements. Engineers must evaluate not only bandwidth consumption but also the operational cost of supporting each codec across distributed endpoints. In large-scale environments, even small differences in per-call bandwidth can accumulate into substantial infrastructure requirements. This makes codec selection a strategic decision that directly influences network architecture, cost efficiency, and user experience.

Real-World Variability in VoIP Bandwidth Consumption

Theoretical bandwidth calculations assume consistent packet sizes and stable transmission intervals, but real-world networks introduce variability. Factors such as voice activity detection, packet loss recovery, and jitter buffering can alter actual bandwidth usage. Voice activity detection (VAD), also called silence suppression, reduces bandwidth consumption by transmitting voice packets only during active speech and sending little or nothing during pauses. However, this optimization makes traffic bursty, so bandwidth usage becomes less predictable. Packet loss recovery mechanisms that add redundant or error-correction data to the stream increase bandwidth consumption, while concealment techniques reconstruct missing audio at the receiver without extra traffic. Jitter buffers at endpoints do not change the bandwidth on the wire, but they affect perceived latency and how late packets are handled. These dynamic elements make real-world VoIP bandwidth usage more complex than static calculation models suggest.
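The averaging effect of voice activity detection can be modeled with a single activity factor. The 0.65 factor used below is a placeholder for illustration, not a measured value; real savings depend on speakers, background noise, and codec. Because the savings are statistical, a conservative plan still sizes links for the full per-call rate. The function name is illustrative.

```python
def average_bps_with_vad(per_call_bps: float, activity_factor: float) -> float:
    """Long-run average bandwidth when packets are sent only during speech."""
    if not 0.0 <= activity_factor <= 1.0:
        raise ValueError("activity_factor must be between 0 and 1")
    return per_call_bps * activity_factor


full_rate = 87_200                     # G.711, 20 ms, assumed Ethernet framing
average = average_bps_with_vad(full_rate, activity_factor=0.65)

print(round(average))   # 56680
print(full_rate)        # peak rate is unchanged while someone is speaking
```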

Enterprise Voice Architecture and Bandwidth Distribution Models

Enterprise VoIP systems are typically distributed across multiple network layers, including access, distribution, and core segments. Each layer contributes differently to overall bandwidth consumption and traffic behavior. Access networks handle endpoint connections and local aggregation, while distribution layers manage inter-segment routing and policy enforcement. Core networks handle high-volume transport between major sites or data centers. Bandwidth must be distributed appropriately across these layers to prevent bottlenecks. Improper distribution can lead to congestion at aggregation points even if the total network capacity is sufficient. Engineers must model traffic flow across the entire architecture, ensuring that each segment has adequate provisioning for both average and peak loads.

High-Density VoIP Environments and Capacity Constraints

In high-density environments such as contact centers or large corporate campuses, VoIP traffic can scale to thousands of simultaneous calls. At this scale, even small inefficiencies in packet structure or codec selection can result in significant bandwidth consumption. Network devices must handle not only increased bandwidth but also high packet-per-second rates, which can stress processing resources. Queue management, buffer sizing, and interface capacity become critical design considerations. Additionally, redundancy mechanisms must be in place to ensure continuity during failures or maintenance events. High-density environments often require specialized design approaches that prioritize scalability, resilience, and deterministic performance over cost optimization.

Bandwidth Optimization Techniques for Cisco IP Telephony

Optimizing VoIP bandwidth involves reducing unnecessary overhead, selecting efficient codecs, and implementing traffic management policies. Codec optimization focuses on choosing the most appropriate encoding method for each use case. Packetization optimization adjusts intervals to balance latency and overhead. Header compression techniques can reduce protocol overhead, especially on WAN links. Traffic prioritization ensures voice packets are transmitted with minimal delay during congestion. Additionally, network segmentation can isolate voice traffic from general data traffic, reducing contention. Each optimization technique contributes incrementally to overall efficiency, and their combined effect can significantly reduce required bandwidth without compromising call quality.
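Header compression has the largest effect on low-bitrate codecs, as the following sketch suggests. It assumes compressed RTP reduces the 40-byte IP/UDP/RTP header to 2 bytes, which is the best case without UDP checksums, and it assumes a hypothetical 6-byte Layer 2 header on the WAN link. Both values should be replaced with figures for the actual link type.

```python
def per_call_bps(payload_bytes: int, l3_l4_bytes: int, l2_bytes: int,
                 interval_ms: int = 20) -> int:
    """One-direction bandwidth in bits per second."""
    packet_bits = (payload_bytes + l3_l4_bytes + l2_bytes) * 8
    return packet_bits * 1000 // interval_ms


G729_PAYLOAD = 20       # bytes per 20 ms packet
WAN_L2_BYTES = 6        # assumed Layer 2 header size for this sketch

uncompressed = per_call_bps(G729_PAYLOAD, l3_l4_bytes=40, l2_bytes=WAN_L2_BYTES)
compressed = per_call_bps(G729_PAYLOAD, l3_l4_bytes=2, l2_bytes=WAN_L2_BYTES)

print(uncompressed)   # 26400
print(compressed)     # 11200
```

Under these assumptions the per-call rate falls by more than half without touching the codec or the interval.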

Long-Term Capacity Planning and Network Evolution Considerations

VoIP bandwidth planning is not a one-time activity but an ongoing process that evolves with organizational growth and technology changes. As user bases expand, communication patterns shift, and new collaboration tools are introduced, bandwidth requirements change accordingly. Cloud-based communication platforms may introduce additional traffic paths, while remote work increases reliance on internet-based connectivity. Engineers must continuously reassess bandwidth models to ensure they remain aligned with actual usage patterns. Future-proofing network design involves building capacity buffers, implementing scalable architectures, and maintaining flexibility in codec and routing configurations. This ensures that Cisco IP telephony systems remain reliable and efficient even as enterprise communication demands continue to grow.

Conclusion

Cisco IP call bandwidth calculation is not just a theoretical exercise; it is a practical engineering discipline that directly affects call quality, user experience, and overall network stability. When all the underlying components are combined—codec behavior, sampling, packetization intervals, protocol overhead, and network transport conditions—the result is a complete model for understanding how voice traffic behaves across IP infrastructure. In real environments, this knowledge allows network engineers to move beyond guesswork and design voice networks with predictable performance characteristics. The key takeaway is that bandwidth usage is never defined by codec bitrate alone; instead, it is the cumulative effect of multiple layered processes that must all be accounted for together.

Every VoIP bandwidth model begins with codec selection because it defines the foundational characteristics of voice encoding. Whether using G.711 for high-quality uncompressed audio, G.729 for bandwidth efficiency, or G.722 for wideband clarity, the codec determines payload size and compression behavior. However, codec choice is only the starting point. In isolation, it gives a misleading impression of total network usage because it does not account for packetization or protocol overhead. In real Cisco deployments, engineers must always interpret codec bitrate as a baseline value rather than a final measurement. The actual network impact is always higher once transport encapsulation and packet frequency are considered.
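The gap between codec bitrate and on-wire bandwidth can be made concrete with a short calculation. This is a minimal sketch under assumed values: a 20 ms packetization interval, 40 bytes of IP/UDP/RTP headers, and 18 bytes of Ethernet overhead per packet. The bitrates are the nominal figures for each codec; the names and constants are illustrative rather than taken from any Cisco tool.

```python
# Nominal codec bitrates in bits per second.
CODEC_BITRATE_BPS = {"G.711": 64_000, "G.729": 8_000, "G.722": 64_000}

IP_UDP_RTP_BYTES = 40   # assumed: 20 IP + 8 UDP + 12 RTP
ETHERNET_BYTES = 18     # assumed Layer 2 overhead per packet
INTERVAL_MS = 20        # assumed packetization interval


def per_call_kbps(codec):
    """Return one-way on-wire bandwidth for a single call in kbps."""
    payload_bytes = CODEC_BITRATE_BPS[codec] * INTERVAL_MS // 8000
    packets_per_second = 1000 // INTERVAL_MS
    packet_bytes = payload_bytes + IP_UDP_RTP_BYTES + ETHERNET_BYTES
    return packet_bytes * 8 * packets_per_second / 1000


for codec, bitrate in CODEC_BITRATE_BPS.items():
    print(f"{codec}: codec {bitrate / 1000:.0f} kbps, "
          f"on the wire {per_call_kbps(codec):.1f} kbps")
```

With these assumptions, the 64 kbps codecs come out at about 87.2 kbps per direction and G.729 at about 31.2 kbps, so the baseline bitrate understates the real load in every case.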

One of the most important insights in VoIP engineering is that protocol overhead often represents a significant portion of total bandwidth consumption. IP, UDP, and RTP headers, plus a Layer 2 header such as Ethernet, are added to every packet regardless of how small the voice payload is. This means that reducing codec bitrate does not produce proportional bandwidth savings. In fact, aggressive compression increases the relative weight of overhead: the headers stay the same size while the payload shrinks, so a larger share of every packet is spent on headers. Understanding this hidden cost is essential for accurate network design. Engineers who ignore overhead often underestimate real-world bandwidth requirements, leading to congestion and degraded voice quality during peak usage.
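The point about non-proportional savings can be checked numerically. The sketch below assumes 58 bytes of headers per packet (40 bytes of IP/UDP/RTP plus 18 bytes of Ethernet) and 20 ms payloads of 160 bytes for G.711 and 20 bytes for G.729. These are assumed planning values, and the helper name is hypothetical.

```python
HEADER_BYTES = 40 + 18  # assumed: IP/UDP/RTP plus Ethernet overhead


def overhead_share(payload_bytes):
    """Return the fraction of each packet that is header rather than voice."""
    return HEADER_BYTES / (payload_bytes + HEADER_BYTES)


g711_share = overhead_share(160)  # G.711 payload at 20 ms
g729_share = overhead_share(20)   # G.729 payload at 20 ms

# Saving promised by the codec bitrates alone (64 kbps down to 8 kbps).
codec_saving = 1 - 8_000 / 64_000
# Saving actually seen on the wire, since packet rate is the same for both.
wire_saving = 1 - (20 + HEADER_BYTES) / (160 + HEADER_BYTES)

print(f"Header share: G.711 {g711_share:.0%}, G.729 {g729_share:.0%}")
print(f"Codec saving {codec_saving:.1%}, on-wire saving {wire_saving:.1%}")
```

Under these assumptions headers make up roughly a quarter of a G.711 packet but roughly three quarters of a G.729 packet, and an 87.5 percent cut in codec bitrate yields only about a 64 percent cut on the wire.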

Packetization interval plays a critical role in determining how efficiently voice data is transmitted across a network. Short intervals increase packet frequency, which lowers packetization delay but increases overhead, because every packet carries the same fixed headers. Longer intervals reduce packet frequency, improving efficiency but adding delay and making each lost packet cost more audio. This trade-off must be carefully balanced in Cisco IP telephony design. In practice, the packetization interval often has as much influence on total bandwidth consumption as codec selection itself. Halving the interval, for example from 20 ms to 10 ms, doubles the packet rate and with it the header load on routers, switches, and WAN links. For this reason, interval tuning is a key optimization tool in enterprise voice engineering.
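The interval trade-off is easy to see by sweeping the interval for a fixed codec. This sketch assumes G.729 at 8 kbps and 58 bytes of headers per packet (IP/UDP/RTP plus Ethernet); the interval values and names are illustrative, and real endpoints may only support some of them.

```python
HEADER_BYTES = 40 + 18  # assumed: IP/UDP/RTP plus Ethernet overhead
CODEC_BPS = 8_000       # assumed codec: G.729


def bandwidth_for_interval(interval_ms):
    """Return (packets per second, one-way kbps) for one call."""
    payload_bytes = CODEC_BPS * interval_ms / 8000
    packets_per_second = 1000 / interval_ms
    kbps = (payload_bytes + HEADER_BYTES) * 8 * packets_per_second / 1000
    return packets_per_second, kbps


for interval_ms in (10, 20, 30, 40):
    pps, kbps = bandwidth_for_interval(interval_ms)
    print(f"{interval_ms} ms: {pps:.1f} packets/s, {kbps:.1f} kbps")
```

Under these assumptions, moving from 20 ms to 10 ms raises the per-call figure from about 31.2 kbps to about 54.4 kbps even though the codec bitrate is unchanged, while 40 ms lowers it to about 19.6 kbps at the cost of added delay.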

While single-call bandwidth calculations are useful for understanding fundamentals, real enterprise networks operate at scale. Hundreds or thousands of concurrent calls may occur during peak business hours, and each call contributes a predictable but cumulative bandwidth load. This scaling effect is linear in theory but operationally complex due to traffic bursts, regional usage differences, and failover conditions. Network engineers must therefore design for peak concurrency rather than average usage. Failure to do so can result in saturation events where available bandwidth is exhausted, causing widespread degradation in call quality. Proper scaling ensures that voice systems remain stable even under maximum load conditions.
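The difference between sizing for average load and sizing for peak load can be illustrated with a few lines of arithmetic. The sketch assumes a per-call figure of 31,200 bps (G.729 over Ethernet at 20 ms), 80 concurrent calls on average, and 200 at peak. All three numbers and both function names are hypothetical.

```python
PER_CALL_BPS = 31_200  # assumed one-way bandwidth per call


def required_bps(concurrent_calls, per_call_bps=PER_CALL_BPS):
    """Bandwidth needed to carry the given number of simultaneous calls."""
    return concurrent_calls * per_call_bps


def max_calls(voice_capacity_bps, per_call_bps=PER_CALL_BPS):
    """Whole calls that fit into the bandwidth reserved for voice."""
    return voice_capacity_bps // per_call_bps


average_load = required_bps(80)    # link sized for the average
peak_load = required_bps(200)      # link sized for the busy hour

# If the link is sized for the average, this many peak calls have no room.
calls_that_fit = max_calls(average_load)
calls_without_capacity = 200 - calls_that_fit

print(f"Average load: {average_load / 1e6:.3f} Mbps")
print(f"Peak load:    {peak_load / 1e6:.3f} Mbps")
print(f"Calls without capacity at peak: {calls_without_capacity}")
```

The linear model is deliberately simple; it ignores bursts, regional differences, and failover, which is why the result should be read as a floor for peak provisioning rather than a final answer.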

Bandwidth availability alone does not guarantee good voice quality. Even well-provisioned networks can experience degraded calls if voice traffic is not prioritized correctly. Quality of Service mechanisms ensure that voice packets are treated with higher priority than general data traffic. This prevents congestion-related delays and jitter, which are particularly damaging to real-time communication. QoS works in conjunction with bandwidth planning by enforcing traffic behavior that aligns with design assumptions. Without QoS, even accurately calculated bandwidth allocations can fail under mixed traffic conditions. This makes QoS an essential companion to any VoIP bandwidth engineering strategy.

Although bandwidth formulas provide a structured way to estimate voice traffic, real-world conditions introduce variability that cannot be fully captured in static models. Network congestion, routing changes, and voice activity detection all influence actual bandwidth usage, while jitter buffer behavior determines how much of that variability the listener perceives. Silence suppression, for example, reduces traffic during inactive speech periods, while packet loss recovery mechanisms may temporarily increase bandwidth consumption. These dynamic factors mean that VoIP networks are inherently fluid systems rather than fixed mathematical models. Engineers must therefore treat calculations as guidelines rather than absolute values and incorporate safety margins into all capacity planning decisions.
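A simple way to apply this is to keep two numbers side by side: what traffic is expected to look like with silence suppression, and what is actually provisioned. The sketch below assumes 200 peak calls at 31,200 bps each, a voice activity factor of 0.65 (an often quoted rule of thumb, not a measured value), and a 20 percent safety margin. The function names and all inputs are hypothetical.

```python
def expected_bps_with_vad(peak_calls, per_call_bps, activity_factor):
    """Estimate typical load when silence suppression is active."""
    return peak_calls * per_call_bps * activity_factor


def provisioned_bps(peak_calls, per_call_bps, safety_margin):
    """Size the allocation for full-rate calls plus a safety margin.

    Silence suppression savings are deliberately not counted here, so the
    allocation still holds if every call is active at the same time.
    """
    return peak_calls * per_call_bps * (1 + safety_margin)


typical = expected_bps_with_vad(200, 31_200, activity_factor=0.65)
allocated = provisioned_bps(200, 31_200, safety_margin=0.20)

print(f"Typical load with silence suppression: {typical / 1e6:.2f} Mbps")
print(f"Provisioned with safety margin:        {allocated / 1e6:.2f} Mbps")
```

Treating the silence suppression figure as an observation rather than a design input is one conservative choice among several; the margin itself should come from measured traffic where it is available.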

A common mistake in VoIP planning is relying on a single bandwidth number per call without considering how that value interacts with the broader network architecture. In reality, voice traffic flows through multiple layers, including access, distribution, and core networks, each with its own capacity constraints. Bottlenecks can occur at any layer, not just at WAN edges. Therefore, engineers must evaluate bandwidth distribution across the entire path rather than focusing on a single link. This layered approach ensures that no segment becomes a hidden point of congestion that undermines overall voice performance.
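Checking the whole path can be as simple as comparing the required voice bandwidth against the voice capacity of every segment. The sketch below uses a made-up four-segment path and made-up capacities in kbps; the segment names, the numbers, and the helper functions are all hypothetical.

```python
def find_bottlenecks(path, required_kbps):
    """Return the names of segments whose voice capacity is too small."""
    return [name for name, capacity_kbps in path if capacity_kbps < required_kbps]


def tightest_segment(path):
    """Return the (name, capacity) pair with the least voice capacity."""
    return min(path, key=lambda segment: segment[1])


# Assumed voice capacity per segment, in kbps.
path = [
    ("access uplink", 20_000),
    ("distribution uplink", 5_000),
    ("core", 50_000),
    ("WAN edge", 8_000),
]

required_kbps = 200 * 31.2  # assumed: 200 calls at 31.2 kbps each

bottlenecks = find_bottlenecks(path, required_kbps)
print(f"Required: {required_kbps:.0f} kbps")
print(f"Bottlenecks: {bottlenecks}")
print(f"Tightest segment: {tightest_segment(path)[0]}")
```

In this made-up path the WAN edge has enough room, but the distribution uplink does not, which is the kind of hidden constraint a single per-link calculation would miss.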

Finally, none of these calculations stays valid indefinitely. As the discussion of long-term capacity planning noted, user growth, cloud-based systems, remote work adoption, and hybrid communication platforms all shift voice traffic patterns over time. Engineers must continuously revisit assumptions, update calculations, and validate network performance against real usage data. This ongoing evaluation ensures that voice systems remain reliable and scalable rather than drifting out of date as network conditions evolve.

Ultimately, Cisco IP call bandwidth calculation represents a convergence of multiple networking disciplines, including packet analysis, protocol understanding, traffic engineering, and performance optimization. It requires engineers to think beyond isolated formulas and instead view voice traffic as a structured, multi-layered system operating within shared infrastructure. When properly understood and applied, these principles enable the design of voice networks that are efficient, scalable, and resilient under real-world conditions. The true value of mastering VoIP bandwidth engineering lies not in producing a single number, but in developing the ability to anticipate how voice traffic behaves under different network scenarios and designing systems that maintain consistent performance regardless of load or complexity.