For individuals entering Kubernetes environments for the first time, the conceptual landscape can feel layered and highly abstract. Kubernetes is not a single tool but an orchestration system designed to manage distributed, containerized applications across clusters of machines. This means it introduces multiple levels of abstraction to ensure applications remain scalable, resilient, and manageable in production environments. Among these abstractions, containers and pods form the most essential structural relationship. Containers represent isolated runtime units for applications, while pods represent the execution boundary within which these containers operate. Understanding this distinction is critical because it defines how workloads are deployed, scheduled, and maintained across a Kubernetes cluster.

Foundational Role of Kubernetes in Distributed Systems

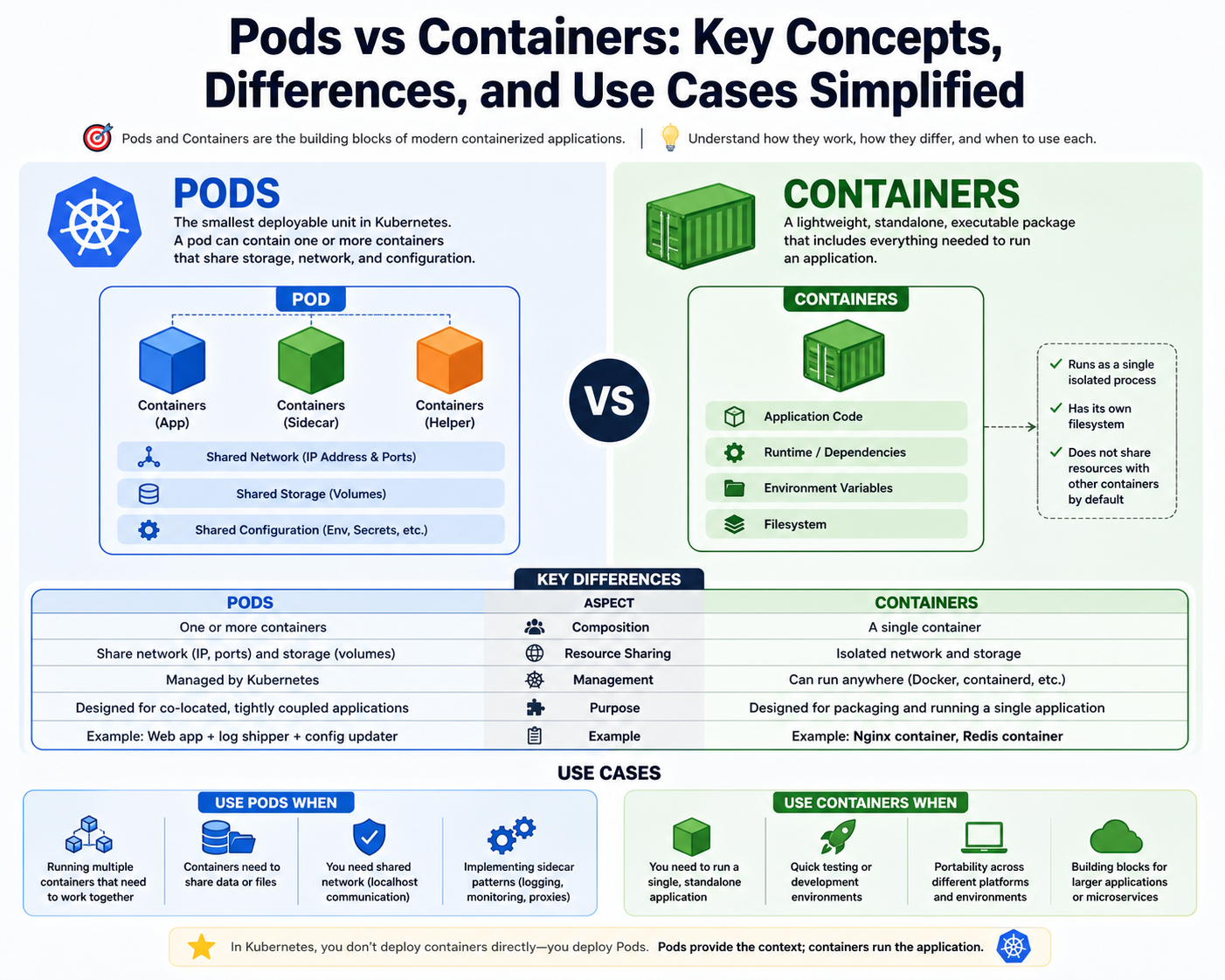

Modern application design has shifted away from monolithic structures toward modular, service-based architectures. These architectures break applications into smaller components that can be developed, deployed, and scaled independently. Containers enable this approach by packaging application code with its dependencies into portable units. However, as the number of containers grows, managing them manually becomes inefficient and unreliable. Kubernetes introduces a control system that automates deployment and operational tasks across distributed infrastructure. It ensures that containers are not only running but are also correctly configured, properly distributed, and continuously monitored. Within this system, pods act as the smallest unit of orchestration, grouping containers into manageable entities.

Understanding Containers as Isolated Runtime Environments

A container is a lightweight execution environment that encapsulates an application and its dependencies. This encapsulation ensures consistency across different computing environments, eliminating the common issue of software behaving differently in development and production. Containers achieve this by leveraging operating system-level virtualization, which isolates processes while sharing the host system kernel. Each container runs as an independent process with its own filesystem, network stack, and runtime environment. This isolation reduces dependency conflicts and enhances portability. Containers are designed to be immutable in nature, meaning they are not modified after creation; instead, new versions are deployed when changes are required.

Container Images and Their Role in Execution

Every container originates from a container image, which serves as a blueprint for its runtime behavior. An image defines the application code, system libraries, dependencies, and configuration settings required to run the application. When a container is launched, it is instantiated from this image, ensuring a consistent environment every time it is executed. Images are stored in registries and pulled into runtime environments when needed. This separation between image creation and container execution allows for reproducibility and version control. In Kubernetes environments, pods reference these images to define which containers should be created and how they should behave.

Introduction to the Pod Abstraction Layer

A pod is the fundamental scheduling unit in Kubernetes and represents a logical grouping of one or more containers. While containers operate as isolated processes, pods provide a shared execution context. This context includes networking, storage access, and lifecycle management. Containers within a pod share the same network identity and storage volumes, allowing them to communicate efficiently without external networking overhead. This design is particularly useful for applications that require tightly coupled components, such as a primary application container paired with logging, monitoring, or proxy containers. By grouping these containers together, Kubernetes ensures they are always deployed, scaled, and managed as a single unit.

Why Kubernetes Uses Pods Instead of Direct Container Management

Directly managing containers at scale introduces complexity, especially when applications require multiple interconnected components. Kubernetes addresses this by introducing pods as an intermediary layer. Instead of scheduling individual containers, Kubernetes schedules pods onto worker nodes. This ensures that all containers within a pod share the same execution environment and are co-located on the same machine. This co-location is essential for performance and communication efficiency. Without pods, managing inter-container relationships would require manual configuration and external coordination, which would reduce the automation benefits that Kubernetes provides.

Pod Lifecycle and Ephemeral Characteristics

Pods are designed to be ephemeral, meaning they are temporary and replaceable. They are not intended to persist indefinitely. When a pod is created, Kubernetes attempts to maintain its desired state as defined by configuration specifications. If a pod fails, becomes unresponsive, or is terminated, it is not repaired directly. Instead, Kubernetes deletes it and creates a new instance that matches the desired configuration. This behavior ensures consistency across the system but requires applications to be designed in a stateless manner. Persistent data must be stored externally so that it is not lost when pods are replaced.

Networking Model Within a Pod

One of the most significant features of pods is their shared networking model. All containers inside a pod share a single network namespace, which means they share the same IP address and port space. This allows containers to communicate using local networking interfaces without requiring external routing mechanisms. This design simplifies inter-container communication and reduces networking complexity. External communication with other pods or services is handled at the pod level rather than at the container level. This abstraction allows Kubernetes to manage networking consistently across large clusters without requiring manual configuration for each container.

Storage and Data Sharing Inside Pods

Pods also provide a mechanism for shared storage between containers. This is achieved through volumes that are mounted into the pod and made accessible to all containers within it. Shared storage is essential for scenarios where multiple containers need access to the same data, such as log aggregation, caching, or configuration sharing. Unlike container filesystems, which are ephemeral and isolated, pod-level storage allows data to persist across container restarts within the same pod lifecycle. This shared storage model enhances collaboration between containers and enables more complex application architectures within a single pod.

Resource Management and Allocation Strategies

Kubernetes provides mechanisms for controlling how much compute resource each container within a pod can consume. These resources include CPU and memory, which are defined through request and limit values. Requests define the minimum resources guaranteed to a container, while limits define the maximum resources it can consume. These constraints help ensure fair resource distribution across the cluster and prevent individual containers from overwhelming system resources. The pod serves as the scheduling unit that Kubernetes uses to evaluate whether a node has sufficient capacity to host its containers. This ensures efficient utilization of infrastructure resources.

Scheduling Behavior and Node Assignment

When a pod is created, Kubernetes evaluates available worker nodes to determine where it should be placed. This decision is based on resource availability, constraints, and scheduling policies. Once assigned, all containers within the pod are launched on the same node. This ensures that they share the same execution environment and can communicate efficiently. If a node becomes unavailable, Kubernetes reschedules the pod onto another node, maintaining system resilience. This scheduling mechanism abstracts infrastructure complexity and allows applications to remain operational even in dynamic environments where nodes may be added or removed.

Security Context and Execution Control Within Pods

Security in Kubernetes is enforced at multiple levels, including the pod level. A security context defines how containers within a pod should operate from a permissions and access standpoint. This includes user identity, privilege escalation rules, and filesystem access restrictions. By applying security policies at the pod level, Kubernetes ensures consistent enforcement across all containers within that pod. This reduces the risk of misconfiguration and enhances system security. Containers can be restricted to run under non-root users, limiting their ability to modify critical system components and reducing potential attack surfaces.

Interaction Between Containers and Pods in Execution Flow

The execution flow in Kubernetes begins with pod creation rather than container creation. When a pod is scheduled, Kubernetes communicates with the container runtime on the selected node to instantiate the containers defined within the pod specification. These containers are then launched within the shared environment provided by the pod. This hierarchical execution model ensures that containers are always managed within a controlled context. It also simplifies lifecycle management, as Kubernetes only needs to monitor the state of the pod rather than tracking individual containers separately.

Operational Importance of Pod-Level Abstraction

The pod abstraction is essential for enabling Kubernetes to manage complex distributed applications efficiently. Without pods, each container would require independent scheduling, networking, and lifecycle management, significantly increasing system complexity. Pods simplify this by grouping related containers into a single manageable unit. This allows Kubernetes to apply policies, monitor health, and perform scaling operations at the pod level. It also ensures that tightly coupled application components remain synchronized in their execution, reducing inconsistencies and operational overhead in large-scale environments.

Relationship Hierarchy Between Pods and Containers

The structural relationship between pods and containers is hierarchical and strictly defined. Containers represent the execution layer of applications, while pods represent the orchestration layer that manages those containers. This hierarchy ensures separation of concerns, where containers focus on application execution and pods handle coordination, scheduling, and resource management. This design is fundamental to Kubernetes architecture and enables it to support highly scalable and resilient distributed systems without requiring manual intervention for routine operational tasks.

Expanding the Kubernetes Execution Model Beyond Basics

Understanding pods and containers at a surface level is only the beginning of working with Kubernetes effectively. Once the foundational relationship between the two is clear, the next layer involves how Kubernetes uses these abstractions to manage distributed workloads at scale. Kubernetes is not simply a runtime system; it is a reconciliation engine that continuously aligns the actual state of a cluster with a desired configuration. In this model, pods are not static objects but dynamic entities that are constantly monitored, replaced, and rescheduled as conditions change. Containers exist as execution units inside pods, but their behavior is entirely governed by the pod lifecycle and the control plane decisions that manage them.

How Kubernetes Treats Pods as Scheduling Units

In Kubernetes architecture, scheduling does not operate at the container level. Instead, the scheduler evaluates pods as complete units and assigns them to nodes within the cluster. This means that all containers defined within a pod are always deployed together on the same machine. The scheduler considers multiple factors when making placement decisions, including available CPU, memory, node labels, affinity rules, and taints or tolerations. This approach ensures that interdependent containers remain co-located, which is essential for performance and reliability. It also simplifies networking and storage coordination, since all containers in a pod share the same runtime environment.

Node-Level Execution and Container Runtime Interaction

Once a pod is assigned to a node, the container runtime on that node is responsible for launching the containers defined in the pod specification. This runtime could be containerd or another compatible runtime interface. The runtime pulls the required container images, creates the container instances, and configures them according to the pod specification. This includes setting environment variables, mounting volumes, applying security contexts, and establishing networking configurations. The pod acts as the instruction set, while the container runtime performs the actual execution. This separation of responsibilities is what enables Kubernetes to remain flexible and platform-agnostic.

Shared Networking Architecture Inside Pods

A key design feature of pods is their shared networking model. Every container within a pod shares the same network namespace, which includes a single IP address and shared port space. This design allows containers to communicate using localhost, eliminating the need for external networking between tightly coupled services. For example, an application container can communicate directly with a logging or monitoring container using local network interfaces. This simplifies communication patterns and reduces latency. External access to pods is handled separately through service-level abstractions, ensuring that internal and external networking concerns remain decoupled.

Implications of Shared Network Identity

Because all containers in a pod share a single IP address, they are treated as a single network entity from outside the cluster. This has important implications for service discovery and load balancing. External services do not communicate with individual containers but instead interact with the pod as a whole. This design ensures consistency in communication and allows Kubernetes to distribute traffic evenly across multiple pod replicas. It also reduces the complexity of managing dynamic IP addresses for individual containers, since the pod acts as the stable network endpoint.

Storage Architecture and Volume Sharing Mechanisms

In addition to networking, pods also provide a shared storage model through volumes. Volumes are defined at the pod level and can be mounted into one or more containers within the same pod. This enables data sharing between containers without requiring external storage systems for temporary data exchange. For example, one container may generate logs while another processes or ships those logs to an external system. Because both containers access the same volume, data exchange is efficient and synchronized. Unlike container filesystems, which are ephemeral and isolated, pod volumes provide a shared persistence layer for the duration of the pod lifecycle.

Types of Volumes and Their Use Cases

Kubernetes supports multiple volume types, each designed for specific use cases. Some volumes are ephemeral and exist only for the lifecycle of the pod, while others are backed by persistent storage systems that survive pod restarts. Ephemeral volumes are commonly used for temporary data exchange between containers, while persistent volumes are used for application data that must survive failures or rescheduling events. The pod specification defines how these volumes are mounted and accessed, ensuring consistent data availability across container restarts within the same pod lifecycle.

Resource Allocation and Scheduling Constraints

Resource management in Kubernetes is a critical aspect of pod behavior. Each container within a pod can define resource requests and limits for CPU and memory usage. These values are used by the scheduler to determine whether a node has sufficient capacity to run the pod. Resource requests define the minimum guaranteed resources, while limits define the maximum allowed usage. This ensures that no single container can monopolize system resources. At the pod level, Kubernetes aggregates these requirements to make scheduling decisions, ensuring efficient distribution of workloads across the cluster.

Impact of Resource Constraints on Cluster Stability

Proper resource allocation is essential for maintaining cluster stability. If containers exceed their allocated resources, they may be throttled or terminated depending on configuration policies. This prevents system-wide resource exhaustion and ensures that other workloads continue to function normally. Pods serve as the enforcement boundary for these resource constraints, ensuring that all containers within them operate within defined limits. This model enables predictable performance and prevents resource contention in multi-tenant environments where multiple applications share the same infrastructure.

Pod Lifecycle Management in Dynamic Environments

Pods are inherently transient and are continuously managed by Kubernetes controllers. When a pod is created, it enters a lifecycle that includes pending, running, and terminating states. If a pod fails health checks or becomes unreachable, Kubernetes marks it for termination and replaces it with a new instance. This self-healing behavior ensures that applications remain available even in the presence of node failures or application errors. Containers within the pod do not have independent lifecycles; instead, they are tightly bound to the lifecycle of the pod itself.

Health Monitoring and Readiness Probes

Kubernetes uses health checks to determine the status of containers within pods. These checks include liveness probes and readiness probes. Liveness probes determine whether a container is running correctly, while readiness probes determine whether it is ready to accept traffic. If a container fails a liveness probe, Kubernetes restarts it. If it fails a readiness probe, it is temporarily removed from service routing. These mechanisms ensure that only healthy pods receive traffic, improving system reliability and reducing downtime in production environments.

Self-Healing Mechanisms and Fault Recovery

One of the most powerful features of Kubernetes is its self-healing capability. When a pod fails, Kubernetes does not attempt to repair it in place. Instead, it creates a new pod based on the original configuration. This approach ensures consistency and eliminates configuration drift. Containers inside failed pods are discarded along with their state unless external storage mechanisms are used. This design encourages stateless application architecture, where application logic is separated from persistent data storage.

Role of Controllers in Managing Pods

Pods are not typically created directly by users in production environments. Instead, they are managed by higher-level controllers such as deployments or replica sets. These controllers define the desired number of pod replicas and ensure that the actual number matches the desired state. If a pod is deleted or fails, the controller automatically creates a replacement. This abstraction simplifies workload management and ensures that applications remain scalable and resilient without manual intervention.

Scaling Behavior and Replica Management

Scaling in Kubernetes is achieved by adjusting the number of pod replicas. When demand increases, additional pods are created and distributed across available nodes. When demand decreases, excess pods are terminated. Each pod is identical in configuration, ensuring consistency across replicas. Containers within these pods run the same application logic but operate independently. This horizontal scaling model allows Kubernetes to handle variable workloads efficiently and maintain performance under changing conditions.

Inter-Pod Communication Versus Intra-Pod Communication

Communication between containers inside a pod is fundamentally different from communication between pods. Intra-pod communication occurs over localhost within a shared network namespace and is highly efficient. Inter-pod communication, however, occurs over the cluster network and involves service discovery mechanisms. This separation ensures that tightly coupled components remain efficient while still allowing distributed communication across the cluster. Kubernetes services act as stable endpoints for routing traffic between pods, abstracting the underlying network complexity.

Security Boundaries at the Pod Level

Security in Kubernetes is enforced through multiple layers, with the pod serving as a key boundary. Security contexts define user permissions, privilege escalation rules, and access controls for containers within a pod. This ensures that containers operate under restricted conditions, reducing the risk of system compromise. Network policies can also be applied at the pod level to control communication between pods. This fine-grained security model allows administrators to enforce strict isolation between workloads while maintaining operational flexibility.

Execution Isolation and Multi-Tenancy Considerations

Pods provide a logical isolation boundary that supports multi-tenancy in Kubernetes clusters. While multiple pods may run on the same node, they remain isolated from each other in terms of networking, storage, and process execution. This isolation ensures that workloads belonging to different applications or teams do not interfere with each other. Containers within a pod, however, are intentionally less isolated from each other, as they are designed to function as part of a single application unit.

Operational Significance of Pod Design in Cloud Systems

The pod abstraction is one of the most important design decisions in Kubernetes architecture. It bridges the gap between low-level container execution and high-level application orchestration. By grouping containers into pods, Kubernetes simplifies scheduling, networking, storage, and lifecycle management. This design enables complex distributed systems to be managed with predictable behavior and high levels of automation. It also provides a consistent model for scaling and maintaining applications across heterogeneous infrastructure environments.

Advanced Kubernetes Architecture and the Role of Pods in Complex Systems

At an advanced level, Kubernetes is best understood as a distributed control system rather than a simple container manager. Pods and containers are not isolated concepts but integral parts of a broader reconciliation architecture that continuously enforces desired system states across a dynamic infrastructure. In this model, pods represent the atomic unit of scheduling and execution, while containers represent the actual workload processes. As systems grow in complexity, the interaction between these two constructs becomes more significant, especially when dealing with multi-tier applications, microservices ecosystems, and large-scale distributed workloads. The abstraction provided by pods allows Kubernetes to manage these complexities without exposing low-level infrastructure details to application developers.

Multi-Container Pod Design Patterns in Real-World Systems

In production environments, pods often contain more than one container, forming what is known as multi-container design patterns. These patterns are used when multiple processes must work closely together within the same execution context. A common example includes a primary application container paired with a supporting sidecar container. The sidecar may handle logging, monitoring, security enforcement, or data synchronization. Because both containers share the same pod, they also share networking and storage resources, enabling efficient communication. This design avoids the overhead of inter-process network communication across separate pods, improving performance and simplifying system architecture.

Sidecar Architecture and Its Operational Advantages

The sidecar pattern is one of the most widely used multi-container strategies in Kubernetes. In this pattern, the main application container is accompanied by a helper container that extends its functionality without modifying its core logic. For example, a sidecar may collect logs from the main application and forward them to an external system, or it may handle encryption and decryption of network traffic. Since both containers exist within the same pod, they can communicate using local interfaces, eliminating the need for external networking. This architecture promotes modularity and separation of concerns while maintaining tight integration between components.

Init Containers and Sequential Startup Behavior

Another important concept in pod design is the use of initialization containers. These are special containers that run before the main application containers start. Init containers are executed sequentially and must complete successfully before the pod transitions into a running state. They are commonly used for setup tasks such as configuration preparation, database migration, or dependency verification. This ensures that the environment is fully prepared before application logic begins execution. Unlike regular containers, init containers are not restarted after completion, reinforcing their role as setup utilities rather than long-running processes.

Pod Networking in Large-Scale Cluster Environments

As Kubernetes clusters scale, networking becomes increasingly complex. Despite this complexity, the pod abstraction simplifies communication by ensuring that each pod receives a unique IP address within the cluster network. This allows pods to communicate directly with each other without requiring additional translation layers. However, in large environments, direct pod-to-pod communication is typically managed through service discovery mechanisms. Services provide stable endpoints that route traffic to dynamically changing pod instances. This ensures that even as pods are created, destroyed, or rescheduled, network communication remains consistent and reliable.

Service Abstraction and Traffic Distribution Across Pods

Services in Kubernetes act as stable network interfaces that abstract away the ephemeral nature of pods. Instead of communicating directly with individual pods, external and internal clients interact with services, which then distribute traffic across healthy pod replicas. This load balancing mechanism ensures that no single pod becomes a bottleneck. It also provides fault tolerance, as failed pods are automatically removed from service routing. This separation between service endpoints and pod instances is critical for maintaining high availability in distributed systems.

Pod Affinity and Anti-Affinity Scheduling Rules

Kubernetes provides advanced scheduling controls through affinity and anti-affinity rules. These rules determine how pods are placed across nodes in a cluster. Affinity rules allow pods to be scheduled close to specific resources or other pods, while anti-affinity rules ensure that certain pods are distributed across different nodes. This is particularly useful for high availability systems where redundancy is required. For example, replicas of the same application may be spread across multiple nodes to prevent a single point of failure. These scheduling policies enhance resilience and optimize resource utilization.

Node Selection and Resource-Aware Scheduling Decisions

The Kubernetes scheduler evaluates multiple factors when placing pods on nodes. These include available CPU, memory, disk capacity, and network conditions. Additionally, node labels and taints are used to control which pods can run on which nodes. This allows administrators to segment infrastructure based on workload requirements. For example, compute-intensive workloads may be scheduled on high-performance nodes, while lightweight services may run on standard nodes. This resource-aware scheduling ensures efficient utilization of cluster resources and prevents performance degradation.

Fault Tolerance and High Availability Through Pod Replication

High availability in Kubernetes is achieved through pod replication. Instead of relying on a single instance of an application, Kubernetes maintains multiple identical pods running across different nodes. If one pod fails, traffic is automatically redirected to healthy replicas. The system continuously monitors pod health and ensures that the desired number of replicas is always maintained. This redundancy eliminates single points of failure and ensures continuous service availability even during node outages or system maintenance events.

State Management and Stateless Design Principles in Pods

Pods are designed to support stateless application architectures. This means that they do not retain critical application state internally. Instead, state is stored externally in databases, object storage systems, or persistent volumes. This design allows pods to be freely created, destroyed, and replaced without impacting application integrity. Stateless design is essential for achieving scalability and resilience in Kubernetes environments. It ensures that applications can scale horizontally without concerns about data consistency within individual pods.

Persistent Storage Integration with Pod Lifecycles

Although pods are ephemeral, they can be connected to persistent storage systems through persistent volumes. These volumes are independent of pod lifecycles and allow data to survive pod termination or rescheduling. When a new pod is created, it can attach to the same persistent volume, ensuring continuity of data. This separation between compute and storage is a key architectural principle in Kubernetes. It allows systems to maintain flexibility in compute resources while preserving critical application data across lifecycle events.

Observability and Monitoring at the Pod Level

Observability in Kubernetes is primarily achieved at the pod level. Metrics such as CPU usage, memory consumption, network traffic, and restart counts are collected for each pod. This data provides insights into application performance and system health. Monitoring tools aggregate this information to detect anomalies, identify bottlenecks, and trigger alerts. Because pods represent the smallest operational unit, they provide the most granular visibility into system behavior. This makes them essential for performance tuning and operational troubleshooting.

Logging Architecture and Centralized Data Collection

Logs generated by containers within pods are typically collected and forwarded to centralized logging systems. Since multiple containers within a pod share the same execution environment, their logs are often correlated to understand application behavior. Logging sidecars are frequently used to capture and transmit log data without modifying the main application container. This approach ensures that logging remains decoupled from application logic while still providing comprehensive visibility into system activity.

Security Hardening and Isolation Strategies in Pods

Security in Kubernetes environments relies heavily on pod-level isolation. Containers within a pod can be restricted using security contexts that define user permissions, filesystem access, and process capabilities. Additionally, network policies can restrict communication between pods, ensuring that only authorized traffic flows between services. This layered security model reduces the attack surface and enforces strict boundaries between workloads. Even though containers within a pod are closely integrated, they can still be governed by fine-grained security rules.

Resource Contention and Quality of Service Classification

Kubernetes classifies pods into different quality-of-service tiers based on their resource configurations. These tiers determine how pods are treated under resource pressure conditions. Pods with guaranteed resources are prioritized over those with burstable or best-effort configurations. This classification ensures that critical workloads maintain performance even when system resources are limited. Containers within pods inherit these classifications, ensuring consistent resource management across the entire workload.

Cluster Scalability and Horizontal Expansion Models

Kubernetes clusters are designed to scale horizontally by adding more nodes and distributing pods across them. As demand increases, additional pods are created and scheduled onto available nodes. This model allows systems to handle large-scale workloads without requiring vertical scaling of individual machines. Containers within pods scale indirectly as pod replicas increase. This separation of scaling responsibilities simplifies system design and ensures predictable performance under varying loads.

Interplay Between Control Plane and Pod Lifecycle Management

The Kubernetes control plane is responsible for managing the entire lifecycle of pods. It continuously monitors cluster state and ensures that actual conditions match the desired configuration. When discrepancies are detected, such as failed pods or insufficient replicas, the control plane takes corrective action. This may involve creating new pods, deleting failed ones, or rescheduling workloads across nodes. Containers are indirectly managed through this system, as their lifecycle is entirely dependent on the pods that host them.

Long-Term Significance of Pod Abstraction in Cloud-Native Systems

The pod abstraction remains one of the most important architectural decisions in Kubernetes. It provides a balance between simplicity and flexibility, allowing complex multi-container applications to be managed as single operational units. By decoupling containers from direct orchestration and introducing pods as an intermediary layer, Kubernetes enables scalable, resilient, and efficient distributed systems. This abstraction continues to serve as the foundation for modern cloud-native architectures, supporting everything from microservices to large-scale enterprise workloads.

Conclusion

The relationship between pods and containers forms the foundation of how Kubernetes operates, and understanding this relationship is essential for grasping the broader principles of cloud-native architecture. At its core, Kubernetes does not treat containers as standalone entities in production environments. Instead, it organizes them into pods, which serve as the smallest deployable and manageable units in the system. This distinction is not arbitrary; it reflects a deliberate architectural decision to simplify orchestration, improve scalability, and enable reliable execution of distributed workloads across clusters of machines.

Containers, by design, are lightweight and isolated runtime environments that package application code along with all necessary dependencies. This makes them highly portable and consistent across different computing environments. However, containers alone do not solve the challenges of orchestration at scale. When applications are composed of multiple interconnected services, managing each container independently becomes inefficient and error-prone. Kubernetes addresses this limitation by introducing pods as a higher-level abstraction that groups related containers together into a single operational unit.

A pod, therefore, is not simply a wrapper around a container. It is an execution context that defines how containers behave collectively. Containers within a pod share networking, storage, and lifecycle characteristics. This shared environment allows them to communicate efficiently using local interfaces, access shared volumes, and operate as part of a unified application component. This design is particularly useful in microservices architectures, where multiple processes need to work closely together while still maintaining logical separation.

One of the most important aspects of this relationship is lifecycle management. Containers do not have independent lifecycles in Kubernetes. Instead, their lifecycle is bound to the pod that hosts them. When a pod is created, all its containers are instantiated together. When a pod is terminated, all containers within it are also removed. If a failure occurs, Kubernetes does not attempt to repair individual containers in isolation. Instead, it replaces the entire pod with a new instance that matches the desired configuration. This approach ensures consistency and simplifies recovery mechanisms, but it also requires applications to be designed with statelessness in mind.

Another key element is the networking model. Containers within a pod share a single network namespace, meaning they operate under the same IP address and can communicate using localhost. This eliminates the complexity of inter-container networking within the same pod and allows developers to design tightly coupled components without external communication overhead. At the same time, communication between different pods is handled through Kubernetes networking abstractions such as services, which provide stable endpoints and load balancing across multiple pod instances. This separation of internal and external communication simplifies system design while maintaining scalability.

Storage behavior also reinforces the close relationship between pods and containers. While containers themselves are ephemeral and do not retain data after termination, pods can define shared volumes that persist for the duration of the pod’s lifecycle. These volumes allow multiple containers within the same pod to access and manipulate shared data, enabling use cases such as logging, caching, and inter-process communication. However, because pods are also ephemeral, persistent storage solutions are required when data must survive beyond the lifecycle of a single pod. This separation between compute and storage is a core principle in Kubernetes architecture.

From a scheduling perspective, Kubernetes treats pods as atomic units. The scheduler does not place individual containers onto nodes; instead, it evaluates entire pods and assigns them to appropriate nodes based on resource availability and constraints. This ensures that all containers within a pod are co-located on the same machine and can operate within a shared environment. Scheduling decisions also take into account factors such as resource requests, node affinity, and anti-affinity rules, which help distribute workloads efficiently across the cluster. This model ensures predictable behavior and efficient utilization of infrastructure resources.

Security considerations further highlight the importance of the pod abstraction. Containers within a pod can share security contexts that define user permissions, access controls, and privilege restrictions. This ensures consistent enforcement of security policies across all containers in the pod. Additionally, network policies can restrict communication between pods, providing isolation between different application components. This layered security approach reduces the attack surface and helps maintain a secure execution environment in multi-tenant clusters.

The pod model also plays a critical role in scaling and high availability. Applications are typically deployed as multiple identical pods distributed across different nodes. This allows Kubernetes to handle traffic spikes by increasing the number of pod replicas and ensures resilience by replacing failed pods automatically. Because each pod is identical in configuration, scaling operations are straightforward and do not require changes to individual containers. This horizontal scaling model is a key advantage of Kubernetes and is made possible by the standardized pod abstraction.

In operational terms, pods provide a unified view of application behavior. Monitoring, logging, and debugging are all performed at the pod level, allowing administrators to observe system performance in a structured and consistent way. Metrics such as CPU usage, memory consumption, and restart counts are collected per pod, providing insight into application health. Logs from multiple containers within a pod can be correlated to understand system behavior more effectively, especially in multi-container designs where components interact closely.

Ultimately, the distinction between pods and containers reflects a deeper design philosophy within Kubernetes. Containers are responsible for executing application logic in isolated environments, while pods are responsible for managing how those containers are deployed, coordinated, and maintained within a distributed system. This separation of concerns allows Kubernetes to scale from small deployments to massive global infrastructures without sacrificing consistency or control.

Understanding this relationship is essential for working effectively with Kubernetes in real-world environments. It clarifies why applications are structured the way they are, how failures are handled, and how scaling decisions are made. More importantly, it provides a conceptual foundation for designing applications that are resilient, scalable, and aligned with cloud-native principles.