Modern enterprise networks are no longer confined to a single physical location. Applications, services, and virtual machines are distributed across multiple data centers that may be located in different cities or even different regions. This distribution introduces a fundamental challenge for network designers: how to maintain seamless connectivity and consistent network behavior across geographically separated environments. Traditional Layer 2 networks were originally designed for localized communication within a single broadcast domain, which makes them inefficient when extended across long distances. As organizations grow, they often require workload mobility, disaster recovery capabilities, and high availability architectures that depend on consistent Layer 2 adjacency between sites. However, extending Layer 2 networks across Layer 3 infrastructure introduces complexity, including broadcast traffic issues, spanning tree limitations, and operational overhead. Overlay Transport Virtualization emerged as a solution to bridge this gap by enabling Layer 2 connectivity over Layer 3 transport networks without requiring fundamental changes to the underlying infrastructure. This approach allows organizations to maintain application continuity while leveraging scalable IP-based routing between data centers.

Understanding the Purpose of Overlay Transport Virtualization

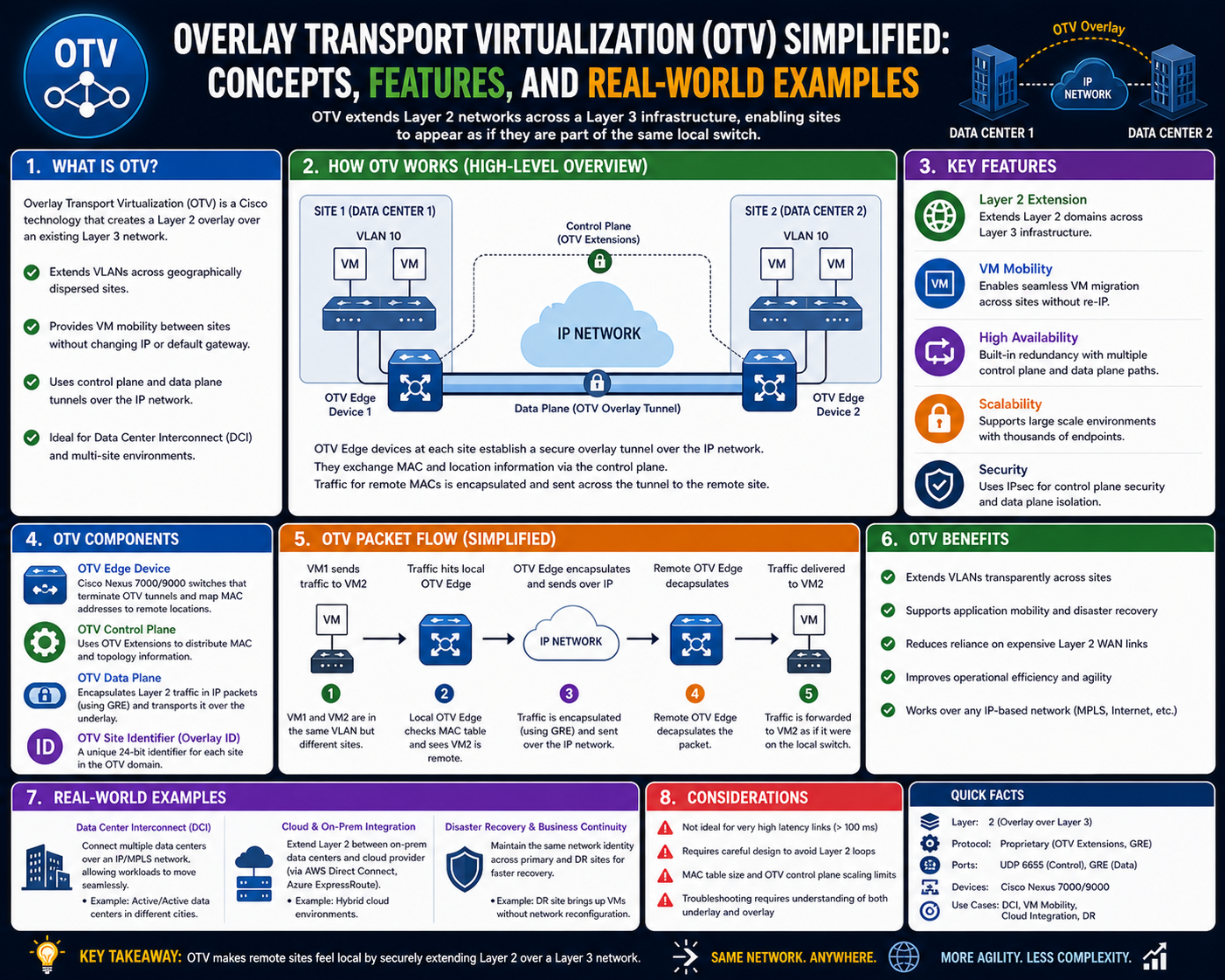

Overlay Transport Virtualization is designed to simplify the process of connecting multiple data centers while preserving the behavior of a single Layer 2 domain. Instead of relying on traditional methods such as stretched VLANs or complex VPN mesh topologies, it introduces an overlay network that operates independently of the physical transport layer. This overlay acts as a logical extension of the local Ethernet environment, allowing devices in different locations to communicate as if they were on the same network segment. The primary purpose of this approach is to enable workload mobility, simplify network management, and reduce dependency on rigid physical infrastructure designs. By decoupling the logical network from the physical network, Overlay Transport Virtualization provides greater flexibility in how data centers are interconnected. It allows engineers to design networks based on application requirements rather than being constrained by geographic limitations. This abstraction also helps reduce operational complexity, as changes to the underlying transport network do not directly impact the overlay configuration.

Core Concept of Layer 2 Extension Across Layer 3 Infrastructure

At the heart of Overlay Transport Virtualization is the concept of extending Layer 2 communication across a Layer 3 routed network. In traditional networking, Layer 2 domains rely on MAC address learning and broadcast mechanisms to facilitate communication between devices. However, these mechanisms do not scale efficiently across large geographic distances. Overlay Transport Virtualization addresses this limitation by encapsulating Layer 2 frames within Layer 3 packets, allowing them to be transported across IP networks. This encapsulation process ensures that Ethernet frames remain intact while being routed through standard IP infrastructure. When a frame needs to travel between data centers, it is wrapped inside an IP header and forwarded through the underlying network. Upon reaching the destination, the encapsulation is removed, and the original Ethernet frame is delivered to the target device. This method preserves the characteristics of a local Layer 2 network while leveraging the scalability and resilience of Layer 3 routing. It effectively creates a virtual bridge between remote locations without requiring direct physical connectivity at Layer 2.

Architectural Foundation of Overlay Transport Virtualization

The architecture of Overlay Transport Virtualization is built around several key components that work together to establish and maintain the overlay network. One of the most important components is the edge device, which acts as the boundary between the local Layer 2 network and the overlay infrastructure. These devices are responsible for encapsulating outgoing traffic and decapsulating incoming traffic. They also participate in the control mechanisms that allow the overlay to function efficiently. Another critical component is the logical overlay interface, which defines the virtual network segment used for communication across sites. This interface behaves like a traditional Layer 2 interface from the perspective of connected devices, even though it operates over a Layer 3 transport. The underlying transport network itself is typically composed of standard IP routing infrastructure, which carries encapsulated traffic between edge devices. This separation between overlay and underlay networks is a fundamental design principle, as it allows each layer to operate independently. The architecture is also designed with scalability in mind, enabling multiple sites to be connected without requiring full mesh connectivity between every location.
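
The point about avoiding a full mesh can be made concrete with a little arithmetic. The sketch below compares the number of point-to-point links needed to join every pair of sites with a simplified model in which each site attaches to the shared overlay once. The function names are illustrative, and the model counts configured attachments, not data-plane paths.

```python
def full_mesh_links(sites: int) -> int:
    """Point-to-point links needed so that every pair of sites is connected."""
    return sites * (sites - 1) // 2


def overlay_attachments(sites: int) -> int:
    """Simplified model (an assumption): each site attaches to the overlay once."""
    return sites


for n in (2, 4, 8, 16):
    print(f"{n:>2} sites: {full_mesh_links(n):>3} mesh links vs "
          f"{overlay_attachments(n):>2} overlay attachments")
```

The mesh count grows quadratically while the attachment count grows linearly, which is why adding a site to an overlay is mostly a local change.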

Role of Encapsulation in Network Communication

Encapsulation is a core mechanism that enables Overlay Transport Virtualization to function across Layer 3 networks. When a device in one data center sends a frame to a device in another data center, the frame must be prepared for transport across the IP-based infrastructure. This is achieved by wrapping the original Ethernet frame inside an IP packet. The encapsulation process adds routing information to the frame, allowing it to be forwarded like any other IP packet through the network. Once the packet reaches the destination edge device, the outer IP header is removed, and the original Ethernet frame is restored. This process is transparent to end devices, which continue to operate as if they are connected to a local network. Encapsulation also allows multiple Layer 2 domains to coexist over the same physical infrastructure without interfering with each other. By separating logical communication from physical transport, the network gains flexibility and scalability. This mechanism is essential for enabling seamless communication across geographically distributed environments.
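
As a minimal sketch of the wrap-and-unwrap step described above, the following code prefixes an Ethernet frame with a toy outer header and then removes it again. The 8-byte header (source and destination edge addresses only) is an assumption made for illustration. It is not the real Overlay Transport Virtualization header format, and the addresses are made up.

```python
import ipaddress
import struct
from typing import Tuple

OUTER_HEADER_LEN = 8  # toy header: 4-byte source edge + 4-byte destination edge


def encapsulate(frame: bytes, src_edge: str, dst_edge: str) -> bytes:
    """Prefix the untouched Ethernet frame with the toy outer header."""
    outer = struct.pack(
        "!4s4s",
        ipaddress.IPv4Address(src_edge).packed,
        ipaddress.IPv4Address(dst_edge).packed,
    )
    return outer + frame


def decapsulate(packet: bytes) -> Tuple[str, str, bytes]:
    """Strip the outer header and return (src_edge, dst_edge, original_frame)."""
    src, dst = struct.unpack("!4s4s", packet[:OUTER_HEADER_LEN])
    return (
        str(ipaddress.IPv4Address(src)),
        str(ipaddress.IPv4Address(dst)),
        packet[OUTER_HEADER_LEN:],
    )


# Destination MAC, source MAC, EtherType 0x0800, then an opaque payload.
frame = bytes.fromhex("001122334455" "66778899aabb" "0800") + b"payload"
packet = encapsulate(frame, "10.0.1.1", "10.0.2.1")
src_edge, dst_edge, restored = decapsulate(packet)
print(src_edge, dst_edge, restored == frame)
```

The inner frame is never modified, which is what keeps the process invisible to the end devices.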

MAC Address Learning and Distribution Mechanisms

Efficient handling of MAC address information is essential for the proper functioning of Overlay Transport Virtualization. In traditional Layer 2 networks, switches learn MAC addresses dynamically from the source addresses of incoming frames and flood any frame whose destination is unknown. This approach does not scale well across sites, because every flooded frame consumes inter-site bandwidth. Overlay Transport Virtualization instead has each edge device advertise the MAC addresses it has learned locally to the other edge devices through a control protocol. Each edge device therefore holds a table that maps every known remote MAC address to the edge device behind which it sits. Traffic can then be forwarded directly to the correct site without flooding, which reduces congestion on the transport network. Because every edge device builds its table from the same advertisements, the devices share a consistent view of endpoint locations, which also helps avoid duplicate delivery and forwarding loops between sites. Keeping this mapping current as endpoints appear, move, or disappear is what allows frames to be delivered efficiently and accurately.
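
The exchange of MAC address information between edge devices can be sketched as follows. This is a toy model under stated assumptions: the `EdgeDevice` class, its method names, and the direct method call standing in for a control-protocol message are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Set


@dataclass
class EdgeDevice:
    name: str
    local_macs: Set[str] = field(default_factory=set)
    # Remote MAC address -> name of the edge device that advertised it.
    remote_macs: Dict[str, str] = field(default_factory=dict)

    def learn_local(self, mac: str, peers: Iterable["EdgeDevice"]) -> None:
        """Record a locally attached MAC and advertise it to every other edge."""
        self.local_macs.add(mac)
        for peer in peers:
            if peer is not self:
                peer.receive_advertisement(mac, self.name)

    def receive_advertisement(self, mac: str, origin: str) -> None:
        """Install or refresh the mapping for a MAC learned at another site."""
        self.remote_macs[mac] = origin


dc1, dc2, dc3 = EdgeDevice("dc1"), EdgeDevice("dc2"), EdgeDevice("dc3")
overlay = [dc1, dc2, dc3]
dc1.learn_local("00:50:56:aa:bb:cc", overlay)
print(dc2.remote_macs)
print(dc3.remote_macs)
```

After one advertisement, both remote sites know where the endpoint lives without any data frame having been flooded.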

Separation of Control Plane and Data Plane Functions

A key design principle of Overlay Transport Virtualization is the separation of control plane and data plane operations. The control plane is responsible for exchanging network information, maintaining topology awareness, and distributing MAC address mappings between edge devices. It ensures that all participating devices have a consistent understanding of the network structure. The data plane, on the other hand, handles the actual forwarding of traffic based on the information provided by the control plane. This separation allows each function to operate independently, improving scalability and performance. The control plane focuses on intelligence and decision-making, while the data plane focuses on high-speed packet forwarding. This division of responsibilities ensures that changes in network topology are quickly reflected across all devices without disrupting traffic flow. It also enables more efficient use of network resources, as forwarding decisions are based on accurate and up-to-date information.

Network Transparency and Incremental Deployment Advantages

One of the significant advantages of Overlay Transport Virtualization is its ability to operate transparently over existing network infrastructure. Since it relies on IP-based transport, it does not require major modifications to the underlying network. This makes it possible to deploy the technology incrementally, without disrupting existing services. Organizations can introduce Overlay Transport Virtualization alongside their current interconnect solutions, gradually transitioning to a more flexible architecture. This incremental approach reduces risk and allows for smoother adoption. The transparency of the system also means that individual data center sites do not need to be aware of the entire network topology. Each site operates independently, while still participating in the global overlay. This design simplifies network management and reduces the complexity associated with large-scale deployments. It also allows organizations to reuse existing infrastructure investments while enhancing their capabilities through overlay technology.

Support for Workload Mobility Across Data Centers

Workload mobility is one of the primary use cases for Overlay Transport Virtualization. In modern virtualized environments, applications and virtual machines often need to move between data centers for load balancing, maintenance, or disaster recovery purposes. Such mobility requires consistent Layer 2 connectivity, as many applications depend on stable network identities and configurations. Overlay Transport Virtualization enables this by maintaining the same Layer 2 environment across multiple locations. When a workload is moved from one data center to another, its network characteristics remain unchanged, allowing it to continue functioning without interruption. This capability is particularly important for cloud-based architectures and hybrid environments where resources are dynamically allocated. By preserving network continuity, Overlay Transport Virtualization ensures that applications remain available and responsive regardless of their physical location.
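
A small sketch can illustrate what "network characteristics remain unchanged" means in practice. The `Workload` and `OverlayDirectory` names below are hypothetical, as are the site names and addresses. The only thing that changes during the move is the overlay's record of where the MAC address lives.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Workload:
    name: str
    mac: str
    ip: str


class OverlayDirectory:
    """Hypothetical record of which site currently hosts each MAC address."""

    def __init__(self) -> None:
        self._site_by_mac: Dict[str, str] = {}

    def place(self, workload: Workload, site: str) -> None:
        # A move simply overwrites the previous location for the same MAC.
        self._site_by_mac[workload.mac] = site

    def site_of(self, workload: Workload) -> str:
        return self._site_by_mac[workload.mac]


vm = Workload(name="app-01", mac="00:50:56:aa:bb:cc", ip="192.0.2.10")
directory = OverlayDirectory()
directory.place(vm, "dc-east")
before = (vm.mac, vm.ip, directory.site_of(vm))
directory.place(vm, "dc-west")  # migration to the other site
after = (vm.mac, vm.ip, directory.site_of(vm))
print(before)
print(after)
```

The workload's MAC and IP address are identical before and after the move, so peers that already know those addresses need no reconfiguration.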

High-Level Operational Flow of Traffic in an Overlay Network

The operational flow of traffic within an Overlay Transport Virtualization environment begins when a device generates a frame destined for another location. The local edge device examines the destination MAC address and determines whether it belongs to a local or remote endpoint. If the destination is remote, the frame is encapsulated within an IP packet and sent across the transport network. The underlying IP infrastructure routes the packet to the appropriate remote edge device based on standard routing protocols. Once the packet arrives at the destination, the encapsulation is removed, and the original frame is delivered to the target device. This entire process occurs transparently, without requiring any changes to the end devices. The efficiency of this flow depends on accurate MAC address mapping and effective control plane communication. By ensuring that each step is optimized, Overlay Transport Virtualization provides a seamless communication experience across distributed data centers.
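
The forwarding decision at the local edge device can be sketched as a three-way lookup. The function and enum names are illustrative. The sketch also assumes that a frame for an unknown destination is not flooded across the overlay, which is how Cisco's implementation is usually described by default. Other designs may behave differently.

```python
from enum import Enum
from typing import Dict, Optional, Set, Tuple


class Action(Enum):
    DELIVER_LOCAL = "deliver_local"
    ENCAPSULATE = "encapsulate"
    DROP_UNKNOWN = "drop_unknown"


def forward(
    dst_mac: str,
    local_macs: Set[str],
    remote_macs: Dict[str, str],
) -> Tuple[Action, Optional[str]]:
    """Return the action for a frame and, if remote, the target edge IP."""
    if dst_mac in local_macs:
        return (Action.DELIVER_LOCAL, None)
    edge_ip = remote_macs.get(dst_mac)
    if edge_ip is not None:
        return (Action.ENCAPSULATE, edge_ip)
    # Assumption: unknown unicast is not sent across the overlay.
    return (Action.DROP_UNKNOWN, None)


local = {"00:00:00:00:00:01"}
remote = {"00:00:00:00:00:02": "10.0.2.1"}
for mac in ("00:00:00:00:00:01", "00:00:00:00:00:02", "00:00:00:00:00:03"):
    print(mac, forward(mac, local, remote))
```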

Evolution of Data Center Interconnect and the Need for Overlay Solutions

As enterprise IT environments evolved from traditional on-premises deployments to highly virtualized and distributed architectures, the way data centers communicate also had to change. Earlier generations of data center interconnect relied heavily on physical circuits, manual configurations, and rigid Layer 2 extensions. These approaches worked well when workloads were static and data centers were few in number. However, modern environments demand something far more dynamic. Applications are now designed for mobility, scalability, and continuous availability. Virtual machines are frequently moved between physical locations, and cloud integration has become a standard requirement rather than an optional enhancement. This shift has placed enormous pressure on traditional network designs, especially those that depend on large stretched Layer 2 domains. The challenge lies in maintaining consistent network behavior while avoiding the instability and inefficiency associated with extending broadcast domains over long distances. Overlay Transport Virtualization emerged as a response to these challenges by introducing a logical abstraction layer that decouples the application network from the physical transport infrastructure. This evolution represents a shift from hardware-centric design to software-defined connectivity, enabling more flexible and scalable data center architectures.

Logical Separation Between Overlay and Underlay Networks

A fundamental principle of Overlay Transport Virtualization is the separation between the overlay network and the underlay network. The underlay network refers to the physical or routed IP infrastructure that carries traffic between data centers. It is responsible for providing basic connectivity, routing, and transport services. The overlay network, on the other hand, is a virtual construct that exists on top of the underlay. It is responsible for maintaining Layer 2 adjacency and enabling seamless communication between distributed endpoints. This separation allows each layer to function independently, which is critical for scalability and operational simplicity. Changes made to the underlay network, such as routing updates or link modifications, do not directly impact the overlay configuration. Similarly, changes within the overlay, such as endpoint mobility or MAC address updates, do not require modifications to the physical infrastructure. This decoupling reduces complexity and allows network administrators to manage each layer according to its specific role. It also improves fault isolation, as issues in one layer do not necessarily propagate to the other.
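
The decoupling described above can be pictured as two separate lookup tables. In this sketch, which uses made-up addresses and link names, the overlay table maps a MAC address to a remote edge device and the underlay table maps that edge device to a next hop. Rerouting in the underlay leaves the overlay table untouched.

```python
from typing import Dict, Tuple

# Overlay state: endpoint MAC -> IP address of the remote edge device.
overlay_table: Dict[str, str] = {"00:50:56:aa:bb:cc": "10.0.2.1"}
# Underlay state: edge device IP -> next hop chosen by IP routing.
underlay_routes: Dict[str, str] = {"10.0.2.1": "wan-link-a"}


def resolve(mac: str, overlay: Dict[str, str],
            underlay: Dict[str, str]) -> Tuple[str, str]:
    """Look up the remote edge in the overlay, then its next hop in the underlay."""
    edge_ip = overlay[mac]
    return edge_ip, underlay[edge_ip]


print(resolve("00:50:56:aa:bb:cc", overlay_table, underlay_routes))

overlay_before = dict(overlay_table)
underlay_routes["10.0.2.1"] = "wan-link-b"  # underlay reroutes after a link change
print(resolve("00:50:56:aa:bb:cc", overlay_table, underlay_routes))
print(overlay_table == overlay_before)
```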

Role of Edge Devices in Overlay Transport Virtualization

Edge devices play a central role in the operation of Overlay Transport Virtualization. These devices are positioned at the boundary between the local data center network and the overlay infrastructure. Their primary responsibility is to handle the encapsulation and decapsulation of traffic as it enters and exits the overlay. When a frame originates within a local network and is destined for a remote location, the edge device encapsulates it within an IP packet for transport across the underlay network. Upon arrival at the destination site, the receiving edge device removes the encapsulation and forwards the original frame to its intended recipient. Beyond traffic handling, edge devices also participate in control mechanisms that distribute network information across the overlay. They exchange data related to endpoint locations, MAC address mappings, and network topology. This ensures that all participating devices maintain a consistent view of the overlay environment. Edge devices must be highly reliable and capable of handling significant traffic loads, as they represent critical points in the communication path between data centers. Their performance directly impacts the efficiency and stability of the entire overlay network.

Control Plane Communication and Network Intelligence Distribution

The control plane in Overlay Transport Virtualization serves as the intelligence layer of the network. It is responsible for distributing information about network endpoints, maintaining topology awareness, and ensuring consistency across all participating devices. Instead of relying on traditional flooding-based discovery methods, the control plane proactively shares information between edge devices. This allows the network to operate more efficiently and reduces unnecessary traffic. When a new endpoint becomes available, its location is advertised through control plane communication so that all relevant devices are updated. This ensures that traffic can be forwarded directly to the correct destination without relying on broadcast mechanisms. The control plane also plays a key role in maintaining network stability. When changes occur, such as a device failure or a link disruption, updated information is quickly propagated to all devices in the overlay. This rapid convergence helps maintain uninterrupted connectivity and reduces the risk of packet loss or misrouting. The intelligence provided by the control plane is what enables Overlay Transport Virtualization to scale effectively across large and complex environments.

Data Plane Forwarding and Traffic Optimization Techniques

The data plane is responsible for the actual movement of traffic within the Overlay Transport Virtualization environment. It uses information provided by the control plane to make forwarding decisions and ensure that packets reach their intended destinations efficiently. When a frame is received, the data plane determines whether the destination is local or remote. If the destination is remote, the frame is encapsulated and sent through the underlay network. If the destination is local, it is delivered directly without encapsulation. This decision-making process is critical for optimizing performance and reducing unnecessary overhead. The data plane is designed to handle large volumes of traffic at high speed, making it suitable for enterprise-scale deployments. It also supports advanced traffic optimization techniques, such as efficient multicast handling and load balancing across multiple paths. These capabilities ensure that the network can adapt to varying traffic patterns and maintain consistent performance even under heavy load conditions. By separating forwarding logic from control logic, the data plane achieves both efficiency and scalability.

MAC Address Mapping and Reduction of Broadcast Dependency

In traditional Layer 2 networks, MAC address learning relies heavily on flooding. When a device sends traffic to a destination the switches have not yet learned, they flood the frame out of every port in the VLAN until the destination replies and its location is learned. While this approach works in small environments, it becomes inefficient in large distributed networks, where each flooded frame crosses every inter-site link. Overlay Transport Virtualization addresses this limitation by having edge devices share MAC address information through control plane communication instead of flooding. Each edge device maintains its own table of endpoint locations, built from the advertisements it receives from its peers. As a result, traffic can be forwarded directly to the correct destination site. This significantly reduces load on the transport network and improves overall efficiency. It also limits the exposure of traffic to unintended recipients, since frames are no longer copied to sites that do not host the destination. By minimizing reliance on flooding, Overlay Transport Virtualization creates a more predictable and controlled communication environment. This approach is particularly beneficial in environments with high mobility, where endpoints frequently move between data centers.

Multisite Connectivity and Geographic Distribution of Workloads

One of the most powerful capabilities of Overlay Transport Virtualization is its ability to connect multiple data centers across geographically distributed locations. This enables organizations to build multisite architectures that support redundancy, disaster recovery, and load balancing. Workloads can be distributed across different regions while maintaining consistent network behavior. This geographic flexibility is essential for global enterprises that require high availability and low-latency access to applications. By extending Layer 2 connectivity across sites, Overlay Transport Virtualization allows applications to function as if they were operating within a single unified data center. This simplifies application design and reduces the complexity of managing distributed systems. It also enables more efficient resource utilization, as workloads can be dynamically shifted based on demand or operational requirements. The ability to seamlessly connect multiple locations provides a strong foundation for modern cloud and hybrid infrastructure strategies.

Transport Network Independence and Infrastructure Flexibility

A key advantage of Overlay Transport Virtualization is its independence from the underlying transport network. The transport itself needs no specialized hardware or dedicated Layer 2 circuits; only the edge devices at each site must support the overlay. Encapsulated traffic is carried between sites by standard IP routing infrastructure, which makes the design adaptable to different network environments. Whether the underlying network is based on private WAN links, service provider infrastructure, or internet-based connectivity, Overlay Transport Virtualization can operate as long as IP connectivity between the edge devices is available and the path can carry the encapsulated frame size. This flexibility allows organizations to choose transport options based on cost, performance, and availability requirements. It also reduces dependency on any one transport technology or provider, making the network more resilient to change. By abstracting the transport layer, Overlay Transport Virtualization enables a more modular network design.

Redundancy Models and High Availability Design Principles

High availability is a critical requirement in modern data center environments, and Overlay Transport Virtualization is designed with redundancy in mind. Multiple edge devices can be deployed within each data center to ensure that traffic can continue to flow even if a device fails. These devices can operate in active-active or active-standby configurations, depending on design requirements. Redundant paths within the underlay network also contribute to overall resilience, ensuring that traffic can be rerouted in the event of link failures. The control plane continuously monitors network health and updates routing information as needed to maintain optimal connectivity. This dynamic adaptation helps prevent service disruptions and ensures that applications remain accessible even during network instability. High availability is further enhanced by the distributed nature of the overlay, which eliminates single points of failure at the logical network level.

Traffic Segmentation and Logical Network Isolation

Overlay Transport Virtualization supports traffic segmentation by allowing multiple logical networks to coexist over the same physical infrastructure. Each overlay instance operates independently, maintaining its own set of MAC address mappings and forwarding rules. This enables organizations to isolate different application environments, business units, or security zones within the same physical network. Logical segmentation improves security by preventing unauthorized access between isolated network segments. It also enhances performance by reducing unnecessary traffic between unrelated workloads. This capability is particularly useful in large enterprises where multiple teams or services share the same underlying infrastructure. By providing logical separation, Overlay Transport Virtualization enables more efficient resource utilization while maintaining strong isolation between different network domains.
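
Logical isolation between overlay instances can be sketched by giving each instance its own MAC table. The class and instance names below are hypothetical. The same MAC address can appear in two instances without conflict, and a lookup never crosses from one instance into another.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, Optional


class SegmentedOverlay:
    """Hypothetical container holding one MAC table per overlay instance."""

    def __init__(self) -> None:
        # Overlay instance name -> {MAC address -> remote edge IP}.
        self._tables: DefaultDict[str, Dict[str, str]] = defaultdict(dict)

    def learn(self, instance: str, mac: str, edge_ip: str) -> None:
        self._tables[instance][mac] = edge_ip

    def lookup(self, instance: str, mac: str) -> Optional[str]:
        # .get() on the outer dict avoids creating empty tables on lookup.
        return self._tables.get(instance, {}).get(mac)


net = SegmentedOverlay()
net.learn("production", "00:00:00:00:00:01", "10.0.2.1")
net.learn("test", "00:00:00:00:00:01", "10.0.3.1")
print(net.lookup("production", "00:00:00:00:00:01"))
print(net.lookup("test", "00:00:00:00:00:01"))
print(net.lookup("finance", "00:00:00:00:00:01"))
```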

Scalability Considerations in Large-Scale Deployments

Scalability is one of the defining characteristics of Overlay Transport Virtualization. As organizations grow, their networks must be able to accommodate increasing numbers of endpoints, data centers, and applications. The architecture of Overlay Transport Virtualization is designed to scale horizontally, allowing additional edge devices and sites to be added without significant redesign. The use of control plane communication for MAC address distribution reduces the need for flooding, which improves performance as the network grows. The separation of overlay and underlay networks also ensures that scaling the logical network does not place additional strain on the physical infrastructure. This modular approach allows organizations to expand their networks incrementally while maintaining consistent performance and reliability.

Advanced Control Plane Behavior in Overlay Transport Virtualization

In large deployments, where many data centers are interconnected, the control plane must keep every edge device's view of the overlay consistent without delaying forwarding decisions. Unlike traditional Layer 2 environments, which discover endpoints by flooding, the control plane in Overlay Transport Virtualization runs a routing-style protocol between edge devices (Cisco's implementation is based on IS-IS) to exchange endpoint and topology information. This reduces unnecessary traffic and ensures that updates are propagated in a controlled and predictable manner. When a new endpoint appears in the network, its presence is communicated through the control plane so that all relevant devices can update their forwarding tables. Similarly, when a device becomes unreachable or a path changes, the control plane ensures that outdated information is withdrawn or corrected. This synchronization allows the network to adapt quickly to changes while maintaining stability. The efficiency of the control plane directly affects the overall performance of the overlay, because the data plane can only forward correctly when its tables are accurate.

Control Plane Convergence and Network Stability Mechanisms

Convergence in Overlay Transport Virtualization refers to the process by which all devices in the overlay network reach a consistent state after a change occurs. This could include the addition of a new data center, failure of a link, or migration of workloads between sites. Fast convergence is essential to ensure that traffic continues to flow without interruption. The control plane is designed to propagate updates rapidly so that all edge devices can adjust their forwarding behavior accordingly. When a change occurs, update messages are distributed across the network, and each device modifies its internal mapping tables. This ensures that traffic is always directed to the correct location, even in dynamic environments. Stability is maintained by avoiding excessive churn in routing information and ensuring that updates are validated before being applied. This prevents inconsistent states that could lead to packet loss or misrouting. The balance between speed and stability is a critical aspect of control plane design, as overly aggressive updates can destabilize the network, while slow updates can result in outdated forwarding decisions.
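
One common way to validate updates before applying them is to stamp each update with a sequence number and ignore anything older than what is already installed, an idea borrowed from link-state routing protocols. The sketch below illustrates that idea only. The `Update` and `MappingTable` types are hypothetical and do not reproduce any real protocol's message format.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Update:
    mac: str
    edge: Optional[str]  # None means the MAC is withdrawn
    seq: int             # higher number = newer information


class MappingTable:
    def __init__(self) -> None:
        # MAC -> (edge or None, sequence number of the installed update).
        self._entries: Dict[str, Tuple[Optional[str], int]] = {}

    def apply(self, update: Update) -> bool:
        """Install the update unless it is stale; return True if installed."""
        current = self._entries.get(update.mac)
        if current is not None and update.seq <= current[1]:
            return False  # stale or duplicate, ignore to avoid churn
        self._entries[update.mac] = (update.edge, update.seq)
        return True

    def lookup(self, mac: str) -> Optional[str]:
        entry = self._entries.get(mac)
        return entry[0] if entry is not None else None


table = MappingTable()
print(table.apply(Update("00:aa", "dc-east", seq=1)))
print(table.apply(Update("00:aa", "dc-west", seq=3)))  # workload moved
print(table.apply(Update("00:aa", "dc-east", seq=2)))  # late, out-of-order update
print(table.lookup("00:aa"))
```

Rejecting the late update is what keeps a delayed message from sending traffic back to the site the workload has already left.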

Data Plane Efficiency and High-Speed Packet Forwarding

The data plane in Overlay Transport Virtualization is optimized for high-speed packet forwarding, enabling efficient communication between distributed data centers. Once the control plane has provided the necessary mapping information, the data plane is responsible for executing forwarding decisions with minimal delay. When a packet arrives at an edge device, it is processed quickly to determine whether it should be forwarded locally or encapsulated for remote delivery. If the destination is within the same site, the packet is delivered directly without additional processing. If the destination is remote, the data plane encapsulates the packet within an IP header and sends it through the underlay network. This encapsulation process is designed to be lightweight to ensure that performance is not degraded. The efficiency of the data plane is critical in environments where large volumes of traffic are constantly flowing between data centers. By minimizing processing overhead and leveraging optimized forwarding paths, Overlay Transport Virtualization ensures that applications experience low latency and high throughput.

Encapsulation Techniques and Transport Efficiency

Encapsulation is the mechanism that allows Layer 2 frames to be transported across Layer 3 networks in Overlay Transport Virtualization. The edge device wraps each Ethernet frame inside an IP packet so that it can be routed through standard IP infrastructure. The outer header carries the addresses of the source and destination edge devices, which is all the transport network needs to deliver the packet. Once the packet arrives at its destination, the outer header is removed and the original Ethernet frame is restored. This process is transparent to end devices, which continue to operate as if they were connected to a local network. One advantage of encapsulation is that it uses existing IP infrastructure without changes to its routing. It also allows multiple virtual networks to coexist over the same physical transport. The cost is a fixed number of extra bytes on every frame: the transport path must support an MTU large enough for the largest site frame plus the overlay headers, and the overhead slightly reduces usable throughput, most noticeably for small frames. The encapsulation is therefore designed to add as few header bytes as possible while preserving the information needed for routing.
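
The effect of encapsulation overhead on MTU and efficiency is simple arithmetic. The sketch below assumes 42 bytes of overhead, the figure commonly cited for Cisco's implementation. Treat it as a parameter and substitute the value that applies to your platform.

```python
OVERHEAD_BYTES = 42  # assumption: commonly cited overhead for Cisco's implementation


def required_transport_mtu(site_mtu: int, overhead: int = OVERHEAD_BYTES) -> int:
    """Smallest transport MTU that carries a full-size site packet unfragmented."""
    return site_mtu + overhead


def max_site_mtu(transport_mtu: int, overhead: int = OVERHEAD_BYTES) -> int:
    """Largest site MTU that fits when the transport MTU cannot be raised."""
    return transport_mtu - overhead


def efficiency(payload: int, overhead: int = OVERHEAD_BYTES) -> float:
    """Fraction of transmitted bytes that belong to the original frame."""
    return payload / (payload + overhead)


print(required_transport_mtu(1500))
print(max_site_mtu(1500))
for size in (64, 512, 1500):
    print(size, round(efficiency(size), 3))
```

The relative cost of the header is highest for small frames, which is why the overhead matters more for chatty workloads than for bulk transfers.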

Multihoming and Redundant Edge Device Architectures

Multihoming is an important design feature in Overlay Transport Virtualization that enhances reliability and availability. It refers to the practice of connecting a single site to multiple edge devices or multiple transport paths. This ensures that if one device or link fails, traffic can continue to flow through alternate paths without interruption. In a multihomed configuration, edge devices work together to coordinate traffic forwarding and maintain consistency in MAC address mappings. The control plane plays a crucial role in ensuring that all devices are aware of each other’s presence and capabilities. Redundancy is achieved not only at the device level but also at the path level, allowing traffic to be rerouted dynamically in response to failures. This design significantly improves resilience and ensures that critical applications remain available even during network disruptions. Multihoming also enables load balancing, where traffic is distributed across multiple devices or links to optimize performance.

Authoritative Edge Device Selection and Traffic Ownership

In environments where multiple edge devices exist within a single site, it is necessary to determine which device is responsible for forwarding specific traffic. This is managed through the concept of the authoritative edge device. In Cisco's implementation the authoritative role is assigned per extended VLAN rather than per endpoint: for each VLAN, exactly one edge device in the site forwards that VLAN's traffic to and from the overlay and advertises the MAC addresses learned in it. This prevents duplicate forwarding and keeps a frame from looping back into the site through a second edge device. The edge devices in a site elect the authoritative device for each VLAN among themselves, so the VLANs can be divided between them and every device carries a share of the traffic. If an edge device fails, authority for its VLANs is reassigned to a surviving device. This mechanism ensures that every VLAN always has exactly one active forwarder, improving efficiency and avoiding unnecessary duplication.
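
Cisco's documentation describes the authoritative role as being assigned per VLAN, with the VLANs divided among the edge devices in a site. The sketch below is a stand-in for such an election, assuming devices are ordered by name and the VLAN ID modulo the device count picks the owner. The device names and the exact tie-breaking rule are assumptions, not the vendor's algorithm.

```python
from typing import List


def elect_authoritative(vlan: int, edge_devices: List[str]) -> str:
    """Pick the one edge device that forwards this VLAN for the site."""
    if not edge_devices:
        raise ValueError("site has no edge devices")
    ordered = sorted(edge_devices)       # every device derives the same order
    return ordered[vlan % len(ordered)]  # deterministic split across devices


site = ["edge-b", "edge-a"]
for vlan in (10, 11, 12, 13):
    print(vlan, elect_authoritative(vlan, site))

# After edge-a fails, the survivor becomes authoritative for every VLAN.
survivors = ["edge-b"]
for vlan in (10, 11):
    print(vlan, elect_authoritative(vlan, survivors))
```

Because each device computes the result from the same inputs, no two devices can both believe they own the same VLAN once they agree on the membership list.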

Design Considerations for Scalable Overlay Networks

Designing a scalable Overlay Transport Virtualization network requires careful consideration of several factors, including topology, traffic patterns, and failure domains. One of the primary design goals is to minimize complexity while maximizing flexibility. The separation of overlay and underlay networks allows designers to focus on logical connectivity without being constrained by physical limitations. However, it is still important to ensure that the underlying transport network can handle the expected traffic load. Latency and bandwidth between data centers must be considered, as they directly impact application performance. Another key consideration is the number of endpoints and how they are distributed across sites. Large-scale deployments require efficient MAC address distribution mechanisms to prevent control plane overload. Proper planning of redundancy and failover mechanisms is also essential to ensure continuous availability. By carefully balancing these factors, organizations can build robust and scalable overlay networks that support evolving business needs.

Traffic Optimization Strategies in Distributed Environments

Traffic optimization is a critical aspect of Overlay Transport Virtualization, especially in environments where large volumes of data are exchanged between sites. One of the primary optimization strategies is reducing unnecessary flooding by leveraging control plane-based MAC address learning. Because edge devices learn remote MAC addresses from control plane advertisements, unicast traffic is sent only to the edge device that advertised the destination rather than being flooded to every site. Another strategy involves path optimization: the overlay relies on the underlay's IP routing to select the most efficient route between edge devices under current network conditions. Load balancing can also be used to distribute traffic across multiple links or devices, preventing congestion and improving performance. Quality of service mechanisms may be applied to prioritize critical applications and ensure that they receive sufficient bandwidth. These optimization techniques work together to enhance overall network efficiency and provide consistent performance across distributed environments.
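As a rough illustration of how control plane-based learning limits flooding, the sketch below decides which remote edge devices should receive a frame. The function name, the table layout, and the device names are hypothetical. The choice to drop unknown unicast instead of flooding it across the overlay is an assumption made for this sketch.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"


def overlay_targets(dst_mac, mac_table, remote_edges):
    """Return the remote edge devices that should receive a frame.

    mac_table maps a MAC address to the remote edge device that advertised
    it through the control plane (all names here are illustrative).
    """
    dst_mac = dst_mac.lower()
    if dst_mac == BROADCAST:
        return sorted(remote_edges)   # true broadcast still reaches every site
    owner = mac_table.get(dst_mac)
    if owner is None:
        return []                     # assumption: unknown unicast is not flooded
    return [owner]                    # known unicast goes to exactly one device


mac_table = {"00:11:22:33:44:55": "edge-dc2"}
remote_edges = {"edge-dc2", "edge-dc3"}

print(overlay_targets("00:11:22:33:44:55", mac_table, remote_edges))  # ['edge-dc2']
print(overlay_targets("00:aa:bb:cc:dd:ee", mac_table, remote_edges))  # []
print(overlay_targets(BROADCAST, mac_table, remote_edges))            # ['edge-dc2', 'edge-dc3']
```

The key point is the middle case: a destination that no edge device has advertised generates no inter-site traffic at all, which is what keeps the overlay quiet as the number of sites grows.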

Integration with Virtualized and Cloud-Based Environments

Overlay Transport Virtualization integrates well with virtualized infrastructure and cloud-based environments, where workloads are frequently moved between physical hosts. In such environments, maintaining a consistent network identity is essential for application continuity. The ability to extend Layer 2 connectivity across data centers allows virtual machines to migrate without requiring changes to their network configuration. This simplifies workload mobility and supports dynamic resource allocation. Cloud environments also benefit from the abstraction provided by overlay networks, as they can operate independently of the underlying physical infrastructure. This enables hybrid architectures where on-premises data centers are seamlessly connected to cloud platforms. The flexibility of Overlay Transport Virtualization makes it suitable for modern IT strategies that emphasize agility, scalability, and automation.
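A small sketch can show why a migrating virtual machine keeps its network identity: only the mapping from its MAC address to a site changes. The `EndpointMap` class and its sequence number are hypothetical. The sequence number is an assumption used here to reject stale advertisements and is not taken from the protocol.

```python
class EndpointMap:
    """Tracks which site currently hosts each MAC address (illustrative only)."""

    def __init__(self):
        self._location = {}  # mac -> (site, sequence)

    def advertise(self, mac, site, seq):
        """Record an advertisement; return True if it changed the mapping."""
        current = self._location.get(mac)
        if current is None or seq > current[1]:
            self._location[mac] = (site, seq)
            return True
        return False         # stale or duplicate update is ignored

    def site_of(self, mac):
        entry = self._location.get(mac)
        return entry[0] if entry else None


endpoints = EndpointMap()
vm_mac = "00:50:56:aa:bb:cc"

endpoints.advertise(vm_mac, "dc-east", seq=1)
print(endpoints.site_of(vm_mac))                        # dc-east

endpoints.advertise(vm_mac, "dc-west", seq=2)           # VM migrates
print(endpoints.site_of(vm_mac))                        # dc-west

print(endpoints.advertise(vm_mac, "dc-east", seq=1))    # False
```

The virtual machine's MAC and IP addresses never appear as inputs that change. The migration is expressed purely as a new location for the same identity.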

Operational Transparency and Network Simplification

One of the key advantages of Overlay Transport Virtualization is its operational transparency. Once configured, the overlay network operates independently of the underlying infrastructure, reducing the need for constant manual intervention. This simplifies network management and allows administrators to focus on higher-level tasks rather than low-level configuration details. Changes to the physical network do not typically require modifications to the overlay, which reduces operational overhead. This transparency also improves troubleshooting, as issues can often be isolated to either the overlay or underlay layer. By separating responsibilities and reducing dependencies, the network becomes easier to manage and scale.

Long-Term Architectural Impact on Data Center Design

The adoption of Overlay Transport Virtualization has a significant impact on long-term data center design strategies. It enables a shift away from rigid, hardware-dependent architectures toward more flexible, software-defined models. This shift allows organizations to design networks based on application requirements rather than physical constraints. As data centers continue to evolve, overlay technologies are expected to play an increasingly important role in enabling distributed, cloud-integrated environments. The ability to extend Layer 2 connectivity across global infrastructures supports emerging use cases such as edge computing, hybrid cloud integration, and disaster recovery automation. Over time, this approach contributes to more agile and resilient network architectures that can adapt to changing business demands without requiring complete redesigns of the underlying infrastructure.

Conclusion

Overlay Transport Virtualization represents a significant shift in how modern data center connectivity is designed and operated. Instead of relying on traditional Layer 2 extension techniques that often introduce complexity, scalability limitations, and operational risk, it introduces a structured overlay model that runs independently of the physical transport network. This separation of logical and physical layers allows organizations to build more flexible, resilient, and scalable architectures that better align with the demands of modern application environments.

One of the most important outcomes of this approach is the ability to extend Layer 2 connectivity across geographically distributed data centers without exposing the network to the typical problems associated with stretched broadcast domains. In traditional designs, extending Layer 2 over long distances often leads to issues such as excessive flooding, spanning tree instability, and inefficient bandwidth utilization. Overlay Transport Virtualization avoids these challenges by encapsulating Layer 2 traffic within Layer 3 packets and using a control-driven approach to manage endpoint reachability. This fundamentally changes how traffic is handled across sites, replacing inefficient broadcast-based discovery with structured information exchange between edge devices.

Another major advantage lies in operational simplicity at scale. As enterprise networks grow, the complexity of managing multiple interconnect technologies across different regions increases significantly. Overlay Transport Virtualization reduces this burden by abstracting the underlying transport network. Once the overlay is established, the physical infrastructure becomes largely transparent to application connectivity. This means that changes in routing, link failures, or infrastructure upgrades can be handled at the underlay level without disrupting the logical network that applications depend on. This separation improves stability and reduces the risk of large-scale outages caused by configuration errors or topology changes.

Workload mobility is another critical area where this technology has a strong impact. In modern IT environments, applications are no longer tied to a single physical location. Virtual machines and containerized workloads are frequently moved between data centers for performance optimization, maintenance, or disaster recovery purposes. Maintaining a consistent network identity during these movements is essential for application continuity. Overlay Transport Virtualization enables this by preserving Layer 2 adjacency across multiple sites, allowing workloads to move without requiring changes to their IP addressing or network configuration. This significantly simplifies operational workflows and supports more dynamic infrastructure strategies.

From a design perspective, the separation of control plane and data plane functions introduces a more efficient way of handling network intelligence and traffic forwarding. The control plane is responsible for maintaining a global view of endpoint locations and network topology, while the data plane focuses on high-speed packet forwarding. This division allows each function to be optimized independently. The control plane ensures that information is accurate and up to date, while the data plane executes forwarding decisions with minimal latency. This architecture improves scalability because it prevents forwarding operations from being burdened with control-related processing tasks.

Encapsulation plays a key role in enabling this entire model. By wrapping Layer 2 frames inside Layer 3 packets, the system allows Ethernet-based communication to traverse IP networks seamlessly. Because the transport network sees only ordinary IP packets, existing routed infrastructure can usually be reused as is, provided its MTU accommodates the added headers. This is particularly important for large enterprises with significant investment in existing network hardware. Encapsulation also enables multi-tenancy and logical segmentation, allowing multiple isolated network domains to coexist over the same physical infrastructure. This improves resource utilization and supports more efficient infrastructure sharing.
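The wrapping step can be sketched in a few lines. The 12-byte outer header below (source address, destination address, overlay identifier) is a deliberately simplified, hypothetical layout chosen for illustration. It is not the real wire format, and the addresses are documentation examples.

```python
from __future__ import annotations

import ipaddress
import struct

# Hypothetical outer header: source IPv4, destination IPv4, overlay ID.
_OUTER = struct.Struct("!4s4sI")   # network byte order, 12 bytes total


def encapsulate(frame: bytes, src_ip: str, dst_ip: str, overlay_id: int) -> bytes:
    """Prefix an Ethernet frame with the toy outer header."""
    header = _OUTER.pack(
        ipaddress.IPv4Address(src_ip).packed,
        ipaddress.IPv4Address(dst_ip).packed,
        overlay_id,
    )
    return header + frame


def decapsulate(packet: bytes) -> tuple[str, str, int, bytes]:
    """Split a packet back into outer header fields and the original frame."""
    src, dst, overlay_id = _OUTER.unpack_from(packet)
    frame = packet[_OUTER.size:]
    return str(ipaddress.IPv4Address(src)), str(ipaddress.IPv4Address(dst)), overlay_id, frame


# Destination MAC, source MAC, EtherType, then payload.
frame = bytes.fromhex("001122334455" "66778899aabb" "0800") + b"payload"
packet = encapsulate(frame, "192.0.2.1", "198.51.100.1", overlay_id=7)

assert decapsulate(packet) == ("192.0.2.1", "198.51.100.1", 7, frame)
print(len(packet) - len(frame))    # 12
```

The original frame comes out byte for byte unchanged, which is why endpoints are unaware of the overlay. The printed difference is the per-packet overhead of this toy header. A real encapsulation adds more, and the transport MTU has to be sized for it.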

Another important aspect is the reduction of broadcast dependency. Traditional Layer 2 networks rely heavily on flooding to discover unknown destinations, which becomes inefficient as the network grows. Overlay Transport Virtualization replaces this mechanism with a distributed mapping system that allows devices to directly identify the location of endpoints. This reduces unnecessary traffic and improves overall network performance. It also enhances predictability, as traffic flows become more deterministic rather than dependent on broadcast behavior. This is especially important in large-scale environments where uncontrolled flooding can lead to congestion and performance degradation.

The introduction of multihoming and redundancy mechanisms further strengthens the resilience of the architecture. By allowing multiple edge devices and redundant paths within the network, the system ensures that failures do not result in service disruption. Traffic can be dynamically rerouted through available paths, maintaining connectivity even in the presence of device or link failures. This level of redundancy is essential for mission-critical applications that require continuous availability. It also supports load balancing, enabling more efficient use of network resources by distributing traffic across multiple active paths.
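One common way to combine load balancing with failover is to hash each flow onto the set of currently available paths. The sketch below uses that approach as an assumption. The function, the flow tuple, and the path names are hypothetical, and the text does not state which distribution method a given deployment uses.

```python
import zlib


def pick_path(flow, paths, down=frozenset()):
    """Choose a path for a flow among those that are still up.

    flow is a tuple of strings identifying the conversation, for example
    (source MAC, destination MAC). Hashing keeps a flow on one path while
    spreading different flows across all live paths.
    """
    alive = [p for p in paths if p not in down]
    if not alive:
        raise RuntimeError("no path available")
    key = "|".join(flow).encode()
    return alive[zlib.crc32(key) % len(alive)]


paths = ["path-1", "path-2"]
flow = ("00:11:22:33:44:55", "66:77:88:99:aa:bb")

first = pick_path(flow, paths)
assert first == pick_path(flow, paths)              # same flow, same path

# If path-1 fails, every flow lands on the remaining path.
assert pick_path(flow, paths, down={"path-1"}) == "path-2"
```

A simple modulo hash like this can move unaffected flows when the set of live paths changes. Designs that need to avoid that reshuffling typically use a more stable scheme such as consistent hashing.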

Scalability is another defining characteristic of Overlay Transport Virtualization. As organizations expand their infrastructure, the overlay model allows new sites and devices to be added without requiring significant redesign of the existing network. The use of structured control communication ensures that new endpoints are quickly integrated into the global topology. This horizontal scalability makes it suitable for large enterprises and service providers that need to support continuous growth without introducing instability or operational complexity.

In addition to scalability, the architecture supports a high degree of flexibility in deployment models. Since it operates independently of the underlying transport network, it can be implemented over a variety of infrastructure types, including private WAN links, metro networks, or IP-based service provider backbones. This flexibility allows organizations to choose transport options based on cost, performance, and availability requirements rather than being constrained by the overlay technology itself. It also enables hybrid designs where multiple transport types coexist within the same overall architecture.

Security and isolation benefits are also inherent in the design. By maintaining separate logical overlays, different application environments can be isolated from each other even when they share the same physical infrastructure. This reduces the risk of cross-contamination between workloads and improves overall security posture. Each overlay can enforce its own forwarding rules and endpoint mappings, ensuring that traffic remains contained within its intended domain. This is particularly valuable in environments where multiple business units or tenants share infrastructure resources.

Operational transparency is another key benefit that contributes to long-term maintainability. Once deployed, the overlay network operates largely independently of the underlying infrastructure. This reduces the need for frequent manual intervention and simplifies troubleshooting by allowing issues to be isolated between layers. Network administrators can focus on logical connectivity rather than physical topology details, which improves efficiency and reduces the likelihood of configuration errors.

Over time, the adoption of overlay-based architectures influences the overall direction of data center design. Traditional hierarchical models that rely heavily on physical segmentation are gradually being replaced by more flexible, software-defined approaches. These newer models prioritize agility, automation, and scalability, enabling organizations to respond more quickly to changing business requirements. Overlay Transport Virtualization fits naturally into this evolution by providing a mechanism for abstracting connectivity and enabling dynamic workload placement.

Ultimately, the value of this technology lies in its ability to unify distributed environments under a consistent networking model. It bridges the gap between physical infrastructure limitations and modern application requirements, allowing enterprises to build networks that are both robust and adaptable. As digital transformation continues to accelerate, the need for such flexible interconnect solutions will only increase, making overlay-based approaches a foundational element of future network architectures.