Network device logs are systematically generated records that capture operational activity across digital infrastructure components. These components include routing devices, switching systems, security appliances, server environments, virtualized platforms, and application services. Every interaction occurring within these environments produces traceable events that are recorded in log form. These events may include authentication attempts, routing decisions, configuration updates, session initiations, protocol exchanges, and error conditions. The purpose of these logs is to establish a continuous and structured record of system behavior over time, enabling visibility into both normal operations and unexpected events. In modern network engineering, logs function as the foundational dataset for operational intelligence, security monitoring, and forensic reconstruction of incidents. Without such records, diagnosing failures or identifying malicious activity would require reactive guesswork rather than evidence-based analysis.

Core Structure and Composition of Log Data in Network Systems

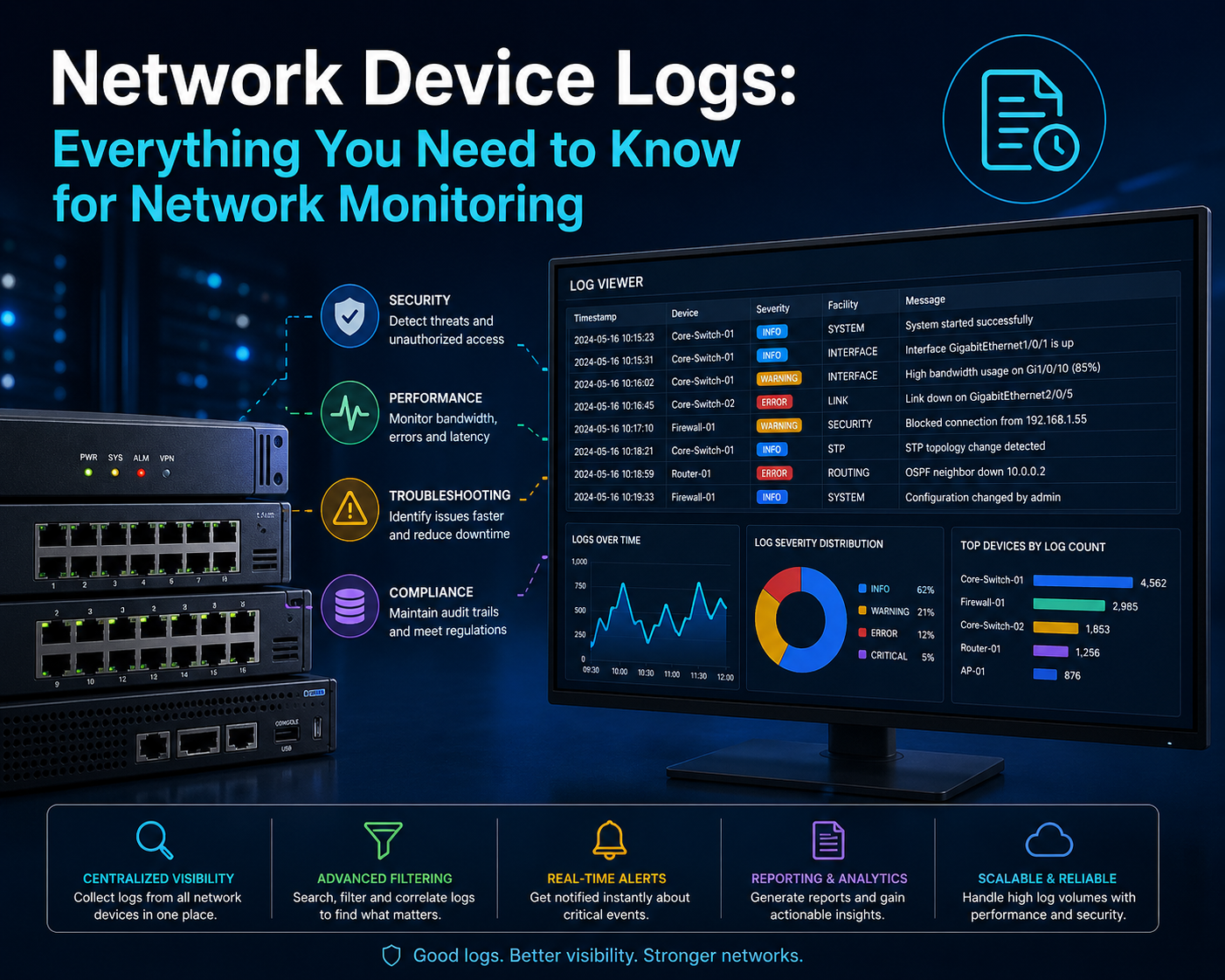

Network logs follow a structured format that allows machines and humans to interpret events consistently. Each log entry typically consists of multiple data fields that describe the context of an event. These fields often include a timestamp indicating when the event occurred, an identifier for the originating device, a severity or priority level, a category describing the event type, and a message field containing descriptive information. Some systems also include metadata such as protocol type, interface identifiers, session duration, or user credentials, depending on the nature of the event. The structured nature of logs ensures that data can be indexed, queried, and correlated across multiple systems. This structure is essential in large-scale environments where millions of events may be generated within short timeframes, requiring efficient parsing and filtering mechanisms for meaningful analysis.

The Importance of Logging in Network Observability and Control

Observability in network systems refers to the ability to understand internal states based on external outputs. Logs are a primary source of such outputs. They allow administrators to observe system behavior without directly interfering with operations. Through logs, it becomes possible to determine how systems respond under different conditions, how traffic flows through network paths, and how applications interact with underlying infrastructure. This visibility is critical for maintaining operational stability and ensuring that systems meet performance expectations. Logs also provide a historical record that supports trend analysis, enabling organizations to identify recurring issues or long-term inefficiencies. As environments scale and become more distributed, logs become even more important for maintaining centralized awareness of system activity.

Lifecycle of Network Log Generation and Processing

The lifecycle of a network log begins at the point of event creation within a device or application. When an action occurs, the system generates a log entry that captures relevant details about the event. This entry is then temporarily stored in local memory or disk storage, depending on device configuration. In many environments, logs are subsequently forwarded to centralized systems for aggregation and long-term storage. Once collected, logs undergo processing steps such as normalization, parsing, enrichment, and indexing. Normalization ensures that data from different sources adheres to a consistent format, making cross-system analysis possible. Enrichment adds contextual information such as geolocation data or user identity mapping. Indexing enables fast retrieval of log entries based on search criteria. Finally, logs are stored for retention periods defined by organizational policies, after which they may be archived or deleted.

Categories of Network Device Logs and Their Functional Roles

Network logging systems typically categorize data based on the nature of the recorded event. System logs focus on internal device operations such as hardware performance, software execution, and system errors. Security logs capture authentication attempts, authorization decisions, and policy enforcement actions. Application logs document behavior within software services, including user interactions and transaction processing. Traffic logs record communication between devices, including packet flow, session initiation, and data transfer metrics. Each category serves a distinct analytical purpose, yet they are often correlated during investigations to provide a complete view of system activity. The integration of multiple log categories enables deeper insights into how different layers of the infrastructure interact with one another.

Traffic Logs and Their Role in Network Communication Analysis

Traffic logs are among the most widely used log types in network analysis due to their ability to reveal how data moves across systems. These logs capture detailed information about communication sessions, including source and destination addresses, protocol usage, connection duration, and data volume transferred. By examining this information, analysts can understand how users and systems interact within the network. Traffic logs are essential for identifying usage patterns, detecting performance bottlenecks, and understanding bandwidth consumption. They also provide insights into application behavior by showing how frequently services communicate and how much data they exchange. This level of visibility is critical for maintaining efficient network operations and ensuring that resources are allocated appropriately across systems.

Techniques Used for Collecting Traffic Log Data in Networks

Traffic log collection can be implemented through multiple mechanisms,s depending on infrastructure design. Many network devices have built-in logging capabilities that automatically record traffic events. These logs may be stored locally on the device or transmitted to external storage systems. In more advanced setups, network traffic is continuously monitored using dedicated collection mechanisms that capture packet-level or flow-level data. Flow-based logging summarizes communication sessions without capturing every individual packet, making it more scalable for large environments. Packet-based logging provides more granular detail but requires significantly more storage and processing capacity. Once collected, traffic data is often transmitted to centralized repositories where it can be analyzed in conjunction with other log types.

Data Organization and Structuring of Traffic Logs for Efficient Analysis

Raw traffic logs are often voluminous and unstructured, requiring organization before they can be effectively used. Structuring involves categorizing entries based on attributes such as time, device identity, protocol type, and communication endpoints. This process allows analysts to filter and group related events, making patterns easier to identify. Time-based sequencing is particularly important because it allows reconstruction of communication flows in chronological order. Tagging mechanisms are also used to assign labels to log entries based on predefined rules or behavioral characteristics. These organizational techniques improve search efficiency and enable correlation between different data sources, which is essential for comprehensive analysis in complex network environments.

Traffic Pattern Recognition and Network Performance Insights

Analyzing traffic logs enables the identification of recurring communication patterns within a network. These patterns reveal how data flows between systems over time and highlight which services generate the most network activity. High-frequency communication between specific endpoints may indicate critical dependencies, while irregular traffic spikes may suggest inefficiencies or anomalies. Performance insights derived from traffic analysis can guide optimization efforts such as load balancing, bandwidth allocation, and routing adjustments. By understanding how resources are utilized across the network, administrators can make informed decisions that improve overall system performance and reduce latency. Pattern recognition also supports predictive analysis by identifying early indicators of potential congestion or system overload.

Security Monitoring Through Traffic Behavior Analysis

Traffic logs play a crucial role in identifying potential security threats within network environments. Unusual communication patterns, such as connections occurring outside normal operational hours or data transfers to unfamiliar external systems, may indicate malicious activity. Repeated connection attempts to restricted resources can suggest intrusion attempts or misconfigured systems. Sudden increases in outbound traffic may indicate data exfiltration behavior. By continuously monitoring these patterns, security teams can detect threats at an early stage and initiate investigation processes. Traffic logs also support post-incident analysis by providing detailed records of communication flows leading up to and during security events, enabling reconstruction of attack paths and identification of affected systems.

Challenges Associated with Large-Scale Traffic Log Management

As networks expand, the volume of traffic logs increases significantly, creating challenges in storage, processing, and analysis. High data volumes require substantial computational resources for real-time monitoring and historical analysis. Without efficient filtering mechanisms, log systems may become overwhelmed with irrelevant or redundant information. This can reduce the effectiveness of monitoring processes and delay incident detection. Storage management also becomes critical, as retaining large volumes of historical data can be costly and resource-intensive. To address these challenges, organizations implement selective logging strategies, data compression techniques, and tiered storage systems that balance accessibility with resource efficiency.

Transition from Raw Traffic Data to Analytical Insights in Network Operations

The transformation of raw traffic logs into actionable insights involves multiple stages of processing and analysis. Initially, data is collected and normalized to ensure consistency across different sources. It is then enriched with contextual information and indexed for efficient retrieval. Analytical tools are applied to identify patterns, detect anomalies, and generate operational intelligence. These insights support decision-making processes related to performance optimization, security enhancement, and infrastructure planning. Continuous monitoring and iterative analysis ensure that insights remain relevant as network conditions evolve. This structured approach enables organizations to move from reactive troubleshooting to proactive network management based on a data-driven understanding of system behavior.

Traffic Log Deep Analysis and Network Behavior Interpretation

Traffic logs extend beyond simple records of communication between devices; they form the analytical backbone for understanding how digital infrastructure behaves under real operational conditions. In advanced network environments, traffic data is not only collected but continuously analyzed to interpret behavioral patterns across systems, users, and applications. This interpretation involves identifying communication flows, session persistence, protocol distribution, and temporal usage characteristics. Each of these elements contributes to a broader understanding of network efficiency and stability. When traffic logs are analyzed over time, they reveal baseline behavior that defines what is considered normal activity. Any deviation from this baseline can be further investigated to determine whether it represents a performance issue, misconfiguration, or potential security concern. The ability to distinguish between expected and unexpected behavior is central to maintaining operational integrity in complex networks.

Correlation of Traffic Data Across Distributed Network Systems

Modern infrastructure is rarely centralized; instead, it consists of distributed systems spanning multiple physical and virtual environments. Traffic logs generated in such environments must be correlated to form a unified view of network activity. Correlation involves aligning log entries from different devices based on shared attributes such as timestamps, IP addresses, session identifiers, and protocol metadata. This process enables analysts to reconstruct end-to-end communication paths across multiple network segments. For example, a single user request may traverse firewalls, load balancers, application servers, and database systems, each generating its own log entries. Without correlation, these events would appear isolated and disconnected. By linking them together, it becomes possible to trace the full lifecycle of a transaction or session, providing deeper insight into system performance and dependency structures.

Advanced Filtering Techniques for Traffic Log Optimization

Due to the high volume of data generated in modern networks, filtering mechanisms are essential for isolating relevant information. Filtering allows analysts to focus on specific types of events, timeframes, devices, or communication patterns. Common filtering dimensions include source and destination IP addresses, protocol types, port numbers, and event severity levels. Advanced filtering techniques also incorporate behavioral conditions, such as identifying traffic that exceeds predefined thresholds or originates from unusual geographic locations. These techniques reduce noise within log datasets and improve the efficiency of analysis workflows. By narrowing the scope of data under review, filtering ensures that critical insights are not obscured by irrelevant information. In high-throughput environments, effective filtering is essential for maintaining real-time monitoring capabilities without overwhelming analytical systems.

Traffic Anomaly Detection and Behavioral Deviation Analysis

Anomaly detection in traffic logs focuses on identifying deviations from established behavioral baselines. These deviations may include sudden spikes in network traffic, unexpected communication between internal and external systems, or irregular session durations. Behavioral deviation analysis relies on statistical models and pattern recognition techniques to differentiate between normal fluctuations and significant anomalies. For instance, a gradual increase in traffic volume may be considered normal growth, while a sudden surge could indicate a misconfiguration or potential security incident. Anomalies are often categorized based on severity and potential impact, allowing prioritization of investigation efforts. In security contexts, anomaly detection is particularly important for identifying early indicators of compromise, such as unauthorized data transfers or suspicious connection attempts.

Audit Logs and Their Role in System Accountability Frameworks

Audit logs serve as a critical component of system accountability by recording detailed information about user actions and system changes. Unlike traffic logs, which focus on data movement, audit logs focus on behavioral events initiated by users or applications. These events may include login attempts, configuration modifications, file access operations, and administrative actions. Each entry typically includes user identifiers, timestamps, action types, and outcome statuses. Audit logs are essential for enforcing compliance requirements and maintaining traceability within digital environments. They provide a verifiable record of who performed specific actions and when those actions occurred. This level of accountability is particularly important in regulated industries where system integrity and user transparency are mandatory.

Cross-Referencing Audit Logs with Traffic Data for Incident Reconstruction

One of the most powerful techniques in log analysis involves cross-referencing audit logs with traffic logs to reconstruct detailed sequences of events. This process allows analysts to connect user actions with corresponding network activity. For example, a successful login recorded in an audit log can be correlated with subsequent network connections observed in traffic logs. This correlation helps establish causality between user behavior and system activity. In security investigations, this technique is used to identify whether suspicious traffic originates from legitimate user actions or unauthorized access attempts. By combining multiple log sources, analysts can build a comprehensive timeline that provides context for each event, improving the accuracy of incident analysis and response strategies.

Authentication Patterns and Security Behavior Insights

Authentication events recorded in audit logs provide valuable insights into user behavior and system access patterns. These logs capture successful and failed login attempts, password changes, multi-factor authentication events, and session terminations. Analyzing authentication patterns helps identify abnormal behavior such as repeated failed login attempts, which may indicate brute-force attacks, or successful logins from unfamiliar locations, which may suggest credential compromise. Over time, authentication data can be used to establish baseline user behavior profiles. Deviations from these profiles can trigger further investigation or automated security responses. This behavioral approach enhances security monitoring by shifting focus from isolated events to contextual analysis of user activity.

Role of Audit Logs in Compliance and Governance Structures

Audit logs are essential for maintaining compliance with regulatory frameworks that require detailed tracking of system and user activity. These frameworks often mandate that organizations retain records of access events, configuration changes, and data handling operations for specified periods. Audit logs provide the necessary evidence to demonstrate adherence to these requirements during inspections or audits. Governance structures rely on audit data to ensure that policies are being enforced consistently across systems. This includes verifying that only authorized users have access to sensitive resources, that administrative actions are properly documented, and that system changes follow approved procedures. The presence of comprehensive audit logs reduces organizational risk by improving transparency and accountability.

Event Sequencing and Temporal Analysis in Log Systems

Temporal analysis involves examining log data based on time sequences to understand how events unfold across systems. This type of analysis is critical for identifying cause-and-effect relationships between different activities. By aligning logs chronologically, analysts can trace the progression of events leading up to a specific outcome. For example, a system failure may be preceded by a series of configuration changes or resource spikes that are visible in logs when viewed in sequence. Temporal analysis also helps identify recurring patterns, such as periodic system maintenance activities or scheduled automated processes. Understanding timing relationships between events allows for more accurate diagnosis of system behavior and improved forecasting of future operational conditions.

Data Enrichment Techniques for Enhanced Log Interpretation

Raw log data often lacks contextual information necessary for full interpretation. Data enrichment addresses this limitation by adding supplementary information to log entries. This may include geolocation data derived from IP addresses, user identity mapping, device classification, or threat intelligence indicators. Enrichment enhances the analytical value of logs by providing additional context that supports deeper insights. For example, identifying the geographic origin of network traffic can help distinguish between legitimate and suspicious activity. Similarly, integrating threat intelligence data can highlight known malicious sources. Enriched logs enable more precise analysis and improve the accuracy of detection mechanisms used in monitoring systems.

Centralized Logging Architectures and Data Aggregation Models

Centralized logging architectures consolidate log data from multiple sources into a single repository for unified analysis. This approach simplifies monitoring by providing a centralized view of system activity across distributed environments. Data aggregation involves collecting logs from endpoints, servers, network devices, and applications, then standardizing and storing them in a centralized system. This architecture supports scalability by allowing organizations to handle large volumes of log data efficiently. It also improves accessibility by enabling analysts to query data from a single interface rather than multiple disconnected systems. Centralized logging is particularly important in large-scale infrastructures where distributed systems generate high volumes of event data continuously.

Log Normalization and Standardization Across Heterogeneous Systems

Different devices and applications often generate logs in varying formats, making direct comparison difficult. Log normalization addresses this challenge by converting diverse log formats into a consistent structure. This process involves mapping different fields into standardized categories such as timestamps, event types, and identifiers. Standardization ensures that log data from different sources can be analyzed together without compatibility issues. It also improves the efficiency of search and correlation processes by reducing variability in data representation. In heterogeneous environments where multiple vendors and technologies coexist, normalization is essential for maintaining analytical consistency and ensuring that insights are derived from unified datasets rather than fragmented information sources.

Scalability Challenges in High-Volume Log Environments

As network environments grow, the volume of generated log data increases exponentially. This creates scalability challenges in storage, processing, and real-time analysis. High ingestion rates require systems capable of handling continuous data streams without performance degradation. Storage systems must be designed to accommodate both short-term and long-term retention requirements while maintaining accessibility. Processing systems must be capable of analyzing large datasets efficiently to support real-time monitoring and alerting. Without scalable infrastructure, organizations risk losing visibility into critical events due to system overload or delayed processing. Scalability planning is therefore a key consideration in the design of modern logging architectures.

Integration of Logging Systems with Operational Monitoring Frameworks

Logging systems are often integrated with broader operational monitoring frameworks that combine metrics, traces, and logs into a unified observability model. This integration allows for more comprehensive analysis of system behavior by correlating different types of telemetry data. Metrics provide quantitative measurements of system performance, traces track request flows across distributed systems, and logs provide detailed event-level information. When combined, these data sources create a holistic view of system operations. This integrated approach enhances diagnostic capabilities by enabling cross-layer analysis of performance issues and security incidents. It also improves response times by providing multiple perspectives on system behavior within a single analytical framework.

Evolution of Log Analysis Techniques in Modern Network Environments

Log analysis techniques have evolved significantly with the increasing complexity of network systems. Traditional manual analysis methods have been replaced by automated systems capable of processing large datasets in real time. Machine learning algorithms are now commonly used to identify patterns, detect anomalies, and predict potential issues based on historical data. These advanced techniques enable proactive monitoring strategies that focus on preventing incidents rather than reacting to them. As network environments continue to evolve, log analysis will increasingly rely on intelligent automation and predictive analytics to manage complexity and maintain operational stability.

Syslog Protocol and Its Role in Centralized Logging Systems

The syslog protocol is a standardized method used for transmitting log data from network devices and applications to centralized logging systems. It provides a consistent framework for message formatting, transport, and storage, enabling interoperability across diverse infrastructure components. Syslog operates by allowing devices to generate log messages and forward them to a designated collector or server, where they can be aggregated and analyzed. This approach eliminates the need to manually access individual devices for log retrieval, significantly improving efficiency in large-scale environments. The protocol supports both connectionless and connection-oriented transport mechanisms, offering flexibility depending on reliability and performance requirements. Through its structured message format, syslog ensures that log data remains consistent and interpretable across systems, forming the backbone of centralized logging architectures.

Components and Structure of Syslog Messages in Network Logging

Syslog messages are composed of multiple components that define the context and content of each event. The header section typically includes priority values that represent facility and severity levels, along with timestamps and host identifiers. These elements provide essential context for determining the importance and origin of an event. The structured data portion contains key-value pairs that offer additional metadata about the event, such as process identifiers or session details. The message body itself provides a descriptive account of the event being recorded. This structured approach allows for efficient parsing and categorization of log entries within centralized systems. By maintaining a consistent format, syslog messages enable automated tools to filter, search, and analyze data effectively, even when logs originate from heterogeneous sources.

Centralized Log Management and the Concept of Unified Visibility

Centralized log management refers to the practice of collecting, storing, and analyzing log data from multiple sources within a single system. This approach provides a unified view of network activity, often described as a single interface through which administrators can monitor all systems simultaneously. Unified visibility is critical in modern infrastructures where devices are distributed across different locations and environments. By consolidating log data, organizations can detect patterns and correlations that would be difficult to identify when logs are stored in isolated silos. Centralized management also simplifies administrative tasks such as log retention, access control, and compliance reporting. The ability to analyze data from multiple sources in one place enhances both operational efficiency and security monitoring capabilities.

Architecture and Components of a Syslog Server Environment

A syslog server environment typically consists of several key components that work together to collect and process log data. The syslog listener acts as the entry point, receiving log messages from various devices and applications. This component must be capable of handling high volumes of incoming data without loss or delay. Once received, log messages are passed to a processing engine that parses and categorizes the data based on predefined rules. Storage systems are then used to retain the logs in an organized manner, allowing for efficient retrieval and long-term analysis. Filtering and indexing mechanisms are applied to ensure that relevant data can be accessed quickly. In advanced deployments, additional components such as analytics engines and visualization tools are integrated to provide deeper insights into system behavior.

Log Aggregation and Data Consolidation Techniques

Log aggregation involves collecting data from multiple sources and consolidating it into a single repository. This process requires careful coordination to ensure that data is transmitted reliably and stored consistently. Aggregation techniques often include buffering mechanisms that temporarily hold data before forwarding it to the central system, reducing the risk of data loss during network disruptions. Data consolidation also involves merging log entries from different sources into a unified dataset, enabling cross-system analysis. This process may include deduplication to remove redundant entries and compression to optimize storage usage. Effective aggregation ensures that log data remains accessible and manageable, even as the volume of generated information increases over time.

Real-Time Monitoring and Alerting Through Centralized Logging

Centralized logging systems enable real-time monitoring by continuously analyzing incoming log data for specific conditions or patterns. When predefined criteria are met, alerts are generated to notify administrators of potential issues. These alerts may be based on thresholds, anomaly detection models, or rule-based conditions. Real-time monitoring is essential for maintaining system availability and security, as it allows for immediate response to critical events. For example, repeated authentication failures or unexpected configuration changes can trigger alerts that prompt further investigation. The effectiveness of alerting systems depends on the accuracy of detection mechanisms and the ability to minimize false positives, which can lead to alert fatigue and reduced responsiveness.

Log Review Practices and Proactive Threat Detection Strategies

While automated monitoring plays a significant role in modern environments, manual log review remains an important practice for ensuring comprehensive analysis. Periodic review of log data allows analysts to validate that monitoring systems are functioning correctly and that no significant events have been overlooked. Proactive threat detection involves actively searching for indicators of compromise within log datasets. This process may include identifying unusual patterns, correlating events across systems, and analyzing historical data for signs of emerging threats. Regular review practices enhance situational awareness and improve the effectiveness of security operations by providing deeper insight into system behavior beyond automated alerts.

Threat Hunting Methodologies Using Log Data Analysis

Threat hunting is an advanced analytical process that involves actively searching for hidden threats within a network environment. Unlike reactive incident response, which focuses on known alerts, threat hunting seeks to identify previously undetected activity by analyzing log data. This methodology relies on hypothesis-driven investigation, where analysts formulate assumptions about potential threats and use log data to validate or refute them. For example, an analyst may investigate whether unauthorized access has occurred by examining authentication logs and correlating them with traffic patterns. Threat hunting requires a deep understanding of normal system behavior and the ability to identify subtle deviations that may indicate malicious activity. Logs provide the primary data source for this process, enabling detailed examination of system events and interactions.

Logging Levels and Severity Classification in Event Management

Logging systems often categorize events based on severity levels, which indicate the importance or urgency of a log entry. Common severity levels range from informational messages that describe normal operations to critical alerts that indicate system failures or security breaches. Configuring appropriate logging levels is essential for balancing visibility and resource utilization. Excessive logging can generate large volumes of data, making analysis more difficult and increasing storage requirements. Insufficient logging, on the other hand, may result in missing critical events. Severity classification helps prioritize events and ensures that high-impact issues receive immediate attention. By tailoring logging levels to organizational needs, administrators can optimize both performance and monitoring effectiveness.

Balancing Log Volume and System Performance in Large Environments

Managing log volume is a significant challenge in large-scale network environments. High levels of logging can impact system performance by consuming processing power, storage capacity, and network bandwidth. To address this challenge, organizations implement strategies such as selective logging, data sampling, and log rotation. Selective logging focuses on capturing only relevant events based on predefined criteria, reducing unnecessary data generation. Data sampling involves recording a subset of events to represent overall behavior, which can be useful in high-frequency scenarios. Log rotation ensures that older data is archived or deleted to free up storage space. Balancing log volume with system performance requires careful planning and continuous adjustment based on operational requirements.

Retention Policies and Long-Term Storage Considerations for Logs

Log retention policies define how long log data should be stored and when it should be archived or deleted. These policies are influenced by regulatory requirements, operational needs, and storage limitations. Long-term storage of logs is important for historical analysis, compliance audits, and forensic investigations. However, retaining large volumes of data can be resource-intensive. To address this, organizations often use tiered storage systems that separate frequently accessed data from archival data. Compression and indexing techniques are applied to optimize storage efficiency while maintaining accessibility. Effective retention policies ensure that critical data is preserved without overwhelming storage infrastructure.

Security Considerations in Log Management and Data Protection

Logs often contain sensitive information such as user credentials, system configurations, and network activity details. Protecting this data is essential to prevent unauthorized access and potential misuse. Security measures include encryption of log data during transmission and storage, access control mechanisms to restrict who can view or modify logs, and integrity checks to ensure that log data has not been altered. Audit trails are also maintained to track access to log systems, providing accountability for actions performed within the logging environment. Implementing strong security controls ensures that logs remain a reliable source of truth for operational and security analysis.

Best Practices for Effective Log Management and Continuous Improvement

Effective log management requires a structured approach that includes clear policies, consistent processes, and ongoing optimization. Organizations should define what data needs to be logged, how it should be collected, and who is responsible for managing it. Regular reviews of logging configurations help ensure that systems remain aligned with changing operational and security requirements. Collaboration between operational teams and security analysts is essential for refining detection mechanisms and improving response strategies. Continuous improvement involves analyzing past incidents to identify gaps in logging coverage and updating systems accordingly. By maintaining a proactive approach to log management, organizations can enhance visibility, improve system reliability, and strengthen their overall security posture.

Conclusion

Network device logs form the backbone of visibility within any modern digital infrastructure, serving as a continuous stream of recorded activity that reflects how systems, users, and applications interact over time. From the moment a device powers on to the execution of complex transactions across distributed environments, logs capture every meaningful event in a structured and traceable format. This persistent recording of activity transforms otherwise invisible processes into analyzable data, allowing organizations to move beyond assumptions and rely on concrete evidence when evaluating performance, diagnosing issues, or responding to security incidents. The value of logs lies not only in their ability to record events but in their capacity to provide context, enabling a deeper understanding of cause-and-effect relationships across interconnected systems.

When examined collectively, logs reveal patterns that define the operational baseline of a network. These patterns include normal traffic flows, expected authentication behaviors, routine system processes, and predictable application interactions. Establishing this baseline is critical because it provides a reference point against which anomalies can be detected. Deviations from normal behavior often signal underlying issues, whether they stem from misconfigurations, performance bottlenecks, or malicious activity. By continuously monitoring and analyzing log data, organizations can identify these deviations early and take corrective action before they escalate into more significant problems. This proactive approach reduces downtime, enhances system reliability, and minimizes the impact of potential security threats.

The integration of multiple log types further amplifies their analytical value. Traffic logs provide visibility into data movement across the network, revealing how systems communicate and how resources are utilized. Audit logs add another layer of insight by documenting user actions and system changes, establishing accountability and traceability. When these log sources are correlated, they create a comprehensive narrative that connects user behavior with network activity. This correlation is particularly important in investigative scenarios, where understanding the sequence of events is essential for determining root causes and assessing the scope of impact. The ability to reconstruct detailed timelines from log data transforms reactive troubleshooting into a structured and methodical process.

Centralized logging plays a pivotal role in making log data accessible and actionable. By aggregating logs from diverse sources into a unified system, organizations eliminate the fragmentation that can hinder analysis. Centralization enables a single point of visibility where data can be searched, filtered, and correlated efficiently. This unified perspective simplifies monitoring and enhances situational awareness, especially in environments where systems are distributed across multiple locations or platforms. It also supports real-time analysis, allowing organizations to detect and respond to critical events as they occur rather than after the fact. The shift from isolated log storage to centralized management represents a significant advancement in how organizations leverage data for operational and security purposes.

The effectiveness of logging systems depends heavily on how data is managed throughout its lifecycle. From generation and collection to processing, storage, and eventual retention or deletion, each stage requires careful planning and execution. Poorly managed log data can quickly become overwhelming, leading to inefficiencies and missed insights. Implementing structured approaches such as normalization, filtering, and indexing ensures that logs remain organized and accessible. These processes enable analysts to focus on relevant information without being distracted by noise, improving both the speed and accuracy of analysis. At the same time, retention policies must strike a balance between preserving valuable historical data and managing storage constraints, ensuring that critical information remains available when needed.

Security is one of the most significant areas where logs provide measurable value. In an environment where threats are constantly evolving, logs serve as a primary source of evidence for detecting and investigating suspicious activity. They reveal unauthorized access attempts, unusual data transfers, and deviations from expected behavior, all of which can indicate potential compromise. By leveraging logs for continuous monitoring, organizations can identify threats at an early stage and respond before significant damage occurs. Logs also play a crucial role in post-incident analysis, enabling investigators to trace attack paths, identify affected systems, and understand how vulnerabilities were exploited. This insight is essential for strengthening defenses and preventing similar incidents in the future.

Despite their importance, logs present challenges that must be addressed to fully realize their potential. The sheer volume of data generated in modern networks can strain storage and processing resources, making it difficult to maintain real-time visibility. Without effective filtering and prioritization, critical events may be buried within large datasets, reducing the effectiveness of monitoring systems. Additionally, excessive alerting can lead to fatigue among analysts, diminishing their ability to respond to genuine threats. Addressing these challenges requires a balanced approach that combines efficient data management techniques with well-defined monitoring strategies. By focusing on quality rather than quantity, organizations can ensure that their logging systems deliver meaningful insights without overwhelming resources.

The continuous evolution of network environments further underscores the need for adaptive log management practices. As technologies change and new systems are introduced, logging configurations must be updated to reflect current operational realities. This includes adjusting what data is collected, refining analysis techniques, and incorporating new sources of information. Collaboration between operational teams and security professionals is essential for maintaining alignment between logging practices and organizational objectives. Through ongoing evaluation and improvement, organizations can ensure that their logging systems remain effective in the face of changing requirements and emerging challenges.

Ultimately, network device logs are more than just records of past events; they are a strategic asset that supports informed decision-making across all aspects of IT operations. They enable organizations to understand how their systems function, identify areas for improvement, and respond effectively to both routine issues and unexpected incidents. By investing in robust logging practices and maintaining a disciplined approach to data management, organizations can transform raw log data into actionable intelligence. This transformation not only enhances operational efficiency but also strengthens resilience, ensuring that networks remain secure, reliable, and capable of supporting evolving business needs.