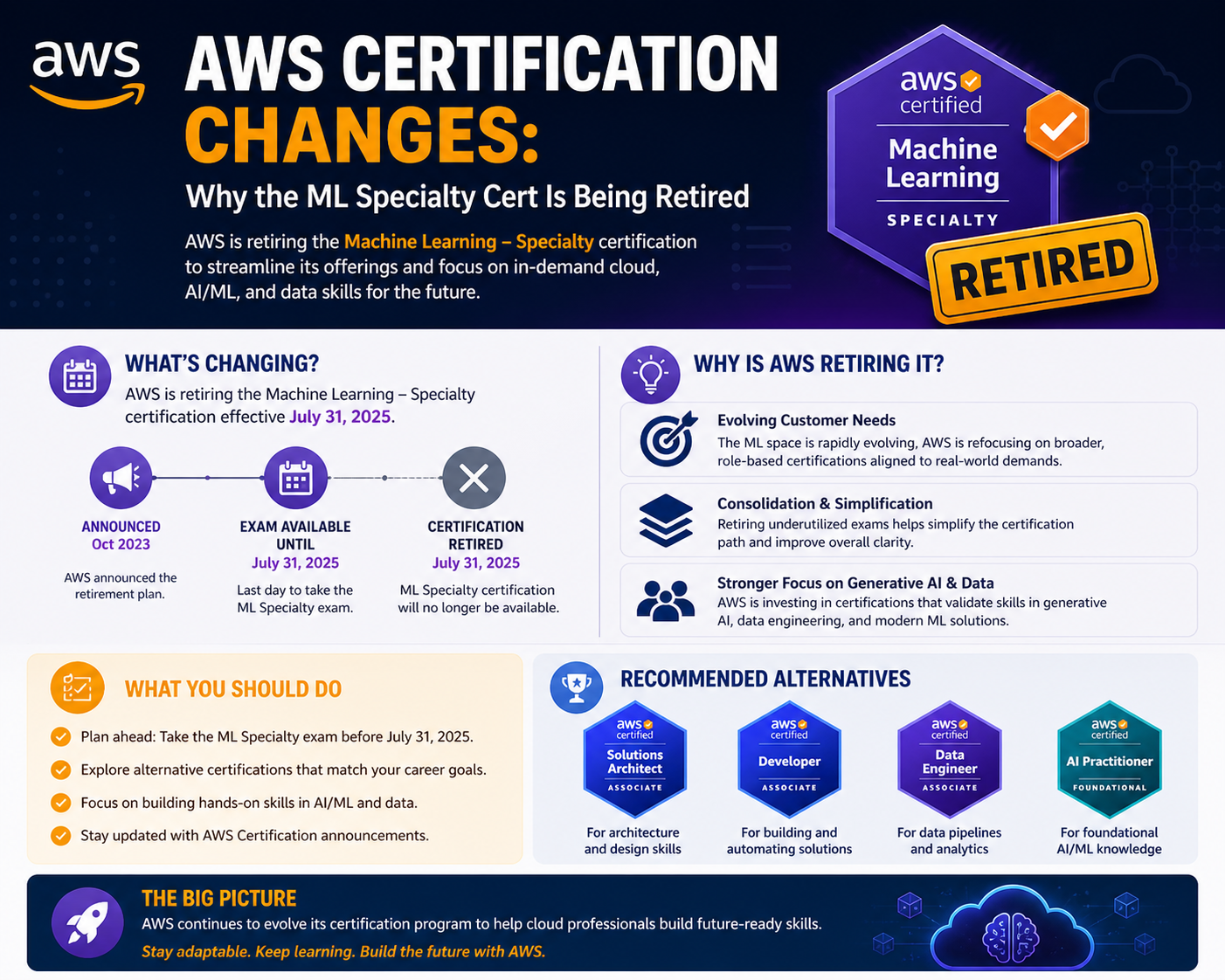

The decision by Amazon Web Services to retire the Machine Learning – Specialty certification reflects a broader transformation in the artificial intelligence and cloud computing landscape. The retirement is not an isolated update; it follows from how far the industry has matured over the past decade. Organizations are no longer experimenting with machine learning in limited environments. Instead, they are embedding AI into core business operations, customer experiences, and large-scale digital platforms. As a result, the expectations placed on professionals have evolved, and certifications must keep pace with these changes.

Previously, certifications were designed to validate deep technical expertise in narrow areas such as model development and algorithm design. Today, however, the focus has shifted toward practical implementation, system integration, and operational reliability. Businesses are prioritizing solutions that can be deployed quickly, scaled efficiently, and maintained with minimal overhead. This has led to a growing demand for professionals who understand not only machine learning concepts but also how to apply them effectively within complex cloud environments. The retirement of this certification highlights the gap between traditional skill validation and modern industry requirements.

The Early Era of Model-Centric Machine Learning

When the Machine Learning – Specialty certification was first introduced, the industry was heavily focused on building models from scratch. Organizations invested significant time and resources into developing custom algorithms tailored to their specific needs. This approach required a strong foundation in mathematics, statistics, and programming. Professionals were expected to work extensively with data, experimenting with different techniques to improve model accuracy and performance.

During this period, machine learning was often treated as a specialized field, separate from mainstream software development. Teams of data scientists and researchers were responsible for designing and training models, while other teams handled application development and infrastructure. The certification reflected this separation by focusing primarily on theoretical knowledge and technical depth. It validated the ability to design models, preprocess data, and evaluate results in controlled environments.

However, this approach also had limitations. Building custom models from scratch was time-consuming and required significant expertise. It was not always practical for organizations that needed to deliver solutions quickly. As the demand for machine learning applications grew, businesses began looking for more efficient ways to implement AI capabilities without relying entirely on specialized teams.

The Rise of Managed Services and Pre-Trained Models

As cloud computing evolved, managed services and pre-trained models became increasingly popular. These solutions allowed organizations to leverage machine learning capabilities without having to build everything from the ground up. By using cloud-based tools, teams could access powerful models and integrate them into their applications with minimal effort.

This shift significantly reduced the complexity of machine learning workflows. Tasks that once required extensive expertise could now be performed using user-friendly interfaces and APIs. Developers no longer needed to understand every detail of model training to implement AI features. Instead, they could focus on building applications that delivered value to users.

The adoption of managed services also improved scalability and reliability. Cloud platforms provided the infrastructure needed to handle large volumes of data and high levels of demand. This made it easier for organizations to deploy machine learning solutions at scale, without worrying about the underlying infrastructure. As a result, the role of professionals began to shift from model development to service management and integration.

Generative AI and the Transformation of Development Practices

The emergence of generative artificial intelligence has further accelerated the transformation of development practices. Generative AI technologies enable developers to create intelligent applications using pre-built models that can generate text, images, and other types of content. These models are often accessible through APIs, making them easy to integrate into existing systems.

This approach has fundamentally changed how machine learning is used in practice. Instead of focusing on building models, developers are now focused on designing user experiences and integrating AI capabilities into applications. This requires a different set of skills, including an understanding of APIs, data pipelines, and system architecture.

Generative AI has also expanded the range of professionals who can work with machine learning. Individuals with backgrounds in software development, cloud engineering, and product design can now contribute to AI projects without needing deep expertise in algorithms. This democratization of AI has increased the demand for practical, application-focused skills, further reducing the relevance of traditional model-centric certifications.

The Growing Importance of Production-Ready AI Systems

Modern organizations require AI systems that are not only accurate but also reliable, scalable, and maintainable. This has led to a greater emphasis on production-ready systems that can operate effectively in real-world environments. Unlike experimental models, these systems must handle unpredictable conditions, large datasets, and high levels of user interaction.

Professionals are now expected to understand how to deploy models into production, monitor their performance, and ensure their reliability over time. This includes tasks such as setting up automated pipelines, managing system resources, and troubleshooting issues as they arise. The ability to maintain and optimize AI systems has become a critical skill, reflecting the shift from experimentation to implementation.

Security, Compliance, and Governance in AI Workflows

As machine learning systems become more integrated into business operations, security and compliance have become increasingly important. Organizations must ensure that their AI systems protect sensitive data and comply with regulatory requirements. This involves implementing robust security measures, managing access controls, and maintaining data integrity.

Professionals must also be aware of ethical considerations and governance practices related to AI. This includes ensuring that models are used responsibly and that their outputs are transparent and fair. These responsibilities go beyond technical skills, requiring an understanding of legal and ethical frameworks.

Cost Optimization and Resource Management

The cost of running machine learning workloads in the cloud can be high, particularly for large-scale applications. Organizations are therefore placing a strong emphasis on cost optimization and resource management. Professionals must understand how to allocate resources efficiently, monitor usage, and implement strategies to reduce expenses.

This involves making decisions about when to use managed services, how to scale resources, and how to balance performance with cost. These considerations are now a central part of machine learning workflows, reflecting the need for practical, business-oriented skills.

Changing Roles and Collaborative Team Structures

The structure of teams working on machine learning projects has evolved significantly. Instead of relying solely on specialized data science teams, organizations are adopting a more collaborative approach. Developers, cloud engineers, and operations professionals are all involved in building and maintaining AI systems.

This shift requires individuals to have a broader understanding of different aspects of technology. Professionals must be able to work across disciplines, communicating effectively with team members and understanding how different components of a system interact. Certifications must therefore reflect this interdisciplinary approach, emphasizing practical skills and real-world applications.

Integration of Machine Learning into Broader Systems

Machine learning is now an integral part of larger systems that include databases, APIs, and user interfaces. Professionals must understand how to integrate these components and ensure that data flows smoothly between them. This requires knowledge of system design, data management, and communication protocols.

The ability to build end-to-end solutions is becoming increasingly important. Instead of focusing on individual components, professionals must consider the entire system and how different elements work together to deliver value.

Evolving Definitions of Success in AI Projects

The definition of success in machine learning projects has expanded beyond model accuracy. Organizations now consider a wide range of factors, including scalability, reliability, user experience, and cost efficiency. Professionals must be able to balance these factors when designing and deploying solutions.

This broader perspective reflects the growing importance of practical outcomes over theoretical performance. It also highlights the need for skills that go beyond traditional machine learning concepts.

The Introduction of New Certification Paths

To align with current industry needs, AWS is introducing new certification paths that focus on generative AI and production-level skills. These certifications emphasize the ability to deploy, manage, and monitor AI systems in real-world environments.

They validate skills related to integration, security, and operational efficiency, reflecting the practical requirements of modern AI roles. This shift ensures that certifications remain relevant and valuable for professionals seeking to advance their careers.

Implications for Current and Future Professionals

The retirement of the Machine Learning – Specialty certification underscores the importance of adaptability in the technology field. Professionals must continuously update their skills to stay relevant in a rapidly changing environment.

While foundational knowledge remains important, there is a growing need to understand cloud services, system integration, and operational management. This encourages a more holistic approach to learning and career development.

A Broader Industry Transformation

The decision to retire this certification is part of a larger transformation in the technology industry. As artificial intelligence continues to evolve, the way skills are defined and validated must also change.

Certifications are being redesigned to reflect real-world applications and practical expertise. This ongoing transformation highlights the dynamic nature of the field and the need for continuous learning and adaptation.

How Generative AI Is Reshaping Modern Skill Requirements

The rapid rise of generative artificial intelligence has significantly changed how professionals approach machine learning and cloud-based development. Earlier forms of AI required deep involvement in model training and experimentation, whereas work with generative AI centers on usability, speed, and integration. Platforms from Amazon Web Services and other cloud providers now give developers access to powerful foundation models through simple interfaces. This shift has redefined what it means to be skilled in artificial intelligence, moving the emphasis from building models to applying them effectively in real-world scenarios.

Generative AI is designed to solve practical problems quickly. Whether it is generating content, summarizing information, or enhancing user interactions, the goal is to deliver value without requiring extensive development cycles. This has created a demand for professionals who can work with these tools efficiently, understand their capabilities, and integrate them into applications. As a result, the traditional boundaries between data science and software development are becoming less distinct.

From Model Building to Application Integration

In earlier machine learning workflows, much of the effort was spent on building and refining models. Developers and data scientists worked closely to prepare datasets, select algorithms, and evaluate performance. While these skills remain valuable, they are no longer the primary focus in many organizations. Instead, the emphasis has shifted toward integrating existing models into applications.

This change has introduced new responsibilities for professionals. They must understand how to connect AI services with web applications, mobile platforms, and enterprise systems. This involves working with APIs, managing data pipelines, and ensuring that different components communicate effectively. The ability to integrate AI seamlessly into user-facing applications is now a critical skill, reflecting the practical nature of modern development practices.

Understanding Foundation Models and Their Role

Foundation models are at the core of generative AI. These large-scale models are trained on vast amounts of data and can perform a wide range of tasks without needing to be retrained for each specific use case. This flexibility allows organizations to use a single model for multiple applications, reducing the need for custom development.

Professionals must understand how these models work at a conceptual level, including their strengths and limitations. While deep technical knowledge of training processes may not be required, it is important to know how to select the right model for a given task and how to configure it effectively. This includes adjusting inference parameters, managing inputs and outputs, and verifying that the model behaves as expected in different scenarios.

The Importance of API-Driven Development

One of the defining characteristics of generative AI is its reliance on APIs. These interfaces allow developers to access complex AI capabilities without needing to manage the underlying infrastructure. API-driven development has become a standard approach, enabling teams to build applications quickly and efficiently.

Working with APIs requires a different set of skills compared to traditional machine learning workflows. Professionals must understand how to authenticate requests, handle responses, and manage errors. They must also be able to design systems that can scale as demand increases. This includes optimizing performance, reducing latency, and ensuring that applications remain responsive under heavy usage.
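
The error-handling side of API work described above can be sketched as a retry wrapper with exponential backoff. This is a minimal illustration rather than any provider's SDK behavior: `TransientError`, `call_with_retries`, and `make_flaky_request` are hypothetical names, and the fake request stands in for a real API call.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error type standing in for a throttling or timeout response."""


def call_with_retries(request_fn, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call request_fn, retrying on TransientError with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return request_fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up after the final attempt
            # Delay doubles each attempt (base, 2*base, 4*base, ...) plus up to 100 ms of jitter.
            delay = base_delay * (2 ** (attempt - 1)) + random.uniform(0, 0.1)
            sleep(delay)


def make_flaky_request(failures_before_success):
    """Build a fake request that fails a fixed number of times, then succeeds."""
    state = {"calls": 0}

    def request():
        state["calls"] += 1
        if state["calls"] <= failures_before_success:
            raise TransientError("simulated throttling")
        return {"status": 200, "calls": state["calls"]}

    return request


if __name__ == "__main__":
    # Skip real sleeping in the demo by passing a no-op sleep function.
    print(call_with_retries(make_flaky_request(2), sleep=lambda _: None))
```

Many cloud SDKs include configurable retry behavior of their own, so a wrapper like this is mainly useful for understanding the pattern or for calls that the SDK does not cover.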

Managing Data Flow and Pipelines

Data remains a critical component of any AI system, even in the era of generative models. Professionals must know how to manage data flow between different components of a system. This involves setting up pipelines that collect, process, and deliver data to AI services in a reliable and efficient manner.

Effective data management ensures that AI systems produce accurate and relevant results. It also helps maintain consistency across different parts of an application. Professionals must be able to design pipelines that handle large volumes of data, adapt to changing requirements, and integrate with other services within the cloud environment.
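
A collect, process, and deliver pipeline of the kind described above can be sketched with plain Python generators. This is an assumption-laden toy: `clean`, `batch`, and `run_pipeline` are hypothetical helpers, and `send_batch` stands in for whatever call would hand data to an AI service.

```python
from itertools import islice


def clean(records):
    """Normalize whitespace and drop records that are empty after cleaning."""
    for text in records:
        normalized = " ".join(text.split())
        if normalized:
            yield normalized


def batch(items, size):
    """Group an iterable into lists of at most `size` items."""
    iterator = iter(items)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def run_pipeline(raw_records, send_batch, batch_size=2):
    """Stream records through cleaning and batching, handing each batch to send_batch."""
    sent = 0
    for chunk in batch(clean(raw_records), batch_size):
        send_batch(chunk)
        sent += len(chunk)
    return sent


if __name__ == "__main__":
    delivered = []
    raw = ["  hello   world ", "", "second", "   ", "third"]
    count = run_pipeline(raw, delivered.append)
    print(count, delivered)
```

Because each stage is a generator, records flow through one at a time instead of being loaded into memory together, which is one simple way to keep a pipeline workable as volumes grow.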

Security and Access Control in AI Systems

As AI systems become more integrated into business operations, security has become a top priority. Professionals must ensure that only authorized users can access AI services and that sensitive data is protected at all times. This involves implementing access controls, managing identities, and monitoring system activity.

Security considerations extend beyond basic access management. Professionals must also be aware of potential vulnerabilities in AI systems, such as misuse of models or exposure of sensitive information. Ensuring the security of AI applications requires a proactive approach, combining technical measures with ongoing monitoring and evaluation.
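
The combination of access control and activity monitoring described above can be illustrated with a deny-by-default permission check that records every decision. The role names, action strings, and the `AccessController` class are hypothetical; a real deployment would rely on the cloud provider's identity and access management service instead of an in-process table.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-permission mapping; anything not listed is denied.
ROLE_PERMISSIONS = {
    "analyst": {"model:invoke"},
    "ml_admin": {"model:invoke", "model:configure", "logs:read"},
}


@dataclass
class AccessController:
    """Checks actions against ROLE_PERMISSIONS and keeps an audit trail."""

    audit_log: list = field(default_factory=list)

    def is_allowed(self, user, role, action):
        # Unknown roles map to an empty permission set, so they are denied.
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append(
            {"user": user, "role": role, "action": action, "allowed": allowed}
        )
        return allowed


if __name__ == "__main__":
    controller = AccessController()
    print(controller.is_allowed("ana", "analyst", "model:invoke"))
    print(controller.is_allowed("ana", "analyst", "model:configure"))
    print(len(controller.audit_log))
```

Logging denied requests alongside allowed ones is the design choice worth noting here, since the denials are often what reveal misuse or misconfiguration.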

Cost Management and Resource Optimization

Generative AI services can be resource-intensive, making cost management an essential skill. Professionals must understand how to use these services efficiently to avoid unnecessary expenses. This includes selecting the right pricing models, optimizing usage patterns, and monitoring costs over time.

Resource optimization is closely linked to performance. By managing resources effectively, professionals can ensure that applications run smoothly while minimizing costs. This requires a balance between delivering high-quality results and maintaining financial efficiency, which is a key consideration for organizations adopting AI technologies.
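
The trade-off between quality and cost described above becomes concrete with a rough estimate. The sketch below assumes token-based pricing; the prices, request volumes, and the `TokenPricing` and `estimate_monthly_cost` names are all invented for illustration and are not real rates for any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical per-1,000-token prices in USD; not real rates for any provider."""

    input_per_1k: float
    output_per_1k: float


def estimate_monthly_cost(pricing, requests_per_day, input_tokens, output_tokens, days=30):
    """Estimate monthly spend for a workload with fixed average token counts per request."""
    cost_per_request = (
        input_tokens / 1000 * pricing.input_per_1k
        + output_tokens / 1000 * pricing.output_per_1k
    )
    return cost_per_request * requests_per_day * days


if __name__ == "__main__":
    small = TokenPricing(input_per_1k=0.001, output_per_1k=0.002)
    large = TokenPricing(input_per_1k=0.010, output_per_1k=0.030)
    for name, pricing in [("small", small), ("large", large)]:
        cost = estimate_monthly_cost(
            pricing, requests_per_day=10_000, input_tokens=800, output_tokens=200
        )
        print(f"{name}: ${cost:,.2f} per month")
```

With these made-up numbers the larger model costs roughly twelve times as much per month for the same traffic, which is the kind of gap that makes model selection a cost decision as well as a quality decision.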

Observability and Monitoring of AI Applications

In production environments, it is not enough to deploy an AI system and assume it will function correctly. Continuous monitoring is essential to ensure that the system performs as expected. Professionals must track metrics such as response times, error rates, and usage patterns to identify potential issues.

Observability involves collecting and analyzing data from different parts of the system. This allows professionals to gain insights into how the application is functioning and where improvements can be made. Effective monitoring helps maintain reliability, improve performance, and ensure a positive user experience.
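
The metrics named above, response times and error rates, can be summarized from raw request records in a few lines. This is a minimal sketch: `RequestRecord` and `summarize` are hypothetical names, and the percentile uses the nearest-rank method, which is one of several common definitions.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestRecord:
    latency_ms: float
    ok: bool


def percentile(values, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(records):
    """Return request count, error rate, and p50/p95 latency for a list of records."""
    if not records:
        raise ValueError("no records")
    latencies = [r.latency_ms for r in records]
    errors = sum(1 for r in records if not r.ok)
    return {
        "count": len(records),
        "error_rate": errors / len(records),
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
    }


if __name__ == "__main__":
    # Twenty synthetic requests; every tenth one is marked as failed.
    records = [RequestRecord(float(i), i % 10 != 0) for i in range(1, 21)]
    print(summarize(records))
```

Tracking a high percentile alongside the median matters because a small share of slow requests can degrade user experience while leaving the median almost unchanged.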

Handling Model Behavior in Real-World Scenarios

Generative AI models can behave unpredictably in certain situations, especially when dealing with complex or ambiguous inputs. Professionals must be prepared to handle these challenges by implementing safeguards and validation mechanisms. This includes filtering outputs, setting constraints, and ensuring that results meet quality standards.

Understanding model behavior is essential for building reliable applications. Professionals must test systems thoroughly and be prepared to make adjustments as needed. This iterative approach helps ensure that AI applications deliver consistent and accurate results in real-world conditions.
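
The safeguards mentioned above, filtering outputs and setting constraints, can be sketched as a validation step that runs before a model response reaches the user. The expected response shape (a JSON object with `summary` and `sentiment` keys), the allowed values, and the function name are assumptions made for this example.

```python
import json
import re

# Simple email pattern for redaction; real systems would use broader detection.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
REQUIRED_KEYS = {"summary", "sentiment"}
ALLOWED_SENTIMENTS = {"positive", "neutral", "negative"}


def validate_model_output(raw_text, max_summary_chars=280):
    """Parse and check a model response; return (cleaned_dict_or_None, problems)."""
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError:
        return None, ["response is not valid JSON"]
    if not isinstance(data, dict):
        return None, ["response is not a JSON object"]
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        return None, [f"missing keys: {sorted(missing)}"]

    problems = []
    sentiment = data["sentiment"]
    if not isinstance(sentiment, str) or sentiment not in ALLOWED_SENTIMENTS:
        problems.append("sentiment outside allowed set")
    summary = str(data["summary"])
    if len(summary) > max_summary_chars:
        problems.append("summary too long")
    # Filter the output: replace anything that looks like an email address.
    cleaned = dict(data, summary=EMAIL_PATTERN.sub("[redacted email]", summary))
    return cleaned, problems


if __name__ == "__main__":
    raw = '{"summary": "Contact bob@example.com for details", "sentiment": "positive"}'
    print(validate_model_output(raw))
```

Returning a list of problems instead of raising on the first one lets the calling application decide whether to retry the request, fall back to a default, or surface the issue for review.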

The Role of Cloud Infrastructure in AI Development

Cloud infrastructure plays a central role in modern AI development. It provides the resources needed to run applications, store data, and manage workloads. Professionals must understand how to use cloud services effectively, including how to deploy applications, manage resources, and ensure scalability.

Working with cloud infrastructure requires knowledge of system architecture and design principles. Professionals must be able to build systems that are resilient, scalable, and efficient. This includes understanding how different services interact and how to optimize performance across the entire system.

Collaboration Between Development and Operations Teams

The shift toward production-focused AI has increased the need for collaboration between development and operations teams. The two groups must work together to ensure that applications are built, deployed, and maintained effectively. This collaborative approach is often referred to as DevOps, or MLOps when applied to machine learning systems, and it is becoming an essential part of modern AI workflows.

Collaboration requires clear communication and a shared understanding of goals and responsibilities. Professionals must be able to coordinate their efforts, share knowledge, and adapt to changing requirements. This teamwork is critical for delivering successful AI projects.

Adapting to Rapid Technological Change

The field of generative AI is evolving rapidly, with new tools and technologies being introduced regularly. Professionals must be able to adapt to these changes and continuously update their skills. This requires a commitment to learning and a willingness to explore new approaches.

Staying current with technological developments helps professionals remain competitive and ensures that they can take advantage of new opportunities. It also enables them to provide more effective solutions to the challenges faced by organizations.

Expanding Career Opportunities in Generative AI

The rise of generative AI has created new career opportunities across a wide range of industries. Professionals with skills in AI integration, cloud computing, and system management are in high demand. These roles often involve working on innovative projects that leverage the latest technologies to deliver value.

As organizations continue to adopt AI, the demand for skilled professionals is expected to grow. This creates opportunities for individuals to build rewarding careers in this field, provided they are willing to adapt to changing requirements and develop the necessary skills.

The Shift Toward Practical, Production-Level Expertise

The overall trend in AI development is toward practical, production-level expertise. Organizations are looking for professionals who can deliver results rather than only demonstrate theoretical knowledge. This includes the ability to build applications, manage systems, and ensure reliability.

Certifications and training programs are evolving to reflect this shift. They focus on real-world scenarios and practical skills, ensuring that professionals are prepared for the challenges of modern AI development. This emphasis on application and implementation is shaping the future of the field.

The Integration of AI into Everyday Applications

AI is no longer a specialized technology used only in advanced research projects. It is now a fundamental part of everyday applications, from customer support systems to content generation tools. Professionals must understand how to integrate AI into these applications in a way that enhances user experience and delivers value.

This integration requires a combination of technical skills and creative thinking. Professionals must be able to design solutions that are both functional and user-friendly, ensuring that AI capabilities are accessible and effective.

The Continuous Evolution of Skill Requirements

As the technology landscape continues to evolve, so too will the skills required to work with AI. Professionals must be prepared to adapt to new tools, methodologies, and best practices. This ongoing evolution is a defining characteristic of the field and highlights the importance of continuous learning.

By understanding the trends shaping the industry, professionals can position themselves for success and ensure that their skills remain relevant in a rapidly changing environment.

Deciding Between Completing the ML Specialty or Switching Paths

The retirement timeline set by Amazon Web Services has created an important decision point for professionals currently preparing for the Machine Learning – Specialty certification. This decision is not only about completing an exam but about aligning your learning path with long-term career goals. Individuals who are close to being exam-ready may benefit from finishing the certification, especially if their current role focuses on data science, experimentation, or model evaluation. However, those who are still early in their preparation may find it more valuable to switch to newer certification paths that emphasize production-level skills and generative AI.

The most important factor in making this decision is understanding where the industry is heading. Machine learning roles are increasingly focused on deploying and managing AI systems rather than building models from scratch. Professionals need to evaluate whether their current learning path supports this shift. If their work involves integrating AI into applications, managing cloud environments, or supporting scalable systems, moving to a more modern certification path may offer greater long-term benefits.

Understanding the Impact on Current Certification Holders

For individuals who have already earned the Machine Learning – Specialty certification, the retirement does not immediately reduce its value. The certification remains valid for its full duration and continues to demonstrate strong knowledge of machine learning concepts. It represents a level of expertise that was highly relevant at the time it was achieved and can still be useful in roles that require a solid understanding of data science and model development.

At the same time, the evolving industry means that certification holders may need to expand their skill sets to stay competitive. This includes learning how to apply machine learning in production environments, working with cloud-based tools, and understanding modern AI workflows. By building on their existing knowledge, professionals can transition into roles that require a broader and more practical set of skills.

Evaluating Career Goals in a Changing Landscape

The retirement of the certification provides a good opportunity for professionals to reassess their career goals. Artificial intelligence is no longer limited to specialized research roles. It now includes a wide range of positions that involve application development, system integration, and operational management.

Professionals should think about where they want to position themselves. Those interested in research-focused roles may still benefit from deep machine learning knowledge, while those aiming for application-focused roles will need to develop skills related to cloud services and system design. Understanding these differences can help in choosing the most suitable path forward.

The Growing Importance of Cloud-Native AI Skills

As AI systems become more integrated into cloud environments, cloud-native skills are becoming essential. Professionals need to understand how to work with cloud services, manage resources, and design scalable systems. This includes knowledge of deployment methods, monitoring tools, and performance optimization.

Cloud-native AI skills also involve understanding how different services interact within a larger system. Professionals must be able to design solutions that are efficient, reliable, and capable of handling changing workloads. This requires both technical knowledge and practical experience.

Bridging the Gap Between Theory and Practice

One of the biggest changes in the field is the shift from theoretical knowledge to practical application. While understanding machine learning concepts is still important, professionals must also know how to apply this knowledge in real-world scenarios. This includes building applications, working with tools, and solving practical problems.

Bridging this gap requires hands-on experience. Professionals should work with real datasets, experiment with tools, and create solutions that can be deployed in production environments. This type of experience is essential for developing confidence and real-world competence.

Adapting to New Certification Frameworks

The introduction of new certification paths reflects the changing needs of the industry. These certifications focus on skills that are directly applicable to modern AI workflows, such as integration, deployment, and system management. They are designed to validate practical abilities rather than just theoretical understanding.

Adapting to these new frameworks requires a shift in mindset. Professionals need to see certifications as a way to demonstrate real-world skills and capabilities. This approach is more aligned with what employers are looking for in today’s job market.

The Role of Continuous Learning in Career Growth

Continuous learning is essential in a field that changes as quickly as artificial intelligence. Professionals need to stay updated with new tools, technologies, and best practices. This includes both structured learning and self-directed exploration.

The ability to learn and adapt is one of the most valuable skills in the technology field. By staying open to new ideas and continuously improving, professionals can remain relevant and competitive. This mindset is especially important during periods of change, such as the transition away from older certifications.

Aligning Skills With Industry Demand

The retirement of the Machine Learning – Specialty certification highlights the need to align skills with current industry demand. Employers are looking for professionals who can work with modern AI tools and deliver practical solutions. This includes integrating AI into applications, managing cloud environments, and ensuring system reliability.

Professionals should focus on developing skills that are in demand. This involves understanding current trends, identifying key technologies, and gaining experience in areas that are growing in importance. Aligning skills with industry needs can improve career opportunities and long-term success.

Exploring New Opportunities in Generative AI

Generative AI is one of the fastest-growing areas in the technology industry. It offers opportunities to work on innovative projects that involve content creation, automation, and improved user experiences. Professionals who develop skills in this area can position themselves at the forefront of modern technology.

Working with generative AI requires both technical and creative skills. Professionals need to understand how to use models effectively, design user-friendly systems, and ensure that outputs meet quality standards. This combination of skills makes generative AI an exciting and valuable field.

The Importance of Practical Experience and Projects

Practical experience is just as important as formal certifications. Employers value professionals who can demonstrate their ability to apply knowledge in real-world situations. This often involves working on projects, building applications, and solving real problems.

Creating a portfolio of projects can help professionals showcase their skills. These projects provide clear evidence of capability and show the ability to deliver results. They also offer opportunities to learn new tools and experiment with different approaches.

Navigating the Transition Period Effectively

The period leading up to the retirement of the certification is an important time for planning. Professionals need to assess their current progress, consider their options, and choose a path that aligns with their goals. This might involve finishing the certification, switching to a new path, or combining both strategies.

Careful planning is essential. Professionals should consider their level of preparation, career objectives, and available time. By taking a thoughtful approach, they can make informed decisions and avoid unnecessary challenges.

The Broader Impact on the AI and Cloud Ecosystem

The retirement of the Machine Learning – Specialty certification reflects a larger shift in the AI and cloud ecosystem. It shows the growing importance of practical, production-level skills and the integration of AI into everyday applications.

As the ecosystem continues to evolve, new tools and approaches will emerge. Professionals need to stay flexible and adapt to these changes. This adaptability is essential for long-term success in the field.

Building a Future-Ready Skill Set

Preparing for the future requires a proactive approach to learning. Professionals should focus on skills that will remain valuable, such as generative AI, cloud computing, and system integration.

A future-ready skill set combines technical knowledge with practical experience. It allows professionals to work effectively in modern environments and adapt to new challenges. By focusing on these areas, individuals can build strong and sustainable careers.

Strengthening Problem-Solving and Analytical Thinking

Problem-solving and analytical thinking are essential skills in AI roles. Professionals must be able to analyze situations, identify challenges, and develop effective solutions. These skills support both technical work and innovation.

Strong problem-solving abilities help professionals handle complex situations and adapt to changing requirements. They also enable creative thinking and the development of new ideas, which are important in a fast-moving field like AI.

Embracing the Evolution of AI Careers

The field of artificial intelligence is constantly evolving, creating new opportunities and challenges. Professionals need to embrace this change and be willing to adapt. This includes learning new skills, exploring different roles, and staying informed about industry trends.

By accepting and adapting to change, professionals can take advantage of new opportunities and build successful careers. The retirement of this certification is just one example of how the industry is evolving.

Preparing for Long-Term Career Sustainability

Long-term career success depends on continuous growth and adaptability. Professionals should invest in their skills, stay informed about industry developments, and remain open to change.

Building a sustainable career also involves gaining diverse experience and maintaining a commitment to learning. These factors help professionals remain relevant and succeed in a competitive and ever-changing environment.

Conclusion

The retirement of the Machine Learning – Specialty certification by Amazon Web Services represents more than the end of a single credential. It signals a broader transformation in how artificial intelligence skills are defined, developed, and applied in real-world environments. The industry has moved away from a model-centric approach toward one that prioritizes integration, scalability, and operational efficiency. This evolution reflects the growing maturity of AI technologies and their increasing role in everyday business operations.

In earlier stages of machine learning adoption, success was largely tied to the ability to design and optimize models. Professionals focused on understanding algorithms, refining datasets, and improving accuracy. While these skills remain important, they are no longer sufficient on their own. Organizations now expect AI systems to function reliably in production environments, integrate seamlessly with other technologies, and deliver consistent value to users. This shift has redefined what it means to be skilled in artificial intelligence and has influenced how certifications are structured.

The rise of generative AI has further accelerated this transformation. Because developers can now access powerful models through simple interfaces, advanced capabilities no longer require a team to build and train its own models. This has expanded the range of professionals who can work with AI and has shifted the focus toward practical implementation. Instead of spending months building models from scratch, teams can deploy intelligent features quickly and efficiently. As a result, demand for skills related to integration, system design, and operational management has increased significantly.

For professionals, this change presents both challenges and opportunities. On one hand, it requires a willingness to adapt and learn new skills. Traditional knowledge of machine learning must be complemented with an understanding of cloud services, APIs, data pipelines, and system architecture. On the other hand, it opens up new career paths and possibilities. Individuals who embrace these changes can position themselves at the forefront of a rapidly evolving field, working on innovative projects that have a direct impact on businesses and users.

The decision to retire the certification also highlights the importance of aligning learning paths with industry trends. Certifications are most valuable when they reflect the skills that employers are actively seeking. As the focus shifts toward production-level expertise, professionals must ensure that their training and development efforts are aligned with these priorities. This may involve transitioning to new certification paths, gaining hands-on experience, or exploring emerging areas such as generative AI.

Another key takeaway is the growing importance of practical experience. While certifications provide a structured way to validate knowledge, real-world application is what ultimately determines success. Professionals who can demonstrate their ability to build, deploy, and manage AI systems are more likely to stand out in the job market. This makes it essential to work on projects, experiment with tools, and continuously refine skills through hands-on practice.

The evolving nature of AI also underscores the need for continuous learning. Technologies are advancing at a rapid pace, and new tools and methodologies are constantly being introduced. Professionals must stay informed about these developments and be willing to update their skills accordingly. This requires a proactive approach to learning, as well as a mindset that embraces change and innovation.

Collaboration is another important aspect of modern AI development. As machine learning becomes more integrated into broader systems, professionals must work closely with teams across different disciplines, including developers, cloud engineers, security specialists, and business stakeholders. Effective communication and teamwork are essential for building solutions that meet organizational goals and deliver value to users.

The retirement of the Machine Learning – Specialty certification also reflects a shift in how success is measured in AI projects. Accuracy and performance are still important, but they are no longer the only criteria. Organizations now consider factors such as scalability, reliability, cost efficiency, and user experience. Professionals must be able to balance these factors and make decisions that align with both technical and business objectives.

Looking ahead, the future of AI careers will likely be shaped by the continued integration of intelligent systems into everyday applications. As AI becomes more embedded in business processes, the demand for professionals who can manage and optimize these systems will continue to grow. This creates opportunities for individuals to develop diverse skill sets and take on roles that combine technical expertise with strategic thinking.

The transition away from older certifications should not be viewed as a loss but as an opportunity for growth. It encourages professionals to reassess their skills, explore new areas, and adapt to changing demands. By embracing this transition, individuals can build careers that are not only relevant but also resilient in the face of ongoing technological change.

Ultimately, the key to success in this evolving landscape lies in adaptability. Professionals who are willing to learn, experiment, and evolve with the industry will be well-positioned to thrive. The retirement of the Machine Learning – Specialty certification serves as a reminder that the field of artificial intelligence is constantly changing. By staying flexible and focused on practical skills, individuals can navigate these changes effectively and continue to grow in their careers.