Python follows a sequential execution model where each instruction is processed in order, and the next instruction does not begin until the current one has fully completed. This execution style is known as blocking behavior, and it plays a significant role in how Python handles tasks such as function execution, data processing, and network communication. When an API call is made within this model, the entire program pauses at that point until the response is received from the external server. This waiting period becomes part of the program’s execution timeline, which means no other task can proceed during that time unless explicitly structured otherwise.

This behavior is simple to understand and makes Python accessible for beginners, but it introduces performance challenges when dealing with operations that depend on external systems. API calls are one of the most common examples of such operations because they rely on network communication, which is inherently unpredictable in terms of speed. Even if the request is small, the time taken for the request to travel to the server, be processed, and return can vary significantly depending on network conditions and server load.

In a blocking execution model, each API request must complete fully before the next one begins. If multiple API calls are required, they are executed one after another in a strict sequence. This means that the total execution time becomes the sum of all individual waiting times. As the number of requests increases, the delay grows proportionally, leading to slower application performance.

This sequential dependency also affects how Python manages system resources. During the waiting period of an API call, the processor is not actively engaged in computation related to the application’s logic. Instead, it remains idle, waiting for the external response. This results in inefficient resource utilization, especially in applications that rely heavily on network communication or data retrieval from external services.

The blocking nature of Python is not a flaw but rather a design choice that prioritizes simplicity and readability. It allows developers to write code straightforwardly without having to manage complex execution flows. However, as modern applications have evolved to require higher performance and responsiveness, especially in network-heavy environments, this model has shown limitations.

Performance Challenges in Sequential API Processing

When applications rely on multiple API calls to function, the sequential execution model becomes a bottleneck. Each request must wait for the previous one to complete before it begins. This creates a cumulative delay effect where the total execution time increases linearly with the number of API calls. For example, if each API call takes even a small amount of time, the combined delay becomes noticeable when scaled across multiple requests.

This issue becomes more pronounced in applications that depend on real-time or near-real-time data. Systems that fetch data from multiple external sources, such as financial applications, analytics dashboards, or content aggregation tools, often experience performance degradation when using sequential API processing. The delay introduced by each request directly impacts the responsiveness of the entire system.

Another important aspect of this limitation is user experience. In applications where users expect immediate feedback, waiting for multiple sequential API calls can result in noticeable lag. Even a few seconds of delay can affect the perceived performance of an application, leading to reduced usability and engagement. This is especially critical in interactive systems where users expect fast and continuous responses.

Scalability is also affected by sequential processing. As applications grow and the number of API calls increases, the system must handle a larger amount of waiting time. This does not scale efficiently because the execution model does not allow overlapping of tasks. Each new request adds to the total delay instead of being processed concurrently with others.

In addition to performance concerns, sequential API processing can also limit architectural flexibility. Developers are often forced to design workflows that minimize the number of external calls or reduce dependency on external services. While this can help improve performance, it also restricts the functionality and complexity of the application.

Understanding Waiting Time and System Resource Utilization

One of the key inefficiencies in blocking execution is the way it handles waiting time. When an API call is made, the program enters a waiting state until the response is received. During this period, the CPU is not actively executing meaningful tasks related to the application. Instead, it remains idle, which leads to underutilization of system resources.

This idle time is particularly problematic in high-performance applications where efficient resource usage is critical. Modern computing systems are designed to handle multiple operations simultaneously, but blocking execution prevents this capability from being fully utilized. As a result, the system’s potential performance is not fully realized.

In environments where multiple users or processes are interacting with the system simultaneously, this inefficiency becomes even more significant. Each process must wait its turn for execution, leading to increased latency and reduced throughput. Over time, this can create performance bottlenecks that limit the scalability of the application.

The inefficiency of waiting time in blocking execution highlights the need for a different approach to handling tasks that involve delays. Instead of halting the entire program, it becomes more effective to allow other operations to continue while waiting for external responses. This concept forms the foundation of non-blocking execution models.

Transition Toward Concurrent Execution Models

As application demands have evolved, developers have increasingly moved toward execution models that allow multiple tasks to progress simultaneously. This shift is driven by the need for improved performance, better scalability, and more efficient resource utilization.

Concurrent execution models enable systems to handle multiple operations in overlapping time periods. Instead of waiting for one task to complete before starting another, the system can switch between tasks as needed. This creates the illusion of parallelism within a single-threaded environment.

The primary advantage of this approach is improved efficiency. While one task is waiting for a response, other tasks can continue executing. This reduces idle time and ensures that system resources are continuously utilized.

In the context of API calls, concurrency allows multiple requests to be initiated without waiting for each one to complete before starting the next. This significantly reduces total execution time when dealing with multiple external requests.

However, adopting concurrent execution requires a different way of thinking about program structure. Developers must consider how tasks are scheduled, how execution flow is managed, and how results are handled when they arrive asynchronously. This introduces additional complexity compared to traditional sequential execution.

Despite this complexity, the benefits of concurrency make it an essential concept in modern application development. Systems that require high performance and responsiveness often rely on concurrent execution to meet their requirements.

Relevance of Non-Blocking Behavior in Modern Systems

Non-blocking execution plays a critical role in modern software systems, particularly those that rely heavily on external communication. In such systems, waiting for responses is unavoidable, but blocking the entire application during that wait is inefficient.

Non-blocking behavior allows the system to remain active even when certain tasks are delayed. This ensures that other operations can continue without interruption. The result is a more responsive and efficient system that can handle a larger number of tasks simultaneously.

This approach is especially important in distributed systems where multiple services communicate over networks. Network latency can vary significantly, and non-blocking execution helps mitigate the impact of these delays by allowing other processes to continue working while waiting for responses.

Non-blocking systems also improve overall throughput. By overlapping waiting periods with active computation, the system can complete more tasks in a given amount of time compared to a strictly sequential model. This makes it possible to handle higher workloads without increasing hardware requirements proportionally.

Another important benefit is improved responsiveness. In user-facing applications, non-blocking execution ensures that the system remains responsive even when performing multiple background operations. This leads to a smoother and more efficient user experience.

Foundational Shift Toward Asynchronous Thinking

The move from blocking to non-blocking execution represents a fundamental shift in how developers approach problem-solving in programming. Instead of thinking in terms of strict sequences, developers begin to think in terms of tasks that can be managed independently and executed concurrently.

This shift requires understanding how tasks interact with each other and how execution can be structured to maximize efficiency. It also involves recognizing which parts of a program involve waiting and how those parts can be isolated from the main execution flow.

Asynchronous thinking emphasizes flexibility in execution. Tasks are no longer seen as strictly ordered steps but as independent units of work that can be paused and resumed as needed. This allows for more efficient use of system resources and better overall performance.

This approach becomes particularly powerful when dealing with API calls, where waiting times are unpredictable. By decoupling task execution from waiting periods, systems can maintain continuous operation without being slowed down by external dependencies.

The increasing importance of asynchronous execution reflects the changing nature of modern applications. As systems become more interconnected and data-driven, the ability to handle multiple operations efficiently has become a critical requirement for scalable and high-performance software.

Introduction to Asynchronous Execution in Python

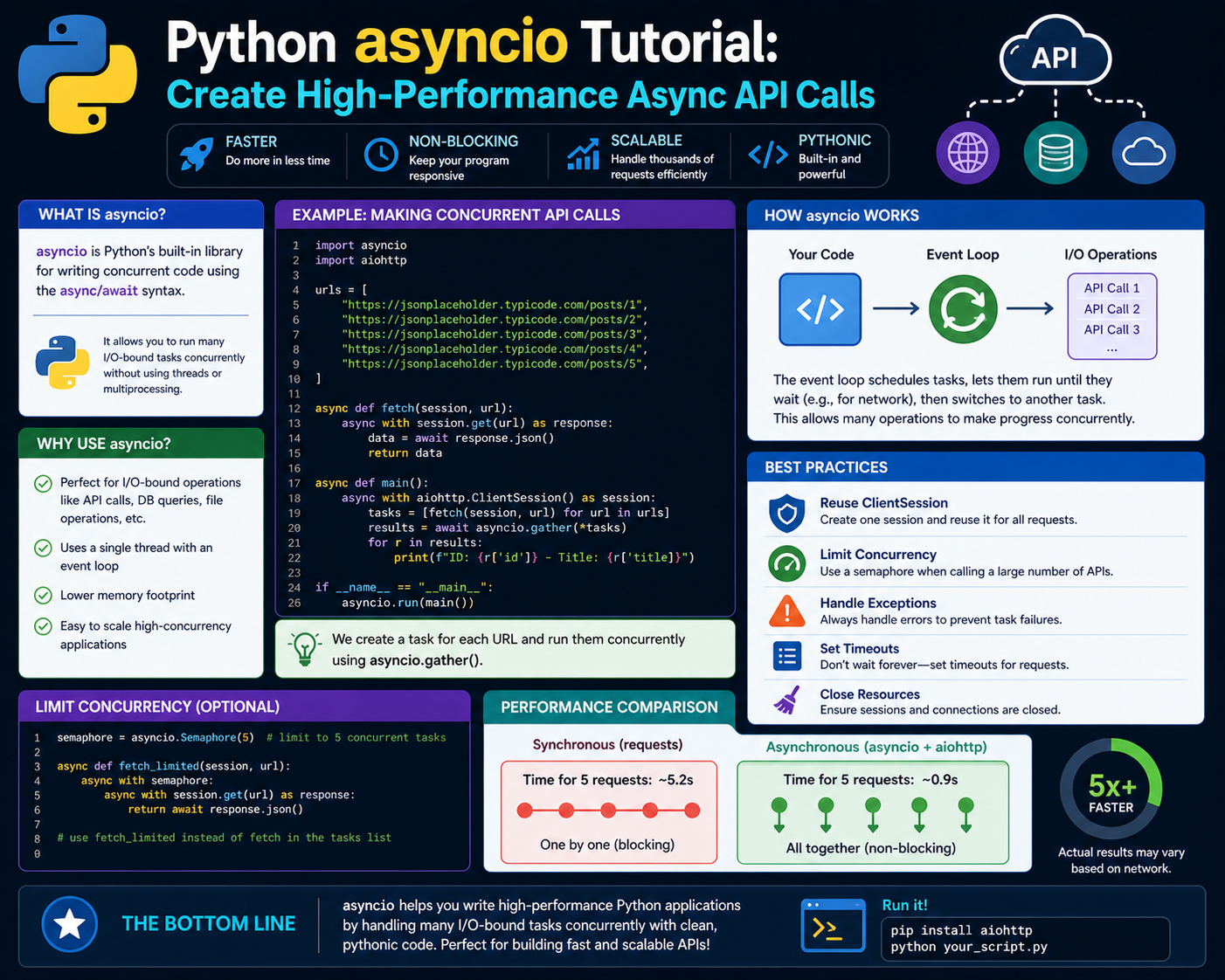

Asynchronous execution in Python introduces a programming approach that allows multiple tasks to be handled in a non-linear manner, improving efficiency when dealing with operations that involve waiting. Instead of executing each instruction in a strict sequence, asynchronous execution enables a program to pause specific tasks when they are waiting for external responses and continue executing other tasks in the meantime. This is especially important in scenarios such as API communication, file handling, and database queries where delays are common and unavoidable.

In a traditional blocking model, each operation must complete before the next begins, which leads to inefficiency when tasks involve waiting. Asynchronous execution addresses this limitation by allowing the program to remain active during these waiting periods. The key idea is not to speed up individual tasks but to ensure that the system as a whole remains productive while certain operations are idle.

Python supports asynchronous execution through language-level constructs that allow developers to define functions capable of being paused and resumed. These constructs change how execution flow is managed, introducing a cooperative scheduling system where tasks voluntarily yield control when they are unable to proceed immediately.

This approach is particularly valuable in modern applications where multiple external requests must be handled simultaneously. Instead of waiting for each request to complete one after another, asynchronous execution allows multiple requests to be initiated and processed concurrently, significantly reducing overall waiting time.

Core Concept of Event-Driven Execution Model

At the heart of asynchronous programming in Python is the event-driven execution model. In this model, the program is structured around events and tasks rather than a fixed sequence of instructions. Each task is treated as an independent unit of work that can be paused and resumed based on the availability of resources or the completion of external operations.

When a task encounters a waiting period, such as an API call, it does not block the entire program. Instead, it yields control back to the event loop, which is responsible for managing all active tasks. The event loop continuously checks which tasks are ready to run and schedules them accordingly.

This mechanism ensures that the system remains responsive even when certain tasks are waiting for external responses. The event loop acts as a central coordinator that distributes execution time among multiple tasks efficiently.

The importance of this model becomes clear in applications that require high concurrency. Instead of dedicating separate threads or processes for each task, asynchronous execution allows a single thread to manage multiple tasks effectively, reducing overhead and improving scalability.

Role of Coroutines in Asynchronous Programming

Coroutines are a fundamental building block of asynchronous execution in Python. A coroutine is a special type of function that can pause its execution at certain points and resume later from the same position. This ability to suspend and resume execution makes coroutines ideal for handling tasks that involve waiting.

Unlike traditional functions that run to completion once called, coroutines operate cooperatively within the event-driven system. They yield control when they reach a point where they must wait for an external operation, such as an API response. Once the required data becomes available, the coroutine resumes execution from where it left off.

This behavior allows multiple coroutines to run concurrently within a single thread. Instead of executing one after another, coroutines take turns executing based on availability, creating the illusion of parallelism without the complexity of multi-threading.

Coroutines are particularly useful in I/O-bound operations, where the program spends more time waiting for input or output rather than performing actual computation. By allowing other coroutines to execute during waiting periods, the overall efficiency of the system is significantly improved.

Async and Await Mechanism in Python

Python introduces two key keywords to support asynchronous programming: async and await. These keywords are used to define and manage coroutines, enabling structured asynchronous execution.

The async keyword is used to define a function as a coroutine. When a function is marked with async, it does not execute immediately when called. Instead, it returns a coroutine object that can be scheduled and managed by the event loop.

The await keyword is used inside asynchronous functions to pause execution until a specific operation is completed. When a coroutine encounters an await statement, it temporarily suspends its execution and allows other coroutines to run. Once the awaited operation is complete, execution resumes from that point.

This mechanism allows developers to write asynchronous code in a way that resembles synchronous code, making it easier to read and maintain while still benefiting from non-blocking execution.

The combination of async and await provides a structured way to handle concurrency without manually managing threads or callbacks. This simplifies the development process while maintaining high performance.

Event Loop as the Execution Controller

The event loop is the central component that drives asynchronous execution in Python. It is responsible for managing the lifecycle of coroutines, scheduling their execution, and handling task switching.

When asynchronous code is executed, the event loop continuously monitors all active tasks. It determines which tasks are ready to run and which ones are waiting for external operations. Tasks that are waiting are temporarily suspended, while ready tasks are executed.

This continuous cycle allows the system to efficiently manage multiple operations without blocking. The event loop ensures that no time is wasted waiting for tasks that are not ready to proceed.

The event loop also handles communication between tasks and external systems. When an API response is received, the event loop identifies the corresponding coroutine and resumes its execution.

This centralized control mechanism is what enables asynchronous programming to function effectively within a single-threaded environment.

Concurrency Without Traditional Multithreading

One of the most important advantages of asynchronous execution is that it provides concurrency without relying on traditional multithreading. In multithreaded systems, multiple threads are created to handle different tasks simultaneously. While this approach can improve performance, it also introduces complexity such as thread synchronization, locking mechanisms, and potential race conditions.

Asynchronous execution avoids these issues by using a single-threaded event loop to manage multiple tasks. Instead of running tasks in parallel, it switches between them efficiently based on their readiness to execute.

This approach reduces overhead and simplifies program design. Since all tasks run within a single thread, there is no need for complex synchronization mechanisms. This makes asynchronous programming safer and easier to manage in many cases.

Although asynchronous execution does not provide true parallelism in the same way as multithreading, it achieves similar performance improvements for I/O-bound tasks by minimizing idle time and maximizing task overlap.

Handling API Calls Using Asynchronous Patterns

API calls are one of the most common use cases for asynchronous programming. Since API requests involve network communication, they are subject to latency and unpredictable response times. In a synchronous model, each API call would block execution until a response is received.

In an asynchronous model, API calls are handled as non-blocking operations. When an API request is initiated, the coroutine responsible for that request is suspended while waiting for the response. During this time, other coroutines can continue executing.

This allows multiple API calls to be processed simultaneously, significantly reducing total execution time. Instead of waiting for each request to complete sequentially, the system can initiate multiple requests and handle responses as they arrive.

This approach is particularly useful in applications that require data from multiple external sources. By executing API calls concurrently, the system can aggregate results more efficiently and improve overall performance.

Task Scheduling and Cooperative Execution

Asynchronous programming relies on cooperative scheduling, where tasks voluntarily yield control when they are unable to proceed. This is different from preemptive scheduling used in traditional multithreading, where the operating system forcibly switches between threads.

In cooperative execution, tasks decide when to pause and resume based on their own state. This allows for more predictable behavior and reduces the risk of conflicts between tasks.

The event loop plays a crucial role in managing this scheduling process. It ensures that tasks are executed in an efficient order and that waiting tasks do not block active ones.

This approach allows for the smooth execution of multiple operations without requiring complex coordination between tasks.

Efficiency Improvements Through Asynchronous Design

Asynchronous design significantly improves efficiency in applications that involve frequent waiting operations. By overlapping execution and waiting periods, the system can perform more work in less time.

This improvement is particularly noticeable in network-heavy applications where delays are common. Instead of sitting idle during network communication, the system continues processing other tasks.

This leads to better utilization of system resources and improved application performance. It also allows applications to scale more effectively, as additional tasks can be handled without a proportional increase in execution time.

Asynchronous design also improves responsiveness, ensuring that applications remain active even when performing multiple background operations. This is critical in modern software systems where user experience is a key factor.

Evolution Toward Modern Asynchronous Systems

The adoption of asynchronous programming reflects the evolution of modern software systems. As applications become more interconnected and data-driven, the need for efficient handling of external operations has increased.

Asynchronous execution provides a solution that aligns with these requirements by enabling non-blocking, concurrent task management. This allows developers to build scalable systems that can handle high workloads without sacrificing performance.

The shift toward asynchronous design represents a broader change in how software is developed, moving from sequential processing to event-driven, concurrent execution models that better reflect real-world application demands.

Understanding Async API Call Design in Real Applications

Async API call design focuses on structuring network communication in a way that avoids blocking the main execution flow while still maintaining correct data flow and response handling. In real-world applications, API communication rarely happens in isolation. Systems typically depend on multiple endpoints, each returning data at different speeds depending on network conditions, server load, and geographic latency. A synchronous approach forces each request to wait for the previous one, which creates inefficiency and slows down overall system performance.

In an asynchronous design model, API requests are initiated without requiring immediate completion before moving on to the next operation. Each request is treated as an independent task that can be paused and resumed. This allows multiple requests to be active at the same time, even though they are not executed in parallel in the traditional CPU sense. Instead, they are interleaved through cooperative scheduling managed by the event loop.

This structure is particularly effective in systems that rely on aggregation of multiple data sources. For example, an application that pulls user data, transaction history, and external analytics from different APIs benefits significantly from async execution because all these requests can be initiated simultaneously. The system does not need to wait for one response before starting another, which reduces total waiting time.

The key idea behind async API design is not to make individual requests faster, but to eliminate unnecessary waiting between requests. This shift in execution flow design leads to more efficient use of system resources and improved responsiveness, especially in applications where user experience depends on fast data retrieval.

Concurrent Execution Flow in API Communication

Concurrent execution in asynchronous API calls is achieved through task interleaving rather than true parallel processing. When multiple API calls are initiated, each call is represented as a task that can pause when waiting for a response. The event loop then manages these tasks by switching between them whenever one enters a waiting state.

This creates a flow where multiple API calls are effectively in progress at the same time. While one request is waiting for a server response, another request can be sent or processed. This overlap of waiting periods is what leads to performance improvement.

The efficiency gain becomes more noticeable as the number of API calls increases. In a sequential model, each additional request adds directly to the total execution time. In an asynchronous model, multiple requests share waiting periods, reducing the cumulative delay.

This approach is particularly important in modern distributed systems where services are often separated across different servers or cloud regions. Network latency becomes a major factor, and asynchronous execution helps minimize its impact by ensuring that the system remains active during waiting periods.

The concurrency model used in async execution is cooperative, meaning tasks voluntarily yield control. This is different from traditional multithreading, where the operating system manages context switching. Cooperative concurrency reduces overhead and avoids many of the complexities associated with thread synchronization.

Role of Event Loop in API Task Coordination

The event loop is the central mechanism that enables asynchronous API execution. It continuously monitors all active tasks, determines their state, and decides which task should execute next. Tasks that are waiting for API responses are temporarily suspended, while ready tasks are executed.

When an API call is made, the corresponding task is registered with the event loop. The event loop then tracks the status of the request. If the request is still pending, the task remains paused. Once the response is received, the event loop resumes the task and continues execution from the point where it was suspended.

This continuous cycle allows the system to manage multiple API calls efficiently without blocking execution. The event loop ensures that CPU time is always used for active tasks rather than idling.

The design of the event loop is optimized for I/O-bound operations such as network communication. Since API calls spend most of their time waiting for external responses, the event loop can easily switch between tasks during these waiting periods.

This mechanism eliminates the need for manual thread management or complex synchronization logic, making asynchronous API handling more efficient and easier to implement.

Non-Blocking Network Requests and Efficiency Gains

Non-blocking network requests are a key advantage of asynchronous API design. In a blocking system, a network request halts execution until a response is received. This creates idle time where the system is unable to perform other operations.

In a non-blocking system, network requests are handled in a way that allows execution to continue while waiting for responses. This means that multiple requests can be active simultaneously without interfering with each other.

The efficiency gains come from overlapping waiting periods. Instead of waiting for each request sequentially, the system initiates multiple requests and handles responses as they arrive. This reduces total execution time and improves throughput.

This model is especially effective in applications that rely on external data aggregation. By allowing multiple API calls to run concurrently, the system can gather information from different sources more quickly.

Non-blocking behavior also improves system responsiveness. Since the main execution flow is not paused, applications remain interactive even when performing background API operations.

Structured Flow of Async API Requests

Async API requests follow a structured flow that begins with task creation and ends with response handling. When a request is initiated, it is converted into a coroutine that can be managed by the event loop. This coroutine represents the lifecycle of the API call.

Once the request is sent, the coroutine enters a waiting state. During this time, it yields control to the event loop, allowing other tasks to execute. This ensures that no processing time is wasted on idle waiting.

When the API response is received, the event loop resumes the coroutine. The response data is then processed, and execution continues from the point where it was suspended.

This structured flow ensures that API calls are handled efficiently without blocking other operations. It also allows developers to manage multiple requests in a clean and organized way.

The ability to suspend and resume execution is what makes asynchronous API handling so powerful. It allows systems to remain active and responsive even when dealing with multiple external dependencies.

Task Lifecycle in Asynchronous API Systems

Each asynchronous API call follows a lifecycle that includes creation, execution, waiting, and completion. Understanding this lifecycle is essential for designing efficient async systems.

The first stage is task creation, where the API request is defined as a coroutine. This coroutine is then scheduled by the event loop.

The second stage is execution, where the request is sent to the external server. Once the request is sent, the coroutine enters a waiting state.

The third stage is suspension, where the coroutine yields control while waiting for a response. During this stage, other tasks continue execution.

The final stage is completion, where the response is received, and the coroutine resumes execution. The result is then processed and returned to the calling function.

This lifecycle allows multiple API calls to be managed efficiently within a single execution thread. It ensures that no time is wasted waiting for external responses.

Performance Optimization Through Async Patterns

Asynchronous API design significantly improves performance in systems that rely on external communication. By allowing multiple requests to be processed concurrently, it reduces total execution time and improves system efficiency.

One of the key optimizations comes from reducing idle time. In a synchronous system, the CPU remains idle while waiting for API responses. In an asynchronous system, this idle time is eliminated by switching to other tasks.

Another optimization comes from improved resource utilization. Since tasks are managed within a single thread, there is no overhead associated with creating and managing multiple threads. This reduces memory usage and improves scalability.

Async patterns also improve scalability by allowing systems to handle more requests without a proportional increase in processing time. This makes it easier to build high-performance applications that can handle large volumes of traffic.

The overall result is a more efficient system that can perform more work in less time while maintaining responsiveness.

Real-World Application Scenarios for Async API Calls

Async API calls are widely used in modern software systems that require fast and efficient data retrieval. Applications such as data aggregation platforms, real-time analytics dashboards, and content delivery systems rely heavily on asynchronous execution.

In these systems, multiple API calls are often required to gather complete information. Asynchronous execution allows these calls to be processed simultaneously, reducing overall latency.

Another common use case is in web services that handle multiple user requests at the same time. By using async API calls, these services can process multiple requests concurrently without blocking the main execution flow.

This approach is also useful in automation systems that interact with external services. By handling API calls asynchronously, these systems can perform complex workflows more efficiently.

The flexibility of async design makes it suitable for a wide range of applications that depend on external data sources.

Architectural Impact of Asynchronous API Design

Asynchronous API design has a significant impact on system architecture. It encourages a shift from linear execution models to event-driven architectures where tasks are managed based on availability rather than sequence.

This architectural shift allows systems to scale more effectively and handle higher workloads. It also reduces dependency on hardware scaling by improving software-level efficiency.

Async architecture also promotes modular design. Since tasks are independent and can be executed concurrently, systems can be broken down into smaller, reusable components.

This modularity improves maintainability and allows developers to build more flexible systems that can adapt to changing requirements.

The overall architectural impact of async API design is a move toward more efficient, scalable, and responsive systems that are better suited for modern computing environments.

Conclusion

The shift from blocking execution to asynchronous programming represents a fundamental change in how Python handles operations such as API calls, file access, and network communication. In a traditional blocking model, each task must finish before the next one begins. This creates a strict linear flow where the program pauses whenever it encounters a waiting operation. While this approach is simple and easy to understand, it becomes inefficient in real-world applications where waiting for external responses is common.

Asynchronous programming removes this limitation by allowing tasks to pause and resume without stopping the entire program. When an API call is made, the program does not freeze until the response arrives. Instead, it continues executing other tasks while the request is being processed in the background. Once the response is ready, the task resumes from where it left off. This approach significantly improves efficiency, especially in applications that rely heavily on network communication.

In modern software systems, API calls are a core part of functionality. Applications frequently depend on external services for authentication, data retrieval, analytics, and communication between services. Each API call introduces a waiting period that cannot be controlled by the application itself. In a blocking system, these waiting periods accumulate and slow down the entire process. As more API calls are added, performance degrades further because each request must complete before the next one starts.

Asynchronous API handling solves this problem by allowing multiple requests to run at the same time. Instead of waiting for one response before sending the next request, the system initiates multiple requests and handles responses as they arrive. This reduces total waiting time and improves overall performance. The key improvement is not that individual API calls become faster, but that the system avoids unnecessary idle time.

This approach is especially important in applications that require real-time or near-real-time data processing. Systems such as financial dashboards, monitoring tools, recommendation engines, and communication platforms depend on fast data retrieval from multiple sources. Even small delays can affect user experience and system performance. Asynchronous execution ensures that these systems remain responsive even under heavy load.

Scalability is another major advantage of asynchronous execution. As applications grow and the number of API calls increases, synchronous systems struggle because each additional request adds directly to the total execution time. Asynchronous systems handle this more efficiently by overlapping waiting periods across multiple tasks. This means the system can handle more requests without a proportional increase in processing time. As a result, applications can scale more effectively without requiring a corresponding increase in hardware resources.

Non-blocking execution plays a central role in this efficiency. In a blocking model, the processor remains idle while waiting for API responses. This leads to poor resource utilization because the system is not doing useful work during waiting periods. In a non-blocking model, tasks are designed to continue executing while others are waiting. This ensures that system resources are used more effectively and reduces wasted processing time.

The improvement in efficiency comes from overlapping computation with waiting time. While one API request is waiting for a response, other tasks can continue running. This allows the system to maintain continuous activity instead of sitting idle. As the number of concurrent operations increases, the benefits of this approach become more noticeable. Systems can handle more work in less time while maintaining stable performance.

Responsiveness is another important benefit of asynchronous programming. In user-facing applications, delays can significantly affect user experience. Blocking systems often freeze or slow down when performing multiple API calls, which makes the application feel unresponsive. Asynchronous execution ensures that the main program remains active even while background operations are running. This allows users to continue interacting with the application without interruption.

For example, in a web application that retrieves data from multiple sources, asynchronous execution ensures that the interface does not freeze while waiting for responses. Data can be loaded progressively, and the application remains responsive throughout the process. This creates a smoother and more efficient user experience.

Over time, asynchronous architecture also improves maintainability and flexibility. Since tasks are designed to operate independently, systems become more modular. Each component can handle its own operations without tightly depending on others. This makes it easier to extend functionality or modify parts of the system without affecting the entire application.

Another long-term advantage is better resource management. Asynchronous systems reduce idle CPU time and improve overall utilization. This leads to more efficient use of computing resources, which can lower operational costs and improve performance in production environments. Systems can handle higher workloads without requiring proportional increases in infrastructure.

From a design perspective, asynchronous programming encourages a shift in thinking. Instead of focusing on step-by-step execution, developers begin to think in terms of independent tasks that can be managed concurrently. This task-based approach aligns more closely with how modern distributed systems operate, where multiple services interact at the same time and responses arrive at unpredictable intervals.

This shift is particularly important in environments where applications depend heavily on external services. Network latency, server response time, and data processing delays are all factors that cannot be controlled internally. Asynchronous programming provides a structured way to manage these uncertainties without sacrificing performance or stability.

Ultimately, asynchronous API design in Python provides a practical solution to the limitations of blocking execution. It enables systems to handle multiple operations efficiently, improves responsiveness, and supports scalability in modern applications. By allowing tasks to run concurrently without interfering with each other, asynchronous programming ensures that Python remains well-suited for building high-performance, data-driven, and network-intensive applications.