The CrowdStrike outage of July 19, 2024 became one of the most significant technology failures in recent years, affecting millions of systems running Microsoft Windows (Microsoft estimated about 8.5 million devices). Organizations across industries experienced widespread outages as systems crashed or became unresponsive. Airlines canceled flights, hospitals faced operational delays, and businesses suffered financial losses due to downtime.

Unlike traditional cybersecurity incidents caused by malicious attacks, this event was triggered by an internal software issue. This distinction is important because it highlights a different kind of risk. Rather than an external breach, the failure stemmed from a trusted system that organizations rely on for protection. This shows how deeply integrated security platforms are in modern IT environments and how a single issue can spread across global systems.

The incident also revealed how dependent organizations are on automated processes. Updates are often deployed quickly to protect against new threats, but when an error occurs, the same speed can amplify the impact. This balance between speed and stability is a major challenge in modern cybersecurity.

What CrowdStrike Represents in Modern Cybersecurity

CrowdStrike is known for its cloud-based approach to cybersecurity. Since its founding in 2011, it has helped shift the industry away from traditional signature-based antivirus tools toward behavior-based detection. Its main platform, Falcon, combines multiple security functions into one system.

Falcon works by using lightweight agents installed on devices. These agents monitor activity and send data to a cloud platform for analysis. This allows the system to detect threats in real time. Instead of relying only on known virus signatures, it uses advanced analytics to identify suspicious behavior.

The platform's key capabilities include threat intelligence, incident response, and proactive threat hunting. These features allow organizations not only to detect threats but also to respond quickly and prevent future attacks.

However, the recent outage showed that even advanced systems can fail. The reliance on centralized systems and automatic updates creates risks that must be carefully managed.

Breaking Down the Cause of the Outage

The root cause of the disruption was a faulty content configuration update for the Falcon sensor on systems running Microsoft Windows. The update introduced a defect that crashed the operating system, leaving many affected devices stuck on a blue screen or in a reboot loop. Devices that received the update could not function normally until the faulty file was removed or replaced.

The issue was specific to Windows systems, while other operating systems were not affected. This indicates that the problem was related to how the update interacted with the Windows environment.

One of the main reasons the outage spread so widely was the speed of deployment. Automated updates delivered the faulty configuration to a large number of systems in a short time. While automation is essential for security, it also increases the risk of widespread failure if something goes wrong.

This situation highlights the importance of controlled update processes. Testing updates in stages and having the ability to quickly reverse changes can help prevent large-scale disruptions.

The Role of Software Development Practices

The incident raised concerns about the software development lifecycle. This process is designed to ensure that updates are tested and validated before they are released. When testing is not thorough, the risk of errors increases.

In complex systems like cybersecurity platforms, updates must be tested across different environments and configurations. The failure to catch the issue earlier suggests that testing may not have covered all possible scenarios.

There is often pressure to release updates quickly in response to new threats. While speed is important, it must not come at the cost of reliability. Careful testing and validation are essential to ensure that updates do not introduce new problems.

Strong development practices also include change management and version control. These processes help track updates and ensure that only approved changes are deployed. They also make it easier to fix issues when they occur.

Why Endpoint Security Failures Are So Disruptive

Endpoint security platforms operate at a deep level within a system, often with kernel-level privileges. They interact directly with the operating system and other critical components. This allows them to provide strong protection, but it also means that a fault in the security software can bring down the whole machine rather than a single application.

When an endpoint security tool fails, it can affect the entire system. This can lead to crashes, performance issues, or complete shutdowns. Because these tools are integrated into core system functions, any issue can quickly spread.

The widespread use of Microsoft Windows increases the scale of the impact. Since many organizations use the same platform, a single issue can affect millions of users.

Modern IT systems are also highly interconnected. A failure in one system can affect others, creating a chain reaction. This interconnectedness makes it more difficult to contain problems once they start.

Impact on Critical Industries

The outage had a major impact on industries that rely heavily on technology. In the aviation sector, systems used for scheduling and operations were disrupted, leading to flight cancellations and delays. Passengers were affected, and airlines faced operational challenges.

Healthcare organizations also experienced disruptions. Hospitals depend on digital systems for patient records and operations. Any downtime can affect the quality of care and create delays in treatment.

Businesses across many sectors faced interruptions that affected productivity and revenue. Retail, finance, and manufacturing operations were all impacted to some extent. The financial losses from such disruptions can be significant.

This event shows how important it is for organizations to have backup plans and systems in place. Being prepared for unexpected failures can reduce the impact on operations.

Lessons for IT and Security Professionals

The outage provides important lessons for IT and cybersecurity professionals. One key lesson is the importance of visibility. Organizations need tools that provide real-time information about system performance and health. This allows teams to detect problems early.

Another lesson is the need for redundancy. Relying on a single system creates risk. Using multiple solutions and backup systems can improve reliability and reduce the impact of failures.

Training is also essential. IT teams must be prepared to respond quickly to unexpected issues. This includes understanding system architecture and knowing how to troubleshoot problems.

Communication is another important factor. During an incident, clear communication helps teams coordinate their response and minimize confusion.

Broader Implications for the Cybersecurity Industry

The incident has broader implications for the cybersecurity industry. It raises questions about reliability, accountability, and the balance between innovation and stability. As systems become more advanced, they also become more complex, increasing the risk of errors.

Vendors must focus on building reliable systems and providing clear communication during incidents. Organizations must also evaluate their reliance on third-party solutions and ensure they have backup plans.

The event highlights the importance of collaboration. Sharing information and best practices can help prevent similar issues in the future.

The Growing Importance of Resilient Architecture

Modern IT systems are designed to be flexible and scalable. While this improves performance, it also introduces new challenges. Systems must be designed to handle failures without causing widespread disruption.

Resilient architecture includes features such as fault isolation, rollback mechanisms, and continuous monitoring. These features help prevent small issues from becoming major problems.

Organizations must take a comprehensive approach to system design. This includes considering both security and reliability. By focusing on resilience, they can build systems that continue to operate even under difficult conditions.

The lessons from this incident will shape how organizations approach cybersecurity in the future. By improving testing, monitoring, and system design, they can reduce the risk of similar disruptions and build more stable environments.

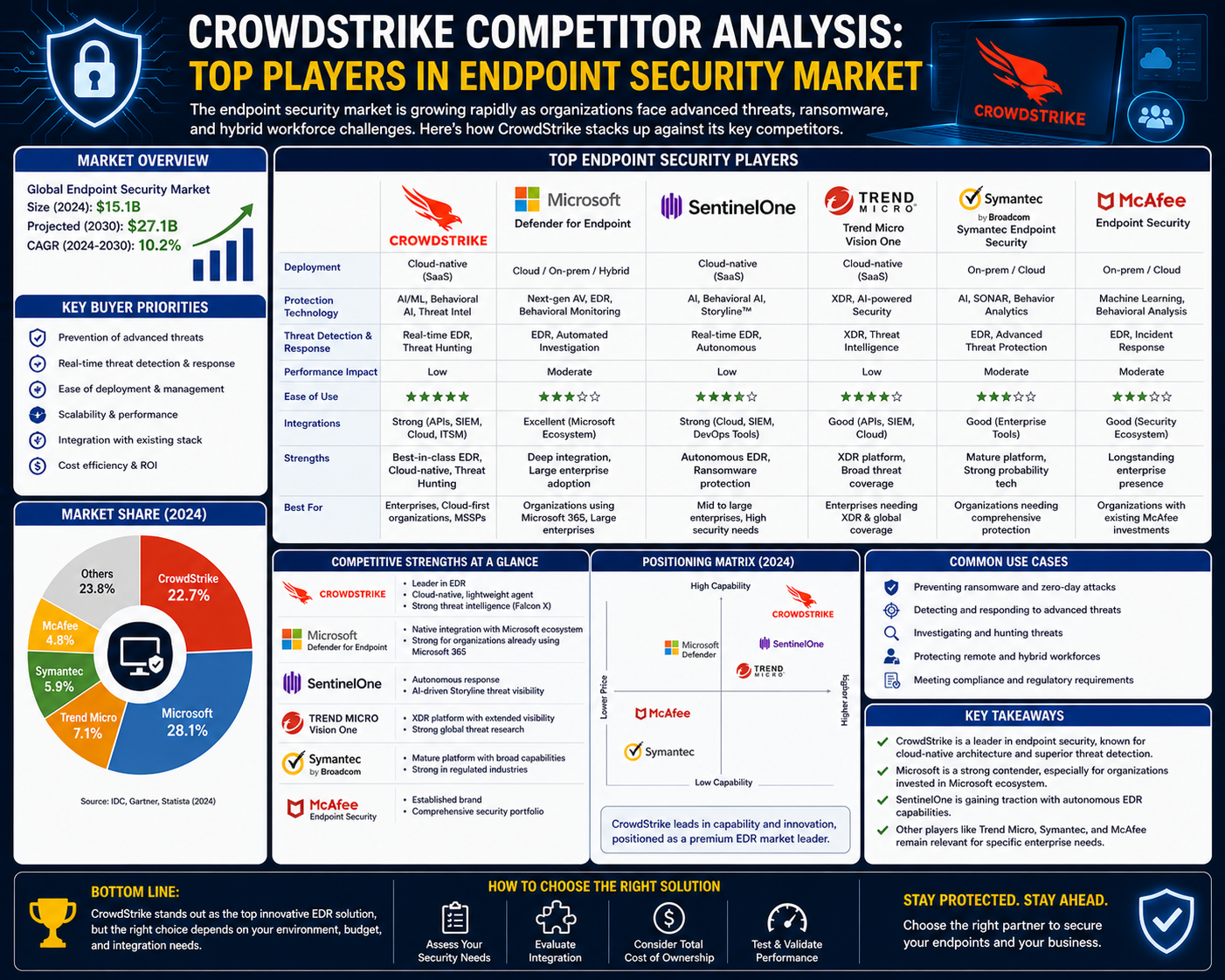

Exploring the Competitive Landscape Around CrowdStrike

The global disruption associated with CrowdStrike did more than expose technical vulnerabilities; it also shifted attention toward the broader cybersecurity market. Organizations that rely heavily on endpoint protection began reassessing their dependence on a single vendor and exploring alternative solutions that could offer similar capabilities with different architectural approaches. This shift is not necessarily about abandoning one provider but about understanding the diversity of tools available and how they compare in terms of resilience, performance, and flexibility.

The cybersecurity industry is highly competitive, with multiple vendors offering overlapping capabilities. Endpoint detection and response, threat intelligence, and cloud security are no longer niche features but standard expectations. What differentiates competitors is how these capabilities are delivered, how systems are architected, and how effectively they handle large-scale operations in real-world environments.

The recent outage has made it clear that technical excellence alone is not sufficient. Reliability, testing rigor, and deployment strategies are equally important. As a result, organizations are taking a closer look at competitors that offer strong security features while also emphasizing stability and controlled update mechanisms.

Symantec and Its Evolution Under Broadcom

Symantec, now operating under Broadcom, represents one of the most established names in cybersecurity. Historically known for its antivirus solutions, the company has evolved into a provider of comprehensive enterprise security offerings. Its portfolio includes endpoint protection, network security, and cloud-based defense systems.

One of the defining characteristics of Symantec’s approach is its transition from traditional monolithic systems to more modular architectures. Earlier versions of its products were built as all-in-one solutions, which provided strong integration but limited flexibility. Over time, the company has adopted more scalable models that allow individual components to operate independently while still contributing to a unified security framework.

This evolution reflects broader industry trends. Organizations today require solutions that can adapt quickly to changing threats without compromising stability. Symantec’s gradual shift toward modularity aims to address this need by enabling targeted updates and reducing the risk of widespread system failures.

In comparison to CrowdStrike, Symantec offers a more traditional yet increasingly modernized approach. Its long-standing presence in the industry gives it a deep understanding of enterprise requirements, while its ongoing transformation demonstrates its effort to remain competitive in a rapidly changing landscape.

McAfee and the Balance Between Legacy and Innovation

McAfee is another long-established name in the endpoint security space. Known for its consumer-focused antivirus products, the company also built an enterprise business with advanced threat detection and response capabilities; that business was sold in 2021 and now operates under the Trellix brand. Together, these offerings cover a wide range of use cases, from individual users to large organizations.

One of the unique aspects of McAfee’s approach is its hybrid architecture. The company maintains some legacy systems that continue to operate effectively while simultaneously developing newer, more flexible solutions. This combination allows McAfee to support existing customers while also innovating in areas such as cloud security and data protection.

The hybrid model has both advantages and challenges. On the one hand, it provides continuity and reliability for long-term users. On the other hand, integrating legacy systems with modern architectures can create complexity that must be carefully managed. This balance between older and newer systems is a defining feature of McAfee’s strategy.

In the context of recent events, McAfee’s approach highlights the importance of gradual evolution rather than rapid transformation. By maintaining stable systems while introducing new capabilities, the company aims to minimize the risk of large-scale disruptions.

Palo Alto Networks and Its Cloud-First Strategy

Palo Alto Networks has established itself as a leader in next-generation security solutions. The company is particularly known for its advanced firewall technologies and its strong presence in cloud security. Over time, it has expanded its portfolio through strategic acquisitions, enhancing its capabilities in automation, analytics, and threat intelligence.

A key strength of Palo Alto Networks lies in its cloud-first approach. Its platforms are designed to operate in distributed environments, enabling organizations to secure data and applications across multiple locations. This approach aligns well with modern IT environments, where cloud adoption continues to grow.

The company’s use of modular and service-oriented architectures allows for rapid development and deployment of new features. This flexibility is essential in a field where threats evolve quickly. At the same time, it requires robust testing and validation processes to ensure that updates do not introduce instability.

Compared to CrowdStrike, Palo Alto Networks offers a broader range of security solutions that extend beyond endpoint protection. Its integrated ecosystem provides a comprehensive approach to security, covering network, cloud, and application layers.

Cisco and the Power of Integrated Security Ecosystems

Cisco is widely recognized for its networking expertise, but it also plays a significant role in cybersecurity. The company offers a diverse range of security solutions, including endpoint protection, network defense, and advanced threat intelligence.

One of Cisco’s key advantages is its ability to integrate security across multiple layers of the IT infrastructure. Its solutions are designed to work seamlessly with its networking products, creating a unified ecosystem that enhances visibility and control. This integration allows organizations to manage threats more effectively by correlating data from multiple sources.

Cisco’s approach combines legacy systems with modern innovations. While some of its older products are based on traditional architectures, newer solutions increasingly leverage flexible and scalable designs. This combination enables the company to support a wide range of customer needs while continuing to innovate.

In comparison to CrowdStrike, Cisco offers a more holistic approach that extends beyond endpoint security. Its strength lies in its ability to integrate security into the broader network infrastructure, providing a comprehensive defense strategy.

Carbon Black and Cloud-Native Endpoint Protection

Carbon Black, acquired by VMware in 2019 and now part of Broadcom following Broadcom's acquisition of VMware, focuses on cloud-native endpoint protection and security operations. Its platform is designed to provide real-time visibility and control over endpoints, enabling organizations to detect and respond to threats effectively.

The company’s architecture is built around distributed components that can operate independently while sharing data through centralized systems. This design allows for rapid scaling and efficient processing of large volumes of data. It also supports continuous updates without requiring significant downtime.

Carbon Black’s emphasis on predictive analytics and threat hunting aligns closely with industry trends. By analyzing patterns and behaviors, the platform can identify potential threats before they cause harm. This proactive approach is similar to that of CrowdStrike, making the two companies direct competitors in many areas.

The key difference lies in how their systems are implemented and managed. Carbon Black’s integration with VMware’s broader ecosystem provides additional flexibility for organizations that rely on virtualization and cloud technologies.

SentinelOne and AI-Driven Security Innovation

SentinelOne has emerged as a strong competitor due to its focus on artificial intelligence and automation. The company’s platform is designed to operate autonomously, detecting and responding to threats without requiring constant human intervention.

This AI-driven approach allows SentinelOne to process large volumes of data quickly and identify anomalies that might be missed by traditional systems. Its ability to automate responses also reduces the time required to contain and mitigate threats.

The platform’s architecture supports rapid innovation, enabling the company to introduce new features and capabilities frequently. This agility is a significant advantage in a competitive market. However, it also requires careful management to ensure that updates do not compromise system stability.

In comparison to CrowdStrike, SentinelOne emphasizes automation and independence. While both companies leverage advanced analytics, SentinelOne’s focus on autonomous operations sets it apart as a forward-looking solution.

Comparing Architectural Approaches Across Competitors

One of the most important factors distinguishing these competitors is their approach to system architecture. Traditional monolithic systems offer strong integration but can be difficult to scale and update. Modern modular architectures provide greater flexibility but require careful coordination to ensure compatibility.

Companies like Palo Alto Networks and SentinelOne have embraced flexible designs that allow individual components to be updated independently. This approach reduces the risk of widespread failures but introduces challenges in integration and testing.

On the other hand, vendors like McAfee maintain a hybrid model that combines legacy and modern systems. This strategy provides stability while allowing for gradual innovation. However, it can also create complexity that must be carefully managed.

The recent outage highlights the importance of balancing flexibility with reliability. Regardless of the architecture used, thorough testing and controlled deployment are essential to prevent disruptions.

Key Factors Organizations Consider When Evaluating Alternatives

In the wake of the disruption, organizations are evaluating competitors based on several critical factors. Performance and detection capabilities remain important, but reliability and resilience have become equally significant considerations. Companies want assurance that their security solutions will not introduce new risks.

Another important factor is visibility. Solutions that provide detailed insights into system activity and performance enable organizations to detect issues early and respond effectively. This capability is essential for maintaining stability in complex environments.

Integration is also a key consideration. Organizations often use multiple tools and platforms, so compatibility with existing systems is crucial. Solutions that can seamlessly integrate with other technologies provide greater flexibility and efficiency.

Cost and scalability are additional factors that influence decision-making. Organizations must balance their security needs with budget constraints while ensuring that their solutions can grow with their operations.

The Strategic Shift Toward Multi-Vendor Security Models

The incident has encouraged many organizations to adopt multi-vendor strategies. Instead of relying on a single provider, they are distributing their security needs across multiple solutions. This approach reduces the risk of a single point of failure and increases overall resilience.

A multi-vendor model allows organizations to leverage the strengths of different providers while mitigating their weaknesses. For example, one solution might excel in endpoint protection, while another provides superior network security. By combining these capabilities, organizations can create a more robust defense strategy.

However, this approach also introduces challenges. Managing multiple systems requires careful coordination and integration. Organizations must ensure that their tools work together effectively and that data can be shared smoothly across platforms.

Despite these challenges, the benefits of diversification are becoming increasingly clear. As the cybersecurity landscape continues to evolve, organizations are likely to place greater emphasis on flexibility and resilience in their security strategies.

Strengthening Cybersecurity Resilience After Large-Scale Failures

The global disruption involving CrowdStrike made it clear that cybersecurity tools must be designed not only to stop threats but also to remain stable under pressure. Organizations are now focusing on building systems that can continue operating even when unexpected failures occur. This shift reflects a broader understanding that reliability is just as important as protection.

Resilience in cybersecurity means being able to absorb disruptions, recover quickly, and maintain operations without major interruptions. This requires careful planning, strong system design, and ongoing monitoring. The incident showed that even trusted platforms can fail, which is why companies are now prioritizing backup systems, redundancy, and controlled updates.

Modern enterprises are also rethinking their dependency on single solutions. Instead of relying on one platform, they are designing layered defenses that reduce risk and improve stability. This approach ensures that even if one system fails, others can continue to provide protection.

The Importance of Controlled Update Strategies

One of the most critical lessons from the outage is the need for better update management. Automatic updates are necessary for security, but they must be handled carefully to avoid widespread failures. Organizations are now adopting staged deployment strategies where updates are rolled out gradually rather than all at once.

This method allows teams to test updates in smaller environments before applying them across the entire system. If an issue appears, it can be identified early and stopped before causing major disruption. Controlled rollouts also reduce the risk of system-wide crashes.
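To make the staged approach concrete, here is a minimal Python sketch of a ring-based rollout. It is an illustration under stated assumptions, not a description of how any vendor deploys updates: the function name `staged_rollout`, the `apply_update` callback, the host names, and the 5% failure budget are all hypothetical.

```python
from typing import Callable, Sequence


def staged_rollout(
    rings: Sequence[Sequence[str]],
    apply_update: Callable[[str], bool],
    max_failure_rate: float = 0.05,
) -> dict:
    """Deploy ring by ring and halt once a ring exceeds the failure budget.

    `rings` is ordered from the smallest test group to the full fleet.
    `apply_update` is a hypothetical callback that returns True when the
    host is healthy after the update.
    """
    updated = []
    failed = []
    for index, ring in enumerate(rings):
        ring_failed = []
        for host in ring:
            if apply_update(host):
                updated.append(host)
            else:
                ring_failed.append(host)
        failed.extend(ring_failed)
        # Stop before touching later (larger) rings if this ring looks unhealthy.
        if ring and len(ring_failed) / len(ring) > max_failure_rate:
            return {"halted_at_ring": index, "updated": updated, "failed": failed}
    return {"halted_at_ring": None, "updated": updated, "failed": failed}


# Hypothetical fleet: a small canary ring, an early-adopter ring, then everyone else.
rings = [
    ["canary-1", "canary-2"],
    ["win-1", "win-2", "lin-1"],
    ["fleet-1", "fleet-2"],
]
result = staged_rollout(rings, lambda host: not host.startswith("win"))
print(result)
```

In this example the rollout stops at the second ring, so the hosts in the final ring never receive the faulty update. A real pipeline would also wait between rings to give problems time to surface.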

Another key element is rollback capability. Systems must be able to quickly revert to a previous stable version if something goes wrong. Without this, recovery becomes slow and complicated. Fast rollback mechanisms can significantly reduce downtime and limit damage.
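One simple way to picture rollback is a version history that remembers which versions were confirmed healthy. The sketch below is illustrative only; the `VersionedConfig` class and its method names are assumptions made for this example and do not correspond to any particular product.

```python
class VersionedConfig:
    """Keeps every deployed version so the last known-good one can be restored."""

    def __init__(self, initial_version: str):
        # Oldest version first; the last entry is the active one.
        self._history = [initial_version]
        # The initial version is assumed to be healthy.
        self._known_good = {initial_version}

    @property
    def active(self) -> str:
        return self._history[-1]

    def deploy(self, version: str) -> None:
        """Make `version` active without yet trusting it."""
        self._history.append(version)

    def mark_healthy(self) -> None:
        """Record that the active version passed its health checks."""
        self._known_good.add(self.active)

    def rollback(self) -> str:
        """Discard versions until a known-good one is active again."""
        while len(self._history) > 1 and self.active not in self._known_good:
            self._history.pop()
        return self.active


config = VersionedConfig("1.0")
config.deploy("1.1")
config.mark_healthy()
config.deploy("1.2")      # never confirmed healthy
config.deploy("1.3")      # never confirmed healthy
print(config.rollback())  # reverts past 1.3 and 1.2
```

The design choice here is that a version only becomes a rollback target after it has been explicitly marked healthy, so a revert never lands on another untested release.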

Clear communication during updates is equally important. IT teams need to know when updates are happening and what risks may be involved. This ensures faster response times if issues occur.

Improving System Visibility and Monitoring

Visibility plays a central role in preventing large-scale failures. Organizations need real-time insight into how their systems are performing. Monitoring tools help detect unusual behavior before it turns into a serious problem.

These tools track system activity, resource usage, and performance metrics. When something unusual happens, alerts can be triggered immediately. This allows IT teams to respond quickly and prevent further damage.

Advanced monitoring also helps identify patterns. For example, repeated crashes or performance drops may indicate deeper issues. By analyzing this data, organizations can take preventive action instead of reacting after failure occurs.
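As a rough illustration of pattern-based alerting, the following sketch counts crash reports inside a sliding time window and signals when a threshold is reached. The class name `CrashRateMonitor`, the window length, and the threshold are hypothetical values chosen for the example, and timestamps are assumed to arrive in non-decreasing order.

```python
from collections import deque


class CrashRateMonitor:
    """Raises an alert when too many crashes occur within a time window."""

    def __init__(self, window_seconds: float, threshold: int):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self._events = deque()  # crash timestamps, oldest first

    def record_crash(self, timestamp: float) -> bool:
        """Record a crash and return True when the alert threshold is reached."""
        self._events.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop crashes that have fallen out of the window.
        while self._events and self._events[0] < cutoff:
            self._events.popleft()
        return len(self._events) >= self.threshold


monitor = CrashRateMonitor(window_seconds=60, threshold=3)
for t in (0, 100, 120, 150):
    if monitor.record_crash(t):
        print(f"alert: crash threshold reached at t={t}")
```

A single isolated crash does not trigger the alert, but a cluster of crashes in a short period does, which is the kind of signal that could pause a rollout early.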

For platforms like CrowdStrike, visibility must cover the entire environment, not just individual devices. This ensures that all components are working together properly and that any issues are detected early.

Building Redundant and Fault-Tolerant Systems

Redundancy is essential for maintaining system stability. It involves having backup systems in place so that if one component fails, another can take over. This reduces the risk of total system failure.
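The failover idea can be sketched in a few lines of Python: try the primary component first and fall back to the next one if it raises an error. The helper `call_with_failover` and the two example services are hypothetical and stand in for whatever redundant components an organization actually runs.

```python
from typing import Callable, Iterable, TypeVar

T = TypeVar("T")


def call_with_failover(providers: Iterable[Callable[[], T]]) -> T:
    """Return the result of the first provider that succeeds."""
    errors = []
    for provider in providers:
        try:
            return provider()
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(exc)
    raise RuntimeError(f"all {len(errors)} providers failed")


def primary_service() -> str:
    raise ConnectionError("primary is down")


def backup_service() -> str:
    return "handled by backup"


print(call_with_failover([primary_service, backup_service]))
```

The order of the list encodes priority. If every provider fails, the function raises instead of returning silently, so the outage remains visible to operators.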

Organizations are increasingly adopting multi-layered security strategies. Instead of relying on a single tool, they combine multiple solutions to ensure continuous protection. This approach improves reliability and reduces the impact of failures.

Fault tolerance takes this a step further by allowing systems to continue operating even when parts of them fail. This requires careful design and testing. Systems must be able to handle errors without shutting down completely.

Distributed architectures also help improve fault tolerance. By spreading workloads across multiple systems, organizations can isolate failures and prevent them from affecting the entire network.

The Role of Skilled IT and Security Teams

Technology alone cannot prevent failures. Skilled professionals are essential to manage systems, respond to incidents, and maintain operational stability. Organizations must invest consistently in training and development to ensure their teams are prepared to handle complex and rapidly evolving challenges. Without knowledgeable professionals, even the most advanced tools can be misconfigured, underutilized, or slow to respond during critical situations.

IT professionals need a deep understanding of system architecture, endpoint security, networking, and troubleshooting techniques. They must be capable of identifying root causes quickly and taking corrective action under pressure. Strong analytical thinking and decision-making skills are critical, especially during large-scale outages where every second matters.

Training programs play a vital role in building these capabilities. Structured learning, combined with hands-on practice, helps professionals understand both theoretical concepts and real-world applications. Simulations and controlled lab environments allow teams to practice responding to incidents without real risk. These exercises improve coordination, reduce response time, and increase confidence when dealing with actual disruptions.

Real-world scenario training is particularly valuable. Teams that regularly simulate outages, system failures, and recovery processes are better prepared to handle unexpected events. These exercises also help identify gaps in processes and communication, allowing organizations to refine their incident response strategies.

Continuous learning is equally important. Cybersecurity and IT infrastructure evolve rapidly, with new threats, tools, and technologies emerging regularly. Professionals must stay updated through ongoing education, certifications, and industry research. Organizations that prioritize continuous skill development are better equipped to maintain resilience and adapt to changing conditions.

Adopting Secure Development Practices

The software development process plays a critical role in preventing system failures. Every update, patch, or configuration change introduces potential risk, which is why thorough testing and validation are essential before deployment. Development teams must follow structured processes to ensure that updates meet quality, security, and compatibility standards.
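A small example of pre-deployment validation is checking that every entry in an update supplies exactly the fields the consuming software expects. The sketch below is a generic illustration; the function `validate_update`, the rule format, and the field names are assumptions made for this example and are not taken from any vendor's file format.

```python
def validate_update(rules: list, expected_fields: tuple) -> list:
    """Return a list of problems; an empty list means the update passed."""
    problems = []
    for index, rule in enumerate(rules):
        missing = [name for name in expected_fields if name not in rule]
        extra = [name for name in rule if name not in expected_fields]
        if missing:
            problems.append(f"rule {index}: missing fields {missing}")
        if extra:
            problems.append(f"rule {index}: unexpected fields {extra}")
    return problems


# Hypothetical update with one complete rule and one incomplete rule.
expected = ("id", "pattern", "action")
update = [
    {"id": 1, "pattern": "*.exe", "action": "alert"},
    {"id": 2, "pattern": "*.dll"},
]
for problem in validate_update(update, expected):
    print(problem)
```

A check like this is cheap to run on every release, and a non-empty result can block deployment before the update reaches any production system.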

Testing should be comprehensive and include multiple environments that reflect real-world conditions. This ensures that updates perform consistently across different systems and configurations. Skipping or rushing testing phases can lead to unexpected issues that may only appear after deployment, increasing the risk of widespread disruption.

Automated testing tools can significantly improve efficiency by running large numbers of test cases consistently. However, automation alone is not enough. Human oversight is necessary to interpret results, identify edge cases, and ensure that updates align with broader system requirements. Developers must carefully review outcomes and verify that no critical issues are overlooked.

Version control and change management are also essential components of secure development. These practices ensure that every change is documented, reviewed, and approved before implementation. They provide a clear history of updates, making it easier to track issues and revert changes if necessary.
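The approval step in change management can be expressed as a simple gate that a release pipeline consults before deploying. This is a minimal sketch; the `ChangeRequest` class, the two-reviewer requirement, and the rule that authors cannot approve their own changes are illustrative assumptions rather than a prescribed policy.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    change_id: str
    author: str
    description: str
    approvals: set = field(default_factory=set)
    tests_passed: bool = False

    def approve(self, reviewer: str) -> None:
        """Record an approval; authors may not approve their own change."""
        if reviewer == self.author:
            raise ValueError("authors cannot approve their own change")
        self.approvals.add(reviewer)


def can_deploy(change: ChangeRequest, required_approvals: int = 2) -> bool:
    """A change is deployable only if tests passed and enough reviewers approved."""
    return change.tests_passed and len(change.approvals) >= required_approvals


change = ChangeRequest("CHG-001", author="alice", description="update detection rules")
change.tests_passed = True
change.approve("bob")
print(can_deploy(change))   # one approval so far
change.approve("carol")
print(can_deploy(change))   # two approvals and passing tests
```

Because approvals are stored as a set, the same reviewer approving twice still counts once.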

Collaboration between development and operations teams further strengthens reliability. When these teams work closely together, they can ensure that updates are not only functional but also stable in production environments. This collaborative approach reduces the risk of misalignment and improves overall system performance.

Ensuring Compatibility Across Systems

Modern IT environments consist of multiple interconnected systems, platforms, and applications. Ensuring compatibility across these components is a major challenge, especially when updates are introduced. Even a small inconsistency can lead to significant disruptions if it affects widely used systems.

The outage involving Microsoft Windows demonstrated how platform-specific issues can escalate quickly. Since many organizations rely on the same operating system, a single incompatibility can impact millions of devices simultaneously.

To reduce compatibility risks, organizations must maintain detailed inventories of their systems, including hardware, software versions, and configurations. This information helps teams identify potential conflicts and prioritize testing efforts. It also allows for faster troubleshooting when issues arise.
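An inventory does not have to be elaborate to be useful. The sketch below groups assets by configuration and answers the question "which hosts run this operating system with this agent version?". The `Asset` fields, host names, and version strings are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    hostname: str
    os_name: str
    os_version: str
    agent_version: str


def group_by_configuration(assets) -> dict:
    """Map each (OS, OS version, agent version) combination to its hostnames."""
    groups = defaultdict(list)
    for asset in assets:
        key = (asset.os_name, asset.os_version, asset.agent_version)
        groups[key].append(asset.hostname)
    return dict(groups)


def affected_hosts(assets, os_name: str, agent_version: str) -> list:
    """List hosts that match a problematic OS and agent version."""
    return sorted(
        a.hostname
        for a in assets
        if a.os_name == os_name and a.agent_version == agent_version
    )


inventory = [
    Asset("pc-1", "windows", "11", "7.2"),
    Asset("pc-2", "windows", "11", "7.2"),
    Asset("srv-1", "linux", "ubuntu-22.04", "7.2"),
    Asset("pc-3", "windows", "10", "7.1"),
]
print(group_by_configuration(inventory))
print(affected_hosts(inventory, "windows", "7.2"))
```

The grouped view shows how many distinct configurations need testing, and the second query supports fast triage once a problem has been traced to a specific combination.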

Testing updates across all supported platforms is critical. This includes different operating systems, versions, and configurations. Comprehensive testing ensures that updates behave consistently and do not introduce unexpected issues in specific environments.
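A test matrix makes "all supported platforms" explicit by enumerating every combination to be exercised. The following sketch uses hypothetical platform and agent version names, and `run_test` is a stand-in for a real test harness.

```python
from itertools import product


def build_test_matrix(platforms: dict, agent_versions: list) -> list:
    """Enumerate every (OS, OS version, agent version) combination."""
    matrix = []
    for os_name, os_versions in platforms.items():
        for os_version, agent in product(os_versions, agent_versions):
            matrix.append((os_name, os_version, agent))
    return matrix


def run_matrix(matrix: list, run_test) -> list:
    """Return the combinations for which `run_test` reported a failure."""
    return [combo for combo in matrix if not run_test(*combo)]


platforms = {"windows": ["10", "11"], "linux": ["ubuntu-22.04"]}
agents = ["7.1", "7.2"]
matrix = build_test_matrix(platforms, agents)


# Hypothetical harness: pretend agent 7.2 breaks on every Windows version.
def fake_test(os_name: str, os_version: str, agent: str) -> bool:
    return not (os_name == "windows" and agent == "7.2")


print(len(matrix))
print(run_matrix(matrix, fake_test))
```

The matrix grows multiplicatively with each new dimension, which is one practical argument for the standardization discussed in this section.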

Standardization can also improve compatibility. By reducing the number of unique configurations, organizations simplify testing and management processes. While complete standardization may not always be possible, even partial alignment can significantly reduce complexity and improve stability.

Using Automation and AI Carefully

Automation and artificial intelligence are transforming cybersecurity by improving efficiency and enabling faster response times. These technologies allow organizations to analyze large volumes of data, detect threats, and respond to incidents with minimal delay.

Automation can streamline many tasks, including system monitoring, update management, and incident response. For example, automated systems can isolate affected devices, trigger alerts, or roll back faulty updates without requiring manual intervention. This reduces response time and limits the impact of disruptions.

Artificial intelligence enhances this capability by identifying patterns and anomalies that may not be visible through traditional methods. AI-driven systems can detect subtle changes in behavior, providing early warnings of potential issues. This proactive approach helps organizations address problems before they escalate.

However, automation must be used with caution. Over-reliance on automated systems can create new risks if those systems fail or make incorrect decisions. Human oversight remains essential to validate actions and ensure that responses are appropriate.
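One way to keep a human in the loop is to let automation act freely on small incidents but require approval when an action would touch a large share of the fleet. The sketch below assumes a hypothetical `isolate` callback, an `approve` callback that represents a human decision, and an arbitrary 2% limit for unattended actions.

```python
from typing import Callable, Sequence


def isolate_hosts(
    hosts: Sequence[str],
    fleet_size: int,
    isolate: Callable[[str], None],
    approve: Callable[[Sequence[str]], bool],
    max_auto_fraction: float = 0.02,
) -> str:
    """Isolate hosts automatically unless the action exceeds the blast-radius limit."""
    if fleet_size <= 0:
        raise ValueError("fleet_size must be positive")
    needs_review = len(hosts) / fleet_size > max_auto_fraction
    # Large actions are only carried out if a human approves them.
    if needs_review and not approve(hosts):
        return "blocked"
    for host in hosts:
        isolate(host)
    return "approved" if needs_review else "automatic"


isolated = []
# One host out of 100 is below the limit, so no approval is requested.
print(isolate_hosts(["pc-1"], 100, isolated.append, approve=lambda h: False))
# Three hosts out of 100 exceed the limit; the reviewer declines.
print(isolate_hosts(["pc-2", "pc-3", "pc-4"], 100, isolated.append, approve=lambda h: False))
print(isolated)
```

The limit caps how much damage an incorrect automated decision can do before a person has reviewed it.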

A balanced approach is necessary. Organizations should combine automation with human expertise to achieve both efficiency and accuracy. This ensures that systems remain reliable while still benefiting from advanced technologies.

Preparing for Future Cybersecurity Risks

The lessons learned from recent disruptions are shaping how organizations prepare for the future. Cybersecurity is no longer limited to defending against external attacks. It now includes maintaining system stability, ensuring reliability, and preventing internal failures.

Organizations are adopting proactive strategies that focus on prevention rather than reaction. Continuous monitoring, regular system audits, and ongoing improvements are key components of this approach. By identifying risks early, organizations can address them before they lead to major incidents.

Risk management is becoming more comprehensive. Organizations are evaluating not only external threats but also internal processes, system dependencies, and operational practices. This broader perspective helps create more resilient environments.

Collaboration is also playing a larger role. Sharing knowledge, experiences, and best practices across teams and organizations helps improve overall security. Learning from past incidents allows organizations to strengthen their defenses and avoid repeating mistakes.

Balancing Innovation with Stability

Innovation is essential for staying ahead in cybersecurity, but it must be balanced with stability. New technologies and features can enhance protection, but they can also introduce risks if not properly tested and managed.

Organizations must adopt disciplined development and deployment practices to ensure that innovation does not compromise reliability. This includes thorough testing, controlled rollouts, and continuous monitoring of new features.
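A controlled rollout with continuous monitoring can be sketched as a loop over deployment "rings", from a small internal group to the wider fleet, with a health check after each step. The code below is a simplified illustration under stated assumptions. The ring names, the 1% failure threshold, and the `deploy`, `failure_rate`, and `rollback` callbacks are hypothetical stand-ins for whatever tooling an organization actually uses.

```python
from typing import Callable, List


def staged_rollout(
    rings: List[str],
    deploy: Callable[[str], None],
    failure_rate: Callable[[str], float],
    rollback: Callable[[str], None],
    max_failure_rate: float = 0.01,
) -> List[str]:
    """Deploy ring by ring, checking health after each ring.

    If any ring exceeds `max_failure_rate`, every ring updated so far is
    rolled back (most recent first) and an empty list is returned.
    Otherwise the list of updated rings is returned.
    """
    completed: List[str] = []
    for ring in rings:
        deploy(ring)
        completed.append(ring)
        if failure_rate(ring) > max_failure_rate:
            for done in reversed(completed):
                rollback(done)
            return []
    return completed


# Simulated run: the second ring reports a 20% failure rate.
observed = {"internal": 0.0, "canary": 0.2, "broad": 0.0}
log = []
result = staged_rollout(
    ["internal", "canary", "broad"],
    deploy=lambda r: log.append(("deploy", r)),
    failure_rate=lambda r: observed[r],
    rollback=lambda r: log.append(("rollback", r)),
)
print(result)
print(log)
```

In this simulated run the "broad" ring is never touched, which is the point of staging: a faulty update is caught while it affects only a small share of systems.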

Stability should always be a priority, especially for systems that support critical operations. Even small disruptions can have significant consequences, which is why reliability must be considered at every stage of development and deployment.

The CrowdStrike outage highlights the importance of this balance. Advanced technology alone is not enough; it must be supported by strong processes, skilled teams, and careful management.

By focusing on resilience, improving development practices, and maintaining a balance between innovation and stability, organizations can build systems that are both secure and dependable in an increasingly complex digital environment.

Final Insights

The global disruption linked to CrowdStrike has become a defining moment in modern cybersecurity, not because it introduced a new type of threat, but because it revealed how deeply interconnected and fragile digital ecosystems can be when core systems fail. It demonstrated that even the most advanced security platforms, designed to protect millions of devices, can become a source of risk if not managed with precision, discipline, and foresight.

This event has forced organizations to rethink how they approach cybersecurity at a fundamental level. For years, the primary focus has been on defending against external threats such as malware, ransomware, and data breaches. While those threats remain important, this incident highlighted a different category of risk: internal system failure caused by trusted software. This shift expands the definition of cybersecurity to include reliability, operational stability, and system integrity.

One of the most important lessons is that trust in technology must always be supported by verification. Organizations often rely heavily on well-known vendors, assuming their systems will perform as expected. While trust is necessary, it must be balanced with strong internal controls, independent testing, and continuous monitoring. Over-reliance on any single platform creates a potential point of failure that can have widespread consequences.

Automation also plays a critical role in this discussion. Automated updates are essential for maintaining security in a fast-moving threat landscape. However, the same automation that enables rapid protection can also accelerate the spread of errors. This dual nature makes it necessary to implement safeguards such as staged rollouts, testing environments, and rollback capabilities. These practices help ensure that updates can be controlled and evaluated before affecting large numbers of systems.

Visibility is another key factor in building resilient systems. Organizations must have real-time insight into their infrastructure, including system performance, application behavior, and security activity. Without this visibility, it becomes difficult to detect early warning signs or respond effectively to emerging issues. Monitoring tools, alert systems, and analytics platforms provide the necessary awareness to maintain control over complex environments.

The importance of redundancy has also become more evident. Relying on a single solution for critical security functions increases risk. By adopting a layered approach that includes multiple tools and backup systems, organizations can reduce the likelihood of total system failure. This strategy enhances both reliability and flexibility, allowing businesses to continue operating even when one component fails.

Equally important is the role of skilled professionals. Technology alone cannot ensure resilience. IT and cybersecurity teams must be trained to understand system behavior, identify problems, and respond quickly during incidents. Continuous training, hands-on experience, and practical simulations are essential for preparing teams to handle real-world challenges effectively.

The software development lifecycle has come under increased scrutiny as well. Proper testing, validation, and quality assurance must be enforced before any update is released. Updates should be tested across multiple environments to ensure compatibility and stability. Weaknesses in development processes can lead to serious consequences, as demonstrated by the outage. Strong development discipline is essential for maintaining trust in security technologies.
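Testing across multiple environments can be enforced with a simple release gate: the update is checked in every supported environment, and a single failure blocks the release. The sketch below assumes a hypothetical `run_checks` function that raises an exception when an update misbehaves in a given environment. The environment names are invented for the example and do not reflect any vendor's actual test matrix.

```python
from typing import Callable, Dict, Iterable, Tuple


def release_gate(
    environments: Iterable[str],
    run_checks: Callable[[str], None],
) -> Tuple[bool, Dict[str, str]]:
    """Run the checks in every environment.

    Returns (ok, failures), where `failures` maps each failing
    environment to its error message and `ok` is True only if
    no environment failed.
    """
    failures: Dict[str, str] = {}
    for env in environments:
        try:
            run_checks(env)
        except Exception as exc:  # any failure blocks the release
            failures[env] = str(exc)
    return (not failures, failures)


# Hypothetical check that fails in one environment.
def example_checks(env: str) -> None:
    if env == "legacy-desktop":
        raise RuntimeError("system failed to boot after update")


ok, failures = release_gate(
    ["server", "desktop", "legacy-desktop"], example_checks
)
print(ok)
print(failures)
```

Collecting every failure, rather than stopping at the first one, gives the development team a complete picture of where an update breaks before they attempt a fix.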

Compatibility across systems is another critical area. The widespread use of Microsoft Windows means that any issue affecting this platform can quickly become a global problem. Organizations must ensure that their tools are tested across all supported systems and configurations. Careful planning and validation help prevent unexpected conflicts and failures.

Artificial intelligence and automation are becoming increasingly important in cybersecurity. These technologies improve detection, speed up response times, and reduce the workload on human teams. However, they must be implemented carefully. Over-reliance on automation without proper oversight can introduce new risks. A balanced approach that combines automation with human judgment is essential.

Looking forward, organizations must adopt a proactive approach to cybersecurity. This includes continuous monitoring, regular system reviews, and ongoing improvements to processes and technologies. The goal is not only to respond to incidents but to prevent them from occurring in the first place. Proactive strategies help reduce risk and improve overall system stability.

Collaboration across the industry is also vital. Sharing knowledge, experiences, and best practices allows organizations to learn from each other and strengthen their defenses. A cooperative approach helps improve resilience across the entire cybersecurity ecosystem.

The broader lesson from this event is that cybersecurity is not just about protection from external threats. It is also about ensuring that systems remain stable, reliable, and functional when something goes wrong, including a fault in the security tooling itself. Organizations must consider both security and operational resilience when designing their strategies.

The CrowdStrike outage serves as a strong reminder that even the most advanced technologies require careful management and oversight. By focusing on resilience, improving development practices, and strengthening operational controls, organizations can build systems that are both secure and dependable in an increasingly complex digital world.