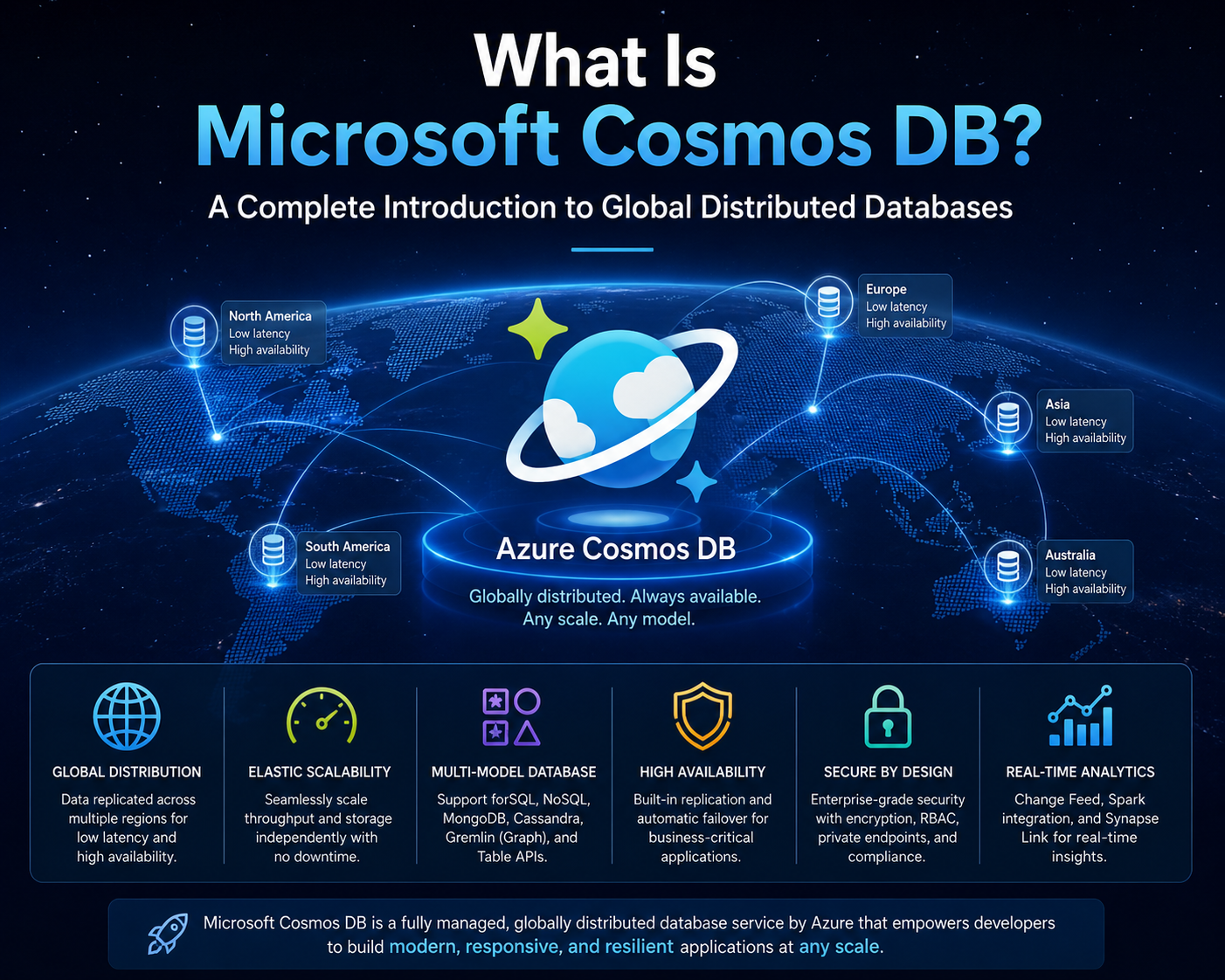

Cosmos DB is a globally distributed, multi-model database service designed to operate within cloud-native environments. It is engineered to support applications that require high availability, low latency, and elastic scalability across geographically distributed regions. At its core, Cosmos DB is a database platform that abstracts infrastructure complexity while allowing developers to focus on data modeling and application logic rather than server management or physical storage concerns. It belongs to the class of NoSQL databases but also incorporates features that allow interaction using structured query approaches, making it flexible across multiple development paradigms. Its architecture is built to support distributed systems where data consistency, replication, and partitioning play central roles in ensuring performance and reliability.

Relational Databases and Structured Data Organization

To understand Cosmos DB effectively, it is essential to examine traditional relational database systems. Relational databases organize data into structured tables consisting of rows and columns. Each table represents a specific entity, such as customers, orders, or products. Columns define attributes of those entities, such as names, identifiers, or timestamps, while each row represents a unique record. This structure enforces a strict schema, meaning data must conform to predefined rules before it can be inserted or modified. Systems like MySQL and PostgreSQL are widely used examples of relational databases that rely on structured query languages for data manipulation and retrieval. This model emphasizes data consistency and integrity, making it suitable for applications where relationships between data points are clearly defined and stable over time.

Limitations of Rigid Schema-Based Systems in Modern Applications

Although relational databases provide strong consistency and structured organization, they often struggle with flexibility and scalability in dynamic environments. Modern applications frequently require rapid iteration, evolving data structures, and distributed workloads. When schema changes are needed in relational systems, they typically involve significant restructuring, data migration, and downtime considerations. This can slow development cycles and increase operational complexity. Additionally, scaling relational databases horizontally across multiple servers introduces challenges due to their tightly coupled schema design and transaction management requirements. These limitations have driven the adoption of alternative database models that prioritize flexibility and distributed architecture.

Introduction to NoSQL Database Models

NoSQL databases were developed to address limitations associated with traditional relational systems. Instead of relying on fixed schemas and tabular structures, NoSQL databases use flexible data models that can adapt to changing application requirements. These systems support various data formats, including key-value pairs, wide-column stores, graph structures, and document-based models. Among these, document databases are particularly relevant to Cosmos DB. In a document database, data is stored in semi-structured formats, typically using JSON-like representations. Each record, known as a document, can contain nested fields, arrays, and varying structures without requiring uniformity across all entries. This flexibility allows developers to evolve data models without significant structural overhead.

Document Data Representation and Flexibility

In a document-based system, each record is self-contained and may differ in structure from other records within the same collection. For example, a user profile document may include fields such as name, age, authentication status, preferences, and metadata. However, another user profile may include additional or fewer fields depending on application requirements. This flexibility allows systems to accommodate diverse data inputs without enforcing strict schema validation at the database level. It also reduces the friction associated with schema migrations, making development cycles faster and more adaptive to changing business logic. The absence of a rigid structure enables applications to evolve organically as data requirements grow more complex.
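To make the idea concrete, here is a minimal sketch of two user-profile documents that share a collection but not a shape. The field names and values are illustrative assumptions, not a schema from any real application, and plain Python dictionaries stand in for stored JSON documents.

```python
import json

# Two hypothetical user-profile documents stored side by side in one collection.
profile_a = {
    "id": "user-1",
    "name": "Ada",
    "age": 36,
    "authenticated": True,
    "preferences": {"theme": "dark", "languages": ["en", "fr"]},
}
profile_b = {
    "id": "user-2",
    "name": "Lin",
    "metadata": {"signup_source": "mobile"},
}

collection = [profile_a, profile_b]


def shared_and_optional_fields(docs):
    """Return (fields present in every document, fields present in only some)."""
    key_sets = [set(doc) for doc in docs]
    shared = set.intersection(*key_sets)
    optional = set.union(*key_sets) - shared
    return shared, optional


shared, optional = shared_and_optional_fields(collection)
print(json.dumps(profile_b, indent=2))
print(sorted(shared), sorted(optional))
```

Because nothing at the storage level forces the two profiles to match, any code that reads them has to treat the optional fields as possibly absent.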

Cosmos DB as a Distributed Document Database System

Cosmos DB operates as a globally distributed document-oriented database that supports multiple APIs and data models under a unified platform. It is designed to store and manage JSON-like documents while ensuring high availability and low latency through replication across multiple geographic regions. Each document stored in Cosmos DB is assigned a unique identifier and can include dynamic attributes that evolve over time. The system automatically manages indexing, partitioning, and replication, allowing developers to focus on application design rather than infrastructure management. This makes Cosmos DB particularly suitable for applications that require real-time responsiveness and global accessibility.

Comparison Between SQL and NoSQL Approaches in the Context of Cosmos DB

SQL and NoSQL systems differ fundamentally in how they structure and manage data. SQL databases rely on predefined schemas, relational integrity, and structured queries, while NoSQL databases prioritize flexibility, scalability, and distributed architecture. Cosmos DB aligns more closely with the NoSQL paradigm but introduces hybrid capabilities that allow structured querying within document collections. This hybrid nature enables developers familiar with relational concepts to transition more easily while still benefiting from the scalability advantages of document-based storage. The key distinction lies in data modeling philosophy: relational systems enforce structure before storage, whereas document systems allow structure to emerge dynamically within stored data.

Data Modeling Differences Between Structured and Document Systems

In relational systems, data normalization is a core principle. This involves dividing data into multiple related tables to reduce redundancy and maintain consistency. Queries often require joining multiple tables to reconstruct meaningful datasets. In contrast, document-based systems like Cosmos DB encourage data denormalization, where related information is stored within a single document. This reduces the need for complex joins and improves read performance in distributed environments. However, it also requires careful consideration of data duplication and consistency management at the application level. The choice between normalization and denormalization depends heavily on application requirements and access patterns.
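The contrast can be sketched with a small transformation from normalized rows to a denormalized document. The table names, fields, and sample values below are hypothetical; the point is only that a relational join is replaced by embedding at write time.

```python
# Normalized, relational-style rows (hypothetical sample data).
customers = [{"customer_id": 1, "name": "Ada"}]
orders = [
    {"order_id": 10, "customer_id": 1, "total": 25.0},
    {"order_id": 11, "customer_id": 1, "total": 40.0},
]


def embed_orders(customers, orders):
    """Build one self-contained document per customer with its orders embedded."""
    docs = []
    for customer in customers:
        docs.append({
            "id": str(customer["customer_id"]),
            "name": customer["name"],
            # The join happens once, here, instead of on every read.
            "orders": [
                {"order_id": o["order_id"], "total": o["total"]}
                for o in orders
                if o["customer_id"] == customer["customer_id"]
            ],
        })
    return docs


customer_docs = embed_orders(customers, orders)
print(customer_docs[0])
```

A single read now returns the customer together with the orders, at the cost of rewriting the whole document whenever an order changes. That is the duplication and update tradeoff described above.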

Evolution of Cloud-Native Database Systems

Cloud computing has significantly influenced the design of modern database systems. Traditional databases were built for localized environments with predictable hardware resources. Cloud-native databases like Cosmos DB are designed for elasticity, global distribution, and automated scaling. They leverage distributed infrastructure to provide high availability and fault tolerance across multiple regions. This architectural shift reflects the growing demand for applications that operate continuously across global user bases with minimal latency. Cosmos DB represents a transition from monolithic database systems to distributed, service-oriented data platforms that integrate seamlessly with modern cloud ecosystems.

Foundational Role of Cosmos DB in Modern Application Development

Cosmos DB serves as a foundational component in many modern application architectures due to its ability to handle diverse workloads and data structures. It supports scenarios ranging from web applications and mobile backends to IoT systems and real-time analytics platforms. Its document-based model allows rapid development cycles, while its distributed architecture ensures consistent performance across regions. By abstracting infrastructure complexity, Cosmos DB enables developers to focus on building scalable and responsive applications without managing underlying database servers or replication strategies.

Emerging Importance of Flexible Data Systems in Digital Ecosystems

As digital ecosystems become more complex, the need for adaptable data systems continues to grow. Applications increasingly rely on heterogeneous data sources, unstructured inputs, and dynamic user interactions. Document-based systems like Cosmos DB provide a framework for managing this complexity by allowing data to evolve without rigid structural constraints. This adaptability is particularly important in environments where rapid innovation and continuous deployment are essential. The ability to store and retrieve semi-structured data efficiently positions Cosmos DB as a key enabler of modern cloud application development.

Global Distribution and Cloud-Native Architecture Principles

Cosmos DB is designed around the principles of global distribution and cloud-native scalability, making it fundamentally different from traditional single-region database systems. At its core, the architecture is built to ensure that data can be replicated across multiple geographic regions while maintaining consistent availability and performance. This distributed model allows applications to serve users from the nearest available data center, significantly reducing latency and improving responsiveness. The system is engineered to operate in environments where failure is expected rather than exceptional, meaning redundancy and replication are intrinsic design components rather than optional enhancements. Each region participating in a Cosmos DB deployment acts as a fully functional replica capable of handling read and write operations depending on configuration settings.

Partitioning Strategy and Horizontal Scaling Model

One of the most critical architectural components in Cosmos DB is its partitioning mechanism, which enables horizontal scaling. Instead of relying on vertical scaling, where additional CPU or memory is added to a single server, Cosmos DB distributes data across multiple logical partitions. Each partition contains a subset of the overall dataset, determined by a partition key chosen when the container is created. When that key spreads data and requests evenly, workloads are distributed across the infrastructure, which avoids the bottlenecks associated with single-node saturation. As data volume increases, new partitions are automatically created and balanced across the system. This horizontal scaling model allows Cosmos DB to handle very large datasets while keeping per-request performance stable.

Logical and Physical Partition Separation

Cosmos DB distinguishes between logical partitions and physical partitions, which is essential for understanding its scalability behavior. A logical partition is defined by a partition key value and represents a grouping of related data items. Physical partitions, on the other hand, are infrastructure-level containers that store one or more logical partitions. The system automatically manages the mapping between logical and physical partitions, ensuring that data is distributed efficiently. When a physical partition reaches capacity, Cosmos DB automatically splits it and redistributes the logical partitions across new physical nodes. This abstraction allows developers to focus on data modeling without worrying about infrastructure constraints or manual sharding strategies.
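The relationship between the two partition types can be illustrated with a toy placement function. This is a simplified sketch, not the service's actual algorithm: the service hashes the partition key into a range and assigns ranges to physical partitions, whereas the helper names and the modulo step here are assumptions made for brevity.

```python
import hashlib
from collections import defaultdict


def physical_partition_for(partition_key: str, physical_count: int) -> int:
    """Map a partition key value to a physical partition index by hashing."""
    digest = hashlib.sha256(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % physical_count


def place(items, key_field, physical_count):
    """Group items by physical partition, then by logical partition (key value)."""
    layout = defaultdict(lambda: defaultdict(list))
    for item in items:
        key = str(item[key_field])
        layout[physical_partition_for(key, physical_count)][key].append(item)
    return layout


# Hypothetical items: ten events for each of twenty tenants.
items = [{"id": i, "tenant": f"tenant-{i % 20}"} for i in range(200)]
layout = place(items, "tenant", physical_count=4)

for physical, logical in sorted(layout.items()):
    print(physical, sorted(logical))
```

Every item with the same key value lands in the same logical partition, and each logical partition lives on exactly one physical partition. One physical partition can hold many logical ones.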

Replication Model and Multi-Region Consistency

Replication in Cosmos DB is a foundational feature that ensures data durability and availability across multiple geographic regions. Every write operation can be replicated to multiple regions depending on the configured consistency level. This replication process occurs asynchronously or synchronously based on the selected consistency model. In multi-region configurations, Cosmos DB ensures that data remains accessible even if an entire region becomes unavailable. The replication system is designed to handle network partitions and hardware failures without affecting application availability. This makes Cosmos DB suitable for globally distributed applications that require continuous uptime and resilient data storage.

Consistency Models and Data Reliability Tradeoffs

Cosmos DB provides five consistency levels that allow developers to balance performance, availability, and data accuracy. These consistency models define how quickly data written in one region becomes visible in another region. Strong consistency ensures that all reads return the most recent write, but it may introduce higher latency due to synchronization requirements. Bounded staleness allows a controlled delay between writes and reads, providing a balance between performance and accuracy. Session consistency ensures that users see their own writes consistently while allowing eventual synchronization across regions. Consistent prefix guarantees that reads never observe writes out of order, although they may lag behind the latest write. Eventual consistency prioritizes performance and availability, allowing data to propagate asynchronously with no ordering guarantee. These models give developers flexibility to choose behavior based on application needs.
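The read-your-own-writes guarantee of session consistency can be sketched with a toy model. Everything here is an assumption made for illustration: the `Replica` and `SessionClient` classes are hypothetical, and a simple integer sequence number stands in for the session token a real client would carry.

```python
class Replica:
    """A copy of the data plus the sequence number of the last write applied."""

    def __init__(self):
        self.lsn = 0
        self.data = {}

    def apply(self, lsn, key, value):
        self.data[key] = value
        self.lsn = lsn


class SessionClient:
    """Tracks the client's own latest write so its reads never go backwards."""

    def __init__(self, write_replica, read_replicas):
        self.write_replica = write_replica
        self.read_replicas = read_replicas
        self.session_lsn = 0  # hypothetical stand-in for a session token

    def write(self, key, value):
        lsn = self.write_replica.lsn + 1
        self.write_replica.apply(lsn, key, value)
        self.session_lsn = lsn

    def read(self, key):
        # Use a read replica only if it has caught up with this session's writes.
        for replica in self.read_replicas:
            if replica.lsn >= self.session_lsn:
                return replica.data.get(key)
        return self.write_replica.data.get(key)


primary, lagging = Replica(), Replica()
client = SessionClient(primary, [lagging])
client.write("cart", ["book"])

session_read = client.read("cart")        # falls back to the up-to-date copy
eventual_read = lagging.data.get("cart")  # the lagging replica has no value yet
print(session_read, eventual_read)
```

The session read sees the write because the client refuses replicas that are behind its own session. A reader without that token may still observe the older state, which is the behavior eventual consistency permits.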

Automatic Indexing and Query Optimization Mechanisms

Cosmos DB includes an automatic indexing system that eliminates the need for manual index management. Every property within a document is indexed by default, enabling fast query execution across large datasets. This automatic indexing approach contrasts with traditional database systems, where indexes must be explicitly defined and maintained. The indexing engine is optimized for dynamic and heterogeneous data structures, ensuring that queries remain efficient even as document schemas evolve. The system continuously updates indexes in the background as data changes, allowing real-time query performance without administrative overhead. This design significantly simplifies database management while maintaining high-performance query execution.

Multi-Model API Support and Data Access Flexibility

A distinguishing feature of Cosmos DB is its support for multiple APIs, which allows developers to interact with the database using different programming paradigms. These APIs enable compatibility with various database models, including document-based, graph-based, and key-value structures; examples include the APIs for NoSQL, MongoDB, Apache Cassandra, Apache Gremlin, and Table. This multi-model capability allows applications to use a single underlying database system while interacting through different interfaces depending on use case requirements. It also simplifies migration from other database systems by providing compatibility layers that emulate familiar query languages and access patterns. This flexibility enhances interoperability and reduces the need for multiple specialized database systems within a single application architecture.

Latency Optimization Through Geographic Proximity

Latency optimization is a key design goal in Cosmos DB, achieved through geographic distribution and intelligent routing mechanisms. When users access an application, requests are directed to the nearest available database region. This proximity-based routing reduces network delays and improves response times significantly. The system continuously monitors regional performance and availability to ensure that traffic is directed to optimal endpoints. In cases where a region experiences degradation or failure, requests are automatically rerouted to alternative regions without disrupting application functionality. This dynamic routing mechanism ensures consistent performance across global user bases.

Throughput Management and Request Unit Model

Cosmos DB uses a throughput-based pricing and performance model built on Request Units (RUs). Instead of focusing on traditional resource metrics such as CPU or memory, the system measures operations in terms of normalized request costs. Each database operation consumes a certain number of request units depending on its complexity, data size, and indexing requirements; as a baseline, a point read of a 1 KB item costs 1 RU. This model allows precise control over performance provisioning, enabling developers to allocate sufficient throughput for expected workloads. Throughput can be provisioned manually or configured to autoscale based on demand. This abstraction simplifies capacity planning and provides predictable performance behavior under varying load conditions.
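A rough capacity estimate under this model might look like the sketch below. Only the 1 RU charge for a point read of a 1 KB item is a documented baseline; the other per-operation charges, the headroom factor, and the rounding to increments of 100 RU/s are assumptions for illustration and should be replaced with charges measured from real responses.

```python
import math

# Assumed per-operation charges in RUs (placeholders except the point read).
ASSUMED_RU_COST = {
    "point_read_1kb": 1.0,
    "write_1kb": 5.5,
    "query": 3.0,
}


def required_throughput(ops_per_second: dict, headroom: float = 0.2) -> int:
    """Estimate RU/s to provision for a workload, rounded up to the next 100."""
    raw = sum(ASSUMED_RU_COST[op] * rate for op, rate in ops_per_second.items())
    return math.ceil(raw * (1 + headroom) / 100) * 100


workload = {"point_read_1kb": 500, "write_1kb": 100, "query": 50}
estimate = required_throughput(workload)
print(estimate)
```

With these placeholder charges the workload costs 1,200 RU/s before headroom. The estimate is only as good as the per-operation numbers fed into it, so measuring actual request charges is the more reliable input.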

Autoscaling Mechanisms and Dynamic Resource Allocation

Autoscaling in Cosmos DB enables dynamic adjustment of resource allocation based on workload demand. When application traffic increases, the system automatically allocates additional throughput to maintain performance levels. Conversely, when demand decreases, resources are scaled down to optimize cost efficiency. This process is fully automated and does not require manual intervention. Autoscaling operates within predefined limits set during configuration, ensuring that resource usage remains controlled. The system continuously monitors performance metrics to determine optimal scaling behavior, making it suitable for applications with unpredictable or fluctuating workloads.
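The "predefined limits" can be pictured as a clamp on demand. The sketch below assumes a floor at 10% of the configured maximum; the function name and the default ratio are assumptions for illustration, not a statement of how the service computes its scaling decisions.

```python
def autoscaled_throughput(demand_ru: float, max_ru: int, floor_ratio: float = 0.1) -> float:
    """Clamp demanded RU/s between an assumed floor and the configured maximum."""
    floor = max_ru * floor_ratio
    return min(max(demand_ru, floor), max_ru)


# Hypothetical demand samples over a day, against a 4000 RU/s maximum.
for demand in (50, 2500, 9000):
    print(demand, autoscaled_throughput(demand, max_ru=4000))
```

Demand above the maximum is not served by scaling further. In this model it is capped, which is why the maximum should be sized to the expected peak rather than to the average.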

Data Consistency Across Distributed Nodes

Maintaining consistency across distributed nodes is a complex challenge in globally replicated systems. Cosmos DB addresses this through a combination of synchronization protocols and conflict resolution strategies. When data is written to one region, replication mechanisms ensure that updates are propagated to other regions according to the selected consistency model. In cases where conflicting writes occur, the system applies predefined resolution rules to determine the authoritative version of the data. These mechanisms ensure that distributed data remains coherent while allowing flexibility in performance and availability tradeoffs. The system is designed to handle network partitions gracefully, ensuring continued operation even under partial connectivity failures.
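One common resolution rule is last writer wins, where the version with the highest timestamp is kept. The sketch below assumes each version carries a numeric `_ts` timestamp property; the `region` field and the sample values are hypothetical, and tie handling is deliberately left unmodeled.

```python
def last_writer_wins(versions, path="_ts"):
    """Pick the version with the highest value at `path`.

    Ties are broken arbitrarily here (the first maximum wins); this sketch
    does not model how a real service orders equal timestamps.
    """
    return max(versions, key=lambda version: version[path])


# Two conflicting writes to the same item from different regions.
west = {"id": "cart-7", "items": ["book"], "_ts": 1700000100, "region": "West US"}
east = {"id": "cart-7", "items": ["book", "pen"], "_ts": 1700000105, "region": "East US"}

winner = last_writer_wins([west, east])
print(winner["region"], winner["items"])
```

The losing write is discarded entirely rather than merged, so this rule suits data where the newest full version is authoritative. Data that must be merged, such as counters, needs an application-defined rule instead.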

Fault Tolerance and High Availability Design

Fault tolerance is built into every layer of Cosmos DB architecture. The system is designed to remain operational even when individual components or entire regions fail. Data replication ensures that multiple copies of information exist across geographically distributed nodes. In the event of hardware failure, traffic is automatically redirected to healthy replicas without user intervention. The system continuously monitors health status across all regions and initiates recovery processes when anomalies are detected. This design ensures that applications built on Cosmos DB maintain high availability even under adverse conditions, making it suitable for mission-critical workloads.

Security Isolation and Data Segmentation Principles

Cosmos DB implements strict security and isolation mechanisms to ensure that data remains protected within multi-tenant environments. Each database instance operates within a logically isolated container, preventing unauthorized access between tenants. Data encryption is applied both at rest and in transit to safeguard information from external threats. Access control mechanisms regulate permissions at granular levels, allowing fine-tuned security policies for different users and applications. These security principles are integrated into the architecture rather than applied as external layers, ensuring consistent enforcement across all operations.

Performance Optimization Through Distributed Query Execution

Query execution in Cosmos DB is optimized for distributed environments where data may reside across multiple partitions and regions. When a query is executed, the system identifies relevant partitions and distributes query execution across them in parallel. Results are then aggregated and returned to the client in a unified response. This parallel execution model significantly improves performance for large-scale datasets. The query engine is designed to minimize data movement across network boundaries, reducing latency and improving efficiency. Indexing structures further enhance query performance by enabling rapid data retrieval without full dataset scans.
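The fan-out and aggregation steps can be sketched with in-memory lists standing in for partitions. The function names and sample data are assumptions for illustration. Threads model the parallelism only conceptually, since a real engine runs the per-partition work on separate machines.

```python
import heapq
from concurrent.futures import ThreadPoolExecutor


def query_partition(partition, predicate, sort_key):
    """Run the filter locally on one partition and return sorted matches."""
    return sorted((doc for doc in partition if predicate(doc)), key=sort_key)


def fan_out_query(partitions, predicate, sort_key):
    """Query every partition in parallel, then merge the sorted partial results."""
    with ThreadPoolExecutor(max_workers=max(1, len(partitions))) as pool:
        partials = list(
            pool.map(lambda p: query_partition(p, predicate, sort_key), partitions)
        )
    # Each partial result is already sorted, so a k-way merge is enough.
    return list(heapq.merge(*partials, key=sort_key))


partitions = [
    [{"id": 1, "price": 30}, {"id": 2, "price": 5}],
    [{"id": 3, "price": 12}, {"id": 4, "price": 50}],
]
results = fan_out_query(
    partitions,
    predicate=lambda doc: doc["price"] >= 10,
    sort_key=lambda doc: doc["price"],
)
print([doc["id"] for doc in results])
```

Filtering inside each partition before merging is what keeps data movement low: only matching documents cross the boundary to the aggregation step.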

Resource Governance and Predictable Performance Behavior

Cosmos DB incorporates resource governance mechanisms that ensure predictable performance under varying workloads. By enforcing throughput limits and request unit consumption models, the system prevents individual operations from monopolizing resources. This ensures fair distribution of capacity across concurrent operations and applications. Resource governance also helps maintain system stability during peak usage periods by regulating request flow and balancing load across partitions. This controlled approach to resource allocation contributes to consistent performance behavior across different operational scenarios.

Integration Within Modern Distributed Application Ecosystems

Cosmos DB is often used as a foundational data layer in modern distributed application ecosystems. Its architecture aligns with microservices-based designs where independent services require scalable and reliable data storage. The database supports event-driven architectures, real-time processing systems, and globally distributed applications. Its ability to integrate with various data models and APIs makes it adaptable to diverse application requirements. By providing a unified data platform, Cosmos DB reduces architectural complexity and simplifies system design in large-scale environments.

High-Performance Data Operations in Distributed Environments

Cosmos DB is engineered to deliver consistent high performance across distributed environments where data is spread across multiple regions and partitions. Performance in such a system is not only dependent on hardware capacity but also on how effectively data is partitioned, indexed, and accessed. The architecture ensures that most operations are executed close to the physical location of the data, minimizing network hops and reducing latency. This locality-aware processing model is essential in global applications where users interact with systems from diverse geographic regions. The database optimizes read and write paths independently, ensuring that both transactional and analytical workloads can operate efficiently without interfering with each other.

Read and Write Optimization Strategies in Distributed Systems

In Cosmos DB, read operations are optimized through intelligent indexing and replica selection. When a read request is initiated, the system routes it to the nearest available replica based on latency and consistency requirements. This ensures that data retrieval is fast and efficient, even under high traffic conditions. Write operations, on the other hand, are managed through a coordinated replication mechanism that ensures durability and consistency across selected regions. In a single-write-region configuration, writes are first committed in the write region and then propagated to the other regions based on the chosen consistency model; when multi-region writes are enabled, each region can accept writes locally. This separation of read and write optimization allows the system to scale efficiently while maintaining predictable performance characteristics.

Impact of Partition Key Selection on System Performance

One of the most critical design decisions in Cosmos DB is the selection of an appropriate partition key. The partition key determines how data is distributed across logical and physical partitions, directly influencing scalability and performance. A well-chosen partition key ensures even distribution of data and workload across the system, preventing hotspots where certain partitions become overloaded. Poor partition key selection can lead to uneven data distribution, reduced throughput, and increased latency. Effective partitioning strategies often involve selecting keys that naturally distribute user traffic or data entities evenly, such as user identifiers or region-based attributes.
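A quick way to compare candidate keys is to measure how unevenly each one would spread existing data. The sketch below uses a hypothetical event dataset and a simple skew ratio; both the metric and the field names are assumptions for illustration, and a fuller analysis would also weigh request volume per key, not just item counts.

```python
from collections import Counter


def skew(items, key_field):
    """Ratio of the largest logical partition to the average one (1.0 = even)."""
    counts = Counter(item[key_field] for item in items)
    average = len(items) / len(counts)
    return max(counts.values()) / average


# Hypothetical events: unique users, but 90% of traffic from one country.
events = [
    {"user_id": f"u{i}", "country": "US" if i < 90 else "NZ"}
    for i in range(100)
]

user_skew = skew(events, "user_id")
country_skew = skew(events, "country")
print(user_skew, country_skew)
```

On this sample, partitioning by `country` would concentrate most items in one logical partition, while `user_id` spreads them evenly. Even distribution is necessary but not sufficient: the key should also match the filters that queries use most often.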

Query Execution Patterns in Large-Scale Data Environments

Query execution in Cosmos DB is designed to operate efficiently across distributed datasets. When a query is issued, the system determines which partitions contain relevant data and executes the query in parallel across those partitions. This parallel execution model significantly reduces response times for large datasets. The results from each partition are then aggregated into a unified response before being returned to the application. The query engine is optimized to minimize cross-partition communication, which is typically a major bottleneck in distributed databases. By leveraging indexing and partition-aware execution plans, Cosmos DB ensures that queries remain performant even as data volume grows.

Data Modeling Strategies for Document-Based Architectures

Effective data modeling in Cosmos DB requires a shift from traditional relational thinking to a document-centric approach. Instead of normalizing data into multiple related tables, developers design self-contained documents that encapsulate all relevant information. This approach reduces the need for complex joins and improves read performance. Data modeling decisions are heavily influenced by application access patterns, as documents are typically designed to support specific query requirements. Embedding related data within a single document is a common strategy that enhances performance but requires careful consideration of data duplication and update consistency.

Event-Driven Architecture Integration with Cosmos DB

Cosmos DB integrates naturally with event-driven architectures, where changes in data trigger downstream processes and workflows. In such systems, data modifications within the database can generate events that are consumed by other services or applications. This enables real-time processing and reactive system behavior. Event-driven patterns are particularly useful in scenarios such as IoT data ingestion, real-time analytics, and notification systems. Cosmos DB supports change tracking mechanisms that allow applications to monitor updates without continuously polling the database, improving efficiency and responsiveness.
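The polling-free pattern can be modeled as an append-only log that consumers read from a remembered position. The `ToyChangeFeed` class below is a hypothetical stand-in written for this example, not the service's API, and the integer continuation value is a simplification of whatever resume token a real feed provides.

```python
class ToyChangeFeed:
    """Append-only log of changes; readers resume from a continuation index."""

    def __init__(self):
        self._log = []

    def upsert(self, doc):
        # Store a copy so later mutation by the caller does not alter history.
        self._log.append(dict(doc))

    def read_changes(self, continuation=0):
        """Return changes recorded since `continuation` and the next position."""
        changes = self._log[continuation:]
        return changes, len(self._log)


feed = ToyChangeFeed()
feed.upsert({"id": "order-1", "status": "new"})
first_batch, token = feed.read_changes()

feed.upsert({"id": "order-1", "status": "paid"})
second_batch, token = feed.read_changes(token)
print(first_batch, second_batch, token)
```

Because the consumer keeps its own position, it can stop and restart without missing changes. Several independent consumers can also read the same feed at their own pace.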

Real-Time Analytics and Operational Data Processing

Cosmos DB is often used in systems that require real-time analytics on operational data. Unlike traditional data warehouses that rely on batch processing, Cosmos DB enables immediate analysis of incoming data streams. This capability is critical for applications that require instant insights, such as fraud detection, monitoring systems, and dynamic pricing engines. The integration of operational and analytical workloads within a single platform reduces data movement and simplifies architecture. Real-time processing is supported through efficient indexing and fast query execution across distributed partitions.

Scalability Patterns for Enterprise Workloads

Enterprise applications require database systems that can scale seamlessly as demand increases. Cosmos DB supports both vertical and horizontal scaling strategies, although horizontal scaling is the primary mechanism. Horizontal scaling is achieved through partitioning and replication, allowing the system to distribute workload across multiple nodes. This ensures that performance remains stable even as data volume and user traffic increase. Enterprises often design their systems to anticipate future growth, selecting partition strategies and throughput configurations that support long-term scalability without requiring major architectural changes.

Hybrid Cloud and Multi-Region Deployment Strategies

Modern enterprises increasingly adopt hybrid and multi-region deployment strategies to ensure resilience and performance optimization. Cosmos DB supports deployment across multiple Azure regions, enabling data replication and failover capabilities. In hybrid scenarios, Cosmos DB can integrate with on-premises systems and other cloud services to create unified data ecosystems. Multi-region deployments improve disaster recovery capabilities by ensuring that data remains accessible even if one region becomes unavailable. These strategies are essential for global applications that require continuous availability and regulatory compliance across different jurisdictions.

Consistency Management in Real-World Application Scenarios

Choosing the appropriate consistency model is a critical decision in Cosmos DB implementations. Different applications require different tradeoffs between performance and data accuracy. Financial systems may require strong consistency to ensure accurate transaction processing, while social media applications may prioritize availability and latency over immediate consistency. Cosmos DB allows developers to set a default consistency level on the account and to relax it for individual requests, providing flexibility in how data synchronization is handled across regions. This adaptability enables the same system to support diverse application requirements.

Cost Optimization Through Throughput Management

Cost efficiency in Cosmos DB is closely tied to throughput management and resource allocation. Since the system operates on a request unit model, developers can control costs by optimizing query efficiency, partition design, and indexing strategies. Efficient data modeling reduces unnecessary request consumption, leading to lower operational costs. Autoscaling features also contribute to cost optimization by adjusting resource allocation based on actual demand rather than peak provisioning. Organizations often analyze usage patterns to fine-tune throughput settings and minimize wasted capacity.

Security Architecture and Data Protection Mechanisms

Security in Cosmos DB is implemented at multiple layers, including network isolation, encryption, and access control. Data is encrypted both at rest and in transit, ensuring protection against unauthorized access and interception. Role-based access control allows administrators to define granular permissions for different users and applications. Network security features restrict access to authorized endpoints, reducing exposure to external threats. These security mechanisms are integrated into the core architecture, ensuring consistent enforcement across all operations and regions.

Backup, Recovery, and Data Durability Systems

Data durability is a fundamental requirement in distributed database systems. Cosmos DB combines automatic backups with replication to ensure that data can be recovered in case of failure or corruption. Backups are taken automatically without requiring manual intervention, either periodically or, in continuous backup mode, by capturing data changes in near real time. With continuous backup, recovery processes allow data to be restored to specific points in time, protecting against accidental deletion or corruption. These capabilities ensure that enterprise applications can maintain data integrity even under adverse conditions.

Migration Strategies from Traditional Databases to Cosmos DB

Migrating from traditional relational databases to Cosmos DB requires careful planning and data transformation. The process typically involves restructuring data into document formats and redefining application queries to align with NoSQL paradigms. Migration strategies often include incremental data transfer, dual-write systems, and validation processes to ensure data consistency during transition. Organizations may also adopt hybrid approaches where relational and document systems coexist during migration phases. This allows gradual adaptation without disrupting existing application functionality.
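The validation step can start with something as simple as reconciling identifiers between the source rows and the migrated documents. The function below is a minimal sketch: the field names are hypothetical, and a fuller check would also compare field values or checksums rather than identifiers alone.

```python
def validate_migration(source_rows, documents, id_field="customer_id"):
    """Report source rows with no document and documents with no source row."""
    source_ids = {str(row[id_field]) for row in source_rows}
    document_ids = {doc["id"] for doc in documents}
    return {
        "missing": sorted(source_ids - document_ids),
        "unexpected": sorted(document_ids - source_ids),
    }


# Hypothetical state midway through an incremental transfer.
source_rows = [{"customer_id": 1}, {"customer_id": 2}, {"customer_id": 3}]
documents = [{"id": "1"}, {"id": "2"}, {"id": "9"}]

report = validate_migration(source_rows, documents)
print(report)
```

Running a check like this after each incremental batch, and again while dual writes are active, gives an early signal of drift before the legacy system is retired.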

Developer Experience and API Integration Models

Cosmos DB provides multiple APIs that enhance developer experience by supporting familiar programming models. These APIs allow interaction using different query languages and data access patterns, reducing the learning curve for developers transitioning from other database systems. Integration with application frameworks and development tools simplifies deployment and testing processes. The system is designed to abstract infrastructure complexity, enabling developers to focus on application logic rather than database management tasks. This improves productivity and accelerates development cycles.

Enterprise Adoption Patterns and Digital Transformation Role

In enterprise environments, Cosmos DB plays a key role in digital transformation initiatives. Its ability to support scalable, distributed, and flexible data architectures makes it suitable for modernizing legacy systems. Enterprises adopt Cosmos DB to support cloud-native applications, improve global accessibility, and enhance system resilience. It is commonly used in industries such as finance, retail, healthcare, and logistics, where data availability and performance are critical. Its integration capabilities allow it to function as a central data platform within complex enterprise ecosystems.

Future Evolution of Distributed Database Systems in Cloud Ecosystems

Distributed database systems like Cosmos DB reflect the direction data management is taking in cloud ecosystems. As applications become more globally distributed and data volumes continue to increase, single-node database models become harder to scale to these workloads. Cloud-native systems that support automatic scaling, global replication, and flexible data models are becoming essential. Cosmos DB exemplifies this evolution by combining performance, scalability, and flexibility within a unified platform. The continued advancement of distributed systems will likely focus on further reducing latency, improving automation, and enhancing integration with emerging technologies.

Conclusion

Cosmos DB represents a significant shift in how modern data systems are designed, deployed, and scaled within cloud environments. Instead of relying on rigid, infrastructure-bound database architectures, it introduces a distributed, globally aware model that prioritizes availability, responsiveness, and operational flexibility. This shift reflects broader changes in application design, where systems are no longer built for single-server or single-region performance but for continuous global access under unpredictable workloads. The value of Cosmos DB becomes clearer when viewed through the lens of modern application demands, which require consistent performance across geographic boundaries, seamless scaling under load, and adaptability to rapidly changing data structures.

At the foundation of Cosmos DB is the concept of distributed data management, where information is not confined to a single location but replicated across multiple regions. This design fundamentally changes how data consistency, latency, and availability are balanced. The tradeoff between these factors does not disappear, but where traditional systems often fix it at a single point, Cosmos DB offers configurable consistency models that let developers tune behavior according to application requirements. This flexibility is essential in real-world systems where different components may require different guarantees. For example, user-facing features may prioritize low latency and high availability, while financial or transactional systems may require stronger consistency guarantees even at the cost of increased latency.

Another defining characteristic of Cosmos DB is its approach to scalability. Instead of vertical scaling, which relies on increasing the capacity of a single machine, it uses horizontal scaling through partitioning and distributed resource allocation. This approach allows the system to handle massive datasets and high transaction volumes without being constrained by the limits of a single machine. When the partition key is chosen well, partitioning spreads data and requests evenly, which prevents hot partitions and enables parallel processing across multiple nodes; a key with few distinct values or skewed access concentrates load instead. This architectural decision aligns with the realities of modern cloud workloads, where demand can grow unpredictably and often exceeds the capacity of traditional single-node systems.
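How a partition key affects distribution can be illustrated with a small hash-partitioning sketch. It assumes a generic SHA-256 hash and a fixed count of four partitions; `partition_for` and `distribution` are hypothetical helpers, and Cosmos DB's actual hashing scheme and partition management are not modeled here.

```python
import hashlib
from collections import Counter
from typing import Iterable


def partition_for(key: str, partition_count: int) -> int:
    """Map a partition key value to a partition index using a stable hash."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partition_count


def distribution(keys: Iterable[str], partition_count: int) -> Counter:
    """Count how many items land on each partition."""
    return Counter(partition_for(key, partition_count) for key in keys)


PARTITIONS = 4  # assumed partition count for the illustration

# High-cardinality key: one distinct value per user.
by_user = distribution((f"user-{i}" for i in range(10_000)), PARTITIONS)

# Low-cardinality key: only two distinct values across all items.
by_country = distribution(
    ("US" if i % 2 else "DE" for i in range(10_000)), PARTITIONS
)
```

With one key value per user, items spread across all four partitions in roughly equal shares. With only two distinct values, at most two partitions receive any data, so the others sit idle while those two absorb the full load.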

The abstraction of infrastructure complexity is another important aspect that shapes the value proposition of Cosmos DB. Developers are no longer required to manage physical servers, replication topologies, or indexing strategies manually. Instead, these responsibilities are handled by the system itself, allowing development teams to focus on application logic and data modeling. This abstraction reduces operational overhead and accelerates development cycles, especially in environments where agility and rapid iteration are critical. It also reduces the risk of misconfiguration, which is a common source of performance and reliability issues in manually managed database systems.

Data modeling within Cosmos DB reflects a departure from traditional relational thinking. Instead of decomposing data into highly normalized structures, it encourages the use of self-contained documents that represent complete entities. This model reduces the need for complex joins and improves read performance, particularly in distributed environments where cross-node operations can introduce latency. However, this flexibility also places greater responsibility on developers to design efficient data structures that align with application access patterns. The effectiveness of a Cosmos DB implementation is often determined not by the platform itself but by how well the data model is aligned with usage behavior.
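As a rough illustration of a self-contained document, the sketch below folds rows from three hypothetical relational tables (orders, customers, and line items) into one order document. The field names, the use of integer cents for prices, and the `build_order_document` function are assumptions made for this example only.

```python
from typing import Any, Dict, List


def build_order_document(
    order: Dict[str, Any],
    customer: Dict[str, Any],
    line_items: List[Dict[str, Any]],
) -> Dict[str, Any]:
    """Embed the customer summary and line items inside a single order document."""
    items = [
        {
            "sku": item["sku"],
            "quantity": item["quantity"],
            "unitPriceCents": item["unit_price_cents"],
        }
        for item in line_items
        if item["order_id"] == order["order_id"]
    ]
    return {
        "id": str(order["order_id"]),
        "customerId": str(customer["customer_id"]),  # a candidate partition key
        "customerName": customer["name"],
        "items": items,
        "totalCents": sum(i["quantity"] * i["unitPriceCents"] for i in items),
    }


order_row = {"order_id": 1001, "customer_id": 7}
customer_row = {"customer_id": 7, "name": "Ada"}
line_item_rows = [
    {"order_id": 1001, "sku": "A-1", "quantity": 2, "unit_price_cents": 500},
    {"order_id": 1001, "sku": "B-2", "quantity": 1, "unit_price_cents": 1250},
    {"order_id": 1002, "sku": "C-3", "quantity": 5, "unit_price_cents": 100},
]

order_document = build_order_document(order_row, customer_row, line_item_rows)
```

Reading the order now takes a single document fetch instead of a three-table join. The cost is duplication: if the customer's name changes, every embedded copy has to be updated, which is why the model should follow the application's access patterns.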

Performance optimization in Cosmos DB is closely tied to indexing, partitioning, and query execution strategies. By default, automatic indexing makes every property queryable without manual configuration, which simplifies development but adds write and storage overhead that an indexing policy can trim for properties that are never queried. Queries that include the partition key can be routed to a single partition, while queries without it fan out across partitions, so partition-aware design keeps response times low even for large datasets. These mechanisms work together to keep performance consistent as data volume increases. However, achieving optimal performance still depends on thoughtful design decisions, particularly around partition key selection and query structure.

Global distribution is another core strength that defines Cosmos DB’s role in modern systems. By replicating data across multiple geographic regions, it enables applications to deliver low-latency experiences to users regardless of location. This capability is particularly important in globally distributed applications where user bases span continents. The ability to route requests to the nearest available data center reduces latency and improves responsiveness. Additionally, regional redundancy enhances system resilience by ensuring that failures in one region do not result in complete service disruption. This built-in redundancy is a critical feature for applications that require continuous availability.
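The routing and failover behavior described above can be sketched as picking the lowest-latency region that is currently healthy. This is a simplified model under stated assumptions: `pick_region` is a hypothetical function, the latency figures are invented, and the region names are used only as labels.

```python
from typing import Dict, Optional, Set


def pick_region(latency_ms: Dict[str, float], healthy: Set[str]) -> Optional[str]:
    """Return the healthy region with the lowest measured latency, if any."""
    candidates = {
        region: ms for region, ms in latency_ms.items() if region in healthy
    }
    if not candidates:
        return None  # no healthy region is available
    return min(candidates, key=candidates.get)


# Invented latencies as seen from a client in Europe.
latencies = {"westeurope": 18.0, "eastus": 95.0, "southeastasia": 210.0}

nearest = pick_region(latencies, healthy={"westeurope", "eastus", "southeastasia"})
after_outage = pick_region(latencies, healthy={"eastus", "southeastasia"})
```

When the nearest region is removed from the healthy set, the request falls through to the next-closest region instead of failing, which is the resilience benefit of regional redundancy.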

Consistency management in Cosmos DB introduces a nuanced approach to distributed data correctness. Instead of enforcing a single consistency model, it provides five levels (strong, bounded staleness, session, consistent prefix, and eventual) that trade read freshness against latency and availability. This flexibility acknowledges that different applications have different requirements and that no single consistency model is universally optimal. Strong consistency ensures that reads return the most recent committed write but may introduce latency, while eventual consistency improves performance at the cost of temporary data divergence. The intermediate levels provide balanced approaches that allow developers to fine-tune behavior based on specific operational needs.
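The staleness tradeoff can be made concrete with a toy two-replica store. This is a deliberately simplified model, not Cosmos DB's replication protocol: `ToyStore`, its methods, and the `max_lag` parameter are hypothetical, strong reads are modeled as reads from the up-to-date primary, and bounded staleness stands in for the intermediate models by tolerating a limited version lag.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Replica:
    value: Optional[str] = None
    version: int = 0


@dataclass
class ToyStore:
    primary: Replica = field(default_factory=Replica)
    secondary: Replica = field(default_factory=Replica)

    def write(self, value: str) -> None:
        """Apply a write to the primary only; the secondary lags until replicate()."""
        self.primary.version += 1
        self.primary.value = value

    def replicate(self) -> None:
        """Bring the secondary up to date with the primary."""
        self.secondary.value = self.primary.value
        self.secondary.version = self.primary.version

    def read(self, level: str, max_lag: int = 0) -> Optional[str]:
        lag = self.primary.version - self.secondary.version
        if level == "strong":
            return self.primary.value  # always the latest write
        if level == "bounded_staleness":
            # Serve from the secondary only if it is at most max_lag versions behind.
            return self.secondary.value if lag <= max_lag else self.primary.value
        if level == "eventual":
            return self.secondary.value  # may be stale
        raise ValueError(f"unknown consistency level: {level}")


store = ToyStore()
store.write("v1")
store.replicate()
store.write("v2")  # not yet replicated, so the secondary is one version behind
```

In this state a strong read returns `v2`, an eventual read returns the stale `v1`, and a bounded-staleness read returns `v1` when a lag of one version is tolerated but `v2` when no lag is allowed.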

From an enterprise perspective, Cosmos DB supports large-scale digital transformation initiatives by enabling the modernization of legacy systems and supporting cloud-native architectures. Its ability to integrate with distributed application frameworks makes it suitable for microservices-based systems, event-driven architectures, and real-time processing pipelines. Enterprises benefit from its scalability, global reach, and operational simplicity, which reduce the complexity of managing large-scale data infrastructure. This makes it a strategic component in environments where agility, resilience, and performance are essential business requirements.

Security and governance are embedded into the architecture, so that data protection is maintained across all layers of the system. Encryption, access control, and network isolation work together to safeguard information both in transit and at rest. These mechanisms are essential in distributed environments where data traverses multiple regions and systems. Integrating security into the core architecture reduces reliance on external security layers and supports consistent enforcement across all operations.

Ultimately, Cosmos DB reflects the evolution of database systems from static, centrally managed structures to dynamic, distributed platforms capable of supporting global-scale applications. It embodies principles that are increasingly important in modern software design, including elasticity, resilience, and adaptability. As application ecosystems continue to grow in complexity and geographic distribution, systems like Cosmos DB will play an increasingly central role in enabling scalable and responsive data infrastructure.