Servers and desktop computers share a surprising amount of internal architecture, which is why they often appear interchangeable at a glance. Both systems rely on a central processing unit to execute instructions, memory modules to handle active workloads, storage drives for persistent data, and network interfaces for communication. From a purely component-level perspective, the line between the two can seem blurred, especially as high-end desktop workstations increasingly adopt enterprise-grade hardware.

However, the distinction between these two systems is not defined by components alone but by operational intent and system design philosophy. A desktop computer is built to serve a single user at a time, prioritizing interactive responsiveness, application performance, and general-purpose usability. A server, in contrast, is designed to serve multiple clients simultaneously, delivering applications, data, and services over a network consistently and reliably.

This difference in purpose fundamentally shapes every aspect of system engineering, from hardware redundancy to thermal design and lifecycle expectations. While a desktop is optimized for user experience, a server is optimized for service continuity under sustained and often unpredictable workloads.

How Hardware Convergence Has Blurred Traditional Boundaries

Over the past decade, hardware convergence has significantly narrowed the gap between desktop and server systems. Modern desktop machines can include multi-core processors, large memory capacities, and high-speed solid-state storage that rival entry-level servers. Similarly, some servers utilize components that are not far removed from consumer-grade hardware, especially in cost-sensitive deployments.

This convergence is largely driven by advancements in semiconductor manufacturing and standardized component design. CPUs, memory modules, and storage devices are often produced using similar architectural principles regardless of whether they are intended for desktop or server use. As a result, raw computational capability is no longer the primary differentiator.

Despite this similarity, the way these components are assembled, validated, and deployed introduces critical differences. Servers undergo stricter validation processes, extended stress testing, and stricter quality assurance standards to ensure predictable behavior under continuous load conditions.

Reliability as the Core Engineering Principle in Server Design

Reliability is one of the most important characteristics that separates server systems from desktop computers. Servers are expected to operate continuously for extended periods, often measured in months or years, without shutdown. This requirement introduces a design philosophy centered around fault tolerance and redundancy.

One of the most visible examples of this philosophy is redundant power supply design. Many servers include dual power supply units that operate in parallel. If one power supply fails or is disconnected, the other immediately takes over without interrupting system operation. This eliminates a common single point of failure found in desktop systems.

Redundancy extends beyond power systems. Servers frequently include multiple network interfaces, allowing traffic to be rerouted automatically in case of network failure. This ensures that communication with external systems remains uninterrupted even during hardware degradation.

Memory systems also reflect this reliability-focused design. Many servers use error-correcting code memory, which can detect and correct single-bit memory errors in real time. This reduces the likelihood of system crashes caused by memory corruption, a feature that is typically absent in standard desktop configurations.

System Stability Under Continuous Workloads

Servers are engineered to maintain stability under continuous and often heavy workloads. Unlike desktop systems, which experience variable usage patterns depending on user activity, servers are expected to handle consistent processing demands from multiple users or applications simultaneously.

This requires careful thermal management and power regulation. Server components are often designed to operate efficiently at higher temperatures while maintaining performance stability. Cooling systems are also significantly more advanced, often using high-efficiency airflow designs, multiple fan arrays, and optimized chassis layouts to ensure heat dissipation.

In contrast, desktop systems typically rely on simpler cooling solutions designed for intermittent workloads. While high-performance desktops may include advanced cooling systems, they are not generally built for the same level of sustained operational intensity.

Maintainability and Remote Management Architecture

Another defining feature of server systems is maintainability, particularly through remote management capabilities. Servers are often deployed in environments where physical access is limited or impractical, such as data centers or secured infrastructure facilities.

To address this, servers include dedicated management modules that operate independently of the main operating system. These modules provide administrators with the ability to monitor hardware health, configure system settings, and perform recovery operations remotely. Even if the primary operating system becomes unresponsive, the management interface remains accessible.

This capability significantly enhances operational efficiency by reducing the need for physical intervention. It also enables proactive system monitoring, where potential hardware issues can be identified and addressed before they result in system failure.

Desktop systems generally do not include this level of hardware-level remote management. While remote software access is possible, it does not provide the same depth of control or independence from the operating system.

The Importance of System Density in Server Environments

Server systems are designed with physical space efficiency in mind. In enterprise environments, maximizing computing power per unit of physical space is essential for cost-effective infrastructure scaling. This has led to the development of compact server form factors that allow multiple systems to be installed within a single structured environment.

Instead of occupying large standalone footprints, servers are often deployed in vertically organized configurations. This allows organizations to consolidate computing resources into a single location, improving both physical efficiency and operational manageability.

High-density deployments reduce the complexity of power distribution systems and simplify cooling infrastructure. By centralizing computing resources, organizations can optimize environmental controls and reduce operational overhead.

However, increased density also introduces challenges such as heat concentration and noise generation. These factors must be carefully managed in professional environments to ensure system stability and workplace safety.

Quality Assurance and Component Selection in Server Systems

Servers typically use higher-grade components compared to standard desktop systems. This is not necessarily because desktop components are incapable of high performance, but because servers are expected to operate under stricter reliability requirements.

Server-grade components are often selected based on extended operational lifecycles and higher tolerance for continuous workloads. They undergo more rigorous testing procedures to ensure consistent performance under stress conditions.

For example, storage systems in servers are often designed with enhanced durability and error correction capabilities. Memory modules may include additional parity checks and correction mechanisms to prevent data corruption. These enhancements contribute to overall system stability and reduce the risk of unexpected failures.

Desktop systems prioritize cost efficiency and performance optimization for individual users, which results in different design trade-offs. While they can be highly powerful, they are not always engineered for the same level of continuous operational resilience.

Business-Driven Design Philosophy Behind Server Architecture

Server design is fundamentally influenced by business requirements rather than individual user needs. Organizations depend on servers to deliver critical services such as data storage, application hosting, communication systems, and cloud-based infrastructure.

This dependency requires servers to prioritize uptime, scalability, and predictable performance. Downtime in server environments can have significant operational and financial consequences, which is why redundancy and fault tolerance are central design principles.

Servers must also support multiple simultaneous connections and processes. This multi-user capability requires efficient resource scheduling and workload balancing to ensure consistent performance across all active sessions.

In addition, servers are designed to integrate seamlessly into larger IT ecosystems. This includes compatibility with virtualization platforms, storage networks, and centralized monitoring systems. The goal is to create a cohesive infrastructure that can scale efficiently as organizational needs grow.

Evolution of Server Deployment Models

Server systems are deployed in different physical configurations depending on organizational scale and infrastructure requirements. The two primary deployment models include standalone tower systems and structured rack-based systems.

Tower-based systems resemble traditional desktop towers but incorporate enterprise-grade hardware and reliability features. They are typically used in smaller environments where dedicated infrastructure is not required or available.

Rack-based systems are designed for integration into standardized mounting structures. These systems prioritize density, scalability, and centralized management. They are commonly used in data centers and large-scale enterprise environments where efficiency and organization are critical.

The choice between these models depends on factors such as scalability requirements, physical space availability, and operational complexity.

Standalone Server Systems in Small-Scale Environments

Tower servers are often deployed in environments where simplicity and ease of implementation are more important than large-scale scalability. These systems can operate independently without requiring specialized infrastructure such as server racks or advanced cooling systems.

Despite their simpler form factor, tower servers still incorporate many enterprise-level features such as redundant components and enhanced processing capabilities. They can support business applications, file storage systems, and basic virtualization workloads effectively.

Their standalone nature makes them suitable for branch offices, small businesses, and environments where IT infrastructure is limited. However, as computational demands increase, these systems may become less efficient compared to structured deployments.

Transition Toward Scalable Infrastructure Architectures

As organizations grow, their computing requirements often exceed the capabilities of standalone systems. This leads to a transition toward more structured infrastructure models that support scalability, redundancy, and centralized management.

Structured server environments allow for easier expansion by adding additional systems into existing frameworks. They also simplify maintenance operations and improve resource allocation efficiency across multiple systems.

This transition reflects the broader evolution of modern computing infrastructure, where flexibility, scalability, and operational efficiency are more important than isolated system performance.

How Server Hardware Architecture Differs in Practical Design

Server hardware architecture is built around a different set of priorities compared to desktop computing systems. While both rely on similar core components such as CPUs, RAM, storage drives, and network interfaces, the internal design of a server is structured to maximize uptime, service availability, and predictable performance under continuous load conditions.

A key difference lies in how resources are organized and accessed. Servers are designed to support concurrent access from multiple clients, meaning the system must efficiently allocate CPU cycles, memory bandwidth, and I/O throughput across many simultaneous requests. This requires not only higher hardware capacity but also optimized system-level coordination between components.

Another important architectural consideration is modularity. Server systems are frequently designed so that components can be replaced or upgraded without shutting down the entire system. This includes hot-swappable storage drives, replaceable power supplies, and modular fan assemblies. This modular approach is essential in environments where downtime must be minimized.

The Role of Redundancy in Server System Design

Redundancy is one of the most defining principles in server hardware engineering. Unlike desktop systems, where a single point of failure may result in system shutdown, servers are designed to tolerate hardware failures without interrupting service.

Power redundancy is commonly implemented through dual power supply units. Each power supply can independently support the server’s operational load. In normal conditions, both units may share the load, but if one fails, the remaining unit continues powering the system without interruption.

Network redundancy is also a critical feature. Servers often include multiple network interface cards that can operate in failover or load-balancing configurations. This ensures that network connectivity remains stable even if one interface or cable fails.

Storage redundancy is achieved through technologies such as RAID configurations, which distribute or mirror data across multiple drives. This protects against data loss and allows continued operation even if one or more storage devices fail.

Memory reliability is enhanced through error correction mechanisms that detect and fix data corruption in real time. This reduces system instability caused by memory faults and improves long-term reliability.

Server Cooling and Thermal Management Systems

Thermal management is a critical aspect of server design due to the high density of components and continuous operational workloads. Unlike desktop systems, which experience variable usage patterns, servers often operate at consistently high utilization levels.

To manage heat effectively, servers use high-efficiency airflow designs that direct cool air through the system in a controlled path. This is typically achieved using front-to-back airflow configurations, ensuring that cool air enters one side of the system and hot air exits the other.

Multiple high-speed fans are often used in server systems, and these fans are typically designed for redundancy. If one fan fails, others can increase speed to compensate and maintain safe operating temperatures.

In large-scale environments, servers are housed in temperature-controlled rooms where external cooling systems regulate ambient conditions. This ensures that heat generated by dense computing clusters does not affect performance or hardware lifespan.

Physical Form Factors and Their Engineering Purpose

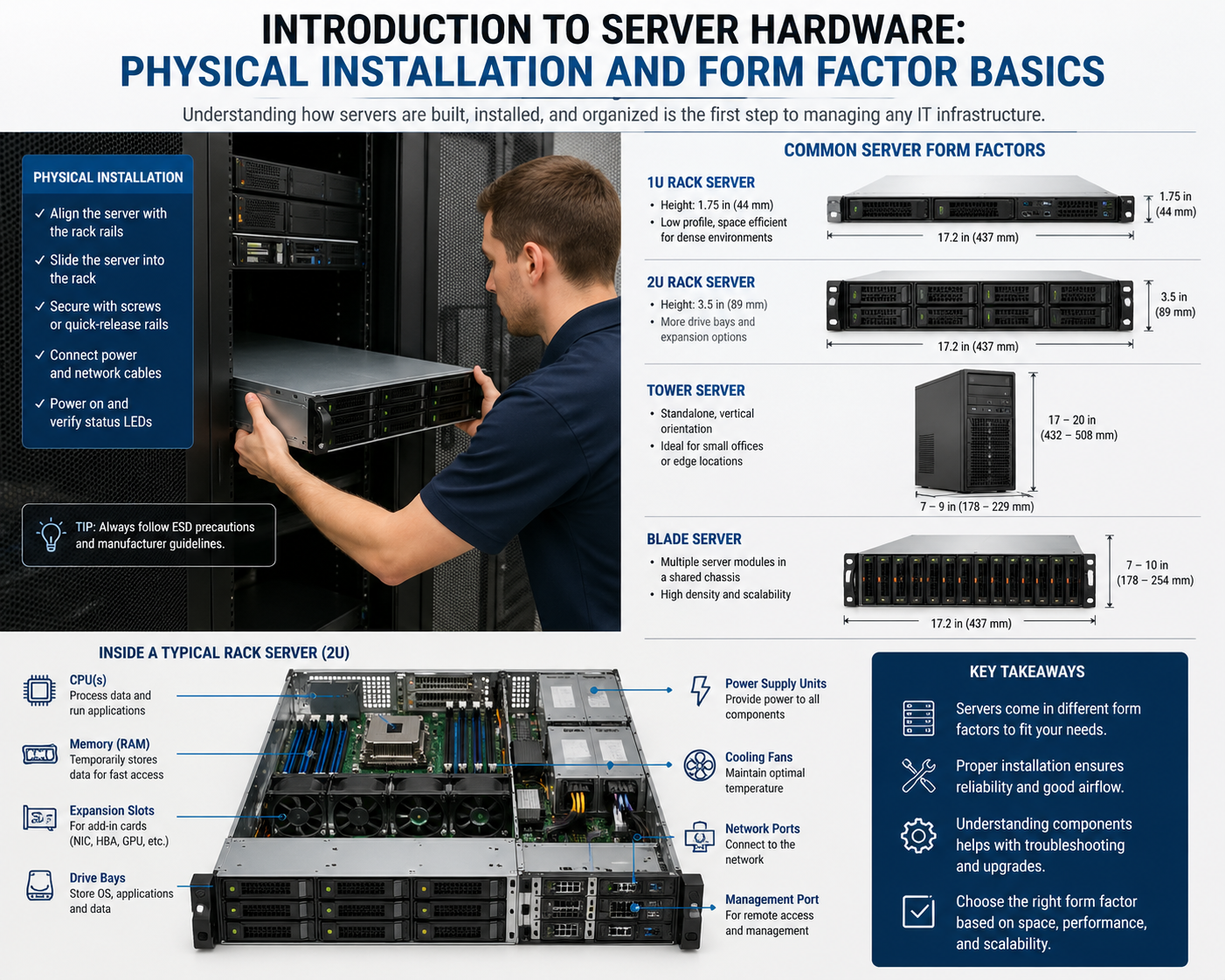

Server systems come in several physical form factors, each designed to meet specific deployment requirements. The most common categories include tower servers, rack-mounted servers, and blade systems.

Tower servers are standalone units that resemble traditional desktop towers but are built with enterprise-grade components. They are typically used in small-scale environments where dedicated server infrastructure is not available.

Rack-mounted servers are designed to be installed in standardized racks. These systems are optimized for space efficiency, allowing multiple servers to be stacked vertically within a single enclosure. This form factor is widely used in data centers due to its scalability and structured organization.

Blade systems represent a more advanced form factor where multiple server modules are housed within a shared chassis. Instead of each server having its own power supply and networking hardware, these resources are centralized in the chassis. Individual server modules, known as blades, share infrastructure resources, improving density and reducing cable complexity.

Understanding Rack-Mounted Server Architecture

Rack-mounted servers are one of the most widely used form factors in enterprise computing environments. They are designed to fit into standardized metal racks, allowing multiple systems to be installed in a vertically stacked configuration.

Each server is measured in units of height, commonly referred to as “U.” One unit represents a standardized vertical measurement used in rack design. Common server sizes include 1U, 2U, and 4U configurations, with larger units offering more internal space for storage devices, expansion cards, or specialized processing components.

Rack-mounted systems are designed for efficient airflow and cable management. The standardized structure of racks allows for organized routing of power and network cables, reducing clutter and improving maintenance efficiency.

One of the key advantages of rack systems is serviceability. Most rack-mounted servers are installed on sliding rails, allowing technicians to extend the server out of the rack for maintenance without fully removing it. This significantly simplifies hardware replacement and inspection procedures.

Blade Server Architecture and Shared Resource Design

Blade servers represent a more compact and highly integrated approach to server deployment. Instead of individual servers operating independently, blade systems use a shared chassis that provides power, cooling, and networking for multiple server modules.

Each blade is a modular computing unit that can be inserted into or removed from the chassis without affecting other blades. This modular design allows for flexible scaling, as additional blades can be added as computing demands increase.

The shared infrastructure model reduces physical space requirements and simplifies cable management. Since power and networking are centralized, the overall system becomes more efficient in terms of physical organization and energy distribution.

However, blade systems often rely on specialized hardware and proprietary designs, which can increase initial costs and reduce flexibility when compared to traditional rack-mounted systems.

Storage Architecture in Server Systems

Storage design in servers is significantly more complex than in desktop systems due to the need for reliability, scalability, and performance under continuous access.

Servers often use multiple storage drives configured in arrays to provide redundancy and performance optimization. These configurations allow data to be distributed or mirrored across multiple disks, reducing the risk of data loss.

Enterprise-grade storage systems may also include hot-swappable drives, allowing failed drives to be replaced without shutting down the system. This ensures the continuous availability of data and services.

In advanced environments, storage systems may be separated from compute systems entirely, forming dedicated storage networks. This allows multiple servers to access centralized storage resources, improving efficiency and simplifying data management.

Network Interface Design in Server Systems

Servers rely heavily on network connectivity, and as a result, their network interface design is more advanced than that of typical desktop systems.

Multiple network interfaces are often used to provide redundancy and load balancing. This ensures that if one network connection fails, traffic can be automatically rerouted through another interface.

In high-performance environments, servers may also use high-speed networking technologies that support large data transfer rates and low-latency communication between systems.

Network interface design is closely integrated with system architecture to ensure consistent communication performance, especially in environments where servers must handle large volumes of simultaneous requests.

Power Management and Electrical Efficiency in Servers

Power management is another critical aspect of server architecture. Servers are designed to operate continuously, which requires efficient power distribution and management systems.

Redundant power supplies ensure that an electrical failure in one unit does not disrupt system operation. In many cases, power supplies are also designed to be hot-swappable, allowing replacement without shutting down the system.

Power efficiency is also an important consideration, especially in large-scale data centers where thousands of servers operate simultaneously. Efficient power usage reduces operational costs and minimizes thermal output.

Advanced power management systems monitor energy consumption in real time and adjust system performance based on workload demand, improving overall efficiency.

Scalability and Infrastructure Expansion Models

One of the key advantages of server systems is scalability. Unlike desktop systems, which are typically limited to single-user performance scaling, servers are designed to grow alongside organizational needs.

Scalability can be achieved by adding more servers to an existing infrastructure, upgrading individual components, or implementing distributed computing models where workloads are shared across multiple systems.

This flexibility allows organizations to adapt their computing resources dynamically, ensuring that performance remains consistent even as demand increases.

Scalable infrastructure design is essential for modern computing environments, particularly in cloud-based systems and enterprise-level applications.

Maintenance Strategies in Server Environments

Server maintenance is structured around minimizing downtime and ensuring continuous availability. This includes proactive monitoring, scheduled maintenance windows, and predictive hardware analysis.

Monitoring systems track performance metrics such as CPU usage, memory utilization, disk health, and network traffic. These metrics are analyzed to detect potential issues before they result in system failure.

Predictive maintenance techniques use historical data to identify patterns that may indicate hardware degradation. This allows administrators to replace components before they fail, reducing the risk of unexpected downtime.

Maintenance procedures are often designed to be non-disruptive, meaning that systems can remain operational during hardware replacement or upgrades.

Deployment Environments and Physical Infrastructure Planning

Server deployment environments are carefully designed to support operational efficiency and hardware stability. This includes considerations such as airflow management, power distribution, physical security, and spatial organization.

Servers are typically installed in controlled environments where temperature and humidity are regulated. This helps maintain hardware longevity and ensures stable performance.

Physical layout planning also plays a key role in infrastructure design. Proper spacing between server racks, organized cable routing, and structured power delivery systems all contribute to efficient operation.

As infrastructure scales, these design considerations become increasingly important for maintaining system reliability and manageability.

Enterprise Evolution of Server Infrastructure and System Design Philosophy

As computing demands scale beyond small office environments, server infrastructure evolves from simple standalone systems into highly engineered enterprise ecosystems. At this level, the distinction between server and desktop computing becomes far more pronounced, not in terms of visible hardware alone but in how systems are designed to operate as part of a coordinated computing fabric.

Enterprise server infrastructure is not built around isolated machines but around interconnected systems designed to distribute workloads, ensure fault tolerance, and maintain continuous availability. The design philosophy prioritizes service delivery rather than individual machine performance. Each server becomes a node in a larger ecosystem where compute, storage, and networking resources are abstracted and managed collectively.

This shift introduces architectural complexity, but it also enables scalability at a level that standalone systems cannot achieve. Enterprise environments rely on structured deployment models, redundant infrastructure layers, and centralized orchestration systems to maintain operational consistency across large-scale computing environments.

Blade Server Systems and High-Density Computing Architecture

Blade server architecture represents one of the most advanced physical deployment models in enterprise computing. Instead of operating as fully independent machines, blade servers function as modular computing units housed within a shared enclosure known as a chassis.

This chassis provides centralized power distribution, cooling systems, and networking infrastructure. Individual blade modules contain only essential computing components such as processors, memory, and minimal local storage. By removing redundant infrastructure components from each server, blade systems achieve significantly higher density compared to traditional rack-mounted systems.

The primary advantage of blade architecture is space efficiency. A single chassis can house multiple compute nodes within a compact footprint, dramatically increasing computational density per rack unit. This is particularly valuable in data centers where physical space and power efficiency directly impact operational costs.

However, this architecture also introduces dependency on shared infrastructure. Since blades rely on the chassis for power and networking, failures at the chassis level can affect multiple compute nodes simultaneously. This trade-off between density and dependency is a critical consideration in system design.

Shared Resource Models in Blade Enclosures

In blade environments, shared resource management is a fundamental design principle. Instead of each server independently managing its own power supply and network interfaces, these functions are centralized within the chassis.

Power distribution units within the chassis supply electricity to all blade modules. This centralized model simplifies cabling and reduces redundancy overhead. Similarly, networking is often handled through integrated switches within the chassis, allowing blades to communicate internally without external cabling.

Cooling systems are also shared across all blades, typically using high-efficiency fan arrays that regulate airflow across the entire enclosure. This unified cooling strategy ensures consistent thermal management but also requires careful load balancing to prevent localized overheating.

The shared infrastructure model improves efficiency but requires highly reliable chassis-level components, as failures in these systems can impact multiple compute nodes simultaneously.

Advanced Rack Infrastructure and Data Center Standardization

Rack-mounted server systems remain the backbone of enterprise data center architecture. However, in advanced deployments, racks are no longer simple physical mounting structures but highly engineered frameworks designed for power distribution, cooling optimization, and cable management.

Standardization plays a crucial role in rack infrastructure design. Servers are built to conform to specific height measurements known as rack units, allowing predictable physical planning and scalable deployment. This standardization enables data centers to mix and match hardware from different vendors while maintaining structural compatibility.

Rack systems are designed with airflow efficiency in mind. Cold air is typically delivered through the front of the rack and expelled through the rear, creating a consistent thermal flow pattern across all installed systems. This reduces heat buildup and improves cooling efficiency.

Cable management systems within racks are equally important. Structured cabling channels reduce physical clutter and ensure that network and power connections remain organized, which simplifies maintenance and reduces the risk of human error during hardware servicing.

Server Virtualization and Resource Abstraction Layers

Modern server environments rely heavily on virtualization technologies that abstract physical hardware into logical computing resources. This allows multiple virtual machines to run on a single physical server, each operating as an independent computing environment.

Virtualization introduces a layer of flexibility that significantly enhances resource utilization. Instead of dedicating a physical server to a single application, multiple workloads can share the same underlying hardware while remaining logically isolated.

This abstraction is managed by a hypervisor layer that controls access to physical resources such as CPU cycles, memory allocation, and storage I/O. The hypervisor ensures that each virtual machine operates independently without interfering with others.

Virtualization also simplifies infrastructure scaling. New computing environments can be deployed as virtual instances without requiring additional physical hardware, reducing both deployment time and operational complexity.

High-Availability Architectures and Fault-Tolerant Systems

Enterprise server systems are designed with high availability as a core objective. This means that systems are engineered to remain operational even in the presence of hardware or software failures.

High availability is achieved through multiple layers of redundancy and failover mechanisms. At the hardware level, redundant power supplies, network interfaces, and storage systems ensure that individual component failures do not disrupt overall system operation.

At the software level, clustering technologies allow multiple servers to operate as a unified system. If one server fails, workloads are automatically redistributed to other active nodes within the cluster.

This combination of hardware and software redundancy creates a fault-tolerant environment capable of maintaining continuous service availability even under adverse conditions.

Enterprise Storage Systems and Distributed Data Architectures

Storage systems in enterprise environments are significantly more complex than those found in desktop systems. Instead of relying on a single storage device, enterprise systems use distributed storage architectures that span multiple physical devices and sometimes multiple geographic locations.

These systems are designed to ensure data durability, availability, and scalability. Data is often replicated across multiple storage nodes to protect against hardware failure. In some cases, data is also distributed across multiple locations to provide disaster recovery capabilities.

Storage area networks are commonly used to decouple storage resources from compute systems. This allows multiple servers to access centralized storage pools, improving efficiency and simplifying data management.

Advanced storage systems also incorporate caching mechanisms and tiered storage models that optimize performance by placing frequently accessed data on faster storage media.

Power Distribution and Energy Optimization in Large-Scale Environments

Power management in enterprise server environments is a critical operational consideration. Large data centers consume significant amounts of electrical power, making efficiency a key design priority.

Power distribution systems are designed to provide a stable and redundant electrical supply to all server components. This includes uninterruptible power supplies that provide backup power during outages and power distribution units that monitor and regulate energy usage.

Energy optimization strategies include dynamic workload balancing, where computing tasks are distributed based on energy efficiency considerations. Servers may also adjust processing speeds based on workload demand to reduce power consumption during periods of low utilization.

These strategies help reduce operational costs while maintaining system performance and reliability.

Cooling Infrastructure and Environmental Control Systems

Cooling systems in enterprise environments are highly engineered to manage the heat generated by dense computing clusters. Unlike standard office environments, data centers require specialized cooling solutions to maintain stable operating temperatures.

Airflow management is carefully designed to ensure consistent temperature distribution across all server racks. Hot and cold aisle configurations are commonly used to separate incoming cool air from outgoing hot air, improving cooling efficiency.

In some advanced environments, liquid cooling systems are used to dissipate heat more efficiently than traditional air-based systems. These systems can be integrated directly into server hardware or deployed at the rack level.

Environmental monitoring systems continuously track temperature, humidity, and airflow to ensure optimal operating conditions.

Network Architecture and High-Speed Interconnect Systems

Server environments rely heavily on high-speed networking to facilitate communication between systems. Network architecture is designed to support large volumes of data transfer with minimal latency.

Multiple network layers are often implemented to separate different types of traffic, such as storage communication, application data, and management traffic. This segmentation improves performance and reduces congestion.

High-speed interconnect technologies are used to connect servers within clusters, enabling rapid data exchange between compute nodes. These interconnects are critical for distributed computing applications where tasks are split across multiple systems.

Network redundancy is also essential, ensuring that communication paths remain available even if individual network components fail.

Security Architecture in Server Environments

Security in server systems is implemented at multiple layers, including physical, network, and application levels. Physical security ensures that unauthorized access to hardware is prevented through controlled access environments.

Network security mechanisms protect data in transit and prevent unauthorized access to systems. This includes segmentation, encryption, and traffic monitoring systems.

At the system level, authentication and authorization mechanisms ensure that only approved users and processes can access specific resources.

Security architecture is designed to be integrated into the overall system infrastructure rather than functioning as an external layer.

Lifecycle Management and Hardware Refresh Cycles

Server systems operate within defined lifecycle management frameworks that determine when hardware should be upgraded or replaced. Unlike desktop systems, which may be replaced based on user preference, server hardware replacement is typically based on performance metrics, reliability thresholds, and operational efficiency.

Lifecycle management includes monitoring hardware performance over time, predicting potential failures, and planning upgrades to maintain system efficiency.

Hardware refresh cycles ensure that infrastructure remains aligned with evolving performance requirements and technological advancements.

Operational Scalability and Distributed Computing Models

Scalability in server environments is achieved through distributed computing models that allow workloads to be spread across multiple systems. This approach enables organizations to handle increasing demand without relying on a single system.

Distributed architectures improve resilience, as workloads can be rerouted in the event of system failure. They also enable horizontal scaling, where additional servers are added to increase overall capacity.

This model is fundamental to modern computing environments, particularly in cloud-based and large-scale enterprise systems.

Integration of Server Systems into Modern Digital Infrastructure

Server systems are deeply integrated into modern digital infrastructure, supporting applications ranging from enterprise software to cloud services and data analytics platforms.

This integration requires compatibility with a wide range of technologies, including virtualization platforms, storage networks, and orchestration systems. Servers function as foundational components within these ecosystems, enabling digital services to operate at scale.

The role of servers continues to evolve as computing demands increase, but their core function as reliable, scalable, and centrally managed computing resources remains consistent.

Conclusion

Servers and desktop computers may share visible similarities at the hardware level, but their underlying purpose, engineering priorities, and operational expectations place them in fundamentally different categories of computing infrastructure. Across modern IT environments, this distinction becomes increasingly important as organizations scale from individual systems to complex distributed architectures that support continuous service delivery.

At the most basic level, both servers and desktops rely on the same foundational computing principles. They use processors to execute instructions, memory to handle active workloads, storage systems to retain data, and network interfaces to communicate with other systems. This shared foundation often leads to the misconception that they are interchangeable. In reality, the way these components are designed, validated, and deployed determines whether a system functions as a personal workstation or as an enterprise-grade service platform.

The defining characteristic of server systems is not raw performance but operational resilience. Servers are engineered to operate continuously under sustained load, often serving multiple users or applications at the same time. This requirement introduces a design philosophy centered on reliability, redundancy, and fault tolerance. While a desktop system may prioritize user experience and cost efficiency, a server prioritizes uninterrupted service delivery under all conditions.

Redundancy is one of the most critical elements that distinguishes server architecture. Power systems are commonly duplicated so that a failure in one unit does not interrupt operation. Network interfaces are often multiplied to ensure continuous connectivity even if one communication path becomes unavailable. Storage systems are frequently configured in redundant arrays to protect against data loss. Even memory systems may include error correction mechanisms that automatically detect and repair data corruption. These layers of redundancy collectively ensure that servers can withstand hardware failures without compromising system availability.

Maintainability is another key differentiator. Server environments are designed for management at scale, often in locations where physical access is limited or impractical. Remote management capabilities allow administrators to monitor system health, configure hardware settings, and perform recovery operations without direct interaction with the machine. This ability to manage infrastructure remotely is essential in large-scale environments where systems may be distributed across multiple facilities or geographic regions.

In contrast, desktop systems are designed for direct user interaction. While remote access tools exist, they do not provide the same depth of hardware-level control or independence from the operating system. This difference reflects the distinct operational roles of the two system types. A desktop supports individual productivity, while a server supports continuous service delivery.

Physical design also plays a significant role in differentiating servers from desktops. Server systems are optimized for space efficiency and density. In enterprise environments, computing resources must be maximized within limited physical infrastructure. This has led to the development of compact server designs that allow multiple systems to be installed within structured environments such as racks or enclosures.

Rack-based systems are particularly important in modern data centers. They allow servers to be organized in standardized vertical structures, enabling efficient use of space and simplified infrastructure management. These systems are designed with serviceability in mind, allowing individual units to be accessed, replaced, or maintained without disrupting surrounding systems. The standardized measurement of server height ensures compatibility across different hardware manufacturers and simplifies infrastructure planning.

Blade systems take this concept further by centralizing shared resources within a single chassis. Instead of each server containing its own power and networking components, these functions are provided by the chassis itself. Individual compute modules operate as blades that can be inserted or removed as needed. This architecture significantly increases computing density and reduces physical complexity, but it also introduces dependency on shared infrastructure components.

Across all server form factors, the emphasis remains consistent: maximize efficiency, maintain reliability, and ensure scalability. These principles guide not only physical design but also system architecture and operational strategy. Servers are not isolated machines but interconnected components within a larger computing ecosystem.

Storage and networking systems further highlight the difference in design philosophy. Servers often rely on distributed storage architectures that span multiple devices or even multiple locations. This ensures data durability and availability even in the event of hardware failure. Network systems are similarly designed for redundancy and high throughput, supporting large volumes of simultaneous communication between systems.

Power and thermal management are also critical considerations in server environments. Continuous operation generates significant heat, requiring advanced cooling systems and controlled environmental conditions. Airflow design, temperature regulation, and energy efficiency all play essential roles in maintaining system stability. Unlike desktop environments, where cooling is typically designed for intermittent usage, server cooling systems must operate continuously under high load conditions.

Scalability is one of the most important advantages of server-based infrastructure. Unlike desktop systems, which are limited to individual performance scaling, server environments are designed to expand horizontally. Additional systems can be integrated into existing infrastructure to increase capacity without disrupting ongoing operations. This scalability is essential for modern computing environments where demand can grow rapidly and unpredictably.

Virtualization has further transformed the role of server systems by introducing abstraction layers that separate physical hardware from logical computing environments. This allows multiple virtual systems to operate on a single physical machine, improving resource utilization and operational flexibility. Workloads can be dynamically allocated, migrated, or scaled based on demand, enabling highly efficient use of infrastructure resources.

High availability architectures ensure that services remain operational even in the presence of system failures. Through clustering, failover mechanisms, and distributed workloads, server environments are designed to tolerate disruptions without affecting end users. This level of resilience is critical in environments where downtime can have significant operational or financial consequences.

Security is also deeply integrated into server design. Protection mechanisms operate across physical, network, and system levels to ensure that unauthorized access is prevented and data integrity is maintained. Unlike desktop systems, where security is often user-centric, server security is infrastructure-centric, focusing on protecting shared resources and services.

As computing demands continue to grow, server systems have become the foundation of modern digital infrastructure. They support everything from enterprise applications and cloud platforms to data analytics and distributed computing environments. Their role extends far beyond individual machines, forming the backbone of interconnected systems that power modern technology ecosystems.

Ultimately, the distinction between servers and desktop computers is defined not by their components but by their purpose. Desktops are designed for individual interaction and productivity, while servers are designed for continuous service delivery, scalability, and reliability at scale. This fundamental difference shapes every aspect of their design, from internal architecture to physical deployment and operational management.

Understanding this distinction provides a clearer perspective on how modern computing environments are structured and why server systems remain essential to the functioning of digital infrastructure.