In contemporary IT environments, system failure is not an exception but a recurring operational reality shaped by complexity, interdependence, and constant change. Applications now rely on distributed architectures, virtualized infrastructure, and interconnected services that span multiple availability zones and sometimes multiple geographic regions. This interdependence increases efficiency and scalability but also expands the surface area of potential failure. Hardware degradation, misconfigurations, software bugs, network interruptions, security incidents, and human operational errors all contribute to service instability.

Cloud-based infrastructure introduces mechanisms to reduce the impact of these failures, but it does not eliminate them. Instead, it shifts the responsibility from physical recovery to architectural resilience. Disaster recovery becomes a structured discipline focused on restoring systems within acceptable operational boundaries rather than preventing failure entirely. The objective is to maintain continuity, protect data integrity, and ensure that business functions can resume with controlled disruption.

Within cloud architecture, disaster recovery is implemented through layered strategies that define how systems behave during normal operation, partial degradation, and complete outage scenarios. These strategies are not uniform; they are selected based on workload criticality, financial tolerance, and acceptable recovery thresholds defined by the organization.

Defining Recovery Objectives and Their Role in Cloud Architecture Design

Two primary metrics govern disaster recovery planning: Recovery Point Objective and Recovery Time Objective. These parameters define the acceptable limits of data loss and system downtime, and they directly influence infrastructure design choices.

Recovery Point Objective represents the maximum acceptable amount of data loss measured in time. It defines how frequently data must be captured or synchronized to ensure that the system can be restored to a recent state without significant loss of transactional integrity. A lower recovery point objective requires more frequent backups or continuous replication, while a higher value allows longer intervals between data capture operations.

Recovery Time Objective represents the maximum acceptable duration required to restore system functionality after a disruption. It defines how quickly infrastructure, applications, and services must be brought back online. A shorter recovery time objective requires pre-provisioned systems, automated failover mechanisms, and highly optimized recovery workflows, while longer objectives allow for manual intervention and slower restoration processes.

These two metrics are deeply interconnected. Systems designed for near-zero downtime typically require continuous replication and fully redundant environments, which significantly increase cost and complexity. Conversely, systems with relaxed objectives prioritize cost efficiency over speed, relying on delayed recovery processes that may involve manual intervention.

Strategic Role of Business Impact Analysis in Recovery Planning

Before selecting a disaster recovery model, organizations conduct structured assessments known as business impact analysis. This evaluation identifies critical business functions and quantifies the consequences of their disruption. It serves as the foundation for aligning technical recovery strategies with business priorities.

Business impact analysis examines multiple dimensions of operational dependency. It evaluates financial loss associated with downtime, reputational damage resulting from service unavailability, regulatory and compliance implications, and downstream effects on customers and internal processes. It also categorizes applications based on criticality, distinguishing between mission-critical systems, business-essential systems, and non-essential workloads.

Mission-critical systems typically require aggressive recovery objectives with minimal downtime tolerance. Business-essential systems may allow short interruptions, while non-essential systems can operate under extended recovery windows. This classification enables infrastructure architects to allocate resources efficiently and design recovery systems that reflect actual business needs rather than uniform technical standards.

Overview of Cloud-Based Disaster Recovery Models

Cloud environments typically implement disaster recovery through a tiered set of architectural patterns. These patterns vary in complexity, cost, and recovery speed, allowing organizations to select models that align with their operational requirements.

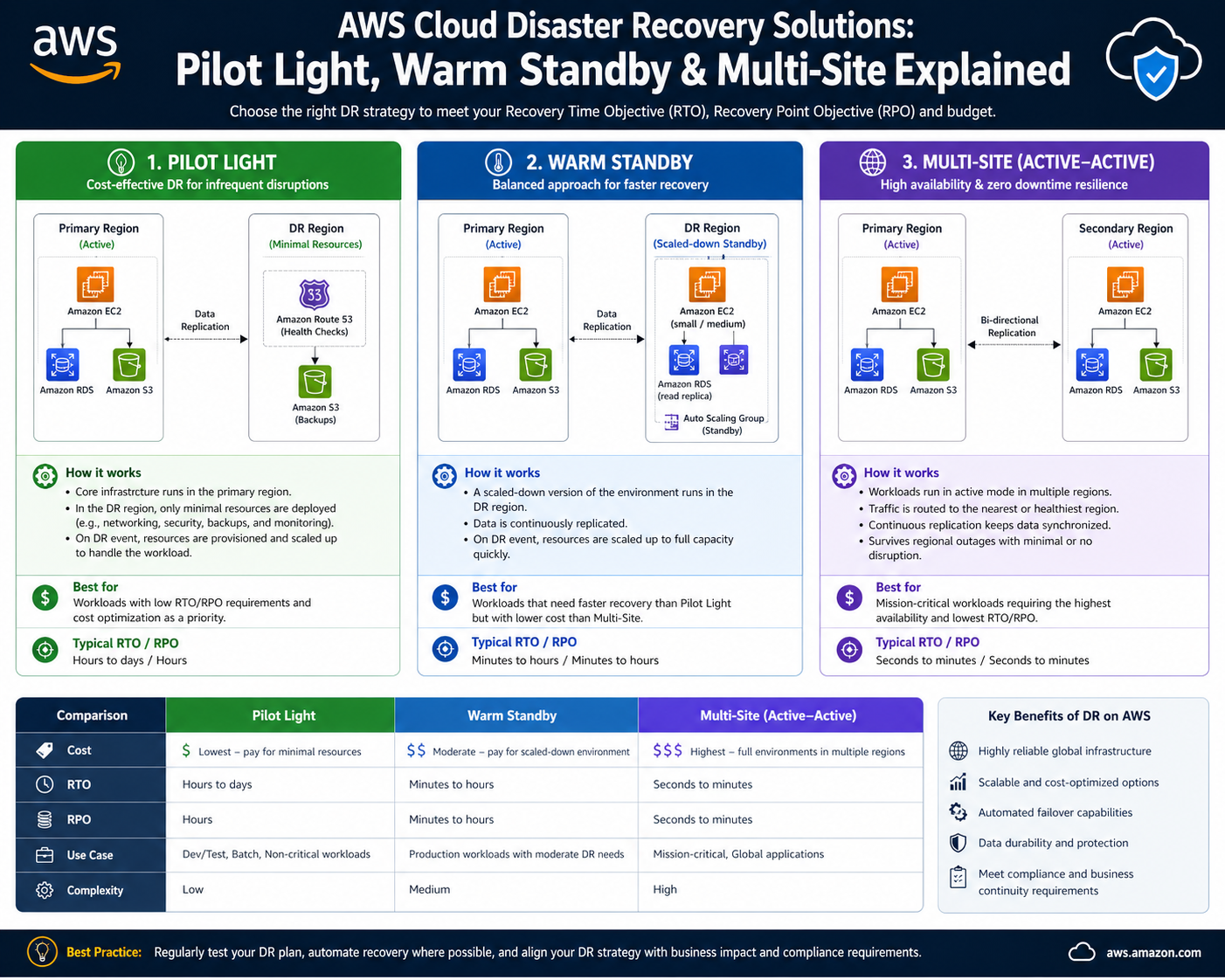

The primary disaster recovery models include backup and restore systems, pilot light architectures, warm standby configurations, and multi-site active-active deployments. Each model represents a progressive increase in system readiness and operational redundancy.

At the foundational level, backup and restore focus on data preservation with delayed recovery. Pilot light introduces minimal always-on infrastructure for faster activation. Warm standby maintains a scaled-down but continuously operational environment. Multi-site architectures replicate full production environments across multiple locations to achieve near-instantaneous failover.

These models are not mutually exclusive. Large organizations often implement multiple recovery strategies across different workloads depending on their importance and performance requirements.

Backup and Restore as a Foundational Recovery Strategy

Backup and restore represent the simplest form of disaster recovery. It is widely used for systems where cost efficiency is prioritized over rapid recovery. In this model, system data is periodically captured and stored in durable storage systems. In the event of a failure, infrastructure is rebuilt, and data is restored from the most recent backup.

This approach focuses primarily on data preservation rather than continuous availability. It assumes that system downtime is acceptable for a defined period while recovery processes are executed. Because of this, it is commonly applied to non-critical applications, archival systems, and environments with low operational urgency.

The primary advantage of this model is its simplicity and cost efficiency. It does not require continuously running redundant infrastructure, which significantly reduces operational expenses. However, it introduces longer recovery times since systems must be reconstructed after failure.

Durable Storage Systems and Data Protection Principles

Backup systems rely on highly durable storage architectures designed to preserve data across hardware failures, regional disruptions, and operational anomalies. These storage systems typically distribute data across multiple physical devices and locations, ensuring redundancy at the infrastructure level.

Data durability is achieved through replication mechanisms that maintain multiple copies of stored information. Even if individual components fail, data remains accessible through redundant nodes. This design ensures that backups remain intact over long periods, supporting long-term retention and recovery requirements.

Storage systems are also optimized for lifecycle management. Data may transition between storage tiers based on access frequency, with frequently used backups stored in higher-performance tiers and older backups moved to cost-optimized archival layers. This hierarchical storage approach balances performance requirements with cost efficiency.

Large-Scale Data Migration and Cloud Ingestion Mechanisms

Organizations dealing with large datasets often encounter challenges when transferring data to cloud-based storage systems. Traditional network-based transfer methods may be insufficient for multi-terabyte or petabyte-scale datasets due to bandwidth limitations and extended transfer durations.

To address this, physical data transfer mechanisms are used to securely transport large volumes of data into cloud environments. These systems enable organizations to load data onto secure storage devices that are then transported to processing facilities, where the data is ingested into a scalable storage infrastructure.

This method significantly reduces migration time compared to network-based transfers and ensures secure handling of sensitive data during transit. Once ingested, the data becomes part of the organization’s backup ecosystem and can be used for restoration in disaster scenarios.

Hybrid Backup Systems and Continuous Synchronization Models

Modern disaster recovery architectures often extend beyond traditional backup models by incorporating hybrid systems that connect on-premise infrastructure with cloud storage environments. These systems enable continuous or scheduled synchronization of data between local systems and remote storage locations.

This approach reduces the gap between production data and backup copies, ensuring that cloud-based recovery points remain relatively current. File-level replication systems allow changes in shared repositories to be automatically reflected in cloud storage environments, reducing the risk of data inconsistency during recovery.

Hybrid models are particularly useful for organizations transitioning from legacy infrastructure to cloud-native environments, as they provide incremental adoption paths without requiring immediate full migration.

Operational Constraints and Limitations of Basic Recovery Models

While backup and restore systems provide a foundational level of disaster recovery, they are limited in their ability to support rapid service restoration. Because infrastructure must be rebuilt after a failure event, recovery time depends heavily on provisioning speed, configuration complexity, and data restoration duration.

Manual intervention may also be required during recovery, introducing additional delays and increasing the risk of inconsistencies. These limitations make the model unsuitable for high-availability systems that require continuous uptime or minimal interruption.

As operational requirements become more demanding, organizations often evolve toward more advanced recovery models that reduce dependency on full system reconstruction. These models introduce pre-configured infrastructure components and partially active environments that accelerate recovery processes.

Progression Toward Advanced Recovery Architectures

As system criticality increases, disaster recovery strategies evolve from purely reactive models toward proactive resilience frameworks. Instead of relying solely on stored backups, advanced architectures maintain partially active systems that can be rapidly scaled or activated during failure scenarios.

This progression reflects a shift in architectural philosophy, where systems are designed not only to recover from failure but to anticipate it through continuous readiness. This transition forms the foundation for intermediate and high-availability recovery models that significantly reduce downtime while maintaining cost efficiency relative to fully redundant systems.

Transition from Basic Recovery to Intermediate Disaster Recovery Models in AWS

As cloud adoption matures, organizations often move beyond simple backup-based recovery into more responsive architectures that reduce downtime without fully duplicating production environments. This shift is driven by increasing dependence on digital services, tighter operational requirements, and the need for faster service restoration. Intermediate disaster recovery models bridge the gap between low-cost backup systems and high-cost fully redundant deployments.

In these architectures, systems are no longer treated as static datasets awaiting restoration. Instead, they incorporate always-available components that maintain a minimal operational footprint in the cloud. These components ensure that recovery is not a full rebuild process but rather a controlled scale-up or activation process. This reduces recovery time significantly while keeping infrastructure costs manageable compared to full duplication strategies.

The two most prominent intermediate models in cloud-based disaster recovery are Pilot Light and Warm Standby. These models progressively increase system readiness, availability of services, and automation depth. They also introduce more sophisticated synchronization mechanisms and infrastructure orchestration techniques that allow faster response during failure events.

Pilot Light Architecture and the Concept of Minimal Always-On Infrastructure

Pilot Light represents a disaster recovery strategy where only the essential components of a system remain continuously active in the cloud. The term is derived from the idea of a small flame that remains lit and can be used to ignite a larger system when needed. In cloud architecture, this translates into maintaining only the core data layer and minimal compute resources in an always-on state.

The primary objective of Pilot Light is to ensure that critical data is continuously replicated and that foundational services are ready for rapid expansion. Typically, databases or storage systems remain active and synchronized with production data, while compute resources remain either stopped or minimally provisioned. This ensures that recovery does not require data reconstruction, only infrastructure scaling and service activation.

This model is particularly effective for workloads where data integrity is critical but full-time compute availability is not required. It provides a balance between cost efficiency and recovery speed, making it suitable for systems that can tolerate short recovery windows but cannot afford extended downtime associated with full rebuild processes.

Core Components of Pilot Light Environments in Cloud Systems

Pilot Light architectures rely on a small set of continuously running components that serve as the foundation for recovery operations. The most critical of these is the data layer, which remains synchronized with production environments. This ensures that when recovery is initiated, the system can immediately operate on up-to-date information without requiring data restoration procedures.

In addition to data replication, minimal compute infrastructure is often maintained in a dormant or lightly loaded state. These compute components are preconfigured with necessary application dependencies, operating system settings, and runtime configurations. While they may not actively handle production traffic, they are prepared to scale rapidly when triggered.

Networking components such as routing configurations, security policies, and identity access controls are also predefined to support rapid activation. This reduces the need for manual setup during recovery, allowing systems to transition more smoothly from standby to active state.

Activation Mechanisms and Automated Recovery Triggers

Pilot Light systems often rely on automated monitoring and triggering mechanisms to detect failures and initiate recovery procedures. These systems continuously monitor application health, service availability, and infrastructure performance indicators. When a failure threshold is detected, automated workflows are triggered to initiate scaling and activation processes.

These workflows may include provisioning additional compute instances, scaling application services, redirecting traffic, and enabling load balancing mechanisms. Automation is essential in this model because manual intervention would significantly increase recovery time and reduce the effectiveness of the architecture.

Event-driven systems play a critical role in enabling this automation. Monitoring tools generate alerts that are processed by orchestration services, which then execute predefined recovery scripts. This reduces human dependency and ensures consistent execution of recovery procedures under pressure conditions.

Recovery Performance Characteristics of Pilot Light Systems

Pilot Light architectures significantly improve recovery time compared to basic backup systems. Since core data and minimal infrastructure are already active, recovery does not require full system reconstruction. Instead, the system focuses on scaling pre-existing components and activating additional services.

Recovery time is typically measured in minutes to tens of minutes, depending on system complexity and scaling requirements. However, this model still requires time to fully restore production-level performance since compute resources must be expanded and traffic must be redistributed.

The recovery point objective in Pilot Light systems is generally much lower than in backup-based systems due to continuous data replication. This ensures that data loss is minimized, even if compute recovery takes longer than data recovery.

Warm Standby Architecture and Fully Configured Minimal Environments

Warm Standby represents a more advanced disaster recovery model where a fully functional but scaled-down version of the production environment is continuously running in the cloud. Unlike Pilot Light, which maintains only core components, Warm Standby maintains all components of the system in an active state, but at reduced capacity.

This includes application servers, databases, load balancers, networking configurations, and supporting services. While the environment is fully operational, it is not designed to handle full production traffic under normal conditions. Instead, it is sized to handle minimal load while remaining ready for immediate scaling.

The primary advantage of Warm Standby is reduced recovery time. Because all system components are already running, recovery primarily involves scaling resources rather than provisioning new infrastructure. This allows systems to transition into full operational capacity rapidly when a failure occurs in the primary environment.

Infrastructure Scaling and Load Management in Warm Standby Systems

Warm Standby environments rely heavily on dynamic scaling mechanisms that allow compute and storage resources to expand rapidly during failover events. These scaling operations are typically driven by automated policies that monitor system load and trigger resource expansion when thresholds are exceeded.

Load balancing systems play a critical role in distributing traffic between standby and production environments during recovery. In a failure scenario, traffic is redirected to the standby environment, which then scales horizontally to accommodate increased demand.

This scaling process is designed to be elastic, ensuring that system performance remains stable even during sudden traffic spikes. The use of preconfigured scaling policies ensures that resource expansion occurs in a controlled and predictable manner.

Operational Efficiency and Cost Considerations in Warm Standby Models

While Warm Standby significantly reduces recovery time compared to Pilot Light, it introduces higher operational costs due to the continuous running of full infrastructure components. Even though these components operate at reduced capacity, they still consume resources and incur ongoing expenses.

Organizations must balance the trade-off between cost and recovery speed when implementing this model. It is typically used for systems where downtime has a high operational or financial impact, but full active-active redundancy is not economically justified.

Cost optimization strategies often include resource sizing adjustments, automated scaling down during low-demand periods, and efficient workload distribution to minimize unnecessary resource consumption.

Data Consistency and Synchronization in Warm Standby Environments

Data synchronization in Warm Standby architectures is continuous and bidirectional in some advanced configurations. This ensures that both primary and standby environments maintain near-identical data states. As transactions occur in the production environment, they are replicated in real time or near real time to the standby environment.

This synchronization minimizes recovery complexity since both environments remain consistent. In failover scenarios, there is minimal risk of data divergence, and systems can resume operations without extensive reconciliation processes.

Consistency mechanisms may include database replication, event streaming, or a log-based synchronization technique,s depending on the underlying data architecture.

Failure Handling and Failover Execution in Warm Standby Systems

Failover in Warm Standby environments is typically faster and more deterministic than in Pilot Light systems. Since infrastructure is already running, failover primarily involves traffic redirection and scaling adjustments rather than system initialization.

DNS switching, load balancer reconfiguration, or routing table updates are commonly used to redirect traffic from the primary environment to the standby environment. Once traffic is redirected, scaling policies ensure that system performance remains stable under increased load.

This process reduces recovery time significantly and allows systems to achieve near-continuous availability in many cases.

Architectural Positioning Between Pilot Light and Fully Redundant Systems

Warm Standby occupies a middle position in disaster recovery architecture. It provides faster recovery than Pilot Light while avoiding the high cost of fully redundant multi-site systems. This makes it suitable for workloads that require high availability but do not justify full active-active deployment.

It also serves as a transitional architecture for organizations moving toward more advanced resilience strategies. By maintaining fully functional but scaled-down environments, organizations gain operational readiness without incurring the full cost of duplication.

This model is often used for business-critical applications such as customer-facing platforms, financial systems, and internal operational tools where downtime must be minimized but cost efficiency remains a priority.

Multi-Site Disaster Recovery Architecture and Active-Active Cloud Resilience

Multi-Site disaster recovery represents the most advanced and resilient model in cloud-based continuity planning. It is designed for systems that require near-zero downtime and continuous availability even during major infrastructure failures. Unlike Pilot Light or Warm Standby, Multi-Site architecture maintains fully operational environments in multiple locations simultaneously, ensuring that no single point of failure can interrupt service delivery.

In this model, production workloads are distributed across two or more geographically separated environments that operate in parallel. Both environments are actively serving traffic, continuously synchronized, and fully capable of handling production workloads independently. This architecture is often referred to as active-active deployment because all sites remain active at all times.

The primary goal of Multi-Site disaster recovery is to eliminate recovery time by ensuring that failover is instantaneous. Instead of rebuilding or scaling infrastructure during a failure event, traffic is simply redirected or redistributed to healthy environments without interruption.

Core Principles of Active-Active Deployment in Cloud Systems

Active-active architectures rely on the principle that redundancy must exist not as idle capacity but as fully functional operational systems. Each site in a Multi-Site setup is capable of independently handling a full production load if required. This requires careful design of compute resources, storage systems, networking layers, and application logic.

Traffic distribution is typically managed through intelligent routing systems that continuously evaluate system health, latency, and performance. These systems ensure that user requests are directed to the most optimal available environment while maintaining load balance across all active regions.

The architecture also depends heavily on real-time or near-real-time data synchronization to ensure consistency across all sites. Without synchronized data states, active-active systems would risk divergence, leading to inconsistent user experiences and potential data corruption.

Data Replication and Synchronization at Global Scale

One of the most complex components of Multi-Site disaster recovery is data synchronization across geographically distributed environments. Since multiple systems are actively processing transactions, maintaining consistency becomes a critical engineering challenge.

Replication strategies in this model are designed to ensure that data changes in one region are reflected across all other regions with minimal delay. Depending on system design, this may involve synchronous replication for strict consistency or asynchronous replication for improved performance with slight delay tolerance.

Synchronous replication ensures that transactions are confirmed across multiple sites before completion, reducing the risk of data inconsistency but introducing latency overhead. Asynchronous replication allows faster local transactions but requires reconciliation mechanisms to handle potential divergence.

Distributed databases, event streaming systems, and global transaction coordination mechanisms are commonly used to manage this complexity. These systems ensure that all nodes maintain a consistent view of data even under high transaction loads.

Traffic Management and Intelligent Routing in Multi-Site Systems

Traffic management is a critical component of Multi-Site disaster recovery architecture. Since multiple environments are active simultaneously, incoming requests must be intelligently distributed to optimize performance and ensure resilience.

Global routing systems continuously monitor the health and responsiveness of each site. Based on this information, they dynamically adjust traffic distribution to avoid overloaded or degraded regions. This ensures that users always experience optimal performance regardless of underlying infrastructure conditions.

In failure scenarios, traffic is automatically rerouted away from affected regions without requiring manual intervention. This process is seamless from the user perspective, resulting in uninterrupted service availability even during major outages.

Fault Tolerance and System Redundancy at Maximum Scale

Multi-Site architecture is fundamentally designed for fault tolerance at the highest level. Each component of the system is duplicated or distributed across multiple environments, ensuring that no single failure can disrupt overall system functionality.

Compute resources, storage systems, networking layers, and application services are all replicated across regions. This redundancy ensures that even if an entire geographic region becomes unavailable, other regions can continue operating independently.

This level of redundancy significantly reduces risk but increases complexity in system design, deployment, and maintenance. Engineers must ensure that all environments remain synchronized, secure, and properly configured at all times.

Consistency Models and Data Integrity Challenges

Maintaining data consistency across multiple active environments is one of the most challenging aspects of Multi-Site disaster recovery. Since multiple systems can process transactions simultaneously, there is a risk of conflicting updates or data divergence.

To address this, systems implement consistency models that define how and when data is synchronized. Strong consistency models ensure that all nodes reflect the same data state at all times, while eventual consistency models allow temporary differences that are later reconciled.

Strong consistency provides higher reliability but may introduce latency due to coordination overhead. Eventual consistency improves performance but requires conflict resolution mechanisms to handle discrepancies.

Choosing the appropriate consistency model depends on application requirements, with financial systems often requiring strict consistency and high-throughput applications prioritizing performance.

Performance Optimization in Distributed Active Systems

Operating multiple active environments introduces performance optimization challenges that must be carefully managed. Network latency between regions, data synchronization delays, and load distribution inefficiencies can all impact system performance.

To mitigate these challenges, systems are designed with regional optimization strategies that minimize cross-region dependencies. This ensures that most operations are handled locally within each region whenever possible.

Caching mechanisms are also widely used to reduce latency and improve responsiveness. By storing frequently accessed data closer to users, systems can reduce the need for cross-region communication and improve overall performance.

Cost Implications of Fully Redundant Architectures

Multi-Site disaster recovery is the most expensive recovery model due to the requirement of maintaining fully operational duplicate environments. Unlike other models where resources are scaled up only during failure, Multi-Site systems continuously operate at full or near-full capacity.

This results in significantly higher infrastructure costs, including compute, storage, networking, and operational overhead. Organizations must carefully evaluate whether the benefits of near-zero downtime justify the financial investment.

Despite its cost, this model is often essential for industries where downtime directly translates into substantial financial loss or regulatory risk, such as financial services, healthcare systems, and global e-commerce platforms.

Operational Complexity and Management Overhead

Managing Multi-Site environments requires advanced operational maturity. System administrators must ensure that all environments remain synchronized, secure, and properly configured at all times.

This includes continuous monitoring, automated configuration management, and strict version control across all deployed systems. Any inconsistency between environments can lead to operational failures or data inconsistencies during failover events.

Automation plays a critical role in reducing management complexity. Infrastructure as code practices, automated deployment pipelines, and centralized monitoring systems are commonly used to maintain consistency across environments.

Security Considerations in Multi-Region Deployments

Security in Multi-Site architectures must be consistently enforced across all environments. Since multiple regions are active simultaneously, security policies must be uniformly applied to prevent vulnerabilities or configuration drift.

Identity and access management systems are typically centralized or synchronized across regions to ensure consistent authentication and authorization controls. Encryption policies are also enforced globally to protect data in transit and at rest.

Security monitoring systems continuously analyze activity across all regions to detect anomalies and potential threats. This distributed monitoring approach ensures that security incidents can be identified and mitigated regardless of where they originate.

Failure Scenarios and Seamless Continuity Mechanisms

In Multi-Site disaster recovery systems, failure scenarios are handled without service interruption. When one region experiences a disruption, traffic is automatically rerouted to healthy regions without requiring manual intervention or system rebuilds.

This seamless failover capability is achieved through continuous health monitoring and automated routing adjustments. Users experience minimal or no disruption because services remain available through alternative active environments.

The system’s ability to maintain continuity under failure conditions is what distinguishes Multi-Site architecture from all other disaster recovery models.

Architectural Positioning of Multi-Site Systems in Cloud Strategy

Multi-Site disaster recovery represents the highest level of cloud resilience architecture. It is typically reserved for mission-critical systems where even short periods of downtime are unacceptable. While expensive and complex, it provides unmatched reliability and operational continuity.

It is often implemented in organizations with global user bases, high transaction volumes, or strict availability requirements. These systems are designed not only to recover from failure but to operate continuously under all conditions without interruption.

Multi-Site architecture completes the progression of disaster recovery models, representing the endpoint of resilience engineering where availability is prioritized above all other constraints, including cost and complexity.

Conclusion

In modern cloud architecture, disaster recovery is no longer a secondary design consideration but a foundational requirement that shapes how systems are built, deployed, and operated. As digital services become deeply embedded in business operations, even short periods of downtime can translate into financial loss, operational disruption, and reduced user trust. This makes structured recovery planning essential rather than optional. AWS-based disaster recovery models provide a structured progression of resilience strategies that allow organizations to align technical implementation with business expectations around availability, data safety, and recovery speed.

At the core of all disaster recovery planning are the concepts of Recovery Point Objective and Recovery Time Objective. These two parameters define the acceptable boundaries of data loss and system downtime, and they directly influence architectural decisions across all workloads. Systems with relaxed objectives can rely on simple backup mechanisms, while systems with strict requirements must implement advanced replication, automation, and redundancy strategies. Understanding these constraints is essential because they form the basis for selecting an appropriate recovery model and determining how resources are allocated across environments.

Backup and restore systems represent the most basic approach to disaster recovery. They prioritize cost efficiency and simplicity, making them suitable for non-critical workloads or systems where extended downtime is acceptable. While effective for preserving data, this model introduces longer recovery times because infrastructure must be rebuilt after failure. It relies heavily on stored data integrity and assumes that restoration time is not a critical constraint. As a result, it is often used in environments where operational urgency is low, but data preservation remains important.

As system criticality increases, organizations transition toward more responsive architectures such as Pilot Light. This model introduces continuously running core components that reduce recovery complexity. By maintaining synchronized data layers and minimal compute infrastructure, Pilot Light systems enable faster recovery compared to backup-only approaches. The key advantage lies in eliminating the need for full data reconstruction during recovery events. Instead, systems can rapidly scale existing components and restore services within significantly reduced timeframes. This makes Pilot Light suitable for workloads that require moderate availability without incurring the cost of full redundancy.

Warm Standby further enhances this concept by maintaining a fully functional but scaled-down version of the production environment. Unlike Pilot Light, which only keeps essential components active, Warm Standby ensures that all system components are operational at reduced capacity. This enables faster failover because the infrastructure is already deployed and running. Recovery primarily involves scaling resources rather than provisioning new systems. This model strikes a balance between cost and performance, making it suitable for business-critical applications where downtime must be minimized but full active-active redundancy is not economically justified.

At the highest level of resilience lies Multi-Site architecture, which eliminates the traditional concept of recovery time by maintaining fully operational environments across multiple regions. In this model, all sites are active simultaneously and capable of handling production workloads independently. This ensures continuous availability even in the event of regional failure. However, this level of redundancy comes with high cost and operational complexity. Data must be continuously synchronized across regions, traffic must be intelligently distributed, and system consistency must be maintained at all times. Despite these challenges, Multi-Site architecture provides unmatched reliability for systems where downtime is unacceptable.

Across all these models, a common theme emerges: resilience is achieved through trade-offs between cost, complexity, and recovery performance. There is no single optimal disaster recovery strategy that applies universally. Instead, each organization must evaluate its operational requirements, financial constraints, and risk tolerance to determine the appropriate balance. Systems that handle sensitive financial transactions, healthcare data, or global user traffic often require more aggressive recovery strategies, while internal tools or archival systems can operate with simpler models.

Another important consideration is the increasing role of automation in disaster recovery. Modern cloud environments rely heavily on automated monitoring, scaling, and failover mechanisms to reduce human intervention during failure events. Automation ensures consistency in recovery execution and significantly reduces response time. It also minimizes the risk of human error, which is often a contributing factor in system outages. As disaster recovery systems become more advanced, automation becomes not just a supporting feature but a central component of resilience design.

Data synchronization also plays a critical role across all advanced recovery models. Whether through continuous replication or event-driven updates, ensuring data consistency is essential for maintaining system integrity during failover scenarios. Inconsistent data states can lead to application errors, user disruption, and operational failures. Therefore, modern disaster recovery strategies place significant emphasis on replication design, consistency models, and conflict resolution mechanisms.

From an architectural perspective, disaster recovery planning also reflects broader trends in distributed computing. Systems are increasingly designed to be location-independent, horizontally scalable, and fault-tolerant by default. This shift moves away from traditional monolithic infrastructure models toward modular, service-oriented architectures that can adapt dynamically to changing conditions. Disaster recovery is therefore not an isolated layer but an integrated aspect of system design.

Ultimately, the evolution from backup-based recovery to Multi-Site active-active systems represents a progression in how organizations think about availability. What begins as simple data preservation evolves into fully redundant global systems capable of uninterrupted operation under failure conditions. This evolution reflects the growing importance of digital continuity in modern business environments, where system availability is directly tied to operational success.

Disaster recovery in cloud environments is not just about responding to failure but about designing systems that anticipate it. By aligning recovery strategies with business objectives, organizations can ensure that their systems remain resilient, adaptable, and capable of sustaining operations under a wide range of conditions.