The AWS Certified Data Engineer - Associate certification is an intermediate-level professional credential that validates the ability to design, build, and manage data workflows in a cloud environment. It focuses on practical data engineering capabilities rather than purely theoretical knowledge, emphasizing how data is collected, transformed, stored, and secured at scale. This certification reflects the increasing importance of cloud-native data systems in enterprise environments where organizations rely heavily on data-driven operations.

The certification is positioned within a structured cloud skills hierarchy that progresses from foundational understanding to specialized technical expertise. It assumes that candidates already have familiarity with cloud computing concepts and basic data handling principles. From there, it builds deeper competency in designing scalable data architectures that can handle high-volume, high-velocity, and diverse data sources.

What makes this certification particularly relevant is its alignment with real-world enterprise data workflows. Organizations today depend on distributed systems that process structured, semi-structured, and unstructured data simultaneously. The certification focuses on ensuring that professionals can work within these environments efficiently while maintaining performance, reliability, and data integrity.

Growing Importance of Data Engineering in Cloud Ecosystems

Data engineering has become a critical discipline due to the exponential growth of digital data. Every interaction across applications, websites, mobile platforms, and connected devices generates valuable information. However, raw data alone has limited value unless it is properly processed and structured for analysis. This is where data engineering plays a foundational role.

In cloud ecosystems, data engineering is responsible for building the pipelines that move data from its source to its destination. These pipelines are not simple linear processes but complex systems involving ingestion, transformation, validation, and storage optimization. The increasing complexity of data environments has made this role essential for organizations that rely on timely insights.

Cloud platforms have changed how data systems are designed. Instead of static infrastructure, engineers now work with dynamic, scalable environments that adjust based on workload demands. This requires a deep understanding of distributed architecture principles, fault tolerance, and performance optimization techniques.

Another key driver of importance is the shift toward real-time analytics. Businesses no longer rely solely on historical data but require immediate insights from streaming data sources. This has introduced new challenges in processing speed, system coordination, and data consistency, all of which fall under the responsibilities of data engineering.

Core Objectives of the Certification Framework

The certification is structured around validating a set of core competencies that define effective data engineering practices in cloud environments. These competencies revolve around designing data systems that are efficient, secure, and scalable.

One major objective is assessing the ability to design data ingestion systems. This involves collecting data from multiple heterogeneous sources and ensuring it is properly captured for downstream processing. These sources may include application logs, transactional databases, IoT devices, and external APIs. Each source requires different handling mechanisms depending on structure and velocity.

Another objective is evaluating transformation capabilities. Raw data is rarely useful in its original form, so it must be cleaned, standardized, and enriched. This process requires knowledge of data formatting techniques, schema management, and processing logic that ensures consistency across datasets.

The certification also emphasizes operational reliability. Data systems must function continuously without interruptions, even under heavy workloads. This requires monitoring systems that detect anomalies, identify bottlenecks, and trigger automated recovery processes when failures occur.

Security and governance represent another critical objective. Data systems often handle sensitive or regulated information, making it essential to implement strict access controls, encryption mechanisms, and compliance frameworks. The certification ensures that professionals understand how to protect data while maintaining accessibility for authorized users.

Data Ingestion and Pipeline Design Principles

Data ingestion is the first stage of any data engineering workflow. It involves collecting data from various sources and transferring it into a system where it can be processed. This stage is critical because any issues in ingestion can affect the entire downstream pipeline.

There are multiple patterns of data ingestion, including batch processing and real-time streaming. Batch processing involves collecting data at scheduled intervals, while streaming processes data continuously as it is generated. Each approach has its own advantages depending on the use case and latency requirements.
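As a minimal sketch of the difference, the snippet below handles the same events once as a batch and once as a stream. The function names and the event shape are illustrative assumptions and do not correspond to any AWS service API.

```python
from typing import Iterable, Iterator

def ingest_batch(events: list[dict]) -> list[dict]:
    """Batch: the whole collected interval is processed in one pass."""
    return [{**e, "mode": "batch"} for e in events]

def ingest_stream(events: Iterable[dict]) -> Iterator[dict]:
    """Streaming: each event is processed as soon as it arrives."""
    for e in events:
        yield {**e, "mode": "stream"}

# Hypothetical events, for example clicks collected from an application.
events = [{"id": 1}, {"id": 2}, {"id": 3}]

batch_result = ingest_batch(events)                  # ready only after the full run
first_streamed = next(ingest_stream(iter(events)))   # ready after the first event
```

The batch function returns nothing until every event has been handled, while the generator yields its first result immediately, which is the latency trade-off described above.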

Pipeline design focuses on ensuring that data flows efficiently between systems without bottlenecks. This requires careful planning of data formats, transfer protocols, and processing sequences. Engineers must also account for potential failures and design systems that can recover without data loss.

Scalability is another important factor in pipeline design. As data volume increases, pipelines must be able to handle increased load without performance degradation. This often involves distributing workloads across multiple processing nodes and optimizing resource allocation.

Data Transformation and Processing Logic

Once data is ingested, it must be transformed into a usable format. Transformation involves cleaning inconsistencies, removing duplicates, standardizing formats, and applying business rules that make the data meaningful.

This stage is crucial because raw data often contains errors or inconsistencies that can distort analytical outcomes. For example, missing values, incorrect formats, or duplicated records can significantly impact data quality. Transformation processes are designed to resolve these issues systematically.
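The issues listed above can be handled in a single cleaning pass. The following is a sketch only: the field names, the assumed `DD/MM/YYYY` source format, and the choice to default a missing amount to `0.0` are all hypothetical and would depend on real business rules.

```python
from datetime import datetime

def clean(records):
    """Drop duplicates and rows without an id; standardize dates to ISO 8601."""
    seen, cleaned = set(), []
    for r in records:
        if r.get("id") is None or r["id"] in seen:
            continue  # missing key or duplicated record
        seen.add(r["id"])
        # Assumption: source dates arrive as DD/MM/YYYY strings.
        iso = datetime.strptime(r["date"], "%d/%m/%Y").date().isoformat()
        # Assumption: a missing amount is treated as 0.0.
        cleaned.append({"id": r["id"], "date": iso, "amount": r.get("amount", 0.0)})
    return cleaned

raw = [
    {"id": 1, "date": "05/01/2024", "amount": 10.0},
    {"id": 1, "date": "05/01/2024", "amount": 10.0},  # duplicate
    {"id": None, "date": "06/01/2024"},               # missing id
    {"id": 2, "date": "07/01/2024"},                  # missing amount
]
cleaned = clean(raw)
```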

Processing logic also includes aggregating data into meaningful structures. This may involve summarizing large datasets, joining multiple data sources, or deriving new attributes based on existing information. These operations help convert raw data into actionable insights.

In modern cloud environments, transformation processes are often automated and distributed. This allows large datasets to be processed efficiently without manual intervention. Automation also reduces the risk of human error and improves system reliability.

Data Storage Architecture and Optimization

Data storage is a critical component of any data engineering system. It determines how efficiently data can be accessed, processed, and analyzed. Different types of data require different storage solutions depending on structure, volume, and access patterns.

Structured data is typically stored in relational systems, while unstructured or semi-structured data may be stored in distributed file systems or object-based storage systems. Choosing the right storage model is essential for performance and cost efficiency.

Optimization of storage systems involves organizing data in a way that minimizes retrieval time and reduces resource consumption. This may include partitioning data, indexing frequently accessed fields, or compressing large datasets.

Scalability is a major consideration in storage design. Cloud-based systems allow storage capacity to grow dynamically, which removes the fixed capacity limits of traditional infrastructure. However, because capacity is billed as it is used, growth must be managed carefully to avoid unnecessary cost increases.

Data Operations Monitoring and System Reliability

Data operations focus on maintaining the stability and performance of data systems. This includes monitoring pipelines, detecting failures, and ensuring that data flows remain uninterrupted.

Monitoring systems track metrics such as processing time, data throughput, and error rates. These metrics help identify potential issues before they escalate into major system failures. Automated alerts and recovery mechanisms are often used to respond to anomalies quickly.
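A minimal sketch of how such metrics might be derived and checked against a threshold is shown below. The metric names and the 5% error-rate threshold are assumptions chosen for illustration.

```python
def pipeline_metrics(processed, failed, elapsed_seconds):
    """Derive throughput and error rate from raw counters for one run."""
    total = processed + failed
    return {
        "throughput_per_s": processed / elapsed_seconds,
        "error_rate": failed / total if total else 0.0,
    }

def should_alert(metrics, max_error_rate=0.05):
    """Hypothetical alert rule: fire when the error rate exceeds the threshold."""
    return metrics["error_rate"] > max_error_rate

m = pipeline_metrics(processed=900, failed=100, elapsed_seconds=10)
alert = should_alert(m)
```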

System reliability is achieved through redundancy and fault-tolerant design. This means that if one component fails, another can take over without disrupting the overall workflow. Such designs are essential in environments where data availability is critical.

Troubleshooting is another important aspect of operations. Engineers must be able to diagnose issues quickly and implement corrective actions. This requires a deep understanding of system architecture and data flow dependencies.

Data Security and Governance in Cloud Systems

Security and governance are essential components of modern data engineering. As data systems grow in complexity, ensuring that data is protected from unauthorized access becomes increasingly important.

Security mechanisms include encryption, authentication, and access control policies. Encryption ensures that data is protected both in transit and at rest. Authentication verifies user identity, while access control defines what actions users are permitted to perform.

Governance involves establishing rules and policies for data usage. This includes defining data ownership, maintaining data quality standards, and ensuring compliance with regulatory requirements. Governance frameworks help organizations maintain consistency and accountability across data systems.

In cloud environments, security and governance must be integrated into every stage of the data lifecycle. This ensures that data remains protected from ingestion to storage and processing.

Skills and Knowledge Areas Validated by Certification

The certification evaluates a broad range of technical skills related to data engineering. These include system design, pipeline development, data transformation, and operational monitoring.

Candidates are expected to understand how to design scalable data architectures that can handle large volumes of data efficiently. This involves knowledge of distributed systems and resource optimization techniques.

Data manipulation skills are also critical. This includes the ability to clean, transform, and structure data for analytical use. Strong understanding of data formats and processing logic is essential.

Operational skills such as monitoring and troubleshooting are equally important. Engineers must be able to maintain system performance and resolve issues quickly to ensure continuous data availability.

Security knowledge is also a key requirement. Professionals must understand how to protect sensitive data and implement governance frameworks that ensure compliance and accountability.

Role of Certification in Professional Development Pathways

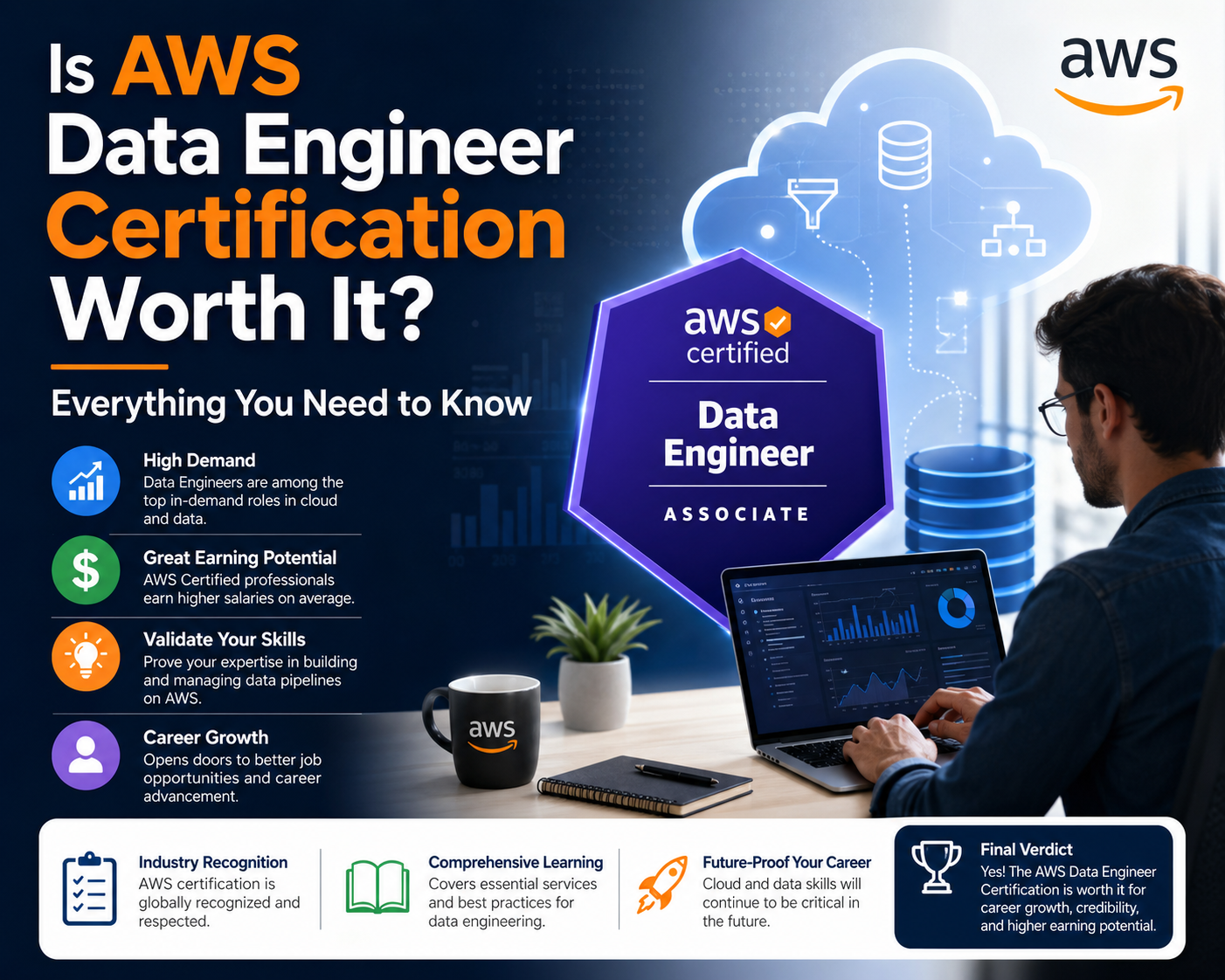

This certification plays a significant role in shaping career development in cloud and data engineering fields. It serves as a structured validation of skills that are increasingly in demand across industries.

Professionals often use certifications like this to formalize their practical experience. While hands-on experience is essential, structured validation provides a standardized way to demonstrate competency in specific technical areas.

The certification also helps professionals transition into more specialized roles. Data engineering is closely connected to fields such as analytics, machine learning, and cloud architecture, making it a valuable stepping stone for broader career advancement.

As organizations continue to adopt data-centric strategies, the demand for skilled data engineers is expected to remain strong. This certification aligns with that demand by focusing on practical, real-world skills rather than purely theoretical knowledge.

Data Engineering Lifecycle in Cloud Environments

The data engineering lifecycle in cloud environments is a continuous process of collecting, refining, storing, and using data for business and technical purposes. It is not a linear workflow but a cycle in which data constantly moves between different states of processing and optimization. Understanding this lifecycle is essential for professionals preparing for the AWS Certified Data Engineer - Associate certification because it forms the backbone of real-world cloud data operations.

At the beginning of this lifecycle is data generation, where raw data originates from multiple sources such as applications, sensors, transactional systems, user interactions, and external APIs. This data is often unstructured or semi-structured, meaning it cannot be directly used for analysis without processing. The role of a data engineer is to design systems that can capture this data efficiently without loss or corruption.

Once data is generated, it moves into the ingestion phase. This phase focuses on transferring data from source systems into cloud-based storage or processing environments. Depending on business requirements, ingestion can occur in batches or in real time. Batch ingestion processes data at scheduled intervals, while streaming ingestion handles continuous flows of data. Each method requires different architectural considerations, particularly in terms of latency, throughput, and fault tolerance.

After ingestion, the data enters the transformation stage. This is where raw data is cleaned, standardized, and structured into meaningful formats. Transformation is a critical step because it ensures consistency across datasets and removes inaccuracies that could negatively impact downstream analytics. It often involves filtering irrelevant data, handling missing values, and applying business logic to create derived fields.

The next stage is storage optimization, where processed data is organized in a way that supports efficient retrieval and analysis. Cloud storage systems allow for flexible scaling, but without proper design, costs can increase rapidly and performance can degrade. Data engineers must carefully decide how to partition, index, and format data to balance speed and cost efficiency.

Finally, the lifecycle includes consumption and analysis. At this stage, data is used by analytics tools, machine learning models, and business intelligence systems to generate insights. These insights drive decision-making processes across organizations, from operational improvements to strategic planning.

Data Ingestion Architectures and Processing Models

Data ingestion architectures are designed to handle the movement of data from multiple sources into a centralized or distributed processing system. These architectures vary depending on the nature of the data and the requirements of the organization.

One common model is batch ingestion architecture. In this model, data is collected over a period of time and processed in large groups. Batch processing is efficient for scenarios where real-time insights are not required. It allows systems to optimize resource usage by processing large volumes of data at once. However, it introduces latency because data is not immediately available for analysis.

In contrast, streaming ingestion architecture processes data continuously as it is generated. This model is used in scenarios where real-time insights are critical, such as fraud detection, monitoring systems, or live analytics dashboards. Streaming systems must be highly scalable and resilient because they handle constant data flow without interruption.

Hybrid ingestion models combine both batch and streaming approaches. These systems are designed to handle diverse workloads by processing some data in real time while batching other data for periodic processing. This flexibility allows organizations to balance performance, cost, and complexity.

Another important aspect of ingestion architecture is fault tolerance. Data pipelines must be designed to handle failures without losing information. This often involves implementing retry mechanisms, data buffering, and checkpointing strategies that ensure continuity in case of disruptions.
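Checkpointing can be sketched as follows: the pipeline records how far it has progressed and resumes from that point after a failure. The in-memory `checkpoint` dictionary is a stand-in assumption; a real pipeline would keep it in durable storage.

```python
def process_with_checkpoint(records, checkpoint, handler):
    """Resume from the last committed offset so a restart skips finished work."""
    for offset in range(checkpoint["offset"], len(records)):
        handler(records[offset])
        checkpoint["offset"] = offset + 1  # commit only after the handler succeeds

records = ["a", "b", "c", "d"]
checkpoint = {"offset": 0}   # stand-in for a durable checkpoint store
processed = []
fail_once = {"c"}            # simulate one transient failure on record "c"

def handler(record):
    if record in fail_once:
        fail_once.discard(record)
        raise RuntimeError("transient failure")
    processed.append(record)

try:
    process_with_checkpoint(records, checkpoint, handler)
except RuntimeError:
    pass  # the run crashed part-way through

process_with_checkpoint(records, checkpoint, handler)  # restart resumes at "c"
```

Because the offset is committed only after a record succeeds, the failed record is retried on restart and nothing is skipped. If a handler has side effects before it fails, this gives at-least-once rather than exactly-once behavior.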

Scalability is also a key requirement. As data volume grows, ingestion systems must scale horizontally to handle increased load. Cloud environments provide elastic scaling capabilities, but engineers must still design systems that can take advantage of these features efficiently.

Data Transformation Strategies and Engineering Practices

Data transformation is one of the most complex stages in the data engineering lifecycle. It involves converting raw data into structured formats that can be used for analytics and decision-making. This process requires a combination of technical skills, logical reasoning, and understanding of business requirements.

One of the primary transformation strategies is data cleansing. This involves identifying and correcting errors in datasets such as duplicates, missing values, and inconsistent formatting. Clean data is essential for ensuring the accuracy of downstream analytics.

Another important strategy is data normalization. Normalization involves organizing data into a consistent structure, often by standardizing formats or aligning different datasets to a common schema. This is particularly important when integrating data from multiple sources.

Data enrichment is another key transformation practice. In this process, additional information is added to existing datasets to enhance their value. For example, raw transaction data may be enriched with customer demographics or geographic information to provide deeper insights.

Aggregation is also widely used in transformation processes. This involves summarizing large datasets into smaller, more meaningful representations. Aggregation helps reduce complexity and improves performance during analysis.
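Enrichment and aggregation can be sketched together in a few lines. The transaction and customer records here are made-up sample data, and the lookup table stands in for whatever reference dataset a real pipeline would join against.

```python
from collections import defaultdict

transactions = [
    {"customer_id": 1, "amount": 20.0},
    {"customer_id": 2, "amount": 5.0},
    {"customer_id": 1, "amount": 15.0},
]
customers = {1: {"region": "EU"}, 2: {"region": "US"}}  # hypothetical reference data

# Enrichment: attach the customer's region to every transaction.
enriched = [
    {**t, "region": customers[t["customer_id"]]["region"]} for t in transactions
]

# Aggregation: summarize the enriched rows into one total per region.
totals = defaultdict(float)
for row in enriched:
    totals[row["region"]] += row["amount"]
```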

Modern cloud environments often use automated transformation pipelines. These pipelines are designed to execute transformation logic without manual intervention. Automation improves efficiency, reduces errors, and ensures consistency across large datasets.

Distributed Data Storage Systems and Optimization Techniques

Distributed data storage systems are fundamental to cloud-based data engineering. These systems store data across multiple nodes, allowing for scalability, fault tolerance, and high availability. Unlike traditional storage systems, distributed architectures are designed to handle massive datasets spread across different geographic locations.

One of the key characteristics of distributed storage is data partitioning. Partitioning involves dividing data into smaller segments and distributing them across different storage nodes. This improves performance by allowing parallel processing and reduces the load on individual systems.
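One common way to assign records to partitions is to hash a key and take the result modulo the partition count. The sketch below assumes a fixed number of partitions and uses SHA-256 only because it is stable across processes; it is not a description of how any particular storage service partitions data.

```python
import hashlib

def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a hash that is stable across processes.

    Python's built-in hash() is salted per process for strings, so it is
    unsuitable when several workers must agree on the assignment.
    """
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return int(digest, 16) % num_partitions

keys = [f"user-{i}" for i in range(100)]
assignment = {k: partition_for(k, 4) for k in keys}
```

The same key always lands on the same partition, so workers can process partitions in parallel without coordinating on individual records.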

Replication is another important feature of distributed storage systems. Replication ensures that multiple copies of data exist across different nodes, which improves fault tolerance and data availability. If one node fails, another can provide access to the same data.

Indexing plays a significant role in optimizing data retrieval. Indexes allow systems to quickly locate specific records without scanning entire datasets. This is especially important when dealing with large-scale data environments.

Compression techniques are also used to reduce storage costs and improve performance. Compressed data takes less space and less time to transfer, at the cost of extra CPU work to compress and decompress it.
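The effect is easy to observe with repetitive data. This sketch uses gzip from the Python standard library on made-up sensor rows; the actual ratio depends heavily on the data and the codec.

```python
import gzip
import json

# Hypothetical sensor readings with highly repetitive structure.
rows = [{"sensor": "s1", "status": "ok", "reading": i % 10} for i in range(1000)]

raw = json.dumps(rows).encode("utf-8")
compressed = gzip.compress(raw)

# Decompression restores the original content exactly (lossless).
restored = json.loads(gzip.decompress(compressed))
ratio = len(compressed) / len(raw)
```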

Another optimization technique involves data lifecycle management. This refers to the process of moving data between different storage tiers based on usage patterns. Frequently accessed data may be stored in high-performance storage, while older or less frequently accessed data is moved to lower-cost storage solutions.
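A lifecycle rule of this kind can be sketched as a simple function of access age. The tier names and the 30-day and 180-day thresholds are illustrative assumptions, not recommended values.

```python
def choose_tier(days_since_last_access: int) -> str:
    """Pick a storage tier from how recently the data was accessed."""
    # Thresholds are hypothetical; real policies depend on cost and access patterns.
    if days_since_last_access <= 30:
        return "hot"
    if days_since_last_access <= 180:
        return "warm"
    return "cold"

tiers = {age: choose_tier(age) for age in (3, 90, 400)}
```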

Data Pipeline Engineering and Workflow Automation

Data pipelines are the core mechanism through which data flows in cloud environments. A data pipeline is a series of processes that move data from one system to another while applying transformations and validations along the way.

Pipeline engineering involves designing these workflows in a way that ensures reliability, scalability, and efficiency. Each stage of the pipeline must be carefully configured to handle expected data volumes and processing requirements.

Automation plays a critical role in modern pipeline design. Automated pipelines reduce the need for manual intervention and ensure that data processing occurs consistently. Automation also allows for faster processing and reduces the risk of human error.

Workflow orchestration is another key aspect of pipeline engineering. Orchestration involves coordinating multiple tasks within a pipeline to ensure they execute in the correct order. This is particularly important in complex systems where different stages depend on the output of previous stages.
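Ordering tasks by their dependencies is a topological sort, which the Python standard library provides in `graphlib`. The task names below are hypothetical; the sketch only shows how an execution order is derived, not how a production orchestrator schedules work.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it starts.
dag = {
    "ingest": set(),
    "clean": {"ingest"},
    "enrich": {"ingest"},
    "load": {"clean", "enrich"},
}

# static_order() yields every task after all of its dependencies.
order = list(TopologicalSorter(dag).static_order())
```

`clean` and `enrich` do not depend on each other, so either may come first and an orchestrator could run them in parallel.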

Monitoring is essential for maintaining pipeline health. Engineers must track performance metrics such as processing time, error rates, and data throughput. Monitoring tools help identify bottlenecks and ensure that pipelines are operating efficiently.

Error handling mechanisms are also integrated into pipeline design. These mechanisms ensure that failures are detected and resolved without disrupting the entire workflow. Common techniques include retries, alerts, and fallback processes.
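Retries are often combined with exponential backoff so that a struggling dependency is not hammered with immediate repeat calls. The helper below is a minimal sketch; the attempt count, delays, and the simulated `flaky_task` are assumptions for illustration.

```python
import time

def with_retries(task, max_attempts=3, base_delay=0.01):
    """Run task, retrying failures with exponentially growing delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # give up so alerting or fallback logic can take over
            time.sleep(base_delay * 2 ** (attempt - 1))

calls = {"n": 0}

def flaky_task():
    """Simulated task that fails twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary outage")
    return "ok"

result = with_retries(flaky_task)
```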

Data Operations and System Reliability Engineering

Data operations focus on maintaining the stability and performance of data systems in production environments. This includes monitoring pipelines, managing system resources, and ensuring continuous data availability.

Reliability engineering is a key component of data operations. It involves designing systems that can withstand failures without significant disruption. This is achieved through redundancy, failover mechanisms, and distributed architectures.

Monitoring systems provide real-time insights into system performance. These systems track metrics such as latency, throughput, and error rates. When anomalies are detected, alerts are triggered to notify engineers of potential issues.

Incident management is another important aspect of data operations. When system failures occur, engineers must quickly diagnose the issue and implement corrective actions. This requires a deep understanding of system architecture and dependencies.

Capacity planning is also essential for ensuring system reliability. Engineers must anticipate future data growth and allocate resources accordingly. This helps prevent performance degradation as system demands increase.
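A rough projection can be made by assuming a constant compound growth rate, where the volume after n months is the current volume multiplied by (1 + rate) to the power n. Real growth is rarely this regular, so the sketch below is a starting estimate under that assumption, with made-up input figures.

```python
def projected_volume(current_tb: float, monthly_growth: float, months: int) -> float:
    """Project data volume assuming constant compound monthly growth."""
    return current_tb * (1 + monthly_growth) ** months

# Hypothetical inputs: 10 TB today, growing 5% per month, projected one year out.
one_year = projected_volume(10, 0.05, 12)
```

Under these assumptions the volume grows to roughly 18 TB after twelve months, noticeably more than the 16 TB a simple linear estimate would suggest.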

Data Security Frameworks in Cloud Engineering

Data security is a critical concern in cloud environments where sensitive information is often stored and processed. Security frameworks are designed to protect data from unauthorized access, breaches, and corruption.

Encryption is one of the most important security mechanisms. It ensures that data is unreadable to anyone who does not hold the correct decryption key. Encryption is applied both to data at rest and data in transit.

Access control systems define who can access specific data and what actions they can perform. These systems are based on identity verification and role-based permissions.

Authentication mechanisms verify the identity of users and services before any access decision is made. This may involve multi-factor authentication or token-based systems.

Auditing is used to track data access and modifications. Audit logs provide a record of system activity, which is essential for compliance and security analysis.

Governance frameworks define policies for data usage and management. These frameworks ensure that data is handled consistently and in accordance with organizational and regulatory standards.

Evolution of Cloud Data Engineering Practices

Cloud data engineering has evolved significantly over the past decade. Early systems were primarily focused on simple data storage and batch processing. However, modern systems are far more complex and capable of handling real-time data processing and advanced analytics.

The introduction of distributed computing has transformed how data systems are designed. Engineers now build systems that can scale horizontally across multiple regions and handle massive workloads.

Automation has also played a key role in this evolution. Modern data pipelines are highly automated, reducing the need for manual intervention and increasing efficiency.

Another major development is the integration of machine learning into data workflows. Data systems now not only process data but also generate predictive insights using advanced algorithms.

As cloud technologies continue to evolve, data engineering will remain a critical discipline that supports innovation and decision-making across industries.

Data Security, Governance, and Compliance in Modern Cloud Systems

Data security and governance form the backbone of trustworthy cloud-based data engineering systems. Without structured governance and strong security controls, even the most advanced data pipelines become vulnerable to misuse, breaches, and compliance failures. In modern cloud ecosystems, data is constantly moving between ingestion systems, transformation engines, storage layers, and analytics platforms, which increases the number of potential exposure points.

Security in data engineering begins with controlling access. Every dataset, pipeline, and processing system must enforce strict identity-based access rules. These controls ensure that only authorized users or services can interact with sensitive data. In cloud environments, this is typically implemented using role-based access control, where permissions are assigned based on job function rather than individual identity. This reduces complexity while improving security consistency across large organizations.
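The idea behind role-based access control can be sketched as a mapping from roles to permitted actions. The role names and actions here are hypothetical, and a real cloud deployment would express these rules in the provider's identity and access management policies rather than in application code.

```python
# Hypothetical roles and the actions each one is allowed to perform.
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "engineer": {"read", "write"},
    "admin": {"read", "write", "delete"},
}

def is_allowed(role: str, action: str) -> bool:
    """Grant an action only if the role explicitly includes it (deny by default)."""
    return action in ROLE_PERMISSIONS.get(role, set())

can_analyst_write = is_allowed("analyst", "write")
can_engineer_write = is_allowed("engineer", "write")
```

Permissions are attached to the role, so granting a new team member access means assigning a role instead of editing per-user rules.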

Encryption is another fundamental layer of protection. Data is encrypted both during transfer and while stored in databases or storage systems. This ensures that even if unauthorized access occurs, the information remains unreadable without proper decryption keys. Modern cloud architectures often automate encryption processes, reducing the risk of human error while maintaining consistent protection standards.

Governance extends beyond security and focuses on how data is managed throughout its lifecycle. This includes defining ownership, classification, retention policies, and usage guidelines. Data governance ensures that organizations maintain control over how data is collected, stored, and used. It also ensures that data quality remains consistent across systems, which is critical for accurate analytics and reporting.

Compliance is closely tied to governance and security. Organizations must adhere to regional and industry regulations that dictate how data is handled. These regulations may require specific storage methods, access restrictions, or auditing practices. Data engineers play a key role in ensuring that systems are designed to meet these requirements from the ground up.

Auditability is a critical part of governance frameworks. Every action performed on data systems must be traceable. This includes data access, modifications, and transfers. Audit logs provide transparency and help organizations identify security incidents or policy violations. They also support regulatory reporting requirements.

Advanced Data Pipeline Architecture and Design Patterns

Modern data pipelines are complex systems that require careful architectural planning. These pipelines are responsible for moving data across multiple stages while ensuring consistency, reliability, and performance. Unlike traditional linear workflows, modern pipelines are distributed and often event-driven.

One widely used design pattern is the modular pipeline architecture. In this approach, pipelines are broken into independent components that perform specific tasks such as ingestion, transformation, or validation. This modularity allows engineers to scale individual components independently and reuse them across multiple workflows.

Another important design pattern is event-driven architecture. In this model, data processing is triggered by events such as new data arrival or system changes. This approach is particularly useful for real-time analytics systems where immediate processing is required. Event-driven pipelines improve responsiveness and reduce unnecessary resource consumption.
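A minimal in-process sketch of the pattern is shown below: handlers are registered for an event type and run only when that event is emitted. The event name, payload shape, and the `on`/`emit` helpers are assumptions for illustration; managed cloud services provide the equivalent routing in production.

```python
from collections import defaultdict

handlers = defaultdict(list)

def on(event_type):
    """Decorator that registers a function as a handler for an event type."""
    def register(fn):
        handlers[event_type].append(fn)
        return fn
    return register

def emit(event_type, payload):
    """Invoke every handler registered for the event and collect the results."""
    return [fn(payload) for fn in handlers[event_type]]

@on("file_arrived")
def start_transform(payload):
    return f"transforming {payload['path']}"

results = emit("file_arrived", {"path": "raw/orders.csv"})
```

No work happens until an event arrives, which is why the pattern avoids the idle polling that scheduled jobs can incur.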

Lambda-style architecture combines batch and streaming processing. A streaming layer serves low-latency results from recent data, while a batch layer periodically recomputes complete views over the full dataset. This hybrid approach lets organizations balance speed and accuracy depending on business requirements.

Kappa-style architecture focuses entirely on stream processing. In this model, all data is treated as a continuous stream, simplifying system design and reducing duplication between batch and real-time processing layers. This architecture is often used in environments where real-time insights are critical.

Pipeline reliability is achieved through redundancy and fault tolerance. Distributed systems are designed to continue operating even if individual components fail. This is achieved through replication, checkpointing, and automatic recovery mechanisms.

Data Transformation at Scale and Optimization Techniques

Data transformation at scale is one of the most technically demanding aspects of data engineering. As data volumes grow, transformation processes must be optimized to handle large workloads efficiently without compromising accuracy or consistency.

Parallel processing is a key optimization technique used in large-scale transformations. By dividing tasks across multiple processing units, systems can significantly reduce execution time. This approach is essential in cloud environments where datasets can reach petabyte scale.

Another optimization method is incremental processing. Instead of reprocessing entire datasets, systems only process new or modified data. This reduces computational overhead and improves efficiency. Incremental processing is especially useful in environments where data is continuously updated.
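A common way to implement this is a high-water mark: the pipeline remembers the latest timestamp it has processed and selects only newer rows on the next run. The field name `updated_at` and the sample rows are assumptions for illustration.

```python
def incremental_batch(rows, watermark):
    """Return rows changed since the watermark, plus the advanced watermark."""
    new_rows = [r for r in rows if r["updated_at"] > watermark]
    new_watermark = max((r["updated_at"] for r in new_rows), default=watermark)
    return new_rows, new_watermark

# ISO 8601 strings in one fixed format compare correctly as plain strings.
rows = [
    {"id": 1, "updated_at": "2024-01-01T00:00:00"},
    {"id": 2, "updated_at": "2024-01-02T00:00:00"},
    {"id": 3, "updated_at": "2024-01-03T00:00:00"},
]
changed, watermark = incremental_batch(rows, "2024-01-01T00:00:00")
```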

Schema evolution is another important consideration in transformation systems. As data structures change over time, pipelines must adapt without breaking existing workflows. Flexible schema management ensures that systems remain stable even when data formats evolve.
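One simple tactic is to add new fields with defaults so that older records still fit the current schema. The schema, field names, and default values below are hypothetical, and this sketch covers only additive changes, not renames or type changes.

```python
# Current schema with defaults; "country" is assumed to be a newly added field.
SCHEMA_V2 = {"id": None, "name": "", "country": "unknown"}

def upgrade(record: dict) -> dict:
    """Fill fields missing from older records; keep extra fields untouched."""
    return {**SCHEMA_V2, **record}

old_record = {"id": 7, "name": "Ada"}                                   # written before v2
newer_record = {"id": 8, "name": "Lin", "country": "SG", "tier": "gold"}

upgraded_old = upgrade(old_record)
upgraded_new = upgrade(newer_record)
```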

Data partitioning also plays a major role in optimization. By dividing datasets into smaller logical segments, systems can process data in parallel and reduce query latency. Partitioning strategies are often based on time, geography, or data type.
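Time-based partitioning is often expressed in the storage path itself, using a `key=value` directory layout that many query engines can use to skip irrelevant data. The prefix and helper name below are assumptions for illustration.

```python
from datetime import datetime

def partition_path(prefix: str, event_time: datetime) -> str:
    """Build a date-partitioned path in key=value style."""
    return (
        f"{prefix}/year={event_time:%Y}"
        f"/month={event_time:%m}/day={event_time:%d}/"
    )

path = partition_path("events", datetime(2024, 3, 9))
# path is "events/year=2024/month=03/day=09/"
```

A query that filters on a single day then needs to read only the files under that day's prefix.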

Caching mechanisms are used to improve performance by storing frequently accessed intermediate results. This reduces the need for repeated computations and improves overall system efficiency.
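In Python, memoizing a repeated lookup takes one decorator from the standard library. The `exchange_rate` function and its hard-coded rates are a stand-in assumption for an expensive computation or remote call.

```python
from functools import lru_cache

@lru_cache(maxsize=128)
def exchange_rate(currency: str) -> float:
    """Stand-in for an expensive lookup; results are cached per currency."""
    return {"EUR": 1.1, "GBP": 1.3}[currency]  # hypothetical rates

amounts = [("EUR", 10), ("EUR", 20), ("GBP", 5), ("EUR", 1)]
converted = [amount * exchange_rate(currency) for currency, amount in amounts]

stats = exchange_rate.cache_info()  # two misses (EUR, GBP), two hits (EUR again)
```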

Distributed Storage Systems and Performance Engineering

Distributed storage systems are designed to handle massive datasets by spreading data across multiple nodes. This architecture ensures scalability, fault tolerance, and high availability, which are essential for modern cloud-based data systems.

One of the primary characteristics of distributed storage is horizontal scalability. Instead of increasing the capacity of a single machine, systems add more nodes to handle increased workload. This allows storage systems to grow dynamically as data volumes increase.

Consistency models play a crucial role in distributed systems. These models define what a reader is guaranteed to see while data is synchronized across nodes. Strong consistency ensures that every read reflects the most recent successful write, while eventual consistency allows replicas to differ temporarily and converge over time. Each model has trade-offs: strong consistency typically costs latency and availability, whereas eventual consistency can return stale reads.
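One well-known technique for trading between these models is quorum replication, which the text above does not name and which is introduced here only as an illustration. With n replicas, a write acknowledged by w of them and a read that consults r of them are guaranteed to share at least one replica when r + w > n.

```python
def reads_see_latest_write(n: int, w: int, r: int) -> bool:
    """Quorum rule: read and write sets must overlap, which holds when r + w > n."""
    return r + w > n

# Three replicas, illustrative settings.
strong_like = reads_see_latest_write(n=3, w=2, r=2)    # sets overlap
eventual_like = reads_see_latest_write(n=3, w=1, r=1)  # a read may miss the write
```

Smaller values of w and r reduce latency because fewer replicas must respond, at the price of possibly stale reads.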

Data replication is used to ensure durability and availability. Multiple copies of data are stored across different nodes or regions. This ensures that data remains accessible even in the event of hardware failure or network issues.

Partitioning strategies are used to distribute data efficiently across nodes. Effective partitioning reduces latency and improves query performance. Poor partitioning can lead to uneven workloads and system bottlenecks.
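
The difference between even and skewed distribution can be sketched with simple hash partitioning. The function names are hypothetical; MD5 is used here only as a stable hash for placement, not for security.

```python
import hashlib
from collections import Counter


def node_for(key, n_nodes):
    # A stable hash keeps the same key on the same node across runs.
    digest = hashlib.md5(key.encode("utf-8")).hexdigest()
    return int(digest, 16) % n_nodes


def load_per_node(keys, n_nodes):
    # Count how many records each node would receive.
    counts = Counter(node_for(key, n_nodes) for key in keys)
    return [counts.get(node, 0) for node in range(n_nodes)]
```

Many distinct keys spread across the nodes, but if most records share one key value (a "hot" key), they all land on a single node, which is the uneven workload described above.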

Indexing techniques are used to accelerate data retrieval. Indexes allow systems to locate specific records without scanning entire datasets. This is especially important in large-scale analytics environments.
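
The idea can be illustrated with an in-memory index that maps each value of a column to the positions of the rows containing it. This is a simplified sketch with hypothetical function names; production systems use on-disk structures such as B-trees, but the lookup principle is the same.

```python
def build_index(rows, column):
    index = {}
    for position, row in enumerate(rows):
        # Map each distinct value to the row positions where it occurs.
        index.setdefault(row[column], []).append(position)
    return index


def lookup(rows, index, value):
    # Jump directly to matching rows instead of scanning every row.
    return [rows[position] for position in index.get(value, [])]
```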

Compression techniques help reduce storage costs and improve transfer speeds. Smaller data takes less space to store and less time to move across the network or read from disk, at the cost of some CPU time to compress and decompress it.
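
A quick way to see the effect is to compress a repetitive payload with Python's standard `zlib` module. The sample payload is made up for this sketch, and real compression ratios depend heavily on the data and the codec.

```python
import zlib

# Hypothetical log-like payload with a lot of repetition.
payload = b'{"event": "click", "page": "/home"}\n' * 1000

compressed = zlib.compress(payload, 6)   # 6 is a mid-range compression level
restored = zlib.decompress(compressed)   # lossless round trip

ratio = len(compressed) / len(payload)   # fraction of the original size
```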

Data Operations, Monitoring, and Reliability Engineering

Data operations focus on ensuring that data systems run smoothly in production environments. This includes monitoring system health, managing performance, and responding to incidents.

Monitoring systems collect metrics such as processing speed, error rates, system load, and data throughput. These metrics provide visibility into system performance and help detect anomalies early.

Alerting mechanisms notify engineers when system behavior deviates from expected patterns. This allows for quick intervention before issues escalate into major failures.
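
One simple way to define "deviates from expected patterns" is a z-score test against recent history. The function name and the default threshold of three standard deviations are assumptions for this sketch; real alerting rules are usually tuned per metric.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    # Too little history to estimate normal behavior.
    if len(history) < 2:
        return False
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # A perfectly flat metric: any change is a deviation.
        return latest != mu
    # Flag values more than `threshold` standard deviations from the mean.
    return abs(latest - mu) / sigma > threshold
```

For a throughput history of 100, 102, 98, 101, and 99 records per second, a reading of 101 stays within the normal range while a reading of 150 is flagged.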

Incident response processes are used to handle system outages or performance degradation. These processes involve identifying root causes, applying fixes, and restoring system functionality.

Reliability engineering focuses on designing systems that can withstand failures without significant disruption. This is achieved through redundancy, failover systems, and distributed architectures.

Capacity planning ensures that systems can handle future growth in data volume and processing demand. Engineers must anticipate workload increases and allocate resources accordingly to prevent performance bottlenecks.
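
A rough capacity estimate can be sketched by projecting compound growth until provisioned capacity is reached. The function name and the assumption of a constant monthly growth rate are illustrative; real growth is rarely this regular.

```python
def months_until_full(current_tb, capacity_tb, monthly_growth):
    if current_tb <= 0 or monthly_growth <= 0:
        raise ValueError("current size and growth rate must be positive")
    months = 0
    size = current_tb
    # Apply compound growth month by month until capacity is reached.
    while size < capacity_tb:
        size *= 1 + monthly_growth
        months += 1
    return months
```

For example, 100 TB growing at 10% per month reaches a 200 TB limit during the eighth month, which gives engineers a concrete deadline for adding capacity.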

Automation plays a key role in operations management. Automated recovery systems can detect and resolve common issues without human intervention, improving system resilience and reducing downtime.
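
A basic form of automated recovery is retrying a failed task with exponential backoff. The sketch below uses hypothetical names and takes the sleep function as a parameter so that the delay can be replaced in tests.

```python
import time


def run_with_retries(task, max_attempts=3, base_delay=0.01, sleep=time.sleep):
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            # Out of attempts: surface the failure to the caller.
            if attempt == max_attempts:
                raise
            # Wait base_delay, then 2x, then 4x, and so on before retrying.
            sleep(base_delay * 2 ** (attempt - 1))
```

Transient failures such as a brief network interruption are absorbed by the retries, while persistent failures still raise and can trigger an alert.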

Data Engineering Integration with Analytics and Machine Learning Systems

Data engineering serves as the foundation for analytics and machine learning systems. Without well-structured data pipelines, these advanced systems cannot function effectively.

Analytics systems rely on clean, structured data to generate insights. Data engineers ensure that data is properly formatted and stored in a way that supports efficient querying and reporting.

Machine learning systems require large volumes of high-quality data for training models. Data engineering pipelines are responsible for preparing this data by cleaning, labeling, and transforming it into suitable formats.

Feature engineering is a key process where raw data is converted into meaningful variables that can be used in predictive models. This requires a deep understanding of both data structures and business context.
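
As a small illustration, the following sketch derives model-ready variables from a raw transaction record. The field names and the 1000 threshold for a "large" amount are hypothetical business assumptions, not values taken from any real system.

```python
from datetime import datetime


def build_features(txn):
    ts = datetime.fromisoformat(txn["timestamp"])
    return {
        "amount": txn["amount"],
        "hour_of_day": ts.hour,
        # weekday() returns 5 for Saturday and 6 for Sunday.
        "is_weekend": ts.weekday() >= 5,
        # Hypothetical business rule for flagging large transactions.
        "is_large": txn["amount"] > 1000,
    }
```

The raw timestamp is of little direct use to a model, whereas the hour of day and the weekend flag encode patterns that reflect how the business actually operates.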

Real-time analytics systems depend on streaming data pipelines. These systems provide immediate insights by processing data as it is generated. This is particularly useful in scenarios such as fraud detection, system monitoring, and customer behavior analysis.
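
A core building block of streaming analytics is windowed aggregation. The sketch below counts events per fixed, non-overlapping (tumbling) window, assuming each event is a pair of an integer epoch timestamp in seconds and a payload; both the event shape and the function name are illustrative.

```python
from collections import Counter


def tumbling_window_counts(events, window_seconds=60):
    counts = Counter()
    for timestamp, _payload in events:
        # Align each event to the start of its window.
        window_start = timestamp - (timestamp % window_seconds)
        counts[window_start] += 1
    return dict(counts)
```

A real streaming engine would emit each window's count as soon as the window closes instead of after the input ends, but the grouping logic is the same.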

Integration between data engineering and machine learning systems requires careful coordination to ensure data consistency and availability. Pipelines must be designed to support both batch training and real-time inference workloads.

Career Impact and Professional Growth in Data Engineering Fields

The field of data engineering offers strong career growth opportunities due to increasing demand for data-driven decision-making across industries. Organizations require skilled professionals who can design and maintain scalable data systems.

Professionals in this field often progress into roles such as senior data engineer, data architect, cloud solutions engineer, or analytics engineer. These roles involve greater responsibility in system design, optimization, and strategic planning.

Skill development in data engineering also opens pathways into related fields such as machine learning engineering and cloud architecture. The overlap between these disciplines allows professionals to diversify their expertise.

Hands-on experience with distributed systems, pipeline design, and cloud platforms is highly valued in the industry. Certifications serve as structured validation of these skills and help professionals demonstrate their capabilities to employers.

Continuous learning is essential in this field due to rapid technological advancements. New tools, frameworks, and architectural patterns are constantly emerging, requiring professionals to stay updated with industry trends.

Strategic Value of Data Engineering Expertise in Organizations

Data engineering plays a strategic role in enabling organizations to become data-driven. It provides the infrastructure required to transform raw data into actionable insights that support decision-making.

Organizations rely on data engineers to ensure that data systems are reliable, scalable, and efficient. When these systems are unreliable, analytics and machine learning initiatives inherit late, incomplete, or inconsistent data.

Data engineering also supports operational efficiency by automating data workflows and reducing manual intervention. This allows organizations to process larger volumes of data with fewer resources.

As data continues to grow in importance, the role of data engineering will remain central to digital transformation strategies across industries.

Conclusion

The AWS Certified Data Engineer Associate certification represents a structured validation of practical cloud data engineering capability rather than a purely academic achievement. Its value is rooted in how closely it reflects real-world data systems that organizations rely on for operational intelligence, analytics, and machine learning workflows. In modern cloud-driven environments, data is no longer a static asset stored in isolated databases; it is a continuously flowing resource that must be captured, transformed, secured, and delivered across multiple systems in near real time.

This certification aligns with that reality by focusing on the end-to-end data lifecycle. It emphasizes ingestion pipelines, transformation logic, scalable storage systems, operational monitoring, and governance frameworks. Each of these areas reflects essential components of enterprise-grade data platforms. Professionals who engage with this certification are exposed to the architectural thinking required to manage distributed systems where performance, reliability, and cost efficiency must be balanced simultaneously.

One of the most important aspects of its value is its emphasis on applied knowledge. Data engineering is not a discipline that can be mastered through theory alone. It requires familiarity with system behavior under load, understanding of failure scenarios, and the ability to optimize workflows based on real constraints. The certification framework reflects this by focusing on scenarios that simulate operational challenges such as pipeline failures, data inconsistency, scaling bottlenecks, and security enforcement.

From a career perspective, this certification functions as a signal of readiness for roles that involve working with large-scale data systems. It does not replace experience but complements it by demonstrating structured knowledge across key domains. In competitive job markets, such validation helps differentiate professionals who understand cloud data architecture principles from those who only have fragmented exposure to tools or platforms.

The practical relevance of this certification becomes even clearer when viewed through the lens of modern data-driven organizations. Businesses today depend heavily on continuous data streams coming from applications, devices, and user interactions. This creates a need for systems that can process both real-time and historical data efficiently. The certification reflects this requirement by emphasizing ingestion models, transformation workflows, and storage optimization techniques that align with production-scale environments.

Real-time processing has become a major expectation in many industries. Organizations now require immediate insights for fraud detection, customer behavior analysis, system monitoring, and operational decision-making. This demands architectures that support streaming data pipelines and event-driven processing. The certification indirectly prepares professionals for these challenges by reinforcing design principles that support continuous data movement and low-latency processing.

Security and governance are also deeply embedded into modern data strategies. As data volumes increase, so does the importance of protecting sensitive information and ensuring compliance with regulatory frameworks. The certification emphasizes these aspects by covering access control mechanisms, encryption practices, and data lifecycle governance. These elements are essential for maintaining trust and integrity in enterprise systems.

Another important dimension of the certification’s value lies in its integration with broader cloud ecosystems. Data engineering is not an isolated discipline; it serves as the foundation for analytics, machine learning, and business intelligence systems. Without properly designed data pipelines and storage structures, these advanced systems cannot function effectively.

In analytics environments, data engineers are responsible for ensuring that datasets are clean, structured, and optimized for querying. This allows analysts and decision-makers to work with reliable information without dealing with raw or inconsistent data. The certification reinforces this responsibility by emphasizing transformation processes and storage strategies that support analytical workloads.

In machine learning environments, the importance of data engineering becomes even more pronounced. Machine learning models depend on large volumes of high-quality data for training and validation. Data engineers are responsible for preparing this data, maintaining pipelines, and ensuring consistency across datasets used for model development and inference. The certification supports this understanding by focusing on lifecycle management and transformation workflows that are essential for machine learning pipelines.

Cloud architecture integration is another key aspect of modern data engineering. Data systems must interact seamlessly with compute, storage, networking, and security layers within cloud platforms. Engineers must understand how these components work together to create scalable and resilient systems. The certification encourages this architectural mindset by focusing on system design principles rather than isolated tool usage.

The long-term career impact of this certification is closely tied to the increasing demand for data engineering expertise. As organizations continue to adopt cloud-first strategies, the need for professionals who can design, build, and maintain scalable data systems will continue to grow. This creates strong career opportunities across industries such as finance, healthcare, retail, technology, and logistics.

Professionals with this certification often progress into advanced roles that involve greater architectural responsibility. These roles may include cloud data architect, analytics engineer, or platform engineering specialist. The certification provides a structured foundation that supports this progression by reinforcing core principles of scalable and reliable system design.

Another important benefit is adaptability. Cloud technologies evolve rapidly, and professionals who understand foundational principles are better equipped to adapt to new tools and platforms. The certification emphasizes conceptual understanding of data systems, which remains relevant even as specific technologies change over time.

Ultimately, this certification represents more than a credential. It reflects a structured approach to understanding how data flows through modern cloud ecosystems and how that data can be transformed into meaningful insights. It reinforces a mindset focused on scalability, efficiency, and reliability, which are essential qualities in any data-driven environment.