DevOps is a modern software engineering approach that combines software development and IT operations into a single continuous workflow. Instead of separating development and operations teams, DevOps brings them together to improve speed, reliability, and efficiency in delivering software. The focus is on building, testing, and releasing applications in shorter cycles while maintaining high quality and stability.

This approach reduces the delays that siloed teams and manual processes traditionally cause. By integrating collaboration and automation into every stage of the software lifecycle, DevOps allows organizations to respond faster to business needs and user demands. Updates, bug fixes, and new features can be delivered more frequently with less risk of disrupting system performance.

Core Principles of DevOps

DevOps is built on several foundational principles that define how modern software delivery works. Continuous integration is one of the key practices: developers frequently merge their code into a shared repository, and each merge is built and tested automatically. This surfaces integration issues early and helps keep the software stable throughout development.

Continuous delivery ensures that code is always in a deployable state. Once changes pass automated testing, they can be released quickly and safely. This reduces deployment risks and shortens release cycles.

Automation is another critical principle, used to handle repetitive tasks such as testing, deployment, and infrastructure management. Infrastructure as code is also widely used, allowing systems to be defined and managed through code rather than manual configuration. Collaboration across teams ensures shared responsibility for application performance and delivery.

Challenges in Traditional Software Development

Before DevOps practices became common, software development followed a slow and fragmented process. Development teams built applications independently and then handed them over to operations teams for deployment. This separation often caused delays, miscommunication, and system inconsistencies.

Manual deployment processes increased the risk of human error and made releases time-consuming. Differences between development, testing, and production environments often led to unexpected failures during deployment.

Testing was usually performed late in the development cycle, which meant that critical issues were discovered after significant resources had already been spent. As software systems grew more complex, these limitations made traditional approaches inefficient and unreliable.

Introduction to Azure Cloud Platform

Azure is a cloud computing platform from Microsoft that provides a wide range of services for building, deploying, and managing applications. It removes the need to own and operate physical infrastructure by offering computing resources through global data centers. These resources include virtual machines, storage systems, databases, networking tools, and analytics services.

One of Azure’s key strengths is scalability. Resources can be configured to expand or shrink automatically based on demand, which helps maintain performance while controlling costs. The platform also provides built-in security features such as identity management, encryption, and monitoring to protect applications and data across environments.

Azure’s global infrastructure allows applications to be deployed closer to users, improving speed and reliability across different regions.

How Azure Supports Software Development

Azure provides a complete environment for modern software development. Developers can build applications using multiple programming languages and frameworks while leveraging cloud-based services to improve scalability and performance.

The platform supports automated workflows that streamline processes such as building code, running tests, and deploying applications. This reduces manual effort and ensures consistency across different environments.

Azure also supports global application hosting, allowing systems to run in multiple regions. This improves availability and reduces latency for users in different parts of the world.

Connection Between DevOps and Azure

DevOps practices align closely with cloud platforms like Azure. The combination allows organizations to automate software delivery pipelines and manage infrastructure more efficiently.

In Azure environments, infrastructure can be defined and deployed using code. This keeps configurations consistent across development, testing, and production environments. It also reduces setup time and the manual errors that come with hand-configured environments.

Azure supports continuous integration and continuous delivery workflows, enabling teams to automatically build, test, and deploy applications whenever changes are made. This leads to faster release cycles and improved system reliability.

Overview of Azure DevOps Ecosystem

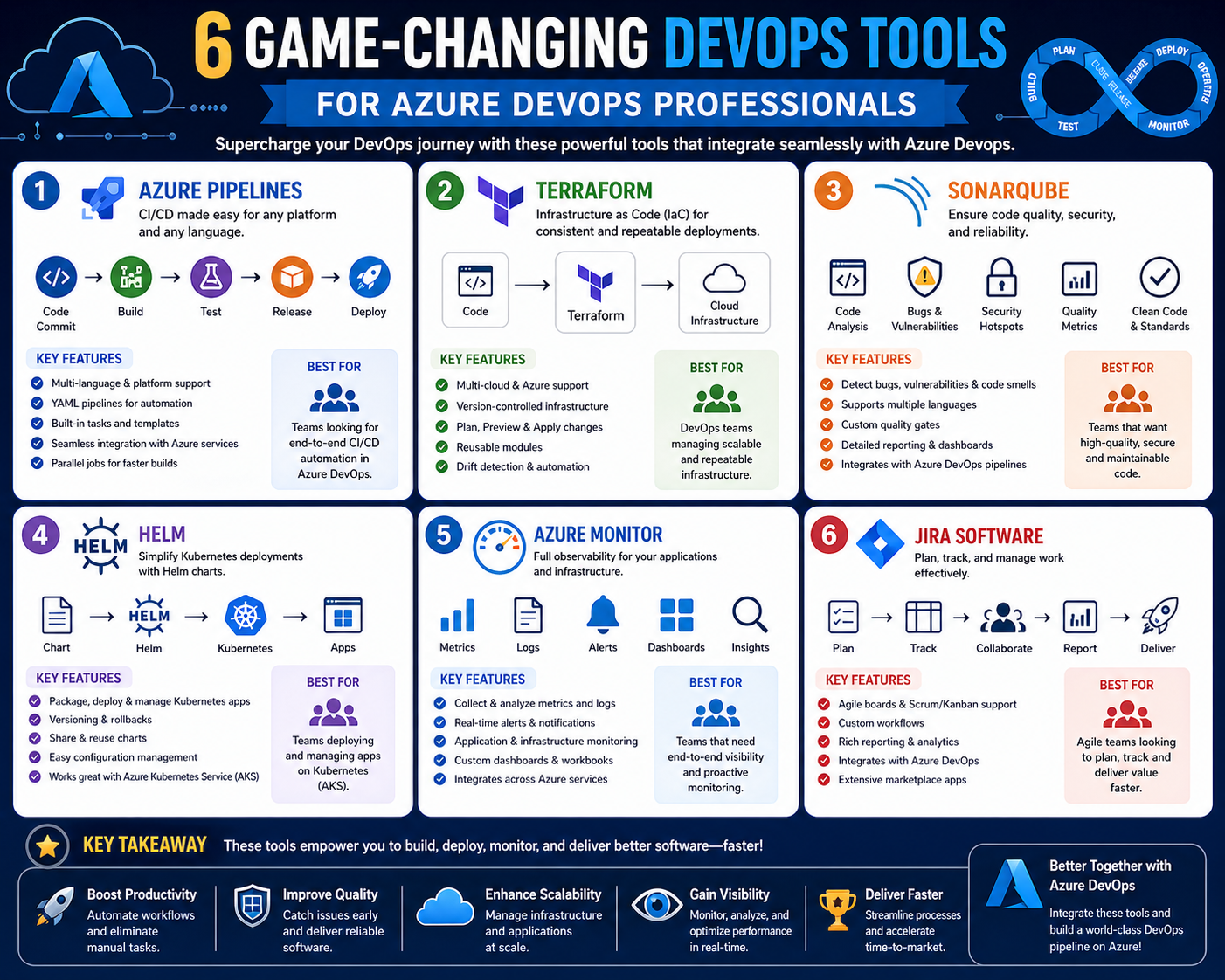

The Azure DevOps ecosystem consists of integrated tools designed to support the entire software development lifecycle. These tools help teams plan work, manage code, automate builds, run tests, and deploy applications.

Work tracking systems help organize tasks and monitor progress across projects. Source control systems manage code versions and allow collaboration among developers.

Automated pipelines handle build, testing, and deployment processes, reducing manual intervention. Testing systems ensure software quality before release, while package management tools handle dependencies and reusable components.

Together, these tools create a unified environment that improves productivity and collaboration across development teams.

Collaboration in Cloud-Based Development

Collaboration is essential in modern software development, especially in cloud environments where teams may be distributed across different locations. DevOps promotes shared responsibility, meaning all team members contribute to the success of the software lifecycle.

This approach improves communication, reduces delays, and helps teams detect and resolve issues earlier in the development process. Better collaboration leads to more stable systems and faster delivery of updates and features.

Role of Automation in DevOps Workflows

Automation is a key component of DevOps that improves efficiency and reduces manual workload. Tasks such as code compilation, testing, deployment, and system monitoring are automated using pipelines and scripts.

This ensures consistency across environments and minimizes human errors. Automated testing helps detect bugs early, while monitoring tools continuously track system performance and alert teams when issues arise.

Automation allows teams to focus on innovation and development rather than repetitive operational tasks.

Evolution of Integrated Development Systems

Software development has evolved toward integrated systems that combine multiple tools into a single platform. Instead of using separate tools for coding, testing, deployment, and monitoring, organizations now use unified environments that streamline workflows.

These integrated systems provide better visibility across the entire development lifecycle. Teams can track changes, monitor performance, and manage deployments from one centralized system.

This approach reduces complexity, improves coordination, and supports faster and more reliable software delivery in modern cloud environments.

Azure DevOps Architecture and Service Integration Model

Azure DevOps is designed as a modular but tightly integrated ecosystem where each service contributes to a specific stage of the software delivery lifecycle. The architecture is structured in a way that allows teams to either adopt individual services independently or combine them into a fully unified development pipeline. This flexibility makes it suitable for both small-scale development teams and large enterprise environments.

At the core of this architecture is the concept of interconnected services that share data and workflows. Work items created in planning systems can be linked directly to source code changes, build pipelines, and deployment stages. This traceability ensures that every change in the system can be tracked from requirement to production. It also improves accountability and visibility across teams, enabling better coordination between development, testing, and operations functions.

The integration model also supports extensibility, allowing organizations to connect external tools and services into their workflow. This ensures that Azure DevOps can adapt to different engineering environments without disrupting existing processes.

Source Control Management and Branching Strategies

Source control is a foundational component of modern software development, and Azure DevOps provides a structured environment for managing code repositories. It supports distributed version control systems that allow multiple developers to work on the same codebase simultaneously without overwriting each other's work; conflicting edits are surfaced at merge time rather than silently lost. Every change is tracked, versioned, and stored, enabling teams to revert to previous states when needed.

Branching strategies play a critical role in organizing development work. Feature branching allows developers to work on new functionality in isolated branches without affecting the main codebase. Once the feature is complete and tested, it is merged back into the primary branch through controlled review processes. Release branching is used to stabilize code for production deployment, while hotfix branching is used for urgent production fixes.

Code review mechanisms are integrated into the workflow, ensuring that changes are evaluated before being merged. This improves code quality and reduces the likelihood of introducing defects into production environments.

Continuous Integration Pipeline Design Principles

Continuous integration is a practice where code changes are automatically built and tested whenever they are committed to a repository. In Azure DevOps, this process is implemented through pipeline systems that define a sequence of automated steps. These steps typically include code compilation, unit testing, and validation checks.

The primary goal of continuous integration is to detect issues early in the development cycle. Instead of waiting until the end of a release cycle to test software, every change is validated as soon as it is committed. This reduces integration problems and helps keep the shared codebase in a working state.

Pipeline design often follows a staged approach where each step depends on the successful completion of the previous one. This structured flow ensures that only validated code progresses through the system, improving overall reliability and consistency in software delivery.
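The staged flow described above can be sketched in a few lines of Python. This is an illustrative model rather than Azure Pipelines itself: the `run_pipeline` function and the stage names are hypothetical, and each stage is simply a callable that returns `True` on success.

```python
from typing import Callable, List, Tuple

# A stage is a (name, step) pair; the step returns True when it succeeds.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for name, step in stages:
        if not step():
            # A failed stage blocks everything after it.
            break
        completed.append(name)
    return completed


# Hypothetical stages: the unit-test stage fails, so packaging never runs.
example_stages = [
    ("compile", lambda: True),
    ("unit_tests", lambda: False),
    ("package", lambda: True),
]
print(run_pipeline(example_stages))  # ['compile']
```

Because a failure stops the loop, nothing downstream of a broken stage ever sees unvalidated code.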

Continuous Delivery and Deployment Automation Flow

Continuous delivery extends continuous integration by ensuring that validated code is always ready for deployment. Once code passes all automated tests, it is packaged and prepared for release to different environments such as staging or production.

Deployment automation removes the need for manual intervention during release cycles. Instead of manually copying files or configuring servers, automated pipelines handle the entire process. This includes environment provisioning, application deployment, configuration updates, and post-deployment verification.

Different deployment strategies are used depending on system requirements. Rolling deployments gradually replace older versions with new ones, while parallel deployments allow multiple versions to run simultaneously during transition periods. These approaches help reduce downtime and minimize risk during updates.
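A rolling deployment can be modeled as replacing a fleet of instances one batch at a time. The sketch below is a simplified simulation, assuming instances are represented only by their version label; the `rolling_update` function is hypothetical and skips the health checks a real rollout would run between batches.

```python
from typing import List


def rolling_update(instances: List[str], new_version: str, batch_size: int) -> List[List[str]]:
    """Replace versions batch by batch and record the fleet after each batch."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    fleet = list(instances)  # work on a copy so the caller's list is untouched
    history = []
    for start in range(0, len(fleet), batch_size):
        for i in range(start, min(start + batch_size, len(fleet))):
            fleet[i] = new_version
        history.append(list(fleet))
    return history


# Three instances updated two at a time: old and new versions coexist mid-rollout.
for snapshot in rolling_update(["v1", "v1", "v1"], "v2", batch_size=2):
    print(snapshot)
```

A smaller batch size keeps more of the old version serving traffic at any moment, at the cost of a longer rollout.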

Pipeline Configuration Using Declarative Workflow Models

Modern pipeline systems often use declarative configuration models that define workflows as structured code. This allows teams to version-control their pipeline definitions alongside application code. These configurations describe how software should be built, tested, and deployed in a repeatable manner.

Declarative pipelines improve consistency because they eliminate manual setup variations between environments. Every execution follows the same defined structure, ensuring predictable outcomes. This also makes pipelines easier to audit and troubleshoot since every step is explicitly defined in configuration files.

Pipeline reuse is another important concept. Templates can be created for common workflows and reused across multiple projects, reducing duplication and improving standardization across development teams.
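To illustrate template reuse, the following sketch treats a pipeline definition as plain data and derives project-specific pipelines from a shared base. The structure, the keys, and the `from_template` helper are assumptions made for illustration; they do not reproduce the actual Azure Pipelines YAML schema.

```python
import copy
from typing import Any, Dict

# Hypothetical shared template: a pipeline described as plain data.
BASE_TEMPLATE: Dict[str, Any] = {
    "trigger": ["main"],
    "steps": ["restore", "build", "test"],
    "variables": {"configuration": "Release"},
}


def from_template(template: Dict[str, Any], overrides: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new pipeline definition: template values plus per-project overrides."""
    pipeline = copy.deepcopy(template)  # never mutate the shared template
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(pipeline.get(key), dict):
            pipeline[key].update(value)  # merge nested settings
        else:
            pipeline[key] = value  # replace lists and scalars outright
    return pipeline


web_pipeline = from_template(BASE_TEMPLATE, {"variables": {"project": "web"}})
print(web_pipeline["variables"])  # {'configuration': 'Release', 'project': 'web'}
```

Since the definition is data, it can be stored in version control and reviewed like any other change.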

Infrastructure as Code in Cloud Deployment Environments

Infrastructure as code is a practice that enables infrastructure resources to be defined and managed through code rather than manual configuration. In cloud environments, this approach is essential for maintaining consistency and scalability.

Using infrastructure as code, environments such as development, testing, and production can be provisioned automatically using predefined templates. Because every environment is created from the same definitions, they share the same structure, which reduces configuration drift and deployment inconsistencies.

Infrastructure definitions can include virtual machines, networking components, storage accounts, and security configurations. These definitions are stored in version control systems, allowing teams to track changes and maintain historical records of infrastructure evolution.

Containerization and Microservices Deployment Patterns

Containerization has become a key practice in modern software architecture. It allows applications to be packaged with all their dependencies into lightweight, portable units that can run consistently across different environments.

In DevOps environments, containers are used to simplify deployment and improve scalability. Applications are broken down into smaller services that can be developed, deployed, and scaled independently. This microservices approach improves system flexibility and resilience.

Container orchestration systems manage the deployment, scaling, and monitoring of containerized applications. These systems automatically distribute workloads, handle failures, and ensure optimal resource utilization across clusters.

Cloud-Native Application Hosting and Scaling Models

Cloud-native applications are designed to take full advantage of cloud computing capabilities. These applications are built to be scalable, resilient, and distributed across multiple environments.

Scaling models in cloud environments can be vertical or horizontal. Vertical scaling increases the resources of existing systems, while horizontal scaling adds more instances to distribute workloads. Horizontal scaling is more commonly used in cloud-native architectures due to its flexibility and fault tolerance.

Load balancing systems distribute incoming traffic across multiple instances, ensuring that no single system becomes overloaded. This improves performance and maintains availability during high-demand periods.
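One of the simplest distribution policies is round robin, where requests are handed to instances in a repeating cycle. The sketch below shows only that policy; the `round_robin` helper and the instance names are hypothetical, and production load balancers usually also account for health and current load.

```python
import itertools
from typing import Iterator, List


def round_robin(instances: List[str]) -> Iterator[str]:
    """Return an iterator that cycles through instances so each gets an equal share."""
    if not instances:
        raise ValueError("at least one instance is required")
    return itertools.cycle(instances)


balancer = round_robin(["app-1", "app-2", "app-3"])
assignments = [next(balancer) for _ in range(5)]
print(assignments)  # ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```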

Testing Automation and Quality Assurance Integration

Automated testing is a critical part of modern software delivery pipelines. It ensures that applications meet quality standards before being deployed to production environments.

Different types of automated tests are used throughout the development lifecycle. Unit tests validate individual components, integration tests ensure that different modules work together correctly, and end-to-end tests simulate real user interactions.

Test automation reduces manual effort and improves reliability by ensuring that every code change is validated consistently. It also enables faster feedback loops, allowing developers to identify and fix issues earlier in the development process.

Observability and System Monitoring Frameworks

Observability is the ability to understand the internal state of a system based on external outputs such as logs, metrics, and traces. In cloud environments, observability is essential for maintaining performance and reliability.

Monitoring systems collect data from applications and infrastructure to provide real-time insights into system behavior. Metrics track performance indicators such as response time, resource usage, and error rates. Logs provide detailed records of system events, while tracing follows the flow of requests across distributed systems.

These components work together to help teams detect issues, diagnose problems, and optimize system performance. Observability also supports proactive maintenance by identifying potential issues before they impact users.

Security Integration in DevOps Workflows

Security is an integral part of modern DevOps practices and is often integrated directly into development pipelines. This approach ensures that security checks are performed continuously rather than at the end of the development cycle.

Identity management systems control access to resources based on roles and permissions. This ensures that only authorized users can access specific systems or perform certain actions. Encryption is used to protect data both at rest and in transit, preventing unauthorized access.

Automated security scanning tools analyze code and dependencies for vulnerabilities during the build process. This helps identify security risks early and reduces the likelihood of vulnerabilities reaching production environments.

Governance and Policy Enforcement in Cloud Systems

Governance frameworks ensure that cloud environments operate in a controlled and compliant manner. Policies define rules for resource usage, security configurations, and operational standards.

Policy enforcement mechanisms automatically evaluate resources against predefined rules and take corrective actions when violations are detected. This ensures consistency across environments and helps maintain compliance with organizational standards.
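The evaluation step can be pictured as running every resource through a list of rules. In the sketch below, the resource fields, the two rules, and the `find_violations` function are all hypothetical, and the code only reports violations rather than correcting them.

```python
from typing import Any, Callable, Dict, List, Tuple

Resource = Dict[str, Any]
Policy = Tuple[str, Callable[[Resource], bool]]

# Hypothetical rules: each returns True when the resource is compliant.
POLICIES: List[Policy] = [
    ("must-have-owner-tag", lambda r: "owner" in r.get("tags", {})),
    ("storage-must-be-encrypted",
     lambda r: r.get("type") != "storage" or r.get("encrypted") is True),
]


def find_violations(resources: List[Resource],
                    policies: List[Policy] = POLICIES) -> List[Tuple[str, str]]:
    """Return (resource name, policy name) for every failed check."""
    violations = []
    for resource in resources:
        for policy_name, is_compliant in policies:
            if not is_compliant(resource):
                violations.append((resource["name"], policy_name))
    return violations


inventory = [
    {"name": "vm-1", "type": "vm", "tags": {"owner": "team-a"}},
    {"name": "st-1", "type": "storage", "encrypted": False, "tags": {}},
]
for name, policy in find_violations(inventory):
    print(f"{name} violates {policy}")
```

A remediation step would consume this list and either fix each resource or block the deployment that created it.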

Resource tagging and classification systems are also used to organize cloud assets and track usage across teams. This improves cost management and operational transparency.

Release Management and Deployment Strategies at Scale

Release management involves coordinating the delivery of software updates across different environments. In large-scale systems, structured deployment strategies are essential to minimize risk and ensure stability.

Blue-green deployment models maintain two identical environments where one serves live traffic while the other is used for updates. Once the new version is validated, traffic is switched to the updated environment.

Canary deployments gradually expose new versions to a small subset of users before full rollout. This allows teams to monitor performance and detect issues early. Feature flags provide additional control by enabling or disabling specific functionality without redeploying the application.
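A common way to choose the canary subset is to hash a stable user identifier into a bucket and compare it with the rollout percentage. The sketch below assumes that approach; the `in_canary` function and the user ID format are hypothetical. The same check can back a percentage-based feature flag.

```python
import hashlib


def in_canary(user_id: str, rollout_percent: int) -> bool:
    """Deterministically place a user in the canary group.

    The user ID is hashed into a bucket from 0 to 99; users whose bucket is
    below rollout_percent receive the new version.
    """
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < rollout_percent


# Roughly 10% of these hypothetical users should land in the canary group.
users = [f"user-{n}" for n in range(1000)]
canary_share = sum(in_canary(u, 10) for u in users) / len(users)
print(f"{canary_share:.1%} of users see the new version")
```

Because the bucket depends only on the user ID, a user stays in the same group across requests, and raising the percentage only ever adds users to the canary group.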

Scalability Patterns in Enterprise DevOps Systems

Enterprise DevOps systems are designed to handle large-scale workloads across distributed environments. Scalability is achieved through a combination of infrastructure automation, load distribution, and modular architecture design.

Distributed systems allow workloads to be processed across multiple nodes, improving performance and reliability. Auto-scaling mechanisms dynamically adjust resource allocation based on demand, ensuring efficient resource usage.
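The core of an auto-scaling decision can be expressed as a proportional rule: scale the instance count by the ratio of observed load to target load, then clamp the result. The sketch below assumes CPU utilization as the metric; the `desired_instances` function and its default limits are hypothetical, and real autoscale services add details such as cooldown periods.

```python
import math


def desired_instances(current: int, cpu_percent: float, target_percent: float,
                      minimum: int = 1, maximum: int = 10) -> int:
    """Return the instance count that would bring average CPU back to the target."""
    if current < 1 or target_percent <= 0:
        raise ValueError("current must be >= 1 and target_percent must be > 0")
    wanted = math.ceil(current * cpu_percent / target_percent)
    # Clamp so the fleet never drops below the floor or grows past the ceiling.
    return max(minimum, min(maximum, wanted))


print(desired_instances(current=4, cpu_percent=90, target_percent=60))  # 6
print(desired_instances(current=4, cpu_percent=15, target_percent=60))  # 1
```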

Decoupling components through service-oriented architectures improves system flexibility and allows individual components to scale independently without affecting the entire system.

Reliability Engineering and System Stability Practices

Reliability engineering focuses on ensuring that systems remain stable and available under varying conditions. This involves designing systems that can tolerate failures and recover automatically without significant disruption.

Redundancy is used to ensure that backup systems are available in case of failure. Failover mechanisms automatically switch traffic to healthy systems when issues are detected.
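Failover reduces to a simple selection rule: try endpoints in priority order and use the first one that passes its health check. The sketch below assumes health is reported by a caller-supplied function; `pick_endpoint` and the endpoint names are hypothetical.

```python
from typing import Callable, Iterable


def pick_endpoint(endpoints: Iterable[str], is_healthy: Callable[[str], bool]) -> str:
    """Return the first healthy endpoint, in priority order."""
    for endpoint in endpoints:
        if is_healthy(endpoint):
            return endpoint
    raise RuntimeError("no healthy endpoint available")


# Hypothetical health state: the primary is down, so traffic goes to the standby.
health = {"primary": False, "standby": True}
print(pick_endpoint(["primary", "standby"], lambda name: health[name]))  # standby
```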

Stress testing and performance testing are used to evaluate system behavior under high load conditions. These practices help identify weaknesses and improve system resilience before deployment into production environments.

Advanced Azure DevOps Workflow Optimization Techniques

Modern DevOps environments rely heavily on workflow optimization to ensure that software delivery pipelines remain efficient, scalable, and resilient under increasing complexity. Optimization begins with structuring pipelines in a way that minimizes redundant operations while maximizing parallel execution of tasks. Instead of executing all steps sequentially, modern workflows distribute workloads across multiple agents, allowing builds, tests, and deployments to occur simultaneously. This significantly reduces overall execution time and improves system throughput.

Another important aspect of workflow optimization is caching frequently used dependencies and artifacts. By storing previously downloaded packages, compiled binaries, and intermediate build outputs, systems avoid repetitive processing, which reduces both compute costs and pipeline duration. Incremental builds are also widely used, where only modified components of a codebase are rebuilt instead of the entire application. This approach is particularly effective in large-scale enterprise systems where full builds can be resource-intensive.
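Dependency caching usually hinges on a cache key derived from whatever determines the dependencies, such as a lockfile. The sketch below assumes an in-memory dictionary as the cache; the `cache_key` and `restore_or_build` helpers are hypothetical and stand in for whatever caching task a pipeline system provides.

```python
import hashlib
from typing import Callable, Dict


def cache_key(prefix: str, lockfile_text: str) -> str:
    """Derive a cache key from the dependency lockfile contents."""
    digest = hashlib.sha256(lockfile_text.encode("utf-8")).hexdigest()
    return f"{prefix}-{digest[:16]}"


def restore_or_build(cache: Dict[str, str], key: str, build: Callable[[], str]) -> str:
    """Reuse a cached artifact when the key matches; otherwise build and store it."""
    if key not in cache:
        cache[key] = build()
    return cache[key]


cache: Dict[str, str] = {}
key = cache_key("deps", "requests==2.31.0\n")
restore_or_build(cache, key, lambda: "installed packages")  # first run builds
restore_or_build(cache, key, lambda: "installed packages")  # second run is a cache hit
print(len(cache))  # 1
```

Any change to the lockfile produces a different key, so stale dependencies are never restored by accident.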

Pipeline efficiency is further improved through conditional execution logic. Instead of running every step for every commit, workflows can be configured to trigger specific stages only when relevant changes are detected. This prevents unnecessary execution of testing or deployment stages when unrelated code modifications occur. Over time, these optimization strategies collectively contribute to faster release cycles and more predictable delivery timelines.
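Conditional execution is often driven by path filters: each stage lists the file patterns it cares about and runs only when a changed file matches. The sketch below assumes glob-style patterns; the `stages_to_run` function, the stage names, and the patterns are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Dict, List


def stages_to_run(changed_files: List[str], stage_filters: Dict[str, List[str]]) -> List[str]:
    """Return the stages whose path patterns match at least one changed file."""
    return [
        stage
        for stage, patterns in stage_filters.items()
        if any(fnmatchcase(path, pattern) for path in changed_files for pattern in patterns)
    ]


# Hypothetical filters: code changes trigger the build, documentation changes do not.
filters = {"build": ["src/*"], "docs": ["docs/*", "*.md"]}
print(stages_to_run(["docs/guide.md"], filters))  # ['docs']
```

Note that in this pattern syntax `*` also matches path separators, so `src/*` covers nested directories.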

Advanced Source Control Collaboration Models in Enterprise Systems

In large-scale development environments, source control is not just a storage mechanism but a structured collaboration system that governs how teams interact with shared codebases. Advanced collaboration models focus on maintaining stability while enabling parallel development across multiple teams. Trunk-based development is one such model where developers frequently integrate changes into a central branch, reducing long-lived feature branches and minimizing merge conflicts.

Another widely used model is Git flow, which organizes development into structured branches such as feature, release, and hotfix branches. Each branch type serves a specific purpose in the development lifecycle, ensuring that production code remains stable while new features are developed in isolation. Code review systems play a critical role in maintaining quality by enforcing peer evaluation before changes are merged.

Automated validation checks are often integrated into pull request workflows, ensuring that code meets predefined standards before approval. These checks include syntax validation, security scanning, and automated test execution. This structured approach to collaboration ensures that code quality remains consistent even as multiple teams contribute simultaneously to the same codebase.

Enterprise-Grade Continuous Integration Scaling Strategies

As organizations scale their development efforts, continuous integration systems must be capable of handling large volumes of code changes without performance degradation. Scaling CI systems requires distributing workloads across multiple build agents, allowing simultaneous execution of independent tasks. This parallelization significantly reduces queue times and ensures that developers receive rapid feedback on their changes.

Another key scaling strategy involves separating build environments based on workload type. Lightweight builds such as linting and unit tests can be executed on smaller agents, while resource-intensive tasks such as integration testing and packaging are handled by more powerful compute nodes. This segmentation improves resource utilization and reduces bottlenecks.

Artifact management also plays a role in CI scalability. Instead of rebuilding dependencies for every pipeline execution, reusable artifacts are stored and retrieved when needed. This reduces redundant computation and accelerates build processes. Additionally, distributed caching mechanisms ensure that frequently accessed resources are shared across multiple pipeline executions, further improving efficiency.

Multi-Stage Deployment Architectures for Production Systems

Modern deployment architectures are structured into multiple stages to ensure controlled and reliable software releases. Each stage represents a different environment with increasing levels of stability requirements. Development environments are used for active coding and initial testing, while staging environments simulate production conditions for final validation. Production environments are highly controlled and optimized for stability and performance.

Multi-stage deployment pipelines ensure that code transitions through each environment only after passing predefined validation checks. This reduces the risk of deploying unstable or untested code into production systems. Approval gates are often introduced between stages, requiring manual or automated confirmation before proceeding to the next deployment phase.

Rollback mechanisms are also integrated into deployment architectures to handle failure scenarios. If issues are detected after deployment, systems can automatically revert to a previous stable version. This ensures minimal disruption to end users and maintains service continuity even in case of deployment failures.
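The rollback logic can be sketched as a deployment that is only recorded as stable once it passes a health check. In the code below, the `deploy_with_rollback` function, the version strings, and the health check are hypothetical; a real pipeline would redeploy the previous artifact rather than just return its name.

```python
from typing import Callable, List


def deploy_with_rollback(history: List[str], new_version: str,
                         health_check: Callable[[str], bool]) -> str:
    """Deploy new_version; fall back to the last stable version if the check fails.

    history holds previously stable versions, newest last. Returns the version
    left running.
    """
    if health_check(new_version):
        history.append(new_version)  # promote to the list of stable versions
        return new_version
    if not history:
        raise RuntimeError("deployment failed and no stable version exists")
    return history[-1]  # revert to the most recent stable version


stable = ["1.0"]
print(deploy_with_rollback(stable, "1.1", lambda v: True))   # 1.1
print(deploy_with_rollback(stable, "1.2", lambda v: False))  # 1.1
```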

Cloud Resource Optimization and Cost Efficiency Strategies

Cloud environments provide flexibility and scalability, but without proper optimization, resource usage can become inefficient and costly. Resource optimization strategies focus on ensuring that computing resources are allocated based on actual demand rather than static provisioning. Auto-scaling systems dynamically adjust resource allocation based on workload patterns, ensuring optimal performance during peak usage and cost savings during low activity periods.

Right-sizing is another important optimization strategy, where virtual machines and services are configured with appropriate resource levels based on workload requirements. Oversized resources lead to unnecessary costs, while undersized resources can cause performance issues. Continuous monitoring helps identify inefficiencies and supports informed scaling decisions.

Idle resource detection mechanisms identify unused or underutilized resources and recommend or automatically shut them down when not needed. This includes inactive virtual machines, unused storage accounts, and redundant network components. Together, these strategies help maintain a balance between performance efficiency and cost management in cloud-based environments.

Advanced Security Automation in DevOps Pipelines

Security automation plays a critical role in ensuring that software systems remain protected throughout the development lifecycle. Instead of treating security as a final step, modern DevOps practices integrate security checks directly into pipelines. This approach is often referred to as shifting security left, where vulnerabilities are identified early in the development process.

Automated code scanning tools analyze source code for known vulnerabilities, insecure coding patterns, and dependency risks. These scans are executed during build processes, ensuring that security issues are detected before deployment. Dependency analysis tools evaluate third-party libraries to identify potential security risks introduced through external packages.

Infrastructure security is also automated through configuration validation tools that check cloud resources against predefined security policies. These tools ensure that environments are configured securely and comply with organizational standards. Continuous security monitoring systems track runtime behavior to detect anomalies and potential threats in production environments.

Distributed System Design for High Availability Architectures

Distributed systems are designed to provide high availability, scalability, and fault tolerance in modern cloud environments. These systems distribute workloads across multiple nodes so that the failure of a single node does not take down the whole system.

Data replication is a key component of distributed architectures, where data is duplicated across multiple locations to ensure availability even in case of hardware failure. Consistency models define how data synchronization is managed across distributed nodes, balancing performance and accuracy requirements.

Load distribution mechanisms ensure that incoming traffic is evenly spread across available resources, preventing overload on individual components. Health monitoring systems continuously evaluate system components and reroute traffic away from unhealthy nodes to maintain service reliability.

Performance Engineering in Continuous Delivery Systems

Performance engineering focuses on ensuring that applications meet defined performance standards under various conditions. In continuous delivery systems, performance testing is integrated into pipelines to evaluate system behavior before deployment.

Load testing simulates real-world traffic conditions to evaluate how systems respond under stress. Stress testing pushes systems beyond normal operational limits to identify breaking points and performance bottlenecks. These tests help ensure that applications can handle expected and unexpected workload variations.

Performance monitoring tools collect real-time metrics such as response time, throughput, and resource utilization. These metrics are analyzed to identify performance degradation and optimize system configurations. Continuous performance evaluation ensures that applications maintain consistent responsiveness over time.
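Response-time metrics are typically summarized as percentiles rather than averages, because a few slow requests can hide behind a healthy mean. The sketch below uses the nearest-rank method, which is one of several percentile definitions; the `percentile` function and the sample latencies are hypothetical.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], p: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in the range 1 to 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical response times in milliseconds, with one slow outlier.
latencies_ms = [120, 80, 95, 400, 110, 90, 105, 100, 85, 130]
print(percentile(latencies_ms, 50), percentile(latencies_ms, 95))  # 100 400
```

Here the median looks healthy while the 95th percentile exposes the outlier, which is why pipelines that gate on performance often check a high percentile.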

Modern Artifact Management and Dependency Control Systems

Artifact management systems store and manage build outputs, dependencies, and reusable components across development pipelines. These systems ensure that consistent versions of packages are used throughout the development lifecycle.

Dependency control mechanisms help manage external libraries and packages used in applications. Versioning strategies ensure that applications use compatible and stable versions of dependencies, reducing the risk of runtime conflicts.

Artifact repositories also support secure storage and access control, ensuring that only authorized systems and users can retrieve or publish artifacts. This improves both security and traceability across development workflows.

Environment Consistency and Configuration Management Practices

Maintaining consistency across development, testing, and production environments is essential for reliable software delivery. Configuration management systems ensure that environments are provisioned with identical settings, reducing discrepancies that can lead to deployment failures.

Environment templates define standard configurations for infrastructure components such as compute resources, networking setups, and security settings. These templates ensure that environments can be recreated consistently whenever needed.

Drift detection mechanisms identify differences between intended configurations and actual system states. When discrepancies are detected, automated remediation processes restore systems to their expected configurations.
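At its simplest, drift detection is a comparison between the declared configuration and the observed one. The sketch below assumes both are flat dictionaries of settings; the `detect_drift` function and the setting names are hypothetical, and a setting missing on one side is reported as `None`.

```python
from typing import Any, Dict, Tuple


def detect_drift(desired: Dict[str, Any], actual: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (desired value, actual value)} for every mismatch."""
    drift = {}
    for key in sorted(desired.keys() | actual.keys()):
        want, have = desired.get(key), actual.get(key)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical settings: HTTPS enforcement was switched off and a debug flag was added by hand.
desired_state = {"sku": "S1", "https_only": True}
actual_state = {"sku": "S1", "https_only": False, "debug": True}
print(detect_drift(desired_state, actual_state))
# {'debug': (None, True), 'https_only': (True, False)}
```

Automated remediation would then reapply the desired value for each reported setting.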

Advanced Monitoring, Logging, and Observability Patterns

Observability in modern systems is achieved through a combination of monitoring, logging, and tracing mechanisms. These components work together to provide deep visibility into system behavior and performance.

Centralized logging systems collect logs from multiple services and aggregate them into a unified platform for analysis. This enables teams to investigate issues across distributed systems more effectively.

Distributed tracing follows requests as they move through different system components, helping identify bottlenecks and performance issues. Monitoring dashboards provide real-time visualization of system health, enabling proactive identification of potential failures.

DevOps Maturity Models and Organizational Transformation

Organizations adopting DevOps practices typically progress through different maturity stages. Early stages focus on basic automation and process integration, while advanced stages involve fully automated, self-healing systems with minimal manual intervention.

Cultural transformation is a critical aspect of DevOps maturity. Teams transition from siloed responsibilities to shared ownership models, where accountability is distributed across development and operations functions. This shift improves collaboration and accelerates decision-making.

Continuous improvement practices are embedded into mature DevOps environments, where systems are regularly evaluated and optimized based on performance metrics and operational feedback.

Future-Oriented Trends in Cloud DevOps Ecosystems

Modern DevOps ecosystems are evolving toward greater automation, intelligence, and autonomy. Machine learning is increasingly used to predict system failures, optimize resource allocation, and improve deployment strategies.

Self-healing systems are emerging as a key trend, where infrastructure automatically detects and resolves issues without human intervention. This reduces downtime and improves system resilience.
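A self-healing loop can be sketched as repeated health checks followed by a bounded number of restart attempts. In the example below, the health check and restart functions are stand-ins backed by an in-memory dictionary; the service names and the max_attempts parameter are assumptions, and a real platform would call its own probes and orchestration APIs.

```python
def reconcile(services, is_healthy, restart, max_attempts=3):
    """Run one self-healing pass and return the services still unhealthy."""
    unresolved = []
    for name in services:
        attempts = 0
        # Retry a bounded number of times so a broken service cannot loop forever.
        while not is_healthy(name) and attempts < max_attempts:
            restart(name)
            attempts += 1
        if not is_healthy(name):
            unresolved.append(name)  # escalate to a human instead of retrying
    return unresolved


# Simulated environment: 'worker' recovers after a restart, 'cache' does not.
state = {"web": True, "worker": False, "cache": False}
restarts = []


def is_healthy(name):
    return state[name]


def restart(name):
    restarts.append(name)
    if name == "worker":
        state[name] = True


unresolved = reconcile(list(state), is_healthy, restart)
```

The bounded retry matters: issues that a restart can fix are resolved without intervention, while persistent failures are reported rather than hidden behind endless restarts.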

Edge computing is also influencing DevOps practices by enabling computation closer to end users, reducing latency and improving performance for distributed applications. These advancements continue to reshape how software is developed, deployed, and managed in cloud environments.

Conclusion

The evolution of modern software development practices has fundamentally reshaped how organizations design, build, and operate applications at scale. What once relied on slow, segmented workflows has shifted toward highly integrated, automated, and continuously improving systems. This transformation is largely driven by the convergence of development and operations disciplines, combined with the adoption of cloud computing platforms that provide flexible, scalable, and globally distributed infrastructure. The result is a development environment where speed, reliability, and adaptability are no longer competing goals but interconnected outcomes of well-structured engineering practices.

One of the most significant outcomes of this evolution is the reduction of friction across the software delivery lifecycle. In traditional environments, code moved through isolated stages where development, testing, and operations teams worked independently. This separation often introduced delays, miscommunication, and inconsistent system behavior. Modern integrated workflows eliminate much of this fragmentation by creating continuous feedback loops between all stages of development. Every code change is evaluated, tested, and validated in an automated manner before reaching production environments, significantly reducing the risk of failure and improving overall system stability.

Another important impact is the increased role of automation in every layer of the development process. Automation is no longer limited to simple task execution but now extends to complex workflows that include infrastructure provisioning, application deployment, security validation, and performance monitoring. This shift reduces reliance on manual intervention, which historically has been a major source of errors and inefficiencies. Automated systems ensure that processes remain consistent regardless of scale, team size, or complexity of the application being developed. As a result, organizations can maintain predictable delivery cycles even as their software ecosystems grow.

Cloud computing platforms play a central role in enabling this level of automation and scalability. By providing on-demand access to computing resources, storage, networking, and advanced services, cloud environments remove many of the constraints of physical infrastructure. Teams are no longer required to manage hardware or maintain local data centers, allowing them to focus primarily on application development and optimization. The ability to dynamically scale resources based on demand also helps systems remain efficient during both peak and low usage periods. This elasticity is a key factor in supporting modern applications that serve global user bases with varying traffic patterns.

The integration of development and operations practices also introduces a stronger emphasis on collaboration and shared responsibility. Instead of isolated teams working independently, modern engineering environments encourage cross-functional collaboration where developers, operations engineers, security specialists, and testers work together throughout the entire lifecycle. This shared ownership model improves communication and ensures that potential issues are identified earlier in the development process. It also fosters a culture of continuous improvement, where teams collectively analyze system performance and refine workflows to enhance efficiency and reliability.

Security has also become an integral part of modern software delivery rather than a separate or final-stage consideration. In traditional models, security checks were often performed late in the development cycle, which increased the risk of vulnerabilities reaching production systems. Modern integrated approaches embed security into every stage of development, ensuring that vulnerabilities are detected and addressed early. Automated security scanning, configuration validation, and runtime monitoring help maintain strong security postures across complex environments. This proactive approach significantly reduces exposure to risks and strengthens overall system resilience.

Another key advancement is the ability to manage infrastructure programmatically. Rather than being configured manually, infrastructure is defined through structured code, allowing environments to be consistently reproduced across development, testing, and production stages. This approach greatly reduces configuration drift and helps systems behave predictably regardless of where they are deployed. It also simplifies scaling, as entire environments can be replicated or modified through code-based changes rather than manual intervention. This level of control is essential for maintaining stability in large-scale distributed systems.

Observability and monitoring have also become critical components of modern software ecosystems. With applications distributed across multiple services and environments, understanding system behavior requires comprehensive visibility into logs, metrics, and execution traces. Real-time monitoring systems allow teams to detect anomalies quickly, identify performance bottlenecks, and diagnose issues before they impact end users. This proactive approach to system management improves reliability and ensures that applications maintain consistent performance even under changing workloads or unexpected conditions.

As software systems continue to grow in complexity, the importance of modular architecture becomes increasingly evident. Breaking applications into smaller, independent components allows teams to develop, deploy, and scale individual services without affecting the entire system. This modular approach improves flexibility and enables faster innovation, as updates can be made to specific components without requiring full system redeployment. It also enhances fault tolerance, as failures in one service do not necessarily impact the entire application.

The shift toward continuous delivery models has also redefined how organizations approach software releases. Instead of large, infrequent updates, modern systems support frequent, incremental deployments that reduce risk and improve adaptability. Each change is validated through automated pipelines before reaching production environments, ensuring that only stable and tested code is released. This continuous flow of updates enables organizations to respond rapidly to user needs, market changes, and emerging technical requirements.

In addition to technical improvements, these modern practices also influence organizational structure and culture. Teams adopting these methodologies often experience a shift toward more agile and responsive working models. Decision-making becomes faster, feedback cycles become shorter, and overall productivity increases as teams gain greater autonomy and clarity in their workflows. This cultural transformation is as important as the technical changes, as it ensures that organizations can fully leverage the benefits of modern engineering practices.

Looking forward, the trajectory of software development continues to move toward greater automation, intelligence, and autonomy. Emerging technologies such as predictive analytics, intelligent scaling systems, and self-healing infrastructure are expected to further reduce manual intervention in system management. These advancements will allow systems to not only respond to changes but also anticipate and adapt to them proactively. As these capabilities mature, the distinction between development and operations will continue to blur, creating even more unified and efficient software ecosystems.

Ultimately, the modern approach to software engineering represents a shift from isolated processes to fully integrated systems that prioritize speed, quality, and resilience. By combining automation, collaboration, cloud infrastructure, and continuous feedback, organizations can build systems that are not only efficient but also adaptable to future demands. This evolution continues to redefine how software is created and maintained, establishing a foundation for more intelligent and scalable digital environments.