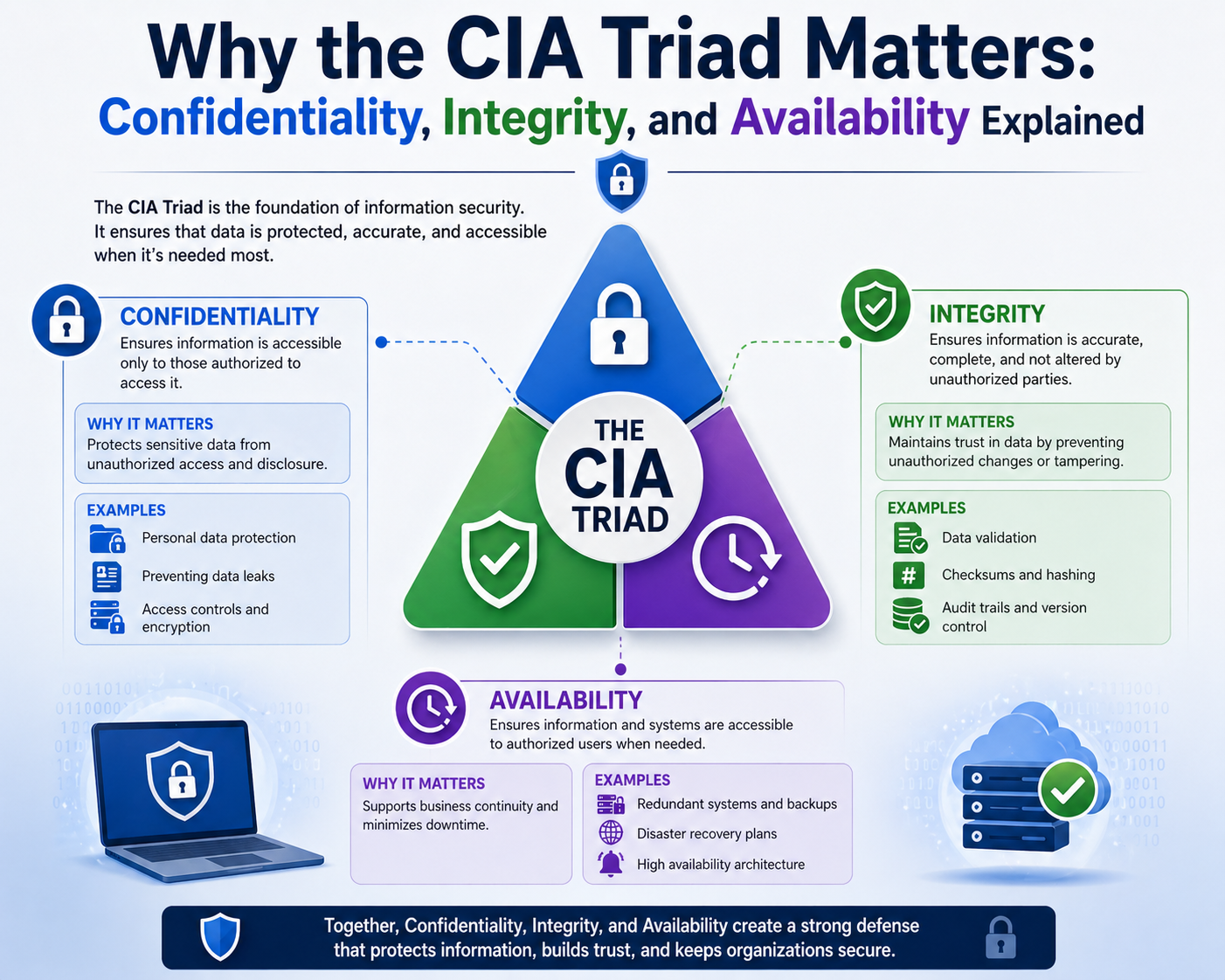

Cybersecurity is the discipline of safeguarding digital systems, networks, and data from unauthorized access, disruption, modification, or destruction. In modern digital environments where information flows continuously across devices and platforms, maintaining trust in data and systems is essential. The CIA Triad represents the fundamental model that defines how security is structured and evaluated. It consists of three interconnected principles: confidentiality, integrity, and availability. These principles collectively ensure that data remains protected, accurate, and accessible under controlled conditions. Any weakness in one of these areas can compromise the entire security posture of a system. Understanding this triad is essential for anyone working in information technology or preparing for entry-level networking and security roles because it forms the conceptual foundation for almost every security control, policy, and architecture decision.

Understanding Confidentiality in Information Security

Confidentiality refers to the protection of information from unauthorized access and disclosure. It ensures that sensitive data is only accessible to individuals, systems, or processes that have been explicitly granted permission. In practical terms, confidentiality is what prevents private communication, financial records, medical data, or corporate secrets from being exposed to unintended recipients. In a digital ecosystem where data constantly moves between servers, devices, and applications, maintaining confidentiality requires structured controls that regulate who can view or interact with information. The objective is not only to keep out external attackers but also to limit internal misuse and accidental exposure. Without confidentiality, sensitive information becomes vulnerable to exploitation, leading to financial loss, identity theft, regulatory violations, and erosion of trust in digital systems. In modern environments, confidentiality also extends beyond the content of data to user behavior patterns, metadata, and communication channels, which can indirectly reveal sensitive information if exposed.

Core Mechanisms That Enforce Confidentiality

Confidentiality is implemented through a combination of technical controls, administrative policies, and procedural safeguards. One of the most widely used mechanisms is encryption, which transforms readable data into an encoded form that can only be interpreted with the correct decryption key. Even if encrypted data is intercepted in transit or accessed without authorization, it remains unintelligible. Another critical mechanism is access control, which defines who can view or modify specific resources within a system. Access control depends on authentication, which establishes a user's identity through methods such as passwords, biometric verification, and multi-factor authentication. Authorization frameworks then refine access by assigning roles and permissions based on user responsibilities. Data classification is another important method, in which information is categorized by sensitivity level: highly sensitive data receives stricter protection, while less critical information may be more broadly accessible. These mechanisms work together to enforce confidentiality across complex systems. In advanced environments, monitoring and auditing systems also track access behavior and flag unusual patterns that may indicate a breach.

Role of Encryption in Maintaining Confidentiality

Encryption plays a central role in preserving confidentiality in modern cybersecurity environments. It protects data both at rest (in storage) and in transit. Encrypted data, or ciphertext, appears random to anyone who does not hold the corresponding decryption key. Encryption does not stop an attacker from intercepting network traffic, but it renders intercepted data useless, which matters most on untrusted networks. Encryption is commonly applied in online transactions, messaging systems, cloud storage, and enterprise databases. Symmetric encryption uses a single shared key for both encryption and decryption, while asymmetric encryption uses a mathematically related pair of public and private keys. The strength of encryption depends on the soundness of the algorithm, the length of the key, and how well keys are generated and managed. As computational power increases, recommended algorithms and key lengths are updated to stay resistant to brute-force attacks and other cryptanalysis. Modern systems also use end-to-end encryption, in which data is encrypted on the sender's device and decrypted only on the recipient's, so intermediary servers never see it in readable form.
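
As a minimal sketch of symmetric encryption, the standard-library example below implements a one-time pad: the same random key encrypts and decrypts by XOR. It is for illustration only. A one-time pad is secure only if the key is truly random, as long as the message, kept secret, and never reused, which is impractical for most systems; real deployments use a vetted algorithm such as AES through a maintained cryptography library. The function names are hypothetical.

```python
import secrets


def generate_key(length: int) -> bytes:
    """Generate a random key as long as the message (a one-time pad requirement)."""
    return secrets.token_bytes(length)


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each byte of data with the matching key byte."""
    if len(key) != len(data):
        raise ValueError("one-time pad key must match the message length")
    return bytes(d ^ k for d, k in zip(data, key))


# One shared key does both jobs: XOR-ing twice with the same key restores the
# original bytes, which is what makes this a symmetric scheme.
encrypt = xor_bytes
decrypt = xor_bytes

message = b"Quarterly results: confidential"
key = generate_key(len(message))
ciphertext = encrypt(message, key)
recovered = decrypt(ciphertext, key)
print(recovered == message)  # True
```

Anyone who intercepts `ciphertext` without `key` learns nothing beyond the message length, which is the confidentiality property the paragraph describes.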

Access Control Systems and User Authorization

Access control is a fundamental component of confidentiality that governs how users interact with systems and data. It ensures that only authorized individuals can access specific resources based on predefined rules. Authentication verifies the identity of a user, while authorization determines what that user is allowed to do within the system. Role-based access control (RBAC) is widely used in organizations: users are assigned roles such as administrator, analyst, or general user, and each role carries a different set of permissions. This structure reduces unnecessary exposure of sensitive information. Multi-factor authentication adds another layer of protection by requiring two or more independent forms of verification, such as a password and a one-time code, before granting access. Together, these mechanisms reduce the likelihood of unauthorized access, whether by external attackers or through compromised credentials. More advanced systems use adaptive authentication, in which access decisions also depend on context such as location, device type, and user behavior patterns.
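
A minimal role-based access control check might look like the sketch below. The three role names mirror the paragraph; the permission names, the `User` class, and `is_authorized` are hypothetical, and the `authenticated` flag stands in for whatever authentication step (password, MFA) a real system performs before any authorization decision.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-permission mapping; a real system would load this from policy.
ROLE_PERMISSIONS = {
    "administrator": {"read", "write", "delete", "manage_users"},
    "analyst": {"read", "write"},
    "general_user": {"read"},
}


@dataclass
class User:
    name: str
    roles: set[str] = field(default_factory=set)
    authenticated: bool = False  # stands in for the outcome of authentication


def is_authorized(user: User, permission: str) -> bool:
    """Authorization: allow the action only if the user has been authenticated
    and holds at least one role that grants the requested permission."""
    if not user.authenticated:
        return False  # no verified identity, so no access
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in user.roles)


alice = User("alice", {"analyst"}, authenticated=True)
bob = User("bob", {"general_user"}, authenticated=True)
mallory = User("mallory", {"administrator"})  # claims a role but never authenticated

print(is_authorized(alice, "write"))   # True
print(is_authorized(bob, "write"))     # False
print(is_authorized(mallory, "read"))  # False
```

Keeping permissions on roles rather than on individual users means a job change only requires reassigning a role, which also makes least-privilege reviews simpler.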

Data Classification and Sensitivity Management

Data classification is a structured approach to organizing information based on its level of sensitivity and importance. It allows organizations to apply appropriate security controls depending on the value and risk associated with specific data types. For example, confidential data may include financial records, personally identifiable information, or proprietary business strategies, while public data may consist of general announcements or non-sensitive documentation. By categorizing data, organizations can prioritize security resources and apply stricter protections where they are needed. This approach improves efficiency and supports compliance with regulatory standards. Proper classification reduces the risk of accidental exposure by clearly defining how each type of information should be handled, stored, and transmitted. In large enterprises, automated classification tools are increasingly used to reduce human error and ensure consistency across vast datasets.
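
The sketch below shows how classification levels can drive handling rules. The four level names, the rules attached to each, and the helper functions are assumptions chosen for illustration; every organization defines its own scheme.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical handling rules: controls tighten as sensitivity increases.
HANDLING_RULES = {
    Sensitivity.PUBLIC: {"encrypt_at_rest": False, "share_externally": True, "require_mfa": False},
    Sensitivity.INTERNAL: {"encrypt_at_rest": False, "share_externally": False, "require_mfa": False},
    Sensitivity.CONFIDENTIAL: {"encrypt_at_rest": True, "share_externally": False, "require_mfa": False},
    Sensitivity.RESTRICTED: {"encrypt_at_rest": True, "share_externally": False, "require_mfa": True},
}


def rule(level: Sensitivity, name: str) -> bool:
    """Look up one handling rule for a classification level."""
    return HANDLING_RULES[level][name]


def can_read(clearance: Sensitivity, data_level: Sensitivity) -> bool:
    """A user may read data classified at or below their clearance level."""
    return clearance >= data_level


print(rule(Sensitivity.PUBLIC, "share_externally"))            # True
print(rule(Sensitivity.CONFIDENTIAL, "encrypt_at_rest"))       # True
print(can_read(Sensitivity.INTERNAL, Sensitivity.RESTRICTED))  # False
```

Using an ordered enum lets "at or below my clearance" be a plain comparison instead of a hand-maintained lookup table.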

Real-World Impact of Confidentiality Failures

When confidentiality is compromised, the consequences can be severe and far-reaching. Data breaches often expose sensitive personal and organizational information, leading to identity theft, financial fraud, and reputational damage. Large-scale incidents involving unauthorized access to personal records demonstrate how quickly trust can be eroded when protective measures fail. In corporate environments, exposure of internal communications or strategic documents can lead to competitive disadvantages and legal consequences. Healthcare systems are particularly vulnerable because they store highly sensitive patient information that must remain private. When confidentiality controls are weak or improperly configured, attackers can exploit vulnerabilities to extract large volumes of data without detection. These incidents highlight the importance of maintaining strict access controls and encryption standards to protect sensitive information across all digital platforms. In many cases, the long-term impact of such breaches extends beyond immediate financial loss, affecting customer confidence, regulatory standing, and organizational reputation for years.

Importance of Confidentiality in Modern Digital Environments

In today’s interconnected world, confidentiality is not limited to isolated systems but extends across cloud infrastructures, mobile devices, and distributed networks. As organizations increasingly rely on remote access and digital collaboration tools, the risk of unauthorized exposure grows significantly. Confidentiality ensures that even in highly dynamic environments, sensitive information remains protected from unintended access. It also helps organizations meet regulatory requirements for data privacy and protection. Without confidentiality, digital trust cannot be sustained, and users lose confidence in systems that handle their personal or professional information. This makes confidentiality a critical pillar not only in cybersecurity design but also in organizational governance and operational strategy. Confidentiality also underpins secure communication between systems in different geographic regions, where data often traverses multiple networks and security boundaries. As digital transformation expands, maintaining confidentiality becomes a continuous requirement rather than a one-time implementation.

Confidentiality in Cloud and Distributed Systems

In modern cloud and distributed environments, confidentiality becomes more complex due to the shared and multi-tenant nature of infrastructure. Data is often stored in virtualized environments where multiple users and organizations share underlying physical resources. This increases the importance of strict isolation mechanisms to ensure that one user’s data cannot be accessed by another. Encryption at rest and in transit is widely used to protect data across cloud platforms, while identity management systems enforce strict authentication and authorization policies. Additionally, secure API communication plays a crucial role in maintaining confidentiality between interconnected services. As workloads move dynamically across regions and servers, maintaining consistent confidentiality policies requires automated enforcement mechanisms that adapt in real time to changing environments.

Business and Regulatory Importance of Confidentiality

Confidentiality is also deeply connected to legal and regulatory frameworks that govern data protection. Organizations are required to follow strict guidelines to ensure that sensitive information, such as customer data, financial records, and personal identifiers, is properly secured. Failure to comply with these regulations can result in legal penalties, financial fines, and reputational damage. Beyond compliance, confidentiality also supports business continuity by preventing data leaks that could disrupt operations or compromise competitive advantage. In highly competitive industries, protecting proprietary information such as research, product designs, and strategic plans is essential for maintaining market position. This makes confidentiality not just a technical requirement but a core component of business strategy and risk management.

Human Factors in Maintaining Confidentiality

While technical controls are essential, human behavior plays a significant role in maintaining confidentiality. Many security breaches occur not because of system failures but because of human error, such as weak passwords, falling for phishing attacks, or accidental data sharing. This highlights the importance of user awareness and training. Employees must understand how their actions can affect data security and why confidentiality protocols are necessary. Organizations often run security awareness programs to teach users how to handle sensitive information safely. Policies such as least-privilege access and regular credential updates further reduce the risk of accidental or intentional data exposure. When human behavior is aligned with technical safeguards, confidentiality becomes significantly more effective.

Integrity in Cybersecurity: Ensuring Data Accuracy and Trustworthiness

Integrity in cybersecurity refers to the assurance that data remains accurate, consistent, and unaltered unless changes are authorized and properly controlled. It is the principle that guarantees information can be trusted throughout its lifecycle, whether it is stored, processed, or transmitted. In digital systems, data is constantly moving and being updated, which introduces risks of corruption, unauthorized modification, or accidental alteration. Integrity ensures that when data is accessed, it reflects its true and intended state. Without integrity, even properly secured and available systems lose value because the information they deliver cannot be trusted. This principle is essential in environments such as financial systems, healthcare records, and enterprise databases where decisions rely heavily on precise and reliable data. It also supports accountability by ensuring that every modification to data can be traced and verified.

Fundamental Role of Data Integrity in Digital Systems

Data integrity forms the backbone of reliable computing environments. It ensures that information remains consistent across all stages of processing and storage. When integrity is maintained, users can confidently rely on system outputs for decision-making. However, if data is altered—whether intentionally by attackers or unintentionally through system errors—the consequences can be severe. Incorrect financial transactions, corrupted medical records, or distorted analytical reports can lead to operational failures and significant losses. Integrity is not only about preventing malicious attacks but also about protecting systems from hardware failures, software bugs, and transmission errors. It acts as a safeguard that preserves the authenticity of information across complex digital ecosystems where multiple systems interact simultaneously. In distributed systems, maintaining consistency across replicated databases becomes especially important to prevent conflicting or outdated information.

Mechanisms That Protect Data Integrity

Maintaining integrity requires structured mechanisms that detect, prevent, and correct unauthorized or accidental changes. One widely used method is cryptographic hashing, which computes a fixed-length digest that serves as a digital fingerprint of a dataset. If even a single character in the data is altered, the hash value changes, signaling potential tampering. Digital signatures extend this concept by combining hashing with asymmetric cryptography to verify both the authenticity and integrity of data: they confirm that the information originates from the holder of a specific private key and has not been modified since it was signed. Version control systems also contribute to integrity by tracking changes over time, allowing administrators to review modifications and restore previous versions if necessary. Together, these mechanisms create a layered defense that keeps data reliable throughout its lifecycle. Additional techniques such as checksums, redundancy verification, and database constraints further enhance integrity by identifying inconsistencies at multiple levels of system operation.

Cryptographic Hashing and Its Importance

Cryptographic hashing plays a crucial role in maintaining data integrity by transforming input data of any size into a fixed-length output known as a hash value or digest. The process is one-way: it is computationally infeasible to reconstruct the original data from the hash, or to find two different inputs that produce the same hash. Even the smallest change in input produces a completely different output, which makes hashing an effective tool for detecting tampering. Hashing is widely used in software distribution, password storage, and data verification. When a file is downloaded, the recipient can compute its hash and compare it with a reference value published by the source through a trusted channel; a mismatch means the file was altered or corrupted. In authentication systems, passwords are stored as salted hashes produced by a deliberately slow function rather than in plain text, so a stolen database does not directly reveal users' credentials. These uses significantly strengthen trust in digital data handling.
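
Both uses described above can be sketched with Python's standard `hashlib` and `hmac` modules. The helper names are hypothetical, and the PBKDF2 iteration count is an assumed work factor; check current guidance before relying on any specific value.

```python
import hashlib
import hmac
import os
from typing import Optional


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_download(data: bytes, published_hex: str) -> bool:
    """Compare the received data's hash with the reference hash from the source."""
    return hmac.compare_digest(sha256_hex(data), published_hex)


# Assumed work factor; check current guidance (e.g. OWASP) before relying on it.
ITERATIONS = 600_000


def hash_password(password: str, salt: Optional[bytes] = None) -> tuple[bytes, bytes]:
    """Derive a salted, deliberately slow hash with PBKDF2-HMAC-SHA256."""
    salt = salt if salt is not None else os.urandom(16)  # random per-user salt
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest


def check_password(password: str, salt: bytes, stored: bytes) -> bool:
    """Re-derive the hash with the stored salt and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, stored)


original = b"transfer 100 to account 42"
tampered = b"transfer 900 to account 42"
print(sha256_hex(original) == sha256_hex(tampered))  # False

reference = sha256_hex(original)  # in practice, published by the source
print(verify_download(original, reference))  # True
print(verify_download(tampered, reference))  # False

salt, stored = hash_password("correct horse")
print(check_password("correct horse", salt, stored))  # True
print(check_password("wrong guess", salt, stored))    # False
```

The salt makes identical passwords hash differently, and the high iteration count slows down offline guessing if the stored hashes leak.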

Digital Signatures and Authentication of Data Integrity

Digital signatures provide a robust method for verifying both the origin and integrity of digital information. They combine hashing with asymmetric cryptography: the signer uses a private key to create a signature over a hash of the data, and anyone with the matching public key can verify it. If a signed document is modified afterwards, verification fails, revealing that the data has been altered. Recipients can therefore confirm both the authenticity of the sender and the integrity of the message. Digital signatures are widely used in secure communications, software distribution, and legal documentation systems. They play a critical role in preventing impersonation and in detecting tampering during transit or storage.
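
Python's standard library has no public-key signature API, so the hash-then-sign flow is shown below with toy textbook RSA. This is illustration only: the primes are well-known Mersenne primes, so the key offers no security, and unpadded RSA is unsafe in practice. Real systems use a vetted library with a scheme such as RSA-PSS or Ed25519. All names are hypothetical.

```python
import hashlib

# Toy textbook RSA. P and Q are publicly known Mersenne primes, so anyone can
# factor N: this key provides NO security and only demonstrates the math.
P = 2**521 - 1
Q = 2**607 - 1
N = P * Q                          # public modulus (larger than any SHA-256 value)
E = 65537                          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (needs Python 3.8+)


def _digest_as_int(message: bytes) -> int:
    """Hash the message with SHA-256 and read the digest as an integer."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big")


def sign(message: bytes, d: int = D, n: int = N) -> int:
    """Sign with the private key: s = H(m)^d mod n."""
    return pow(_digest_as_int(message), d, n)


def verify(message: bytes, signature: int, e: int = E, n: int = N) -> bool:
    """Verify with the public key: s^e mod n must equal H(m)."""
    return pow(signature, e, n) == _digest_as_int(message)


document = b"Contract v1: pay 100 EUR"
signature = sign(document)
print(verify(document, signature))                     # True
print(verify(b"Contract v1: pay 900 EUR", signature))  # False
```

Only the holder of `D` can produce a value that verifies under `E`, and any change to the document changes its hash, so verification fails for the altered text.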

Version Control Systems and Data Tracking

Version control systems are essential tools for maintaining integrity in collaborative and dynamic environments. They allow multiple users to work on the same data while tracking every modification made over time. Each change is recorded as a version, enabling administrators to review historical states of data and revert to previous versions if errors or unauthorized changes are detected. This creates a transparent environment where every modification is accountable. Version control is widely used in software development, document management, and database systems. It reduces the risk of irreversible errors and ensures that data evolution is traceable. By maintaining a complete history of changes, these systems enhance both reliability and accountability in data management processes.
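
A minimal sketch of the idea: every change is recorded as a numbered version with its author, and reverting commits an old state again rather than deleting history. `VersionStore` is a hypothetical class for illustration, not how any particular version control tool stores data.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Version:
    number: int
    content: str
    author: str
    timestamp: datetime


class VersionStore:
    """Hypothetical append-only history: every change becomes a new version,
    and a revert is recorded as a new version instead of erasing history."""

    def __init__(self) -> None:
        self._versions: list[Version] = []

    def commit(self, content: str, author: str) -> Version:
        version = Version(len(self._versions) + 1, content, author,
                          datetime.now(timezone.utc))
        self._versions.append(version)
        return version

    def current(self) -> str:
        return self._versions[-1].content

    def history(self) -> list[tuple[int, str]]:
        """Audit trail: which version each author produced, in order."""
        return [(v.number, v.author) for v in self._versions]

    def revert(self, number: int, author: str) -> Version:
        """Restore an earlier state by committing its content again."""
        if not 1 <= number <= len(self._versions):
            raise ValueError(f"no version {number}")
        return self.commit(self._versions[number - 1].content, author)


store = VersionStore()
store.commit("price = 100", "alice")
store.commit("price = 1000", "bob")  # an erroneous or unauthorized change
store.revert(1, "admin")
print(store.current())  # price = 100
print(store.history())  # [(1, 'alice'), (2, 'bob'), (3, 'admin')]
```

Recording the revert as version 3 keeps Bob's change visible in the audit trail, which is what makes every modification accountable.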

Impact of Integrity Violations in Real Systems

When data integrity is compromised, the consequences can be far-reaching and sometimes irreversible. In financial systems, even small alterations in transaction records can lead to significant monetary discrepancies and legal disputes. In healthcare environments, incorrect patient data can result in improper treatment decisions, putting lives at risk. In corporate settings, manipulated data can lead to flawed business strategies and operational inefficiencies. Integrity breaches may occur due to malicious attacks such as data manipulation or insider threats, but they can also result from system failures, software bugs, or synchronization errors. Regardless of the cause, the outcome is the same: loss of trust in the reliability of the system. Restoring integrity often requires extensive auditing, correction processes, and validation procedures, which can be both time-consuming and costly.

Integrity in Data Transmission and Communication Systems

In communication systems, maintaining integrity is essential to ensure that messages are delivered exactly as they were sent. During transmission, data may pass through multiple networks and devices, each introducing potential risks of alteration or corruption. Error detection and correction techniques identify, and in some cases fix, transmission errors before data reaches its destination. Protocols that incorporate checksums and cyclic redundancy checks (CRCs) verify that data packets have not been corrupted in transit. These checks catch accidental errors but not deliberate tampering, because an attacker who alters a packet can simply recompute the checksum. Secure communication protocols therefore add cryptographic authentication, such as message authentication codes keyed with a shared secret, alongside encryption. Without these safeguards, data in transit would be highly vulnerable to manipulation, undermining the reliability of digital interactions.
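
The difference between accidental and deliberate changes can be demonstrated with the standard `zlib.crc32` and `hmac` modules. The payloads, the shared key, and the helper names are hypothetical; the point is only that a CRC catches a flipped bit but not a forger who recomputes it, while a keyed MAC does.

```python
import hashlib
import hmac
import zlib


def frame_with_crc(payload: bytes) -> tuple[bytes, int]:
    """Attach a CRC-32 checksum, as link-layer protocols do for error detection."""
    return payload, zlib.crc32(payload)


def crc_ok(payload: bytes, crc: int) -> bool:
    return zlib.crc32(payload) == crc


def tag(payload: bytes, key: bytes) -> bytes:
    """HMAC-SHA256 tag; producing a valid tag requires the shared key."""
    return hmac.new(key, payload, hashlib.sha256).digest()


def tag_ok(payload: bytes, received_tag: bytes, key: bytes) -> bool:
    return hmac.compare_digest(tag(payload, key), received_tag)


payload, crc = frame_with_crc(b"SET temperature=21")

# Accidental corruption: one bit flips in transit, and the CRC catches it.
corrupted = bytes([payload[0] ^ 0x01]) + payload[1:]
print(crc_ok(corrupted, crc))  # False

# Deliberate tampering: the attacker changes the data AND recomputes the CRC,
# so the checksum alone no longer reveals the change.
forged, forged_crc = frame_with_crc(b"SET temperature=99")
print(crc_ok(forged, forged_crc))  # True

# A keyed MAC resists this because the attacker does not know the key.
key = b"hypothetical-shared-secret"
original_tag = tag(payload, key)
print(tag_ok(forged, original_tag, key))  # False
```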

Human and System Factors Affecting Integrity

Integrity is influenced not only by technical mechanisms but also by human behavior and system design. Human errors such as incorrect data entry, accidental deletion, or misconfiguration can compromise data accuracy. Similarly, poorly designed systems may allow unauthorized modifications or fail to detect inconsistencies. Insider threats also pose significant risks, where individuals with legitimate access intentionally alter data for personal gain or malicious purposes. To mitigate these risks, organizations implement strict access controls, audit trails, and monitoring systems. Training and awareness programs also play a role in reducing human errors by ensuring that users understand the importance of maintaining data accuracy. A combination of technical safeguards and responsible user behavior is essential for preserving integrity.

Integrity in Modern Cloud and Distributed Environments

In cloud-based and distributed systems, maintaining integrity becomes more complex due to the decentralized nature of data storage and processing. Data is often replicated across multiple servers and locations, increasing the risk of inconsistencies. Synchronization mechanisms are used to keep all copies of data consistent and up to date. Distributed ledger technologies strengthen integrity by linking each record to a cryptographic hash of the previous one, which makes retroactive modification detectable and, when many independent parties hold copies, very difficult to carry out unnoticed. In cloud environments, providers implement encryption, validation checks, and monitoring tools to ensure that data remains unchanged unless authorized. As organizations continue to adopt cloud infrastructure, maintaining integrity across distributed systems remains a critical challenge that requires continuous oversight and advanced security mechanisms.
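
The hash-linking idea behind distributed ledgers can be sketched in a few lines. This is a hypothetical single-copy chain with no consensus or networking: it shows how editing an old record breaks the link to the next block. An edit to the newest block would only be caught by comparing the latest hash with other replicas, which is the job real ledgers assign to many independent copies.

```python
import hashlib
import json
from dataclasses import dataclass

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


@dataclass
class Block:
    index: int
    data: str
    prev_hash: str

    def digest(self) -> str:
        # Serialize the fields in a fixed order so identical blocks hash identically.
        payload = json.dumps([self.index, self.data, self.prev_hash])
        return hashlib.sha256(payload.encode()).hexdigest()


def append(chain: list[Block], data: str) -> None:
    """Add a block that records the hash of the current last block."""
    prev = chain[-1].digest() if chain else GENESIS_HASH
    chain.append(Block(len(chain), data, prev))


def is_valid(chain: list[Block]) -> bool:
    """Every block must reference the hash of the block before it."""
    expected_prev = GENESIS_HASH
    for block in chain:
        if block.prev_hash != expected_prev:
            return False
        expected_prev = block.digest()
    return True


ledger: list[Block] = []
for entry in ["alice pays bob 10", "bob pays carol 5", "carol pays dave 2"]:
    append(ledger, entry)
print(is_valid(ledger))  # True

ledger[0].data = "alice pays bob 1000"  # retroactive edit of an old record
print(is_valid(ledger))  # False: block 1 no longer matches block 0's hash
```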

Availability in Cybersecurity: Ensuring Continuous Access to Systems and Data

Availability in cybersecurity refers to the assurance that systems, applications, and data remain accessible to authorized users whenever they are needed. It is a critical component of the CIA Triad because even highly secure and accurate data loses its value if it cannot be accessed in a timely manner. Modern digital environments depend heavily on continuous uptime, where interruptions can lead to financial loss, operational disruption, and reduced user trust. Availability is not simply about keeping systems online; it is about ensuring consistent performance, resilience under stress, and rapid recovery in the event of failures. In large-scale infrastructures, availability is achieved through careful design, redundancy, monitoring, and recovery planning that collectively ensure systems remain operational under varying conditions.

Core Principles That Support System Availability

The foundation of availability lies in designing systems that can withstand disruptions without significant downtime. One of the key principles is redundancy, where critical components are duplicated so that if one fails, another can immediately take over. This applies to servers, network paths, storage systems, and even power supplies. Another principle is fault tolerance, which enables systems to continue functioning even when part of the system experiences failure. Load balancing also plays an important role by distributing traffic across multiple servers, preventing any single system from becoming overwhelmed. Together, these principles ensure that services remain stable even under heavy usage or unexpected failures. Availability is therefore not accidental but engineered through deliberate architectural decisions.
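
The arithmetic behind redundancy: if each copy of a component is available a fraction `a` of the time and copies fail independently, all `n` copies are down at once with probability `(1 - a)^n`. The sketch below assumes independent failures, which is optimistic; shared power, networks, or software bugs can take down every replica together. The 99 % figure is hypothetical.

```python
def combined_availability(component_availability: float, replicas: int) -> float:
    """Availability of identical components in parallel, where the service
    stays up as long as at least one component is up.

    Assumes failures are independent, which real systems only approximate.
    """
    if not 0.0 <= component_availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    if replicas < 1:
        raise ValueError("need at least one replica")
    return 1.0 - (1.0 - component_availability) ** replicas


for n in (1, 2, 3):
    print(n, f"{combined_availability(0.99, n):.6f}")
# 1 0.990000
# 2 0.999900
# 3 0.999999
```

Each extra independent replica multiplies the chance of a total outage by another factor of 0.01 in this example, which is why duplicating critical components is so effective on paper and why correlated failures matter so much in practice.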

Redundancy and Failover Mechanisms in System Design

Redundancy is one of the most important strategies for maintaining availability in complex systems. It involves maintaining duplicate components that can take over quickly if the primary system fails. This can be implemented at multiple levels, including hardware, network infrastructure, and data storage. Failover mechanisms automatically detect system failures and switch operations to backup systems without requiring manual intervention. This automatic transition is essential for minimizing downtime and keeping service uninterrupted. In high-availability environments, redundant systems are often distributed across different physical locations to reduce the risk of localized failures. This geographic distribution reduces the chance that a single large-scale disruption, such as a regional power outage or natural disaster, takes down the entire service.
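
Client-side failover can be sketched as "try the primary, then each backup in order". The backends here are hypothetical stand-in functions, and a failure is simply a raised exception; production failover usually adds periodic health checks, timeouts, and retry limits.

```python
from typing import Callable, Sequence


class AllBackendsFailed(Exception):
    """Raised when the primary and every backup have failed."""


def call_with_failover(backends: Sequence[Callable[[], str]]) -> str:
    """Try the primary first, then each backup in order; return the first success.

    Here a failure is simply "the call raised an exception".
    """
    errors: list[Exception] = []
    for backend in backends:
        try:
            return backend()
        except Exception as exc:  # any failure moves on to the next backend
            errors.append(exc)
    raise AllBackendsFailed(errors)


def primary() -> str:
    raise ConnectionError("primary data center unreachable")


def secondary() -> str:
    return "served by secondary region"


print(call_with_failover([primary, secondary]))  # served by secondary region
```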

Disaster Recovery and Business Continuity Planning

Disaster recovery planning is a structured approach to restoring systems after a major disruption. It focuses on minimizing downtime and ensuring that critical services can be restored as quickly as possible. This includes maintaining backups, defining a recovery time objective (RTO), which is the maximum acceptable time to restore a service, and defining a recovery point objective (RPO), which is the maximum acceptable data loss, measured as the time since the last recoverable copy. Business continuity planning extends this concept by ensuring that essential operations can continue even during disruptions. It includes identifying critical systems, creating alternative workflows, and establishing communication protocols. These strategies ensure that organizations can maintain functionality even when primary systems are unavailable due to hardware failures, cyber incidents, or environmental events.
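
A quick check of a backup plan against RPO and RTO can be written as below. The figures are hypothetical, and the model is simplified: it treats the worst-case data loss as one full backup interval, ignores how long a backup takes to run, and assumes every backup is restorable.

```python
from datetime import timedelta


def meets_objectives(backup_interval: timedelta, restore_time: timedelta,
                     rpo: timedelta, rto: timedelta) -> dict[str, bool]:
    """Check a periodic-backup plan against recovery objectives.

    Worst-case data loss is one full backup interval: a failure just before
    the next backup loses everything written since the previous one.
    """
    return {
        "rpo_met": backup_interval <= rpo,
        "rto_met": restore_time <= rto,
    }


# Hypothetical plan: nightly backups and a 3-hour restore, against RPO 4 h and RTO 8 h.
print(meets_objectives(timedelta(hours=24), timedelta(hours=3),
                       rpo=timedelta(hours=4), rto=timedelta(hours=8)))
# {'rpo_met': False, 'rto_met': True}
```

In this example the restore is fast enough, but nightly backups could lose up to 24 hours of data, so meeting a 4-hour RPO would require backups (or replication) at least every 4 hours.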

Scalability and Its Role in Maintaining Availability

Scalability is a crucial factor in ensuring availability, especially in environments where demand can fluctuate rapidly. A scalable system can adjust its resources based on workload, ensuring that performance remains stable even during peak usage. Vertical scaling involves increasing the capacity of existing systems, while horizontal scaling involves adding more systems to distribute the load. Cloud-based infrastructures often rely heavily on horizontal scaling due to its flexibility and efficiency. Without scalability, systems can become overloaded, leading to slow response times or complete outages. By dynamically adjusting resources, scalable systems maintain availability even under unpredictable demand conditions.
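
Horizontal scaling decisions often come down to a capacity calculation like the one below. The per-instance capacity, the 25 % headroom, and the two-instance minimum are hypothetical parameters; autoscalers typically apply similar logic continuously from live metrics.

```python
import math


def instances_needed(requests_per_sec: float, capacity_per_instance: float,
                     headroom: float = 0.25, minimum: int = 2) -> int:
    """Number of identical instances needed for horizontal scaling.

    headroom reserves spare capacity for sudden spikes (0.25 = 25 %), and
    minimum keeps at least two instances so a single failure is not an outage.
    """
    required = requests_per_sec * (1 + headroom) / capacity_per_instance
    return max(minimum, math.ceil(required))


# Hypothetical service where each instance handles 500 requests per second.
for load in (300, 2_000, 9_000):
    print(load, instances_needed(load, 500))
# 300 2
# 2000 5
# 9000 23
```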

Load Balancing and Traffic Distribution Strategies

Load balancing is a technique used to distribute incoming network traffic across multiple servers or resources to ensure that no single system becomes overwhelmed. It plays a direct role in maintaining availability by preventing bottlenecks and ensuring efficient resource utilization. Load balancers monitor server performance and route traffic based on capacity, response time, and health status. This dynamic distribution ensures that even if one server fails, others continue handling requests without interruption. Load balancing is commonly used in web applications, cloud services, and large enterprise systems where high traffic volumes are expected. It enhances both performance and reliability by ensuring continuous service delivery.
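
One common strategy, round robin with health checks, can be sketched as follows. `RoundRobinBalancer` and the server names are hypothetical; real load balancers also weigh capacity and response time, as the paragraph notes, and mark servers down automatically from probe results.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hypothetical round-robin balancer that skips servers marked unhealthy."""

    def __init__(self, servers: list[str]) -> None:
        self.servers = servers
        self.healthy = set(servers)
        self._ring = cycle(servers)

    def mark_down(self, server: str) -> None:
        self.healthy.discard(server)  # e.g. after a failed health check

    def mark_up(self, server: str) -> None:
        self.healthy.add(server)

    def next_server(self) -> str:
        # At most one full pass around the ring, so this always terminates.
        for _ in range(len(self.servers)):
            server = next(self._ring)
            if server in self.healthy:
                return server
        raise RuntimeError("no healthy servers available")


lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([lb.next_server() for _ in range(3)])  # ['app-1', 'app-2', 'app-3']
lb.mark_down("app-2")
print([lb.next_server() for _ in range(4)])  # ['app-1', 'app-3', 'app-1', 'app-3']
```

When `app-2` fails its health check, traffic continues to flow to the remaining servers without any change on the client side.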

Monitoring and Real-Time System Observability

Continuous monitoring is essential for maintaining availability in modern IT environments. It involves tracking system performance, resource usage, and potential failure indicators in real time. Monitoring tools provide alerts when anomalies are detected, allowing administrators to respond quickly before issues escalate into outages. Observability extends this concept by providing deeper insights into system behavior, enabling teams to understand not just what is happening but why it is happening. Metrics such as uptime, latency, error rates, and resource utilization are continuously analyzed to ensure systems remain healthy. Effective monitoring reduces downtime by enabling proactive identification and resolution of potential issues.
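
Two of the metrics named above, uptime and error rate, reduce to simple arithmetic. This sketch assumes a 30-day window and a hypothetical 1 % error-rate alert threshold; the function names are illustrative, not taken from any monitoring product.

```python
from datetime import timedelta


def availability_percent(window: timedelta, downtime: timedelta) -> float:
    """Measured uptime as a percentage of the observation window."""
    return 100.0 * (window - downtime) / window


def downtime_budget(target_percent: float, window: timedelta) -> timedelta:
    """Maximum downtime in the window that still meets the availability target."""
    return window * (1 - target_percent / 100.0)


def should_alert(errors: int, requests: int, threshold: float = 0.01) -> bool:
    """Alert when the error rate exceeds the threshold (1 % by default)."""
    return requests > 0 and errors / requests > threshold


month = timedelta(days=30)
print(f"{availability_percent(month, timedelta(minutes=90)):.3f} %")  # 99.792 %
print(downtime_budget(99.9, month))  # 0:43:12
print(should_alert(errors=50, requests=1_000))  # True (5 % error rate)
```

A 99.9 % target over 30 days leaves a budget of about 43 minutes of downtime, so a single 90-minute outage already misses it.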

Resilience Engineering in Modern Infrastructure

Resilience engineering focuses on designing systems that can adapt to and recover from unexpected disruptions. Instead of simply preventing failures, resilient systems are built to continue operating under degraded conditions. This approach acknowledges that failures are inevitable in complex environments and prioritizes recovery and adaptation. Techniques such as graceful degradation allow systems to maintain partial functionality even when certain components fail. Resilient architectures often incorporate self-healing mechanisms that automatically detect and correct issues without human intervention. This ensures that availability is maintained even under challenging and unpredictable conditions.
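
Graceful degradation can be sketched as a wrapper that falls back to the last good result, or a generic default, when a dependency fails. The recommendation service, the `backend_up` switch, and all names are hypothetical stand-ins for a real remote dependency.

```python
from typing import Callable


class RecommendationService:
    """Hypothetical wrapper that degrades gracefully: if the live backend
    fails, serve the last good (possibly stale) result for that user, or a
    generic default, instead of failing the whole page."""

    DEFAULT = ["bestsellers"]

    def __init__(self, fetch_live: Callable[[str], list[str]]) -> None:
        self._fetch_live = fetch_live
        self._last_good: dict[str, list[str]] = {}

    def recommend(self, user_id: str) -> tuple[list[str], str]:
        try:
            items = self._fetch_live(user_id)
            self._last_good[user_id] = items  # remember for later fallbacks
            return items, "live"
        except Exception:
            if user_id in self._last_good:
                return self._last_good[user_id], "cached"
            return list(self.DEFAULT), "default"


backend_up = True


def fetch_live(user_id: str) -> list[str]:
    """Stand-in for a remote call that can fail."""
    if not backend_up:
        raise TimeoutError("recommendation backend timed out")
    return [f"item-for-{user_id}"]


svc = RecommendationService(fetch_live)
print(svc.recommend("u1"))  # (['item-for-u1'], 'live')
backend_up = False          # simulate the dependency failing
print(svc.recommend("u1"))  # (['item-for-u1'], 'cached')
print(svc.recommend("u2"))  # (['bestsellers'], 'default')
```

The page keeps working with less personalized content, which is the partial functionality the paragraph describes, rather than failing outright.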

Network Availability and Communication Stability

Network availability is a critical aspect of overall system availability because most digital services rely on constant communication between devices and servers. Network disruptions can lead to service outages even if underlying systems are functioning correctly. To maintain network availability, redundant communication paths ensure that data can still flow if one route fails. Routing protocols detect failed links and recompute paths around them, while transport protocols such as TCP detect lost packets and retransmit them. High-performance networks also use quality-of-service mechanisms to prioritize critical traffic. These strategies keep communication stable and reliable, supporting uninterrupted access to services and applications.
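
Rerouting around a failed link can be sketched as a path search over a network graph. The four-node topology and `find_path` are hypothetical, and the search uses breadth-first search on hop count as a simplification; link-state routing protocols such as OSPF instead run Dijkstra's shortest-path algorithm over configured link costs.

```python
from collections import deque
from typing import Optional

# Hypothetical topology with two independent routes from A to D.
LINKS = {
    "A": {"B", "C"},
    "B": {"A", "D"},
    "C": {"A", "D"},
    "D": {"B", "C"},
}


def find_path(links: dict[str, set[str]], src: str, dst: str,
              failed: frozenset = frozenset()) -> Optional[list[str]]:
    """Breadth-first search for a fewest-hop path that avoids failed links.

    `failed` holds links as frozensets, so {"A", "B"} and {"B", "A"} are the same link.
    """
    queue = deque([[src]])
    visited = {src}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == dst:
            return path
        for neighbor in sorted(links[node]):  # sorted for deterministic output
            if neighbor in visited or frozenset((node, neighbor)) in failed:
                continue
            visited.add(neighbor)
            queue.append(path + [neighbor])
    return None  # no surviving route


print(find_path(LINKS, "A", "D"))                                 # ['A', 'B', 'D']
print(find_path(LINKS, "A", "D", frozenset({frozenset("BD")})))   # ['A', 'C', 'D']
```

When the B to D link fails, traffic shifts to the redundant path through C; only if both paths fail does the function return `None`.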

Impact of Availability Failures on Digital Systems

When availability is compromised, the effects can be immediate and widespread. Users may lose access to critical services, leading to productivity loss and operational disruption. In financial systems, downtime can prevent transactions from being processed, resulting in financial losses and customer dissatisfaction. In communication platforms, outages can interrupt essential interactions and workflows. Even short periods of unavailability can damage trust in digital services, especially when systems are expected to operate continuously. Availability failures may be caused by hardware malfunctions, software errors, cyberattacks, or natural disasters. Regardless of the cause, the inability to access systems highlights the importance of robust availability planning and infrastructure design.

The Interdependence of Confidentiality, Integrity, and Availability

The three components of the CIA Triad are deeply interconnected and must function together to ensure comprehensive cybersecurity. Confidentiality ensures that data is protected from unauthorized access, integrity ensures that data remains accurate and unaltered, and availability ensures that data and systems remain accessible when needed. If any one of these principles is compromised, the overall security framework becomes unstable. For example, highly secure data that is inaccessible provides no practical value, while accessible data that lacks integrity cannot be trusted. Similarly, data that is both accessible and accurate but exposed to unauthorized users creates significant security risks. The balance between these principles is essential for maintaining a secure and functional digital environment.

Balancing Security Objectives in System Design

Designing secure systems requires balancing confidentiality, integrity, and availability in a way that aligns with operational requirements. Overemphasis on confidentiality may restrict access to the point where usability is affected, while excessive focus on availability may weaken security controls. Similarly, prioritizing integrity without considering accessibility may result in rigid systems that are difficult to use. Effective cybersecurity design involves evaluating risk levels and implementing controls that provide adequate protection without compromising functionality. This balance is achieved through layered security architectures, adaptive access controls, and continuous risk assessment. The goal is to create systems that are secure, reliable, and efficient under all operating conditions.

Integrated Security Strategies for Modern Environments

Modern cybersecurity strategies rely on integrating confidentiality, integrity, and availability into a unified framework rather than treating them as separate elements. This involves designing systems with multiple layers of defense, continuous monitoring, and adaptive response mechanisms. Security policies are aligned with operational requirements so that protection measures do not hinder performance. Automation plays an increasingly important role in maintaining this balance by enabling real-time responses to threats and system changes. Automated analytics help detect anomalies that may affect any component of the CIA Triad, allowing for faster mitigation. This integrated approach keeps systems secure, accurate, and accessible in dynamic digital environments where threats and demands constantly evolve.

Conclusion

The CIA Triad—Confidentiality, Integrity, and Availability—serves as the structural foundation of modern cybersecurity. Every secure system, whether it is a small application or a global-scale infrastructure, relies on these three principles working together in balance. Without them, digital environments become unstable, unreliable, and vulnerable to a wide range of threats. Understanding the triad is not simply an academic requirement for certification exams; it is a practical necessity for anyone involved in designing, managing, or interacting with digital systems.

Confidentiality ensures that sensitive information remains protected from unauthorized access. It establishes boundaries around data, defining who can view or disclose it. In real-world terms, confidentiality protects personal identities, financial records, healthcare information, and organizational secrets. As digital ecosystems expand and data moves across multiple platforms, maintaining confidentiality becomes increasingly complex. Encryption, authentication systems, and access control mechanisms all contribute to preserving confidentiality, but the underlying principle remains consistent: information must only be accessible to those who are explicitly authorized. When confidentiality fails, trust is the first casualty, followed closely by financial and reputational damage. In many modern environments, confidentiality also extends to protecting metadata, communication patterns, and behavioral data, which can be just as sensitive as the content itself.
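The principle that information must only be accessible to explicitly authorized parties is often implemented as deny-by-default access control. The following sketch uses role-based access control with a hypothetical role-to-permission table; the role and action names are invented for illustration.

```python
# Hypothetical role-to-permission table; a real system would load this
# from a policy store rather than hard-coding it.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "doctor": {"read_record", "update_record"},
    "billing": {"read_invoice"},
    "auditor": {"read_record", "read_invoice"},
}


def is_authorized(role: str, action: str) -> bool:
    """Deny by default: allow only actions explicitly granted to the role."""
    # Unknown roles map to an empty permission set, so they are refused.
    return action in ROLE_PERMISSIONS.get(role, set())
```

Anything not listed, including a request from an unknown role, is refused. This reflects the least-privilege idea: access is granted explicitly rather than assumed.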

Integrity, on the other hand, ensures that information remains accurate, consistent, and trustworthy throughout its lifecycle. Because decisions increasingly depend on digital data, integrity is essential for reliability. Whether it is a financial transaction, a medical diagnosis, or a system log, the accuracy of information determines the quality of outcomes. Even minor alterations in data can have significant consequences, especially in critical systems. Mechanisms such as hashing, digital signatures, and version control systems are designed to detect unauthorized or accidental modifications (version control also allows earlier states to be restored), while access controls help prevent unauthorized changes in the first place. Together, these tools help ensure that data remains in its intended state, free from corruption or manipulation. Without integrity, systems may still function, but the results they produce cannot be trusted, rendering them effectively useless in high-stakes environments. In distributed infrastructures, integrity also includes synchronization, ensuring that multiple copies of data remain consistent with one another.
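A hash-based integrity check can be sketched with Python's standard `hmac` and `hashlib` modules. A plain hash such as SHA-256 reveals accidental corruption, but an attacker who can modify the data can usually recompute the hash as well; a keyed HMAC ties the check to a shared secret. The key and record below are hypothetical placeholders. Digital signatures, which use asymmetric key pairs, would need a third-party library and are not shown here.

```python
import hashlib
import hmac


def make_tag(message: bytes, key: bytes) -> str:
    """Return a hex-encoded HMAC-SHA256 tag for `message` under `key`."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify(message: bytes, key: bytes, tag: str) -> bool:
    """Recompute the tag and compare it to the stored one in constant time."""
    return hmac.compare_digest(make_tag(message, key), tag)


key = b"example-shared-secret"   # hypothetical key; never hard-code real keys
record = b"amount=100;to=alice"  # hypothetical record being protected
tag = make_tag(record, key)

print(verify(record, key, tag))                  # True: record is unchanged
print(verify(b"amount=900;to=alice", key, tag))  # False: record was altered
```

`hmac.compare_digest` is used instead of `==` so that the comparison does not leak timing information about how many characters matched.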

Availability completes the triad by ensuring that systems and data remain accessible when needed. It is not enough for information to be secure and accurate if users cannot reach it at the right time. Many modern digital services are expected to operate continuously. Any downtime, even for a short period, can result in lost productivity, financial losses, and user dissatisfaction. Availability is achieved through redundancy, load balancing, disaster recovery planning, and scalable infrastructure. These strategies help systems withstand failures, adapt to increased demand, and recover quickly from disruptions. In essence, availability keeps the digital world functional and responsive under varying conditions. In highly dependent ecosystems such as online services, even small delays can degrade user experience, making availability a performance concern as well as a security one.
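Redundancy combined with load balancing can be illustrated with a minimal round-robin balancer that skips backends marked unhealthy. This is a sketch under simplifying assumptions: the backend names are hypothetical, and health status is set manually here rather than by the periodic health checks a real load balancer would run.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Rotate requests across backends, skipping any marked unhealthy."""

    def __init__(self, backends: list[str]) -> None:
        self.backends = list(backends)
        self.healthy = set(backends)
        self._ring = cycle(self.backends)  # endless rotation over all backends

    def mark_down(self, backend: str) -> None:
        self.healthy.discard(backend)

    def mark_up(self, backend: str) -> None:
        self.healthy.add(backend)

    def next_backend(self) -> str:
        # Examine each backend at most once per call, so a fully failed
        # pool raises an error instead of looping forever.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends available")
```

When one backend fails, the remaining healthy ones absorb its traffic and the service stays reachable, which is the redundancy idea in miniature.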

While each pillar of the CIA Triad serves a distinct purpose, their true strength lies in their interdependence. They are not isolated concepts but interconnected components of a unified security framework. Strengthening one aspect without considering the others can create imbalance. For example, increasing confidentiality without regard for availability may make systems overly restrictive and difficult to use. Similarly, prioritizing availability without sufficient security controls can expose systems to unauthorized access. A well-designed cybersecurity strategy requires careful balancing of all three principles to ensure that systems remain secure, reliable, and usable simultaneously. In practice, organizations often face trade-offs where enhancing one dimension introduces pressure on another, making strategic decision-making essential.

In practical environments, this balance is achieved through layered security architectures and continuous monitoring. Organizations must evaluate risks dynamically and adjust their controls based on changing threats and operational demands. Cybersecurity is no longer a static discipline; it is an ongoing process that evolves alongside technology and attack methods. As systems become more complex and interconnected, maintaining the CIA Triad requires not only technical solutions but also strategic planning and organizational awareness. Every layer of infrastructure, from network design to application development, must incorporate these principles to ensure comprehensive protection. Modern security frameworks increasingly rely on automation and intelligent monitoring systems to maintain this balance at scale.

The importance of the CIA Triad extends beyond technical systems and into organizational behavior and decision-making. Security is not solely the responsibility of IT departments; it is a shared responsibility across all levels of an organization. Employees, administrators, developers, and users all play a role in maintaining confidentiality, ensuring integrity, and supporting availability. Human error remains one of the most common causes of security breaches, highlighting the need for awareness, training, and consistent adherence to security practices. When individuals understand the importance of these principles, they contribute to a stronger and more resilient security environment. Over time, this creates a culture where security becomes embedded in daily operations rather than treated as an external requirement.

In modern digital ecosystems such as cloud computing, mobile networks, and distributed systems, the relevance of the CIA Triad becomes even more pronounced. Data is no longer confined to a single location; it exists across multiple platforms and devices. This distributed nature increases both opportunity and risk. While it enables flexibility and scalability, it also introduces new challenges in maintaining consistent security standards. Organizations must implement advanced monitoring tools, automated response systems, and adaptive security models to ensure that the CIA Triad is upheld across all environments. The complexity of these systems means that security must be continuously evaluated rather than periodically reviewed.

Ultimately, the CIA Triad represents more than just a theoretical model; it is a practical framework that defines how digital trust is established and maintained. Every secure interaction in the digital world depends on the successful implementation of confidentiality, integrity, and availability. When these principles are effectively balanced, systems become resilient, data becomes trustworthy, and services remain reliable. When they are neglected, the consequences can be severe, ranging from data breaches and system failures to complete operational breakdowns. The long-term stability of any digital ecosystem depends on how effectively these three principles are enforced and maintained over time.

As technology continues to evolve, the importance of the CIA Triad will only increase. Emerging technologies such as artificial intelligence, Internet of Things devices, and cloud-native architectures introduce new complexities that demand stronger security foundations. The core principles, however, remain unchanged. Confidentiality protects information, integrity ensures accuracy, and availability guarantees access. Together, they form the backbone of cybersecurity and provide a stable framework for navigating an increasingly digital world where data is one of the most valuable assets.