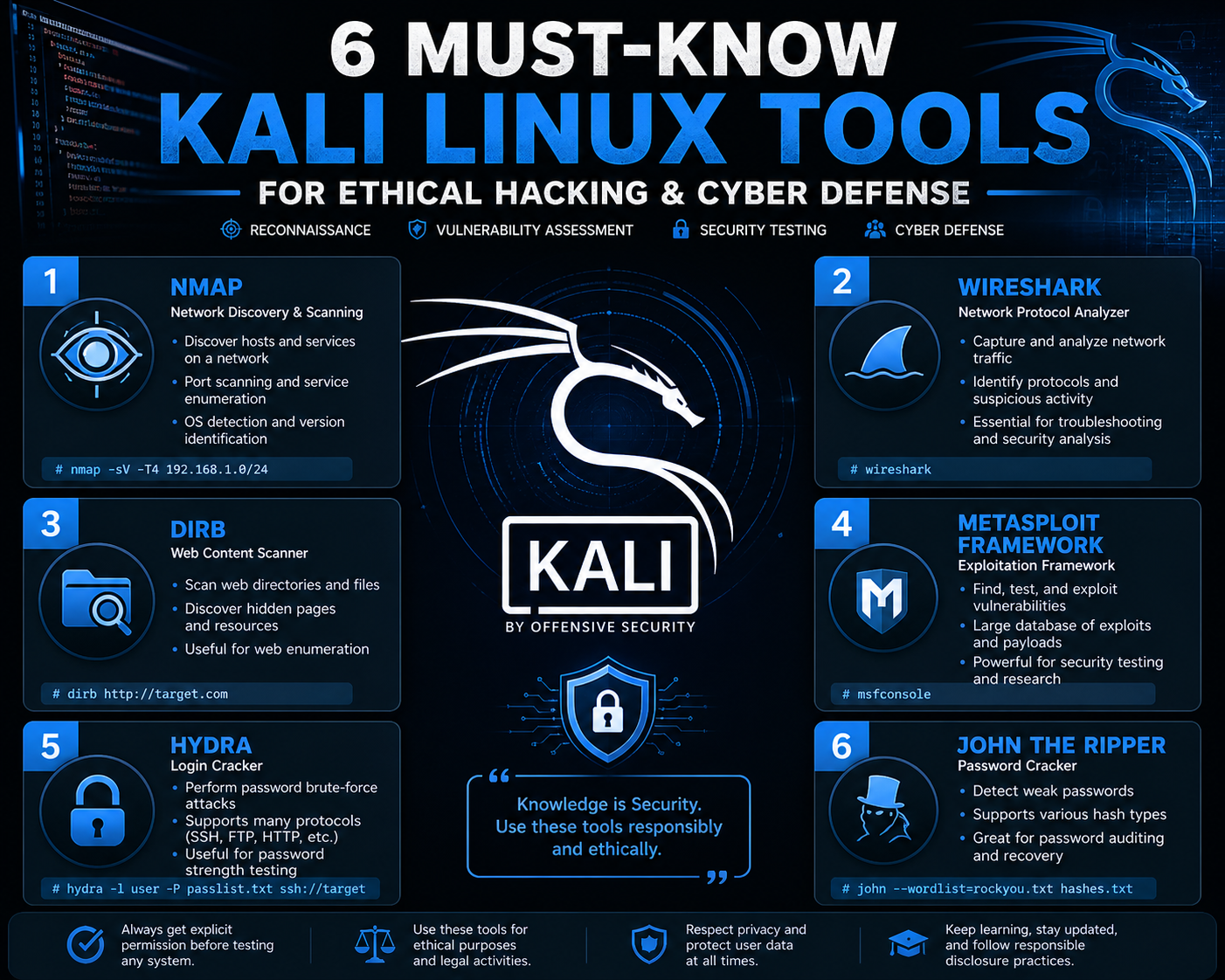

Kali Linux is a specialized operating system built for security testing, penetration analysis, and digital defense evaluation. It is designed to support professionals who assess the resilience of systems by simulating real-world attack techniques in controlled environments. The strength of Kali Linux comes from its extensive collection of preinstalled tools that cover nearly every stage of a security assessment, from initial discovery to deeper exploitation analysis.

In practical use, most security assessments do not rely on all available tools at once. Instead, a small set of highly effective utilities is used repeatedly because they provide consistent and reliable insights. These tools help identify active systems, map network structures, analyze exposed services, and evaluate authentication mechanisms. The early stages of testing are particularly important because they define the direction of the entire assessment process. If initial discovery is incomplete or inaccurate, later stages may miss critical vulnerabilities.

The workflow typically begins with reconnaissance and enumeration, where the goal is to gather as much information as possible without interacting deeply with the target systems. This phase focuses on visibility rather than exploitation. Understanding what systems exist, what services they expose, and how they are configured creates the foundation for all further analysis.

Network Discovery and Port Visibility Analysis

One of the most important steps in security testing is identifying which systems are active on a network and what services they are exposing. This is done through structured scanning techniques that observe how systems respond to network requests. Every system communicates through ports, which act as gateways for specific services such as web traffic, file sharing, or remote administration.

During network discovery, a range of ports is probed to determine which ones are open, closed, or filtered. Open ports have a service listening for incoming connections. Closed ports are reachable but have nothing listening, so the host answers negatively (for TCP, typically with a reset). Filtered ports return no usable answer, usually because a firewall or other security control drops or rejects the probes, so the scanner cannot tell whether a service sits behind them. By interpreting these responses, testers can build a clear picture of the system’s exposure.
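The open/closed/filtered distinction can be illustrated with a minimal TCP connect scan. This is a sketch under stated assumptions: it uses only Python's standard `socket` module and completes a full TCP handshake (unlike half-open SYN scans, which need raw-socket privileges). It treats a refused connection as closed and a timeout or any other error as filtered, and the errno mapping assumes a Linux-like system. The helper names `classify_connect_result` and `scan_ports` are illustrative.

```python
import errno
import socket


def classify_connect_result(code: int) -> str:
    """Map the return value of socket.connect_ex() to a port state."""
    if code == 0:
        return "open"  # handshake completed: something is listening
    if code == errno.ECONNREFUSED:
        return "closed"  # host answered with a reset: reachable, nothing listening
    return "filtered"  # timeout, unreachable, etc.: no usable answer


def scan_ports(host: str, ports: list[int], timeout: float = 0.5) -> dict[int, str]:
    """Run a TCP connect scan and return {port: state}."""
    results = {}
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            try:
                code = sock.connect_ex((host, port))
            except OSError:
                code = -1  # e.g. a timeout raised instead of returned
            results[port] = classify_connect_result(code)
    return results


if __name__ == "__main__":
    # Example target: the local machine.
    print(scan_ports("127.0.0.1", [22, 80, 443, 8080]))
```

Scanning a short list of common ports first and widening to the full range later is just a matter of what is passed as `ports`.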

In real-world environments, scanning is often optimized to focus on commonly used ports first, because most systems expose only a limited number of services. However, deeper analysis may involve scanning all 65,535 TCP ports (and relevant UDP ports) so that hidden or non-standard services are not overlooked. This approach is essential in complex environments where administrators may intentionally or unintentionally leave unusual services exposed.

Service Identification and System Fingerprinting Techniques

After identifying open ports, the next step is to determine what services are running on those ports. This process involves analyzing responses from applications to identify software types and versions. Each service behaves in a slightly different way, and these differences can be used to infer what is running behind the scenes.

Service identification is important because vulnerabilities are often tied to specific software versions. Outdated applications may contain known security weaknesses that can be analyzed further in later stages of testing. Even when systems are patched, configuration details can sometimes reveal useful information about the underlying infrastructure.
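Checking whether a detected version predates a fix is a common step here, and it is easy to get wrong with plain string comparison. The sketch below assumes the version appears as a dotted number inside a banner; the product name `ExampleServer` and the "fixed in" value are hypothetical placeholders, not real advisory data.

```python
import re


def parse_version(text: str) -> tuple[int, ...]:
    """Pull the first dotted numeric version out of a banner, e.g. 'Foo/1.4.2' -> (1, 4, 2)."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        raise ValueError(f"no dotted version found in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def is_older_than(detected_banner: str, fixed_in: str) -> bool:
    """True if the detected version sorts before the first fixed version."""
    detected = parse_version(detected_banner)
    fixed = parse_version(fixed_in)
    # Pad with zeros so that (1, 4) and (1, 4, 0) compare as equal.
    width = max(len(detected), len(fixed))
    detected += (0,) * (width - len(detected))
    fixed += (0,) * (width - len(fixed))
    return detected < fixed
```

For example, `is_older_than("ExampleServer/1.4.2 (Linux)", "1.4.10")` is `True`, whereas the string comparison `"1.4.2" < "1.4.10"` is `False`, which is why versions are compared as integer tuples.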

Fingerprinting techniques also help determine operating system characteristics. By analyzing network behavior and protocol implementation details, such as initial TTL values, TCP window sizes, and the ordering of TCP options, it is possible to estimate whether a system is running Linux, Windows, or another platform. This information is valuable for planning further testing strategies.
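One of the simplest signals is the TTL of a reply packet. The sketch below assumes the common default initial TTLs (64 for Linux and most Unix-like systems, 128 for Windows, 255 for much network equipment) and that each router hop decrements the value by one. This is a single-signal heuristic that administrators can easily change; real fingerprinting combines many signals.

```python
# Typical default initial TTLs; these are conventions, not guarantees.
COMMON_INITIAL_TTLS = {
    64: "Linux/Unix-like",
    128: "Windows",
    255: "network equipment or other systems",
}


def guess_os_from_ttl(observed_ttl: int) -> tuple[int, str, int]:
    """Return (assumed initial TTL, OS family guess, estimated hop count)."""
    if not 1 <= observed_ttl <= 255:
        raise ValueError("TTL must be between 1 and 255")
    # Each router hop decrements the TTL by one, so the sender's initial
    # value is assumed to be the smallest common default >= the observed one.
    for initial in sorted(COMMON_INITIAL_TTLS):
        if observed_ttl <= initial:
            return initial, COMMON_INITIAL_TTLS[initial], initial - observed_ttl
    raise AssertionError("unreachable: 255 covers every valid TTL")
```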

Deep Enumeration and Information Expansion Strategies

Enumeration goes beyond simply identifying services. It focuses on extracting as much contextual information as possible from those services. This includes configuration details, user access structures, and service dependencies. The goal is to understand how different components of a system interact with each other.

In many cases, services reveal metadata that can be used to infer internal architecture. For example, web servers may expose version headers, database services may reveal connection structures, and file-sharing systems may show directory configurations. These small details often become important clues when building an understanding of system security.
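Version headers are among the easiest of these clues to collect. A minimal sketch, assuming the response headers are already available as a dictionary (for instance, `dict(response.getheaders())` from Python's `http.client`). The header list covers a few well-known version-bearing headers and is not exhaustive.

```python
import re

# Response headers that commonly carry product and version strings.
VERSION_HEADERS = {"server", "x-powered-by", "x-aspnet-version", "x-aspnetmvc-version"}


def disclosed_versions(headers: dict[str, str]) -> dict[str, str]:
    """Return the headers that appear to disclose a software version."""
    return {
        name: value
        for name, value in headers.items()
        # Header names are case-insensitive; require a digit to count as a version.
        if name.lower() in VERSION_HEADERS and re.search(r"\d", value)
    }
```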

Enumeration also involves identifying relationships between services. A web application might depend on a database backend, while authentication systems may rely on centralized directory services. Understanding these relationships helps identify potential attack paths where one weak component can affect the entire system.

Authentication Testing and Credential Validation Analysis

Once systems and services have been mapped, attention often shifts to authentication mechanisms. Many systems rely on username and password combinations to control access. While this is a common security method, it is also one of the most frequently misconfigured areas in real environments.

Authentication testing involves evaluating how systems respond to repeated login attempts and whether they enforce restrictions against excessive access attempts. Weak systems may allow unlimited login attempts without delay or restriction, making them vulnerable to automated testing techniques.

Credential validation analysis focuses on determining whether default, weak, or commonly used passwords are present in a system. Many breaches occur not because of advanced exploitation techniques but due to simple authentication failures. Systems that do not enforce strong password policies or account protection mechanisms are particularly vulnerable.
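From the defensive side, the same criteria can be expressed as a policy check that an organization runs against its own credentials. The sketch below is illustrative: the 12-character minimum and the tiny `COMMON_PASSWORDS` sample are assumptions, and a real audit would use a much larger list of known-breached passwords.

```python
# A tiny illustrative sample; real audits use much larger breached-password lists.
COMMON_PASSWORDS = {"password", "123456", "qwerty", "admin", "letmein", "welcome"}


def weak_password_reasons(username: str, password: str, min_length: int = 12) -> list[str]:
    """List the reasons a credential would fail this (hypothetical) policy."""
    reasons = []
    if len(password) < min_length:
        reasons.append("too short")
    if password.lower() in COMMON_PASSWORDS:
        reasons.append("common password")
    if username and username.lower() in password.lower():
        reasons.append("contains username")
    return reasons
```

An empty list means the credential passes these particular checks, not that it is strong.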

Modern environments often implement defenses such as account lockouts, request throttling, and anomaly detection. However, these protections are not always configured correctly, and inconsistencies can still be identified during testing.
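To make "account lockout" concrete, here is a minimal sketch of a per-account sliding-window lockout. The class name `LoginThrottle` and its thresholds (5 failures in 300 seconds) are hypothetical. The injectable `clock` exists only so the behaviour can be tested without waiting.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class LoginThrottle:
    """Lock an account after too many failed logins inside a sliding time window."""

    def __init__(self, max_failures: int = 5, window_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self.clock = clock
        self._failures: defaultdict[str, deque[float]] = defaultdict(deque)

    def _recent(self, account: str) -> deque[float]:
        # Drop failure timestamps that have aged out of the window.
        cutoff = self.clock() - self.window_seconds
        failures = self._failures[account]
        while failures and failures[0] <= cutoff:
            failures.popleft()
        return failures

    def is_locked(self, account: str) -> bool:
        return len(self._recent(account)) >= self.max_failures

    def record_failure(self, account: str) -> None:
        self._recent(account).append(self.clock())

    def record_success(self, account: str) -> None:
        self._failures.pop(account, None)
```

A login handler would call `is_locked` before checking the password and refuse the attempt while it returns `True`. Testing often reveals inconsistencies in exactly this logic, such as a lockout applied to one login endpoint but not another.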

Automated Password Testing and Risk Evaluation

Automated password testing is used to evaluate the strength of authentication systems by attempting multiple credential combinations. These tests rely on structured lists of commonly used passwords, predictable patterns, and previously exposed credentials. The effectiveness of this approach depends heavily on the quality of the password selection strategy.

Weak password usage remains one of the most persistent security issues in digital environments. Many users still rely on simple or repetitive patterns that are easy to predict. In corporate environments, password reuse across multiple systems increases the risk of unauthorized access if one system is compromised.

Automated testing tools evaluate how systems respond to repeated login attempts and whether they allow unrestricted guessing. However, modern systems may include protective mechanisms such as rate limiting or multi-factor authentication, which reduce the success rate of these methods. As a result, careful analysis of system behavior is required to understand whether authentication systems are truly secure or only superficially protected.

Web Application Exposure and Content Structure Analysis

Web applications represent one of the most common attack surfaces in modern environments. These systems often expose a wide range of functionality through browser-based interfaces, making them accessible but also potentially vulnerable if not properly configured.

Security analysis of web applications involves identifying visible components such as login pages, administrative panels, and publicly accessible directories. In many cases, additional hidden components may exist that are not immediately visible through standard browsing. These components may include backup files, development interfaces, or outdated modules.
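One public place where such hidden paths often leak is `robots.txt`, which asks crawlers to avoid certain paths but does not restrict access to them. A minimal sketch that extracts the `Disallow` entries, assuming the file's text has already been fetched:

```python
def disallowed_paths(robots_txt: str) -> list[str]:
    """Collect the non-empty Disallow values from a robots.txt document."""
    paths = []
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "disallow" and value.strip():
            paths.append(value.strip())
    return paths
```

An empty `Disallow:` line means "nothing is disallowed", so it is skipped rather than reported.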

Understanding the structure of a web application is essential for identifying weak points. Applications that rely on multiple plugins or external extensions may inherit vulnerabilities from those components. Even if the core application is secure, third-party additions can introduce significant risk if they are not maintained properly.

User Discovery and Access Role Mapping in Web Systems

Many web platforms include multiple user roles with different levels of access. These roles may include administrators, editors, contributors, and standard users. Understanding how these roles are structured is an important part of security analysis.

User discovery involves identifying valid usernames or account identifiers within a system. This information can sometimes be obtained through system responses or observable behavior differences during login attempts. Once valid accounts are identified, further testing can be conducted to evaluate how securely those accounts are protected.

Role mapping helps determine what level of access each user type has within the system. In some cases, lower-level accounts may still have access to sensitive functions due to misconfiguration. Identifying these inconsistencies is important for understanding the true security posture of the application.
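Once roles and their permissions have been mapped, spotting lower-level roles with sensitive functions is a simple set comparison. The sketch assumes the mapping has been collected into a dictionary. The role and permission names are hypothetical.

```python
def overprivileged_roles(role_permissions: dict[str, set[str]],
                         sensitive: set[str],
                         privileged_roles: set[str]) -> dict[str, list[str]]:
    """Report non-privileged roles that were granted sensitive permissions."""
    return {
        role: sorted(perms & sensitive)
        for role, perms in role_permissions.items()
        if role not in privileged_roles and perms & sensitive
    }
```

For example, an `editor` role that unexpectedly holds `export_data` would be reported as `{"editor": ["export_data"]}`.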

Early-Stage Security Mapping and Attack Surface Identification

The combination of network scanning, service identification, enumeration, and authentication testing forms the foundation of early-stage security analysis. This phase is focused on building a complete picture of the target environment without actively modifying or disrupting systems.

By collecting and correlating information from multiple sources, testers can identify potential weaknesses and map out possible attack paths. This includes understanding which services are exposed, how they are configured, and where authentication weaknesses may exist.

Early-stage mapping is essential because it determines the efficiency of all later stages of testing. A well-constructed map allows for targeted analysis, while incomplete information may result in missed vulnerabilities or inefficient testing approaches.

Expanding Security Testing Beyond Initial Reconnaissance

Once initial reconnaissance and network mapping are complete, the focus of security testing shifts toward deeper interaction with services and applications. At this stage, the goal is not just to identify what exists, but to understand how it behaves under different conditions. This includes analyzing authentication systems, web application structures, and service-level vulnerabilities that may not be visible during surface-level scanning.

Kali Linux provides a set of tools that support this deeper phase of analysis. These tools are designed to test assumptions made during reconnaissance and validate whether identified systems can be accessed, manipulated, or bypassed under certain conditions. The testing process becomes more targeted, focusing on specific applications and entry points rather than broad network discovery.

This phase is where many real-world vulnerabilities are uncovered because systems that appear secure externally often reveal misconfigurations or weak logic when subjected to structured interaction.

Web Application Analysis and Structural Mapping Techniques

Web applications are one of the most common interfaces exposed by modern systems. They often serve as the primary entry point for users and administrators, making them a critical focus for security evaluation. Web application analysis involves understanding how different components of a site interact, how data flows between client and server, and how user input is processed.

The structure of a web application can reveal a great deal about its internal design. Pages may be connected through predictable URL patterns, hidden directories may exist outside normal navigation paths, and administrative interfaces may be exposed without proper protection. Even when applications appear visually simple, their backend structure can be complex and layered.

Security testing in this area focuses on identifying these structures and determining whether they are properly secured. This includes analyzing session behavior, input validation, authentication flows, and access control mechanisms. Each of these components plays a role in determining whether the application can resist unauthorized access or manipulation.

Understanding Authentication Flows and Access Control Behavior

Authentication systems are responsible for verifying user identity before granting access to protected resources. In web applications, this typically involves login forms, session tokens, and backend verification systems. While authentication is a standard security feature, it is often implemented inconsistently across different systems.

Security testing in this area focuses on understanding how authentication requests are processed. This includes analyzing how the system responds to valid and invalid credentials, how sessions are managed after login, and whether authentication states can be manipulated. Weak implementations may allow session reuse, predictable token generation, or improper logout handling.

Access control is closely related to authentication, but focuses on what users can do after they have logged in. Systems may incorrectly assign permissions or fail to enforce role restrictions, allowing users to access functions beyond their intended privileges. Identifying these weaknesses requires careful analysis of how requests are handled across different user roles.

Credential Attack Strategies and Authentication Stress Testing

Credential-based attacks remain one of the most widely used testing methods in security assessments. These methods evaluate how resilient authentication systems are against repeated login attempts using different combinations of usernames and passwords. The goal is not simply to guess credentials but to measure how well a system defends against systematic testing.

Authentication stress testing involves simulating multiple login attempts in a controlled manner. This helps identify whether systems enforce protective mechanisms such as account lockouts, request delays, or IP-based restrictions. Systems without these protections are significantly more vulnerable to automated access attempts.

The effectiveness of credential testing depends heavily on input data quality. Common passwords, predictable patterns, and credentials reused across systems significantly increase the likelihood of a successful unauthorized login. Many security breaches occur not due to complex exploits but because of weak or reused passwords.

Modern systems often attempt to mitigate these risks by enforcing complexity requirements, limiting login attempts, and introducing multi-factor authentication. However, inconsistent implementation of these controls can still result in exploitable weaknesses.

Password Pattern Analysis and Human Behavior Exploitation

Human behavior plays a major role in password selection, which makes password pattern analysis an important aspect of security testing. Many users choose passwords that are easy to remember, such as names, dates, or simple sequences. These patterns create predictable structures that can be analyzed and tested systematically.

Security testing in this area focuses on identifying these predictable behaviors and evaluating whether systems are vulnerable to them. Even when password policies enforce complexity requirements, users often adapt in predictable ways, such as adding numbers or symbols to common words.
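These adaptations can be reversed mechanically, which is why they add little real strength. The sketch below strips trailing digits and symbols and undoes a few common character substitutions before checking the result against a dictionary. The substitution table and the suffix character set are illustrative assumptions, not a complete model of user behaviour.

```python
import re

# Common character substitutions mapped back to letters (an illustrative subset).
LEET_MAP = str.maketrans({"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "7": "t", "@": "a", "$": "s"})


def base_word(password: str) -> str:
    """Undo two common adaptations: trailing digits/symbols and character swaps."""
    without_suffix = re.sub(r"[0-9!@#$%^&*?.]+$", "", password)
    return without_suffix.lower().translate(LEET_MAP)


def is_predictable(password: str, dictionary: set[str]) -> bool:
    """True if the password reduces to a dictionary word."""
    return base_word(password) in dictionary
```

A password such as `P@ssw0rd2024!` satisfies many complexity rules, yet it reduces to `password`.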

Understanding these behavioral patterns allows testers to assess whether authentication systems rely too heavily on user discipline rather than technical enforcement. Systems that do not enforce strong password policies at the technical level remain vulnerable even if users attempt to comply with guidelines.

Web-Based Authentication Interception and Request Analysis

Web applications transmit authentication data through structured requests that can be analyzed to understand how login systems operate. These requests often include usernames, passwords, session identifiers, and additional metadata. By examining these elements, it becomes possible to understand how authentication decisions are made.

Request analysis involves observing how the server responds to different input conditions. For example, variations in response timing or error messages can reveal information about whether a username exists or whether a password is incorrect. These subtle differences can be used to refine further testing strategies.
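When testing one's own application, these differences can be checked systematically by comparing a failed login for an existing account with one for a non-existent account. A minimal sketch, assuming the responses have already been captured into the hypothetical `LoginResponse` record. The 50 ms timing tolerance is arbitrary, and a single timing sample is noisy, so real tests compare many samples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoginResponse:
    status: int
    body: str
    elapsed_ms: float


def enumeration_signals(existing_user: LoginResponse, unknown_user: LoginResponse,
                        timing_tolerance_ms: float = 50.0) -> list[str]:
    """List observable differences between two failed-login responses."""
    signals = []
    if existing_user.status != unknown_user.status:
        signals.append("status code differs")
    if existing_user.body != unknown_user.body:
        signals.append("response body differs")
    if abs(existing_user.elapsed_ms - unknown_user.elapsed_ms) > timing_tolerance_ms:
        signals.append("response time differs")
    return signals
```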

Session handling is another critical area of analysis. Once a user logs in, the system typically issues a session token that maintains authentication state. Weak session management can lead to predictable tokens, session reuse, or session fixation issues. These weaknesses can compromise the integrity of authentication systems even if login mechanisms appear secure.
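The countermeasures to these weaknesses are straightforward to illustrate. The in-memory `SessionStore` below is a hypothetical sketch, not a production session manager. It draws tokens from Python's `secrets` module (a cryptographically secure source), replaces the pre-login token at login to block session fixation, and invalidates tokens on the server at logout.

```python
import secrets
from typing import Optional


class SessionStore:
    """In-memory session table illustrating random tokens and rotation at login."""

    def __init__(self) -> None:
        self._sessions: dict[str, Optional[str]] = {}

    def create(self, user: Optional[str] = None) -> str:
        # 32 random bytes from the OS CSPRNG, URL-safe base64 encoded.
        token = secrets.token_urlsafe(32)
        self._sessions[token] = user
        return token

    def rotate_on_login(self, pre_login_token: str, user: str) -> str:
        # Discard the pre-login token so a token planted by an attacker
        # (session fixation) never becomes authenticated.
        self._sessions.pop(pre_login_token, None)
        return self.create(user)

    def logout(self, token: str) -> None:
        self._sessions.pop(token, None)  # server-side invalidation

    def is_valid(self, token: str) -> bool:
        return token in self._sessions

    def user_for(self, token: str) -> Optional[str]:
        return self._sessions.get(token)
```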

User Enumeration Techniques in Web Applications

User enumeration is the process of identifying valid usernames within a system. Many applications unintentionally expose this information through differences in response behavior. For example, a system may respond differently when a valid username is entered compared to an invalid one.

This information can be used to build a list of valid accounts, which can then be tested further for authentication weaknesses. In environments where user privacy is important, preventing enumeration is a key security requirement. However, poorly designed systems often leak this information through subtle inconsistencies.
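A common defensive pattern returns the same message for every failure and performs the same hashing work whether or not the username exists. The sketch below uses the standard library's PBKDF2 and `hmac.compare_digest`. The iteration count, the `users` layout (username to salt and hash), and the function names are illustrative assumptions. This pattern reduces response differences but does not guarantee that every timing difference is removed.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # illustrative work factor
GENERIC_ERROR = "Invalid username or password."


def hash_password(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)


# A throwaway hash so unknown usernames cost the same hashing work as known ones.
_DUMMY_SALT = os.urandom(16)
_DUMMY_HASH = hash_password("not-a-real-password", _DUMMY_SALT)


def login(users: dict[str, tuple[bytes, bytes]], username: str, password: str) -> tuple[bool, str]:
    """Return (success, message) without revealing whether the username exists."""
    record = users.get(username)
    salt, expected = record if record is not None else (_DUMMY_SALT, _DUMMY_HASH)
    matches = hmac.compare_digest(hash_password(password, salt), expected)
    if record is not None and matches:
        return True, "Login successful."
    return False, GENERIC_ERROR
```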

User enumeration can also occur through publicly accessible features such as password recovery systems, profile pages, or error messages. These features may inadvertently confirm whether a username exists in the system.

Privilege Structures and Role-Based Security Evaluation

Modern web applications often implement role-based access control to manage user permissions. This system assigns different levels of access based on user roles such as administrator, moderator, or standard user. Proper implementation ensures that users can only access functions relevant to their role.

Security testing in this area focuses on identifying whether these role boundaries are enforced correctly. In some cases, users may be able to access restricted functions by manipulating requests or exploiting misconfigurations. These issues often arise when access control checks are not consistently applied across all application components.
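Consistent enforcement is easier when every handler goes through the same check rather than implementing its own. A minimal sketch using a decorator, assuming the caller's role is passed as the first argument. The `ROLE_PERMISSIONS` table, the `Forbidden` exception, and `delete_user` are hypothetical. Unknown roles receive no permissions, so access is denied by default.

```python
from functools import wraps

# Hypothetical role -> permission table.
ROLE_PERMISSIONS = {
    "admin": {"view_reports", "delete_user"},
    "moderator": {"view_reports"},
    "user": set(),
}


class Forbidden(Exception):
    """Raised when the caller's role lacks the required permission."""


def requires(permission: str):
    """Decorator that checks the permission before the handler body runs."""
    def decorator(handler):
        @wraps(handler)
        def wrapper(role: str, *args, **kwargs):
            # Unknown roles get no permissions (deny by default).
            if permission not in ROLE_PERMISSIONS.get(role, set()):
                raise Forbidden(f"role {role!r} lacks {permission!r}")
            return handler(role, *args, **kwargs)
        return wrapper
    return decorator


@requires("delete_user")
def delete_user(role: str, target: str) -> str:
    return f"deleted {target}"
```

Testing then focuses on finding handlers that were never wrapped, which is where inconsistent checks usually hide.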

Privilege escalation occurs when a user gains access to functions or data beyond their intended permission level. This can happen through direct exploitation or through logical flaws in the application’s design. Identifying these weaknesses requires a detailed understanding of how roles are assigned and validated.

Service Interaction Analysis and Backend Dependency Mapping

Web applications often rely on multiple backend services such as databases, authentication servers, and file storage systems. Understanding how these components interact is essential for identifying security weaknesses that may not be visible at the application level.

Service interaction analysis involves mapping how data flows between different system components. For example, a user login request may be processed by a web server, validated by an authentication service, and verified against a database. Each of these steps introduces potential points of failure.

Backend dependency mapping helps identify which services are critical to application functionality. If one service is compromised or misconfigured, it may impact the entire application. This layered understanding is essential for evaluating system resilience.
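Dependency mapping can be represented as a small directed graph. Walking the edges in reverse then shows everything affected when one component fails or is compromised. The component names and edges below are hypothetical.

```python
from collections import deque

# Hypothetical map: component -> components it depends on.
DEPENDS_ON = {
    "web_app": ["auth_service", "database", "file_storage"],
    "auth_service": ["directory", "database"],
    "reporting": ["database"],
    "database": [],
    "file_storage": [],
    "directory": [],
}


def affected_by(component: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every component that directly or indirectly depends on `component`."""
    # Invert the edges: dependency -> components that rely on it.
    dependents: dict[str, list[str]] = {}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(service)
    # Breadth-first walk over the inverted graph.
    affected: set[str] = set()
    queue = deque([component])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected
```

Here a problem in `directory` reaches `web_app` indirectly through `auth_service`, a relationship that is easy to miss when components are reviewed one at a time.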

Early Exploitation Readiness and Vulnerability Correlation

At the end of this phase, security testers begin correlating all gathered information to identify potential exploitation opportunities. This involves connecting network data, service information, authentication behavior, and application structure into a unified model.

Vulnerability correlation is not about immediate exploitation but about identifying relationships between weaknesses. For example, a weak authentication system combined with an exposed service interface may indicate a higher-risk entry point. Similarly, user enumeration combined with weak password policies may suggest potential credential compromise risks.
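The two combinations described above can be expressed as simple correlation rules over per-host findings. This is a sketch. The finding labels, host names, and the `CORRELATION_RULES` list are hypothetical, and a real assessment would use a richer rule set.

```python
# Hypothetical correlation rules: (findings that must all be present, combined risk label).
CORRELATION_RULES = [
    ({"user_enumeration", "weak_password_policy"}, "credential compromise risk"),
    ({"weak_authentication", "exposed_admin_interface"}, "high-risk entry point"),
]


def correlate(findings_by_host: dict[str, set[str]]) -> dict[str, list[str]]:
    """Return, per host, the combined risks whose required findings are all present."""
    results = {}
    for host, findings in findings_by_host.items():
        matched = [label for required, label in CORRELATION_RULES if required <= findings]
        if matched:
            results[host] = matched
    return results
```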

This stage prepares the foundation for more advanced testing in later phases, where actual exploitation techniques and system interaction methods are explored in greater depth.

Transitioning from Enumeration to Exploitation Strategy

After completing reconnaissance and deep enumeration, the security testing process shifts into a more advanced phase where identified weaknesses are evaluated for real-world impact. This stage focuses on understanding whether discovered vulnerabilities can be actively exploited and what level of system control they may provide. The transition from passive analysis to active testing is a critical step because it separates theoretical vulnerabilities from practical security risks.

In Kali Linux environments, exploitation is supported by a wide range of tools designed to test known vulnerabilities, misconfigurations, and logic flaws. However, effective exploitation is not simply about running tools. It requires understanding the context of discovered weaknesses, the structure of the target system, and the potential consequences of interacting with specific services. The goal is to validate security issues in a controlled manner and determine their severity.

Exploitation also involves prioritization. Not every weakness identified during earlier phases is equally important. Some may only result in minor information disclosure, while others may provide full system access. Understanding this hierarchy of risk is essential for a structured security assessment.

Exploit Validation and Vulnerability Confirmation Techniques

Before attempting full exploitation, vulnerabilities must be validated to confirm they are real and reproducible. Many potential issues identified during enumeration may turn out to be false positives or require specific conditions to trigger. Validation ensures that security testers are working with accurate and actionable findings.

This process often involves testing how services respond to carefully crafted inputs or modified requests. If a system behaves unexpectedly or reveals sensitive information, it may indicate a deeper underlying vulnerability. Validation also helps determine whether security controls are in place but misconfigured, or whether they are absent.

In many cases, vulnerabilities exist not because systems lack security features, but because those features are inconsistently applied. For example, input validation may be enforced on one endpoint but not another, or authentication checks may be bypassed through alternative access paths. Identifying these inconsistencies is a key part of exploitation readiness.

Exploit Mapping and Attack Path Construction

Once vulnerabilities are validated, the next step is to understand how they can be connected into meaningful attack paths. An attack path is a sequence of steps that leads from an initial weakness to a higher level of system access. This may involve chaining multiple vulnerabilities together or combining weak configurations with exposed services.

Exploit mapping focuses on identifying relationships between different components of a system. For example, a web application vulnerability may provide access to backend credentials, which in turn allow access to a database. Similarly, a low-privilege user account may be used to escalate privileges if additional weaknesses exist in the system.
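Attack paths can be modelled as a graph whose nodes are levels of access and whose edges are the weaknesses that move an attacker between them. A breadth-first search then finds the shortest chain. The graph below is a hypothetical encoding of the examples in the paragraph above. The states and weakness descriptions are illustrative, not findings from a real system.

```python
from collections import deque
from typing import Optional

# Hypothetical graph: access state -> [(next state, weakness that enables the step)].
ATTACK_GRAPH = {
    "internet": [("web_app", "web application vulnerability")],
    "web_app": [("backend_credentials", "credentials readable from app config"),
                ("low_priv_account", "command execution as app user")],
    "backend_credentials": [("database", "credentials accepted by database")],
    "low_priv_account": [("root", "misconfigured permissions")],
}

Step = tuple[str, str, str]  # (from state, weakness, to state)


def shortest_attack_path(graph: dict[str, list[tuple[str, str]]],
                         start: str, goal: str) -> Optional[list[Step]]:
    """Breadth-first search for the fewest-step chain from start to goal."""
    previous: dict[str, Optional[tuple[str, str]]] = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            break
        for next_state, weakness in graph.get(node, []):
            if next_state not in previous:
                previous[next_state] = (node, weakness)
                queue.append(next_state)
    if goal not in previous:
        return None  # goal unreachable with the known weaknesses
    # Walk the predecessor links back from the goal, then reverse.
    steps: list[Step] = []
    node = goal
    while previous[node] is not None:
        prior, weakness = previous[node]
        steps.append((prior, weakness, node))
        node = prior
    return list(reversed(steps))
```

Removing a single edge, for example by fixing the readable configuration file, and rerunning the search shows whether that remediation actually breaks the path.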

This stage is critical because individual vulnerabilities often do not represent full compromise on their own. Instead, security risks emerge when multiple weaknesses interact in unexpected ways. Mapping these relationships helps prioritize remediation efforts and understand real-world impact.

Remote Exploitation Techniques and Service-Level Weaknesses

Remote exploitation involves targeting services that are accessible over a network. These services may include web applications, file transfer systems, remote administration interfaces, or database connections. If these services contain vulnerabilities, they may allow unauthorized actions or system access.

Service-level weaknesses often arise from outdated software, misconfigurations, or insecure default settings. In some cases, services may expose debugging interfaces or administrative functions that were never intended for public access. These exposures create opportunities for exploitation if they are discovered and validated.

Remote exploitation requires careful analysis of service behavior, including how it handles unexpected input, how it manages sessions, and how it enforces authentication. Even small inconsistencies in these areas can lead to significant security risks when properly understood.

Payload Delivery and System Interaction Analysis

In exploitation testing, payloads are used to interact with vulnerable systems in a controlled way. A payload is a structured input designed to trigger specific behavior in a target application or service. This behavior may include revealing system information, executing commands, or altering application logic.

Payload delivery must be carefully aligned with the target environment. Different systems interpret input differently depending on the operating system, service type, and configuration. Understanding these differences is essential for successful testing.

System interaction analysis involves observing how the target responds after payload execution. This includes monitoring changes in system behavior, response messages, and service availability. These observations help determine whether a vulnerability is exploitable and what level of control it may provide.

Post-Exploitation Environment Analysis and System Control Evaluation

Once initial access is achieved, the focus shifts to post-exploitation analysis. This phase involves understanding the extent of control gained over the target system and identifying additional opportunities for expansion within the environment.

Post-exploitation analysis begins with system enumeration from an internal perspective. Unlike earlier external reconnaissance, this stage provides direct access to system-level information. This includes user accounts, process lists, network connections, and file system structures.
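On Linux systems, a first look at user accounts usually starts with `/etc/passwd`, whose lines have seven colon-separated fields (name, password placeholder, UID, GID, comment, home directory, shell). A minimal sketch that flags accounts with UID 0 and accounts with an interactive shell. The set of non-login shells is an illustrative assumption, and an empty shell field is treated as interactive because it defaults to `/bin/sh`.

```python
NON_LOGIN_SHELLS = {"/usr/sbin/nologin", "/sbin/nologin", "/bin/false", "/usr/bin/false"}


def summarize_passwd(passwd_text: str) -> dict[str, list[str]]:
    """Flag UID 0 accounts and accounts with an interactive login shell."""
    summary: dict[str, list[str]] = {"uid_zero": [], "interactive": []}
    for line in passwd_text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split(":")
        if len(fields) != 7:  # name:password:UID:GID:GECOS:home:shell
            continue
        name, _password, uid, _gid, _gecos, _home, shell = fields
        if uid == "0":
            summary["uid_zero"].append(name)
        if shell not in NON_LOGIN_SHELLS:  # an empty shell field means /bin/sh
            summary["interactive"].append(name)
    return summary
```

On a live system this would be called as `summarize_passwd(open("/etc/passwd").read())`. Any UID 0 account other than `root` deserves a closer look.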

The goal is to evaluate how deeply the system has been compromised. Limited access may restrict visibility to a single application or user account, while deeper access may reveal administrative privileges or access to multiple connected systems.

Privilege Escalation Path Identification

Privilege escalation occurs when a user or process gains higher levels of access than originally intended. This is one of the most important stages in security testing because it determines whether limited access can be transformed into full system control.

Privilege escalation paths may exist due to misconfigured permissions, outdated software components, or insecure system configurations. In some cases, applications may run with elevated privileges while still accepting input from lower-level users. This creates opportunities for escalation if input handling is not properly secured.
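Two classic permission misconfigurations are setuid files (which run with their owner's privileges) and world-writable files. A minimal audit sketch, similar in spirit to `find / -perm -4000`, that walks a directory tree using only the standard library. It examines regular files only and skips anything it cannot stat.

```python
import os
import stat


def risky_files(root: str) -> list[tuple[str, str]]:
    """Walk `root` and report regular files that are setuid or world-writable."""
    findings = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                mode = os.lstat(path).st_mode  # lstat: do not follow symlinks
            except OSError:
                continue  # vanished or unreadable; skip it
            if not stat.S_ISREG(mode):
                continue
            if mode & stat.S_ISUID:
                findings.append((path, "setuid"))
            if mode & stat.S_IWOTH:
                findings.append((path, "world-writable"))
    return sorted(findings)
```

A setuid file is not a vulnerability by itself. It becomes an escalation path when the program it runs accepts input from lower-privileged users unsafely, or when the file itself is writable by them.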

Identifying escalation paths requires a detailed understanding of system architecture, including how permissions are assigned and enforced. It also involves analyzing how processes interact with each other and whether any trust relationships exist that could be abused.

Internal Network Pivoting and System Expansion Techniques

In more complex environments, a single compromised system may provide access to internal networks that are not directly exposed externally. Pivoting refers to the process of using one compromised system as a gateway to access additional systems within the same network.

Internal network analysis often reveals services that were not visible during initial reconnaissance. These may include internal databases, administrative interfaces, or development environments. Because these systems are not publicly exposed, they may have weaker security controls or outdated configurations.

Pivoting requires careful mapping of internal connections and understanding how systems communicate with each other. Once internal pathways are identified, additional testing can be performed to evaluate the security of connected systems.

Persistence Evaluation and Access Stability Analysis

In advanced security testing scenarios, it is important to evaluate whether access to a system can be maintained over time. Persistence refers to the ability to retain access even after system changes or restarts.

Persistence analysis involves understanding how systems manage authentication, session states, and user accounts. In some cases, vulnerabilities may allow the creation of persistent access points that remain active even after initial entry points are closed.

Evaluating persistence is important for understanding long-term risk. Systems that allow sustained unauthorized access represent a higher level of security concern than those where access is temporary or easily revoked.

Data Exposure and Sensitive Information Discovery

Once access is established, one of the key concerns is identifying sensitive data that may be exposed within the system. This includes configuration files, credential storage, internal communications, and application data.

Data exposure often occurs due to improper access controls or insecure storage practices. Sensitive information may be stored in plain text, embedded within application files, or accessible through misconfigured services.
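Plaintext secrets in configuration and application files can be located with simple pattern matching, which is the same basic idea dedicated secret scanners use. The patterns below are an illustrative subset: a password assignment, an AWS access key ID (long-term keys begin with `AKIA`), and a private key header. Expect both false positives and misses.

```python
import re

# An illustrative subset of secret patterns.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "password_assignment": re.compile(r"(?i)(?:password|passwd|pwd)\s*[:=]\s*\S+"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern label) for every line that matches a pattern."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, label))
    return hits
```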

Identifying exposed data is critical for understanding the full impact of a security compromise. Even if initial access is limited, exposed data can often be used to expand access or escalate privileges within the environment.

System Hardening Observation and Defensive Weakness Analysis

Beyond exploitation, security testing also involves analyzing how well systems are protected against attacks. This includes evaluating defensive mechanisms such as firewalls, intrusion detection systems, authentication policies, and logging systems.

System hardening observation focuses on identifying gaps in these defenses. For example, inconsistent logging may prevent detection of unauthorized activity, while weak firewall rules may allow unnecessary service exposure. Authentication systems may also lack sufficient enforcement of complexity or multi-factor requirements.
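Authentication hardening gaps often show up directly in service configuration. The sketch below flags a few risky global settings in OpenSSH's `sshd_config` text. It relies on two documented behaviours: keywords are case-insensitive, and the first value obtained for a keyword is the one used. It stops at the first `Match` block because later settings apply only conditionally, and it does not model `Include` files or built-in defaults for keywords that are absent. The choice of settings is an illustrative subset.

```python
# keyword (lower-case) -> (risky values, finding message); an illustrative subset.
RISKY_SETTINGS = {
    "permitrootlogin": ({"yes"}, "PermitRootLogin yes: root may log in over SSH"),
    "passwordauthentication": ({"yes"}, "PasswordAuthentication yes: password logins are enabled"),
    "permitemptypasswords": ({"yes"}, "PermitEmptyPasswords yes: empty passwords are accepted"),
}


def audit_sshd_config(text: str) -> list[str]:
    """Flag risky global settings in sshd_config text (Match blocks are not evaluated)."""
    findings = []
    seen = set()
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and comment lines
        parts = line.split(None, 1)
        keyword = parts[0].lower()  # keywords are case-insensitive
        if keyword == "match":
            break  # everything after this applies only conditionally
        if len(parts) < 2 or keyword in seen:
            continue  # sshd keeps the first value it reads for a keyword
        seen.add(keyword)
        value = parts[1].strip().lower()  # compared case-insensitively to be conservative
        risky_values, message = RISKY_SETTINGS.get(keyword, (set(), ""))
        if value in risky_values:
            findings.append(message)
    return findings
```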

Understanding defensive weaknesses is essential for providing meaningful security recommendations. It helps organizations prioritize improvements based on actual risk rather than theoretical concerns.

Integrated Security Assessment and Risk Interpretation

The final stage of advanced testing involves integrating all findings into a unified understanding of system security. This includes combining reconnaissance data, enumeration results, exploitation outcomes, and post-exploitation analysis.

Risk interpretation focuses on understanding how different vulnerabilities interact and what their combined impact may be. A single weakness may not represent a critical issue on its own, but multiple weaknesses combined can create significant exposure.

This integrated view is essential for an accurate security assessment. It allows testers to move beyond isolated findings and focus on real-world attack scenarios that could be used against the system in practice.

At this stage, the overall security posture of the environment becomes clear, including its strengths, weaknesses, and areas requiring improvement.

Conclusion

Kali Linux remains one of the most widely recognized platforms for security testing because it consolidates a vast range of offensive security tools into a single, structured environment. The progression from reconnaissance to exploitation and post-exploitation demonstrates a fundamental principle of modern cybersecurity work: effective security assessment is not about isolated tools, but about how information flows between stages of analysis. Each phase builds on the previous one, gradually transforming raw technical data into a structured understanding of system behavior, weaknesses, and risk exposure.

At the beginning of any security assessment, the most important objective is visibility. Without accurate visibility into systems, services, and network structures, deeper analysis becomes unreliable. This is why reconnaissance and enumeration form the foundation of all penetration testing workflows. Identifying live hosts, open ports, and exposed services provides the initial map of an environment. However, what makes this stage powerful is not just the discovery itself, but the interpretation of that discovery. A single open port is not inherently a vulnerability, but it becomes meaningful when combined with service identification, version analysis, and configuration context. This layered interpretation is what allows security professionals to move from simple observation to informed analysis.
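In layered interpretation, an open port only becomes meaningful together with service and version information. That step can be sketched as a small classification function. The observations and the minimum-version baseline below are hypothetical. `parse_version` assumes purely numeric dotted versions, so strings such as `9.6p1` would need extra handling.

```python
# Hypothetical observations, as might be recorded from a service scan.
observations = [
    {"port": 22, "service": "ssh", "version": "9.6"},
    {"port": 8080, "service": "http", "version": "2.4.49"},
    {"port": 443, "service": "https", "version": None},
    {"port": 3306, "service": "mysql", "version": "8.0.36"},
]

# Hypothetical baseline of minimum acceptable versions per service.
MIN_VERSIONS = {"ssh": "9.0", "http": "2.4.51"}


def parse_version(v: str) -> tuple[int, ...]:
    # "2.4.49" -> (2, 4, 49); tuples compare element by element.
    return tuple(int(part) for part in v.split("."))


def interpret(obs: dict) -> str:
    """Combine port, service, and version into a single interpretation."""
    if obs["version"] is None:
        return "open; version unknown, needs manual follow-up"
    minimum = MIN_VERSIONS.get(obs["service"])
    if minimum is None:
        return "open; no baseline for this service"
    if parse_version(obs["version"]) < parse_version(minimum):
        return f"open; version {obs['version']} below baseline {minimum}"
    return "open; version meets baseline"
```

All four ports are "open," but only the one on 8080 is flagged as below baseline. The unknown-version service on 443 is marked for follow-up rather than being treated as safe.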

As the assessment progresses into deeper enumeration and interaction with services, the focus shifts from what is exposed to how it behaves. Web applications, authentication systems, and network services often reveal subtle inconsistencies when they are tested under structured conditions. These inconsistencies can include differences in response behavior, error handling patterns, session management flaws, or unexpected information disclosure. While each of these issues may appear minor in isolation, they often represent underlying weaknesses in system design or configuration. The ability to recognize these patterns is what separates surface-level scanning from meaningful security evaluation.

Authentication systems play a particularly important role in this phase because they serve as the primary barrier between external users and internal resources. Weaknesses in authentication are among the most commonly exploited issues in real-world environments, not because they are complex, but because they are frequently underestimated. Systems that rely on users to choose strong passwords, without enforcing technical controls such as account lockout, rate limiting, or multi-factor authentication, remain vulnerable to predictable attacks such as password guessing. This is why structured testing of authentication behavior is essential. The goal is not to bypass security but to measure how effectively it resists common failure scenarios such as repeated login attempts, credential reuse, or predictable password selection.
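The sketch below is a minimal model of the lockout behavior that authentication testing measures. `LoginGuard`, its parameters, and the lockout rule are hypothetical. Real systems may instead use rate limiting, progressive delays, or CAPTCHA challenges. The injectable `clock` exists only so the behavior can be checked deterministically.

```python
import time


class LoginGuard:
    """Hypothetical account-lockout model, for illustration only.

    After `max_failures` consecutive failed attempts, the account is locked
    for `lockout_seconds`; while locked, even a correct password is rejected.
    """

    def __init__(self, max_failures=5, lockout_seconds=900, clock=time.monotonic):
        self.max_failures = max_failures
        self.lockout_seconds = lockout_seconds
        self.clock = clock      # injectable so tests can control time
        self.failures = {}      # user -> consecutive failed attempts
        self.locked_until = {}  # user -> time at which the lock expires

    def attempt(self, user, password_ok):
        now = self.clock()
        until = self.locked_until.get(user)
        if until is not None and now < until:
            return "locked"     # the password result is ignored while locked
        if password_ok:
            self.failures[user] = 0
            return "ok"
        self.failures[user] = self.failures.get(user, 0) + 1
        if self.failures[user] >= self.max_failures:
            # Start the lockout window and reset the failure counter.
            self.locked_until[user] = now + self.lockout_seconds
            self.failures[user] = 0
        return "denied"
```

With `max_failures=3`, the fourth and later attempts return `"locked"` until the lockout window expires, even with the correct password. A test against a real system checks the same property: whether repeated failures eventually stop being evaluated.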

Web applications add another layer of complexity because they often combine multiple systems into a single interface. A single application may rely on backend databases, external APIs, authentication services, and file storage systems. This interconnected structure means that a weakness in one component can have cascading effects across the entire system. For example, a misconfigured web interface may expose internal administrative functions, or a poorly validated input field may allow unintended interactions with backend services. Understanding these relationships is critical because modern security risks rarely exist in isolation.
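SQL injection is one common example of a poorly validated input field reaching a backend in an unintended way. The sketch below uses Python's built-in `sqlite3` module with an in-memory database and hypothetical table contents. It contrasts two approaches: building the query by string formatting, and passing the input as a bound parameter.

```python
import sqlite3


def make_demo_db():
    # In-memory database with hypothetical sample rows.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "user")],
    )
    return conn


def lookup_unsafe(conn, name):
    # Input is pasted into the SQL text, so quotes in `name` can change the query.
    return conn.execute(
        f"SELECT name, role FROM users WHERE name = '{name}'"
    ).fetchall()


def lookup_safe(conn, name):
    # Placeholder binding: the driver passes `name` as data, never as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()
```

Consider the input `x' OR '1'='1`. `lookup_unsafe` returns every row because the quote characters rewrite the `WHERE` clause. `lookup_safe` returns no rows because the whole string is compared as a literal name.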

As testing moves into exploitation, the nature of analysis changes from observation to validation. At this stage, the goal is to determine whether identified weaknesses can be actively leveraged in a controlled manner. Exploitation is not simply about gaining access; it is about confirming the real-world impact of vulnerabilities. A theoretical weakness becomes significant only when it can be demonstrated to affect system integrity, confidentiality, or availability. This distinction is important because it ensures that security assessments remain grounded in practical risk rather than hypothetical scenarios.

However, exploitation alone does not represent the end of the analysis process. Once access is achieved, post-exploitation evaluation becomes essential. This stage reveals how much control has actually been gained and what additional systems or data may now be exposed. Internal system analysis often uncovers information that was not visible externally, including user accounts, configuration details, running processes, and internal network structures. This expanded visibility is critical for understanding the full scope of a security incident or vulnerability chain.

Privilege escalation is one of the most important concepts within post-exploitation analysis because it determines whether limited access can be transformed into broader control. Many systems contain layered permission structures intended to restrict user actions. However, misconfigurations or design flaws can sometimes allow users to bypass these restrictions. Examples include a script that runs with administrative privileges but can be modified by any user. When this occurs, the impact of an initial vulnerability increases significantly. A low-level access point may evolve into full administrative control if escalation paths exist. This is why security testing does not stop at initial access but continues into deeper system analysis.
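Two classic escalation preconditions on Linux-like systems are files with the setuid/setgid bits set and files that any user can modify. The audit sketch below uses only the standard library. It assumes POSIX permission semantics, and `risky_permissions` is a hypothetical helper name. It flags candidates for review rather than proving exploitability. Many setuid binaries, such as `passwd`, are expected, so results need manual review.

```python
import os
import stat


def risky_permissions(root):
    """Walk `root` and flag regular files whose permission bits are common
    privilege-escalation preconditions: setuid/setgid, or world-writable."""
    findings = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                mode = os.lstat(path).st_mode
            except OSError:
                continue  # file vanished or cannot be inspected; skip it
            if not stat.S_ISREG(mode):
                continue  # only regular files; skip symlinks, sockets, etc.
            if mode & (stat.S_ISUID | stat.S_ISGID):
                findings.append((path, "setuid/setgid"))
            if mode & stat.S_IWOTH:
                findings.append((path, "world-writable"))
    return findings
```

Running `risky_permissions("/usr/local")`, for example, lists candidate files under that tree. Each one still has to be checked against what it does and who executes it.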

Another important aspect of advanced security evaluation is internal network understanding. Many environments are segmented into external-facing systems and internal-only resources. When one system is compromised, it may act as a gateway to other parts of the network. This concept, often referred to as lateral movement, highlights the importance of internal segmentation and strict access controls. Weak internal boundaries can allow a single vulnerability to propagate across multiple systems, significantly increasing overall risk.
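A simple segmentation check is to look for firewall rules that let external-facing addresses reach internal ranges. The sketch below uses Python's `ipaddress` module. The zones (built on reserved ranges such as the documentation block `192.0.2.0/24`) and the rule format are hypothetical.

```python
import ipaddress

# Hypothetical zones, using reserved documentation/private ranges.
DMZ = ipaddress.ip_network("192.0.2.0/24")       # external-facing systems
INTERNAL = ipaddress.ip_network("10.10.0.0/16")  # internal-only resources

# Hypothetical allow rules; port None means "any port".
RULES = [
    {"src": "192.0.2.10/32", "dst": "10.10.5.20/32", "port": 5432},
    {"src": "192.0.2.0/24", "dst": "10.10.0.0/16", "port": None},
    {"src": "198.51.100.0/24", "dst": "192.0.2.0/24", "port": 443},
]


def dmz_to_internal(rules):
    """Return the rules that allow traffic from the DMZ into the internal zone."""
    flagged = []
    for rule in rules:
        src = ipaddress.ip_network(rule["src"])
        dst = ipaddress.ip_network(rule["dst"])
        # overlaps() is True if the two networks share any address.
        if src.overlaps(DMZ) and dst.overlaps(INTERNAL):
            flagged.append(rule)
    return flagged
```

The first two rules are flagged. The narrow web-to-database rule may be intentional. The second rule, however, lets any DMZ host reach the entire internal range on any port. That is the kind of weak boundary that allows one compromised system to become a gateway to the rest of the network.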

Data exposure also becomes a key concern during advanced analysis. Once access is established, systems may reveal sensitive information such as configuration files, authentication credentials, or internal communication logs. Even if initial access is limited, exposed data can often be used to expand access or uncover additional vulnerabilities. This reinforces the importance of secure data storage practices and proper access restrictions across all system components.
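Defenders can look for the same exposure before an attacker does by scanning configuration text for credential-like assignments. This is a heuristic sketch, and the regular expression and key names are assumptions. It will produce false positives (for example, a key named `tokenizer`) and miss secrets stored under other names. It reports only line numbers and keys, so the report itself does not re-expose the values.

```python
import re

# Heuristic pattern (an assumption, not a standard): a key containing a
# credential-like word at the start of a line, followed by "=" or ":" and a value.
SECRET_PATTERN = re.compile(
    r"^[ \t]*(?P<key>[\w.-]*(?:password|passwd|secret|api_key|token)[\w.-]*)"
    r"[ \t]*[=:][ \t]*\S+",
    re.IGNORECASE | re.MULTILINE,
)


def find_secrets(text):
    """Return (line_number, key) pairs for lines that look like hard-coded secrets.

    Values are deliberately left out so the report does not re-expose them.
    """
    hits = []
    for match in SECRET_PATTERN.finditer(text):
        # Line number = newlines before the match start, plus one.
        line_no = text.count("\n", 0, match.start()) + 1
        hits.append((line_no, match.group("key")))
    return hits
```

Consider a file containing `DB_PASSWORD=hunter2` on line 2 and `api_key: abc123` on line 4. It yields `[(2, "DB_PASSWORD"), (4, "api_key")]`.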

Throughout all stages of testing, one of the most important outcomes is risk interpretation. Security findings are only meaningful when they are understood in context. A single vulnerability may not represent a critical issue on its own, but when combined with other weaknesses, it can contribute to a larger attack path. This interconnected view of risk allows organizations to prioritize remediation efforts based on real-world impact rather than isolated technical severity.

Ultimately, Kali Linux serves as a framework for structured thinking in security analysis rather than just a collection of tools. Its value lies in enabling a methodical approach to understanding systems from multiple perspectives: external exposure, internal behavior, and post-access impact. The tools themselves are only as effective as the methodology behind their use.

The broader lesson from studying these tools and techniques is that cybersecurity is fundamentally about understanding systems in depth rather than attempting to break them in isolation. Each stage of analysis builds a more complete picture of how systems operate, where they are vulnerable, and how those vulnerabilities can interact. When approached correctly, this process leads not only to the identification of weaknesses but also to a deeper understanding of how to design and maintain more resilient systems in the future.