Kubernetes has become a defining technology in the evolution of modern software development, influencing how applications are built, deployed, and maintained across diverse environments. Its rise is closely tied to the increasing reliance on cloud-native architectures, where scalability, flexibility, and automation are no longer optional but essential. As organizations move away from traditional monolithic systems toward microservices-based designs, Kubernetes provides the orchestration capabilities needed to manage these distributed systems effectively.

At a fundamental level, Kubernetes simplifies the complexities associated with running containerized applications at scale. Containers themselves offer a lightweight and portable way to package applications along with their dependencies, ensuring consistency across development, testing, and production environments. However, managing large numbers of containers manually quickly becomes impractical. Kubernetes addresses this challenge by automating deployment, scaling, load balancing, and recovery processes, enabling teams to maintain high levels of efficiency and reliability.

The adoption of Kubernetes has been driven by its ability to abstract infrastructure complexities. Developers describe how applications should run (for example, which container image to use and how many replicas to keep) in declarative configurations, and Kubernetes controllers continuously compare the actual state of the cluster with that desired state and act to close any gap. This approach reduces the need for manual intervention and minimizes the risk of human error, making it particularly valuable in large-scale environments where consistency is critical.
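To make the desired-state idea concrete, here is a toy Python model of a single reconcile step. It is a simplified sketch, not Kubernetes source code: the `reconcile` function and the pod names are hypothetical, and real controllers watch the API server, retry on errors, and pick which pods to remove using their own rules.

```python
def reconcile(desired_replicas: int, running_pods: list[str]) -> tuple[int, list[str]]:
    """Compare desired vs. observed state and return corrective actions.

    Hypothetical toy model of a controller's reconcile step. Returns
    (number_of_pods_to_create, names_of_pods_to_delete).
    """
    missing = desired_replicas - len(running_pods)
    if missing > 0:
        # Observed state falls short of the desired state: create the difference.
        return missing, []
    # Observed state meets or exceeds the desired state: delete any surplus.
    return 0, running_pods[desired_replicas:]


# A pod crashed, so only one of three desired replicas is running.
print(reconcile(3, ["web-a"]))                    # (2, [])
# The desired count was lowered to one replica.
print(reconcile(1, ["web-a", "web-b", "web-c"]))  # (0, ['web-b', 'web-c'])
```

Running the same comparison over and over, as a controller loop does, converges on the desired count no matter why the observed state drifted, whether a pod crashed or someone deleted it by hand.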

Another factor contributing to Kubernetes’ popularity is its flexibility. It is not tied to a specific cloud provider or infrastructure setup, allowing organizations to deploy applications across multiple environments, including on-premises data centers, public clouds, and hybrid systems. This portability has made Kubernetes an attractive choice for organizations seeking to avoid vendor lock-in while maintaining control over their infrastructure.

The growing ecosystem surrounding Kubernetes has further accelerated its adoption. A wide range of tools and extensions has emerged to enhance its capabilities, covering areas such as monitoring, security, networking, and continuous integration. This ecosystem enables organizations to tailor Kubernetes to their specific needs, creating highly customized and efficient workflows.

As Kubernetes becomes more deeply integrated into development processes, the demand for skilled professionals continues to rise. Organizations are actively seeking individuals who can design, deploy, and manage applications within Kubernetes environments. This demand is not limited to large enterprises; startups and mid-sized companies are also embracing Kubernetes as they scale their operations.

For developers, learning Kubernetes represents an opportunity to stay relevant in a rapidly changing industry. Traditional development skills are no longer sufficient on their own, as modern applications require an understanding of how they interact with infrastructure. Kubernetes bridges this gap by providing a platform where development and operations converge, aligning with the principles of DevOps.

The increasing importance of Kubernetes has also influenced hiring practices. Employers are placing greater emphasis on practical experience with container orchestration, often prioritizing candidates who can demonstrate hands-on skills. This shift has made certifications an important tool for validating expertise, providing a standardized way to assess a candidate’s capabilities.

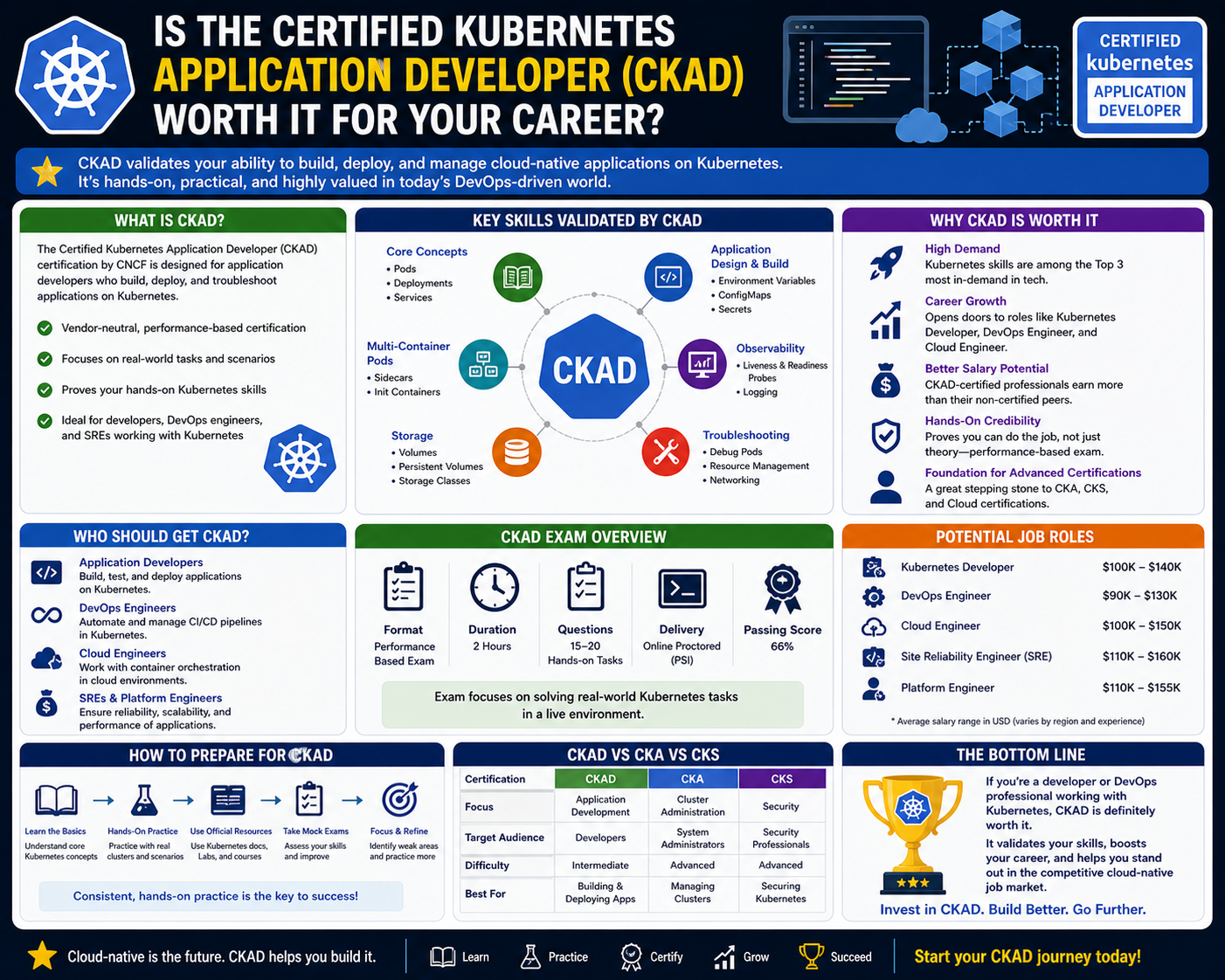

In this context, certifications focused on Kubernetes have gained significant traction. They offer a structured way for professionals to learn and demonstrate their skills, helping them stand out in a competitive job market. Among these certifications, the Certified Kubernetes Application Developer has emerged as a key credential for those involved in application development within Kubernetes environments.

Understanding the broader impact of Kubernetes is essential for appreciating the value of such certifications. It is not merely a tool but a foundational technology that is reshaping how software is developed and delivered. As organizations continue to adopt Kubernetes, the need for professionals who can work effectively within this ecosystem will only continue to grow.

What the Certified Kubernetes Application Developer Certification Represents

The Certified Kubernetes Application Developer certification was created to establish a clear benchmark for developers working with Kubernetes. It focuses specifically on the skills required to design, build, and manage applications within a Kubernetes environment, distinguishing it from other certifications that may focus more on infrastructure or administrative tasks.

This certification reflects a shift toward practical, skills-based validation. Rather than emphasizing theoretical knowledge, it requires candidates to demonstrate their ability to perform real-world tasks. This approach aligns with the needs of modern organizations, which prioritize hands-on expertise over abstract understanding.

One of the defining aspects of this certification is its focus on application-level responsibilities. Candidates are expected to understand how to work with Kubernetes resources to deploy and manage applications effectively. This includes creating and configuring components, ensuring that applications run smoothly, and addressing issues as they arise.

The certification also emphasizes the importance of working with configuration data. In Kubernetes, applications often rely on external configuration to operate correctly. Being able to manage this data efficiently is a critical skill, as it allows developers to adapt applications to different environments without modifying the underlying code.

Another important aspect is the ability to work with multi-container architectures. Modern applications frequently consist of multiple components that must work together seamlessly. The certification ensures that candidates can design and manage these complex setups, enabling them to build more sophisticated and scalable applications.

Observability is also a key focus area. Developers must be able to monitor the health and performance of their applications, identify potential issues, and respond to them effectively. This involves implementing mechanisms that provide insight into application behavior, ensuring that problems can be detected and resolved quickly.

The certification is designed to be accessible to individuals who already have some experience with Kubernetes. It is not intended as an entry-level credential but rather as a way to validate and enhance existing skills. Candidates are expected to be familiar with the basics of containerization and have practical experience working in Kubernetes environments.

Earning this certification demonstrates a commitment to professional development and a willingness to invest in acquiring valuable skills. It signals to employers that a candidate has taken the initiative to deepen their understanding of Kubernetes and is capable of applying that knowledge in practical scenarios.

The recognition associated with this certification also adds to its value. It is offered by the Cloud Native Computing Foundation (CNCF) in collaboration with The Linux Foundation, organizations widely respected within the technology community, which gives it credibility with employers around the world. This backing helps keep the certification relevant and valuable over time.

As the technology landscape continues to evolve, certifications like this play an important role in helping professionals keep their skills up to date. They provide a structured learning path and a clear set of objectives, making it easier to navigate the complexities of modern development environments.

Breaking Down the CKAD Exam Format and Expectations

The exam associated with this certification is designed to assess practical skills in a realistic setting. Unlike traditional exams that rely on multiple-choice questions, this exam requires candidates to complete tasks using a live environment. This hands-on approach ensures that candidates are evaluated based on their ability to perform real-world tasks rather than simply recall information.

The exam consists of a series of challenges that must be completed within a limited time frame. Each challenge represents a scenario that a Kubernetes application developer might encounter in their work. These scenarios require candidates to apply their knowledge to solve problems, configure resources, and ensure that applications function as intended.

One of the key characteristics of the exam is its focus on efficiency. Candidates must not only understand how to perform tasks but also be able to do so quickly and accurately. This requires a deep familiarity with Kubernetes tools and commands, as well as the ability to navigate the environment effectively.

The use of a command-line interface is central to the exam. Candidates are expected to interact with Kubernetes primarily through kubectl, the standard Kubernetes command-line tool, which is also how many engineers work with clusters day to day. This emphasis on practical tools reinforces the exam’s focus on real-world applicability.

Another important aspect of the exam is its emphasis on troubleshooting. Candidates must be able to identify and resolve issues that arise during the execution of tasks. This requires a strong understanding of how different components interact and the ability to diagnose problems efficiently.

The exam also tests a candidate’s ability to work with configuration files. These files play a critical role in defining how applications are deployed and managed within Kubernetes. Being able to create, modify, and troubleshoot these configurations is an essential skill for any Kubernetes developer.

Time management is a significant factor in the exam. With multiple tasks to complete within a fixed period, candidates must prioritize their efforts and allocate their time effectively. This adds a layer of complexity, as it requires both technical expertise and strategic thinking.

The performance-based nature of the exam has made it widely respected within the industry. It ensures that individuals who earn the certification have demonstrated their ability to perform practical tasks, making the credential a reliable indicator of skill.

Preparing for this type of exam requires a different approach compared to traditional tests. Candidates must focus on gaining hands-on experience, practicing real-world scenarios, and becoming comfortable with the tools and techniques used in Kubernetes environments. This often involves setting up practice environments and experimenting with different configurations.

The exam’s structure also encourages continuous learning. As candidates prepare, they are likely to encounter a wide range of scenarios that deepen their understanding of Kubernetes. This process not only prepares them for the exam but also enhances their overall skill set.

Core Knowledge Areas Covered in the CKAD Certification

The certification covers a comprehensive range of topics that are essential for Kubernetes application development. These topics are organized into key areas that reflect the different aspects of working with Kubernetes.

One of the primary areas is application design and build. Candidates must be able to create and manage the fundamental building blocks of Kubernetes, such as pods and Deployments, ensuring that applications are deployed correctly and function as expected, and they must understand how these building blocks interact.

Configuration management is another critical area. Candidates must demonstrate their ability to manage application settings with resources such as ConfigMaps and Secrets, and to ensure that applications have access to the resources they need, including appropriate CPU and memory requests and limits.

The ability to work with multi-container setups is also essential. Candidates must understand how to design and manage applications that consist of multiple components, ensuring that they operate together seamlessly. This requires a deep understanding of how containers interact within a Kubernetes environment.

Observability plays a key role in maintaining application health. Candidates must be able to configure liveness and readiness probes, read container logs, and use these signals to gain insight into application behavior. This enables them to detect issues early and respond effectively.

Application deployment and management are also central to the certification. Candidates must understand how to update applications, roll back changes, and ensure that deployments are carried out smoothly. This involves working with rolling updates as well as strategies such as blue/green and canary deployments, and understanding the trade-offs of each.

Networking is another important area, as it determines how applications communicate within the Kubernetes environment. Candidates must understand how to expose applications with Services and how to restrict traffic with NetworkPolicies, ensuring that services can communicate as intended.

Persistent storage is also covered, highlighting the importance of data management in containerized environments. Candidates must be able to request storage through PersistentVolumeClaims and mount it into pods, ensuring that applications retain data even when containers are replaced.

These knowledge areas collectively provide a comprehensive foundation for working with Kubernetes. By covering a wide range of topics, the certification ensures that candidates are well-equipped to handle the challenges of modern application development.

The combination of theoretical understanding and practical application makes this certification particularly valuable. It ensures that candidates not only understand Kubernetes concepts but also know how to apply them effectively in real-world scenarios.

Deep Dive into CKAD Practical Skills and Real-World Application Scenarios

The Certified Kubernetes Application Developer certification is built around one central idea: proving that a developer can work effectively in real Kubernetes environments, not just understand theory. This focus on practical execution is what separates it from many other technology certifications. In real-world cloud-native systems, success depends less on memorizing concepts and more on the ability to solve problems under constraints, configure systems accurately, and understand how distributed components behave under load.

Kubernetes environments are inherently dynamic. Applications are not static deployments but living systems that scale, recover, and evolve continuously. Because of this, CKAD emphasizes scenarios where developers must interact directly with running clusters, diagnose issues, and implement solutions in real time. This mirrors the day-to-day responsibilities of developers working in DevOps-driven organizations, where application reliability and performance depend heavily on proper Kubernetes usage.

A major part of CKAD-level competency involves understanding how workloads behave inside pods. Pods are the smallest deployable units in Kubernetes; each pod groups one or more tightly coupled containers that share a network namespace and can share storage volumes. Developers must understand how to design pods effectively so that applications remain stable and efficient. This includes deciding when multiple containers should share a pod and when they should be separated into independent units.

Real-world application design often requires balancing simplicity with scalability. A poorly designed pod structure can lead to performance bottlenecks, while a well-designed one can significantly improve system reliability. CKAD scenarios often simulate these decisions by requiring candidates to modify existing deployments or troubleshoot misconfigured workloads.

Another important aspect of practical Kubernetes usage is configuration management. Applications rarely operate in isolation; they depend on external configuration values such as environment variables, secrets, and runtime parameters. In Kubernetes, these values are typically held in ConfigMaps (for non-sensitive settings) and Secrets (for sensitive data), which let developers decouple configuration from application code.

This separation is essential for modern DevOps workflows. It allows applications to be deployed across multiple environments without modification. For example, the same application image can run in development, testing, and production environments with different configurations. CKAD emphasizes the ability to manage these configurations efficiently, ensuring that applications remain flexible and portable.
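As a minimal sketch, assuming a hypothetical ConfigMap named `app-config` and a hypothetical image `example.com/web:1.0`, the Python below builds a ConfigMap and a pod that loads its keys as environment variables via `envFrom`, then prints both as a JSON `List` (kubectl accepts JSON manifests as well as YAML). Swapping in a different ConfigMap per environment changes the settings without rebuilding the image.

```python
import json

# Hypothetical ConfigMap with environment-specific, non-sensitive settings.
# ConfigMap data values must be strings.
config_map = {
    "apiVersion": "v1",
    "kind": "ConfigMap",
    "metadata": {"name": "app-config"},
    "data": {"LOG_LEVEL": "debug", "CACHE_TTL_SECONDS": "60"},
}

# Pod whose container receives every ConfigMap key as an environment variable.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {
        "containers": [
            {
                "name": "web",
                "image": "example.com/web:1.0",  # hypothetical image
                "envFrom": [{"configMapRef": {"name": "app-config"}}],
            }
        ]
    },
}

# Wrap both objects in a v1 List so one file can be applied with `kubectl apply -f`.
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [config_map, pod]}, indent=2))
```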

Developers also need to understand how Kubernetes handles updates and rollouts. In traditional systems, updating an application often involves downtime or manual intervention. Kubernetes Deployments use a rolling update strategy by default, gradually replacing pods running the old version with pods running the new one. When readiness probes are configured correctly, traffic only reaches new pods once they are ready, which makes zero-downtime deployments achievable for modern web services.

CKAD-level tasks often involve managing these deployment strategies. Candidates may be required to update applications, roll back changes, or modify deployment behavior to ensure stability. These scenarios reflect real-world challenges where updates must be applied without disrupting user experience.

Networking is another essential area of practical Kubernetes knowledge. In a distributed system, communication between services is fundamental. Kubernetes provides abstractions that simplify networking, but understanding how these abstractions work is crucial for troubleshooting and optimization.

Services in Kubernetes act as stable endpoints that allow communication between pods. They abstract away the dynamic nature of pod IP addresses, ensuring that applications can communicate reliably even as underlying infrastructure changes. CKAD scenarios often test a candidate’s ability to configure services correctly and ensure that applications can be accessed as intended.
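Services find their pods through label selectors. The sketch below models that matching in plain Python; `selects` is a hypothetical helper, and the Service manifest, pod names, and labels are illustrative. It also shows why a typo in a selector is a classic cause of a Service with no endpoints.

```python
def selects(selector: dict[str, str], pod_labels: dict[str, str]) -> bool:
    """True if every key/value pair in the selector appears in the pod's labels."""
    if not selector:
        # A Service without a selector does not pick up pods automatically.
        return False
    return all(pod_labels.get(key) == value for key, value in selector.items())


service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "web"},
    "spec": {"selector": {"app": "web"}, "ports": [{"port": 80, "targetPort": 8080}]},
}

pods = {
    "web-1": {"app": "web", "tier": "frontend"},  # extra labels do not prevent a match
    "web-2": {"app": "web"},
    "db-1": {"app": "db"},
}

endpoints = [name for name, labels in pods.items() if selects(service["spec"]["selector"], labels)]
print(endpoints)  # ['web-1', 'web-2']

# A one-character typo in the selector silently matches nothing.
print([name for name, labels in pods.items() if selects({"app": "wbe"}, labels)])  # []
```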

In addition to internal communication, external exposure of applications is also important. Many applications need to be accessible from outside the Kubernetes cluster. This typically involves a NodePort or LoadBalancer Service, or an Ingress, configured to route external traffic into the cluster safely and efficiently.

Troubleshooting network issues is a common real-world challenge. Misconfigured services, incorrect selectors, or network policy restrictions can all lead to connectivity problems. CKAD emphasizes the ability to identify and resolve these issues quickly using command-line tools and diagnostic techniques.

Storage management is another critical skill area. Unlike stateless applications, stateful systems such as databases require persistent data storage. Kubernetes lets pods request storage through PersistentVolumeClaims, which bind to PersistentVolumes, so that data remains intact even if containers are restarted or rescheduled.

Understanding how storage works in Kubernetes is essential for building reliable applications. Developers must know how to request storage resources, bind them to applications, and ensure data integrity. CKAD scenarios often involve configuring persistent storage or troubleshooting issues related to volume mounting.
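For illustration, here is a minimal PersistentVolumeClaim and a pod that mounts it, built as Python dicts and printed as JSON. The names `data-claim` and `/var/lib/data` and the image are hypothetical, and omitting `storageClassName` assumes the cluster has a default StorageClass that can provision the volume.

```python
import json

# Hypothetical claim for 1 GiB that a single node can mount read-write.
pvc = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "data-claim"},
    "spec": {
        "accessModes": ["ReadWriteOnce"],
        "resources": {"requests": {"storage": "1Gi"}},
    },
}

pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "db"},
    "spec": {
        # The pod-level volume points at the claim by name...
        "volumes": [{"name": "data", "persistentVolumeClaim": {"claimName": "data-claim"}}],
        "containers": [
            {
                "name": "db",
                "image": "example.com/db:1.0",  # hypothetical image
                # ...and the container mounts that volume by its pod-level name.
                "volumeMounts": [{"name": "data", "mountPath": "/var/lib/data"}],
            }
        ],
    },
}

print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [pvc, pod]}, indent=2))
```

The two name links are where mounting problems usually hide: `claimName` must match an existing claim (otherwise the pod stays Pending), and each `volumeMounts[].name` must match an entry in `volumes`.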

Another key practical skill is observability. In modern distributed systems, visibility into application behavior is essential. Without proper monitoring, it becomes nearly impossible to identify performance issues or system failures.

Kubernetes provides several mechanisms for observability, including container logs, events, and health-check probes. Developers must understand how to implement these tools effectively. A liveness probe checks whether a container is still working; if it keeps failing, the kubelet restarts the container. A readiness probe checks whether a container is ready to serve traffic; while it fails, the pod is removed from the endpoints of any Service that selects it.
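A sketch of a container with both probes, assuming a hypothetical image that serves `/healthz` and `/ready` over HTTP on port 8080. The timing values are illustrative, not recommendations.

```python
import json

container = {
    "name": "web",
    "image": "example.com/web:1.0",  # hypothetical image
    "ports": [{"containerPort": 8080}],
    # Liveness: after failureThreshold consecutive failures, the kubelet restarts the container.
    "livenessProbe": {
        "httpGet": {"path": "/healthz", "port": 8080},  # hypothetical endpoint
        "initialDelaySeconds": 10,
        "periodSeconds": 10,
        "failureThreshold": 3,
    },
    # Readiness: while this fails, the pod receives no traffic through Services.
    "readinessProbe": {
        "httpGet": {"path": "/ready", "port": 8080},  # hypothetical endpoint
        "periodSeconds": 5,
    },
}

pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {"containers": [container]},
}

print(json.dumps(pod, indent=2))
```

With `periodSeconds: 10` and `failureThreshold: 3`, a hung process is restarted after roughly 30 seconds of failed checks. Pointing a probe at the wrong port or path is a common misconfiguration: a failing liveness probe causes repeated restarts (often surfacing as CrashLoopBackOff), and a failing readiness probe leaves the pod running but never receiving Service traffic.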

CKAD tasks often simulate scenarios where applications fail due to misconfigured probes or missing logging configurations. Candidates must diagnose these issues and implement corrections to restore normal operation.

Understanding CKAD Configuration, Debugging, and Troubleshooting in Depth

One of the most important aspects of Kubernetes development is configuration accuracy. Even small misconfigurations can lead to application failures, making precision a critical skill. CKAD places significant emphasis on the ability to read, write, and modify configuration files that define how applications behave within the cluster.

These configuration files are typically written in YAML (kubectl also accepts JSON) and define resources such as Deployments, Services, and pods. Developers must understand how to interpret these files and identify issues when applications do not behave as expected.
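For reference, a minimal Deployment has the shape below. The sketch builds it as a Python dict and prints JSON so the structure can be checked programmatically; the name and image are hypothetical, and the equivalent YAML uses the same keys and nesting.

```python
import json

labels = {"app": "web"}

deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 3,
        # The selector must match the pod template's labels,
        # otherwise the API server rejects the Deployment.
        "selector": {"matchLabels": labels},
        "template": {
            "metadata": {"labels": labels},
            "spec": {
                "containers": [
                    {"name": "web", "image": "example.com/web:1.0"}  # hypothetical image
                ]
            },
        },
    },
}

print(json.dumps(deployment, indent=2))
```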

In real-world environments, debugging is a daily activity. Applications may fail due to incorrect configurations, resource limitations, or networking issues. Kubernetes provides a variety of tools to help diagnose these problems, but developers must know how to use them effectively.

Logs are one of the primary sources of debugging information. By examining logs, developers can gain insight into application behavior and identify errors. CKAD scenarios often involve analyzing logs to determine the root cause of failures.

Another important debugging technique involves inspecting resource states. Commands such as kubectl get and kubectl describe show the status of pods, Deployments, and Services, along with recent events. Understanding how to interpret this information is essential for identifying issues quickly.
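When many pods are involved, the JSON output of `kubectl get pods -o json` can be filtered programmatically. The sketch below is a hypothetical helper that lists pods whose containers are not ready, along with the waiting reason Kubernetes reports; it runs against an inline sample so it works without a cluster.

```python
def unhealthy_pods(pod_list: dict) -> list[tuple[str, str]]:
    """Return (pod name, reason) for every container that is not ready.

    Expects the structure printed by `kubectl get pods -o json`.
    """
    problems = []
    for pod in pod_list.get("items", []):
        name = pod["metadata"]["name"]
        status = pod.get("status", {})
        container_statuses = status.get("containerStatuses", [])
        if not container_statuses:
            # No container has started yet, e.g. the pod is still Pending.
            problems.append((name, status.get("phase", "Unknown")))
            continue
        for cs in container_statuses:
            if not cs.get("ready", False):
                waiting = cs.get("state", {}).get("waiting", {})
                problems.append((name, waiting.get("reason", "NotReady")))
    return problems


# With a live cluster, the input could come from:
#   json.loads(subprocess.check_output(["kubectl", "get", "pods", "-o", "json"]))
sample = {
    "items": [
        {"metadata": {"name": "web-1"},
         "status": {"phase": "Running", "containerStatuses": [
             {"name": "web", "ready": True, "restartCount": 0, "state": {"running": {}}}]}},
        {"metadata": {"name": "web-2"},
         "status": {"phase": "Running", "containerStatuses": [
             {"name": "web", "ready": False, "restartCount": 5,
              "state": {"waiting": {"reason": "CrashLoopBackOff"}}}]}},
        {"metadata": {"name": "web-3"}, "status": {"phase": "Pending"}},
    ]
}

print(unhealthy_pods(sample))  # [('web-2', 'CrashLoopBackOff'), ('web-3', 'Pending')]
```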

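Resource constraints are another common source of problems. If a pod requests more CPU or memory than any node has available, the scheduler cannot place it and the pod remains Pending. At runtime, a container that exceeds its CPU limit is throttled, while one that exceeds its memory limit is terminated (OOMKilled). Developers must understand how to define resource requests and limits to ensure that applications run efficiently without overloading the system.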
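The sketch below is a deliberately simplified model of the scheduler's fit check: it compares a pod's CPU and memory requests with a node's free allocatable capacity. The helper names are hypothetical, and the quantity parsers handle only the `m` CPU suffix and the `Ki`/`Mi`/`Gi` memory suffixes, not every unit Kubernetes accepts.

```python
# Binary suffixes for memory quantities (a subset of what Kubernetes accepts).
_MEMORY_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def cpu_millicores(quantity: str) -> int:
    """Parse a CPU quantity such as "250m" or "1.5" into millicores."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def memory_bytes(quantity: str) -> int:
    """Parse a memory quantity such as "128Mi" or "1Gi" into bytes."""
    for suffix, factor in _MEMORY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # plain bytes


def fits(requests: dict[str, str], node_free: dict[str, str]) -> bool:
    """Simplified: do the pod's requests fit in the node's free allocatable capacity?"""
    return (cpu_millicores(requests["cpu"]) <= cpu_millicores(node_free["cpu"])
            and memory_bytes(requests["memory"]) <= memory_bytes(node_free["memory"]))


container_resources = {
    "requests": {"cpu": "250m", "memory": "128Mi"},  # what the scheduler reserves
    "limits": {"cpu": "500m", "memory": "256Mi"},    # what the runtime enforces
}
node_free = {"cpu": "1", "memory": "1Gi"}

print(fits(container_resources["requests"], node_free))  # True
print(fits({"cpu": "2", "memory": "128Mi"}, node_free))  # False: the pod would stay Pending
```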

CKAD-level tasks often simulate resource-related failures, requiring candidates to adjust configurations to resolve issues. This reflects real-world scenarios where resource management is critical for maintaining system stability.

Another key area of troubleshooting involves understanding scheduling behavior. Kubernetes uses a scheduler to determine where pods should run within a cluster. If scheduling rules are too restrictive or misconfigured, pods may fail to start.

Developers must understand how scheduling works and how to influence it using configuration settings. This includes understanding node constraints, affinity rules, and tolerations. These concepts allow developers to control where applications run and ensure optimal resource utilization.
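The simplest placement constraint is `nodeSelector`, which only allows nodes carrying all of the listed labels. The hypothetical helper below models that filter; `disktype` is an illustrative custom label, while `kubernetes.io/os` is a well-known label that nodes carry automatically. If no node matches, the pod stays Pending, which is exactly the overly restrictive failure mode described above.

```python
def feasible_nodes(node_selector: dict[str, str],
                   nodes: dict[str, dict[str, str]]) -> list[str]:
    """Return the nodes whose labels satisfy every key/value pair in a nodeSelector."""
    return [
        name
        for name, labels in nodes.items()
        if all(labels.get(key) == value for key, value in node_selector.items())
    ]


nodes = {
    "node-a": {"kubernetes.io/os": "linux", "disktype": "ssd"},
    "node-b": {"kubernetes.io/os": "linux", "disktype": "hdd"},
}

print(feasible_nodes({"disktype": "ssd"}, nodes))   # ['node-a']
print(feasible_nodes({"disktype": "nvme"}, nodes))  # []: no candidate, so the pod stays Pending
```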

Security is also an important consideration in Kubernetes environments. While CKAD is not primarily a security certification, developers must still understand basic security principles such as access control and secret management.

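Sensitive information such as passwords and API keys must be handled carefully. Kubernetes provides Secrets for this purpose, but by default their values are only base64-encoded, not encrypted, so protecting them also depends on restricting access (for example, with RBAC) and enabling encryption at rest in the cluster. Developers must understand how to use these mechanisms to protect application data.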
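A short Python sketch of how Secret values appear in a manifest, assuming a hypothetical Secret named `db-credentials` with a placeholder password. It shows that the `data` field is base64-encoded and trivially decodable, and how a container references one key through `secretKeyRef`.

```python
import base64


def make_secret(name: str, values: dict[str, str]) -> dict:
    """Build a Secret manifest; the `data` field holds base64-encoded values."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": {key: base64.b64encode(val.encode()).decode() for key, val in values.items()},
    }


secret = make_secret("db-credentials", {"password": "s3cr3t"})  # placeholder value
print(secret["data"]["password"])  # czNjcjN0

# Base64 is an encoding, not encryption: anyone who can read the Secret can decode it.
print(base64.b64decode(secret["data"]["password"]).decode())  # s3cr3t

# A container can receive a single key as an environment variable.
env_var = {
    "name": "DB_PASSWORD",
    "valueFrom": {"secretKeyRef": {"name": "db-credentials", "key": "password"}},
}
```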

Another important aspect of real-world Kubernetes usage is managing application lifecycle events. Applications are not static; they evolve through updates, scaling events, and configuration changes.

CKAD scenarios often involve managing these lifecycle events. Developers may need to scale applications up or down, update configurations, or roll back changes. These tasks reflect real operational challenges in production environments.

Understanding how Kubernetes handles scaling is particularly important. Applications may need to scale based on demand, and the HorizontalPodAutoscaler can adjust the number of replicas automatically based on observed metrics such as CPU utilization. Developers must understand how to configure scaling policies and ensure that applications respond effectively to changing workloads.
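The HorizontalPodAutoscaler's core calculation, as documented, is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with no action taken when the ratio is within a tolerance (0.1 by default). The sketch below implements only that rule; the function name and the replica bounds are hypothetical, and the real controller also applies stabilization windows and handles missing or unready pod metrics.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HorizontalPodAutoscaler rule.

    desired = ceil(current_replicas * current_metric / target_metric),
    skipped when the ratio is within `tolerance` of 1.0, then clamped
    to [min_replicas, max_replicas].
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: no scaling action
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Target: 60% average CPU utilization.
print(desired_replicas(4, 90, 60))   # 6  (ratio 1.5)
print(desired_replicas(4, 62, 60))   # 4  (within tolerance)
print(desired_replicas(8, 120, 60))  # 10 (16, clamped to max_replicas)
```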

Kubernetes Multi-Container Architectures and Design Thinking

Modern applications are rarely composed of a single container. Instead, they often consist of multiple interconnected components that work together to deliver functionality. This multi-container approach allows developers to separate concerns and build more modular systems.

CKAD emphasizes understanding how to design and manage these multi-container environments. Containers within a pod share resources such as networking and storage, allowing them to communicate efficiently. This design pattern is often used for sidecar containers, where auxiliary processes support the main application.

For example, a logging container may run alongside an application container to collect and forward logs. Similarly, a proxy container may handle networking tasks on behalf of the main application. Understanding these patterns is essential for effective Kubernetes development.
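A minimal sketch of the logging-sidecar pattern: both containers mount the same `emptyDir` volume, the main container writes a log file, and the sidecar streams it to its own stdout. The pod name and paths are hypothetical, and the shell loop stands in for a real application.

```python
import json

shared_volume = {"name": "logs", "emptyDir": {}}  # exists only as long as the pod does
mount = {"name": "logs", "mountPath": "/var/log/app"}

pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web-with-log-sidecar"},
    "spec": {
        "volumes": [shared_volume],
        "containers": [
            {   # Main container: appends a timestamp to the shared log every 5 seconds.
                "name": "app",
                "image": "busybox:1.36",
                "command": ["sh", "-c",
                            "while true; do date >> /var/log/app/app.log; sleep 5; done"],
                "volumeMounts": [mount],
            },
            {   # Sidecar: follows the same file and writes it to its own stdout.
                "name": "log-forwarder",
                "image": "busybox:1.36",
                "command": ["sh", "-c", "tail -n+1 -F /var/log/app/app.log"],
                "volumeMounts": [mount],
            },
        ],
    },
}

print(json.dumps(pod, indent=2))
```

The sidecar's output can then be read with `kubectl logs web-with-log-sidecar -c log-forwarder`. Because an `emptyDir` lives only as long as the pod, this pattern suits forwarding logs, not retaining them.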

Designing multi-container applications requires careful planning. Developers must consider how containers interact, how resources are shared, and how failures are handled. Poor design can lead to performance issues or instability, while well-designed systems can improve reliability and scalability.

CKAD scenarios often test this design thinking by requiring candidates to implement or modify multi-container setups. These tasks simulate real-world situations where applications must be structured for efficiency and maintainability.

Another important aspect of design is modularity. Kubernetes encourages breaking applications into smaller, independent components. This makes systems easier to manage and scale. Developers must understand how to structure applications in a way that aligns with this philosophy.

Dependency management is also important. In multi-container systems, components often depend on each other. Developers must ensure that these dependencies are properly managed to avoid failures or performance issues.

Containers in the same pod share a network namespace, so they can reach each other over localhost, and they can exchange files through shared volumes. Understanding how this communication works is essential for building effective multi-container applications. CKAD emphasizes the ability to configure and troubleshoot these interactions.

CKAD Learning Progression and Skill Development Path

Developing the skills required for CKAD is a gradual process. It involves moving from basic Kubernetes concepts to more advanced application development techniques. This progression typically begins with understanding container fundamentals and gradually expands into complex orchestration scenarios.

Early stages of learning focus on understanding how Kubernetes organizes resources. This includes learning about pods, deployments, and services. These foundational concepts are essential for everything else that follows.

As learners progress, they begin to work with configuration management and application deployment strategies. This stage involves understanding how applications are defined and managed within Kubernetes environments.

The next stage involves troubleshooting and debugging. This is where learners begin to encounter real-world challenges such as misconfigured applications, resource limitations, and networking issues. Developing strong debugging skills is essential for success at this level.

Advanced stages of learning focus on optimization and design. This includes building scalable architectures, managing complex deployments, and ensuring that applications perform efficiently under load.

Throughout this progression, hands-on practice is essential. Kubernetes is a practical technology, and theoretical knowledge alone is not sufficient. Real understanding comes from working directly with clusters and solving problems in real time.

By the time a learner reaches CKAD-level proficiency, they should be comfortable working independently in Kubernetes environments. This includes deploying applications, troubleshooting issues, and managing complex configurations.

The certification represents the culmination of this learning journey. It validates that an individual has developed the practical skills required to operate effectively in modern cloud-native environments.

Advanced CKAD Concepts and Production-Level Kubernetes Thinking

As Kubernetes adoption matures across industries, the expectations placed on developers extend far beyond basic cluster interaction. At an advanced level, Kubernetes is no longer just a deployment platform but a full operational ecosystem where application behavior, infrastructure constraints, and system design decisions converge. The Certified Kubernetes Application Developer certification reflects this reality by testing not only foundational skills but also the ability to think like a production-ready cloud-native engineer.

In real production environments, Kubernetes workloads are rarely isolated or simple. They exist within interconnected systems where reliability, scalability, and resilience are non-negotiable. Advanced Kubernetes thinking requires understanding how applications behave under stress, how failures propagate, and how system design decisions influence long-term stability. This mindset is critical for anyone aiming to work effectively in cloud-native environments at scale.

One of the key advanced concepts in Kubernetes is workload resilience. Applications must be designed with the assumption that failures will happen. Nodes may go offline, containers may crash, and network interruptions may occur. Kubernetes provides mechanisms to handle these failures automatically, but developers must design applications that take full advantage of these capabilities.

Resilience is achieved through replication, self-healing mechanisms, and proper configuration of deployment strategies. When an application is replicated across multiple pods, Kubernetes ensures that failed instances are automatically replaced. However, this only works effectively if the application is designed to be stateless or properly manages state externally.

State management becomes one of the most critical challenges in advanced Kubernetes usage. Stateless applications are easier to scale and manage, but many real-world systems require persistent data storage. Kubernetes provides persistent volume abstractions that allow data to survive beyond the lifecycle of individual containers.

Understanding how to design applications that safely interact with persistent storage is a key CKAD-level skill. Poorly managed storage can lead to data loss, corruption, or performance degradation. Developers must ensure that storage claims are correctly configured and that applications handle data access in a consistent and reliable way.

Another advanced area involves workload scaling strategies. Kubernetes supports both manual and automatic scaling mechanisms, allowing applications to adjust dynamically based on demand. Horizontal scaling, in particular, plays a major role in modern cloud-native systems.

Scaling decisions are not just about increasing or decreasing the number of pods. They also involve understanding resource utilization, application bottlenecks, and system constraints. In production environments, improper scaling configurations can lead to resource exhaustion or inefficient infrastructure usage.

Advanced Kubernetes usage also requires understanding scheduling behavior at a deeper level. The Kubernetes scheduler is responsible for placing pods onto appropriate nodes based on resource availability and constraints. Developers must understand how scheduling decisions are made and how to influence them using advanced configuration options.

Node affinity, pod affinity, and anti-affinity rules allow developers to control where workloads are placed. These rules are particularly important in large-scale environments where workload distribution affects performance, latency, and fault tolerance.
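As an illustration, the sketch below adds a required pod anti-affinity rule to a hypothetical Deployment so that no two `app=web` replicas share a node (`kubernetes.io/hostname` is the standard per-node topology key).

```python
import json

labels = {"app": "web"}

anti_affinity = {
    "podAntiAffinity": {
        # Hard rule: never place two pods labelled app=web on the same node.
        "requiredDuringSchedulingIgnoredDuringExecution": [
            {
                "labelSelector": {"matchLabels": labels},
                "topologyKey": "kubernetes.io/hostname",
            }
        ]
    }
}

deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 3,
        "selector": {"matchLabels": labels},
        "template": {
            "metadata": {"labels": labels},
            "spec": {
                "affinity": anti_affinity,
                "containers": [{"name": "web", "image": "example.com/web:1.0"}],  # hypothetical image
            },
        },
    },
}

print(json.dumps(deployment, indent=2))
```

A required rule is strict: with three replicas but only two eligible nodes, the third replica stays Pending. `preferredDuringSchedulingIgnoredDuringExecution` expresses the same intent as a soft preference.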

Taints and tolerations introduce another layer of scheduling control. They allow nodes to repel certain workloads unless explicitly allowed. This mechanism is often used to isolate workloads, enforce security boundaries, or optimize resource allocation.
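The matching rules can be sketched as follows, simplified from the documented behavior: a toleration matches a taint when the effects agree (an empty toleration effect matches any effect) and either the operator is `Exists` with the same key (or no key at all), or the operator is `Equal` (the default) with the same key and value. The helper names are hypothetical, and only `NoSchedule` and `NoExecute` taints are treated as blocking, since `PreferNoSchedule` is a soft preference.

```python
def tolerates(toleration: dict, taint: dict) -> bool:
    """Does one toleration match one taint? (simplified)"""
    # An empty effect in the toleration matches every taint effect.
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    operator = toleration.get("operator", "Equal")
    if operator == "Exists":
        # Exists with an empty key matches any taint key.
        return not toleration.get("key") or toleration["key"] == taint["key"]
    return (toleration.get("key") == taint["key"]
            and toleration.get("value", "") == taint.get("value", ""))


def schedulable(tolerations: list[dict], taints: list[dict]) -> bool:
    """A pod may be placed only if every NoSchedule/NoExecute taint is tolerated."""
    return all(
        any(tolerates(t, taint) for t in tolerations)
        for taint in taints
        if taint["effect"] in ("NoSchedule", "NoExecute")
    )


gpu_taint = {"key": "dedicated", "value": "gpu", "effect": "NoSchedule"}

print(schedulable([], [gpu_taint]))  # False
print(schedulable([{"key": "dedicated", "operator": "Equal",
                    "value": "gpu", "effect": "NoSchedule"}], [gpu_taint]))  # True
print(schedulable([{"key": "dedicated", "operator": "Exists"}], [gpu_taint]))  # True
```

A toleration only permits placement on a tainted node; it does not attract the pod there. Dedicating nodes to a workload usually combines a taint with node affinity or a `nodeSelector`.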

Understanding these scheduling mechanisms is essential for building efficient and reliable systems. CKAD-level scenarios often test the ability to configure and troubleshoot scheduling behavior under constrained conditions.

CKAD in Real DevOps and Cloud-Native Ecosystems

In modern DevOps environments, Kubernetes is not used in isolation. It is part of a broader ecosystem that includes continuous integration, continuous delivery, monitoring systems, and infrastructure automation tools. CKAD-level expertise is most valuable when applied within this integrated environment.

Continuous delivery pipelines rely heavily on Kubernetes for deploying applications in a controlled and repeatable manner. Developers must understand how application changes move through different stages, from development to production. Kubernetes plays a central role in ensuring that these transitions are smooth and reliable.

In real-world workflows, applications are often built, tested, and deployed automatically. Kubernetes handles the runtime environment, while external systems manage build and deployment pipelines. Developers working at the CKAD level must understand how their applications behave within this automated lifecycle.
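When a pipeline applies a new image to a Deployment, the default `RollingUpdate` strategy controls the transition through `maxSurge` and `maxUnavailable`, both 25% by default. Per the Deployment documentation, a percentage surge rounds up and a percentage unavailability rounds down. The sketch below computes the resulting bounds on pod counts during a rollout. The fallback to one unavailable pod when both values resolve to zero mirrors Kubernetes' rounding behavior, but treat it as an assumption of this sketch rather than a documented guarantee.

```python
import math
from typing import Dict, Union

Value = Union[int, str]


def resolve(value: Value, replicas: int, round_up: bool) -> int:
    # Percentages scale with the replica count; plain integers are absolute.
    if isinstance(value, str) and value.endswith("%"):
        raw = replicas * int(value[:-1]) / 100
        return math.ceil(raw) if round_up else math.floor(raw)
    return int(value)


def rollout_bounds(replicas: int, max_surge: Value = "25%",
                   max_unavailable: Value = "25%") -> Dict[str, int]:
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        # Rounding left no room to make progress; allow one pod to be unavailable.
        unavailable = 1
    return {
        "max_total_pods": replicas + surge,          # upper bound during the rollout
        "min_available_pods": replicas - unavailable,  # lower bound during the rollout
    }


print(rollout_bounds(10))  # {'max_total_pods': 13, 'min_available_pods': 8}
```

Knowing these bounds helps when sizing a namespace's quota: a rollout temporarily needs room for the surge pods.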

Monitoring and observability are also critical components of production Kubernetes environments. Applications must be continuously monitored to ensure they are functioning correctly. Metrics, logs, and events provide insights into system behavior and help identify issues before they become critical failures.

In advanced scenarios, observability is not just about detecting failures but also about understanding performance trends and optimizing system behavior. Developers must design applications that expose meaningful metrics and logs that can be consumed by monitoring systems.
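Kubernetes collects whatever a container writes to stdout and stderr, so emitting one JSON object per line is a simple way to make logs machine-readable for whatever collector a cluster uses. The sketch below uses only Python's standard `logging` module. The field names (`ts`, `level`, `logger`, `msg`) and the convention of passing structured fields through `extra={"fields": ...}` are assumptions of this example, not a Kubernetes requirement.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON line."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "ts": round(record.created, 3),
            "level": record.levelname,
            "logger": record.name,
            "msg": record.getMessage(),
        }
        # Structured fields supplied via logger.info(..., extra={"fields": {...}}).
        fields = getattr(record, "fields", None)
        if fields:
            entry.update(fields)
        if record.exc_info:
            entry["exc"] = self.formatException(record.exc_info)
        return json.dumps(entry)


def make_logger(name: str = "app", stream=sys.stdout) -> logging.Logger:
    logger = logging.getLogger(name)
    handler = logging.StreamHandler(stream)  # stdout is what `kubectl logs` shows
    handler.setFormatter(JsonFormatter())
    logger.handlers.clear()
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # avoid duplicate lines via the root logger
    return logger
```

A call such as `make_logger().info("order processed", extra={"fields": {"order_id": "A1", "duration_ms": 42}})` produces one line that a log pipeline can filter by `order_id` or aggregate by `duration_ms`.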

Another important aspect of production Kubernetes usage is security. While CKAD does not focus deeply on security architecture, developers must still understand basic security principles that affect application design. These include managing secrets, controlling access permissions, and ensuring secure communication between components.

Secrets management is particularly important because applications often require sensitive data such as credentials or API keys. Kubernetes provides the Secret object to store this data and inject it into running pods as environment variables or mounted files. By default, Secret values are only base64-encoded, not encrypted, so developers must ensure that secrets are handled properly to avoid exposure or misuse.
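On the application side, a Secret mounted as a volume appears as one file per key, while a Secret exposed through `env` appears as an environment variable. The sketch below reads a value from a mount directory first and falls back to an environment variable. It never puts the value itself into error messages. The default mount path `/etc/secrets` and the helper names are hypothetical. The second function only illustrates that values under a Secret manifest's `data` field are base64 text anyone can decode.

```python
import base64
import os
from pathlib import Path
from typing import Optional


def read_secret(key: str, mount_dir: str = "/etc/secrets",
                env_var: Optional[str] = None) -> str:
    """Return a secret from a mounted Secret volume, falling back to an env var."""
    path = Path(mount_dir) / key
    if path.is_file():
        # Each key of a mounted Secret is a file; drop only a trailing newline.
        return path.read_text().rstrip("\n")
    if env_var and env_var in os.environ:
        return os.environ[env_var]
    # Report which key is missing, never the secret value.
    raise KeyError(f"secret {key!r} not found")


def decode_manifest_value(encoded: str) -> str:
    # Values under a Secret's `data` field are base64-encoded, not encrypted.
    return base64.b64decode(encoded).decode("utf-8")
```

Preferring the mounted file is a design choice. Kubernetes refreshes mounted Secret files when the Secret changes (except for `subPath` mounts), whereas environment variables are fixed when the container starts.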

Role-based access control is another important concept in Kubernetes environments. It determines what actions users and service accounts (the identities that applications run under) are allowed to perform within the cluster. Understanding how permissions are structured helps developers design applications that operate securely within organizational boundaries.
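Kubernetes RBAC is purely additive. A Role or ClusterRole lists rules of `apiGroups`, `resources`, and `verbs`, there are no deny rules, and a request is allowed if any rule bound to the identity matches. The sketch below models that check for a flattened list of rules. It ignores `resourceNames`, `nonResourceURLs`, namespaces, and bindings. Against a real cluster, `kubectl auth can-i` answers the same question authoritatively.

```python
from typing import Dict, List


def rule_allows(rule: Dict[str, List[str]], api_group: str, resource: str, verb: str) -> bool:
    def match(allowed: List[str], value: str) -> bool:
        return "*" in allowed or value in allowed  # "*" is a wildcard in RBAC rules

    return (match(rule["apiGroups"], api_group)
            and match(rule["resources"], resource)
            and match(rule["verbs"], verb))


def is_allowed(rules: List[Dict[str, List[str]]], api_group: str, resource: str, verb: str) -> bool:
    # RBAC has no deny rules: any single matching rule grants the request.
    return any(rule_allows(r, api_group, resource, verb) for r in rules)


# A read-only rule for pods in the core API group (written as "").
pod_reader = [{"apiGroups": [""], "resources": ["pods", "pods/log"], "verbs": ["get", "list", "watch"]}]
print(is_allowed(pod_reader, "", "pods", "get"))     # prints True
print(is_allowed(pod_reader, "", "pods", "delete"))  # prints False
```

Because permissions only accumulate, the practical discipline is to grant each service account the smallest rule set it needs.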

Network security also plays a role in advanced Kubernetes usage. Network policies can be used to control traffic flow between pods, ensuring that only authorized communication is allowed, provided the cluster's network plugin enforces them. This is essential for maintaining isolation between different application components or environments.
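The behavior of ingress NetworkPolicies can be summarized in three points. A pod selected by no policy accepts all traffic. Once any ingress policy selects a pod, only traffic matching at least one rule from those policies is allowed. An empty `podSelector` selects every pod in the namespace. The sketch below models that logic for a single namespace. It considers only `podSelector` peers with `matchLabels` (written here as plain dicts) and ignores ports, `namespaceSelector`, `ipBlock`, and egress.

```python
from typing import Dict, List

Labels = Dict[str, str]


def selects(match_labels: Labels, labels: Labels) -> bool:
    # An empty selector selects every pod.
    return all(labels.get(k) == v for k, v in match_labels.items())


def ingress_allowed(policies: List[dict], src_labels: Labels, dst_labels: Labels) -> bool:
    applicable = [
        p for p in policies
        if "Ingress" in p.get("policyTypes", ["Ingress"])
        and selects(p["podSelector"], dst_labels)
    ]
    if not applicable:
        return True  # pods selected by no ingress policy accept all traffic
    for p in applicable:
        for rule in p.get("ingress", []):
            peers = rule.get("from", [])
            if not peers:
                return True  # a rule with no `from` admits every source
            if any(selects(peer["podSelector"], src_labels) for peer in peers):
                return True
    return False  # selected, but no rule admits this source


allow_frontend = {
    "podSelector": {"app": "backend"},
    "policyTypes": ["Ingress"],
    "ingress": [{"from": [{"podSelector": {"app": "frontend"}}]}],
}
print(ingress_allowed([allow_frontend], {"app": "frontend"}, {"app": "backend"}))  # prints True
```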

Performance Optimization and Efficiency in Kubernetes Applications

As Kubernetes environments scale, performance optimization becomes increasingly important. Applications must be designed not only to function correctly but also to use resources efficiently. Poorly optimized workloads can lead to increased costs, degraded performance, and system instability.

Resource management is a key factor in performance optimization. Kubernetes allows developers to define resource requests and limits for each container. Requests tell the scheduler how much CPU and memory to reserve for a container, while limits cap how much it may consume: a container that exceeds its memory limit is terminated, and CPU usage above the limit is throttled. Proper configuration ensures that applications have enough resources to function without overwhelming the system.

Over-provisioning resources can lead to wasted infrastructure, while under-provisioning can cause application failures. Finding the right balance requires careful analysis of application behavior and workload patterns.
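How requests and limits are set also determines a pod's Quality of Service class, which affects which pods the kubelet evicts first under memory pressure. BestEffort pods are among the first candidates. Following the documented rules, a pod is Guaranteed when every container has CPU and memory limits with requests equal to those limits. It is BestEffort when no container sets any CPU or memory request or limit, and Burstable otherwise. The sketch below applies those rules to plain dicts. One simplification: it compares quantity strings literally, so `"1"` and `"1000m"` would be treated as different. Write quantities in consistent units when using it.

```python
from typing import Dict, List

RESOURCES = ("cpu", "memory")


def qos_class(containers: List[Dict[str, Dict[str, str]]]) -> str:
    """Classify a pod from its containers' `requests` and `limits` dicts."""
    any_set = False
    guaranteed = True
    for c in containers:
        limits = c.get("limits", {})
        # When only a limit is given, Kubernetes defaults the request to the limit.
        requests = {**limits, **c.get("requests", {})}
        if any(r in limits or r in requests for r in RESOURCES):
            any_set = True
        for r in RESOURCES:
            if r not in limits or requests.get(r) != limits[r]:
                guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


print(qos_class([{"requests": {"cpu": "250m"}}]))  # prints Burstable
```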

Another important aspect of optimization is understanding container startup and shutdown behavior. Applications should be designed to start quickly and shut down gracefully. Slow startup times can affect scalability, while improper shutdown handling can lead to data corruption or inconsistent states.
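When Kubernetes stops a pod, it sends SIGTERM to each container's main process and waits for the termination grace period (30 seconds by default) before sending SIGKILL. Graceful shutdown therefore means catching SIGTERM, stopping intake of new work, and finishing or checkpointing in-flight work within that window. The sketch below shows one way to structure this with the standard `signal` module. The `run_worker` loop and its job/`process` arguments are hypothetical stand-ins for an application's own work queue.

```python
import signal
import threading
from typing import Callable, Iterable, List


class GracefulShutdown:
    """Set a flag on SIGTERM so the main loop can finish current work and exit."""

    def __init__(self) -> None:
        self.stopping = threading.Event()

    def install(self) -> None:
        # Must be called from the main thread, as Python requires for signal handlers.
        signal.signal(signal.SIGTERM, self._handle)

    def _handle(self, signum, frame) -> None:
        self.stopping.set()


def run_worker(shutdown: GracefulShutdown, jobs: Iterable, process: Callable) -> List:
    done = []
    for job in jobs:
        if shutdown.stopping.is_set():
            break  # stop taking new work; the previous job already completed
        done.append(process(job))
    return done
```

Checking the flag between units of work, rather than exiting inside the handler, keeps shutdown at a point where no job is half-finished.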

Caching strategies can also play a role in improving performance. By reducing the need for repeated computation or data retrieval, caching can significantly improve application responsiveness. However, caching must be carefully managed to avoid stale or inconsistent data.
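A time-to-live (TTL) cache is one simple way to bound staleness: an entry is reused only for a fixed window, then reloaded from the source of truth. The sketch below is a minimal in-memory version with an injectable clock so it can be tested deterministically. The class and method names are illustrative, not from any particular library.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._data: Dict[Hashable, Tuple[Any, float]] = {}

    def get_or_load(self, key: Hashable, loader: Callable[[Hashable], Any]) -> Any:
        now = self.clock()
        hit = self._data.get(key)
        if hit is not None and now - hit[1] < self.ttl:
            return hit[0]  # entry is still fresh
        value = loader(key)  # miss or expired: reload from the source of truth
        self._data[key] = (value, now)
        return value

    def invalidate(self, key: Hashable) -> None:
        self._data.pop(key, None)
```

In Kubernetes each replica holds its own in-process cache, so two pods behind the same Service can return different values for up to one TTL window. When that is unacceptable, a shared cache or explicit invalidation is needed.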

Network performance is another area where optimization is important. In distributed systems, communication between services can introduce latency. Developers must understand how to minimize network overhead and ensure efficient communication between components.

Load balancing is also a critical component of performance management. Kubernetes Services distribute traffic across the ready pods behind them, ensuring that no single instance becomes a bottleneck. Understanding how load balancing works helps developers design more efficient systems.

Advanced Troubleshooting and Diagnostic Strategies

Troubleshooting in Kubernetes environments becomes more complex as systems scale. Issues are often not isolated but distributed across multiple components. Effective debugging requires a structured approach and a deep understanding of how Kubernetes operates internally.

One of the most important troubleshooting techniques involves analyzing system logs. Logs provide a detailed record of application behavior and are often the first source of information when diagnosing issues. Developers must know how to filter and interpret logs effectively.
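`kubectl logs` can narrow output with flags such as `--since`, `--tail`, and `--previous` (the last shows the previous instance of a restarted container). For structured logs, a small filter can then isolate the relevant lines. The sketch below assumes the application emits one JSON object per line with a `level` field. That is an assumption about the application's log format, not something Kubernetes imposes. Non-JSON lines are skipped.

```python
import json
from typing import Iterable, Iterator

LEVELS = {"DEBUG": 10, "INFO": 20, "WARNING": 30, "ERROR": 40, "CRITICAL": 50}


def filter_logs(lines: Iterable[str], min_level: str = "WARNING", **field_filters) -> Iterator[dict]:
    """Yield JSON log entries at or above min_level whose fields match the filters."""
    threshold = LEVELS[min_level]
    for line in lines:
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip non-JSON lines such as startup banners
        if not isinstance(entry, dict):
            continue
        if LEVELS.get(entry.get("level"), 0) < threshold:
            continue
        if all(entry.get(k) == v for k, v in field_filters.items()):
            yield entry
```

For example, piping `kubectl logs <pod> --since=1h` into this filter with `order_id="A1"` would isolate warnings and errors for a single request.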

Another key diagnostic tool is resource inspection. Kubernetes provides detailed information about the state of all resources within a cluster. This includes pod status, node health, and deployment conditions. Understanding how to interpret this information is essential for identifying problems.
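Resource inspection can be scripted by reading `kubectl get pods -o json`. In that output each item carries `metadata.name`, `status.phase`, and `status.containerStatuses`, with per-container `ready`, `restartCount`, and `state`. The sketch below flags pods whose containers are not ready or are stuck waiting (for example in `CrashLoopBackOff`), plus non-running pods with no container status yet, such as unschedulable Pending pods. The report format is an assumption of this example.

```python
import json
from typing import List


def unhealthy_pods(pods_json: str) -> List[dict]:
    report = []
    for pod in json.loads(pods_json).get("items", []):
        name = pod["metadata"]["name"]
        status = pod.get("status", {})
        phase = status.get("phase", "Unknown")
        if phase == "Succeeded":
            continue  # completed Job pods are expected to be not ready
        statuses = status.get("containerStatuses", [])
        if not statuses and phase != "Running":
            # No container has started yet, e.g. a Pending pod that cannot be scheduled.
            report.append({"pod": name, "container": None, "phase": phase,
                           "reason": None, "restarts": 0})
        for cs in statuses:
            waiting = cs.get("state", {}).get("waiting")
            if waiting or not cs.get("ready", False):
                report.append({"pod": name, "container": cs["name"], "phase": phase,
                               "reason": waiting.get("reason") if waiting else None,
                               "restarts": cs.get("restartCount", 0)})
    return report
```

A high `restarts` count combined with a `CrashLoopBackOff` reason usually points to `kubectl logs --previous` as the next step.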

Event monitoring is also a powerful troubleshooting tool. Kubernetes generates events that record significant changes or issues within the system. These events can provide valuable insights into why a particular problem occurred.
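Events returned by `kubectl get events -o json` include a `type` (`Normal` or `Warning`), a `reason`, an `involvedObject` with `kind` and `name`, and usually a `count` of repeated occurrences. The sketch below aggregates Warning events per object and reason so the noisiest problems surface first. It treats a missing `count` as one occurrence, which is an assumption for events recorded without that field.

```python
import json
from collections import Counter
from typing import List, Tuple


def warning_summary(events_json: str) -> List[Tuple[Tuple[str, str, str], int]]:
    counts: Counter = Counter()
    for ev in json.loads(events_json).get("items", []):
        if ev.get("type") != "Warning":
            continue
        obj = ev.get("involvedObject", {})
        key = (obj.get("kind"), obj.get("name"), ev.get("reason"))
        # `count` tracks repeats of the same event; default to 1 when absent.
        counts[key] += ev.get("count") or 1
    return counts.most_common()
```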

In more complex scenarios, issues may arise from interactions between multiple components. For example, a networking issue may be caused by a misconfigured service, a failing pod, or a network policy restriction. Diagnosing such issues requires a systematic approach that considers all possible causes.
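For the misconfigured-Service case, a systematic check can proceed in order. First, do any pod labels match the Service selector? Then, are the matching pods Ready, since only ready pods receive Service traffic? Finally, does the application listen on the `targetPort`? The sketch below encodes that order as a checklist over plain dicts. The pod fields, especially `listening_ports`, are hypothetical inputs the operator would gather by hand. Declared `containerPort` values are informational and do not prove the process is listening. NetworkPolicy restrictions are not modeled.

```python
from typing import Dict, List


def diagnose_service(service: Dict, pods: List[Dict]) -> List[str]:
    """Return findings explaining why a Service might have no working backends."""
    selector = service.get("selector", {})
    if not selector:
        return ["Service has no selector; its endpoints must be managed manually"]
    matched = [p for p in pods
               if all(p["labels"].get(k) == v for k, v in selector.items())]
    if not matched:
        return [f"no pod labels match selector {selector}"]
    findings = []
    ready = [p for p in matched if p.get("ready")]
    if not ready:
        findings.append(f"{len(matched)} pod(s) match but none are Ready (check readiness probes)")
    target = service["targetPort"]
    # Hypothetical field: ports the application process actually listens on.
    wrong_port = [p["name"] for p in ready if target not in p.get("listening_ports", [])]
    if wrong_port:
        findings.append(f"targetPort {target} is not listened on by: {', '.join(wrong_port)}")
    return findings
```

An empty result means this checklist found nothing, which shifts suspicion toward network policies or the client side.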

Performance-related issues are another common area of troubleshooting. Applications may experience slow response times or high resource usage. Identifying the root cause requires analyzing metrics, logs, and resource utilization patterns.

CKAD-level scenarios often simulate these types of complex issues, requiring candidates to apply multiple diagnostic techniques to resolve problems. This reflects real-world environments where issues are rarely simple or isolated.

CKAD as a Career Development Catalyst in Cloud-Native Engineering

Beyond technical skills, CKAD certification plays a significant role in shaping career development paths in cloud-native engineering. It serves as a structured validation of practical Kubernetes knowledge, signaling readiness to work in modern DevOps environments.

The certification aligns closely with industry demand for professionals who can bridge the gap between development and operations. As organizations adopt cloud-native architectures, the need for developers who understand infrastructure becomes increasingly important.

CKAD-certified professionals are often positioned to work on critical application deployment and management tasks. This includes designing scalable systems, troubleshooting production issues, and optimizing application performance.

The certification also serves as a foundation for further specialization. Many professionals use CKAD as a stepping stone toward more advanced Kubernetes certifications or broader cloud engineering roles. It provides a strong technical base that supports long-term career growth.

In competitive job markets, demonstrated Kubernetes expertise can significantly enhance professional opportunities. Employers value candidates who can contribute immediately to cloud-native environments without extensive onboarding.

The skills developed through CKAD preparation extend beyond Kubernetes itself. They also reinforce broader engineering principles such as system design, automation, and distributed computing. These skills are transferable across many areas of software development and infrastructure engineering.

As cloud-native adoption continues to grow, Kubernetes expertise is becoming a core requirement rather than a specialized skill. CKAD certification reflects this shift by providing a standardized way to validate practical knowledge in this domain.

Ultimately, CKAD represents more than just a technical credential. It reflects a deeper understanding of modern application architecture, operational thinking, and system design principles that are essential in today’s technology landscape.

Conclusion

Kubernetes has fundamentally changed how modern software systems are designed, deployed, and maintained, and this shift has naturally elevated the importance of skills validation through practical certifications such as CKAD. What makes this certification particularly relevant is not just the credential itself, but the philosophy it represents. It reflects a broader transformation in the technology industry, where theoretical knowledge alone is no longer sufficient, and real-world operational capability has become the true measure of expertise.

In today’s cloud-native ecosystem, applications are no longer built as isolated units running on single machines. Instead, they are distributed across clusters, broken into microservices, and designed to scale dynamically based on demand. This evolution has introduced both opportunities and complexities. While systems are more scalable and resilient than ever before, they also require a deeper understanding of orchestration, automation, and infrastructure behavior. Kubernetes sits at the center of this transformation, acting as the control layer that enables these modern architectures to function efficiently.

The CKAD certification aligns closely with these industry realities by focusing on hands-on skills that directly translate into production environments. It emphasizes the ability to work with live clusters, manage application deployments, troubleshoot failures, and configure systems under real constraints. This practical orientation ensures that individuals who pursue this path are not only learning concepts but also internalizing how those concepts behave in real systems where performance, reliability, and scalability matter.

One of the most important takeaways from studying Kubernetes at this level is the realization that modern application development is no longer just about writing code. It is equally about understanding how that code behaves once it is deployed into a distributed environment. Developers are expected to think beyond application logic and consider infrastructure interactions, networking flows, resource consumption, and system resilience. This shift in responsibility represents a significant evolution in the role of a software developer.

CKAD preparation reinforces this mindset by exposing learners to scenarios where problems are rarely straightforward. A misconfigured deployment, a failing service, or a resource constraint can all lead to unexpected system behavior. Solving these issues requires not only technical knowledge but also structured thinking and the ability to interpret system signals effectively. This is where Kubernetes experience becomes invaluable, as it trains professionals to analyze systems holistically rather than focusing on isolated components.

Another important aspect of this learning journey is the emphasis on automation and declarative configuration. Kubernetes encourages users to define desired system states rather than manually controlling every operational step. This approach reduces complexity and improves consistency, especially in large-scale environments. However, it also requires a shift in mindset. Instead of thinking in terms of direct actions, developers must think in terms of system behavior and desired outcomes. This conceptual shift is one of the most powerful outcomes of engaging deeply with Kubernetes.

The value of CKAD also extends into how it prepares professionals for collaboration within DevOps-oriented teams. Modern software delivery is highly collaborative, involving developers, operations engineers, and infrastructure specialists working together in tightly integrated workflows. Kubernetes acts as a shared platform that connects these roles, enabling consistent deployment practices and standardized operational procedures. By gaining hands-on experience with Kubernetes, professionals become better equipped to participate in these collaborative environments.

In addition, the certification journey encourages familiarity with debugging and troubleshooting at a system level. In real-world environments, issues are rarely caused by a single obvious factor. Instead, they often result from a combination of misconfigurations, resource limitations, or network constraints. Developing the ability to trace these issues across multiple layers of the system is a critical skill in cloud-native operations. This diagnostic capability is one of the most valuable outcomes of CKAD-level expertise.

Scalability is another central theme that emerges throughout Kubernetes learning. Modern applications must be able to handle fluctuating workloads without manual intervention. Kubernetes provides mechanisms for horizontal scaling, load distribution, and automated recovery, but these mechanisms must be properly configured to be effective. Understanding how and when to apply these capabilities is essential for building robust systems that can adapt to real-world demands.

Security considerations also play an increasingly important role in Kubernetes environments. While CKAD is not a security-focused certification, it still introduces foundational concepts that influence secure application design. Managing sensitive data, controlling access permissions, and ensuring secure communication between services are all essential aspects of working in production systems. These principles become even more important as systems scale and handle larger volumes of critical data.

Beyond technical skills, the broader significance of CKAD lies in how it positions professionals within the evolving technology landscape. As organizations continue transitioning to cloud-native architectures, the demand for individuals who understand container orchestration is steadily increasing. This demand is not limited to specific industries but spans sectors such as finance, healthcare, retail, and technology services. Kubernetes has effectively become a universal platform for modern application deployment.

The certification also acts as a stepping stone for further growth in cloud engineering and DevOps careers. It provides a strong foundation that can be built upon with more advanced infrastructure, architecture, or platform engineering roles. The skills developed through CKAD preparation are highly transferable and applicable to a wide range of technical domains, making it a valuable investment in long-term career development.

However, the true value of CKAD is not limited to career advancement alone. It also fosters a deeper understanding of how modern systems operate at scale. This understanding changes how developers approach problem-solving, system design, and application architecture. It encourages a mindset that prioritizes resilience, scalability, and automation, which are essential qualities in today’s software systems.

Ultimately, Kubernetes represents more than just a technology; it represents a shift in how software is conceived and operated. CKAD certification serves as a structured pathway into this world, guiding professionals through the practical realities of cloud-native development. It bridges the gap between theoretical knowledge and operational expertise, ensuring that individuals are not only familiar with Kubernetes concepts but are also capable of applying them effectively in real environments.

As the industry continues to evolve, the importance of such practical, hands-on skills will only increase. Systems will become more distributed, workloads more dynamic, and infrastructure more abstracted. In this context, the ability to navigate Kubernetes environments confidently will remain a highly valuable skill. CKAD stands as a reflection of this reality, offering a clear benchmark for those who want to validate their readiness for modern application development challenges.

In the broader perspective, the journey of learning Kubernetes and pursuing CKAD is less about earning a certification and more about adapting to a new way of thinking about software systems. It encourages professionals to embrace complexity, understand distributed architectures, and develop the skills needed to operate in environments where automation and scalability are fundamental requirements. This transformation in thinking is what ultimately defines success in the cloud-native era.