The modern cybersecurity landscape is defined by constant exposure, rapid technological change, and increasingly sophisticated attack methodologies. Organizations today operate across distributed infrastructures that include cloud platforms, hybrid networks, remote endpoints, containerized applications, and API-driven ecosystems. Each of these components introduces its own set of vulnerabilities and misconfiguration risks. As a result, the traditional perimeter-based security model has become largely ineffective in isolation. Attackers no longer need to breach a central firewall to gain access; instead, they exploit weak credentials, unpatched services, insecure APIs, and human error to move laterally across systems.

Cyber threats have also evolved in scale and automation. Attackers often use automated scanning tools, botnets, and exploit frameworks to identify vulnerabilities across millions of systems simultaneously. This industrialization of cybercrime has significantly increased the frequency and severity of breaches. Data theft, ransomware deployment, identity compromise, and infrastructure disruption have become routine outcomes of successful attacks. In this environment, security is no longer a static defense mechanism but a continuous process of testing, validation, and improvement.

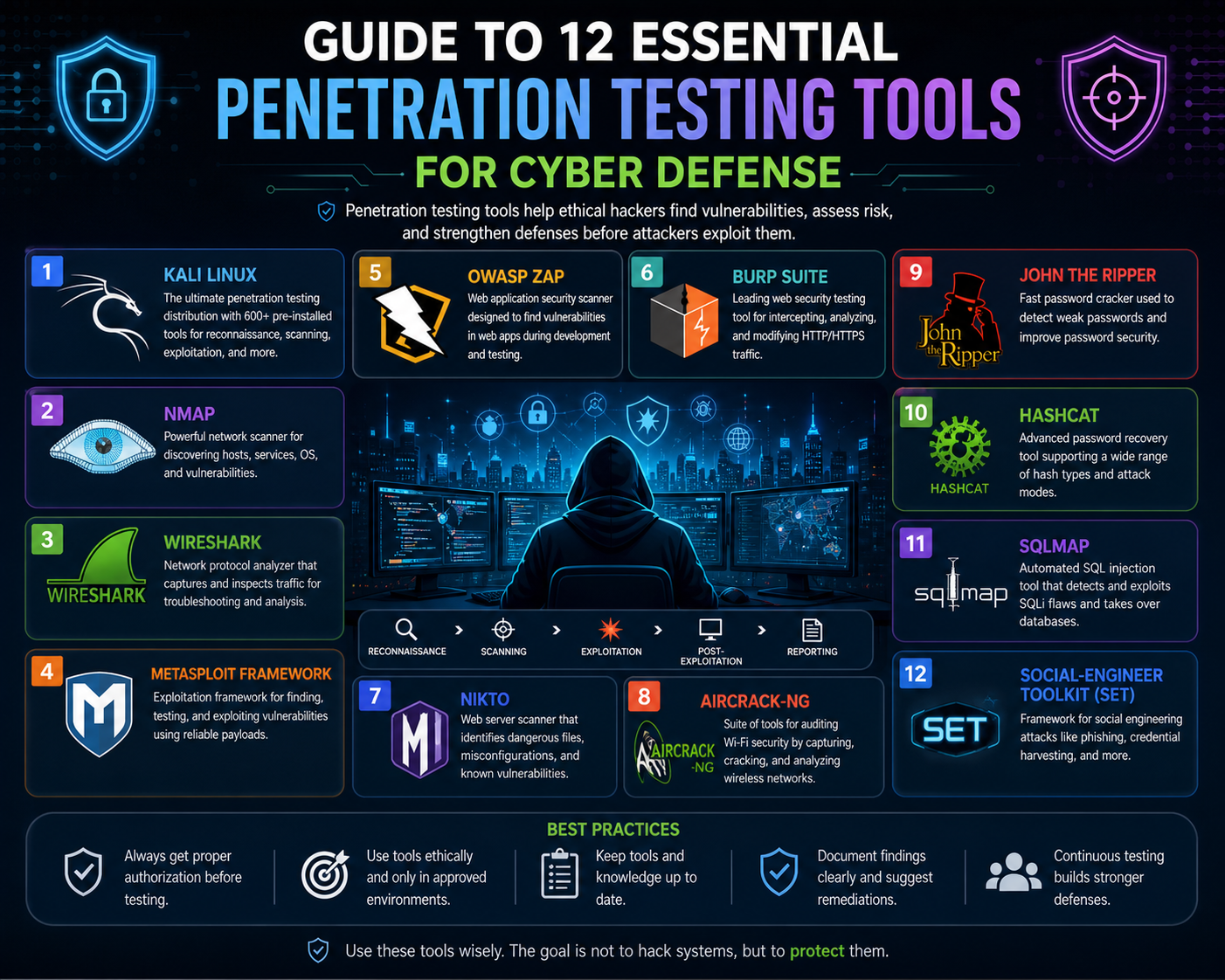

Penetration testing has emerged as one of the most effective methodologies for addressing these challenges. It provides a structured way to simulate real-world attacks against systems, applications, and networks in order to identify weaknesses before malicious actors can exploit them. Unlike automated vulnerability scanning, which typically stops at flagging potential weaknesses, penetration testing involves active exploitation attempts that replicate attacker behavior. This makes it a critical component of modern cybersecurity strategies.

Defining Penetration Testing in Practical Security Operations

Penetration testing is a controlled and authorized simulation of cyberattacks conducted to evaluate the security posture of digital systems. It involves a systematic approach to identifying vulnerabilities, testing exploitability, and assessing the potential impact of security weaknesses. The process is typically carried out by trained security professionals who follow predefined rules of engagement to ensure that testing remains ethical, safe, and non-disruptive.

The penetration testing lifecycle generally includes several key phases. The first phase is reconnaissance, where information about the target system is collected. This may include network ranges, domain structures, publicly exposed services, and application endpoints. The second phase involves scanning and enumeration, where active systems and services are identified along with potential vulnerabilities. The third phase is exploitation, where testers attempt to take advantage of identified weaknesses to gain unauthorized access. The fourth phase is post-exploitation, which evaluates how far an attacker could move within the system after initial compromise. Finally, reporting consolidates all findings into structured documentation that includes vulnerabilities, severity levels, and remediation recommendations.
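The reporting phase can be sketched as a small data model that tags each finding with the phase in which it surfaced and orders the report by severity. This is a minimal illustration under assumed conventions: the `Finding` fields and the five-level severity scale are hypothetical, not a standard report format.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    """The five lifecycle phases described above, in order."""
    RECONNAISSANCE = 1
    SCANNING_ENUMERATION = 2
    EXPLOITATION = 3
    POST_EXPLOITATION = 4
    REPORTING = 5


# Hypothetical severity scale; a higher rank means more urgent.
SEVERITY_RANK = {"critical": 4, "high": 3, "medium": 2, "low": 1, "info": 0}


@dataclass
class Finding:
    title: str
    phase: Phase        # phase in which the issue was found
    severity: str       # one of the SEVERITY_RANK keys
    remediation: str


def build_report(findings: list[Finding]) -> list[str]:
    """Return report lines ordered by severity (most severe first),
    then by the lifecycle phase in which each finding surfaced."""
    ordered = sorted(
        findings,
        key=lambda f: (-SEVERITY_RANK[f.severity], f.phase.value),
    )
    return [
        f"[{f.severity.upper()}] {f.title} ({f.phase.name.lower()}): {f.remediation}"
        for f in ordered
    ]
```

Sorting on severity first mirrors the goal stated in the next paragraph: remediation effort goes to the most exploitable issues before the cosmetic ones.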

This structured approach ensures that penetration testing provides actionable insights rather than just theoretical risk assessments. It also helps organizations prioritize security investments based on real-world exploitability rather than abstract vulnerability scores.

Invicti Security Scanner and Automated Web Application Testing

Web applications are among the most frequently targeted components in modern infrastructures due to their accessibility and complexity. Invicti Security Scanner is designed to address this attack surface by providing automated vulnerability detection for web-based systems. It evaluates applications by simulating malicious input behavior and analyzing server responses to detect security flaws.

One of the primary strengths of this tool is its ability to integrate automation into continuous testing workflows. This allows organizations to perform security validation during development and deployment cycles rather than relying solely on post-release assessments. By embedding security checks into development pipelines, vulnerabilities can be identified earlier in the software lifecycle, reducing the cost and complexity of remediation.
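To make the pipeline idea concrete, here is a minimal sketch of a build gate that fails when a scan reports findings at or above a chosen severity. The JSON shape (a `findings` list whose items carry `severity` and `title`) is a hypothetical export format, not Invicti's actual output schema; the parsing would need to be adapted to whatever the scanner really emits.

```python
import json
import sys

# Hypothetical severity labels, ordered from least to most severe.
LEVELS = ["info", "low", "medium", "high", "critical"]


def gate(report_json: str, fail_at: str = "high") -> int:
    """Return a process exit code: 1 if any finding is at or above
    `fail_at`, otherwise 0. CI systems treat non-zero as a failed step."""
    threshold = LEVELS.index(fail_at)
    findings = json.loads(report_json).get("findings", [])
    blocking = [
        f for f in findings
        if LEVELS.index(f["severity"].lower()) >= threshold
    ]
    for f in blocking:
        print(f"BLOCKING: {f['severity']} - {f['title']}", file=sys.stderr)
    return 1 if blocking else 0
```

In a pipeline step, the scanner's exported results would be read from a file and passed to `gate`, with `sys.exit(gate(...))` stopping the deployment when high-severity issues are present.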

The scanner identifies common web application vulnerabilities such as SQL injection, cross-site scripting, insecure authentication mechanisms, and misconfigured access controls. It also evaluates API endpoints, which are increasingly important in modern architectures where applications communicate through structured data exchanges rather than traditional web pages.

Another important feature is its ability to reduce false positives through validation-based scanning. Instead of simply reporting potential issues, it attempts to confirm exploitability, ensuring that security teams focus on real threats. This improves efficiency and reduces alert fatigue in large-scale environments where thousands of vulnerabilities may be detected across multiple applications.

John the Ripper and Password Security Assessment

Authentication remains one of the most critical security boundaries in any system, and weak passwords continue to be a major vulnerability exploited by attackers. John the Ripper is a password auditing tool that evaluates the strength of authentication systems by attempting to recover plaintext passwords from their stored hashes using various cracking techniques.

It operates in several modes, including wordlist (dictionary) attacks, incremental (brute-force) attacks, and rule-based transformations. Wordlist attacks rely on precompiled lists of commonly used passwords, while incremental mode systematically tests character combinations. Rule-based transformations generate variations of existing words, such as capitalization changes, character substitutions (for example, replacing "a" with "@"), and appended digits or symbols.
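The interplay of wordlist and rule-based modes can be illustrated with a small offline audit. This is a sketch of the technique, not John the Ripper's implementation or rule syntax: it assumes unsalted SHA-256 hashes for simplicity (real systems should store salted, deliberately slow hashes such as bcrypt or Argon2) and applies a handful of hypothetical mangling rules.

```python
import hashlib
from collections.abc import Iterable, Iterator

# Hypothetical leetspeak substitution table used by one of the rules.
LEET = str.maketrans({"a": "@", "e": "3", "o": "0", "s": "$"})


def mangle(word: str) -> Iterator[str]:
    """Yield simple rule-based variants of a dictionary word."""
    yield word                        # the word as-is
    yield word.capitalize()           # capitalization change
    yield word.translate(LEET)        # character substitutions
    for suffix in ("1", "123", "!"):  # appended digits and symbols
        yield word + suffix
        yield word.capitalize() + suffix


def audit(hashes: dict[str, str], wordlist: Iterable[str]) -> dict[str, str]:
    """Map each user to the recovered password when a mangled candidate's
    SHA-256 hex digest matches theirs; uncracked users are omitted."""
    by_digest: dict[str, list[str]] = {}
    for user, digest in hashes.items():
        by_digest.setdefault(digest, []).append(user)
    cracked = {}
    for word in wordlist:
        for candidate in mangle(word):
            digest = hashlib.sha256(candidate.encode()).hexdigest()
            # pop() so each digest is reported once, even if rules repeat.
            for user in by_digest.pop(digest, []):
                cracked[user] = candidate
    return cracked
```

Given the wordlist `["summer", "password"]`, accounts using `Summer123` or `p@$$w0rd` would be recovered, while a random string would not: exactly the predictable-password pattern such an audit is meant to expose.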

In real-world security testing, this tool is used to assess password policies and identify weak credential patterns. For example, if users consistently create predictable passwords based on personal information or common phrases, the password policy offers little real protection, because such passwords fall quickly to wordlist and rule-based attacks. This highlights the importance of enforcing strong password complexity requirements and implementing multi-factor authentication.

Beyond password cracking, the tool also provides insights into organizational security culture. Weak password usage often reflects insufficient user awareness or inadequate enforcement policies. By identifying these weaknesses, organizations can improve authentication strategies and reduce the risk of unauthorized access.

Wireshark for Network Traffic Visibility and Analysis

Network communication forms the backbone of all digital systems. Every interaction between devices, applications, and services generates data packets that travel across networks. Wireshark is a packet analysis tool that captures and inspects this traffic in real time, providing deep visibility into network behavior.

It decodes a wide range of protocols, including HTTP, DNS, TCP, UDP, and VoIP protocols such as SIP and RTP, allowing analysts to examine both metadata and payload data. This level of inspection is critical for identifying abnormal behavior, such as unauthorized data transmission, unencrypted communication, or suspicious external connections.
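To give a sense of what protocol decoding involves at the lowest level, the sketch below parses the fixed 20-byte TCP header from raw bytes using Python's `struct` module, following the field layout defined in RFC 9293. It illustrates the idea only; it is not how Wireshark's dissectors are implemented, and it ignores TCP options.

```python
import struct
from typing import NamedTuple

# TCP control flags, from the lowest bit upward.
FLAG_NAMES = ["FIN", "SYN", "RST", "PSH", "ACK", "URG"]


class TcpHeader(NamedTuple):
    src_port: int
    dst_port: int
    seq: int
    ack: int
    header_len: int   # in bytes (data offset x 4)
    flags: list[str]
    window: int


def parse_tcp_header(data: bytes) -> TcpHeader:
    """Decode the fixed portion of a TCP header (network byte order)."""
    if len(data) < 20:
        raise ValueError("a TCP header is at least 20 bytes")
    src, dst, seq, ack, off_flags, window, _checksum, _urgent = struct.unpack(
        "!HHIIHHHH", data[:20]
    )
    data_offset = off_flags >> 12   # top 4 bits: header length in 32-bit words
    flag_bits = off_flags & 0x3F    # low 6 bits: URG, ACK, PSH, RST, SYN, FIN
    flags = [name for i, name in enumerate(FLAG_NAMES) if flag_bits & (1 << i)]
    return TcpHeader(src, dst, seq, ack, data_offset * 4, flags, window)
```

A SYN/ACK segment (flag bits `0x12`) sent from port 443 would decode to `flags == ["SYN", "ACK"]`, which is the kind of per-field view a packet analyzer presents for every captured frame.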

In penetration testing, Wireshark is often used to monitor network activity during exploitation attempts. For example, if a system is compromised, packet analysis can reveal command-and-control traffic or data exfiltration attempts. It can also be used to detect misconfigured services that expose sensitive information over unencrypted channels.

Another important use case is troubleshooting network anomalies. By analyzing packet flow, security professionals can identify latency issues, routing problems, or misconfigured firewall rules. This makes Wireshark not only a security tool but also a diagnostic instrument for network performance analysis.

Kali Linux as a Dedicated Security Testing Platform

Security testing requires a controlled environment equipped with specialized tools and configurations. Kali Linux is a Debian-based Linux distribution designed specifically for penetration testing and security auditing. It provides a preconfigured environment that includes hundreds of security tools for scanning, exploitation, analysis, and forensic investigation.

One of its key advantages is tool integration. Instead of manually installing and configuring individual utilities, Kali Linux provides a centralized platform where tools are already optimized for security testing workflows. These include network scanners, password crackers, wireless analysis tools, and reverse engineering utilities.

Kali Linux also supports both virtual and physical deployments, making it suitable for different testing environments. Security professionals often use it in isolated virtual machines to ensure that testing activities do not interfere with production systems. This isolation is important because penetration testing often involves simulated attacks that could disrupt normal operations if not properly contained.

Another important feature is its flexibility. Users can customize toolsets based on specific testing requirements, allowing for tailored environments focused on web applications, network security, or forensic analysis. This adaptability makes it a foundational platform in cybersecurity education and professional security operations.

Burp Suite for Intercepting and Manipulating Web Traffic

Web application security testing requires the ability to observe and manipulate data exchanges between clients and servers. Burp Suite functions as an intercepting proxy that sits between a browser and a target application, capturing all HTTP and HTTPS traffic.

This allows security testers to analyze request structures, modify input parameters, and replay interactions to test application behavior. One of its most powerful features is automated crawling, which maps application structures by discovering endpoints, hidden parameters, and dynamically generated content.
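The modify-and-replay workflow can be approximated outside Burp by rewriting one parameter of a captured request. The sketch below uses only `urllib.parse` to tamper with a query string; the URL and the `role` parameter are hypothetical, and sending the modified request (which Burp's Repeater handles) is deliberately left out.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tamper(url: str, param: str, new_value: str) -> str:
    """Return `url` with every occurrence of `param` set to `new_value`
    (or the parameter appended if absent), keeping the other parameters
    in their original order."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    out = [(k, new_value if k == param else v) for k, v in pairs]
    if not any(k == param for k, _ in pairs):
        out.append((param, new_value))
    return urlunsplit(parts._replace(query=urlencode(out)))
```

If a server grants elevated behavior simply because a request arrives with `role=admin` instead of `role=user`, that is the kind of access-control flaw this style of manual manipulation is designed to reveal.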

Burp Suite also includes scanning capabilities that identify common vulnerabilities such as injection flaws, authentication weaknesses, and session management issues. These scans simulate attacker behavior by injecting malicious payloads into input fields and analyzing server responses.

In addition to automation, the tool supports manual testing workflows that allow precise control over request manipulation. This is essential for testing complex business logic vulnerabilities that cannot be detected through automated scanning alone.

Social Engineering Toolkit and Human-Centric Security Risks

While technical vulnerabilities are critical, human behavior remains one of the most exploited attack vectors in cybersecurity. Social engineering attacks rely on psychological manipulation rather than system weaknesses. The Social Engineering Toolkit is designed to simulate these types of attacks in controlled environments.

It enables the creation of phishing scenarios, credential harvesting pages, and deceptive communication flows that mimic real-world attack strategies. These simulations help organizations evaluate how users respond to suspicious emails, fake login pages, and impersonation attempts.

By analyzing user behavior during these simulations, organizations can identify gaps in awareness and training. This helps improve security education programs and reduce susceptibility to manipulation-based attacks. It also highlights the importance of combining technical security controls with human-focused defenses.

PowerShell-Based Security Analysis for Windows Infrastructure

Windows environments are widely deployed across enterprise networks, making them a primary focus for penetration testing. PowerShell provides a scripting interface that allows deep interaction with system components, network configurations, and security policies.

Security professionals use PowerShell scripts to enumerate users, analyze permissions, inspect system configurations, and identify active services. This enables large-scale auditing across multiple systems simultaneously.

PowerShell is also used to simulate attacker techniques such as lateral movement and privilege escalation. These simulations help organizations understand how attackers could move within Windows environments if initial access is gained.

IDA for Binary-Level Security Analysis

IDA is a reverse engineering tool used to analyze compiled software at the binary level. It disassembles machine code into annotated assembly and control-flow graphs, allowing analysts to understand program behavior without access to source code.

This is particularly important in malware analysis and vulnerability research. Security professionals use IDA to identify hidden functions, analyze control flow, and detect logic flaws within compiled applications.

Binary analysis provides insights into vulnerabilities that cannot be detected through surface-level testing, such as memory corruption issues and insecure function calls.

The Evolution of Penetration Testing Beyond Basic Vulnerability Scanning

As enterprise systems grow more distributed and interconnected, penetration testing has shifted from simple vulnerability detection toward full-scale behavioral analysis of systems under simulated attack conditions. Modern infrastructure is no longer limited to a single server or application; instead, it spans cloud services, APIs, microservices, container clusters, remote endpoints, and third-party integrations. This complexity creates layered attack surfaces where weaknesses can exist at multiple points simultaneously, often interacting in unexpected ways.

Traditional scanning tools alone are no longer sufficient to understand these environments. Instead, penetration testing now requires a combination of automated analysis, manual exploitation techniques, traffic inspection, and behavioral simulation. The goal is not only to identify vulnerabilities but to understand how they can be chained together to form a complete attack path. This includes entry point discovery, privilege escalation opportunities, lateral movement possibilities, and data extraction risks. In this context, advanced penetration testing tools play a critical role in bridging the gap between theoretical vulnerabilities and real-world exploit scenarios.

Burp Suite for Deep Web Application Security Analysis

Web applications are one of the most frequently targeted attack surfaces in modern cybersecurity due to their direct exposure to users and external networks. Burp Suite is a comprehensive web security testing platform designed to analyze, intercept, and manipulate HTTP and HTTPS traffic between clients and servers. It functions as an intercepting proxy, allowing security testers to observe all data exchanges in real time.

One of the most important capabilities of Burp Suite is its ability to map web applications automatically. This process, often referred to as crawling, identifies endpoints, parameters, input fields, and hidden resources that may not be visible through standard navigation. By building a complete structural map of an application, testers gain visibility into its full attack surface, including overlooked or deprecated components.

Burp Suite also includes active scanning capabilities that simulate malicious inputs to identify vulnerabilities such as SQL injection, cross-site scripting, insecure deserialization, and authentication bypass flaws. These scans analyze how the application responds to abnormal or unexpected input conditions. If the application processes input without proper validation or sanitization, vulnerabilities are flagged for further investigation.

Beyond automated scanning, Burp Suite provides extensive manual testing functionality. Security testers can intercept requests, modify headers, alter parameters, and replay traffic to evaluate how the system responds under manipulated conditions. This is particularly useful for identifying business logic vulnerabilities, where the issue is not a technical flaw but a flaw in application workflow design.

Burp Suite also supports session analysis, allowing testers to evaluate how authentication tokens are generated, stored, and validated. Weak session management can lead to session hijacking, unauthorized access, or privilege escalation. By analyzing these mechanisms, security professionals can assess the robustness of authentication systems and identify potential weaknesses in user session handling.

Social Engineering Toolkit for Behavioral Attack Simulation

Technical defenses alone are not sufficient to secure modern systems because human behavior remains a critical attack vector. Social engineering exploits psychological patterns such as trust, urgency, authority, and curiosity to manipulate users into performing actions that compromise security. The Social Engineering Toolkit is designed to simulate these types of attacks in controlled environments.

This toolkit enables the creation of phishing campaigns that replicate realistic communication patterns used in malicious email attacks. These simulations often include cloned login pages, fake password reset portals, and deceptive messaging structures designed to trick users into entering sensitive credentials. The goal is not to cause harm but to evaluate user awareness and response behavior.

One of the key insights gained from social engineering simulations is the identification of behavioral vulnerabilities. Even in environments with strong technical defenses, users may still fall victim to deception-based attacks. This highlights the importance of security awareness training as part of a comprehensive defense strategy.

The toolkit also supports multi-stage attack simulations where initial contact leads to secondary exploitation steps. For example, a user may be directed to a fake login page, and once credentials are entered, those credentials may be used to access internal systems. This demonstrates how attackers combine psychological manipulation with technical exploitation to bypass security controls.

In addition to phishing simulations, social engineering testing can include impersonation scenarios and pretext-based interactions. These methods evaluate how easily unauthorized individuals can gain access to restricted environments by exploiting human trust rather than technical vulnerabilities.

PowerShell for Windows System Security and Internal Assessment

Windows-based environments dominate enterprise infrastructure, making them a primary focus for penetration testing activities. PowerShell provides a powerful scripting interface that enables deep interaction with system configurations, network settings, and security policies.

Security professionals use PowerShell to perform system enumeration, including user account analysis, group membership mapping, service inspection, and permission auditing. This allows testers to identify misconfigurations that may not be visible through graphical interfaces. For example, excessive administrative privileges or improperly configured access controls can be detected through scripted analysis.

PowerShell is also used for remote system management, enabling security professionals to assess multiple machines within a network from a centralized location. This is particularly useful in large enterprise environments where manual inspection would be inefficient.

From an attack simulation perspective, PowerShell is frequently used to replicate attacker behavior within Windows environments. Attackers often use scripting tools to execute commands, escalate privileges, and move laterally across systems. By simulating these actions, penetration testers can evaluate how well detection systems and endpoint protections respond to suspicious activity.

PowerShell also plays a role in identifying persistence mechanisms. Attackers often attempt to maintain access to compromised systems by modifying startup scripts, scheduled tasks, or registry entries. By analyzing these areas, security professionals can detect hidden persistence strategies and remove unauthorized access points.
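One straightforward way to surface such changes is to compare the current autostart entries against a known-good baseline. The sketch below assumes the entries have already been exported (for example, task names collected with PowerShell's `Get-ScheduledTask`) into a hypothetical category-to-set mapping, and simply reports what is new. Any new entry is a lead to investigate, not proof of compromise.

```python
# Hypothetical snapshot format: category name -> set of entry identifiers,
# e.g. {"scheduled_tasks": {...}, "run_keys": {...}, "startup_folder": {...}}.
Snapshot = dict[str, set[str]]


def diff_autostart(baseline: Snapshot, current: Snapshot) -> Snapshot:
    """Return entries present now but absent from the baseline, grouped by
    category. Categories with no new entries are omitted."""
    added: Snapshot = {}
    for category, entries in current.items():
        new = entries - baseline.get(category, set())
        if new:
            added[category] = new
    return added
```

Diffing rather than inspecting each entry by hand keeps the review focused on what changed since the system was last known to be clean.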

IDA for Reverse Engineering and Binary-Level Security Analysis

While application and network testing focus on external behavior, binary analysis provides insight into internal software logic. IDA is a reverse engineering tool used to analyze compiled executables at the machine code level. It disassembles binary instructions into a structured representation, such as annotated assembly and function-level control-flow graphs, that allows analysts to understand program behavior without access to source code.

This capability is essential in malware analysis, exploit research, and proprietary software auditing. Security professionals use IDA to identify hidden functions, analyze control flow structures, and detect logic flaws embedded within compiled applications.

Binary analysis is particularly important for identifying vulnerabilities that are not visible at higher abstraction levels. These include memory corruption issues, buffer overflows, and insecure pointer handling. Such vulnerabilities often require deep inspection of program execution flow to identify potential exploitation paths.

IDA is primarily a static analysis tool, allowing researchers to examine software without executing it. This reduces risk when analyzing potentially malicious binaries. In advanced scenarios, its integrated debugger can also be used for dynamic analysis, observing runtime behavior and system interactions.

By reconstructing program logic, IDA helps security professionals understand how software behaves under different conditions. This is particularly useful when analyzing malware, where obfuscation techniques are used to hide malicious functionality.

Burp Suite Intruder and Automated Input Testing

Within web application testing, automated input manipulation plays a significant role in identifying vulnerabilities. Burp Suite Intruder is a component designed to systematically test input fields by injecting a wide range of payloads.

This tool is particularly useful for identifying injection-based vulnerabilities such as SQL injection, command injection, and cross-site scripting. By automating payload delivery, it allows testers to evaluate how applications respond to unexpected or malicious input at scale.
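Intruder's "Sniper" attack type places each payload into one marked position at a time while the other positions keep their original values. The sketch below reproduces that enumeration for a request template using `§` markers, the delimiter Burp displays around payload positions; it only generates request strings and sends nothing. The template and payloads are hypothetical.

```python
import re
from collections.abc import Iterator

MARKER = re.compile(r"§([^§]*)§")  # matches §original value§


def sniper(template: str, payloads: list[str]) -> Iterator[tuple[int, str, str]]:
    """Yield (position_index, payload, request) for every payload in every
    marked position; all other positions revert to their original values."""
    positions = list(MARKER.finditer(template))
    for idx in range(len(positions)):
        for payload in payloads:
            pieces, last = [], 0
            for i, m in enumerate(positions):
                pieces.append(template[last:m.start()])      # text before marker
                pieces.append(payload if i == idx else m.group(1))
                last = m.end()
            pieces.append(template[last:])                   # trailing text
            yield idx, payload, "".join(pieces)
```

With two marked positions and two payloads this yields four requests, which is why payload counts should be chosen with the target's rate limits and the engagement's rules in mind.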

Intruder can also be used to test authentication mechanisms by attempting multiple credential combinations. This helps identify weak password policies or insufficient rate-limiting controls that could allow brute-force attacks.

The results generated by automated input testing provide valuable insight into how applications handle edge-case scenarios. Even minor inconsistencies in input validation can lead to significant security risks if exploited in a real-world environment.

Integration of Web and System-Level Security Testing

Modern penetration testing requires integration across multiple layers of infrastructure. Web application tools, system analysis scripts, and binary inspection utilities all contribute to a unified understanding of system security.

For example, a vulnerability identified in a web application may lead to access to backend systems where additional weaknesses exist. Similarly, a misconfigured Windows service identified through PowerShell analysis may expose administrative interfaces that can be exploited through web-based attack vectors.

This interconnected approach reflects real-world attacker behavior, where multiple small vulnerabilities are combined into a complete compromise path. Penetration testing tools are therefore used not in isolation but as part of a coordinated workflow that evaluates the entire attack surface.

Advanced Exploitation Simulation and Attack Path Modeling

One of the most important aspects of modern penetration testing is the ability to simulate full attack chains. This involves combining reconnaissance, exploitation, privilege escalation, and lateral movement into a single structured scenario.

In a typical attack simulation, network scanning tools identify exposed services. Web application tools detect input vulnerabilities. System-level scripts uncover misconfigurations. Binary analysis reveals deeper logic flaws. Social engineering tools simulate human compromise.

When combined, these steps create a realistic model of how an attacker could progress through an environment. This allows organizations to understand not just individual vulnerabilities but the overall impact of chained exploitation.

Understanding Full Attack Surface Penetration Testing in Modern Infrastructure

Modern penetration testing is no longer limited to identifying isolated vulnerabilities within a single system. Instead, it focuses on evaluating the entire attack surface across interconnected environments. This includes networks, web applications, databases, content management systems, authentication layers, APIs, and internal services. Attackers in real-world scenarios rarely rely on a single weakness. Instead, they combine multiple small misconfigurations and vulnerabilities into a complete exploitation chain that leads to full system compromise.

As organizations adopt hybrid cloud environments, microservices architectures, and distributed applications, the complexity of their infrastructure increases significantly. Each new service introduces additional endpoints, communication paths, and configuration dependencies. These interconnected components create opportunities for attackers to pivot from one system to another once initial access is gained. Penetration testing tools in this phase are designed to map these relationships and simulate how attackers move across systems in a real-world compromise scenario.

The objective of full-spectrum penetration testing is not only to identify vulnerabilities but to understand how those vulnerabilities interact. A low-severity issue in one system may become critical when combined with another weakness elsewhere. This chain-based perspective is essential for evaluating real-world risk.

Nmap for Network Discovery and Service Enumeration

Network visibility is the foundation of any penetration testing engagement. Without understanding what systems exist within an environment, it is impossible to evaluate security posture accurately. Nmap is a powerful network discovery tool used to identify active hosts, open ports, running services, and system configurations across a network.

It works by sending specially crafted packets to target systems and analyzing responses to determine network behavior. Through this process, security professionals can build a comprehensive map of infrastructure assets, including servers, endpoints, and network devices. This mapping process is critical because many vulnerabilities exist simply due to exposed services that were not intended to be publicly accessible.
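At its simplest, port discovery means attempting connections and classifying the outcome. The sketch below is a plain TCP connect check, roughly the idea behind Nmap's TCP connect scan (`-sT`); it does not craft raw packets, detect service versions, or distinguish closed from filtered ports the way Nmap does.

```python
import socket


def connect_scan(host: str, ports: list[int], timeout: float = 0.5) -> dict[int, str]:
    """Classify each port as 'open' (TCP handshake completed) or
    'closed/filtered' (refused, timed out, or otherwise unreachable)."""
    results = {}
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            try:
                # connect_ex returns 0 on success instead of raising.
                status = sock.connect_ex((host, port))
            except OSError:
                status = -1  # e.g. name resolution failure or timeout
            results[port] = "open" if status == 0 else "closed/filtered"
    return results
```

Completing a full handshake makes this approach easy for the target to log, which is one reason Nmap prefers SYN scanning when it has the privileges to send raw packets.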

Nmap also provides service version detection, which helps identify outdated software running on exposed ports. Outdated services often contain known vulnerabilities that can be exploited if not patched. By identifying these services early, penetration testers can highlight high-risk exposures that require immediate remediation.

Another important feature of Nmap is the Nmap Scripting Engine (NSE), which runs Lua scripts to automate vulnerability detection and advanced scanning logic. These scripts can detect insecure configurations, weak authentication protocols, and potential exploit conditions. This makes Nmap not just a discovery tool but also an early-stage vulnerability assessment platform.

In large-scale environments, Nmap is often used to scan entire IP ranges, providing a macro-level view of network structure. This helps identify shadow IT systems, unauthorized devices, and misconfigured services that may not be documented in official inventories.

SQLMap for Database Injection and Data Exposure Analysis

Databases are one of the most critical components of any digital infrastructure because they store sensitive information such as user credentials, financial records, and operational data. SQLMap is a specialized penetration testing tool designed to detect and exploit SQL injection vulnerabilities in web applications.

SQL injection occurs when user input is improperly sanitized before being included in database queries. This allows attackers to manipulate query logic and interact directly with backend databases. SQLMap automates this process by testing input parameters for injection points and attempting controlled exploitation.
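The root cause is easiest to see side by side. The sketch below uses Python's built-in `sqlite3` module with a hypothetical `users` table: the first lookup splices input into the SQL text, so a payload such as `x' OR '1'='1` changes the query logic; the second passes input as a bound parameter, so the same payload is treated as a literal string. This is the class of flaw SQLMap probes for, not a reproduction of SQLMap itself.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def lookup_vulnerable(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # UNSAFE: user input becomes part of the SQL statement itself.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def lookup_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # SAFE: the driver binds `name` as data, never as SQL syntax.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()
```

With the payload above, the vulnerable lookup returns every row in the table while the parameterized lookup returns none, which is why parameterized queries are the standard remediation for this finding.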

Once a vulnerability is identified, SQLMap can extract database schemas, retrieve table contents, and analyze database structure. This provides insight into what data could be exposed if the vulnerability were exploited in a real-world attack. In some cases, it can also test for privilege escalation within the database environment, determining whether administrative access is possible.

One of the most important aspects of SQLMap is its ability to perform blind injection testing. In many modern systems, error messages are suppressed to prevent information leakage. However, SQLMap can infer database behavior based on response timing and content differences. This allows it to detect vulnerabilities even in hardened environments.

Database security testing is critical because successful SQL injection attacks can bypass authentication systems entirely, expose sensitive records, and allow attackers to modify or delete data. In some cases, database compromise can lead to full system takeover depending on stored credentials or application logic dependencies.

WPScan for Content Management System Vulnerability Assessment

Content management systems are widely used for building and maintaining websites due to their flexibility and ease of use. However, their widespread adoption also makes them attractive targets for attackers. WPScan is a penetration testing tool designed to evaluate security weaknesses in WordPress, the most widely used of these systems.

It focuses on identifying outdated plugins, insecure themes, weak authentication mechanisms, and publicly exposed configuration files. One of the most significant risks in content management systems is the reliance on third-party extensions. These components are often developed by independent contributors and may not follow strict security standards.

WPScan compares installed components against known vulnerability databases to identify potential risks. This includes outdated plugins with known exploits, insecure administrative configurations, and exposed directories that should not be publicly accessible.
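The comparison step amounts to checking each installed component's version against the versions an advisory marks as affected. The sketch below assumes a hypothetical advisory list in which each entry records the first fixed version; the plugin names and versions are invented, and WPScan's own database and matching logic are considerably richer.

```python
from typing import NamedTuple


class Advisory(NamedTuple):
    component: str
    fixed_in: str    # first version that is NOT affected
    summary: str


def parse_version(v: str) -> tuple[int, ...]:
    """Turn '5.10.2' into (5, 10, 2) so versions compare numerically
    ('5.10' > '5.9'). Trailing zeros are dropped so '2.1' == '2.1.0'.
    Assumes purely numeric, dot-separated versions."""
    parts = [int(p) for p in v.split(".")]
    while len(parts) > 1 and parts[-1] == 0:
        parts.pop()
    return tuple(parts)


def match(installed: dict[str, str], advisories: list[Advisory]) -> list[str]:
    """Return a message for every installed component older than its fix."""
    hits = []
    for adv in advisories:
        version = installed.get(adv.component)
        if version is not None and parse_version(version) < parse_version(adv.fixed_in):
            hits.append(f"{adv.component} {version}: {adv.summary} (fixed in {adv.fixed_in})")
    return hits
```

Comparing versions as integer tuples rather than strings avoids the classic mistake of treating `5.9` as newer than `5.10`.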

Another important function of WPScan is credential testing. It can run wordlist-based password attacks against the login interface to detect weak administrative credentials that could be easily compromised. Weak authentication remains one of the most common entry points for attackers targeting content management systems.

Content platforms also frequently suffer from misconfigured file permissions and exposed backup files. WPScan helps identify these issues by scanning for publicly accessible resources that may contain sensitive data. This makes it an essential tool for securing high-traffic websites and digital platforms.

SkipFish for Automated Web Application Reconnaissance

Web reconnaissance is the process of systematically analyzing web applications to identify potential vulnerabilities and structural weaknesses. SkipFish is an automated tool designed to perform this function by crawling web applications and mapping their structure.

It begins by exploring available endpoints, input fields, and linked resources within a web application. This allows it to construct a detailed map of the application’s structure, including hidden or dynamically generated components. Many vulnerabilities exist in areas that are not directly accessible through standard navigation, making automated crawling, supplemented by dictionary-based guessing of unlinked paths, essential for broad coverage.
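The link-following part of crawling is essentially a breadth-first traversal. The sketch below uses the standard library's `html.parser` and, to stay self-contained, a hypothetical `fetch` callable (here backed by an in-memory dictionary) in place of real HTTP requests; a real scanner does considerably more, including probing for paths that are never linked.

```python
from collections import deque
from collections.abc import Callable
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin, urlsplit


class LinkExtractor(HTMLParser):
    """Collect href/src/action attribute values from an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src", "action") and value:
                self.links.append(value)


def crawl(start: str, fetch: Callable[[str], Optional[str]]) -> set[str]:
    """Return every same-host URL discovered from `start`.
    `fetch(url)` returns HTML text, or None if the page does not exist."""
    host = urlsplit(start).netloc
    seen, queue = {start}, deque([start])
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        parser = LinkExtractor()
        parser.feed(html)
        for link in parser.links:
            absolute = urljoin(url, link).split("#")[0]  # drop fragments
            if urlsplit(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return seen
```

Restricting the crawl to the starting host keeps the traversal inside the authorized scope, and the resulting URL set is what later checks iterate over.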

Once the structure is mapped, SkipFish evaluates each component for potential security risks. It identifies misconfigurations, exposed files, insecure input handling, and outdated components. The results are categorized based on severity, allowing security professionals to prioritize remediation efforts effectively.

SkipFish is particularly useful in large-scale environments where manual testing would be inefficient. It reduces the time required to perform initial reconnaissance and provides a structured overview of the application’s security posture.

Integrating Network, Web, and Database Security Testing

Modern penetration testing requires integration across multiple layers of infrastructure. Network scanning tools identify exposed services, web application tools detect input vulnerabilities, and database tools expose backend risks. When combined, these tools provide a far more complete view of potential attack paths than any single tool can offer.

For example, a misconfigured web application may expose an endpoint that allows SQL injection. This vulnerability can then be used to access the backend database, which may contain credentials for internal systems. Those credentials may then be used to gain access to network services identified through scanning tools.
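The first link in that chain can be made concrete with Python's built-in `sqlite3` module. The sketch below is a deliberately vulnerable toy login check in which the table, user, and password are all hypothetical. It shows how concatenating input into SQL text lets a crafted value bypass the password condition, and how a parameterized query prevents this. It illustrates the vulnerability class that SQLMap tests for, not how SQLMap works internally.

```python
import sqlite3


def setup() -> sqlite3.Connection:
    """Create a throwaway in-memory database with one hypothetical user."""
    conn = sqlite3.connect(":memory:")
    # Plaintext password only to keep the toy short; real systems store salted hashes.
    conn.execute("CREATE TABLE users (name TEXT, password TEXT)")
    conn.execute("INSERT INTO users VALUES ('admin', 's3cret')")
    return conn


def login_unsafe(conn: sqlite3.Connection, name: str, password: str) -> bool:
    # VULNERABLE: user input is pasted directly into the SQL text.
    query = f"SELECT 1 FROM users WHERE name = '{name}' AND password = '{password}'"
    return conn.execute(query).fetchone() is not None


def login_safe(conn: sqlite3.Connection, name: str, password: str) -> bool:
    # Parameterized: values are passed separately and never parsed as SQL.
    query = "SELECT 1 FROM users WHERE name = ? AND password = ?"
    return conn.execute(query, (name, password)).fetchone() is not None


if __name__ == "__main__":
    conn = setup()
    payload = "' OR '1'='1"
    print("unsafe:", login_unsafe(conn, "admin", payload))
    print("safe:  ", login_safe(conn, "admin", payload))
```

With the payload, the unsafe query's WHERE clause becomes `(name = 'admin' AND password = '') OR '1'='1'`. This is true for every row, so the password check is bypassed.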

This chain-based approach reflects real-world attacker behavior. Attackers rarely rely on a single vulnerability. Instead, they combine multiple weaknesses to escalate privileges and expand access. Penetration testing tools are designed to simulate these behaviors in controlled environments.
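One way to reason about chaining is to record each finding as an edge in a directed graph, where an edge means "access to A yields access to B". A search for a path from an external starting point to a sensitive asset then reveals the chain. The node names below are hypothetical and mirror the SQL injection example above. In a real engagement, the edges would come from actual findings.

```python
from collections import deque

# Hypothetical findings expressed as "this foothold grants that one".
EDGES = {
    "internet": ["web app (SQL injection)"],
    "web app (SQL injection)": ["database"],
    "database": ["stored service credentials"],
    "stored service credentials": ["internal SSH service"],
    "internal SSH service": ["file server"],
}


def attack_path(start, goal, edges):
    """Breadth-first search for the shortest chain of steps from start to goal.
    Returns the nodes along the path, or None if goal is unreachable."""
    previous = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:  # walk back to the start
                path.append(node)
                node = previous[node]
            return path[::-1]
        for nxt in edges.get(node, []):
            if nxt not in previous:
                previous[nxt] = node
                queue.append(nxt)
    return None


if __name__ == "__main__":
    print(" -> ".join(attack_path("internet", "file server", EDGES)))
```

Removing any one edge in this graph, for example by fixing the injection flaw, breaks the whole chain. That is one way to reason about which remediation buys the most.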

By integrating multiple tools into a single workflow, security professionals can evaluate not just individual vulnerabilities but the overall risk of system compromise.

Attack Chain Construction and Realistic Exploitation Modeling

One of the most important aspects of modern penetration testing is the ability to construct realistic attack chains. This involves simulating how an attacker would move from initial access to full system compromise.

The process typically begins with reconnaissance using network scanning tools. Once potential targets are identified, web application testing tools are used to identify entry points. Database exploitation tools then evaluate backend data access risks. Content management tools assess platform-level vulnerabilities, while system analysis tools evaluate internal configurations.

After initial access is achieved, attackers often attempt lateral movement within the network. This involves using compromised credentials or exploiting internal services to access additional systems. Penetration testers simulate these behaviors to evaluate how well internal segmentation and access controls are enforced.
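Segmentation can be evaluated by asking which zones are reachable from a compromised host, either directly or through intermediate hops, and comparing that set with policy. The zone names, allowed flows, and policy below are hypothetical. In practice they might be derived from firewall rules and from connectivity observed during testing.

```python
# Hypothetical allowed network flows between zones (source -> destinations).
ALLOWED_FLOWS = {
    "workstations": {"file-servers", "intranet"},
    "intranet": {"app-servers"},
    "app-servers": {"database"},
    "file-servers": set(),
    "database": set(),
}

# Hypothetical policy: zones that must NOT be reachable from workstations.
FORBIDDEN_FROM_WORKSTATIONS = {"database", "payment"}


def reachable(start, flows):
    """All zones reachable from `start` by following allowed flows
    transitively (depth-first search), excluding `start` itself."""
    seen = set()
    stack = [start]
    while stack:
        zone = stack.pop()
        for nxt in flows.get(zone, set()):
            if nxt not in seen and nxt != start:
                seen.add(nxt)
                stack.append(nxt)
    return seen


if __name__ == "__main__":
    violations = reachable("workstations", ALLOWED_FLOWS) & FORBIDDEN_FROM_WORKSTATIONS
    print("policy violations:", sorted(violations))
```

No single flow here lets workstations reach the database. The transitive path through the intranet and application servers does, however, and this is the kind of gap that lateral-movement testing is meant to surface.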

Attack chain modeling provides a realistic understanding of system resilience. It shows how seemingly minor vulnerabilities can escalate into full compromise when combined with other weaknesses.

Automation in Large-Scale Penetration Testing Environments

As infrastructure grows more complex, manual testing alone is no longer sufficient. Automation plays a critical role in scaling penetration testing across large environments. Tools like Nmap, SQLMap, and SkipFish support automated scanning and analysis, allowing continuous evaluation of security posture.

Automation enables regular scanning of networks, applications, and databases without requiring constant manual intervention. This is particularly important in environments where systems are frequently updated or redeployed.
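Scheduled scans become more useful when each run is compared with the previous one. The sketch below uses the standard library to parse Nmap's XML output, as produced with its -oX option. It then reports services that appeared or disappeared between two runs. The two embedded documents are trimmed, hypothetical samples. Real Nmap XML carries many more elements and attributes, which this parser simply ignores.

```python
import xml.etree.ElementTree as ET


def open_services(nmap_xml: str) -> set[tuple[str, str, int, str]]:
    """Return (address, protocol, port, service) for every open port in Nmap XML."""
    root = ET.fromstring(nmap_xml)
    found = set()
    for host in root.iter("host"):
        # A host can list several <address> elements (e.g. IPv4 and MAC); keep the IP.
        ips = [a.get("addr") for a in host.findall("address")
               if a.get("addrtype") in ("ipv4", "ipv6")]
        if not ips:
            continue
        for port in host.iter("port"):
            state = port.find("state")
            if state is None or state.get("state") != "open":
                continue  # skip closed and filtered ports
            service = port.find("service")
            name = service.get("name", "unknown") if service is not None else "unknown"
            found.add((ips[0], port.get("protocol"), int(port.get("portid")), name))
    return found


# Trimmed, hypothetical samples; real Nmap XML contains many more elements.
BASELINE = """<nmaprun><host><address addr="10.0.0.5" addrtype="ipv4"/><ports>
<port protocol="tcp" portid="22"><state state="open"/><service name="ssh"/></port>
<port protocol="tcp" portid="443"><state state="open"/><service name="https"/></port>
</ports></host></nmaprun>"""

LATEST = """<nmaprun><host><address addr="10.0.0.5" addrtype="ipv4"/><ports>
<port protocol="tcp" portid="22"><state state="open"/><service name="ssh"/></port>
<port protocol="tcp" portid="443"><state state="open"/><service name="https"/></port>
<port protocol="tcp" portid="3306"><state state="open"/><service name="mysql"/></port>
<port protocol="tcp" portid="8080"><state state="filtered"/></port>
</ports></host></nmaprun>"""

if __name__ == "__main__":
    before, after = open_services(BASELINE), open_services(LATEST)
    for item in sorted(after - before):
        print("newly exposed:", item)
    for item in sorted(before - after):
        print("no longer exposed:", item)
```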

However, automation has limitations. It can identify vulnerabilities but cannot fully understand business logic or complex system interactions. Human expertise is required to interpret results and design realistic attack scenarios.

The combination of automation and manual analysis provides the most effective penetration testing approach.

Continuous Security Validation and Modern Development Integration

Modern development practices rely heavily on continuous integration and deployment pipelines. This means systems are updated frequently, sometimes multiple times per day. In such environments, security testing must also be continuous.

Penetration testing tools are increasingly integrated into development pipelines to evaluate security during build and deployment phases. This ensures that vulnerabilities are detected early in the lifecycle rather than after deployment.
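A common integration pattern is a pipeline step that reads scan findings and fails the build when any finding reaches a chosen severity. The sketch below assumes a hypothetical report format: a JSON list of objects with "id" and "severity" fields. The threshold is a policy choice rather than a fixed rule. It relies only on the widespread CI convention that a non-zero exit code marks a stage as failed.

```python
import json
import sys

SEVERITY_RANK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


def gate(findings: list[dict], fail_at: str = "high") -> int:
    """Return a process exit code: 1 if any finding meets or exceeds
    `fail_at`, else 0. Blocking findings are listed on stderr."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [f for f in findings if SEVERITY_RANK[f["severity"]] >= threshold]
    for f in blocking:
        print(f"BLOCKING: {f['id']} ({f['severity']})", file=sys.stderr)
    return 1 if blocking else 0


if __name__ == "__main__":
    # Hypothetical report; in a pipeline it would be read from the scanner's
    # output file, and the script would end with sys.exit(gate(report)).
    report = json.loads('[{"id": "XSS-1", "severity": "medium"}, {"id": "SQLI-2", "severity": "high"}]')
    print("exit code:", gate(report))
```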

Continuous validation helps reduce the window of exposure between vulnerability introduction and detection. It also ensures that security is treated as an ongoing process rather than a one-time assessment.

Final Technical Perspective on Full-Spectrum Penetration Testing Tools

The tools and methodologies discussed in this section span the main layers of a full-scope penetration test. They cover network discovery, database exploitation, web application analysis, content management security, and automated reconnaissance.

Together, they provide a complete framework for evaluating modern digital infrastructure. By simulating real-world attack chains across multiple system layers, penetration testing reveals how vulnerabilities interact and escalate.

This full-spectrum approach is essential in modern cybersecurity, where systems are interconnected, dynamic, and constantly evolving under continuous deployment and cloud-based architectures.

Conclusion

Penetration testing, as a discipline, has evolved into a structured and essential component of modern cybersecurity strategy rather than a periodic technical exercise. Across today’s highly distributed digital environments, security cannot be understood through isolated system checks or static vulnerability reports. Instead, it must be evaluated through continuous, layered, and behavior-driven analysis. The tools discussed throughout this article collectively demonstrate how security professionals simulate real-world adversarial behavior to identify weaknesses across networks, applications, databases, systems, and human interactions.

At its core, penetration testing is about understanding how systems fail under controlled attack conditions. This includes not only identifying vulnerabilities but also evaluating how those vulnerabilities interact when combined. Modern attackers rarely rely on a single exploit. Instead, they build attack chains that begin with reconnaissance, progress through exploitation, and end with data extraction, system disruption, or persistent access. Penetration testing tools are designed to replicate this exact mindset in a controlled and ethical environment.

Network-level tools such as Nmap establish the foundation of this process by revealing what systems exist, how they communicate, and which services are exposed. Without this visibility, security analysis would be incomplete. Network discovery is essentially the mapping of an organization’s digital footprint. It exposes not only intended infrastructure but also forgotten assets, misconfigured services, and shadow systems that often become entry points for attackers. This foundational layer ensures that security professionals understand the full scope of exposure before deeper analysis begins.
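Finding forgotten or shadow assets often comes down to comparing the hosts that discovery found with the hosts listed in the asset inventory. The minimal sketch below makes that comparison. All addresses are hypothetical, and the live-host list would come from a discovery scan such as one run with Nmap.

```python
import ipaddress

# Hypothetical asset inventory: what the organization believes it runs.
INVENTORY = {"10.0.0.5", "10.0.0.6", "10.0.0.10"}

# Hypothetical hosts that responded during a discovery scan.
DISCOVERED = {"10.0.0.5", "10.0.0.6", "10.0.0.23", "10.0.0.77"}


def reconcile(inventory: set[str], discovered: set[str]) -> dict[str, list[str]]:
    """Compare inventory with scan results; addresses are sorted numerically."""
    key = ipaddress.ip_address
    return {
        # Live but unknown: candidates for forgotten or shadow systems.
        "unknown_live_hosts": sorted(discovered - inventory, key=key),
        # Listed but silent: offline, filtered, or possibly decommissioned.
        "inventoried_not_seen": sorted(inventory - discovered, key=key),
    }


if __name__ == "__main__":
    for category, hosts in reconcile(INVENTORY, DISCOVERED).items():
        print(category, hosts)
```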

Building on this foundation, web application testing tools such as Burp Suite and SkipFish provide insight into how external-facing systems handle user interaction and data processing. Web applications represent one of the most critical attack surfaces because they directly interface with users and internal systems. Even minor flaws in input validation, session handling, or access control logic can lead to significant compromise. Burp Suite lets testers intercept and manipulate traffic directly, while SkipFish automates crawling and probing at scale. Together they reveal how applications behave under unexpected or malicious input conditions.

Database-focused tools such as SQLMap extend this analysis into backend systems where sensitive organizational data is stored. Databases are often the ultimate target in cyberattacks because they contain structured, high-value information such as credentials, financial records, and operational data. SQL injection vulnerabilities, when present, can bypass application logic entirely and allow direct interaction with backend systems. By simulating these attacks, penetration testing tools demonstrate how small input handling errors can escalate into full data exposure scenarios.

Content management system tools such as WPScan highlight another critical dimension of modern infrastructure: dependency-driven security risk. Many platforms rely on third-party plugins, themes, and extensions to extend functionality. While these components provide flexibility, they also introduce variability in security quality. Outdated or poorly maintained extensions often become entry points for attackers. WPScan helps identify these risks by analyzing installed components, configuration states, and known vulnerability patterns. This reinforces the importance of maintaining not only core systems but also their extended ecosystems.

System-level tools such as PowerShell scripts and binary analysis platforms like IDA provide deeper visibility into internal system behavior. These tools operate at a lower abstraction layer, allowing security professionals to analyze operating system configurations, compiled software logic, and internal execution flows. This level of analysis is essential for identifying vulnerabilities that are not visible at the application or network level. Memory corruption issues, insecure privilege handling, and hidden execution paths are often detectable only through this type of deep technical inspection.

Another critical dimension covered throughout is human behavior, represented through social engineering simulation tools. Technical security controls are often bypassed not through direct system exploitation but through psychological manipulation. Users may unknowingly reveal credentials, approve malicious requests, or interact with deceptive interfaces. Social engineering testing demonstrates how attackers exploit trust and urgency to bypass even strong technical defenses. This highlights that cybersecurity is not solely a technical discipline but also a behavioral and organizational challenge.

When all of these toolsets are viewed together, a clear pattern emerges. Penetration testing is fundamentally about building a complete picture of risk across interconnected systems. No single tool provides a full understanding of security posture. Instead, each tool contributes a specific layer of insight. Network scanners reveal exposure, web tools reveal application logic flaws, database tools expose backend risks, system tools uncover internal misconfigurations, and social engineering tools evaluate human vulnerability. The integration of these perspectives is what transforms penetration testing from a technical process into a strategic security discipline.

Another key takeaway is the importance of attack chain thinking. Modern cybersecurity threats rarely hinge on a single weakness. An attacker may begin with a simple phishing email, gain limited access through weak credentials, escalate privileges through system misconfigurations, and ultimately reach sensitive databases or administrative controls. Each stage of this process relies on different types of vulnerabilities working together. Penetration testing tools are designed to replicate this chain so organizations can understand not just where they are vulnerable, but how those vulnerabilities can be combined in practice.

Automation also plays an increasingly important role in scaling penetration testing efforts. As systems grow more complex and continuously updated, manual testing alone cannot provide sufficient coverage. Automated tools help identify known vulnerabilities quickly and consistently across large environments. However, automation alone is not sufficient. Human analysis remains essential for interpreting results, validating exploitability, and understanding contextual risk. The most effective security strategies combine automated scanning with expert-level manual testing.

Continuous security validation is another critical evolution reflected in modern penetration testing practices. Rather than conducting periodic assessments, organizations now integrate security testing into development and deployment pipelines. This ensures that vulnerabilities are identified early in the lifecycle, reducing both risk and remediation cost. Security becomes an ongoing process embedded within system development rather than a separate post-deployment activity.

Ultimately, penetration testing tools serve a single overarching purpose: to provide realistic, actionable insight into how systems behave under adversarial conditions. They bridge the gap between theoretical vulnerability assessments and practical exploitation scenarios. By simulating real attacker behavior across multiple layers of infrastructure, these tools allow organizations to strengthen defenses in a meaningful and prioritized way.

The true value of penetration testing lies not in the individual tools themselves, but in how they are combined into a cohesive methodology. When network analysis, application testing, database evaluation, system inspection, and human behavior simulation are integrated, they form a complete security validation framework. This framework reflects the reality of modern cyber threats, where complexity, interconnection, and continuous evolution define both risk and defense.