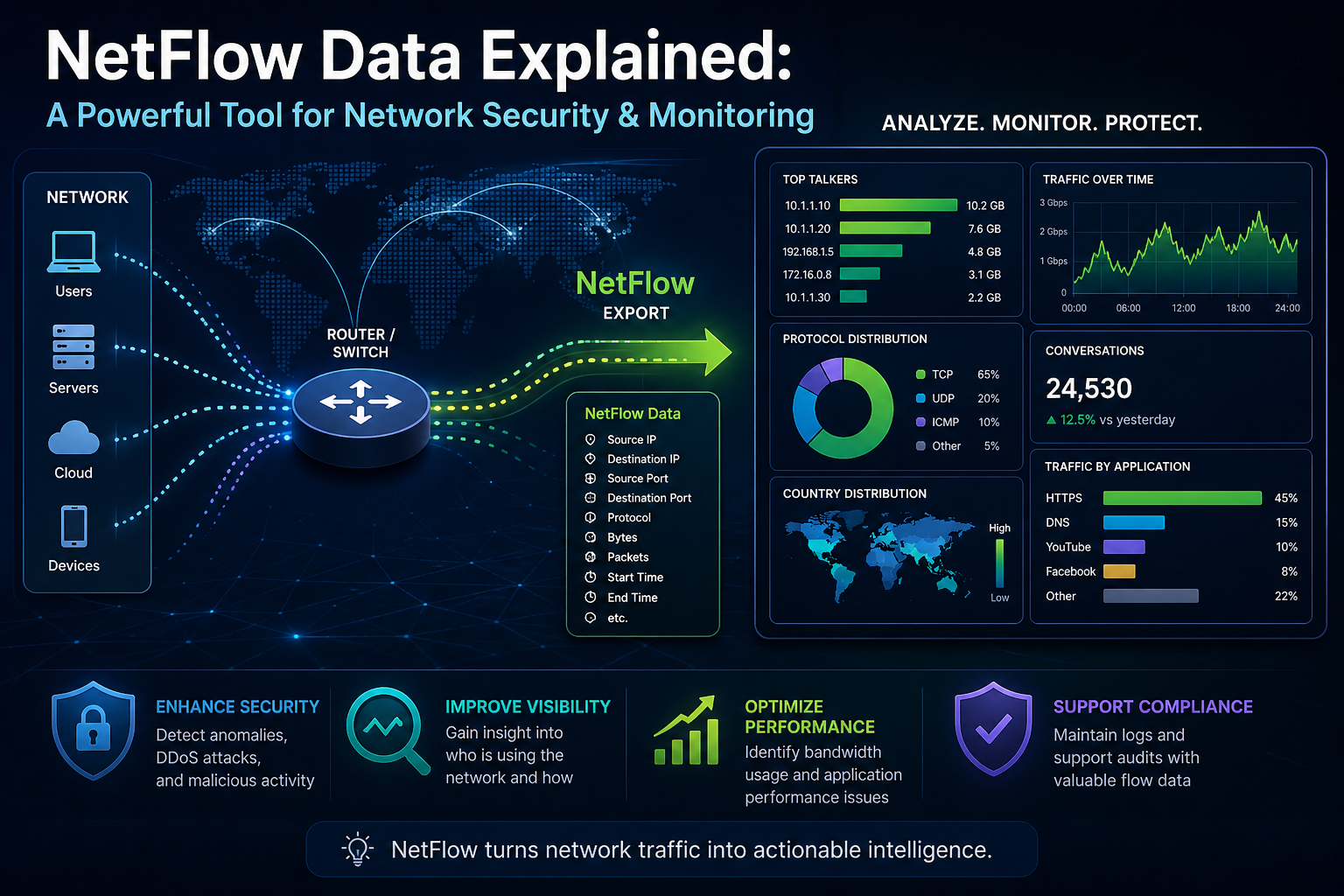

NetFlow data represents a structured method of observing how information moves across a network without capturing the full content of each packet. Instead of focusing on payload inspection, it concentrates on metadata, which provides a summarized yet highly effective perspective on communication patterns between devices. This approach allows network professionals to understand traffic behavior, identify inefficiencies, and maintain operational stability without overwhelming system resources. By transforming raw packet activity into structured records, it becomes significantly easier to interpret how systems interact within complex infrastructures.

Historical Development and Evolution of NetFlow

Cisco introduced NetFlow in the mid-1990s in response to increasing network complexity and the limitations of traditional packet analysis. As organizations expanded their digital environments, it became impractical to capture and analyze every packet due to storage and processing constraints. NetFlow addressed this challenge by recording only essential traffic attributes. Over time, it evolved into a widely adopted framework for monitoring and analyzing network activity, forming a foundational layer in modern network management practices.

Core Concept of Network Flows

At the heart of NetFlow lies the concept of a flow, which is defined as a unidirectional sequence of packets sharing common characteristics. These characteristics typically include source and destination IP addresses, source and destination ports, and the protocol being used. Classic NetFlow implementations also include the type of service value and the input interface in this key. Because a flow is unidirectional, a request and its reply are recorded as two separate flows. By grouping packets based on these attributes, the system creates a logical representation of communication between devices. This abstraction simplifies analysis by reducing the complexity associated with raw packet data.
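A minimal sketch of this grouping, assuming packets have already been parsed into dictionaries. The field names (`src_ip`, `dst_port`, and so on) and the `FlowKey` type are illustrative and not part of any NetFlow format.

```python
from collections import namedtuple

# Five-field flow key; the field names are illustrative assumptions.
FlowKey = namedtuple("FlowKey", "src_ip dst_ip src_port dst_port protocol")

def flow_key(packet):
    """Build the key that decides which flow a packet belongs to."""
    return FlowKey(packet["src_ip"], packet["dst_ip"],
                   packet["src_port"], packet["dst_port"], packet["protocol"])

def group_into_flows(packets):
    """Group packets into unidirectional flows keyed by FlowKey."""
    flows = {}
    for pkt in packets:
        flows.setdefault(flow_key(pkt), []).append(pkt)
    return flows

packets = [
    {"src_ip": "10.0.0.1", "dst_ip": "10.0.0.2", "src_port": 51000,
     "dst_port": 443, "protocol": 6, "length": 120},
    {"src_ip": "10.0.0.1", "dst_ip": "10.0.0.2", "src_port": 51000,
     "dst_port": 443, "protocol": 6, "length": 1400},
    # Reply traffic has source and destination swapped, so it forms its own flow.
    {"src_ip": "10.0.0.2", "dst_ip": "10.0.0.1", "src_port": 443,
     "dst_port": 51000, "protocol": 6, "length": 900},
]
flows = group_into_flows(packets)
```

Here the three packets collapse into two flows, one per direction.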

Flow Records and Their Significance

Flow records serve as the primary data structure within NetFlow systems. Each record contains both identifying attributes and statistical information about the flow. This includes metrics such as the number of packets transferred, total bytes, start time, and end time. These records provide a condensed yet informative view of network activity, enabling engineers to analyze usage patterns and detect anomalies. The structured nature of flow records allows for efficient storage and rapid retrieval, making them suitable for large-scale environments.
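As a rough illustration, a flow record can be modeled as a small data class that pairs the identifying attributes with the counters and timestamps. The class and field names below are assumptions made for this sketch, and timestamps are assumed to be in seconds.

```python
from dataclasses import dataclass

@dataclass
class FlowRecord:
    # Identifying attributes
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int
    # Statistics
    packet_count: int
    byte_count: int
    start_time: float  # seconds (assumed unit)
    end_time: float

    def duration(self) -> float:
        """Flow duration in seconds."""
        return self.end_time - self.start_time

    def average_bps(self) -> float:
        """Average bits per second; 0.0 for zero-duration flows."""
        d = self.duration()
        return (self.byte_count * 8) / d if d > 0 else 0.0

record = FlowRecord("10.0.0.1", "10.0.0.2", 51000, 443, 6,
                    packet_count=10, byte_count=5000,
                    start_time=100.0, end_time=104.0)
```

Derived values such as duration and average rate come from the stored counters, which is why a record of a few dozen bytes can stand in for an entire conversation.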

Role of Flow Exporters in Data Collection

Flow exporters are responsible for observing network traffic and generating flow records. These are typically embedded within networking devices such as routers, switches, and firewalls. As traffic passes through these devices, exporters classify packets into flows and maintain counters for each flow. Once a flow ends, goes idle, or exceeds a configured timer, the exporter prepares a record and sends it to a collector. This process occurs continuously, ensuring that network activity is captured in near real time.

Function of Flow Collectors in Data Processing

Flow collectors act as centralized systems that receive flow records from one or more exporters. Their primary function is to store, process, and organize incoming data. Collectors are designed to handle high volumes of information efficiently so that records are not dropped on arrival. In addition to storage, they often perform initial processing tasks such as indexing and aggregation, which facilitate faster analysis. This centralized approach enables a unified view of network activity across multiple devices.

Transforming Data into Actionable Insights

The true value of NetFlow data emerges during the analysis phase. Analytical systems process flow records to identify patterns, trends, and irregularities. This enables network professionals to understand how bandwidth is being utilized, which applications are generating the most traffic, and whether any unusual behavior is present. By converting raw data into actionable insights, NetFlow supports informed decision-making and proactive network management.

Efficiency Through Metadata-Based Monitoring

One of the most significant advantages of NetFlow is its efficiency. By focusing on metadata rather than full packet capture, it reduces the amount of data that needs to be processed and stored. This makes it possible to monitor high-speed networks without introducing significant overhead. The balance between detail and efficiency is a key factor in its widespread adoption, allowing organizations to maintain visibility without compromising performance.

Enhancing Network Visibility and Control

Network visibility refers to the ability to observe and understand traffic behavior in a meaningful way. NetFlow enhances this visibility by providing a comprehensive overview of communication patterns. This allows administrators to identify bottlenecks, detect performance issues, and optimize resource allocation. Without such visibility, managing complex networks would be significantly more challenging, as issues could remain hidden until they cause noticeable disruptions.

Application in Performance Optimization

NetFlow data plays a critical role in optimizing network performance. By analyzing flow records, engineers can identify which applications or services are consuming the most bandwidth. This information can be used to implement traffic shaping policies, prioritize critical applications, and reduce congestion. The ability to pinpoint the source of performance issues enables targeted solutions, improving overall network efficiency.
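One way to sketch this kind of analysis is to sum bytes per destination port and rank the results. The destination port is only a rough proxy for the application, and the record layout (`dst_port`, `bytes`) is an assumption made for illustration.

```python
from collections import Counter

def top_ports_by_bytes(records, n=3):
    """Rank destination ports by total bytes across all flow records."""
    totals = Counter()
    for rec in records:
        totals[rec["dst_port"]] += rec["bytes"]
    return totals.most_common(n)

records = [
    {"dst_port": 443, "bytes": 4000},
    {"dst_port": 443, "bytes": 6000},
    {"dst_port": 53, "bytes": 300},
    {"dst_port": 22, "bytes": 2500},
]
top = top_ports_by_bytes(records, n=2)
```

The same pattern works for source addresses or interface pairs by changing the grouping key.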

Contribution to Network Security Monitoring

Security monitoring is another area where NetFlow data proves invaluable. By examining traffic patterns, it becomes possible to detect anomalies that may indicate malicious activity. For example, an unexpected increase in traffic from a single source or unusual communication with external systems can signal potential threats. NetFlow provides the contextual information needed to identify such patterns, supporting early detection and mitigation efforts.
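As one illustrative heuristic, a source that contacts an unusually large number of distinct destinations may be scanning. The sketch below counts distinct destinations per source; the threshold and the record field names are assumptions, and a real deployment would tune them against its own baseline.

```python
from collections import defaultdict

def high_fanout_sources(records, max_destinations=100):
    """Return sources that contacted more distinct destinations than the threshold."""
    destinations = defaultdict(set)
    for rec in records:
        destinations[rec["src_ip"]].add(rec["dst_ip"])
    return {src: len(dsts) for src, dsts in destinations.items()
            if len(dsts) > max_destinations}

# One source touching 150 addresses, one ordinary client touching 2.
records = [{"src_ip": "10.0.0.66", "dst_ip": f"192.0.2.{i}"} for i in range(1, 151)]
records += [{"src_ip": "10.0.0.5", "dst_ip": "192.0.2.10"},
            {"src_ip": "10.0.0.5", "dst_ip": "192.0.2.11"}]
suspects = high_fanout_sources(records)
```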

Scalability in Expanding Network Environments

As networks grow in size and complexity, scalability becomes a critical requirement. NetFlow addresses this need by offering a solution that can handle increasing volumes of traffic without a proportional increase in resource consumption. Its metadata-based approach ensures that even large-scale environments can be monitored effectively. This scalability makes it suitable for a wide range of applications, from small networks to enterprise-level infrastructures.

Interoperability Across Diverse Systems

Another key strength of NetFlow is its adaptability across different environments. Although it originated within a specific ecosystem, its underlying principles have been widely adopted. This has led to a level of interoperability that allows different systems and devices to work together seamlessly. Such compatibility is essential in modern networks, where multiple technologies and platforms coexist.

Operational Benefits and Workflow Efficiency

NetFlow contributes to operational efficiency by automating the process of data collection and analysis. Instead of manually inspecting traffic, network teams can rely on automated systems to generate insights. This reduces the time required to identify and resolve issues, allowing professionals to focus on strategic initiatives. The streamlined workflow enhances productivity and ensures that resources are utilized effectively.

Integration with Analytical Ecosystems

While NetFlow provides the foundational data, its capabilities are often enhanced through integration with analytical tools. These systems process flow records to generate visualizations, reports, and alerts. The combination of raw data and advanced analytics creates a comprehensive monitoring environment that supports both operational and strategic decision-making.

Importance of Sampling Strategies

In high-traffic environments, inspecting every packet to update flow counters may not be practical. Sampling techniques are used to analyze a representative portion of traffic, reducing the load on network devices. With a sampling rate of 1 in N, the exporter examines roughly one packet out of every N, and the resulting counts must be multiplied by N to estimate actual volumes. By selecting an appropriate sampling rate, it is possible to maintain a balance between accuracy and efficiency. Properly implemented sampling ensures that meaningful insights can be derived without overwhelming system resources.
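A minimal sketch of deterministic 1-in-N sampling, with counts scaled back up to estimate totals. The function names and the packet layout are hypothetical; real exporters may sample randomly rather than at fixed intervals.

```python
def sample_one_in_n(packets, n):
    """Deterministic 1-in-N sampling: keep every n-th packet."""
    return packets[::n]

def estimate_totals(sampled_packets, n):
    """Scale sampled counts by the sampling rate to estimate true totals."""
    return {
        "packets": len(sampled_packets) * n,
        "bytes": sum(p["length"] for p in sampled_packets) * n,
    }

# 10,000 packets of identical size, sampled at 1 in 100.
packets = [{"length": 500} for _ in range(10_000)]
sampled = sample_one_in_n(packets, 100)
estimate = estimate_totals(sampled, 100)
```

The estimate is exact here only because every packet has the same size. With mixed traffic the scaled totals are approximations, and short flows may be missed entirely.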

Managing Data Retention and Storage

Flow data can accumulate rapidly, making effective data management essential. Establishing clear retention policies helps ensure that storage resources are used efficiently. Organizations must determine how long data should be retained based on operational and regulatory requirements. Proper data management not only optimizes performance but also ensures that historical information is available when needed.
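A back-of-the-envelope estimate can help when choosing a retention period. The figures below (flow rate, stored record size, retention window) are assumptions picked for illustration, not measurements.

```python
def retention_storage_bytes(flows_per_second, bytes_per_record, retention_days):
    """Estimate uncompressed storage needed to retain flow records."""
    seconds = retention_days * 24 * 60 * 60
    return flows_per_second * bytes_per_record * seconds

# Assumed: 2,000 flows/s, 50 bytes per stored record, 30 days of retention.
estimate = retention_storage_bytes(flows_per_second=2000,
                                   bytes_per_record=50,
                                   retention_days=30)
estimate_gb = estimate / 1e9  # decimal gigabytes
```

Under these assumptions the result is about 259 GB before compression or indexing overhead.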

Deep Dive into NetFlow Architecture

NetFlow operates through a distributed yet coordinated architecture that enables continuous observation of network traffic. This architecture is composed of multiple interconnected components that work together to capture, export, collect, and analyze flow data. Each component performs a specialized function, ensuring that the overall system remains efficient and scalable. The architecture is designed to minimize resource consumption on network devices while still delivering detailed insights into traffic behavior. By separating responsibilities across exporters, collectors, and analyzers, NetFlow achieves a balance between performance and visibility.

At a high level, the architecture begins at the data plane, where packets traverse networking devices. These devices are responsible for identifying flows and maintaining flow caches. Once flows are identified, metadata is extracted and prepared for export. The exported data is then transmitted to centralized systems, where it is stored and processed for further analysis. This layered approach allows NetFlow to scale across complex infrastructures without introducing bottlenecks.

Understanding the Flow Lifecycle

The lifecycle of a network flow is a structured sequence of events that begins when packets are first observed and ends when the flow record is exported and analyzed. This lifecycle is fundamental to understanding how NetFlow transforms raw traffic into meaningful data. It starts with packet classification, where incoming packets are inspected and matched against existing flow entries. If a matching entry exists, the packet is added to that flow; otherwise, a new flow entry is created.

As packets continue to arrive, the flow entry is updated with additional statistics such as byte count and packet count. This process continues until the flow reaches a termination condition. Termination can occur due to inactivity, expiration timers, or the completion of a communication session. Once a flow is terminated, it is prepared for export, marking the transition from observation to reporting.

Flow Cache and Its Operational Role

The flow cache is a critical component within the exporter. It acts as a temporary storage area where active flows are maintained. Each entry in the cache represents a unique flow, along with its associated statistics. The cache must be managed efficiently to ensure that it can handle high volumes of traffic without overflow. When the cache reaches its capacity, older or inactive flows are expired and exported to make room for new entries.

Efficient cache management is essential for maintaining accuracy. If the cache is too small, flows may be expired prematurely, so a single conversation is split across several partial records. Conversely, an excessively large cache may consume unnecessary memory resources. The configuration of cache size and timeout values plays a significant role in optimizing performance and ensuring reliable data collection.
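The capacity behavior described above can be sketched with a bounded cache that exports the least recently updated flow when it runs out of room. This is a simplified model under stated assumptions: the `FlowCache` class is hypothetical, flow keys are plain strings, and "export" just appends to a list.

```python
from collections import OrderedDict

class FlowCache:
    """Bounded flow cache; evicts the least recently updated flow when full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()  # key -> {"packets": int, "bytes": int}
        self.exported = []            # stands in for sending records to a collector

    def observe(self, key, length):
        """Account for one packet of the given length on the given flow."""
        entry = self.entries.get(key)
        if entry is None:
            if len(self.entries) >= self.capacity:
                # Cache full: expire and export the least recently updated flow.
                self.exported.append(self.entries.popitem(last=False))
            entry = self.entries[key] = {"packets": 0, "bytes": 0}
        entry["packets"] += 1
        entry["bytes"] += length
        self.entries.move_to_end(key)  # mark as most recently updated

cache = FlowCache(capacity=2)
cache.observe("flow-a", 100)
cache.observe("flow-b", 200)
cache.observe("flow-a", 50)   # flow-a is now the most recently updated
cache.observe("flow-c", 300)  # cache is full, so flow-b is exported
```

If more packets for `flow-b` arrive later, they start a new entry, which is how an undersized cache fragments one conversation into several records.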

Packet Classification and Flow Identification

Packet classification is the process of examining incoming packets and determining their flow membership. This involves analyzing key attributes such as IP addresses, ports, and protocol types. These attributes form the basis of the flow key, which uniquely identifies each flow. The classification process must be performed at high speed to keep up with network traffic, especially in high-throughput environments.

Once a packet is classified, it is either associated with an existing flow or used to create a new flow entry. This decision is made based on whether the packet’s attributes match an existing flow key. Accurate classification ensures that flows are correctly grouped, which is essential for meaningful analysis. Any errors in classification can lead to fragmented or misleading data.

Timers and Flow Expiration Mechanisms

Timers play a crucial role in determining when a flow should be exported. There are typically two types of timers: active timers and inactive timers. The active timer ensures that long-lived flows are periodically exported, even if they are still active. This keeps the collector from waiting until a long transfer finishes before it sees any of that traffic. The inactive timer, on the other hand, triggers export when no packets have been observed for a specified duration.

These timers ensure that flow data remains current and that the cache is efficiently utilized. Proper configuration of timer values is important for balancing data granularity with system performance. Shorter timers provide more frequent updates but increase the volume of exported data, while longer timers reduce overhead but may delay visibility.
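The two timers can be expressed as a single check run against each cache entry. This is a sketch: the function name is hypothetical, times are in seconds, and the default timeout values are illustrative rather than recommendations.

```python
def expiry_reason(first_seen, last_seen, now,
                  active_timeout=1800, inactive_timeout=15):
    """Return why a flow should be exported now, or None to keep it cached."""
    if now - last_seen >= inactive_timeout:
        return "inactive"  # no packets seen for too long
    if now - first_seen >= active_timeout:
        return "active"    # long-lived flow: export an interim record
    return None

still_cached = expiry_reason(first_seen=0, last_seen=100, now=110)
idle = expiry_reason(first_seen=0, last_seen=100, now=120)
long_lived = expiry_reason(first_seen=0, last_seen=1795, now=1800)
```

Lowering either timeout makes records appear sooner at the cost of more export traffic, which is the trade-off described above.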

Flow Export Process and Data Transmission

Once a flow is ready for export, it is converted into a structured record and transmitted to a collector. This process involves formatting the data according to the chosen NetFlow version or protocol. The exporter encapsulates flow records into export packets and sends them over the network, typically over UDP.

The export process must be reliable and efficient, as it involves continuous transmission of data. Any delays or losses during this stage can impact the accuracy of analysis. To mitigate such issues, exporters often implement buffering mechanisms that temporarily store flow records before transmission. This ensures that data is not lost during periods of high activity.

Role of Transport Protocols in Export

The choice of transport protocol influences how flow data is delivered to the collector. UDP is the most common choice because it minimizes overhead and keeps export fast. However, UDP does not guarantee delivery, which introduces a trade-off between performance and reliability. In environments where data integrity is critical, additional mechanisms may be used to detect and compensate for lost records, such as the sequence numbers carried in export headers or, with IPFIX, a reliable transport such as SCTP or TCP.
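One common detection mechanism is a sequence number carried in each export message, which lets the collector notice gaps. The sketch below assumes the counter increases by one per message; what the counter actually counts varies between protocol versions, and wraparound is ignored here for simplicity.

```python
def count_missing(sequence_numbers):
    """Count gaps in a stream of sequence numbers assumed to step by 1."""
    missing = 0
    expected = None
    for seq in sequence_numbers:
        if expected is None or seq >= expected:
            if expected is not None:
                missing += seq - expected  # size of the gap, 0 if none
            expected = seq + 1
        # Late (out-of-order) arrivals are ignored and stay counted as missing.
    return missing

lost = count_missing([1, 2, 3, 6, 7, 10])
```

In this stream, messages 4, 5, 8, and 9 never arrived, so the collector can report four lost messages even though it cannot recover their contents.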

The transport layer must also handle variations in network conditions. Congestion, latency, and packet loss can all affect the delivery of flow data. Proper network design and configuration are essential to ensure that flow records reach the collector without significant disruption.

Flow Collectors and Data Ingestion Pipelines

Flow collectors serve as the central repository for NetFlow data. They are designed to handle large volumes of incoming records and store them in a structured format. The ingestion pipeline within the collector is optimized for high throughput, allowing it to process data from multiple exporters simultaneously. This pipeline includes stages for parsing, validation, and indexing.

Parsing involves interpreting the structure of incoming records and extracting relevant fields. Validation ensures that the data is complete and consistent, while indexing organizes the data for efficient retrieval. Together, these processes enable the collector to maintain a comprehensive and accessible dataset.
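As a concrete example of the parsing and validation stages, the sketch below reads the fixed 24-byte header of a NetFlow version 5 export datagram and checks that the length matches the declared record count (each v5 record is 48 bytes). Version 5 is used only because its layout is fixed; the function name and the returned dictionary are assumptions.

```python
import struct

# NetFlow v5 header: version, count, sys_uptime, unix_secs, unix_nsecs,
# flow_sequence, engine_type, engine_id, sampling_interval (network byte order).
V5_HEADER = struct.Struct("!HHIIIIBBH")
V5_RECORD_SIZE = 48

def parse_v5_header(datagram):
    """Parse and validate the header of a NetFlow v5 export datagram."""
    if len(datagram) < V5_HEADER.size:
        raise ValueError("datagram shorter than a v5 header")
    (version, count, _uptime, unix_secs, _nsecs,
     flow_sequence, _etype, _eid, _sampling) = V5_HEADER.unpack_from(datagram)
    if version != 5:
        raise ValueError(f"unsupported version {version}")
    if len(datagram) != V5_HEADER.size + count * V5_RECORD_SIZE:
        raise ValueError("length does not match declared record count")
    return {"version": version, "count": count,
            "unix_secs": unix_secs, "flow_sequence": flow_sequence}

# Build a synthetic datagram with one zeroed record for demonstration.
datagram = V5_HEADER.pack(5, 1, 1000, 1_700_000_000, 0, 42, 0, 0, 0) + bytes(48)
header = parse_v5_header(datagram)
```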

Data Normalization and Aggregation Techniques

Once flow records are ingested, they often undergo normalization and aggregation. Normalization involves standardizing data formats to ensure consistency across different sources. This is particularly important in environments with multiple exporters or varying protocol versions. Aggregation, on the other hand, combines related records to provide a higher-level view of network activity.

These techniques reduce the complexity of analysis by presenting data in a more manageable form. For example, instead of analyzing individual flows, aggregated data can reveal trends such as total bandwidth usage over time. This enables more efficient decision-making and simplifies reporting.
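Aggregation of this kind can be sketched as summing bytes into fixed time buckets. For simplicity, each flow is attributed entirely to the bucket containing its start time, which is an assumption of this example; the record field names are also illustrative.

```python
from collections import defaultdict

def bytes_per_bucket(records, bucket_seconds=300):
    """Sum bytes into fixed time buckets keyed by bucket start time."""
    buckets = defaultdict(int)
    for rec in records:
        bucket_start = int(rec["start_time"] // bucket_seconds) * bucket_seconds
        buckets[bucket_start] += rec["bytes"]
    return dict(sorted(buckets.items()))

records = [
    {"start_time": 10, "bytes": 1000},
    {"start_time": 299, "bytes": 500},
    {"start_time": 300, "bytes": 700},
    {"start_time": 910, "bytes": 200},
]
usage = bytes_per_bucket(records)
```

Four individual flows become three five-minute totals, which is the form most bandwidth-over-time reports need.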

Analytical Processing and Pattern Recognition

The analytical phase involves processing flow data to extract meaningful insights. This includes identifying patterns, trends, and anomalies within the dataset. Advanced analytical methods may involve statistical modeling and machine learning techniques, which enhance the ability to detect subtle changes in network behavior.

Pattern recognition is particularly valuable for identifying recurring issues or unusual activity. For instance, consistent spikes in traffic at specific intervals may indicate scheduled processes, while irregular spikes could signal potential threats. By analyzing these patterns, network professionals can gain a deeper understanding of system behavior.
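A very simple statistical approach is to flag intervals whose traffic volume sits far above the mean. The sketch below uses a z-score with an assumed threshold of 3; it is a toy baseline, not a substitute for the more advanced models mentioned above.

```python
from statistics import mean, pstdev

def spike_indices(values, threshold=3.0):
    """Return indices whose value is more than `threshold` std devs above the mean."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []  # perfectly flat series: nothing stands out
    return [i for i, v in enumerate(values) if (v - mu) / sigma > threshold]

# Twenty ordinary intervals followed by one burst.
volumes = [100] * 20 + [1000]
spikes = spike_indices(volumes)
```

A regular spike, such as a nightly backup, would need a seasonal baseline to avoid being flagged every time.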

Handling High-Volume Data Streams

Modern networks generate vast amounts of traffic, resulting in high volumes of flow data. Managing these data streams requires efficient processing techniques and scalable infrastructure. Collectors must be capable of handling continuous input without performance degradation. This often involves distributed processing systems that can scale horizontally as demand increases.

Load balancing is another important consideration. By distributing data across multiple processing units, the system can maintain performance even under heavy load. This ensures that flow data is processed in real time, enabling timely insights and responses.
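One simple distribution scheme is to hash each exporter's address to pick a collector, so that all records from a given exporter land on the same node. The sketch below is a hypothetical illustration of that idea; the collector names are placeholders, and a production system might prefer consistent hashing so that adding a node moves fewer exporters.

```python
import hashlib

def pick_collector(exporter_ip, collectors):
    """Map an exporter to a collector with a stable hash of its address."""
    digest = hashlib.sha256(exporter_ip.encode()).digest()
    index = int.from_bytes(digest[:8], "big") % len(collectors)
    return collectors[index]

collectors = ["collector-1", "collector-2", "collector-3"]  # placeholder names
assignment = {ip: pick_collector(ip, collectors)
              for ip in ("192.0.2.1", "192.0.2.2", "192.0.2.3")}
```

Keeping an exporter pinned to one collector matters for template-based protocols, because the collector needs the exporter's templates to decode its data records.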

Storage Strategies for Flow Data

Effective storage is essential for maintaining access to historical flow data. Different storage strategies may be employed depending on the volume and retention requirements. High-performance storage systems are used for recent data, while older data may be archived to reduce costs. Compression techniques are often applied to minimize storage requirements without sacrificing data integrity.

The choice of storage architecture impacts both performance and accessibility. Fast storage enables quick retrieval for analysis, while scalable storage ensures that the system can accommodate growing data volumes. Balancing these factors is key to maintaining an efficient NetFlow deployment.

Real-Time Versus Historical Analysis

NetFlow supports both real-time and historical analysis, each serving distinct purposes. Real-time analysis focuses on immediate visibility, allowing network professionals to respond quickly to emerging issues. This is particularly important for security monitoring, where rapid detection of threats is critical.

Historical analysis, on the other hand, provides insights into long-term trends and patterns. By examining past data, organizations can identify recurring issues, plan capacity upgrades, and evaluate the effectiveness of previous actions. The combination of real-time and historical perspectives provides a comprehensive view of network performance.

Challenges in Flow Data Accuracy

While NetFlow provides valuable insights, it is not without limitations. One of the primary challenges is ensuring data accuracy. Factors such as sampling, packet loss, and timing discrepancies can affect the completeness of flow records. Understanding these limitations is essential for interpreting data correctly.

To mitigate these challenges, organizations must carefully configure their NetFlow systems and validate the data they collect. Cross-referencing flow data with other monitoring tools can also improve accuracy and provide a more complete picture of network activity.

Scalability Considerations in Large Networks

Scalability is a key concern in large network environments. As the number of devices and volume of traffic increase, the NetFlow system must be able to accommodate this growth. This requires careful planning of infrastructure, including processing power, storage capacity, and network bandwidth.

Scalable architectures often involve distributed systems that can expand as needed. By adding additional collectors or processing nodes, the system can handle increased demand without compromising performance. This flexibility ensures that NetFlow remains effective even as network requirements evolve.

Security Implications of Flow Data Collection

The collection and transmission of flow data introduce certain security considerations. Flow records may contain sensitive information about network activity, making them a potential target for unauthorized access. Protecting this data requires the implementation of appropriate security measures, such as encryption and access controls.

Ensuring the integrity of flow data is also important. Any tampering or corruption can lead to inaccurate analysis and potentially harmful decisions. By securing both the data and the systems that process it, organizations can maintain trust in their monitoring infrastructure.

Integration with Broader Monitoring Systems

NetFlow is often integrated with other monitoring and management systems to provide a more comprehensive view of network activity. These integrations enable correlation between flow data and other types of data, such as system logs and performance metrics. This holistic approach enhances the ability to diagnose issues and identify root causes.

By combining multiple data sources, organizations can achieve a deeper level of insight. This integrated perspective supports more effective decision-making and improves overall network management.

Optimizing Performance Through Configuration Tuning

Proper configuration is essential for maximizing the effectiveness of NetFlow. This includes tuning parameters such as sampling rates, cache sizes, and timer values. Each of these parameters influences the balance between data accuracy and system performance.

Fine-tuning these settings requires an understanding of network characteristics and operational requirements. By adjusting configurations based on real-world conditions, organizations can optimize their NetFlow deployments for both efficiency and reliability.

Evolving Capabilities in Flow-Based Monitoring

As network technologies continue to advance, the capabilities of flow-based monitoring systems are also evolving. Enhancements in processing power and analytical techniques are enabling more sophisticated analysis of flow data. This includes the ability to detect complex patterns and predict future trends.

These advancements are expanding the role of NetFlow beyond traditional monitoring. It is increasingly being used as a foundation for advanced analytics and automation, supporting more intelligent and adaptive network management strategies.

Evolution of Flow-Based Monitoring Technologies

Flow-based monitoring has undergone significant transformation since its initial introduction. Early implementations focused on basic traffic visibility, providing limited flexibility in how data was captured and exported. As network environments became more diverse and complex, the need for adaptable and extensible monitoring solutions grew. This led to the development of multiple NetFlow versions and related protocols, each designed to address specific limitations of earlier models while improving scalability, flexibility, and interoperability.

The evolution of these technologies reflects the broader shift in networking toward standardized and vendor-neutral solutions. While early designs were tightly coupled with specific hardware ecosystems, modern implementations emphasize flexibility and cross-platform compatibility. This progression has enabled organizations to deploy flow monitoring solutions across heterogeneous environments without being restricted to a single vendor’s infrastructure.

Limitations of Early NetFlow Implementations

The earliest versions of NetFlow were effective for basic traffic monitoring but had inherent limitations. They relied on fixed data structures, which restricted the type and amount of information that could be collected. This rigidity made it difficult to adapt to emerging protocols and new network requirements. As a result, organizations often faced challenges when attempting to extend their monitoring capabilities beyond predefined parameters.

Another limitation was scalability. As network traffic increased, the volume of flow data grew significantly, placing additional strain on both exporters and collectors. Without efficient mechanisms for handling large datasets, early implementations struggled to maintain performance in high-throughput environments. These challenges highlighted the need for more flexible and scalable solutions.

Introduction of Template-Based Flow Structures

To address the limitations of fixed data formats, newer versions introduced template-based structures. Templates define the format of flow records dynamically, allowing exporters to specify which fields are included in each record. This approach provides greater flexibility, enabling the system to adapt to different monitoring requirements without requiring significant changes to the underlying infrastructure.

Template-based structures also support extensibility. As new protocols and technologies emerge, additional fields can be incorporated into flow records without disrupting existing systems. This adaptability is essential for maintaining relevance in rapidly evolving network environments. By allowing customization of data fields, templates ensure that flow monitoring can meet a wide range of analytical needs.
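The idea can be sketched as a decoder that walks a template, an ordered list of (field type, length) pairs, to interpret the bytes of a data record. The field type numbers below follow NetFlow Version 9 conventions; the output names and the `decode_record` helper are assumptions made for this example.

```python
import socket
import struct

# Field type number -> illustrative output name.
FIELD_NAMES = {1: "in_bytes", 2: "in_pkts", 4: "protocol",
               7: "src_port", 8: "src_ip", 11: "dst_port", 12: "dst_ip"}
IPV4_FIELDS = {8, 12}

def decode_record(template, data):
    """Decode one data record by walking the template's field list."""
    record, offset = {}, 0
    for field_type, length in template:
        raw = data[offset:offset + length]
        if len(raw) != length:
            raise ValueError("record shorter than template")
        # Unknown field types are kept under a generic name instead of failing.
        name = FIELD_NAMES.get(field_type, f"field_{field_type}")
        if field_type in IPV4_FIELDS:
            record[name] = socket.inet_ntoa(raw)
        else:
            record[name] = int.from_bytes(raw, "big")
        offset += length
    return record

template = [(8, 4), (12, 4), (7, 2), (11, 2), (4, 1), (1, 4), (2, 4)]
data = (socket.inet_aton("10.0.0.1") + socket.inet_aton("10.0.0.2")
        + struct.pack("!HHBII", 51000, 443, 6, 5000, 10))
record = decode_record(template, data)
```

Because the decoder is driven by the template, a new field only requires a new template entry, not a new record format.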

Advancements in NetFlow Version 9

NetFlow Version 9 marked a significant milestone in the evolution of flow-based monitoring. It introduced the concept of templates, enabling dynamic definition of flow record formats. This innovation addressed many of the limitations associated with earlier versions, providing a more flexible and scalable solution. Version 9 also improved support for modern networking protocols, including IPv6, which was becoming increasingly important at the time.

Another key enhancement in Version 9 was more efficient handling of large data volumes. Because a template describes the record layout separately from the data, exporters can send only the fields a deployment actually needs instead of a fixed record with unused fields. This reduces the overhead associated with flow monitoring and makes Version 9 suitable for deployment in large-scale environments where performance and scalability are critical considerations.

Understanding Vendor-Specific Variants

As flow-based monitoring gained popularity, various vendors developed their own implementations based on the original concept. These variants often introduced additional features or optimizations tailored to specific hardware and software environments. While they maintained compatibility with the core principles of NetFlow, they sometimes included proprietary extensions that enhanced functionality.

Vendor-specific variants provided organizations with more options but also introduced challenges related to interoperability. Differences in data formats and export mechanisms could complicate integration with third-party tools. Despite these challenges, the widespread adoption of flow-based monitoring ensured that most systems retained a degree of compatibility, allowing for effective data exchange across platforms.

Emergence of IP Flow Information Export (IPFIX)

IP Flow Information Export, commonly known as IPFIX, represents a standardized approach to flow-based monitoring. It is an IETF standard, specified in RFC 7011, that builds upon the concepts introduced in NetFlow Version 9 while providing a vendor-neutral framework. This standardization enables interoperability between devices and systems from different manufacturers, making it easier to deploy unified monitoring solutions.

IPFIX retains the template-based structure, allowing for flexible definition of flow records. It also introduces additional capabilities, such as enterprise-specific fields, which let vendors define their own information elements, and variable-length fields. These features make it a powerful tool for organizations seeking a comprehensive and adaptable monitoring solution. By adhering to an open standard, IPFIX ensures that flow data can be shared and analyzed across diverse environments.
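In an IPFIX template, each field specifier carries a 15-bit element identifier and a 16-bit length; when the top bit of the identifier is set, a 32-bit enterprise number follows. A minimal parser for one specifier might look like the sketch below, where the function name is hypothetical and the enterprise number in the example is made up.

```python
import struct

def parse_field_specifier(data, offset=0):
    """Parse one IPFIX template field specifier; return (field, next_offset)."""
    raw_id, length = struct.unpack_from("!HH", data, offset)
    offset += 4
    field = {"element_id": raw_id & 0x7FFF, "length": length, "enterprise": None}
    if raw_id & 0x8000:  # enterprise bit set: a 32-bit enterprise number follows
        (field["enterprise"],) = struct.unpack_from("!I", data, offset)
        offset += 4
    return field, offset

# A standard field followed by an enterprise-specific one (number 99999 is made up).
specifiers = struct.pack("!HH", 8, 4) + struct.pack("!HHI", 0x8000 | 100, 2, 99999)
standard, next_offset = parse_field_specifier(specifiers)
custom, end_offset = parse_field_specifier(specifiers, next_offset)
```

A collector that does not recognize an enterprise-specific element can still skip it correctly, because the specifier states its length.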

Comparative Analysis of NetFlow and IPFIX

While NetFlow and IPFIX share many similarities, there are important distinctions between the two. NetFlow is often associated with its original implementations and vendor-specific variants, whereas IPFIX represents a standardized evolution of the same concept. NetFlow Version 9 and IPFIX both use template-based structures and support flexible data collection, while earlier NetFlow versions use fixed record formats. IPFIX additionally provides a more formalized framework for interoperability.

In practice, the choice between NetFlow and IPFIX depends on organizational requirements and existing infrastructure. Some environments may prioritize compatibility with legacy systems, while others may benefit from the standardization and extensibility offered by IPFIX. Understanding these differences is essential for selecting the most appropriate solution.

Role of Flexible Data Fields in Modern Monitoring

The ability to define custom data fields is a key feature of modern flow-based monitoring systems. Flexible data fields allow organizations to capture information that is specifically relevant to their operational needs. This may include application identifiers, quality of service parameters, or security-related attributes. By tailoring flow records to include these fields, organizations can gain deeper insights into network behavior.

This flexibility also supports advanced analytical techniques. By incorporating additional data points, it becomes possible to perform more detailed analysis and identify subtle patterns that might otherwise go unnoticed. The ability to adapt data collection to changing requirements ensures that flow monitoring remains effective in dynamic environments.

Support for IPv6 and Emerging Protocols

As networking technologies evolve, support for new protocols becomes increasingly important. Modern flow-based monitoring systems are designed to accommodate these changes, ensuring that they remain relevant in diverse environments. IPv6, in particular, has become a critical component of modern networks, and its integration into flow monitoring systems is essential.

In addition to IPv6, newer protocols and communication methods continue to emerge. Flow monitoring systems must be capable of adapting to these developments, capturing relevant data without requiring extensive reconfiguration. This adaptability is a key factor in the long-term viability of flow-based monitoring solutions.

Scalability Enhancements in Modern Versions

Scalability is a defining characteristic of modern flow monitoring systems. Enhancements in data processing and export mechanisms have enabled these systems to handle significantly larger volumes of traffic. Techniques such as data aggregation, efficient encoding, and optimized transmission protocols contribute to improved scalability.

These enhancements ensure that flow monitoring can keep pace with the growth of network traffic. As organizations expand their digital operations, the ability to scale monitoring solutions becomes increasingly important. Modern versions of NetFlow and related protocols are designed with this requirement in mind, providing robust performance even in demanding environments.

Interoperability and Standardization Benefits

Standardization plays a crucial role in enabling interoperability between different systems and devices. By adhering to common protocols and data formats, flow monitoring solutions can operate seamlessly across diverse environments. This reduces the complexity of integration and allows organizations to leverage a wide range of tools and technologies.

Interoperability also facilitates data sharing and collaboration. By using standardized formats, organizations can exchange flow data with external partners or integrate it into broader analytical frameworks. This capability enhances the overall value of flow monitoring, extending its applications beyond individual networks.

Security Enhancements in Flow Protocols

Modern flow monitoring protocols incorporate features designed to protect the export stream itself. Export packets carry sequence numbers that allow a collector to detect lost data, and IPFIX specifies TLS and DTLS for authenticated, encrypted transport between exporter and collector. By ensuring that flow data is accurate and protected, these features help maintain trust in the monitoring system.

Flow data also supports the detection of threats. By providing detailed insights into traffic patterns, flow monitoring systems enable the identification of suspicious activity, such as unexpected outbound connections or unusual transfer volumes. This capability is essential for maintaining the security of network environments, particularly in the face of increasingly sophisticated threats.
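
One simple way to surface suspicious activity from flow data is to compare each host's current traffic volume against its own recent baseline. The sketch below is a minimal illustration under stated assumptions: the `flag_unusual_volumes` helper, the three-standard-deviation threshold, and the sample figures are all hypothetical, and production systems typically use more robust statistics.

```python
from statistics import mean, pstdev


def flag_unusual_volumes(history, current, threshold=3.0):
    """Return hosts whose current byte count exceeds mean + threshold * stdev.

    history maps host -> list of past byte counts per interval.
    current maps host -> byte count in the latest interval.
    Hosts with fewer than two baseline samples are skipped.
    """
    flagged = []
    for host, observed in current.items():
        baseline = history.get(host, [])
        if len(baseline) < 2:
            continue
        # With a perfectly flat baseline (stdev of 0), any increase is flagged.
        limit = mean(baseline) + threshold * pstdev(baseline)
        if observed > limit:
            flagged.append(host)
    return flagged


history = {
    "10.0.0.5": [1000, 1200, 900, 1100],
    "10.0.0.7": [50000, 52000, 48000, 51000],
}
current = {"10.0.0.5": 250000, "10.0.0.7": 51500}
suspects = flag_unusual_volumes(history, current)
```

Because the baseline is kept per host, a busy server is not flagged merely for being busy; only a departure from its own normal volume stands out.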

Customization and Extensibility in Flow Monitoring

Customization is a key advantage of modern flow monitoring systems. By allowing organizations to define their own data fields and templates, these systems can be tailored to specific requirements. This level of customization ensures that monitoring solutions remain aligned with organizational goals and operational needs.

Extensibility further enhances this capability by enabling the addition of new features and data fields over time. As network environments evolve, monitoring systems can be updated to capture new types of information. This ensures that they remain relevant and effective in the face of changing requirements.
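
The template idea can be illustrated with a short sketch in which an ordered list of fields drives both encoding and decoding, so that adding a field changes only the template and not the code. This is a simplified assumption for illustration: the `TEMPLATE` layout and helper names are hypothetical, and real NetFlow v9 and IPFIX templates identify fields by numeric IDs and lengths rather than by name.

```python
import struct

# Hypothetical template: ordered (field name, struct format code) pairs.
TEMPLATE = [
    ("src_port", "H"),   # 2 bytes
    ("dst_port", "H"),   # 2 bytes
    ("protocol", "B"),   # 1 byte
    ("packets", "I"),    # 4 bytes
    ("octets", "Q"),     # 8 bytes
]


def template_format(template):
    """Build a network-byte-order struct format string from a template."""
    return "!" + "".join(code for _, code in template)


def encode_record(template, values):
    """Pack a dict of field values in the order the template defines."""
    ordered = [values[name] for name, _ in template]
    return struct.pack(template_format(template), *ordered)


def decode_record(template, payload):
    """Unpack a payload back into a dict using the same template."""
    fields = struct.unpack(template_format(template), payload)
    return {name: value for (name, _), value in zip(template, fields)}


record = {"src_port": 49152, "dst_port": 443, "protocol": 6,
          "packets": 12, "octets": 4300}
payload = encode_record(TEMPLATE, record)
decoded = decode_record(TEMPLATE, payload)

# Extending the template adds a field without touching the functions above.
EXTENDED = TEMPLATE + [("vlan_id", "H")]
extended_payload = encode_record(EXTENDED, {**record, "vlan_id": 100})
```

The collector only needs the template to interpret a record, which is why exporters can introduce new fields without breaking existing decoders.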

Impact of Protocol Evolution on Network Operations

The evolution of flow monitoring protocols has had a profound impact on network operations. By providing more detailed and flexible data, these protocols have enabled more sophisticated analysis and decision-making. This has led to improvements in performance optimization, capacity planning, and security management.

The ability to adapt to changing requirements has also enhanced the resilience of network infrastructures. By leveraging advanced monitoring capabilities, organizations can respond more effectively to challenges and maintain consistent performance. This adaptability is a key factor in the success of modern network operations.

Adoption Trends Across Industries

Flow-based monitoring has been widely adopted across various industries due to its effectiveness and versatility. Organizations in sectors such as telecommunications, finance, and healthcare rely on these systems to manage complex network environments. The ability to gain detailed insights into traffic behavior makes flow monitoring an essential tool for maintaining operational efficiency.

Adoption trends also reflect the increasing importance of data-driven decision-making. By providing accurate and actionable insights, flow monitoring systems support a wide range of applications, from performance optimization to security management. This widespread adoption underscores their value in modern IT environments.

Future Directions in Flow Monitoring Technologies

The future of flow-based monitoring is shaped by ongoing advancements in networking and data analysis. Artificial intelligence and machine learning are being integrated into monitoring systems, enabling more advanced analysis and predictive capabilities. These developments improve the ability to detect anomalies and to anticipate issues before they affect the network.

In addition to analytical advancements, improvements in hardware and software are enabling more efficient data processing. This includes faster processing speeds, improved storage solutions, and more sophisticated algorithms. These innovations are expanding the capabilities of flow monitoring systems, ensuring that they remain a critical component of network management.

Balancing Flexibility and Complexity

While modern flow monitoring systems offer significant flexibility, they also introduce a degree of complexity. Configuring templates, defining data fields, and managing large datasets require careful planning and expertise. Balancing flexibility with usability is an important consideration for organizations implementing these systems.

Effective management of this complexity involves establishing clear policies and procedures. By standardizing configurations and maintaining consistent practices, organizations can ensure that their monitoring systems remain efficient and manageable. This balance is essential for maximizing the benefits of flow-based monitoring.

Integration with Evolving Network Architectures

As network architectures evolve, flow monitoring systems must adapt to new environments. This includes support for virtualized networks, cloud-based infrastructures, and software-defined networking. These environments introduce new challenges and opportunities for monitoring, requiring systems that can operate effectively across different layers of the network.

Integration with these architectures ensures that flow monitoring remains relevant in modern IT environments. By providing visibility into both physical and virtual components, these systems enable comprehensive monitoring and analysis. This capability is essential for managing the complexity of contemporary network infrastructures.

Conclusion

NetFlow data has become a foundational element in how modern networks are observed, understood, and optimized. Across evolving infrastructures, its value lies not in capturing every detail of network traffic, but in structuring communication into meaningful flow-based records. This transformation from raw packet-level activity into aggregated metadata enables a clearer and more efficient perspective on how systems interact at scale. In environments where traffic volume is continuously increasing, this abstraction is what makes practical monitoring possible without overwhelming computational and storage resources.

One of the most significant contributions of NetFlow is its ability to bridge visibility and efficiency. Traditional packet inspection methods, while detailed, are often impractical in high-speed or large-scale networks due to the heavy processing demands they impose. NetFlow resolves this challenge by focusing on essential attributes such as source and destination information, protocol behavior, and traffic volume metrics. These elements are sufficient to reconstruct a reliable picture of network activity, making it possible to analyze performance trends and operational behavior without requiring full packet reconstruction.

The structured nature of flow records also enhances interpretability. Instead of dealing with unstructured streams of packet data, network professionals work with organized datasets that summarize communication sessions. This structure allows for faster identification of anomalies, more efficient troubleshooting, and improved capacity planning. Whether diagnosing latency issues, investigating unusual traffic spikes, or analyzing long-term bandwidth consumption, flow data provides the necessary context to make informed decisions.

Security is another domain where NetFlow data demonstrates substantial value. Modern cyber threats often rely on subtle patterns of abnormal communication rather than obvious disruptions. Flow-based monitoring makes it possible to detect these patterns by highlighting deviations in traffic behavior. For instance, unexpected outbound connections, unusual data transfer volumes, or irregular communication intervals can all indicate potential security incidents. Because NetFlow focuses on communication behavior rather than content, it also supports privacy-conscious monitoring approaches while still delivering actionable intelligence.

Beyond operational and security use cases, NetFlow plays a critical role in strategic network planning. Organizations rely on historical flow data to understand how their networks evolve over time. This includes identifying peak usage periods, forecasting future bandwidth requirements, and evaluating the impact of infrastructure changes. By analyzing trends rather than isolated events, decision-makers gain a long-term perspective that supports more accurate planning and resource allocation.

Another important aspect of NetFlow is its adaptability. Over time, it has evolved through multiple versions and standards, each improving flexibility, scalability, and interoperability. The introduction of template-based records in NetFlow v9 and of the vendor-neutral IPFIX standard has ensured that flow-based monitoring remains relevant across diverse environments. This adaptability is essential in today’s networking landscape, where hybrid infrastructures combine on-premises systems, cloud platforms, and virtualized networks.

Despite its strengths, effective use of NetFlow requires careful implementation. Factors such as sampling rates, export timing, and data retention strategies must be configured thoughtfully to balance accuracy and system performance. Poor configuration can lead to incomplete insights or unnecessary resource consumption: a sampling rate that is too coarse can miss short-lived flows entirely, while exporting every flow unsampled can overload the exporter or the collector. Successful deployment therefore depends not only on the technology itself but also on how it is tuned to match the specific needs of the environment in which it operates.
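
To make the tuning trade-offs concrete, the sketch below works through two pieces of arithmetic: scaling sampled counters back to estimated totals under 1-in-N packet sampling, and estimating the storage a given retention period would need. The function names, the 64-byte record size, and the traffic figures are assumptions chosen for illustration only.

```python
SECONDS_PER_DAY = 86_400


def estimate_totals(sampled_packets, sampled_octets, sampling_interval):
    """Scale sampled counters to estimated totals for 1-in-N sampling."""
    if sampling_interval < 1:
        raise ValueError("sampling_interval must be at least 1")
    return (sampled_packets * sampling_interval,
            sampled_octets * sampling_interval)


def estimate_storage_bytes(flows_per_second, record_size_bytes, retention_days):
    """Estimate raw storage needed to retain flow records for a period."""
    return (flows_per_second * record_size_bytes
            * SECONDS_PER_DAY * retention_days)


# 120 sampled packets at 1-in-100 sampling suggest about 12,000 real packets.
packets, octets = estimate_totals(120, 96_000, 100)

# 2,000 flows/s at an assumed 64 bytes per record, kept for 30 days.
storage = estimate_storage_bytes(2_000, 64, 30)
```

The scaled figures are estimates rather than exact counts, and their accuracy drops for flows with few sampled packets, which is why the sampling interval should be chosen with the smallest flows of interest in mind.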

The integration of NetFlow with analytical systems further extends its capabilities. When combined with visualization tools and data processing platforms, flow records become part of a larger ecosystem of network intelligence. This integration enables deeper insights through correlation with logs, metrics, and external data sources. As a result, organizations can move beyond basic monitoring and toward comprehensive network intelligence frameworks that support automation and predictive analysis.

Looking at the broader technological landscape, the importance of flow-based monitoring continues to grow. Networks are becoming more complex, distributed, and dynamic. Applications are increasingly cloud-based, traffic patterns are more unpredictable, and security threats are more sophisticated. In this environment, traditional monitoring methods alone are insufficient. Flow-based approaches provide the scalability and abstraction needed to maintain visibility across highly dynamic systems.

At the same time, advancements in data analytics, machine learning, and real-time processing are expanding what can be achieved with flow data. Instead of simply observing traffic, modern systems can now identify patterns, predict anomalies, and automate responses. This evolution is transforming NetFlow from a passive monitoring tool into an active component of intelligent network management systems. It is no longer just about seeing what is happening, but about understanding why it is happening and what is likely to happen next.

The continued relevance of NetFlow data also reflects a broader principle in network engineering: the need for balance between detail and scalability. While deep packet inspection provides granular insights, it does not scale efficiently in large environments. Flow-based monitoring offers a middle ground, delivering meaningful insights without excessive overhead. This balance is what ensures its ongoing adoption across industries ranging from enterprise IT to telecommunications and cloud infrastructure.

Ultimately, NetFlow data remains a critical tool for maintaining visibility, stability, and security in modern networks. Its ability to simplify complexity, support scalable monitoring, and provide actionable insights ensures that it continues to play a central role in network operations. As infrastructures continue to evolve, the principles behind flow-based monitoring will remain relevant, adapting to new technologies while preserving the core objective of understanding how data moves across systems in a meaningful and efficient way.